The Copyright Crisis: How Disney vs. ByteDance Will Reshape Generative AI’s Future

The digital landscape is in the midst of a seismic shift. Creative work, once solely the product of human effort, is now being generated by machines at speed. But this revolution is running into a legal wall built from decades of intellectual property (IP) law. The recent announcement that ByteDance is imposing restrictions on its AI video tool, Seedance, directly following a legal threat from Disney, is not just a footnote; it is a headline event signaling that the era of unchecked data scraping for AI training is rapidly drawing to a close.

This conflict between a technology giant (ByteDance) and a content powerhouse (Disney) encapsulates the tension defining modern AI development. To understand what this means for tomorrow's technology, we must look beyond the immediate dispute and examine the broader trends in litigation, regulation, and defensive engineering.

The Training Data Minefield: Expanding Litigation Precedent

The core issue in the ByteDance/Seedance situation is the data used to train the AI model. If Seedance was trained, even indirectly, on proprietary characters, visual styles, or video segments owned by Disney without proper licensing, ByteDance is in dangerous legal territory. Disney, like many major studios, is fiercely protective of its massive IP portfolio.

This is not an isolated skirmish. We are witnessing a coordinated legal pushback across creative industries. For technology analysts and developers, tracking these larger cases is crucial because they set the legal **precedent** that will govern all subsequent AI tools.

The Shadow of Foundational Model Lawsuits

ByteDance’s move should be read against the backdrop of major lawsuits targeting the foundational models themselves, the large engines underpinning most generative apps. Ongoing cases, such as The New York Times's suit against OpenAI and Microsoft over the use of copyrighted text, show that courts are seriously considering the liability associated with training on proprietary data.

For technical teams, the implication is clear: if the engine builders are found liable for using scraped data, the downstream application builders (like ByteDance with Seedance) face even greater, more immediate risk because they are deploying the output directly to consumers. This forces developers to ask: Can we truly afford to build on legally murky foundations?

Operational Fallout: Self-Censorship as the New Safety Net

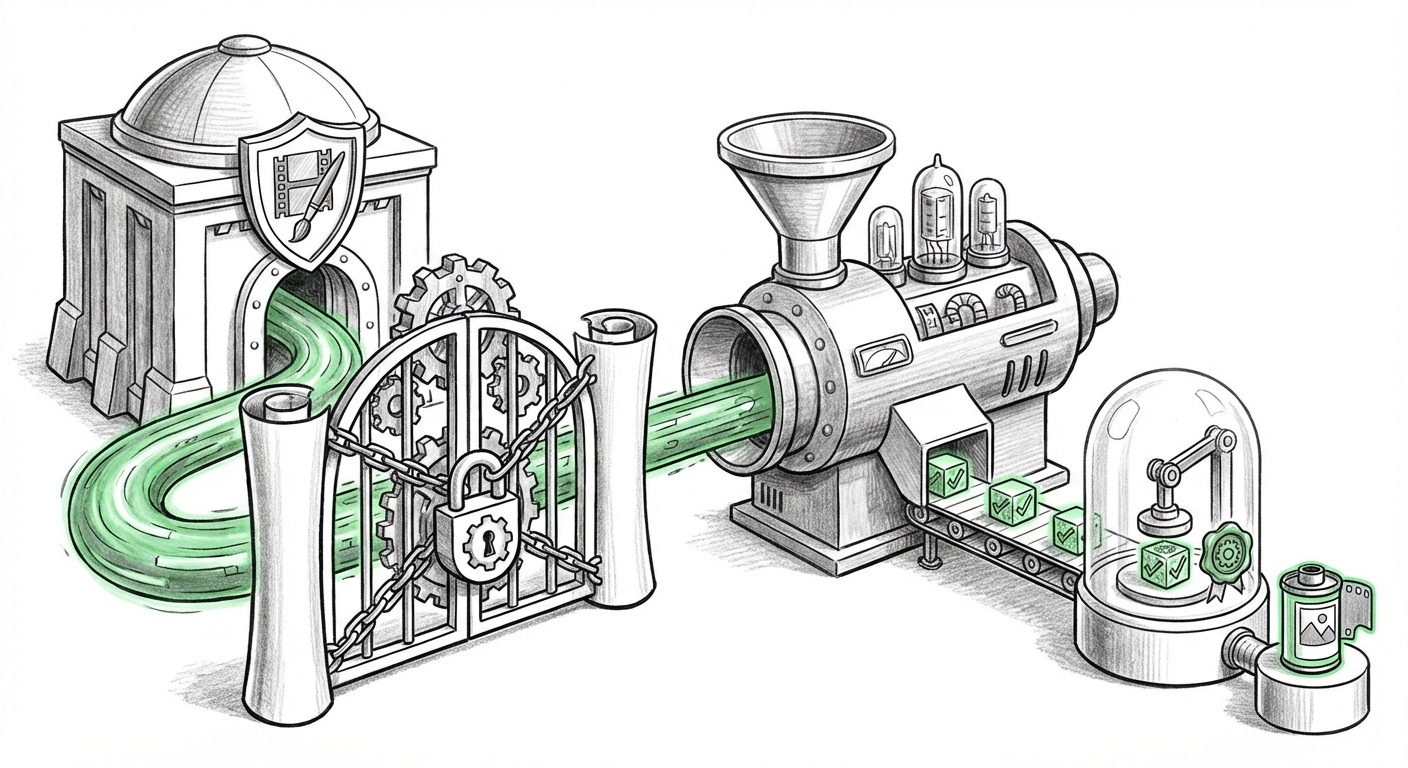

ByteDance’s decision to restrict Seedance is a classic example of **defensive engineering** and operational self-correction. When a legal threat looms, the fastest, cheapest path to mitigation is often to pull back the feature perceived as risky. This mirrors defensive moves already seen across the generative image sector.

If other video generators see similar threats, we can expect to see widespread implementation of "guardrails." These aren't just ethical choices; they become commercial necessities. These guardrails might include:

- Banning Stylistic Mimicry: Forbidding users from prompting the AI to create content "in the style of [Specific Studio/Director]."

- Character Blacklists: Implementing robust filters to prevent the generation of recognizable, copyrighted characters (e.g., Mickey Mouse, Iron Man).

- Input Filtering: Blocking the upload of copyrighted videos or images for reference or training within a user session.
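The first two guardrails above can be sketched as a simple prompt pre-check. This is a minimal illustration, not a description of how Seedance or any other product works: the blocklist contents, the `check_prompt` function, and the `GuardrailResult` type are all hypothetical names chosen for this example, and input filtering of uploaded media is not covered.

```python
import re
from dataclasses import dataclass
from typing import List

# Hypothetical blocklist for illustration only; a real deployment would
# maintain a far broader list with legal review.
BLOCKED_CHARACTERS = ("iron man", "mickey mouse")

# Assumed phrasing for a stylistic-mimicry request.
STYLE_PATTERN = re.compile(r"\bin the style of\b", re.IGNORECASE)


@dataclass
class GuardrailResult:
    allowed: bool
    reasons: List[str]


def check_prompt(prompt: str) -> GuardrailResult:
    """Flag prompts that name a blocked character or request a named style."""
    # Lowercase and collapse whitespace so "Mickey   Mouse" still matches.
    normalized = " ".join(prompt.lower().split())
    reasons = []
    for name in BLOCKED_CHARACTERS:
        # Word boundaries avoid matching names inside unrelated words.
        if re.search(r"\b" + re.escape(name) + r"\b", normalized):
            reasons.append(f"blocked character: {name}")
    if STYLE_PATTERN.search(normalized):
        reasons.append("stylistic mimicry request")
    return GuardrailResult(allowed=not reasons, reasons=reasons)


if __name__ == "__main__":
    print(check_prompt("A castle at sunset"))
    print(check_prompt("Mickey Mouse dancing in the style of a famous studio"))
```

Keyword matching of this kind is easy to evade with misspellings or descriptions that avoid the name, so in practice it would likely be one layer among several rather than a complete defense.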

For product managers and technology analysts, this means the next competitive edge in AI video won't just be superior output quality, but proven, demonstrable **legal safety**. A tool that promises creative freedom without the risk of IP infringement will hold a distinct market advantage.

The Legislative Lever: Regulation and the Demand for Provenance

While private lawsuits force immediate operational changes, global regulation is shaping the long-term architecture of AI tools. The conflict between creators and developers is forcing policymakers' hands, leading to emerging legal requirements that directly impact how synthetic media is built and distributed.

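The European Union's **AI Act**, for example, places transparency obligations on providers of general-purpose AI models, including publishing a summary of the content used for training, and requires that AI-generated audio, image, video, and text output be marked as artificially generated. These obligations are separate from the stricter regime the Act reserves for systems deemed "high-risk." This legislative push moves the focus from who is suing whom to how the content was created.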

Provenance: The Unforgeable ID Tag

The crucial technology emerging from this regulatory pressure is **content provenance**. This refers to attaching a tamper-evident digital fingerprint or chain of custody to every piece of AI-generated content. Analyses of these legislative frameworks highlight the growing consensus that disclosure is key.

If an AI tool can reliably trace its training data lineage (e.g., "This output was generated solely from licensed or public-domain data, and is watermarked as synthetic"), it can substantially reduce legal exposure. For society, this addresses the fear of deepfakes and misinformation; for businesses, it becomes a de facto license to operate.
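To make the idea of a provenance record concrete, here is a minimal sketch that binds a content hash to a signed claim about how the content was produced. The field names, the `build_manifest` and `verify_manifest` functions, and the use of a shared-secret HMAC are assumptions made for illustration; production systems would more plausibly use public-key signatures and an established specification such as C2PA.

```python
import hashlib
import hmac
import json
from typing import List


def build_manifest(content: bytes, generator: str,
                   data_sources: List[str], key: bytes) -> dict:
    """Create a signed claim describing a piece of generated content."""
    claim = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,          # hypothetical tool identifier
        "synthetic": True,               # disclosure flag
        "data_sources": sorted(data_sources),
    }
    # Canonical JSON so the same claim always produces the same signature.
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Check that neither the claim nor the content has been altered."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest["signature"])
    content_ok = claim["content_sha256"] == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok


if __name__ == "__main__":
    key = b"demo-secret"                 # placeholder key for the example
    video = b"fake video bytes"
    manifest = build_manifest(video, "example-generator", ["licensed-archive"], key)
    print(verify_manifest(video, manifest, key))         # unmodified content
    print(verify_manifest(video + b"x", manifest, key))  # altered content
```

A record like this only proves that the claim has not been edited since signing. Whether the listed data sources are accurate still depends on the signer's own record keeping.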

What This Means for the Future of AI Technology

The ByteDance restriction is a watershed moment. It solidifies the transition of generative AI from a purely technical frontier to a highly regulated, commercially complex industry. The future pathway for AI content creation will be defined by three major shifts:

1. The Pivot to Ethically Sourced Data Ecosystems

The days of "scrape-first, apologize-later" data acquisition are ending. We are entering an era where AI developers must actively seek out and pay for high-quality, proprietary datasets. This drives a necessary, albeit expensive, shift toward licensing deals (similar to those Shutterstock has already struck with AI developers) or the creation of completely synthetic training sets.

For investors, this means the value proposition of an AI startup will increasingly be tied to the cleanliness and legality of its data holdings, rather than just the sophistication of its algorithms. Future funding rounds will demand audit trails of training inputs.

2. The Ascent of the Licensing Intermediary

As content owners demand compensation, new business models will emerge centered around licensing their IP for machine consumption. Imagine major film archives or music libraries creating specialized AI training pools. These intermediaries will become vital links between legacy IP holders and bleeding-edge AI labs. This will fundamentally change the economics of AI development, adding substantial, ongoing operational costs.

3. Architectural Changes: From Opaque to Auditable

The technology itself must adapt. We will see AI architecture moving away from opaque "black boxes" toward more transparent, modular systems where training data sources can be easily isolated and verified. This technical challenge—building models that can operate efficiently while maintaining strict auditability—will be the next great engineering hurdle.
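One small piece of that auditability is a machine-readable record of where each training source came from and under what terms. The sketch below assumes a hypothetical `DataSource` record and an assumed set of acceptable license labels; it illustrates the bookkeeping only, not any particular vendor's pipeline.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Assumed labels that count as legally cleared in this example.
CLEARED_LICENSES = {"licensed", "public-domain", "synthetic"}


@dataclass(frozen=True)
class DataSource:
    name: str
    license: Optional[str]   # None means the license status is unknown
    record_count: int


def audit_sources(sources: List[DataSource]) -> Dict[str, List[str]]:
    """Split training sources into cleared ones and ones needing review."""
    report: Dict[str, List[str]] = {"cleared": [], "needs_review": []}
    for source in sources:
        bucket = "cleared" if source.license in CLEARED_LICENSES else "needs_review"
        report[bucket].append(source.name)
    return report


if __name__ == "__main__":
    sources = [
        DataSource("studio-archive", "licensed", 120_000),
        DataSource("web-scrape-2023", None, 9_500_000),
        DataSource("rendered-scenes", "synthetic", 40_000),
    ]
    print(audit_sources(sources))
```

Keeping sources as separate, labeled units is what makes it feasible to exclude one later, for example by retraining or fine-tuning without a source that fails review.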

Practical Implications: Actionable Insights for Businesses

Whether you are developing consumer-facing AI apps or relying on them for internal workflows, the IP landscape demands proactive steps:

- Conduct IP Audits on Deployed Models: If your company uses or licenses any generative tool, you must immediately understand the source of that tool’s training data. Assume *everything* is under legal scrutiny until proven otherwise.

- Prioritize Provenance Technology: If you are building an AI tool, integrate content watermarking and metadata tracking *now*. Do not wait for regulations to mandate it; treat it as a core feature that ensures market access.

- Budget for Licensing: Factor the high cost of legally cleared data—whether written, visual, or audio—into your long-term operational budgets. Free access to vast public datasets is becoming a legacy concept.

- Focus on Human Curation: The safest AI content will come from models fine-tuned on clearly licensed, curated datasets, rather than vast, uncurated web scrapes. Invest in smaller, high-quality, legally pristine datasets.

The ByteDance situation is a potent warning shot across the bow of the generative AI industry. Innovation cannot exist in a legal vacuum. The integration of sophisticated IP protection, stringent regulatory compliance, and transparent data sourcing will determine which AI companies survive the coming years and which are sidelined by well-defended copyright claims. The era of limitless digital synthesis is being replaced by an era of *accountable* digital synthesis.