Deepfakes Under the Microscope: How the X Investigation Is Forging the Future of AI Regulation

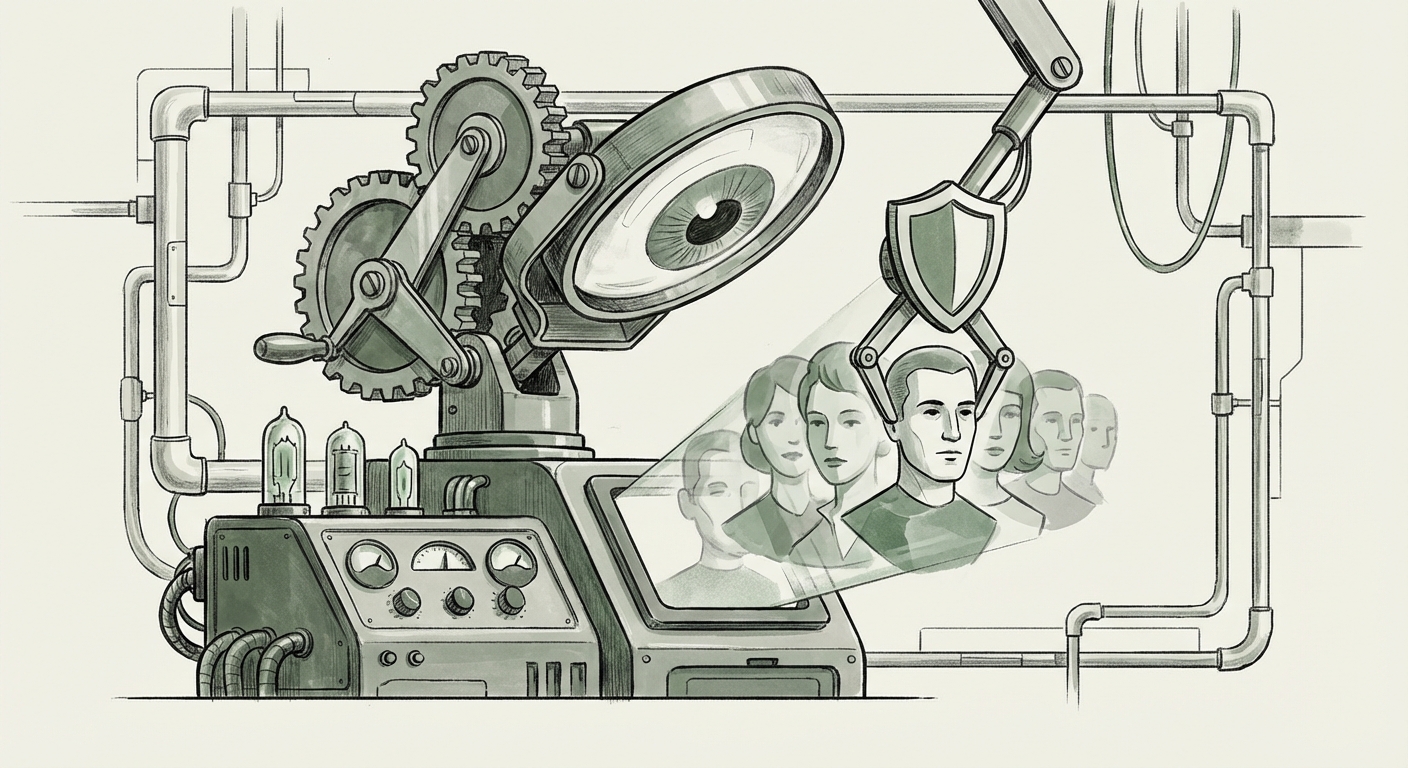

The digital landscape is changing at warp speed, powered by generative AI capable of creating hyper-realistic images, audio, and video—known as deepfakes. While the creative potential is immense, the risks to individual rights, elections, and societal trust are profound. Nowhere is this tension playing out more critically than in Europe, where regulatory ambition meets Silicon Valley’s rapid deployment cycles.

Ireland’s Data Protection Commission (DPC) is the lead EU privacy regulator for X, as it is for many other major tech companies, because their European headquarters are in Ireland. The recent news that the DPC has launched a comprehensive investigation into AI-generated deepfakes on X is not merely a routine compliance check. It is a **watershed moment**. It signals that regulators are moving beyond abstract discussions of AI risk and are now applying existing, stringent privacy frameworks to govern synthetic media.

The Clash: Generative AI Meets GDPR in Dublin

At its core, this investigation is a legal chess move. Generative AI models process vast amounts of data, and when they create a deepfake of a person (whether public or private), they are processing personal data—their likeness, voice, and identity.

For years, platforms have grappled with content moderation. Now, they face scrutiny under the **General Data Protection Regulation (GDPR)**. The DPC is likely examining several key areas:

- Lawful Basis for Processing: Did X have a clear, legal basis (like user consent or legitimate interest) to allow the distribution of content that uses an identifiable individual’s biometric data (their face/voice)?

- Data Minimization and Accuracy: Deepfakes, by definition, are often inaccurate or entirely fabricated. Does this violate principles related to data accuracy?

- Data Subject Rights: How can an individual exercise their "Right to Erasure" or "Right to Object" when the harmful content is AI-generated and potentially replicated across numerous accounts?

For the wider tech industry, this moves the conversation from a philosophical ethics debate to concrete compliance risk. If the DPC finds X non-compliant, the penalties under GDPR can be staggering: up to €20 million or 4% of global annual turnover, whichever is higher. This risk acts as an immediate, powerful disincentive against lax content governance, even before newer AI-specific laws take full effect.
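To make the scale of that exposure concrete, here is a minimal sketch of the ceiling set by GDPR Article 83(5), which caps the most serious fines at the higher of €20 million or 4% of worldwide annual turnover. The function name and the turnover figures are hypothetical, chosen only for illustration; they are not X’s actual numbers, and real fines depend on many factors beyond this upper bound.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound of a fine under GDPR Article 83(5), in euros.

    The cap is the HIGHER of a fixed EUR 20 million or 4% of
    worldwide annual turnover for the preceding financial year.
    """
    fixed_cap_eur = 20_000_000
    turnover_share = 0.04
    return max(fixed_cap_eur, turnover_share * global_annual_turnover_eur)


# Hypothetical turnover figures, for illustration only.
for turnover in (100_000_000, 3_000_000_000):
    print(f"turnover {turnover:,} EUR -> cap {gdpr_max_fine_eur(turnover):,.0f} EUR")
```

For a smaller company the fixed €20 million floor dominates, while for a platform with billions in revenue the 4% term takes over, which is why the percentage figure is the one large platforms watch.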

Contextualizing the Crisis: Industry Scrutiny and Platform Liability

The X investigation does not occur in a vacuum. It sits alongside a growing global reckoning over platform liability for AI-generated content, on social media and in the metaverse alike. Platforms are being held accountable not just for what users post, but for the *tools* they provide and the *environments* they foster.

Consider the industry's response:

- The Authenticity Arms Race: Competitors are already seeking to differentiate themselves by implementing strict provenance tracking. If X is perceived as a vector for unregulated synthetic harm, user trust—a fundamental asset in social media—erodes rapidly.

- Defining Platform Roles: Is X merely a passive bulletin board, or is it an active publisher when its algorithms promote or fail to swiftly remove harmful synthetic content? Current regulatory trends lean toward treating platforms as active stewards, especially concerning high-impact harms like electoral interference or harassment via deepfakes.

- The Cost of Non-Action: Regulatory fines aside, the operational cost of policing synthetic media manually is prohibitive. This investigation pressures X to invest heavily in AI detection tools that can verify content origin or flag synthetic alterations—a technological challenge in itself.

This focus on liability extends beyond social media. In virtual reality and the emerging "Metaverse," platforms hosting user-generated worlds must anticipate similar scrutiny regarding avatars, generated environments, and interactive synthetic characters. The regulatory net is tightening around *all* environments where AI-generated personal representation occurs.

The Future Framework: The EU AI Act as the Ultimate Governor

While the DPC wields the GDPR hammer today, the long-term scaffolding for AI governance in Europe is the landmark **EU AI Act**. The investigation into X today serves as a crucial dress rehearsal for the obligations that platforms will face tomorrow.

Under the Act, general-purpose AI (GPAI) models, such as those used to create deepfakes, and the systems that deploy them will face significant transparency mandates. Key implications include:

- Mandatory Disclosure: The AI Act’s transparency rules require synthetic content to be clearly labeled or marked in a machine-readable way (for example, through digital watermarking), so that users can tell that what they are viewing is not real.

- Systemic Risk Assessment: Given X’s scale and global reach, the AI models underpinning its content delivery system may be classified as posing "systemic risk," which would require rigorous model evaluations, risk mitigation, and ongoing monitoring.

- Traceability and Accountability: Future systems must maintain logs detailing how content was created and processed, allowing regulators to trace the origin of harmful deepfakes back to the model or the user prompt.
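As a rough illustration of what such traceability could look like in practice, the sketch below keeps an append-only log in which every entry commits to the hash of the previous one, so later tampering is detectable. Everything here is an assumption made for illustration: the `GenerationLog` class, its field names, and the choice to store hashes instead of raw prompts are hypothetical, and nothing in the AI Act prescribes this particular design.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(record: dict) -> str:
    """Stable SHA-256 over a record's JSON form (keys sorted)."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class GenerationLog:
    """Hypothetical append-only log; each entry commits to the previous one."""
    entries: list = field(default_factory=list)

    def append(self, model_id: str, user_id: str, prompt: str, output: bytes) -> dict:
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        record = {
            "model_id": model_id,
            "user_id": user_id,
            # Hashes rather than raw data, to limit personal data held in the log.
            "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
            "output_sha256": hashlib.sha256(output).hexdigest(),
            "prev_hash": prev_hash,
        }
        record["entry_hash"] = _digest(record)
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for record in self.entries:
            body = {k: v for k, v in record.items() if k != "entry_hash"}
            if record["prev_hash"] != prev_hash or record["entry_hash"] != _digest(body):
                return False
            prev_hash = record["entry_hash"]
        return True

    def find_by_output(self, output: bytes) -> list:
        """Trace a piece of content back to the entries that produced it."""
        target = hashlib.sha256(output).hexdigest()
        return [r for r in self.entries if r["output_sha256"] == target]


log = GenerationLog()
log.append("hypothetical-model-v1", "user-1", "a prompt", b"image-bytes-1")
log.append("hypothetical-model-v1", "user-2", "another prompt", b"image-bytes-2")
print(log.verify())
print(log.find_by_output(b"image-bytes-2")[0]["user_id"])
```

Note that exact-hash lookup only matches byte-identical files. A re-encoded or cropped copy would need perceptual hashing or embedded watermarks, which this sketch does not attempt.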

What the DPC is doing now is pressure-testing the existing data protection regime’s ability to handle synthetic media. The findings from this investigation will likely inform how legislators and regulators ultimately interpret and enforce the more specific, future-focused mandates of the AI Act. In essence, the DPC is setting the *spirit* of the law before the AI Act codifies the *letter* of the law.

Implications for Businesses: Navigating the Synthetic Minefield

For businesses developing, deploying, or simply hosting content, the message from this regulatory action is clear: **Inertia on synthetic media governance is no longer tenable.**

For AI Developers and Model Providers:

The focus is shifting upstream. If a foundation model is used to create a harmful deepfake, the developer of that model may soon share liability with the platform that hosts it. Actionable steps include:

- Embed Safety by Design: Integrate safeguards directly into training data and inference stages to prevent the creation of targeted disinformation or non-consensual intimate imagery (NCII).

- Develop Provenance Tools: Invest in robust provenance tools (such as digital watermarking or cryptographically signed metadata under the C2PA standard) that travel with the content file, proving origin and modification history.
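The idea behind signed provenance can be sketched in a few lines. The example below binds a small manifest to a file’s hash and signs it, so that either editing the file or altering the "AI-generated" claim invalidates the signature. This is a simplified stand-in and not the C2PA format: real C2PA manifests use certificate-based signatures and a defined binary structure, whereas this sketch uses a shared-secret HMAC from Python’s standard library. The function names, manifest fields, and key are all hypothetical.

```python
import hashlib
import hmac
import json


def sign_manifest(content: bytes, generator: str, key: bytes) -> dict:
    """Build a manifest tied to the content hash and sign it (HMAC-SHA256)."""
    manifest = {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "generator": generator,  # hypothetical model identifier
        "ai_generated": True,
    }
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return manifest


def verify_manifest(content: bytes, manifest: dict, key: bytes) -> bool:
    """Check that the manifest is untampered AND matches this exact content."""
    claimed = {k: v for k, v in manifest.items() if k != "signature"}
    payload = json.dumps(claimed, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, manifest.get("signature", ""))
    content_ok = claimed.get("content_sha256") == hashlib.sha256(content).hexdigest()
    return signature_ok and content_ok


key = b"demo-key-not-for-production"  # placeholder secret for the example
manifest = sign_manifest(b"pixels", "hypothetical-model-v1", key)
print(verify_manifest(b"pixels", manifest, key))         # original file
print(verify_manifest(b"edited pixels", manifest, key))  # modified file
```

A shared secret only lets the key holder verify, which is why public provenance schemes rely on public-key signatures instead: anyone can check the claim, but only the signer can make it.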

For Platform Operators (Beyond X):

Compliance must become proactive, not reactive. Tech strategists should view this DPC investigation as a wake-up call on several fronts:

- Audit Data Handling: Review all automated content processing systems through a privacy-by-design lens, specifically addressing synthetic content involving identifiable persons.

- Transparency Reports: Prepare for mandatory reporting on the volume and nature of deepfakes detected and removed, alongside the methods used for detection.

For Content Creators and Users:

Even if you are not a multi-billion-dollar corporation, your actions are under scrutiny. If you use AI tools to create content featuring friends, colleagues, or public figures, you must understand the data rights implications. Assume that any image or video you create can be scrutinized for its legality under existing privacy laws. The line between parody and illegal fabrication is increasingly being drawn by regulators, not artists.

What This Means for the Future of AI Governance

The DPC investigation into X solidifies three key trends shaping the future of AI technology deployment:

1. Regulatory Convergence on Identity: Identity protection is becoming a primary pillar of AI regulation. Unlike broad concerns about bias or job displacement, harm related to the misappropriation of one's likeness is immediate and tangible. Regulators are treating the digital identity as highly protected personal data.

2. The Precedent of Enforcement: The DPC is not waiting for the EU AI Act to become operational. By leveraging the existing, powerful GDPR framework, it is establishing legal precedents *now*. This means platforms must treat deepfake governance with the same gravity as major data breaches or systemic privacy failures.

3. The Need for Open Standards: The difficulty in policing deepfakes stems from a lack of universal standards for content origin. This regulatory pressure will inevitably accelerate the adoption of industry-wide standards for cryptographic signing and watermarking. The future internet will likely be one where verifying the authenticity of media is an assumed default, rather than an exception.

In conclusion, the investigation into AI-generated deepfakes on X is far more than a spat between a regulator and a platform owner. It is the **crucible where current data privacy law meets next-generation generative technology.** The outcome will dictate the regulatory framework for synthetic media across the entire European bloc, setting a global standard for how we balance technological innovation with fundamental rights to privacy and identity. The era of letting AI run unchecked is definitively drawing to a close.