DPO, AMD, and the Future of Enterprise LLMs: Algorithmic Prowess Meets Infrastructure Power

The landscape of Artificial Intelligence is evolving at a blinding pace, driven not just by bigger models, but by smarter training techniques and the specialized hardware needed to support them. For businesses looking to move Large Language Models (LLMs) from exciting demos to reliable, profit-driving tools, the focus has sharpened on two critical areas: how we teach the AI (fine-tuning algorithms) and where we run it (enterprise infrastructure).

Two developments in particular signal a tectonic shift: analyses comparing Direct Preference Optimization (DPO) with the older standard, Proximal Policy Optimization (PPO), and the arrival of powerful, dedicated accelerators such as the AMD MI355X. This isn't just incremental improvement; it's a convergence that is defining the next era of AI deployment.

The Great Alignment Shift: From PPO Complexity to DPO Simplicity

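Training an LLM to be helpful, harmless, and aligned with human intent is the core challenge in modern AI. This alignment process often relies on Reinforcement Learning from Human Feedback (RLHF): a reward model is first trained to predict which of two responses humans prefer, and the LLM is then optimized against that reward model with reinforcement learning. Historically, PPO has been the workhorse for that reinforcement learning step, but it is notoriously complex.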

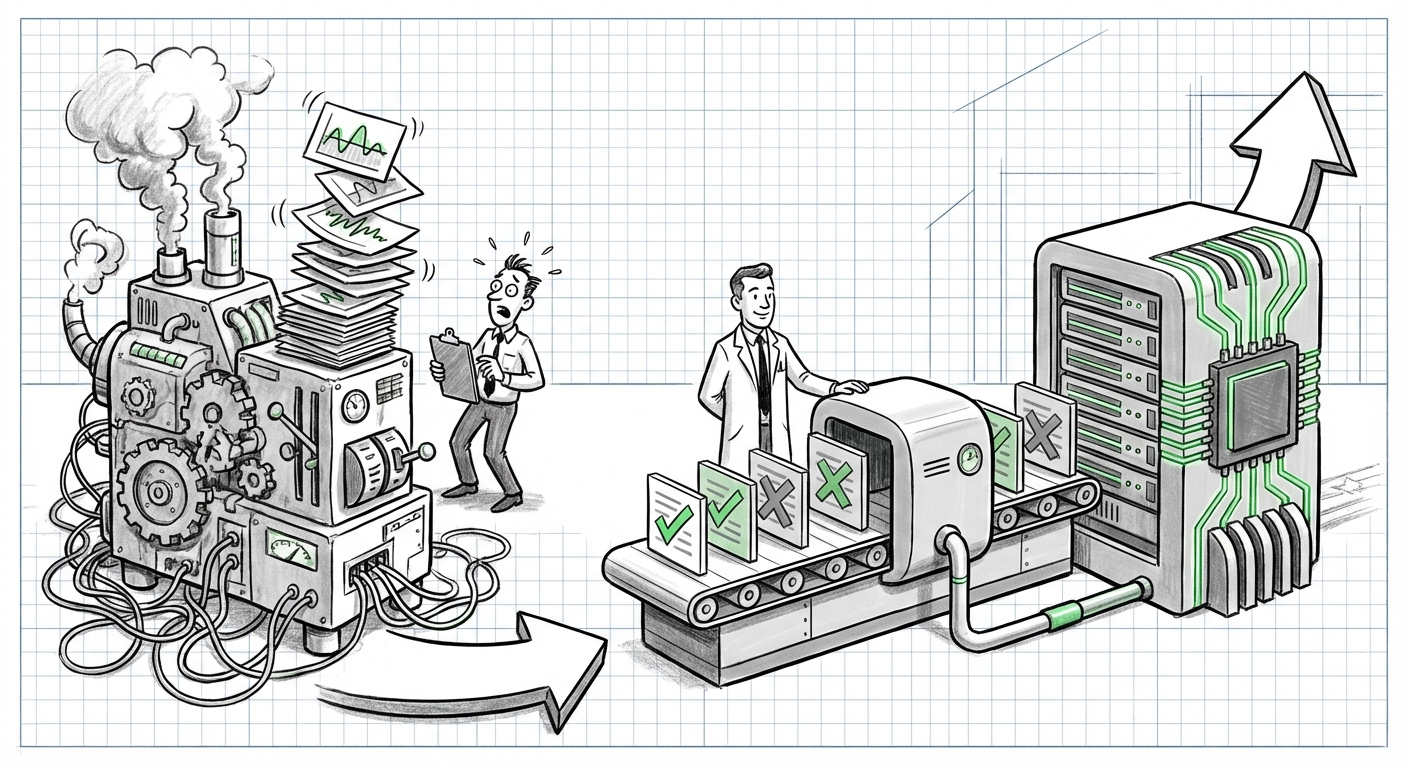

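PPO-based RLHF involves multiple separate stages: train a reward model, then run an online loop that generates responses, scores them with the reward model, estimates advantages with a learned value (critic) network, and updates the policy, all while keeping several full-size models in memory. It requires careful balancing of many interacting hyperparameters (clip range, KL penalty strength, learning rates, batch sizes) and is highly sensitive to small changes; it's like balancing a wobbly stack of plates. In an enterprise setting, this complexity translates directly into high computational costs, unstable training runs, and long iteration cycles.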
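To make the "many sensitive parameters" point concrete, here is a minimal sketch of PPO's clipped surrogate objective, computed in plain Python for a small batch. It assumes the log-probabilities and advantage estimates already exist; in a full RLHF pipeline the advantages come from the reward model plus the learned value network, which is where much of the machinery lives. The clip range `epsilon` is just one of the knobs that must be tuned.

```python
import math
from typing import Sequence


def ppo_clipped_loss(
    new_logprobs: Sequence[float],
    old_logprobs: Sequence[float],
    advantages: Sequence[float],
    epsilon: float = 0.2,
) -> float:
    """Negative PPO clipped surrogate objective, averaged over samples.

    new_logprobs: log-probs of the sampled actions under the policy being updated
    old_logprobs: log-probs under the policy that generated the samples
    advantages:   advantage estimates (in RLHF: reward model + value network)
    epsilon:      clip range, one of several sensitive hyperparameters
    """
    if not (len(new_logprobs) == len(old_logprobs) == len(advantages)):
        raise ValueError("all inputs must have the same length")
    total = 0.0
    for new_lp, old_lp, adv in zip(new_logprobs, old_logprobs, advantages):
        ratio = math.exp(new_lp - old_lp)  # probability ratio between new and old policy
        clipped = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
        # Pessimistic bound: keep the smaller of the unclipped and clipped terms.
        total += min(ratio * adv, clipped * adv)
    # PPO maximizes the surrogate, so the loss to minimize is its negative mean.
    return -total / len(advantages)


# Identical policies give a ratio of 1, so the loss is just -mean(advantage).
print(ppo_clipped_loss([-1.0, -2.0], [-1.0, -2.0], [0.5, 1.5]))  # -> -1.0
```

Note that this is only the policy-update term; a real PPO step also trains the value network and usually adds a KL penalty against a reference model, each with its own coefficients.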

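Enter Direct Preference Optimization (DPO). DPO removes both the separately trained reward model and the reinforcement learning loop. Instead, it reframes alignment as a simple classification-style objective: given pairs of preferred and rejected responses to the same prompt, it directly raises the likelihood of the preferred response relative to the rejected one, measured against a frozen reference copy of the starting model so the policy does not drift too far. The reward becomes implicit in the model's own probabilities, so it never has to be trained or queried separately. It's a cleaner, more mathematically direct approach.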
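The core of DPO fits in a few lines. The sketch below computes the per-pair DPO loss from summed sequence log-probabilities under the model being trained (the policy) and the frozen reference model; `beta` controls how strongly the policy is held near the reference. Producing those log-probabilities (one forward pass of each model over each response) is assumed to happen elsewhere, and the numbers in the example are made up.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def dpo_loss(
    policy_chosen: float,
    policy_rejected: float,
    ref_chosen: float,
    ref_rejected: float,
    beta: float = 0.1,
) -> float:
    """DPO loss for one preference pair.

    Each argument is a summed log-probability of a full response given the prompt.
    No reward model, value network, or sampling loop is involved.
    """
    chosen_logratio = policy_chosen - ref_chosen        # how much the policy boosted the preferred answer
    rejected_logratio = policy_rejected - ref_rejected  # how much it boosted the rejected answer
    margin = beta * (chosen_logratio - rejected_logratio)
    return -log_sigmoid(margin)


# Made-up values: relative to the reference, the policy raised the chosen
# response and lowered the rejected one, so the loss falls below log(2).
print(dpo_loss(policy_chosen=-10.0, policy_rejected=-15.0, ref_chosen=-12.0, ref_rejected=-13.0))
```

When the policy still equals the reference, the margin is zero and the loss is exactly log 2; training pushes the margin positive. In practice the loss is averaged over a batch of pairs and minimized with an ordinary optimizer.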

For the business world, this simplification is profound. It means:

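- Faster Iteration: Simpler pipelines mean faster tuning cycles, allowing companies to adapt models to their specific data and brand voice more quickly.

- Reduced Overhead: With no reward model to train, no value network, and (in DPO's standard offline form) no response generation inside the training loop, DPO saves significant GPU time and cloud computing costs.

- Greater Stability: The alignment process becomes more predictable, reducing the risk of a costly training run that diverges or learns to game its reward model. DPO can still overfit its preference data, so held-out evaluation remains essential.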
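One rough way to see the overhead difference is to count the full-size networks each method keeps in accelerator memory. A typical PPO-based RLHF setup holds a trainable policy, a trainable value (critic) model, a frozen reward model, and a frozen reference model; DPO needs only the trainable policy and the frozen reference. The sketch assumes all networks are the same size, uses the common rule of thumb of about 16 bytes per parameter for a model trained with mixed-precision Adam (weights, gradients, FP32 master copy and two moment buffers) and 2 bytes per parameter for a frozen BF16 model, and ignores activations. Real setups vary (smaller reward models, offloading, LoRA), so treat the output as an order-of-magnitude comparison only.

```python
from dataclasses import dataclass
from typing import List

BYTES_TRAINABLE_ADAM = 16  # rule of thumb for mixed-precision Adam training
BYTES_FROZEN_BF16 = 2      # frozen model: BF16 weights only


@dataclass
class ResidentModel:
    name: str
    trainable: bool


# Assumed same-size networks for illustration.
PPO_MODELS = [
    ResidentModel("policy", trainable=True),
    ResidentModel("value (critic)", trainable=True),
    ResidentModel("reward model", trainable=False),
    ResidentModel("reference", trainable=False),
]
DPO_MODELS = [
    ResidentModel("policy", trainable=True),
    ResidentModel("reference", trainable=False),
]


def static_memory_gb(models: List[ResidentModel], params_billion: float) -> float:
    """Approximate static memory in GB (1e9 bytes), excluding activations."""
    total_bytes = 0.0
    for m in models:
        per_param = BYTES_TRAINABLE_ADAM if m.trainable else BYTES_FROZEN_BF16
        total_bytes += per_param * params_billion * 1e9
    return total_bytes / 1e9


# A hypothetical 7B-parameter model under each method.
print(static_memory_gb(PPO_MODELS, 7), static_memory_gb(DPO_MODELS, 7))  # -> 252.0 126.0
```

Under these assumptions DPO halves the static footprint before any savings from skipping online generation are counted.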

As research delves deeper into these methods, it becomes clear that DPO offers a pathway to cost-effective alignment, making enterprise-grade customization feasible for a wider range of companies. It's the move from a complicated, multi-stage factory process to a streamlined, efficient assembly line for customized AI behavior.

The Hardware Backbone: Why Infrastructure Matters More Than Ever

Fine-tuning algorithms are only as good as the hardware they run on. The initial excitement around LLMs often focuses on the model weights, but the true bottleneck for enterprises is deployment: running inference (generating answers) reliably and affordably at scale. This is where the hardware conversation heats up.

The appearance of the AMD MI355X (and similar next-generation accelerators) in guides covering LLM training and inference signals a crucial trend: the diversification of the AI compute market. For years, the industry relied almost exclusively on one dominant vendor, NVIDIA.

Why does this matter?

- Cost & Availability: A competitive hardware ecosystem puts downward pressure on prices and makes supply chains more robust. When high-performance alternatives are available, enterprises gain leverage in procurement.

- Specialization: Newer chips are designed with LLM workloads in mind: high memory bandwidth, dedicated matrix-multiply units (Tensor Cores on NVIDIA GPUs, Matrix Cores on AMD Instinct GPUs), and support for low-precision number formats. These features matter both for large-scale training (including DPO or PPO fine-tuning) and for fast inference.

- Memory Scaling: LLMs demand vast amounts of accelerator memory (VRAM), both for the model weights and for the key-value (KV) cache, which grows with context length and the number of concurrent users. The more memory each accelerator carries, the larger the model an enterprise can run on a single device or node, without sharding it across many accelerators and paying the resulting interconnect overhead and operational complexity.
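A back-of-the-envelope check of whether a model fits on one accelerator adds weight memory to KV-cache memory. The KV-cache term below is the standard estimate for a decoder-only transformer: 2 (keys and values) × layers × KV heads × head dimension × tokens in flight × bytes per element. The model configuration and device capacities in the example are hypothetical placeholders, not the specifications of any particular model or of the MI355X; substitute vendor-published figures. The `headroom` factor is an assumed allowance for runtime overhead.

```python
from dataclasses import dataclass

GB = 1e9  # decimal gigabytes


@dataclass
class ModelConfig:
    """Hypothetical decoder-only model shape (illustrative values only)."""
    params_billion: float
    num_layers: int
    num_kv_heads: int
    head_dim: int


def weights_gb(cfg: ModelConfig, bytes_per_param: int = 2) -> float:
    return cfg.params_billion * 1e9 * bytes_per_param / GB


def kv_cache_gb(cfg: ModelConfig, batch_size: int, context_len: int, bytes_per_elem: int = 2) -> float:
    # Keys and values (factor 2), stored per layer, per KV head, per token.
    elems = 2 * cfg.num_layers * cfg.num_kv_heads * cfg.head_dim * batch_size * context_len
    return elems * bytes_per_elem / GB


def fits_on_device(cfg: ModelConfig, batch_size: int, context_len: int,
                   device_gb: float, headroom: float = 0.9) -> bool:
    """True if weights + KV cache fit within `headroom` of device memory."""
    needed = weights_gb(cfg) + kv_cache_gb(cfg, batch_size, context_len)
    return needed <= headroom * device_gb


cfg = ModelConfig(params_billion=70, num_layers=80, num_kv_heads=8, head_dim=128)
print(weights_gb(cfg), round(kv_cache_gb(cfg, batch_size=16, context_len=8192), 2))
for hypothetical_device_gb in (192, 288):
    print(hypothetical_device_gb, fits_on_device(cfg, 16, 8192, hypothetical_device_gb))
```

The KV cache alone here is roughly 43 GB, which is why per-device memory capacity, not just compute, often decides how many users one accelerator can serve.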

The future isn't just about buying the fastest chip; it's about optimizing performance per watt and performance per dollar for the specific task. If DPO allows for cheaper training, pairing it with infrastructure optimized for high-throughput, low-latency inference creates an incredibly powerful, economically viable package for production LLMs.
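Performance per dollar and per watt reduce to simple ratios once throughput has been measured. The sketch converts sustained generation throughput, an hourly instance price, and average power draw into cost per million output tokens and tokens per joule. Every input in the example is a made-up placeholder for two hypothetical accelerators; a real comparison needs throughput measured on your own workload at your target latency.

```python
def cost_per_million_tokens(tokens_per_sec: float, price_per_hour: float) -> float:
    """Dollars per one million generated tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_sec * 3600
    return price_per_hour / tokens_per_hour * 1_000_000


def tokens_per_joule(tokens_per_sec: float, avg_power_watts: float) -> float:
    # 1 watt = 1 joule per second, so (tokens/s) / (J/s) = tokens/J.
    return tokens_per_sec / avg_power_watts


# Hypothetical options; replace with your own measurements and prices.
options = {
    "accelerator_a": {"tokens_per_sec": 2400.0, "price_per_hour": 6.0, "watts": 750.0},
    "accelerator_b": {"tokens_per_sec": 3000.0, "price_per_hour": 9.0, "watts": 1000.0},
}
for name, o in options.items():
    print(
        name,
        round(cost_per_million_tokens(o["tokens_per_sec"], o["price_per_hour"]), 3),
        round(tokens_per_joule(o["tokens_per_sec"], o["watts"]), 2),
    )
```

In this invented example, `accelerator_b` is the faster chip but the more expensive one per token and per joule, which is exactly the trade-off that raw speed rankings hide.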

Synthesizing the Trends: The Convergence Point

The real story emerges when we look at the intersection of these two developments: optimized algorithms meeting specialized hardware.

Practical Implications for Enterprise Adoption

For Chief Technology Officers (CTOs) and IT Architects, this convergence offers a clear path forward:

1. Customization is the New Baseline: Generic, off-the-shelf LLMs will increasingly fall short for specialized work. Businesses need models that understand niche terminology, regulatory frameworks, or proprietary customer data. Techniques like DPO make this custom tuning accessible. If you can align a model efficiently (DPO) and run it affordably (optimized hardware), you can create proprietary intelligence that competitors cannot easily replicate.

2. The Inference Challenge: The focus is rapidly shifting from "Can we train it?" to "Can we serve it efficiently?" Fine-tuning is a bounded, largely one-time expense, while inference costs accrue with every request for as long as a model is in production, so for heavily used models serving typically becomes the dominant cost. Hardware that offers superior inference throughput, meaning it can handle many concurrent user requests without degrading response times, therefore becomes a mission-critical asset.

3. Managing Complexity: The evolution toward DPO reflects a broader technological maturity. We are moving past experimental methods that required specialized PhD-level teams to manage daily. Modern MLOps teams need platforms that abstract away the PPO complexity, allowing them to focus on data quality and business outcomes.
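Serving capacity can be sized with Little's law: the average number of requests in flight equals the arrival rate multiplied by the average time each request spends in the system. The sketch turns a target request rate and average end-to-end latency into required concurrency, then into a replica count given how many concurrent requests one replica sustains at acceptable latency. That per-replica limit is an assumption you would establish by load-testing each hardware option, and all numbers in the example are hypothetical.

```python
import math


def required_concurrency(requests_per_sec: float, avg_latency_sec: float) -> float:
    # Little's law: requests in flight = arrival rate * time in system.
    return requests_per_sec * avg_latency_sec


def replicas_needed(requests_per_sec: float, avg_latency_sec: float,
                    max_concurrency_per_replica: int) -> int:
    """Smallest number of model replicas that can hold the in-flight load."""
    if max_concurrency_per_replica <= 0:
        raise ValueError("max_concurrency_per_replica must be positive")
    concurrency = required_concurrency(requests_per_sec, avg_latency_sec)
    return max(1, math.ceil(concurrency / max_concurrency_per_replica))


# Hypothetical load: 50 requests/s, 4 s average generation time -> 200 in flight.
for per_replica in (32, 64, 128):
    print(per_replica, replicas_needed(50, 4.0, per_replica))
```

Doubling what one replica can sustain roughly halves the fleet, which is why inference throughput per accelerator feeds directly into operating cost.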

What Comes Next? Looking Beyond DPO

While DPO represents a significant technological leap today, the relentless pace of AI research means it likely won't be the final word in alignment. As we look to the future, the most insightful analysts must ask: What is DPO optimizing for, and what are its blind spots?

Research into preference learning beyond DPO is already exploring several directions. Reinforcement Learning from AI Feedback (RLAIF) replaces human labelers with AI judges, which lowers the cost of collecting preference data and can feed DPO-style training; variants such as IPO, KTO, and ORPO change the DPO objective itself; and other work aims to bake alignment directly into the pre-training phase. These future methods might simplify alignment further, potentially making even DPO obsolete in five years.
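RLAIF changes where preference labels come from rather than how they are optimized, so it composes naturally with DPO: an AI judge compares two candidate responses, and its verdicts become training pairs. In the sketch, `judge` is a hypothetical callable standing in for a judge model (in practice, a prompted LLM), and `toy_judge` is a placeholder heuristic that only exists so the code runs. Asking the judge twice with the candidates swapped and keeping only consistent verdicts is one common precaution against position bias. The `prompt`/`chosen`/`rejected` field names follow the layout used by preference-tuning libraries such as Hugging Face TRL, but check the schema your own tooling expects.

```python
from typing import Callable, Dict, List, Optional, Tuple

# judge(prompt, response_a, response_b) -> "a", "b", or None (tie / abstain).
Judge = Callable[[str, str, str], Optional[str]]


def build_preference_pairs(samples: List[Tuple[str, str, str]], judge: Judge) -> List[Dict[str, str]]:
    """Turn (prompt, response_a, response_b) triples into DPO-style records."""
    records = []
    for prompt, resp_a, resp_b in samples:
        first = judge(prompt, resp_a, resp_b)
        second = judge(prompt, resp_b, resp_a)  # same pair, order swapped
        # Keep only verdicts that survive the swap; drop ties and inconsistencies.
        if first == "a" and second == "b":
            chosen, rejected = resp_a, resp_b
        elif first == "b" and second == "a":
            chosen, rejected = resp_b, resp_a
        else:
            continue
        records.append({"prompt": prompt, "chosen": chosen, "rejected": rejected})
    return records


def toy_judge(prompt: str, a: str, b: str) -> Optional[str]:
    # Placeholder only: prefers the shorter answer and abstains on equal length.
    if len(a) == len(b):
        return None
    return "a" if len(a) < len(b) else "b"


samples = [
    ("Summarize the policy.", "Refunds within 30 days.", "Well, it depends on many factors, but..."),
    ("Say hi.", "Hiya", "Helo"),  # equal length: the toy judge abstains
]
print(build_preference_pairs(samples, toy_judge))
```

Because the output has the same shape as human-labeled preference data, the downstream DPO step does not need to change when the label source does.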

The implication is clear: infrastructure built today must be flexible. Systems anchored too rigidly to one algorithmic approach risk obsolescence. Hardware vendors with open, flexible software stacks (such as AMD's open-source ROCm platform for its GPUs) become more valuable than those that lock customers into proprietary, closed ecosystems.

Actionable Insights for Navigating the Transition

To stay ahead of this rapidly converging curve of algorithm and infrastructure, organizations should focus on three actionable areas:

- Audit Alignment Strategy: If your current alignment pipeline relies heavily on traditional PPO methods, begin a proof-of-concept migration to DPO for non-critical fine-tuning tasks. Measure the reduction in computational cost and iteration time.

- Adopt Hardware-Agnostic Benchmarking: Do not allow vendor lock-in to dictate your future. Benchmark your LLM workflows, both training and inference, across multiple hardware platforms (NVIDIA, AMD, and specialized ASICs if applicable). Focus on standardized frameworks to ensure portability. For context on this race, head-to-head performance comparisons are essential, such as published LLM benchmarks of the AMD MI355X against the NVIDIA H100.

- Invest in MLOps for Efficiency: Treat LLMs as continuous products, not one-off deployments. Invest in MLOps tools that automate the deployment pipeline, abstracting the underlying math (PPO/DPO) so engineers can focus purely on preference data inputs and output quality.
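A hardware-agnostic benchmark can start as a small harness that times any workload through the same interface, so one script runs unchanged on every platform; the same harness can time a PPO step against a DPO step for the migration audit. In the sketch, `run_batch` is a hypothetical callable that performs one unit of work (a generation batch or a training step) and returns the number of tokens processed; on a real system it would wrap your framework call. It must block until the work is finished: GPU work is asynchronous, so in PyTorch you would call `torch.cuda.synchronize()` before returning (ROCm builds of PyTorch expose AMD GPUs through the same `torch.cuda` interface). Warm-up runs are discarded and the median is reported to damp outliers.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark_throughput(run_batch: Callable[[], int], warmup: int = 2, repeats: int = 5) -> Dict[str, float]:
    """Time a workload and report tokens/second statistics.

    run_batch must block until its work completes and return the token count.
    """
    if repeats < 1:
        raise ValueError("repeats must be at least 1")
    for _ in range(warmup):
        run_batch()  # discarded: compilation, caching, and allocator warm-up
    rates = []
    for _ in range(repeats):
        start = time.perf_counter()
        tokens = run_batch()
        elapsed = max(time.perf_counter() - start, 1e-9)  # guard against a zero-length timing
        rates.append(tokens / elapsed)
    return {
        "median_tokens_per_sec": statistics.median(rates),
        "min_tokens_per_sec": min(rates),
        "max_tokens_per_sec": max(rates),
    }


def dummy_batch() -> int:
    # Stand-in for a real model call: sleep briefly and report 256 tokens.
    time.sleep(0.01)
    return 256


print(benchmark_throughput(dummy_batch))
```

Keeping the harness independent of any vendor SDK means the only per-platform code is the body of `run_batch`, which is what makes results comparable across NVIDIA, AMD, and other accelerators.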

Conclusion: The Era of Efficient, Bespoke Intelligence

We are witnessing the maturation of the LLM field. The raw horsepower race is beginning to yield to the efficiency race. The transition from PPO's complexity to DPO's elegance frees up precious computational resources, putting high-quality model alignment within reach of far more organizations. Simultaneously, the rise of competitive, enterprise-focused hardware, symbolized by platforms like the AMD MI355X, is helping make the cost of running these powerful, customized models more manageable.

The future of AI in business belongs to those who can efficiently align their models to proprietary needs using streamlined techniques and deploy them on diversified, performance-optimized infrastructure. This synergy between smart algorithms and robust hardware is the true engine that will drive the next wave of enterprise AI transformation.