The Alignment Revolution: Why DPO is Reshaping LLM Training Faster Than PPO

The race to build the next generation of Large Language Models (LLMs) is no longer just about size; it’s about alignment. How do we ensure these powerful systems are helpful, harmless, and follow human instructions? For years, the standard answer was Reinforcement Learning from Human Feedback (RLHF), which relied heavily on Proximal Policy Optimization (PPO). However, a newer, leaner approach, Direct Preference Optimization (DPO), is rapidly gaining ground. This shift is more than a technical update; it signals a new era of efficiency, accessibility, and speed in how we customize AI for enterprise use.

From Complexity to Simplicity: Deconstructing the DPO vs. PPO Divide

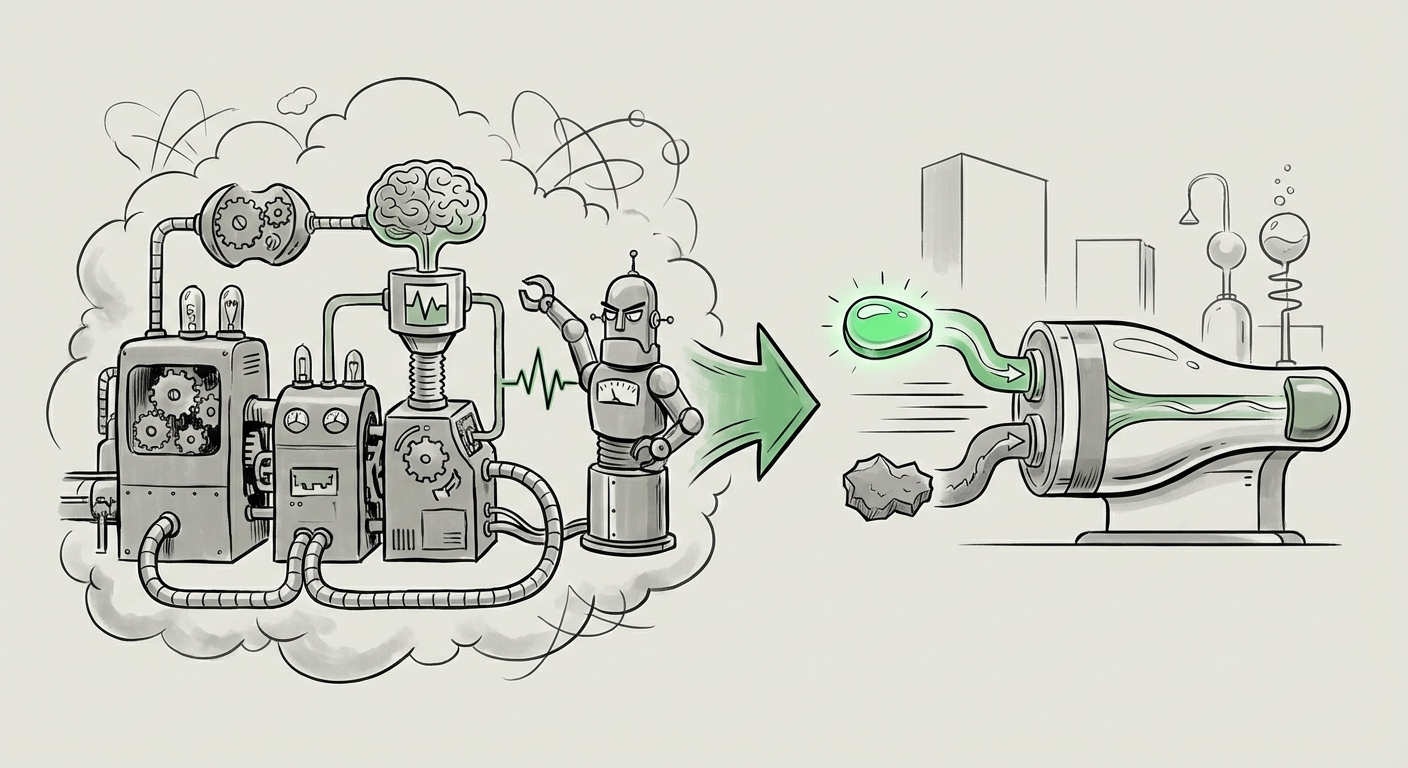

To understand the impact of DPO, we must first appreciate the difficulty of PPO. PPO, the workhorse of classic RLHF, is computationally demanding. A typical pipeline keeps several models in play at once: the policy model (the LLM being tuned), a frozen reference model, a Reward Model (RM) trained beforehand on human preference data, and usually a value (critic) model that PPO trains alongside the policy.

Think of the Reward Model as a demanding teacher that grades the LLM’s answers. The LLM then tries to improve its answers to get a better grade from the RM. This process involves sampling responses, scoring them with the RM, and iteratively updating the policy model. In practice this loop is memory-intensive, slow, and prone to instability.
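For readers who want the grading loop in symbols, it is commonly written as a KL-regularized reward maximization. The notation is an assumption introduced here, not taken from this article: \pi_\theta is the policy, \pi_{\mathrm{ref}} the frozen reference model, r_\phi the Reward Model, \mathcal{D} the prompt dataset, and \beta a coefficient controlling how far the policy may drift from the reference.

```latex
\max_{\pi_\theta} \;
\mathbb{E}_{x \sim \mathcal{D},\; y \sim \pi_\theta(\cdot \mid x)}
\big[ r_\phi(x, y) \big]
\;-\; \beta \,
\mathrm{KL}\!\left[ \pi_\theta(y \mid x) \,\big\|\, \pi_{\mathrm{ref}}(y \mid x) \right]
```

The first term is the "grade" from the RM; the second penalizes the policy for moving too far from where it started, which is why a reference model has to stay in memory.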

DPO flips this paradigm on its head. As the subtitle of the foundational DPO paper puts it, “Your Language Model is Secretly a Reward Model” [1]. Instead of training a separate RM, DPO bypasses the reinforcement learning loop entirely. It reframes the alignment problem as a simple classification-style task, directly optimizing the policy model on pairs of preferred and rejected answers while a frozen reference model keeps it from drifting too far.
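To make the "classification" framing concrete, here is a minimal sketch of the DPO loss for a single preference pair, written in plain Python rather than any particular training library. The function names, the scalar log-probability inputs, and the default `beta` of 0.1 are assumptions for illustration; a real trainer would compute these log-probabilities by summing token log-probs over batches of sequences.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    # Branch on the sign so exp() is only ever called on a non-positive value.
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen_logp: float,
             policy_rejected_logp: float,
             ref_chosen_logp: float,
             ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (prompt, chosen, rejected) triple.

    Each argument is the total log-probability a model assigns to a
    response given the prompt. `beta` (an assumed default) scales how
    strongly the policy is tied to the frozen reference model.
    """
    # Implicit rewards: how much more likely the policy makes each
    # response compared with the reference model.
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    # Logistic loss on the reward margin: small when chosen beats rejected.
    return -log_sigmoid(chosen_reward - rejected_reward)


# Hypothetical log-probabilities, for illustration only.
untrained = dpo_loss(-10.0, -12.0, -10.0, -12.0)  # policy identical to reference
improved = dpo_loss(-8.0, -14.0, -10.0, -12.0)    # policy now favors the chosen answer
print(untrained, improved)
```

When the policy is identical to the reference, the margin is zero and the loss equals log 2 (about 0.693); it falls as the policy shifts probability toward the preferred answer. No sampling, reward model, or value estimate appears anywhere in the computation.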

For a technical audience, this means replacing complex sampling and value estimation with a single, stable loss function. For a broader audience, it means moving from a complicated, multi-step assembly line to a streamlined, direct production process. This efficiency is the first major trend shaping the future of AI.

Trend 1: The Democratization of Model Alignment via Efficiency

The complexity of PPO created a high barrier to entry for effective alignment. You needed significant compute resources, deep expertise in reinforcement learning, and time to stabilize the training runs.

DPO’s simplicity directly attacks these barriers. By eliminating the need for a separate, highly tuned Reward Model, DPO drastically reduces both training time and memory footprint. This democratization is evident as the AI community explores a broader landscape of alignment techniques [2].

We are seeing the emergence of related methods, such as Identity Preference Optimization (IPO) and various rejection sampling techniques, all building on the conceptual simplification initiated by DPO. The future trajectory is clear: alignment methods must become simpler, more stable, and less reliant on perfect reward modeling to keep pace with the rapid release cycles of foundational models.

Practical Implication: Faster Iteration Cycles

For organizations looking to fine-tune open-source models (like Llama or Mistral) for specific customer service scripts or internal coding assistants, the speed of iteration matters. If a PPO-based tuning run takes days or weeks to stabilize, that’s time lost in the market. DPO allows teams to test new preference datasets and deploy updated alignment checkpoints in hours, fundamentally changing the agility of AI development teams.

Trend 2: Hardware Agnostic Optimization Meets Enterprise Deployment

Enterprise guides that call out specific accelerators, such as the AMD MI355X, underscore a critical, often overlooked aspect of LLM development: the cost and scalability of the underlying silicon.

Alignment choices are no longer just theoretical; they are shaped by hardware constraints. PPO’s memory requirements are heavy because the policy, reference, and reward models, and typically a value model as well, must be held concurrently, which pushes teams toward large GPU clusters. DPO needs only the policy and a frozen reference model, and this leaner memory profile makes it significantly more amenable to deployment on a wider variety of existing infrastructure, including less memory-rich accelerators or on-premise server stacks.
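A back-of-the-envelope calculation shows the scale of the difference. The sketch below counts weight memory only, under loudly stated assumptions: a hypothetical 7-billion-parameter model, 2 bytes per parameter (bf16), four equally sized model copies for PPO (policy, reference, reward, and a value model), and two for DPO (policy and reference). It ignores gradients, optimizer states, activations, and any parameter sharing, so the figures are a floor, not a sizing guide.

```python
def weights_memory_gb(num_params: float, bytes_per_param: int, num_copies: int) -> float:
    """Memory needed for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param * num_copies / 1e9


# Illustrative assumptions, not measurements.
NUM_PARAMS = 7e9      # hypothetical 7B-parameter model
BYTES_PER_PARAM = 2   # bf16 weights
PPO_COPIES = 4        # policy + reference + reward + value (assumed same size)
DPO_COPIES = 2        # policy + reference

ppo_gb = weights_memory_gb(NUM_PARAMS, BYTES_PER_PARAM, PPO_COPIES)
dpo_gb = weights_memory_gb(NUM_PARAMS, BYTES_PER_PARAM, DPO_COPIES)
print(f"PPO weights: {ppo_gb:.0f} GB, DPO weights: {dpo_gb:.0f} GB")
```

Under these assumptions the weights alone come to 56 GB for PPO versus 28 GB for DPO. Real setups vary: reward and value models are often smaller than the policy, and the trainable models carry optimizer state that dwarfs the weights themselves.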

This efficiency has direct financial implications. Analyses of LLM inference and training costs indicate that reducing the required GPU hours through a simpler alignment method translates directly into lower Operational Expenditure (OpEx) [3]. For CTOs overseeing multimillion-dollar AI budgets, migrating an alignment pipeline from PPO to DPO is not just a technical preference; it’s a strategic cost-saving measure that extends the lifespan of existing hardware investments.

Trend 3: Fidelity vs. Efficiency—The Performance Tightrope

While efficiency is compelling, the most rigorous AI scientists rightly ask: Does simplicity sacrifice quality? Can DPO truly capture the complex, nuanced alignment that a dedicated Reward Model (as used in PPO) can provide?

This is where the analysis must mature beyond simple speed tests. Research into the practical trade-offs suggests that while DPO performs strongly on general preference alignment, PPO may retain an edge in certain highly specialized scenarios [4].

For example, if a model needs to demonstrate complex, multi-step mathematical reasoning *while* maintaining strict safety guardrails, the explicit reward signals provided by a sophisticated PPO reward model might still offer superior performance fidelity on those benchmarked tasks. DPO, because it optimizes based on a direct preference classification, sometimes struggles to generalize beyond the distribution of preferences it was trained on, potentially leading to slight degradations in core reasoning capabilities if not handled carefully.

The future of alignment likely involves a hybrid approach: leveraging DPO for the bulk of general instruction-following and stylistic alignment, and reserving PPO or targeted, smaller-scale RL techniques for hardening models against highly specific, complex edge cases where fidelity is non-negotiable.

Future Implications: What This Means for Your AI Strategy

The DPO vs. PPO battle is a microcosm of the entire AI industry’s maturation. We are moving from the "proof-of-concept" phase, which favored brute-force techniques like PPO, to the "industrialization" phase, which demands efficiency, stability, and cost control.

For AI Strategy Leaders: Embrace Alignment Agility

Your organization should prioritize tooling and talent that support preference-based tuning paradigms like DPO. The ability to quickly turn proprietary business data (customer service logs, internal documentation) into preference pairs, and to tune a model on them via DPO, will become a primary source of competitive advantage. Delaying this shift means accepting slower deployment cycles and higher compute bills.

For Applied Scientists and Engineers: Master the Benchmarks

It is crucial not to discard PPO entirely. Engineers must deeply understand the specific benchmarks where DPO might falter. The practical step is to build robust validation suites that test for safety, accuracy, and reasoning ability, ensuring that the speed gained by using DPO does not introduce unacceptable risks or drift from core capabilities.
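One lightweight way to enforce such a validation suite is a regression gate that compares a newly aligned checkpoint against its baseline on every tracked metric. The sketch below is illustrative only: the metric names, the scores, and the 0.01 tolerance are hypothetical placeholders, and it assumes each metric is a score where higher is better.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GateResult:
    metric: str
    baseline: float
    candidate: float
    passed: bool


def check_regressions(baseline: dict[str, float],
                      candidate: dict[str, float],
                      max_drop: float = 0.01) -> list[GateResult]:
    """Flag any metric where the candidate falls more than `max_drop` below baseline."""
    results = []
    for metric, base_score in baseline.items():
        cand_score = candidate.get(metric)
        if cand_score is None:
            # A metric that was not evaluated counts as a failure.
            results.append(GateResult(metric, base_score, float("nan"), False))
            continue
        passed = cand_score >= base_score - max_drop
        results.append(GateResult(metric, base_score, cand_score, passed))
    return results


# Hypothetical scores for a pre-DPO baseline and a post-DPO candidate.
baseline_scores = {"safety": 0.95, "accuracy": 0.80, "reasoning": 0.60}
candidate_scores = {"safety": 0.96, "accuracy": 0.80, "reasoning": 0.55}

failed = [r.metric for r in check_regressions(baseline_scores, candidate_scores) if not r.passed]
print("Blocked by:", failed)
```

In this made-up example the candidate improves on safety but is blocked because reasoning dropped by more than the tolerance, which is exactly the kind of capability drift the gate is meant to catch before deployment.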

For Technology Infrastructure Teams: Heterogeneous Compute is Key

As alignment methods become decoupled from monolithic RL frameworks, infrastructure planning can become more flexible. The focus shifts to optimizing throughput on diverse hardware—whether utilizing newer, cost-effective accelerators or maximizing utilization on existing NVIDIA or AMD fleets. Efficiency in alignment algorithms translates directly into efficient hardware planning.

Actionable Insights for Today's AI Leader

The synthesis of current trends provides a clear path forward:

- Audit Current Alignment Pipelines: If you still rely solely on full RLHF/PPO pipelines, start a feasibility study for migrating to DPO. Quantify the expected savings in GPU hours and time-to-deployment.

- Invest in Preference Data Curation: Because DPO optimizes the policy directly on preference pairs rather than through an intermediate Reward Model, the quality of those pairs largely determines the quality of the result. Human labeling effort is best spent curating clear, consistent "Good vs. Bad" examples. Treat this data as your most valuable asset.

- Standardize on Open Benchmarks: Use industry surveys and academic comparisons [2] to establish objective quality gates. Do not adopt a new alignment method based on hype alone; prove its performance on tasks critical to your domain.
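As a starting point for the data curation step in the list above, the sketch below shows one possible shape for a preference record plus a few basic hygiene checks. The field names `prompt`, `chosen`, and `rejected` follow a common convention but are an assumption here, as are the specific checks and the example records; real pipelines would add domain-specific review on top.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PreferencePair:
    prompt: str
    chosen: str    # the preferred ("Good") response
    rejected: str  # the dispreferred ("Bad") response


def validate_pairs(pairs: list[PreferencePair]):
    """Split pairs into usable records and (index, reason) problems."""
    clean, problems = [], []
    seen = set()
    for i, pair in enumerate(pairs):
        if not (pair.prompt.strip() and pair.chosen.strip() and pair.rejected.strip()):
            problems.append((i, "empty field"))
        elif pair.chosen.strip() == pair.rejected.strip():
            # Identical responses carry no preference signal.
            problems.append((i, "chosen equals rejected"))
        elif pair in seen:
            problems.append((i, "duplicate"))
        else:
            seen.add(pair)
            clean.append(pair)
    return clean, problems


# Hypothetical records for illustration.
good = PreferencePair("How do I reset my password?",
                      "Go to Settings > Security and choose Reset Password.",
                      "I don't know.")
pairs = [
    good,
    PreferencePair("Summarize the ticket.", "Same text", "Same text"),
    PreferencePair("", "a", "b"),
    good,  # exact duplicate of the first record
]
clean, problems = validate_pairs(pairs)
print(len(clean), problems)
```

Checks like these are cheap to run on every dataset revision and catch the records that would add noise rather than signal to a DPO run.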

The evolution from PPO to DPO is more than just swapping one algorithm for another; it is a necessary technological refinement that makes advanced AI alignment practical, affordable, and deployable at the scale enterprise demands. The future belongs to those who can align models quickly and cost-effectively, and DPO is currently leading that charge.