The Wikipedia Schism: Why Generative AI is Forcing Digital Communities to Choose Between Speed and Sovereignty

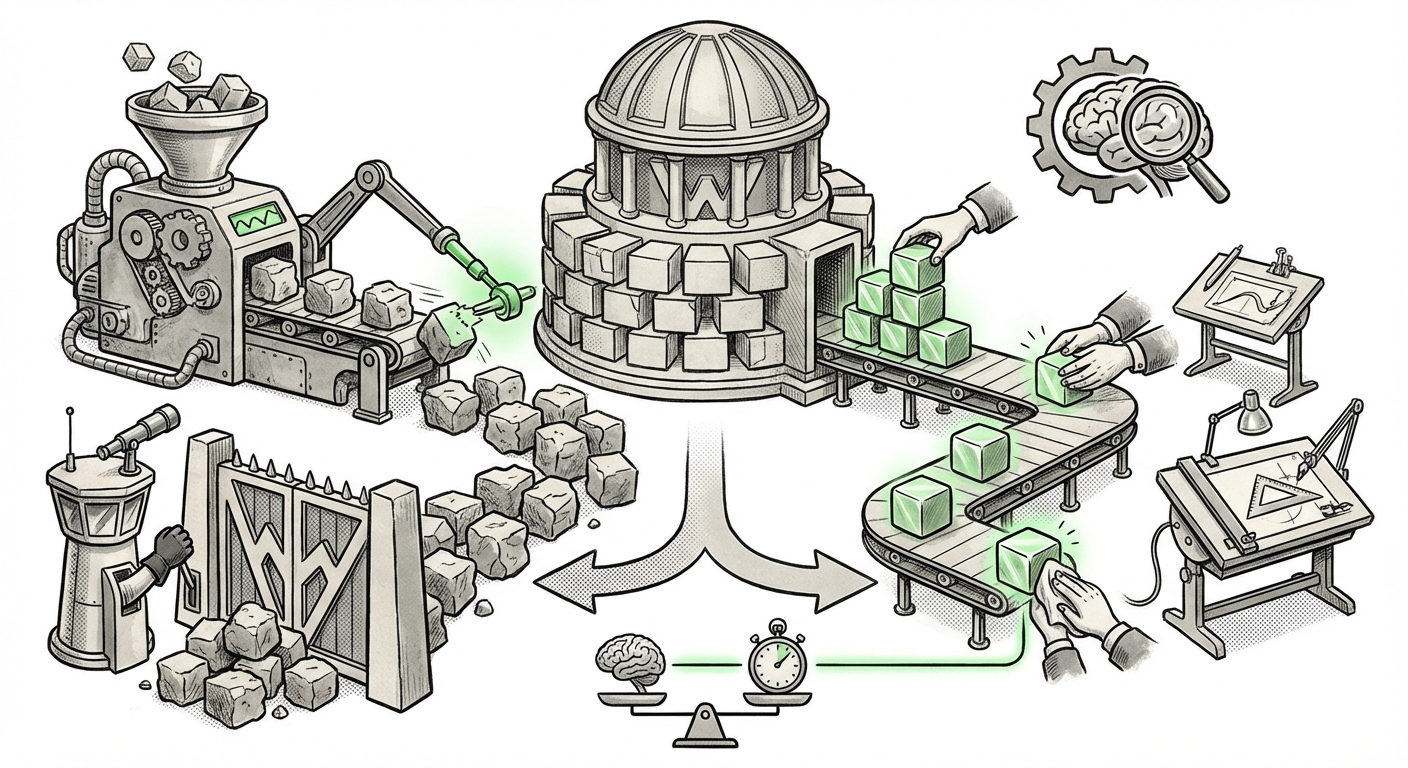

The digital landscape is facing a profound test of trust, centralized control, and community governance, catalyzed by the rapid spread of Large Language Models (LLMs). Nowhere is this tension more visible than in the sprawling ecosystem of Wikipedia, the world's largest collaborative knowledge base. A recent development has crystallized the conflict: the German-language Wikipedia community enacted a sweeping ban on AI-generated content, putting it directly at odds with the more flexible, adaptive approach favored by the Wikimedia Foundation and other language editions.

This divergence is more than a localized editorial dispute. It is a case study in how established, human-centric knowledge platforms must confront two challenges at once: keeping pace with technological acceleration and preserving the epistemic integrity of what they publish. For businesses and technologists building the next generation of AI applications, understanding this friction between speed and sovereignty is essential.

The Core Conflict: Ban vs. Adapt

The German-language Wikipedia editors' decision signals that they prioritize quality assurance and source verification over the efficiency gains offered by generative AI tools. Their stance is hardline: content created purely by machines is prohibited. This reflects deep skepticism about the current state of LLMs, particularly their handling of nuance, their factual grounding (the problem of hallucinations), and the risk that copyrighted material from their training data resurfaces in generated text.

In contrast, the broader Wikimedia Foundation and several other language communities are exploring a "softer approach." This typically involves framing AI as a powerful tool—an advanced grammar checker, a summarization assistant, or a first-draft generator—but always insisting on rigorous human oversight and accountability for every published word. The philosophy here is one of integration rather than exclusion, aiming to leverage AI to lower the barrier to contribution without sacrificing the rigorous citation standards inherent to Wikipedia.

This fundamental disagreement forces us to ask: When does an AI-assisted contribution cease to be human-authored, and when does efficiency compromise the very definition of knowledge integrity?

The Foundation’s Roadmap vs. Local Enforcement

To understand the pressure points, one must examine the directives from the top down. The Wikimedia Foundation's guidance on generative AI has tended to emphasize cautious experimentation, on the view that banning the technology outright could leave its projects irrelevant in an AI-driven world. The Foundation recognizes that editors who use AI to translate text or structure data could speed up article creation significantly, particularly in smaller, underserved language communities.

However, Wikipedia's governance is decentralized. Each language community holds ultimate authority over content policy for its own edition. The German community, known for its high standards and long-standing emphasis on precise sourcing, appears to have judged that the risks of current AI outputs, namely subtle falsehoods and potential plagiarism, outweigh the speed benefits. This localized, deeply engaged skepticism acts as a powerful check on institutional enthusiasm for rapid deployment.

The Academic and Philosophical Firewall Against Synthetic Content

The concerns voiced within Wikipedia are mirrored across academic and scholarly publishing, where the debate over using LLMs to write reference content centers on epistemic authority. For an encyclopedia, the value lies not just in the information itself but in the traceable, verifiable human consensus that produced it.

If an LLM creates a perfectly worded paragraph on the nuances of 18th-century German trade routes, but that paragraph is synthesized from ten different, unverified sources, who is responsible if a single fact is wrong? The original human editor who accepted the output without deep re-checking? The model developers? The German community seems to be drawing a line: if the initial creation is not human, the chain of accountability is broken.

This skepticism is rooted in the phenomenon of AI hallucination. LLMs excel at pattern recognition and fluent prose, but they generate text by predicting plausible continuations rather than by checking claims against sources. On niche or complex subjects, exactly the areas where Wikipedia prides itself on depth, LLMs can confidently assert falsehoods, making the content actively harmful to an objective knowledge base.

Broader Industry Trends: The Human Oversight Imperative

Wikipedia is not acting in a vacuum. The tension it faces reflects an industry-wide reckoning over how AI-generated content should be balanced against human oversight in digital publishing.

- News Media: Many major news organizations have introduced strict guidelines requiring clear disclosure for AI-assisted articles and placing senior editors in the final sign-off loop, effectively treating AI as a junior reporter whose work must be fact-checked against primary sources.

- Scientific Journals: Prominent scientific publishers have generally barred LLMs from being listed as authors, explicitly stating that authorship requires accountability, which an AI cannot provide. Where they permit AI assistance with drafting or editing, they require it to be fully disclosed, for example in the methods or acknowledgments section.

- Collaborative Coding (e.g., GitHub): Tools like GitHub Copilot are embraced for efficiency, but the fundamental expectation remains that the human developer is responsible for the security and functionality of the code they commit. A failed AI-generated segment is still the programmer's liability.

Wherever correctness or liability matters, the same pattern emerges: AI accelerates the draft, but the human must own the final publication. The German Wikipedia's decision pushes this standard one step further back, requiring that the human not only own the final text but write it, so that accountability begins at the drafting stage.

What This Means for the Future of AI and Knowledge Integrity

The "Wikipedia Schism" offers critical insights into the short-to-medium-term trajectory of knowledge technology, affecting businesses that rely on information accuracy and content creation.

1. The Rise of 'Trust Tiers' in Digital Content

We are entering an era in which content will be implicitly or explicitly sorted into tiers of trustworthiness. Content carrying labels such as **"Human-Vetted Text (HVT)"** or **"Zero AI Input"** will likely command a premium in sectors like law, medicine, and specialized finance. Conversely, rapidly generated, low-stakes content (e.g., basic marketing copy, quick summaries) will lean heavily on AI for speed.

For businesses, deciding which tier their public-facing content belongs to will become a strategic necessity. Using unverified AI output for core product documentation or legal disclosures, for instance, could expose a company to immediate reputational and financial risk, mirroring the concerns behind the German ban.

2. Governance Will Define Adoption Speed

The speed at which enterprises adopt generative AI is no longer purely a technical question; it is a governance question. Communities that move too fast without clear, enforceable policies on verification, attribution, and liability risk internal revolt or a visible collapse in quality. The German Wikipedia episode serves as a warning: if a community feels its core mission is threatened by the technology, it will assert its local sovereignty.

3. The Importance of Nuance and Localization

Why did the German edition ban AI while others adopted a softer stance? One possible explanation is that AI's current weaknesses are amplified in certain linguistic or cultural contexts. LLMs are typically trained predominantly on English data. When asked to generate content in German, a language with extensive compounding, rich inflection, and its own cultural context, the models may struggle more to capture subtle points accurately, producing errors that are more pervasive yet harder to spot.

Actionable Insight for Businesses: Do not assume a single global AI policy will work everywhere. Any company operating in multiple languages should stress-test AI outputs locally, because both the error rate and the availability of subject-matter experts to catch errors vary by language and topic.

Actionable Insights for Technologists and Strategists

As we move forward, the goal is not to halt progress but to build AI systems that respect the need for verifiable knowledge sovereignty.

For AI Developers: Focus efforts on improving grounding and attribution mechanisms. Future models must provide traceable pathways back to their source material. If an AI can cite the sources behind each claim, with confidence scores that reliably reflect accuracy, the adoption friction felt by platforms like Wikipedia should decrease significantly.
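What "traceable pathways" might mean in practice can be sketched as a data structure. The example below is a minimal sketch, assuming a hypothetical output format in which a model returns each generated claim together with the sources it relied on and a self-reported confidence score. The names `Claim`, `Citation`, and `unsupported_claims`, the `example.org` URLs, and the 0.8 threshold are illustrative only, not any real model's API. The point is that such metadata would let an editor go straight to the sentences that still need human checking.

```python
from dataclasses import dataclass, field


@dataclass
class Citation:
    """Hypothetical pointer from a generated claim back to a source."""
    url: str
    quote: str  # passage in the source that is supposed to support the claim


@dataclass
class Claim:
    """One generated sentence plus hypothetical attribution metadata."""
    text: str
    confidence: float  # model-reported score in [0, 1]; assumed, not a standard field
    citations: list[Citation] = field(default_factory=list)


def unsupported_claims(claims: list[Claim], min_confidence: float = 0.8) -> list[Claim]:
    """Return the claims a human editor should verify first:
    any claim with no citation, or with confidence below the threshold."""
    return [c for c in claims if not c.citations or c.confidence < min_confidence]


# Illustrative draft with placeholder claims (no real facts or sources).
draft = [
    Claim("Claim A: cited, high confidence.", 0.95,
          [Citation("https://example.org/source-a", "supporting passage A")]),
    Claim("Claim B: no citation at all.", 0.90),
    Claim("Claim C: cited, but low confidence.", 0.55,
          [Citation("https://example.org/source-c", "supporting passage C")]),
]

for claim in unsupported_claims(draft):
    print(claim.text)
# Claim B: no citation at all.
# Claim C: cited, but low confidence.
```

In this sketch a citation is only a pointer: confirming that the quoted passage exists and actually supports the claim still falls to a human, which is precisely the accountability gap the German community is worried about.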

For Platform Managers and Content Strategists: Implement clear "AI Disclosure Standards" now. Whether you ban AI completely or treat it as an assistant, transparency builds user trust. Invest in tools that flag likely AI-generated text for mandatory human review rather than only for deletion, a practical bridge between the German ban and the Foundation's adaptive path.
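The review-first workflow described above can be expressed as a simple routing rule. This is a minimal sketch, assuming two hypothetical signals per edit: the editor's self-declared AI disclosure and a score from some AI-text classifier. The `Edit` fields, the `route` function, and the 0.7 threshold are illustrative assumptions, not part of any Wikipedia or Wikimedia tool.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PUBLISH = "publish"
    HUMAN_REVIEW = "human_review"


@dataclass
class Edit:
    edit_id: str
    disclosed_ai_use: bool  # editor's own declaration under an AI disclosure standard
    detector_score: float   # hypothetical AI-text classifier output in [0, 1]


def route(edit: Edit, threshold: float = 0.7) -> Action:
    """Send disclosed or likely AI-generated edits to a mandatory human
    review queue; nothing is deleted automatically at this stage."""
    if edit.disclosed_ai_use or edit.detector_score >= threshold:
        return Action.HUMAN_REVIEW
    return Action.PUBLISH


queue = [
    Edit("rev-101", disclosed_ai_use=False, detector_score=0.12),
    Edit("rev-102", disclosed_ai_use=True, detector_score=0.30),
    Edit("rev-103", disclosed_ai_use=False, detector_score=0.91),
]
for e in queue:
    print(e.edit_id, route(e).value)
# rev-101 publish
# rev-102 human_review
# rev-103 human_review
```

Routing to review rather than rejecting outright is a deliberate choice here: automated detectors can misclassify human writing, so the decision to keep, fix, or remove an edit stays with a person. Under a German-style ban, reviewers would remove the AI-generated text; under the Foundation's adaptive path, they would verify it and keep what holds up.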

For Policy Makers: The friction highlights a regulatory gap. Who governs synthetic content that erodes shared factual reality? Policymakers must look at these platform governance battles as early indicators of where societal standards for digital truth are being set, long before legislative action is taken.

Conclusion: The Future is Hybrid, But Trust is the Currency

The conflict tearing through the Wikipedia landscape, speed versus sovereignty, is arguably one of the defining technological struggles of this decade. Generative AI promises unprecedented productivity, capable of drafting an entire library overnight. Yet for knowledge platforms built on consensus and verifiable contribution, speed without control risks rapid degradation.

The lesson emerging from this "Wikipedia Schism" is that the future of reliable digital knowledge will be neither purely human nor purely synthetic. It will be a hybrid ecosystem: AI will handle the heavy lifting of drafting, summarizing, and initial classification, while human expertise, guided by community standards and accountability, remains the indispensable final layer of authentication. The platforms that thrive will be those that successfully marry AI's efficiency with human-enforced, context-aware standards of truth.