Beyond Generation: Why Yann LeCun’s JEPA and World Models Are the Future of AGI

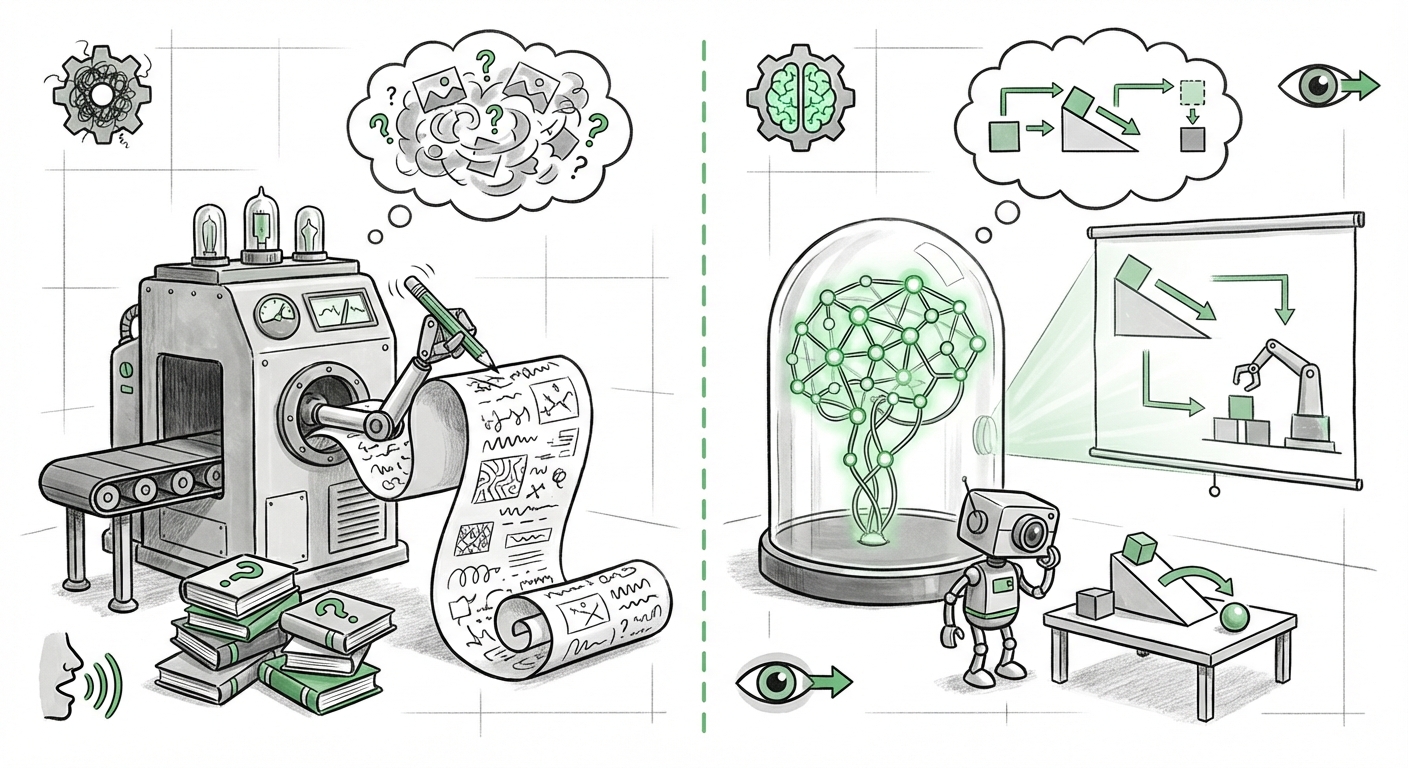

For the past several years, the landscape of Artificial Intelligence has been dominated by generative models. Whether it's crafting strikingly realistic images from text prompts or writing code that rivals junior developers, Large Language Models (LLMs) and diffusion models have captured the public imagination and attracted massive investment. However, a rising counter-movement, championed by AI pioneer Yann LeCun, suggests that true Artificial General Intelligence (AGI) won't be built by teaching machines to generate the world, but by teaching them to *predict* it.

This shift centers on the concept of World Models, built around a specific architecture called the Joint Embedding Predictive Architecture (JEPA). This isn't just a minor technical update; it represents a fundamental philosophical pivot in how we aim to imbue machines with common sense and predictive reasoning.

The Generative Ceiling: Why Prediction Is More Important Than Production

Current state-of-the-art models excel at pattern matching. LLMs, for instance, are trained to predict the next statistically likely token (a word or word fragment) in a sequence. While this produces fluent, coherent text, LeCun and others argue that this objective alone does not give a model a deep understanding of the underlying reality being described. Such models are strong at symbolic representation but weak at physical representation.

Imagine asking an AI to understand a physical interaction: if you push a block off a table, what happens? A generative model might describe the result based on millions of texts it has read about gravity and falling objects. A predictive World Model, however, aims to build an internal, abstract representation of the block's physics—its mass, velocity, and trajectory—and simulate the outcome internally before generating a response. This is the crucial difference: understanding causality versus mimicking language.
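To make "simulate the outcome internally" concrete, here is a minimal sketch of what rolling a model forward in time looks like. It is only an illustration under loud assumptions: the state (position and velocity) and the transition function (hand-coded projectile motion, one semi-implicit Euler step per tick) are hypothetical stand-ins. In a JEPA-style World Model, both the state and the transition would be learned, abstract embeddings rather than physics written by hand.

```python
from dataclasses import dataclass

GRAVITY = 9.81  # m/s^2
DT = 0.01       # simulation time step in seconds


@dataclass(frozen=True)
class BlockState:
    x: float   # horizontal position (m); the table edge is at x = 0
    y: float   # height above the floor (m)
    vx: float  # horizontal velocity (m/s)
    vy: float  # vertical velocity (m/s)


def step(s: BlockState) -> BlockState:
    """Hypothetical transition function: one semi-implicit Euler step of free fall."""
    vy = s.vy - GRAVITY * DT
    return BlockState(s.x + s.vx * DT, s.y + vy * DT, s.vx, vy)


def rollout_until_landing(s: BlockState, max_steps: int = 10_000) -> tuple[BlockState, int]:
    """'Imagine' the future by applying step() repeatedly until the block reaches the floor."""
    for n in range(max_steps):
        if s.y <= 0.0:
            return s, n
        s = step(s)
    return s, max_steps


# A block pushed off a 0.75 m table edge at 1 m/s.
landed, n_steps = rollout_until_landing(BlockState(x=0.0, y=0.75, vx=1.0, vy=0.0))
print(f"lands about {landed.x:.2f} m from the table after ~{n_steps * DT:.2f} s")
```

The point is the shape of the computation: a state, a transition function, and a loop that answers "what happens next?" before anything happens in the real world.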

The JEPA Breakthrough: Learning the "What If"

JEPA is LeCun’s proposed architectural solution to this problem. It does not require the model to predict every detail of the future (such as every pixel in the next video frame). Predicting raw pixels is computationally wasteful, and much of that detail (the exact flicker of leaves in the wind, for example) is inherently unpredictable and irrelevant to high-level reasoning.

Instead, JEPA works in a compressed, abstract space called the latent space. This is like creating a highly efficient summary or blueprint of the world. Here is how it differs, conceptually:

- Compression: JEPA learns to summarize complex data (like an image or a scene) into a compact, meaningful representation (the latent embedding).

- Prediction in Latent Space: Instead of predicting the next raw pixel, the model predicts the *summary* or *embedding* of the next moment in time.

- Abstraction: Because the model does not have to reproduce minor, irrelevant details (like slight lighting changes or background noise), it can concentrate on the essential elements that define causality and structure.
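The three ideas above can be sketched as a toy training objective. This is a minimal, hypothetical illustration assuming linear encoders, made-up sizes (64 input values, 8 latent values), and random weights; names such as `ToyJEPA` and `ema_update` are ours, not from any JEPA codebase. It follows the general structure described in Meta's I-JEPA work: a context encoder, a target encoder whose weights track an exponential moving average (EMA) of the context encoder, and a predictor, with the loss measured between embeddings rather than raw inputs.

```python
import random


def matvec(w, v):
    """Multiply matrix w (a list of rows) by vector v."""
    return [sum(wi * vi for wi, vi in zip(row, v)) for row in w]


def rand_matrix(rows, cols, rng):
    """Small random weights standing in for a trained network."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


class ToyJEPA:
    """Hypothetical JEPA-style objective with linear encoders and toy sizes."""

    def __init__(self, input_dim=64, latent_dim=8, seed=0):
        rng = random.Random(seed)
        self.context_encoder = rand_matrix(latent_dim, input_dim, rng)
        # The target encoder starts as a copy and is updated only by EMA, never by gradients.
        self.target_encoder = [row[:] for row in self.context_encoder]
        # Real predictors also receive *which* part to predict (e.g. positional
        # mask tokens); this toy predictor sees only the context embedding.
        self.predictor = rand_matrix(latent_dim, latent_dim, rng)

    def loss(self, x_context, y_target):
        s_x = matvec(self.context_encoder, x_context)  # embed what the model sees
        s_y = matvec(self.target_encoder, y_target)    # embed what it must predict
        s_y_hat = matvec(self.predictor, s_x)          # predict s_y from s_x
        # Squared L2 distance measured in latent space, never in input (pixel) space.
        return sum((a - b) ** 2 for a, b in zip(s_y_hat, s_y))

    def ema_update(self, tau=0.996):
        """target <- tau * target + (1 - tau) * context; tau = 0.996 is illustrative."""
        for t_row, c_row in zip(self.target_encoder, self.context_encoder):
            for j, c in enumerate(c_row):
                t_row[j] = tau * t_row[j] + (1 - tau) * c


# Stand-ins for "the current moment" and "the next moment" (64 raw values each).
rng = random.Random(1)
frame_now = [rng.random() for _ in range(64)]
frame_next = [v + rng.gauss(0.0, 0.01) for v in frame_now]

model = ToyJEPA()
print(f"latent-space loss: {model.loss(frame_now, frame_next):.4f}")
```

A pixel-space objective would compare all 64 input values (or 224 × 224 × 3 = 150,528 values for a standard RGB image crop); here the comparison happens over 8 latent numbers. In real training, the context encoder and predictor would be updated by gradient descent on this loss, with `ema_update` called afterwards. Keeping the target encoder out of the gradient path is one of the mechanisms I-JEPA relies on to stop both encoders from collapsing to a constant output, which would make the loss trivially zero.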

Proponents argue that this approach is a far more efficient route to general intelligence: working in latent space lets the AI learn the underlying rules governing reality without getting bogged down in the noise of every possible sensory input.

Corroborating the Shift: Where JEPA Fits in the AI Ecosystem

The push toward predictive World Models is not happening in a vacuum; the debate it reflects is central to current cutting-edge AI research.

The Embodied AI Imperative

The most direct beneficiaries of JEPA-style World Models are systems that interact with the physical world: robotics and autonomous agents. When an AI needs to navigate a messy kitchen or safely operate a drone, generating text about navigation is useless; it needs a reliable internal simulator of physics.

World Models matter for Embodied AI and robotics because they promise agents that can learn safer, more complex tasks with less real-world trial and error. A robot could run a large number of planning scenarios internally within its JEPA-derived World Model, simulating failures without causing physical damage, before deploying a refined strategy in the real world. If this works at scale, it would substantially ease the data bottleneck that currently plagues robotics training.
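One simple, well-known way to plan inside a model is model-predictive control with random shooting: sample many candidate action sequences, roll each one forward through the model, score the imagined outcomes, execute only the first action of the best sequence, then re-plan. The sketch below assumes a hypothetical 1-D robot whose "world model" is a hand-written function, plus made-up goal, cliff, and cost values; it is not how any particular JEPA system plans, and real systems may use more sophisticated optimizers over a learned latent model.

```python
import random

GOAL = 5.0     # hypothetical target position for a 1-D point robot
CLIFF = -1.0   # positions below this count as "damage", but only in imagination
HORIZON = 10   # imagined steps per candidate plan


def imagined_step(position, action):
    """Stand-in for a learned world model: predict the next state from state and action."""
    return position + action


def plan_cost(position, actions):
    """Roll a candidate plan forward inside the model and score the imagined outcome."""
    cost = 0.0
    for a in actions:
        position = imagined_step(position, a)
        cost += abs(GOAL - position)   # prefer plans that approach the goal quickly
        cost += 0.01 * a * a           # small effort penalty
        if position < CLIFF:
            cost += 100.0              # simulated failure: penalized, nothing real breaks
    return cost


def plan(position, n_candidates=1000, seed=0):
    """Random-shooting MPC: return the first action of the cheapest imagined plan."""
    rng = random.Random(seed)
    best_actions, best_cost = None, float("inf")
    for _ in range(n_candidates):
        actions = [rng.uniform(-1.0, 1.0) for _ in range(HORIZON)]
        cost = plan_cost(position, actions)
        if cost < best_cost:
            best_actions, best_cost = actions, cost
    return best_actions[0], best_cost


# Receding horizon: execute one action, observe the new state, re-plan.
# For this toy, the "real" world is assumed to behave exactly like the model.
position = 0.0
for t in range(20):
    action, _ = plan(position, seed=t)
    position = imagined_step(position, action)
print(f"position after 20 real steps: {position:.2f} (goal {GOAL})")
```

Each call to `plan` evaluates 10,000 imagined steps but commits to only one real action, which is the trade the paragraph describes: cheap failures in simulation in exchange for fewer costly ones in the physical world.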

The Philosophical Divide: Predictive vs. Autoregressive

To fully grasp the importance of JEPA, one must understand what LeCun is arguing against: purely generative models as a path to AGI. Generative models, particularly autoregressive ones (like LLMs that predict token by token), are masters of correlation but often fail at causation. They learn that "fire trucks are red" because those words frequently appear together, but they don't understand the *property* of redness or the *function* of a fire truck.
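To see what "predicting token by token from correlation" means mechanically, here is a deliberately tiny autoregressive model: a bigram table that counts which word follows which in a made-up corpus, then generates greedily, one token at a time. Real LLMs use neural networks over subword tokens and far longer contexts, so this is an illustration of the objective, not of LLM internals; the corpus is hypothetical.

```python
from collections import Counter, defaultdict

corpus = [
    "fire trucks are red",
    "fire trucks are loud",
    "fire trucks are red and fast",
    "apples are red",
]

# Count how often each word follows each other word (pure co-occurrence statistics).
follows: dict[str, Counter] = defaultdict(Counter)
for sentence in corpus:
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    for prev, nxt in zip(tokens, tokens[1:]):
        follows[prev][nxt] += 1


def generate(prompt: str, max_tokens: int = 10) -> str:
    """Greedy autoregressive decoding: repeatedly append the most frequent next word."""
    tokens = prompt.split()
    for _ in range(max_tokens):
        candidates = follows.get(tokens[-1])
        if not candidates:
            break  # the table has never seen this word, so it cannot continue
        nxt, _ = candidates.most_common(1)[0]
        if nxt == "</s>":
            break
        tokens.append(nxt)
    return " ".join(tokens)


print(generate("fire trucks"))  # fire trucks are red
```

The table "knows" fire trucks are red only because "red" most often follows "are" in its data; it produces the same continuation for apples. Nothing in it represents color or what a fire truck does.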

When LeCun critiques this approach, he highlights its inherent inefficiency for robust intelligence. Why generate billions of sentences if all you need is a functional understanding of object permanence? The focus on generative output forces the model to solve two problems at once: understanding the world *and* synthesizing realistic data about it. JEPA attempts to decouple these, focusing solely on the understanding part first.

Grounding the Theory in Academia

Any major architectural proposal requires a rigorous academic foundation, and reading the original JEPA papers is necessary to move past popular summaries. LeCun's 2022 position paper, "A Path Towards Autonomous Machine Intelligence," lays out the overall proposal, while follow-up papers on arXiv such as I-JEPA (images) and V-JEPA (video) specify the training objectives and loss functions and report benchmark comparisons against other self-supervised methods. This level of detail is crucial for engineers tasked with building the next generation of AI infrastructure.
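As one concrete example of such a loss function, the I-JEPA paper (Assran et al., 2023) trains on images by predicting the target encoder's representations of several masked target blocks from a single context block. The notation below is our paraphrase, so check the paper for exact definitions: $M$ is the number of target blocks, $B_i$ the set of patch indices in block $i$, $s_{y_j}$ the target-encoder embedding of patch $j$, $\hat{s}_{y_j}$ the predictor's estimate of it, $\theta$ the context-encoder weights, $\bar{\theta}$ the target-encoder weights, and $\tau$ a momentum close to 1.

```latex
\mathcal{L} = \frac{1}{M} \sum_{i=1}^{M} \sum_{j \in B_i}
  \left\lVert \hat{s}_{y_j} - s_{y_j} \right\rVert_2^2,
\qquad
\bar{\theta} \leftarrow \tau\,\bar{\theta} + (1 - \tau)\,\theta
```

The loss is computed entirely between embeddings, and the target encoder is not trained by gradients on $\mathcal{L}$; it only tracks an exponential moving average of the context encoder.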

Future Implications: What This Means for Businesses and Society

The dominance of generative AI has focused business strategy on content creation, marketing copy, and immediate text synthesis. The JEPA/World Model shift suggests that the next wave of enterprise value could come from AI that can *act* intelligently and understand complex, dynamic environments.

For Business: Smarter Automation and Digital Twins

If AI moves from being a sophisticated parrot to a sophisticated simulator, the impact on industry could be profound. Companies relying on automation, logistics, and complex process management stand to benefit most.

1. **Hyper-Accurate Digital Twins:** Businesses can create high-fidelity digital replicas of factories, supply chains, or even complex biological systems. JEPA-based models could power these twins, letting managers run predictive "what-if" scenarios with greater confidence than current simulation software allows, anticipating cascading failures or identifying optimal resource deployment.

2. **Next-Generation Robotics:** Manufacturing, warehousing, and last-mile delivery could become far more adaptable. Robots powered by predictive world models would not just follow pre-programmed paths; they could handle novel obstacles (like an unexpected pile of boxes or a slippery floor) by predicting the consequences of their actions before acting.

3. **Scientific Discovery:** In areas like drug discovery or materials science, an AI that can predict molecular interactions based on underlying physical laws (its internal world model) could substantially accelerate R&D, moving beyond mere data correlation.

Societal Implications: Toward More Reliable AI

The shift away from pure generation also has significant societal implications, particularly regarding reliability and safety.

Generative models are prone to "hallucination" (producing confident but factually incorrect output) because their training objective rewards statistical fluency, not accuracy relative to reality. A predictive model trained to capture the physical constraints of the world should, in principle, be more grounded.

When self-driving cars or automated medical diagnostic tools rely on internal world models, the baseline expectation for error should decrease. Such an AI is less likely to devise a logically coherent yet physically impossible solution, because its latent space is constrained by the regularities of physics and causality it has learned.

Actionable Insights: Preparing for the Predictive Era

For technologists and leaders, the writing is on the wall: the foundational research emphasis is moving from output quality to internal understanding. Here is what leaders should be doing now:

- Invest in Latent Representation Skills: Teams need to pivot from focusing purely on prompt engineering and diffusion parameters toward understanding embedding spaces, contrastive learning, and predictive coding frameworks. Expertise in handling high-dimensional latent representations will command a premium.

- Prioritize Embodied Data Pipelines: If your business involves physical assets (robotics, machinery, infrastructure), start structuring your data collection to support building robust *sequences* of sensory input, rather than just static data dumps. This data will feed the World Models directly.

- Evaluate Current Systems for World Understanding Gaps: Audit any critical AI system (especially autonomous ones) to see where current generative or correlational methods might break down when faced with novel, real-world edge cases. JEPA offers a potential roadmap for hardening these systems against unexpected variance.
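For the point about collecting *sequences* rather than static dumps, one minimal way to structure logged sensor data is as time-ordered records that can be sliced into (context, target) windows, which is the shape a predictive model needs for "given what just happened, predict what comes next." The record fields, window sizes, and function names below are hypothetical illustrations, not a standard schema.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Step:
    timestamp: float                # seconds since the episode started
    observation: tuple[float, ...]  # e.g. flattened sensor readings
    action: tuple[float, ...]       # command issued at this step (empty if passive)


def make_windows(episode: Sequence[Step], context_len: int, horizon: int):
    """Slice one time-ordered episode into (context, target) training pairs.

    Windows never cross episode boundaries, so each pair is a contiguous
    stretch of real experience.
    """
    if any(b.timestamp <= a.timestamp for a, b in zip(episode, episode[1:])):
        raise ValueError("episode must be strictly time-ordered")
    pairs = []
    for start in range(len(episode) - context_len - horizon + 1):
        context = episode[start : start + context_len]
        target = episode[start + context_len : start + context_len + horizon]
        pairs.append((list(context), list(target)))
    return pairs


# A toy 6-step episode from a hypothetical 1-D sensor with a constant motor command.
episode = [Step(t * 0.1, (float(t),), (1.0,)) for t in range(6)]
pairs = make_windows(episode, context_len=3, horizon=1)
print(len(pairs))  # 3
```

Storing actions alongside observations matters for robotics: a world model that is meant to predict the consequences of its own actions needs to see which action preceded each observed change.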

The race for AGI is entering a fascinating new phase. While the generative models of today have shown us the power of scale, Yann LeCun’s advocacy for JEPA and World Models suggests the path to true intelligence lies not in mimicking the surface of reality, but in building an efficient, internal blueprint of how that reality works.