The Next Frontier: How MoE, Custom Silicon, and Mamba Are Reshaping AI Hardware and Architecture

Artificial Intelligence, particularly the Large Language Model (LLM) landscape, is evolving at a staggering pace. What was state-of-the-art six months ago is already being optimized, challenged, or rendered obsolete. The foundation of this rapid change lies at the intersection of two critical domains: how we build the models (architecture) and what we run them on (hardware).

Recent analyses focusing on enterprise-ready hardware, such as the AMD MI355X accelerator, alongside discussions of foundational architectures like the Transformer and its sparse variant, Mixture-of-Experts (MoE), reveal a clear direction: AI is moving toward massive scale paired with radical efficiency. This analysis synthesizes these developments, exploring the competitive hardware arena, the architectural shifts already underway, and the ultimate implications for enterprise deployment.

The Architectural Split: Efficiency Through Sparsity (MoE)

For years, the Transformer architecture, with its powerful self-attention mechanism, has reigned supreme. However, a dense Transformer relies on brute force: every parameter takes part in the computation for every token processed. This leads to massive computational requirements for both training and inference.

Understanding Mixture-of-Experts (MoE)

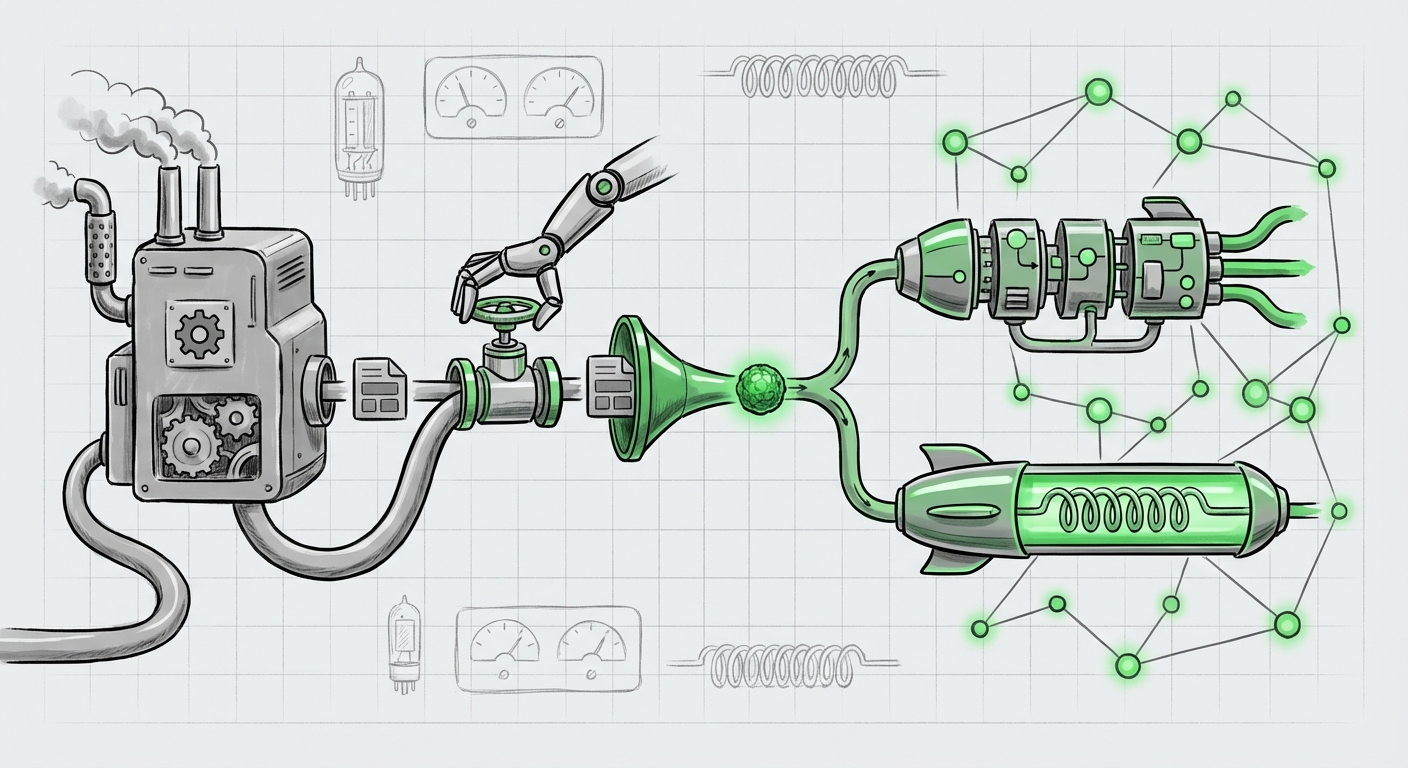

The Mixture-of-Experts model is the industry's current primary answer to this computational bottleneck. Think of a dense model as one massive supercomputer that brings its full capacity to bear on every problem, however simple. An MoE model, by contrast, is like a network of specialized experts, only a few of whom are consulted at a time.

When an input arrives, a small routing network decides, for each token, which "expert" modules in the model should handle it. Only those selected experts activate, a pattern known as conditional computation. While the total number of parameters in an MoE model might be huge (making it powerful), the computation required for any single token, in inference or training, is significantly lower, sometimes by a factor of two or more.
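The routing step described above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not any particular model's implementation: the four toy experts, the `router_logits` values, and the choice of `top_k=2` are all hypothetical, and a real router would compute its scores from the token with learned weights.

```python
import math

def softmax(scores):
    """Convert raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_token(router_logits, top_k=2):
    """Pick the top_k experts for one token and renormalize their gate weights."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in chosen)
    return {i: probs[i] / norm for i in chosen}

def moe_layer(token, experts, router_logits, top_k=2):
    """Run only the selected experts and mix their outputs by gate weight."""
    gates = route_token(router_logits, top_k)
    out = [0.0] * len(token)
    for idx, weight in gates.items():
        expert_out = experts[idx](token)  # unselected experts are never called
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out, gates

def make_expert(scale):
    """Toy expert (assumption): scales the input by a fixed factor."""
    return lambda x: [scale * v for v in x]

experts = [make_expert(s) for s in (1.0, 2.0, 3.0, 4.0)]
token = [1.0, -1.0]
router_logits = [0.1, 2.0, 0.3, 1.0]  # hypothetical router scores for this token

output, gates = moe_layer(token, experts, router_logits, top_k=2)
active_fraction = len(gates) / len(experts)
print(gates, output, active_fraction)
```

In this toy setup only two of the four experts run for the token, so half of the expert computation is skipped even though all four experts exist in the model.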

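For the Business Audience: This means lower compute per request, which typically translates into faster responses (lower latency) and lower running costs (cheaper inference) for models with far more total parameters. Note that all experts must still be held in memory, so the savings come from compute per token rather than from a smaller memory footprint. If you are deploying customer service bots or advanced code assistants, MoE is a key lever for keeping the monthly cloud bill manageable while offering the capability of a much larger model.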

The Next Leap: Mamba and State Space Models (SSMs)

While MoE adds sparsity to the Transformer, researchers are actively pursuing entirely new foundational structures. State Space Models (SSMs), prominently featuring the Mamba architecture, are gaining serious traction. SSMs aim to solve the core problem of the Transformer: the quadratic scaling of attention.

A Transformer struggles to look at very long sequences (like entire books or complex codebases) because the memory and time required by attention grow quadratically with sequence length. Mamba offers linear scaling. It processes data sequentially but maintains an efficient internal state, allowing it to "remember" context much further back without the computational explosion. This is crucial for applications requiring deep context, such as legal discovery, advanced scientific modeling, or processing high-resolution video streams.
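To make the scaling difference concrete, here is a rough sketch that counts work rather than measuring it. The functions and the `state_size=16` default are illustrative assumptions, and `linear_scan` is a deliberately simplified, fixed-coefficient recurrence; Mamba itself uses input-dependent (selective) parameters and a hardware-aware scan, which this sketch does not attempt to reproduce.

```python
def attention_pair_count(seq_len):
    """Token-to-token score computations in full self-attention: n * n."""
    return seq_len * seq_len

def scan_update_count(seq_len, state_size=16):
    """State updates in a sequential scan with a fixed-size state: n * state_size.
    state_size is an illustrative assumption, not taken from any specific model."""
    return seq_len * state_size

def linear_scan(xs, a=0.9, b=1.0, c=1.0):
    """Simplified scalar state-space recurrence:
    h_t = a * h_{t-1} + b * x_t,  y_t = c * h_t.
    Each step touches only the fixed-size state h, never the full history."""
    h = 0.0
    ys = []
    for x in xs:
        h = a * h + b * x
        ys.append(c * h)
    return ys

for n in (1_000, 10_000, 100_000):
    print(f"n={n:>7}: attention pairs={attention_pair_count(n):>15,}  "
          f"scan updates={scan_update_count(n):>10,}")

# An impulse at t=0 decays through the state instead of being re-read each step.
ys = linear_scan([1.0, 0.0, 0.0], a=0.5)
```

Doubling the sequence length quadruples the attention count but only doubles the scan count, which is the gap the paragraph above describes.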

This architectural evolution signifies a move away from simply building bigger models toward building smarter, faster models that use fewer cycles per token generated.

The Hardware Wars: Custom Silicon Heats Up the AI Accelerator Market

Architecture dictates demand, but hardware dictates feasibility. For years, the AI training landscape has been characterized by NVIDIA’s near-monopoly on high-performance GPUs (like the H100/H200). However, the high cost and supply constraints are forcing major enterprises and cloud providers to seek alternatives.

AMD's Push and the Diversification Mandate

The emergence of hardware like the AMD MI355X (and its predecessors/successors) is significant because it validates the necessity of a competitive landscape. These chips are specifically designed to handle the high-bandwidth memory and matrix multiplication operations central to deep learning. For large organizations concerned about supply chain risk or total cost of ownership (TCO), having a robust alternative to NVIDIA is paramount.

The challenge for AMD, and any challenger, is not just raw FLOPS (floating-point operations per second), but the software ecosystem. Training AI requires mature frameworks, compilers, and libraries. The MI355X’s success relies heavily on AMD’s ability to seamlessly integrate with dominant frameworks like PyTorch, ensuring that an ML engineer can switch hardware with minimal code rework.

The Rise of Dedicated AI ASICs (Intel Gaudi)

The competition is not limited to traditional GPU makers. Companies like Intel, with its Gaudi accelerators, are focusing on Application-Specific Integrated Circuits (ASICs) tailored precisely for AI training workloads. When comparing chips like the Intel Gaudi 3 vs. the AMD MI350 series vs. the NVIDIA H200, we are seeing a fracturing of the market based on workload optimization.

ASICs can sometimes outperform general-purpose GPUs on specific, highly optimized tasks because their design eliminates overhead not needed for AI. This competition is healthy: it drives down costs and accelerates innovation across the board, forcing better performance from everyone involved.

Deployment Realities: Bridging the Gap Between Training and Inference

Training a massive LLM costs millions. Running it effectively—inference—can cost far more over the model's lifetime. This is where engineering optimization becomes mission-critical.

The Necessity of Quantization

To move large models from massive, multi-million-dollar training clusters onto smaller, cheaper inference servers, a technique called quantization is indispensable. In simple terms, quantization means reducing the precision of the numbers used to store the model's knowledge (weights).

Standard AI models often use 16-bit or 32-bit floating-point numbers. Quantization compresses this data down to 8-bit, 4-bit, or even 2-bit integers. This radically shrinks the model’s memory footprint, allowing it to fit onto less powerful hardware and run much faster, as less data needs to be moved around.
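The memory savings follow directly from the bit width, as this back-of-the-envelope sketch shows. The 70-billion-parameter figure is a hypothetical example, and the calculation covers weights only: it ignores activations, the KV cache, and the small overhead of the scale factors that quantized formats store alongside the integers.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Approximate memory needed to store the weights alone, in GiB."""
    total_bytes = num_params * bits_per_weight / 8
    return total_bytes / 1024**3

params = 70_000_000_000  # hypothetical 70B-parameter model

for bits in (32, 16, 8, 4, 2):
    print(f"{bits:>2}-bit weights: {weight_memory_gib(params, bits):8.1f} GiB")
```

Each halving of the bit width halves the weight footprint, which is why a move from 16-bit to 4-bit can decide whether a model fits on a single accelerator at all.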

The key trade-off, monitored closely by MLOps teams, is between efficiency (latency and memory) and accuracy. Pushing quantization too far risks degrading the model's intelligence, making the AI subtly dumber. Mastering 4-bit or lower quantization without significant accuracy loss is the current "secret sauce" for cost-effective, real-time AI deployment in the enterprise.
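The accuracy side of that trade-off can be illustrated with a round trip through a very simple scheme. This sketch assumes symmetric, per-tensor, round-to-nearest quantization and synthetic Gaussian weights; production methods typically use per-channel or per-group scales and calibration data, so treat the numbers as directional only.

```python
import random

def quantize(weights, bits):
    """Symmetric uniform quantization to signed integers of the given width."""
    qmax = 2 ** (bits - 1) - 1           # e.g. 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map the stored integers back to approximate floating-point weights."""
    return [v * scale for v in q]

def mean_abs_error(weights, bits):
    """Average reconstruction error after a quantize/dequantize round trip."""
    q, scale = quantize(weights, bits)
    restored = dequantize(q, scale)
    return sum(abs(w - r) for w, r in zip(weights, restored)) / len(weights)

random.seed(0)
# Synthetic stand-in for a weight tensor (assumption: roughly Gaussian values).
weights = [random.gauss(0.0, 0.02) for _ in range(10_000)]

errors = {bits: mean_abs_error(weights, bits) for bits in (8, 4, 2)}
for bits, err in errors.items():
    print(f"{bits}-bit mean absolute error: {err:.6f}")
```

The reconstruction error grows as the bit width shrinks, which is the degradation MLOps teams have to weigh against the memory and latency gains.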

Future Implications: What This Means for Technology and Society

The convergence of these trends—efficient MoE architectures, diverse high-performance hardware, and aggressive inference optimization—paints a clear picture of the next three years in AI.

1. Democratization of Power

The era of only the wealthiest tech giants being able to afford cutting-edge AI is ending. When MoE models are paired with efficient hardware like the MI355X or Gaudi 3, and then aggressively quantized, organizations of nearly any size can deploy highly capable models affordably. This decentralization of compute power will fuel innovation across smaller startups and specialized vertical industries (e.g., specialized legal, medical imaging, or niche manufacturing AI).

2. The Shift in AI Talent Focus

The demand for pure "Transformer architects" will slightly wane, giving way to intense demand for two new roles: Efficiency Engineers and Hardware-Aware Developers. The future belongs to those who can effectively utilize MoE routing, implement Mamba architectures, and deploy optimized workloads across heterogeneous hardware stacks (AMD, Intel, and NVIDIA).

3. Context Becomes the Competitive Edge

As raw reasoning power becomes commoditized by widely available, efficient models, the new competitive advantage will lie in context length. Because architectures like Mamba promise linear scaling for long sequences, the ability to feed an AI vast, nuanced data sets—be it a company’s entire internal knowledge base or continuous streams of sensor data—will become the primary differentiator for superior AI performance.

Actionable Insights for Technology Leaders

To navigate this dynamic environment, leaders must adjust procurement, strategy, and engineering focus:

- Strategic Hardware Procurement: Do not lock into a single vendor. Evaluate the TCO of next-generation chips from AMD and Intel alongside NVIDIA for your specific training and inference mix. Supply chain resilience is now part of compute resilience.

- Architectural Readiness: Begin pilots using MoE-based models now. Understand the complexity of routing and balancing these sparse models in your production environment. Simultaneously, monitor State Space Models like Mamba for future shifts in long-context applications.

- Inference Optimization as a Core Metric: Treat quantization and low-bit deployment as crucial engineering tasks, not afterthoughts. The difference between 8-bit and 4-bit inference can be the difference between product viability and prohibitive cost.

The current state of LLMs—defined by the efficiency of MoE, the challenge to the GPU status quo by custom silicon, and the search for next-generation memory-efficient models—is not just an evolution; it is a re-architecture of the entire AI stack. The future of AI isn't just bigger; it's faster, cheaper, and more widely accessible than ever before, provided we master the engineering required to tame this new complexity.