The Multi-Agent Revolution: Why OpenAI Hiring OpenClaw's Creator Signals the Future of Personal AI

In the fast-moving world of artificial intelligence, hiring decisions are often more telling than press releases. The recent news that Peter Steinberger, the creator of the OpenClaw framework, is joining OpenAI marks a critical pivot point. The move is about more than adding another brilliant engineer; it signals that OpenAI is prioritizing the next, and most complex, stage of AI: **truly autonomous, personal agents.**

CEO Sam Altman has frequently championed a future that is "extremely multi-agent." This is more than a buzzword; it is a paradigm shift away from the current model, in which we ask a chatbot a question and get a single, static answer. Steinberger's explicit mandate, to build agents "even his mother can use," shows that the industry's focus is moving from raw intelligence to **reliable, usable autonomy.**

From Chatbots to Co-Pilots: Understanding the Multi-Agent Leap

To appreciate the significance of this news, we must first understand the difference between a large language model (LLM) and an AI agent. Current tools like ChatGPT are incredibly sophisticated—they are expert conversationalists. However, they are fundamentally reactive. You prompt them, they respond.

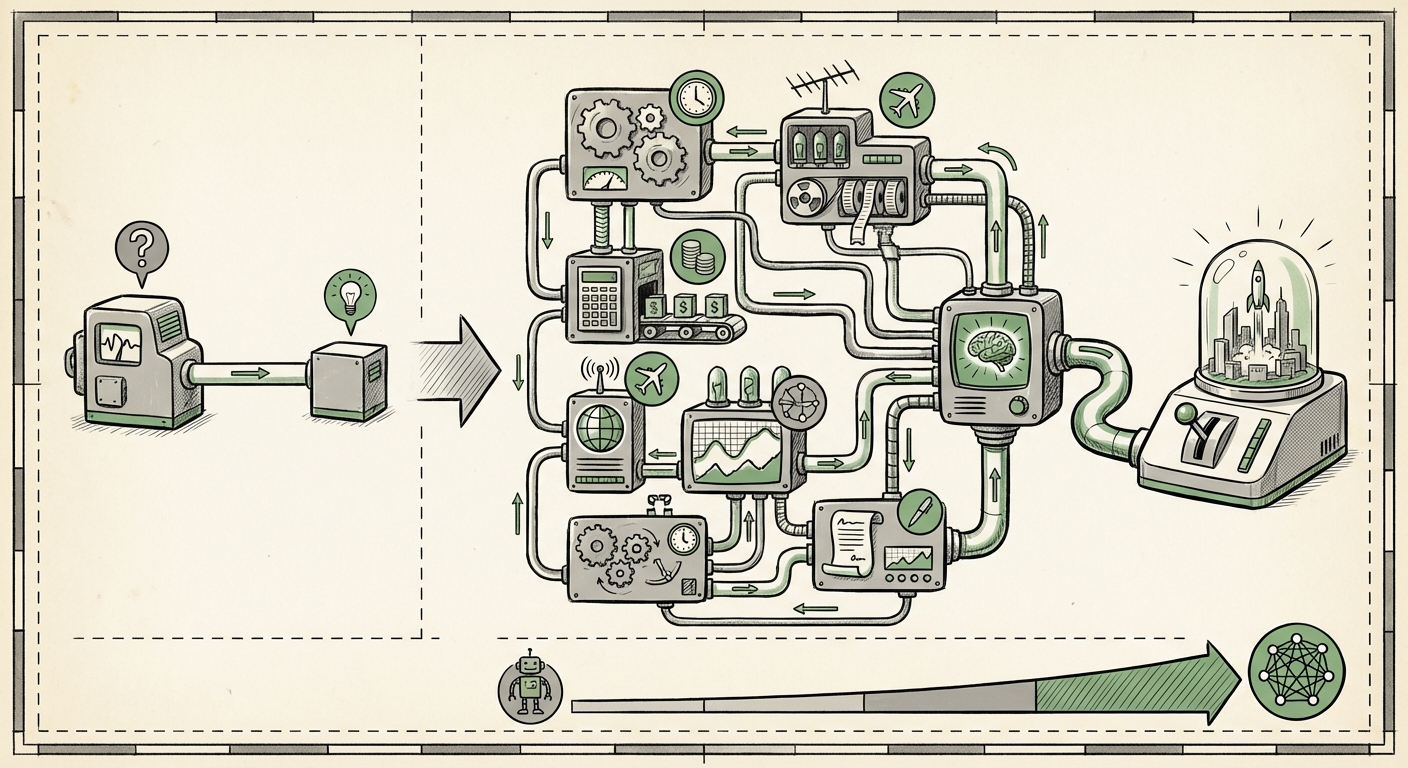

An **AI Agent**, in contrast, is designed to act autonomously to achieve a goal. It doesn't just answer; it plans, executes, self-corrects, and uses tools (like browsing the web, running code, or sending emails) to complete multi-step tasks. This is the essence of Sam Altman’s "extremely multi-agent" concept:

- Individual Agents: A personal agent might handle your travel planning, negotiating prices, booking flights, and setting calendar reminders—all without moment-to-moment prompting.

- Multi-Agent Coordination: In a complex scenario, your Travel Agent might need to coordinate with a separate "Finance Agent" to check budget constraints, or a "Work Agent" to ensure meeting schedules don't conflict. These agents talk to each other to solve the larger problem.
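To make the coordination idea concrete, here is a minimal sketch of a travel agent that consults a finance agent and a work agent before committing to a booking. The class names (`TravelAgent`, `FinanceAgent`, `WorkAgent`, `FlightOption`) and the dates and prices are hypothetical, and each "agent" is a simple rule-based stand-in for what would, in a real system, be an LLM with its own tools and data.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class FlightOption:
    flight_id: str
    price: float
    departure: date


class FinanceAgent:
    """Hypothetical agent that owns the user's budget constraints."""

    def __init__(self, travel_budget: float):
        self.travel_budget = travel_budget

    def approve(self, amount: float) -> bool:
        return amount <= self.travel_budget


class WorkAgent:
    """Hypothetical agent that owns the user's work calendar."""

    def __init__(self, busy_days: set[date]):
        self.busy_days = busy_days

    def is_free(self, day: date) -> bool:
        return day not in self.busy_days


class TravelAgent:
    """Hypothetical agent that asks its peers before committing to a booking."""

    def __init__(self, finance: FinanceAgent, work: WorkAgent):
        self.finance = finance
        self.work = work

    def choose_flight(self, options: list[FlightOption]) -> FlightOption | None:
        # Try the cheapest options first; either peer agent can veto a candidate.
        for option in sorted(options, key=lambda o: o.price):
            if not self.finance.approve(option.price):
                continue
            if not self.work.is_free(option.departure):
                continue
            return option
        return None  # no option satisfies every agent's constraints


# --- Usage with made-up data ---
finance = FinanceAgent(travel_budget=500.0)
work = WorkAgent(busy_days={date(2025, 3, 3)})
travel = TravelAgent(finance, work)
options = [
    FlightOption("A1", 320.0, date(2025, 3, 3)),  # cheapest, but clashes with a meeting
    FlightOption("B2", 410.0, date(2025, 3, 4)),
    FlightOption("C3", 650.0, date(2025, 3, 4)),  # over budget
]
choice = travel.choose_flight(options)
print(choice.flight_id if choice else "no acceptable flight")  # B2
```

The design keeps each constraint with the agent that owns it: the travel agent never sees the budget or calendar directly, it only asks yes/no questions, which is one simple way to keep agents decoupled.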

Steinberger's background with OpenClaw suggests expertise in scaffolding these complex interactions. Open-source frameworks in this space, like those that inspired OpenClaw, tackle the difficult engineering challenges of memory management, tool integration, and workflow orchestration. Recruiting this specific expertise indicates that OpenAI is ready to move beyond the theoretical promise of agents toward scalable, production-ready systems.

Corroboration: The Industry’s Obsession with Agentic Systems

This talent acquisition coincides with broader industry shifts. Observers of the competitive landscape note a marked acceleration in agent research, and frameworks that let LLMs call external APIs and maintain state are widely seen as a key competitive differentiator. The industry is aligning its resources toward this goal, a sign that the "multi-agent" environment is becoming the mainstream destination.

For technical audiences, this means the immediate future involves mastering concepts like:

- Tool Use & Function Calling: Giving the AI reliable access to the outside world.

- Self-Reflection/Critique: The ability for the agent to evaluate its own output and try again if it fails a test.

- Persistent Memory: Ensuring the agent remembers past actions and long-term goals across sessions.
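The three concepts above can be sketched in a few dozen lines. This is an illustration under stated assumptions, not any vendor's API: the tool registry, the JSON request shape `{"tool": ..., "args": ...}`, and the helper names (`call_tool`, `generate_with_critique`, `Memory`) are all hypothetical, and the "model" is replaced by plain Python stand-ins so the example runs offline.

```python
from __future__ import annotations

import json
import tempfile
from pathlib import Path
from typing import Callable

# Tool use: a hypothetical registry the runtime dispatches model-issued calls to.
TOOLS: dict[str, Callable[..., object]] = {
    "add": lambda a, b: a + b,
    "word_count": lambda text: len(text.split()),
}


def call_tool(request_json: str) -> object:
    """Parse a function-call request (assumed JSON shape) and run the matching tool."""
    request = json.loads(request_json)
    tool = TOOLS.get(request["tool"])
    if tool is None:
        raise ValueError(f"unknown tool: {request['tool']}")
    return tool(**request["args"])


def generate_with_critique(
    generate: Callable[[str], str],
    check: Callable[[str], str | None],
    task: str,
    max_attempts: int = 3,
) -> str:
    """Self-critique loop: regenerate with feedback until the check passes."""
    feedback = ""
    for _ in range(max_attempts):
        draft = generate(task + feedback)
        problem = check(draft)  # None means the draft passed the test
        if problem is None:
            return draft
        feedback = f"\nPrevious attempt failed: {problem}"
    raise RuntimeError("no acceptable draft after retries")


class Memory:
    """Persistent memory: a JSON file that survives across sessions."""

    def __init__(self, path: Path):
        self.path = path
        self.data = json.loads(path.read_text()) if path.exists() else {}

    def remember(self, key: str, value: object) -> None:
        self.data[key] = value
        self.path.write_text(json.dumps(self.data))

    def recall(self, key: str, default: object = None) -> object:
        return self.data.get(key, default)


# --- Usage with stand-ins (no real model involved) ---
result = call_tool('{"tool": "add", "args": {"a": 2, "b": 3}}')  # 5

drafts = iter(["too short", "a sufficiently long answer"])
answer = generate_with_critique(
    generate=lambda prompt: next(drafts),
    check=lambda d: None if len(d.split()) >= 4 else "answer too short",
    task="Summarize the findings",
)  # the second draft passes

with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.json"
    Memory(path).remember("home_airport", "SFO")
    recalled = Memory(path).recall("home_airport")  # read back by a fresh instance
```

In a production agent the model would choose the tool and arguments and the check might be a unit test or a second model acting as critic; the control flow, however, stays roughly this shape.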

The Usability Hurdle: Agents Your Mother Can Use

The most fascinating part of Steinberger’s mandate is the emphasis on usability. Powerful AI that requires complex prompt engineering to function is niche technology. For AI to become infrastructure—like electricity or the internet—it must be intuitive.

The goal of creating an agent "even his mother can use" speaks directly to solving the Trust and Transparency Gap. When an AI system is autonomous, users worry about failure modes. What if the travel agent books the wrong flight? What if the personal finance agent makes an unauthorized purchase?

Democratizing Autonomy

This challenge involves solving major hurdles in UX/UI:

- Guardrails: Building inherent, understandable safety mechanisms. A user must be able to pause or veto an agent’s action easily.

- Explainability: The agent must clearly articulate *why* it made a decision and show the steps it took, which requires robust internal logging of its reasoning chain.

- Interface Simplicity: The interface must hide the complexity of the backend multi-agent coordination, presenting the user only with high-level goals and clear confirmation prompts.
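A guardrail of the kind described above can be as simple as an approval gate in front of irreversible actions, with every decision logged alongside the agent's stated reason. The following is a minimal sketch; `ProposedAction`, `GuardedExecutor`, and the `confirm` callback (standing in for whatever confirmation prompt the UI shows) are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ProposedAction:
    description: str   # plain-language summary shown to the user
    reason: str        # why the agent wants to do this (explainability)
    irreversible: bool # e.g. payments or bookings


@dataclass
class GuardedExecutor:
    """Runs actions, but asks the user before anything irreversible."""
    confirm: Callable[[ProposedAction], bool]  # UI hook: True means approved
    log: list[str] = field(default_factory=list)

    def run(self, action: ProposedAction, execute: Callable[[], None]) -> bool:
        if action.irreversible and not self.confirm(action):
            self.log.append(f"VETOED: {action.description} (reason given: {action.reason})")
            return False
        execute()
        self.log.append(f"DONE: {action.description} (reason: {action.reason})")
        return True


# --- Usage: this user vetoes every irreversible action ---
booked: list[str] = []
executor = GuardedExecutor(confirm=lambda action: False)
executor.run(
    ProposedAction("Book flight B2 for $410", "Cheapest option within budget and calendar", irreversible=True),
    execute=lambda: booked.append("B2"),
)
executor.run(
    ProposedAction("Save trip as a calendar draft", "Holds the dates without committing", irreversible=False),
    execute=lambda: booked.append("draft"),
)
print(booked)  # ['draft']
```

Reversible actions proceed without interrupting the user, which keeps confirmation prompts rare enough that people actually read them.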

This is the "last mile" problem in AI deployment. Some observers argue that the next wave of trillion-dollar companies will be those that bridge this gap, making highly complex, personalized automation accessible through simple, human-like interaction.

Implications for Business: Automation Beyond the Inbox

For businesses, the transition to personal, multi-agent systems will restructure workflows far more profoundly than current generative tools have. We are moving from using AI to draft emails to using AI to *manage entire project lifecycles*.

The Restructuring of Digital Workflows

Consider a scenario in software development or market research. Today, an analyst might use an LLM to summarize findings. Tomorrow, a personal agent suite will:

- Task Breakdown: Receive the high-level goal ("Develop a competitive analysis for Product X").

- Sub-Agent Delegation: Delegate "Market Data Collection" to a web-browsing agent, "Data Modeling" to a code-executing agent, and "Report Drafting" to a writing agent.

- Synthesis and Review: The main Personal Agent synthesizes the outputs, flags any discrepancies found between the data agent and the writing agent, and presents a final, comprehensive document for human sign-off.
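The three steps above map naturally onto an orchestrator function. In this sketch the sub-agents (`collect_market_data`, `model_data`, `draft_report`) are hypothetical stand-ins returning fixed data, and the writing agent deliberately cites a wrong figure so the review step has a discrepancy to flag.

```python
from __future__ import annotations


# Hypothetical sub-agents; in a real system each would wrap an LLM plus tools.
def collect_market_data(goal: str) -> dict[str, float]:
    # Stand-in for a web-browsing agent.
    return {"competitor_a_share": 0.32, "competitor_b_share": 0.21}


def model_data(raw: dict[str, float]) -> dict[str, float]:
    # Stand-in for a code-executing agent.
    return {**raw, "combined_share": round(sum(raw.values()), 2)}


def draft_report(goal: str, figures: dict[str, float]) -> dict[str, object]:
    # Stand-in for a writing agent; it reports the numbers it cited so they can be checked.
    cited = {"combined_share": 0.55}  # deliberately wrong, to exercise the review step
    text = f"{goal}: competitors hold {cited['combined_share']:.0%} of the market."
    return {"text": text, "cited": cited}


def orchestrate(goal: str) -> dict[str, object]:
    """Delegate sub-tasks, then synthesize and cross-check before human sign-off."""
    raw = collect_market_data(goal)
    figures = model_data(raw)
    draft = draft_report(goal, figures)
    # Review: compare every number the writer cited with the data agent's value.
    discrepancies = [
        f"{key}: report says {value}, data says {figures.get(key)}"
        for key, value in draft["cited"].items()
        if figures.get(key) != value
    ]
    return {"report": draft["text"], "discrepancies": discrepancies, "needs_human_signoff": True}


result = orchestrate("Competitive analysis for Product X")
print(result["discrepancies"])  # one flagged mismatch for combined_share
```

Asking each sub-agent to return structured claims alongside its prose is what makes the cross-check possible; free-text reports alone are much harder to reconcile.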

This requires robust API integration and orchestration layers, exactly the kind of systems expertise Steinberger brings. Businesses need to start identifying complex, multi-step processes within their organizations that can be broken down into these agentic sub-tasks. The ROI isn't just faster task completion; it's the ability to run complex operations around the clock with consistent, repeatable results.

Societal Ripples: Trust, Responsibility, and Digital Personas

On a broader societal level, the rise of deeply personalized, autonomous agents raises complex questions about digital identity and responsibility. If an agent acts on your behalf across multiple platforms—managing finances, scheduling healthcare appointments, even social communication—where does human accountability end and machine autonomy begin?

The push for usability, driven by the "mother-test," suggests OpenAI is keenly aware of this liability landscape. A system that can only be operated by AI experts invites regulatory scrutiny and user rejection. A system that is intuitively controllable is more likely to achieve widespread, safe integration.

The Evolution of Digital Ownership

If your personal AI agent learns your preferences so well that it writes convincingly in your style or negotiates contracts on your behalf, does that digital persona become an extension of you? This convergence of specialized agents into a single, powerful personal assistant forces us to redefine digital agency. It also places immense importance on data privacy and security: if a single point of entry controls access to all your digital functions, that entry point must be secured to the highest standard.

Actionable Insights for Navigating the Agent Era

For those leading technology strategy, the signals from OpenAI are clear. Here is how to prepare for the imminent shift toward reliable, multi-agent systems:

1. Invest in Orchestration Talent, Not Just Model Knowledge

Knowing how to prompt GPT-4 is table stakes. The future demands developers who understand how to chain LLM calls, manage state, integrate external tools, and build resilient agent architectures. Look for expertise in areas like state machines, symbolic reasoning integration, and error handling specifically designed for iterative, autonomous loops.
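As an example of the state-machine and error-handling skills mentioned above, here is a minimal sketch of a plan, act, verify loop written as an explicit state machine. The `plan`, `act`, and `verify` callables are hypothetical hooks (in practice, LLM calls and tool invocations); the step budget and retry limit are assumed values that guarantee the loop terminates even if a tool keeps failing.

```python
from __future__ import annotations

from enum import Enum, auto
from typing import Callable


class State(Enum):
    PLAN = auto()
    ACT = auto()
    VERIFY = auto()
    DONE = auto()
    FAILED = auto()


def run_agent(
    plan: Callable[[], list[str]],
    act: Callable[[str], str],
    verify: Callable[[str], bool],
    max_steps: int = 20,
    max_retries: int = 2,
) -> tuple[State, list[str]]:
    """Drive a plan-act-verify loop with bounded steps and per-task retries."""
    state = State.PLAN
    queue: list[str] = []
    results: list[str] = []
    retries = 0
    output = ""
    for _ in range(max_steps):
        if state is State.PLAN:
            queue = plan()
            state = State.ACT if queue else State.DONE
        elif state is State.ACT:
            try:
                output = act(queue[0])
                state = State.VERIFY
            except Exception:  # a failing tool should not crash the whole agent
                retries += 1
                state = State.FAILED if retries > max_retries else State.ACT
        elif state is State.VERIFY:
            if verify(output):
                results.append(output)
                queue.pop(0)
                retries = 0
                state = State.ACT if queue else State.DONE
            else:
                retries += 1
                state = State.FAILED if retries > max_retries else State.ACT
        if state in (State.DONE, State.FAILED):
            return state, results
    return State.FAILED, results  # step budget exhausted


# --- Usage: a tool that times out once, then succeeds ---
calls = {"fetch": 0}


def flaky_act(task: str) -> str:
    if task == "fetch" and calls["fetch"] == 0:
        calls["fetch"] += 1
        raise TimeoutError("tool timed out")
    return f"{task}: ok"


final_state, outputs = run_agent(lambda: ["fetch", "summarize"], flaky_act, lambda out: out.endswith("ok"))
print(final_state, outputs)
```

Making the states explicit means every transition can be logged, tested, and shown to a user, which is harder when the control flow lives implicitly inside a prompt.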

2. Audit Workflows for "Agent Potential"

Don't look for single tasks to automate; look for processes that involve handoffs between people, software, and data sources. Any workflow that requires more than three distinct steps and spans at least two different software applications is a prime candidate for agentic restructuring. Prioritize these processes for early internal testing.
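The rule of thumb above is easy to apply mechanically during an audit. This sketch reads "two different software applications" as "at least two"; the `Workflow` fields, the sample workflows, and the choice to rank candidates by number of human handoffs are illustrative assumptions, not part of any standard method.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    steps: list[str]         # distinct steps, in order
    apps: set[str]           # software applications touched
    human_handoffs: int = 0  # times work passes between people


def is_agent_candidate(wf: Workflow) -> bool:
    # Heuristic from the text: more than three distinct steps, at least two applications.
    return len(set(wf.steps)) > 3 and len(wf.apps) >= 2


def prioritize(workflows: list[Workflow]) -> list[str]:
    """Return candidate workflows, most handoffs first (assumed tie-breaker: more steps)."""
    candidates = [wf for wf in workflows if is_agent_candidate(wf)]
    candidates.sort(key=lambda wf: (wf.human_handoffs, len(wf.steps)), reverse=True)
    return [wf.name for wf in candidates]


workflows = [
    Workflow("Invoice approval", ["receive", "match PO", "approve", "pay"], {"email", "ERP"}, human_handoffs=2),
    Workflow("Password reset", ["verify", "reset"], {"helpdesk"}),
    Workflow(
        "Vendor onboarding",
        ["collect docs", "check tax ID", "create record", "notify team", "schedule kickoff"],
        {"email", "ERP", "calendar"},
        human_handoffs=3,
    ),
]
print(prioritize(workflows))  # ['Vendor onboarding', 'Invoice approval']
```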

3. Design for "Fail-Safe" Transparency

If you are developing consumer-facing AI, assume the user will need to interrogate the AI's decisions constantly. User interfaces must evolve from simple chat windows to "dashboard views" that illustrate the agent’s reasoning process, memory usage, and current goal state. Trust is the currency of autonomy.
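One way to feed such a dashboard is to have the agent maintain a structured snapshot of its goal, status, recent reasoning, and the memory items it is drawing on, rather than only a chat transcript. The `AgentSnapshot` and `ReasoningStep` names, the status strings, and the JSON layout below are hypothetical; this is a sketch of the data a dashboard might consume, not a prescribed format.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field


@dataclass
class ReasoningStep:
    thought: str  # short, user-readable explanation
    action: str   # what the agent did as a result


@dataclass
class AgentSnapshot:
    """The state a dashboard view would render instead of a bare chat window."""
    goal: str
    status: str  # e.g. "planning", "waiting_for_approval"
    steps: list[ReasoningStep] = field(default_factory=list)
    memory_items: dict[str, str] = field(default_factory=dict)

    def record(self, thought: str, action: str) -> None:
        self.steps.append(ReasoningStep(thought, action))

    def to_dashboard_json(self, last_n: int = 5) -> str:
        view = {
            "goal": self.goal,
            "status": self.status,
            "recent_reasoning": [asdict(s) for s in self.steps[-last_n:]],
            "memory_in_use": sorted(self.memory_items),
        }
        return json.dumps(view, indent=2)


snapshot = AgentSnapshot("Plan a trip to Lisbon under $500", "waiting_for_approval")
snapshot.memory_items["seat_preference"] = "aisle"
snapshot.record("Flight A1 conflicts with a Monday meeting", "Skipped A1")
snapshot.record("B2 is the cheapest remaining option within budget", "Proposed B2 for approval")
print(snapshot.to_dashboard_json())
```

Showing only the most recent steps by default, with the full history available on demand, is one way to keep the view readable for non-experts.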

The recruitment of Peter Steinberger by OpenAI is a strong indicator that the era of LLMs as simple question-answering tools is drawing to a close. We are entering the age of the **Agent Economy**, where AI assistants will actively manage tasks, coordinate with other AIs, and become indispensable partners in both professional and personal life. The success of this revolution hinges on transforming raw intelligence into reliable, user-friendly action, a challenge Steinberger is now tasked with solving at the industry's leading edge.