The Open Weight Arms Race: How Alibaba's Qwen 3.5 Signals a New Era of Global AI Competition and Efficiency

The global race to build the most powerful Artificial Intelligence models has long been framed as a showdown between closed labs in Silicon Valley and well-funded Chinese counterparts. However, the recent release of Alibaba’s Qwen 3.5 signals something more profound than just incremental competition: it confirms the sustained, high-velocity innovation occurring within China’s open-weight ecosystem, forcing the entire industry to rethink efficiency and accessibility.

This is not just another model release; it’s a strategic declaration. By making Qwen 3.5 freely available, Alibaba is challenging the dominance of models like Meta’s Llama series and Mistral AI in the crucial "open" category. To fully grasp the implications, we must examine four key dimensions of this development: competitive positioning, underlying architectural genius, strategic national context, and the global ripple effect on open-source adoption.

The Benchmark Battle: Closing the Performance Gap

For any new model to gain traction, it must prove its mettle against established leaders. Qwen 3.5 is explicitly designed to compete head-to-head with the best Western open models available. This pursuit of parity is critical for developer adoption. If a Chinese-developed model can offer comparable or superior performance while remaining open, it inherently attracts a massive global user base.

The need for corroboration here is clear: independent benchmarks are the truth serum of the AI world. When analysts look at performance tables, often found on community leaderboards, they are looking for models that punch above their weight class. The very act of striving to match models with potentially orders of magnitude more parameters demonstrates an aggressive R&D cycle that refuses to lag behind proprietary systems.

This intensity in benchmarking means that the barrier to entry for competitive AI performance is being lowered rapidly. Developers, who might have been hesitant to use non-Western models previously due to perceived capability gaps, now have a compelling, high-performing alternative available for fine-tuning and commercial use.

What This Means for Developers: More Choice, Faster Iteration

For the average developer building an application, this constant competition is a massive benefit. It means state-of-the-art capabilities are filtering down into accessible, open-weight models at an accelerating pace. Instead of waiting years for proprietary breakthroughs to trickle down, developers now have access to advanced techniques almost immediately, fostering faster iteration cycles globally.

The Architectural Secret Sauce: Efficiency Through Hybridization

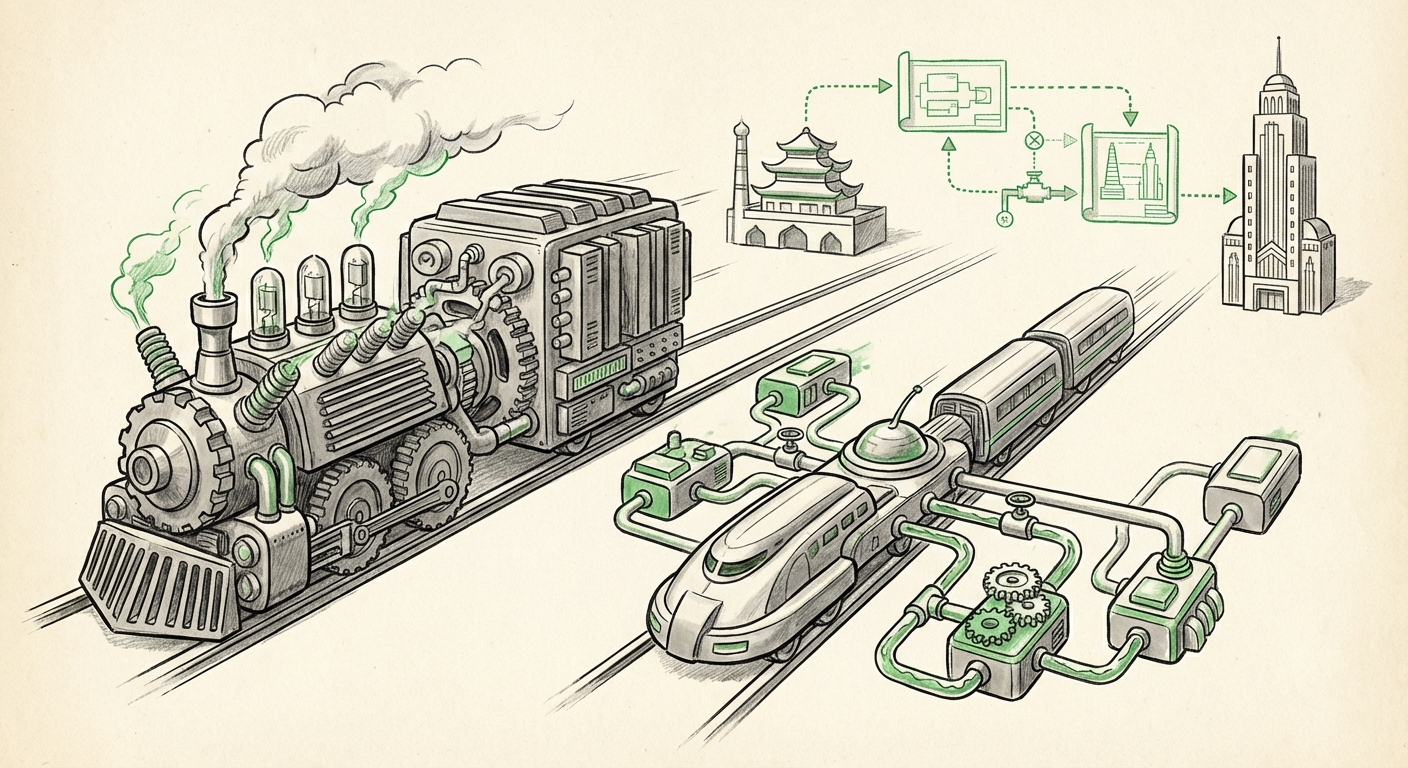

Perhaps the most technically fascinating aspect of Qwen 3.5 is its architecture. The model uses a hybrid approach, cleverly combining two distinct, powerful concepts: Linear Attention and Mixture-of-Experts (MoE).

To simplify for a broader audience, imagine a traditional AI model as one massive, highly trained brain that must be fully consulted for every single question asked. This is computationally expensive (like turning on every light in a stadium just to read a single book).

Qwen 3.5 uses two innovations to make this process smarter:

- Mixture-of-Experts (MoE): This technology is like having many small specialist brains (the "experts"). When a question comes in, a traffic cop (the router) quickly decides which 2 or 3 specialists are best equipped to answer it, leaving the rest of the brain idle. This saves enormous computing power during inference.

- Linear Attention: Traditional Transformer models use attention mechanisms that compare every token with every other token, so their cost grows quadratically as the input text gets longer. Linear attention restructures that computation so the cost grows roughly in proportion to input length, allowing the model to process much longer contexts without hitting a computational wall.
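To make the linear attention idea concrete, here is a minimal sketch in plain Python. It assumes one common kernelized formulation (a positive feature map `phi` applied to queries and keys); the specific linear attention variant used in Qwen 3.5 is not described here, so treat the feature map and the function names as illustrative assumptions. The point is that reordering the same multiplication avoids building the token-by-token score matrix.

```python
import math
import random

def phi(x):
    # Assumed feature map: elu(x) + 1, which is always positive.
    return x + 1.0 if x > 0 else math.exp(x)

def quadratic_form(Q, K, V):
    """Scores every query against every key: cost grows with n * n."""
    out = []
    for q in Q:
        fq = [phi(x) for x in q]
        weights = [sum(a * phi(b) for a, b in zip(fq, k)) for k in K]
        total = sum(weights)
        out.append([sum(w * v[j] for w, v in zip(weights, V)) / total
                    for j in range(len(V[0]))])
    return out

def linear_form(Q, K, V):
    """Summarizes keys and values once, then reuses the summary: cost grows with n."""
    d, dv = len(K[0]), len(V[0])
    kv = [[0.0] * dv for _ in range(d)]  # running sum of phi(k)^T v
    ksum = [0.0] * d                     # running sum of phi(k)
    for k, v in zip(K, V):
        fk = [phi(x) for x in k]
        for i in range(d):
            ksum[i] += fk[i]
            for j in range(dv):
                kv[i][j] += fk[i] * v[j]
    out = []
    for q in Q:
        fq = [phi(x) for x in q]
        norm = sum(a * b for a, b in zip(fq, ksum))
        out.append([sum(fq[i] * kv[i][j] for i in range(d)) / norm
                    for j in range(dv)])
    return out

# Toy data: 6 tokens, 4-dimensional queries/keys, 3-dimensional values.
random.seed(0)
n, d, dv = 6, 4, 3
Q = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n)]
K = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n)]
V = [[random.uniform(-1, 1) for _ in range(dv)] for _ in range(n)]
slow = quadratic_form(Q, K, V)
fast = linear_form(Q, K, V)
```

Both functions return the same numbers up to floating-point rounding. Note that this is not standard softmax attention: the saving comes from replacing softmax with a kernel that lets the sums be regrouped.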

Qwen 3.5 reportedly achieves this high performance while keeping only 17 billion parameters active per query, even though the full model holds more parameters in total. This efficiency metric is the game-changer. It suggests that future high-performance models may not necessarily require the trillion-plus parameter counts currently associated with closed giants. Instead, intelligence will be measured by *how smartly* those parameters are activated.
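The gap between active and total parameters follows directly from how a router picks experts. The sketch below shows top-k routing in plain Python under simplifying assumptions: the experts are toy functions, the router scores are given rather than learned, and every number (expert count, parameter sizes, `k`) is made up for illustration and is not a Qwen 3.5 figure.

```python
import math

def top_k_route(router_logits, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in ranked)
    exps = [math.exp(router_logits[i] - peak) for i in ranked]
    z = sum(exps)
    return [(i, e / z) for i, e in zip(ranked, exps)]  # softmax over the chosen k

def moe_forward(x, experts, router_logits, k=2):
    """Run only the selected experts and mix their outputs by router weight."""
    routes = top_k_route(router_logits, k)
    y = sum(w * experts[i](x) for i, w in routes)
    return y, [i for i, _ in routes]

def param_counts(shared, per_expert, num_experts, k):
    """Active vs. total parameters for one hypothetical MoE configuration."""
    return shared + k * per_expert, shared + num_experts * per_expert

# Toy experts: each just scales its input by a different factor.
experts = [lambda x, s=s: s * x for s in (1.0, 2.0, 3.0, 4.0)]
y, used = moe_forward(1.0, experts, router_logits=[0.1, 2.0, -1.0, 2.0], k=2)

# Made-up sizes in billions: 2 shared, 1 per expert, 16 experts, 2 active.
active, total = param_counts(shared=2, per_expert=1, num_experts=16, k=2)
```

In this toy configuration only experts 1 and 3 run, and 4 of 18 (hypothetical) billion parameters are touched per token, which is the sense in which a sparse model can be much larger than its per-query cost suggests.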

The Future of Inference: Running Power on a Budget

This architectural trend points toward a future where running sophisticated LLMs is far less resource-intensive. Companies worried about the massive GPU costs of deploying models will look eagerly toward these efficient architectures. It lowers the cloud bill, democratizes deployment capabilities, and makes edge deployment—running powerful AI directly on local devices—a more tangible reality.
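A rough back-of-the-envelope calculation shows where the savings land. The sketch below uses a widely quoted rule of thumb (about 2 floating-point operations per active parameter per generated token) and assumes 16-bit weights; the dense model and the sparse model's total size are hypothetical placeholders, and only the 17 billion active figure comes from the discussion above.

```python
def forward_flops_per_token(active_params):
    # Rule of thumb: one multiply and one add per active parameter.
    return 2 * active_params

def weight_memory_gb(total_params, bytes_per_param=2):
    # All weights must be stored, whether or not they are active for a token.
    return total_params * bytes_per_param / 1e9

# Hypothetical comparison; totals are illustrative, not published figures.
dense = {"total": 70e9, "active": 70e9}
sparse = {"total": 100e9, "active": 17e9}

dense_flops = forward_flops_per_token(dense["active"])
sparse_flops = forward_flops_per_token(sparse["active"])
compute_ratio = dense_flops / sparse_flops

dense_mem = weight_memory_gb(dense["total"])
sparse_mem = weight_memory_gb(sparse["total"])
```

Under these assumptions the sparse model needs roughly a quarter of the compute per token, but more memory to hold its weights. Sparse activation mainly cuts compute cost; fitting a model on a small device still depends on total size and on techniques such as quantization.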

The Geopolitical Context: China’s Open-Source Strategy

Alibaba is not operating in a vacuum. The sustained, rapid shipping of high-quality models from Chinese labs is closely tied to a broader national strategy regarding AI infrastructure and technological self-sufficiency. This open-weight approach plausibly serves multiple strategic aims:

- Domestic Ecosystem Building: By providing powerful, free-to-use base models, Chinese companies encourage the growth of a vast domestic developer community that builds applications on top of their foundational technology, locking in future enterprise usage.

- Soft Power in Open Source: Open-weight models are a powerful form of technological diplomacy. By contributing high-quality, accessible models to the global open-source commons, Chinese labs gain influence over global standards, datasets, and community development norms, competing directly with the influence wielded by US-based open-source champions.

- Bypassing Export Controls: While advanced hardware (like cutting-edge GPUs) faces strict export controls, software—especially open-weight models—can flow more freely. This allows Chinese innovation to penetrate international markets unimpeded by hardware restrictions.

The message is clear: while the West focuses on guarding proprietary advantages, China is leveraging the open-source movement to accelerate development velocity and market penetration across the entire stack.

Global Ripples: The Globalization of the Open Model Community

The success of Qwen 3.5 underscores that open-weight LLM adoption outside the US and Europe is no longer niche; it is mainstream. When a company as significant as Alibaba commits its best research to an open license, it injects massive trust and resources into the global developer community.

This has profound implications for how businesses choose their AI stack. Before, the conversation was dominated by OpenAI APIs or Meta’s Llama releases. Now, the global pool of viable, high-quality open models is diversifying rapidly. This diversity mitigates risks associated with over-reliance on any single provider or geopolitical jurisdiction.

Practical Implications for Businesses

- Diversified Sourcing: Enterprises should actively test and benchmark models from both Western and Asian providers (like Qwen). Building an AI strategy dependent on a single vendor pool is increasingly risky.

- Efficiency First: CTOs must prioritize models that demonstrate efficiency gains (like MoE architectures). The long-term cost of running massive, dense models will become unsustainable compared to optimized, sparse alternatives.

- Localized Fine-Tuning: For international firms, accessing open models from different regions allows for superior localization. Qwen models, trained on extensive Chinese data sets, offer native advantages in certain linguistic or cultural contexts that general Western models may lack.
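The "test and benchmark" advice above can start very small. Here is a minimal sketch of a vendor-neutral comparison harness, assuming each model is wrapped as a plain function from prompt to answer. The two stub models, the test cases, and the exact-match scoring are all hypothetical stand-ins; a real evaluation would call actual model endpoints and use task-appropriate metrics.

```python
from typing import Callable, Dict, List, Tuple

Model = Callable[[str], str]
Case = Tuple[str, str]  # (prompt, expected answer)

def exact_match_accuracy(model: Model, cases: List[Case]) -> float:
    """Fraction of cases where the answer matches, ignoring case and whitespace."""
    hits = sum(1 for prompt, expected in cases
               if model(prompt).strip().lower() == expected.strip().lower())
    return hits / len(cases)

def compare(models: Dict[str, Model], cases: List[Case]) -> List[Tuple[str, float]]:
    """Score every model on the same cases, best first."""
    scores = [(name, exact_match_accuracy(fn, cases)) for name, fn in models.items()]
    return sorted(scores, key=lambda row: row[1], reverse=True)

# Stub models standing in for real providers (hypothetical behavior).
def model_a(prompt: str) -> str:
    return {"2+2": "4", "capital of France": "paris"}.get(prompt, "")

def model_b(prompt: str) -> str:
    return {"2+2": "4"}.get(prompt, "")

cases = [("2+2", "4"), ("capital of France", "Paris")]
ranking = compare({"model_a": model_a, "model_b": model_b}, cases)
```

Keeping the model behind a simple function signature is the design choice that matters: swapping one provider for another then changes a single wrapper, not the evaluation itself.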

Future Trajectory: Incrementalism Meets Architectural Leaps

The release schedule suggests we are moving beyond the era of waiting 12-18 months for the next major breakthrough. We are now in a phase of rapid, iterative improvement driven by open competition. Future developments will likely focus on:

- Context Window Wars: Expect continued progress toward far longer usable context windows (how much information the model can read at once), driven by advancements like the linear attention seen in Qwen 3.5.

- The Death of Scale Worship: The industry will increasingly pivot away from simply comparing parameter counts. True performance metrics will revolve around training efficiency (capability gained per unit of training compute) and inference efficiency (active parameters per token).

- Standardization of Open-Source Tooling: As more high-caliber models appear from all corners of the globe, the tooling around quantization, deployment, and safety evaluation will need to become robustly platform-agnostic to manage this complexity.

Qwen 3.5 is not just keeping pace; it is actively setting the tempo for the next stage of AI development—a stage defined by efficiency, accessibility, and fierce global competition in the open arena.