China's Open-Weight LLM Blitz: How Qwen3.5 Accelerates the Global AI Race and Redefines Efficiency

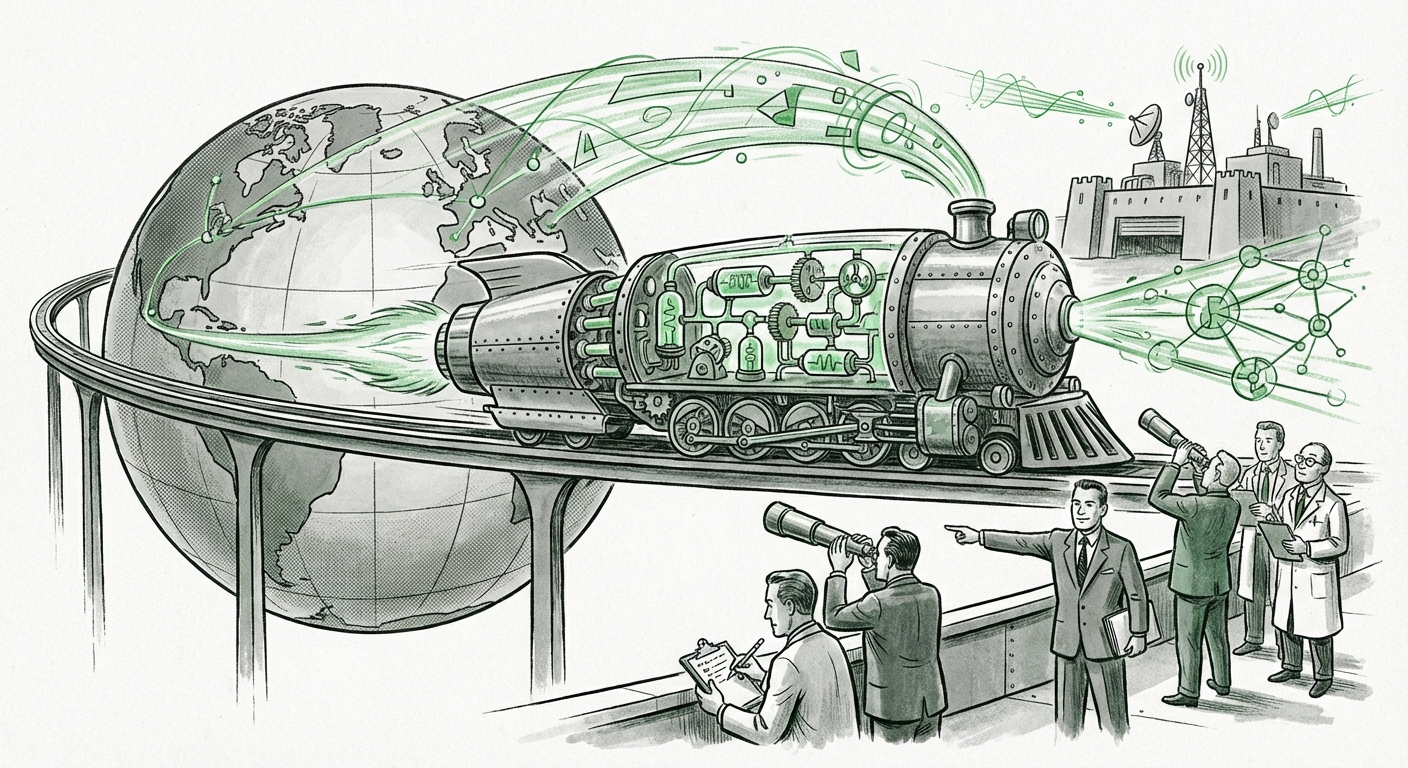

The global race for Artificial Intelligence supremacy is not just being fought in closed labs with trillion-parameter models; it is being fiercely contested in the open-source arena. The recent release of Alibaba’s Qwen3.5, offered freely and boasting significant performance gains, serves as a powerful signal: China's commitment to shipping rapid, high-quality, open-weight Large Language Models (LLMs) is far from slowing down. This trend is fundamentally altering how AI capabilities are distributed, developed, and consumed worldwide.

Qwen3.5 is more than just another iteration; it represents a convergence of strategic openness and deep engineering sophistication. By reportedly matching top Western models while using a novel, efficient architecture, it forces a necessary recalibration of what developers and businesses expect from "mid-sized" foundational models.

The Three Pillars of the Qwen3.5 Signal

To understand the gravity of this release, we must look beyond the benchmark scores and examine the underlying strategy and technology. The significance of Qwen3.5 rests on three interlocking trends:

- Performance Parity: Matching or exceeding leading Western open models (like Meta’s Llama family), as Qwen3.5 reportedly does, shows that the talent and resources deployed in Chinese labs can yield state-of-the-art results.

- Architectural Innovation for Efficiency: A hybrid architecture pairs Mixture-of-Experts (MoE) layers, which keep only 17 billion parameters active per token, with linear attention, which curbs the cost of long inputs. This design is a direct assault on the cost of inference.

- The Open-Weight Strategy: Releasing the model for free access democratizes high-level AI, fostering immediate community adoption and feedback loops—a strategy that contrasts sharply with the proprietary approaches favored by some global leaders.

Context: The Roaring Chinese LLM Ecosystem

Alibaba is not operating in a vacuum. The pace of model deployment across Chinese tech giants and specialized AI startups is staggering. Significant national and corporate investment fuels this rapid iteration cycle, and models mature very quickly as a result. Any claim of leadership therefore has to be judged against this crowded field. Recent evaluations on key benchmarks often show intense competition among models from Baidu, Zhipu AI, and others, all vying for the top spot among open or semi-open releases. Qwen3.5's ability to carve out a leading position in such company confirms the robust health and competitive intensity of the entire ecosystem [See context on the broader Chinese LLM competition].

This rapid pace is critical. When a top-tier model is released, the community immediately starts fine-tuning it, building applications on it, and finding its flaws. This feedback loop accelerates development far beyond what a closed ecosystem allows, and the result is a steadily rising baseline for open-source AI capabilities.

Decoding the Efficiency Breakthrough: MoE Meets Linear Attention

For many, the most exciting aspect of Qwen3.5 is how it achieves high performance without the immense compute requirements typically associated with large models. This efficiency hinges on two advanced architectural concepts:

1. The Power of Sparse Activation (MoE)

Think of a traditional dense LLM as a massive library in which every book must be consulted for every question. This is computationally expensive. The Mixture-of-Experts (MoE) architecture, which Qwen3.5 reportedly uses, adds a routing mechanism. For each token, a learned router selects only the most relevant "expert" subnetworks, and the rest of the model sits idle. In Qwen3.5’s case, only 17 billion parameters are active *per token*, even though the total underlying model is larger. This dramatically reduces the computation (FLOPs) required for inference. The caveat is memory: every expert must still be loaded, so the hardware needed to hold the model scales with its total parameter count rather than its active one. Even so, the compute savings are a game-changer for deployment costs [See technical context on MoE optimization].
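The toy top-k MoE layer below, written with NumPy, makes the routing idea concrete. It is a minimal sketch, not Qwen3.5's implementation. The article does not give the model's expert count, how many experts each token uses, or its gating function. The sizes, `k=2`, and the softmax-over-selected-experts gating are therefore illustrative assumptions. Each "expert" is also reduced to a single matrix, whereas real experts are small feed-forward networks.

```python
import numpy as np

def moe_layer(x, router_w, experts, k=2):
    """Toy MoE layer: route each token to its top-k experts and mix their outputs.

    x:        (num_tokens, d_model) token representations
    router_w: (d_model, num_experts) router projection (random here, learned in practice)
    experts:  list of (d_model, d_model) matrices; a real expert is a small MLP
    k:        number of experts activated per token
    """
    logits = x @ router_w                          # routing score for every (token, expert) pair
    topk = np.argsort(logits, axis=-1)[:, -k:]     # the k highest-scoring experts per token
    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        chosen = logits[t, topk[t]]
        gates = np.exp(chosen - chosen.max())
        gates /= gates.sum()                       # softmax over the selected experts only
        for gate, e in zip(gates, topk[t]):
            out[t] += gate * (x[t] @ experts[e])   # unselected experts are never evaluated
    return out, topk

# Arbitrary toy sizes (assumptions); the real configuration is not given in the article.
rng = np.random.default_rng(0)
d_model, num_experts, k = 16, 8, 2
x = rng.standard_normal((4, d_model))
router_w = rng.standard_normal((d_model, num_experts))
experts = [rng.standard_normal((d_model, d_model)) for _ in range(num_experts)]

out, topk = moe_layer(x, router_w, experts, k=k)
total = num_experts * d_model * d_model           # expert weights that must sit in memory
active = k * d_model * d_model                    # expert weights used for any one token
print(out.shape, topk.shape)                      # (4, 16) (4, 2)
print(f"active fraction per token: {active / total:.2f}")  # 0.25
```

The last two lines show the trade-off described above. Each token touches only a quarter of the expert weights, but all eight experts still have to be held in memory.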

2. The Role of Linear Attention

Traditional attention mechanisms in Transformers scale poorly with the length of the input text. Their cost grows quadratically, so doubling the input length quadruples the computation required for attention. Linear attention mechanisms attack this scaling problem by replacing or approximating full softmax attention with a formulation whose cost grows only linearly with input length. By combining linear attention with MoE in a hybrid design, Alibaba is building a model that is both smart (using specialized experts) and fast (using efficient attention across long contexts).
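A small NumPy sketch shows the difference in scaling. As an example only, it uses the kernel-based formulation of linear attention with an elu(x) + 1 feature map (Katharopoulos et al., 2020). The article does not say which linear-attention variant Qwen3.5 uses. This non-causal toy also omits the running-sum state a decoder would use during generation.

```python
import numpy as np

def softmax_attention(Q, K, V):
    # Standard attention builds an (n, n) score matrix: O(n^2 * d) work and memory.
    scores = Q @ K.T / np.sqrt(Q.shape[-1])
    scores -= scores.max(axis=-1, keepdims=True)   # numerical stability
    weights = np.exp(scores)
    weights /= weights.sum(axis=-1, keepdims=True)
    return weights @ V

def feature_map(x):
    # elu(x) + 1 (an illustrative choice): keeps features positive so the normaliser is never zero
    return np.where(x > 0, x + 1.0, np.exp(np.minimum(x, 0.0)))

def linear_attention(Q, K, V):
    # Similarity is phi(q) . phi(k) instead of exp(q . k); reassociating the product
    # as phi(Q) @ (phi(K)^T @ V) avoids the (n, n) matrix: O(n * d^2) work.
    q, k = feature_map(Q), feature_map(K)
    kv = k.T @ V                       # (d, d_v) summary of all keys and values
    normaliser = q @ k.sum(axis=0)     # (n,) row sums of the implicit weight matrix
    return (q @ kv) / normaliser[:, None]

def linear_attention_quadratic(Q, K, V):
    # Same quantity computed the slow way, to check the reassociation is exact.
    q, k = feature_map(Q), feature_map(K)
    weights = q @ k.T
    return (weights / weights.sum(axis=-1, keepdims=True)) @ V

def attention_cost(n, d, kind):
    # Rough multiply-add count for the core products (constant factors ignored).
    return n * n * d if kind == "softmax" else n * d * d

rng = np.random.default_rng(0)
n, d = 64, 8
Q, K, V = (rng.standard_normal((n, d)) for _ in range(3))
print(np.allclose(linear_attention(Q, K, V), linear_attention_quadratic(Q, K, V)))  # True
print(softmax_attention(Q, K, V).shape)                                            # (64, 8)
print(attention_cost(2000, 128, "softmax") / attention_cost(1000, 128, "softmax"))  # 4.0
print(attention_cost(2000, 128, "linear") / attention_cost(1000, 128, "linear"))    # 2.0
```

The equivalence check confirms that reassociating the matrix product changes the cost, not the result. What actually differs from softmax attention is the similarity function itself, which is one reason hybrid designs keep some full-attention layers.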

What this means for the future: Efficiency is the new battleground. As models grow larger, the cost of running them (inference) becomes the primary bottleneck for widespread commercial adoption. Innovations that decouple performance gains from brute-force parameter scaling will allow smaller companies, individual developers, and resource-constrained regions to deploy highly capable LLMs locally. Qwen3.5 demonstrates that cutting-edge performance can now fit into more accessible hardware footprints.
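Some back-of-envelope arithmetic makes the compute-versus-hardware trade-off concrete. It rests on two stated assumptions. The first is the common rule of thumb that a forward pass costs about 2 FLOPs per active parameter per token. The second is a total parameter count of 400 billion, a hypothetical figure chosen for illustration because the article does not give Qwen3.5's total size.

```python
# Back-of-envelope inference arithmetic. Rule of thumb: a forward pass costs roughly
# 2 FLOPs per parameter actually used for a token (attention over long contexts adds more).
def flops_per_token(active_params):
    return 2 * active_params

def weight_memory_gb(total_params, bytes_per_param):
    # Every expert must be resident, so memory follows TOTAL parameters, not active ones.
    return total_params * bytes_per_param / 1e9

ACTIVE = 17e9   # active parameters per token, as reported for Qwen3.5
TOTAL = 400e9   # HYPOTHETICAL total parameter count, for illustration only
DENSE = TOTAL   # a hypothetical dense model of the same total size, for comparison

print(f"MoE   compute: {flops_per_token(ACTIVE):.1e} FLOPs/token")
print(f"Dense compute: {flops_per_token(DENSE):.1e} FLOPs/token")
print(f"Compute saving: {DENSE / ACTIVE:.1f}x")
for fmt, nbytes in [("bf16", 2), ("int8", 1), ("int4", 0.5)]:
    print(f"{fmt}: ~{weight_memory_gb(TOTAL, nbytes):.0f} GB of weights")
```

The two results pull in different directions. Per-token compute falls more than twentyfold compared with a dense model of the same size, but the weights alone still occupy hundreds of gigabytes. This is why quantization matters so much for running such models on modest hardware.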

The Geopolitics of Openness: Strategy vs. Secrecy

The decision to release Qwen3.5 as an open-weight model carries significant geopolitical and market weight. This strategy directly contrasts with the tendency of some leading US AI labs to offer their most advanced models only through gated access or limited APIs, while keeping their training methods proprietary.

The open-weight approach has several powerful effects:

- Rapid Ecosystem Building: Free distribution instantly seeds the model across thousands of developers, leading to quick identification of bugs, creative downstream applications, and the development of specialized versions (fine-tunes).

- Talent Magnetism: It positions Alibaba and the broader Chinese AI sector as champions of open contribution, attracting global researchers who prefer working with models whose weights they can download, inspect, and modify.

- Standardization Influence: Widespread adoption of an open architecture helps establish that architecture as an industry standard, giving the originator significant influence over future development trajectories [See geopolitical context on open-source AI].

For policymakers and strategists, this signals a divergence in approach. If top-tier capability is being freely offered by players outside traditional Western tech strongholds, the perceived technological moat around closed systems shrinks considerably. This forces international businesses to weigh the benefits of proprietary lock-in against the agility and cost-effectiveness of readily available, highly capable open models.

Future Implications: Velocity, Velocity, Velocity

The continuous, rapid shipment of powerful open models from China suggests a future defined by extreme velocity in AI deployment. This velocity has profound implications across technology and business sectors.

Implication 1: The Democratization of Advanced AI

If a model can perform nearly as well as the industry leaders but requires significantly less compute to run, the cost of entry for building sophisticated AI products plummets. This is not just about having a chatbot. It is about integrating complex reasoning and large-scale data processing into smaller applications, manufacturing processes, and localized enterprise solutions. Developers no longer need access to massive GPU clusters to innovate. Far more modest hardware can suffice, provided it has enough memory to hold the model's weights.

Implication 2: Shifting Global AI Supply Chains

The reliance on a few key Western providers for foundational models is being actively challenged. Businesses globally, particularly those sensitive to geopolitical risk or data sovereignty, now have viable, high-performance alternatives originating from China. This diversification of foundational model sources mitigates single-point-of-failure risks and fosters healthy competition that drives down overall pricing.

Implication 3: The Talent Arms Race Continues

The speed at which these models appear—Qwen3.5 following previous iterations—is a direct reflection of intense investment and organizational commitment. Reports tracking AI investment velocity confirm that both government support and major tech players are prioritizing LLM R&D, ensuring this fast cadence is sustainable for the foreseeable future [See context on China's AI investment velocity]. This sustained pressure forces Western labs to move faster, increasing the overall pace of global AI advancement.

Actionable Insights for Businesses and Developers

The era of waiting for the next major US release is over. Businesses must adapt to a dynamic, fast-moving landscape where leading-edge performance is frequently available for free.

For Developers: Benchmark and Deploy Now

Developers should immediately begin stress-testing Qwen3.5 against their specific use cases. Don’t wait for official confirmations; the open-source community moves faster than formal evaluations do. Focus optimization efforts on techniques that get the most out of MoE efficiency, such as quantization and inference stacks with good MoE support. The ability to run powerful reasoning locally or on cheaper infrastructure is the key competitive advantage offered here.
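Stress-testing against your own use cases can start very small: a fixed set of prompts from your domain, a pass/fail check for each, and latency measurements. The sketch below assumes nothing about how Qwen3.5 is served. `generate` is a hypothetical stub standing in for your own client code, and the two test cases are placeholders.

```python
import statistics
import time
from typing import Callable, List, Tuple

# HYPOTHETICAL stub: replace with a call to however you serve the model
# (a local inference server, a hosted endpoint, etc.).
def generate(prompt: str) -> str:
    return "42" if "6 * 7" in prompt else "unknown"

Case = Tuple[str, Callable[[str], bool]]

def run_eval(generate_fn: Callable[[str], str], cases: List[Case]) -> dict:
    """Run (prompt, checker) pairs; report pass rate and wall-clock latency."""
    passed, latencies = 0, []
    for prompt, check in cases:
        start = time.perf_counter()
        answer = generate_fn(prompt)
        latencies.append(time.perf_counter() - start)
        passed += bool(check(answer))
    return {
        "pass_rate": passed / len(cases),
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
    }

# Placeholder cases; real ones should come from your own workload.
cases: List[Case] = [
    ("What is 6 * 7? Answer with a number only.", lambda a: a.strip() == "42"),
    ("Name the capital of France.", lambda a: "paris" in a.lower()),
]
report = run_eval(generate, cases)
print(report["pass_rate"])  # 0.5 with the stub above
```

To get a like-for-like comparison, run the same cases against your current model by swapping in a different `generate_fn`.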

For Business Leaders: Reassess Vendor Lock-In

If your current AI strategy relies heavily on proprietary API access with associated variable costs, it is time to model the TCO (Total Cost of Ownership) using highly efficient open-weight models like Qwen3.5. Evaluating the trade-offs between convenience (API access) and control/cost (self-hosting efficient open models) is now a high-priority strategic decision.
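A simple break-even model is one way to start the TCO comparison. It sets a per-token API price against the fixed monthly cost of self-hosting. Every figure below is a hypothetical placeholder, not a quoted price, and the structure (amortised hardware plus flat running costs) is itself a simplifying assumption.

```python
# HYPOTHETICAL figures throughout; substitute your own quotes and usage data.
def monthly_api_cost(tokens_per_month, price_per_million):
    # Variable cost: pay per token processed
    return tokens_per_month / 1e6 * price_per_million

def monthly_selfhost_cost(hardware_cost, amortisation_months, ops_per_month):
    # Fixed cost: hardware spread over its useful life plus running costs
    return hardware_cost / amortisation_months + ops_per_month

def break_even_tokens(price_per_million, fixed_monthly):
    # Monthly volume above which self-hosting is cheaper than the API
    return fixed_monthly / price_per_million * 1e6

PRICE_PER_M = 2.00       # USD per million tokens via a proprietary API (hypothetical)
HARDWARE = 240_000       # USD for an inference server (hypothetical)
AMORTISE_MONTHS = 36
OPS = 3_000              # USD/month for power, hosting, and staff time (hypothetical)

fixed = monthly_selfhost_cost(HARDWARE, AMORTISE_MONTHS, OPS)
volume = break_even_tokens(PRICE_PER_M, fixed)
print(f"Self-hosting: ${fixed:,.0f}/month")                  # $9,667/month
print(f"Break-even: {volume / 1e9:.1f}B tokens/month")       # 4.8B tokens/month
```

The model deliberately ignores capacity. A single server can only generate so many tokens per month, so check that the break-even volume is within what your hardware can actually serve before trusting the comparison.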

For Researchers: Focus on the Next Frontier

Since the "mid-sized" model space is rapidly being saturated with powerful, efficient options, researchers should shift their focus. The next major leap will likely come from mastering multimodal integration on these efficient bases. It may also come from developing novel reasoning paradigms and understanding which ones MoE structures inherently support or restrict.

Conclusion: The Open Frontier is Fierce

Alibaba’s Qwen3.5 is not just a technical achievement; it is a geopolitical and market statement delivered in code and weights. It confirms that the "open-weight race" originating from China is highly competitive, technically sophisticated, and accelerating rapidly. By merging cutting-edge efficiency techniques like MoE with an open distribution model, Qwen3.5 sets a new standard for accessible, high-performance AI.

The global implication is clear: the technological gaps between proprietary and open systems are closing at an unprecedented rate. The future of AI development will be defined by this continuous, high-velocity competition, forcing incumbents to innovate just as rapidly as the challengers who are willing to share their breakthroughs.