Beyond the Flood: Why Context Quality, Not Quantity, Is the Next Frontier for AI Coding Agents

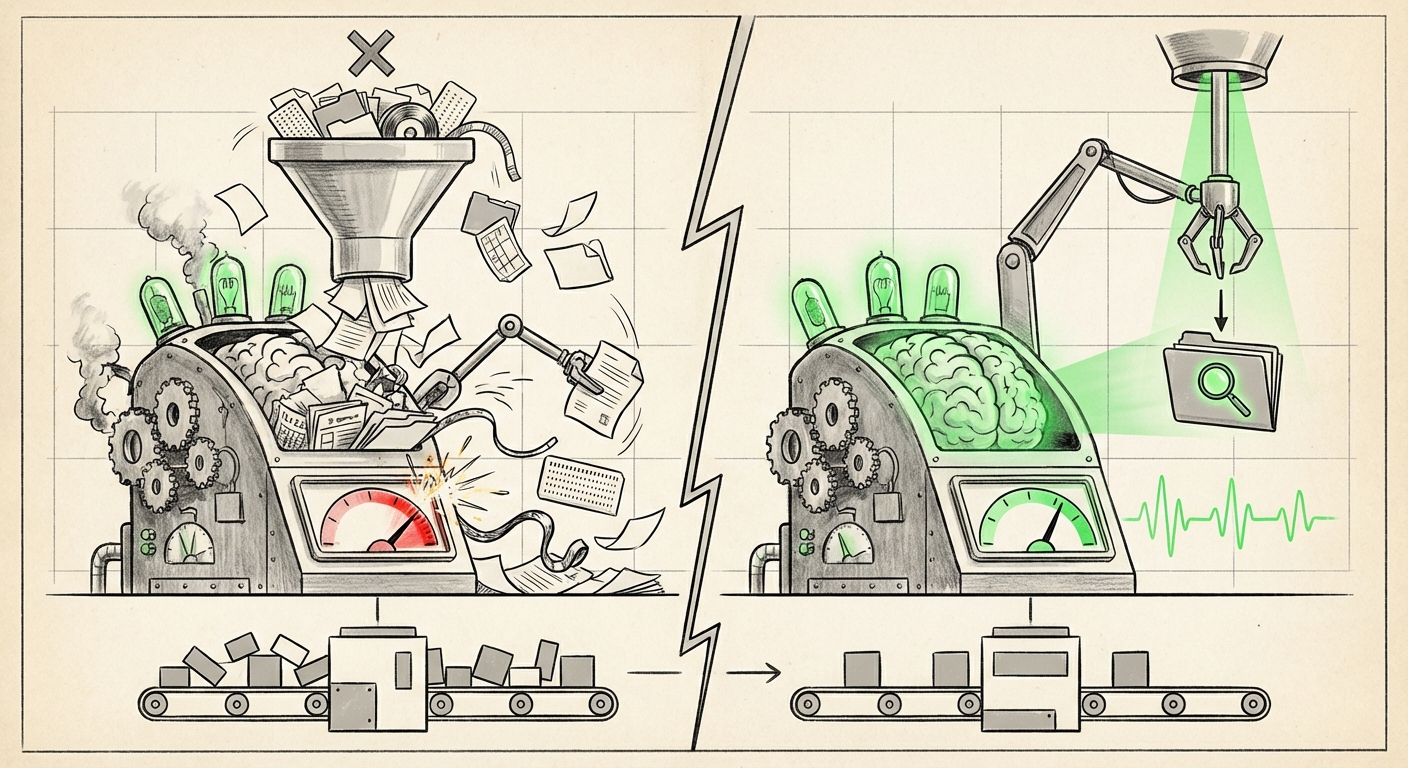

The promise of Artificial Intelligence in software development is seductive: instantly generate complex code, debug entire modules, and migrate legacy systems with a single prompt. The tools built to achieve this—AI coding agents—have largely relied on one core strategy to handle massive, complex projects: throwing more information at them. If an agent needs to understand your 100,000-line codebase, the prevailing thought has been to feed it as much of that code as possible.

However, recent research suggests that this "more is better" approach is flawed. The findings indicate that giving coding agents extensive, uncurated context files often fails to help and can actively hurt performance. This challenges the current roadmap for AI-assisted development and marks a pivot point for the technology.

The Paradox of Plenty: When Context Becomes Noise

Imagine asking a new colleague to fix a bug. Instead of pointing them to the single file causing the issue, you hand them the keys to the entire server rack, hoping they stumble upon the right connection. That is essentially what we have been doing with current Retrieval-Augmented Generation (RAG) systems applied to codebases.

The initial research highlights a clear disconnect: context files, meant to ground the AI in the specific environment of a project, are frequently irrelevant or contradictory to the immediate task. When the agent’s context window—its temporary working memory—is filled with hundreds of lines of unrelated configuration files, utility functions, or old documentation, the model struggles to isolate the critical signals needed to generate the correct answer.

Implication 1: Context Quality Over Quantity

This is the most immediate takeaway for tool builders. Bigger context windows (like those now exceeding 1 million tokens) are impressive marketing features, but they are only useful if the data being fed through them is precise. For a business relying on AI for efficiency, this means investing heavily in retrieval systems, not just the foundational LLMs themselves. If the retrieval step selects five irrelevant files for every crucial one, five-sixths of the files in the context are noise, and the model is left to find the signal on its own.
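
The five-to-one ratio above can be expressed as retrieval precision. The snippet below is a minimal sketch; the file names and the `retrieval_precision` helper are hypothetical and exist only to make the arithmetic concrete.

```python
def retrieval_precision(retrieved: list[str], relevant: set[str]) -> float:
    """Fraction of retrieved files that are actually relevant to the task."""
    if not retrieved:
        return 0.0
    hits = sum(1 for path in retrieved if path in relevant)
    return hits / len(retrieved)


# Hypothetical retrieval result: one crucial file plus five irrelevant ones.
retrieved = [
    "checkout.js",
    "README.old.md",
    "webpack.config.js",
    "string_utils.js",
    "legacy_report.js",
    "theme.css",
]
relevant = {"checkout.js"}

precision = retrieval_precision(retrieved, relevant)
print(f"precision = {precision:.2f}")  # prints "precision = 0.17"
```

Tracking a number like this per query is one simple way to check whether a retrieval change adds signal or only adds volume.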

Implication 2: A Direct Critique of Basic RAG

Retrieval-Augmented Generation is the backbone of most specialized AI tools. It works by searching a massive database (the codebase, indexed as vectors) for text snippets related to the user's prompt and inserting those snippets into the prompt before asking the LLM to generate a response. The failure here suggests that simple vector similarity search is inadequate for complex, relational data like code. Code isn't just text; it’s a highly structured graph of dependencies, classes, and inheritance chains.
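
To make the basic pipeline concrete, here is a minimal sketch of similarity-based retrieval. It assumes a toy bag-of-words `embed` function as a stand-in for a real embedding model, and the file contents are invented for illustration. The point is the shape of the process: embed, rank by similarity, paste the top results into the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a real model)."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, chunks: dict[str, str], k: int = 2) -> list[str]:
    """Return the names of the k chunks most similar to the query."""
    query_vec = embed(query)
    ranked = sorted(
        chunks,
        key=lambda name: cosine(query_vec, embed(chunks[name])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(query: str, chunks: dict[str, str], k: int = 2) -> str:
    """Insert the retrieved chunks ahead of the task, as basic RAG does."""
    names = retrieve(query, chunks, k)
    context = "\n\n".join(f"# {name}\n{chunks[name]}" for name in names)
    return f"{context}\n\nTask: {query}"


# Hypothetical codebase chunks.
chunks = {
    "checkout.js": "function checkout(cart) { return payment_processor.charge(cart.total); }",
    "payment_processor.js": "function charge(amount) { /* call the payment gateway */ }",
    "theme.css": "body { color: black; margin: 0; }",
}
query = "add a payment gateway to checkout"

print(retrieve(query, chunks))
print(build_prompt(query, chunks))
```

Note that the ranking depends only on textual overlap. Nothing in this pipeline knows that `checkout.js` calls into `payment_processor.js`, which is exactly the structural information the paragraph above says is missing.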

Implication 3: The Need for Smarter Curation

The future generation of coding agents must incorporate advanced filtering. They need to understand the *intent* of the request—Is this a data model change? A frontend styling tweak? A security patch?—and use that understanding to select only the most relevant files via hierarchical indexing or dynamic dependency mapping, rather than a blanket retrieval.

The Technical Root: Understanding the 'Lost in the Middle' Phenomenon

To grasp why quality trumps quantity, we must look inside the machine. The Transformer architecture, which powers nearly all modern LLMs, struggles to use information uniformly across very long inputs. This is not a flaw that is easily fixed by making the model bigger; it is tied to how attention mechanisms handle long sequences.

Research on the "lost in the middle" effect shows that models recall information best when it appears at the very beginning or the very end of a prompt, while information buried in the middle is recalled less reliably or ignored entirely. When we feed a coding agent a dump of 50 files, the file that matters most for the current task often lands in the middle of that prompt, drowned out by every other file included.

This means that scaling context windows, while useful for tasks such as summarizing large documents, is a poor strategy for precise tasks like coding. Summarizing a book tolerates some loss of detail; locating the one relevant function in a dependency tree does not.
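
One way to see why position matters is to look at how a prompt assembler could react to it. The sketch below is an illustration rather than a recommendation from the research: it assumes the retrieved files are already ranked by relevance and places the strongest ones at the two ends of the prompt, leaving the weakest in the middle. The function name `order_for_edges` is hypothetical.

```python
def order_for_edges(ranked: list[str]) -> list[str]:
    """Place the highest-ranked items at the start and end, weakest in the middle.

    `ranked` is assumed to be sorted from most to least relevant.
    """
    front: list[str] = []
    back: list[str] = []
    for index, item in enumerate(ranked):
        # Alternate: even ranks fill the front, odd ranks fill the back.
        if index % 2 == 0:
            front.append(item)
        else:
            back.append(item)
    # Reverse the back half so the second-best item ends up last.
    return front + back[::-1]


ranked_files = ["a.py", "b.py", "c.py", "d.py", "e.py"]  # most to least relevant
print(order_for_edges(ranked_files))  # ['a.py', 'c.py', 'e.py', 'd.py', 'b.py']
```

Reordering only softens the problem. It does nothing about the irrelevant files themselves, which is why the rest of this article focuses on retrieving fewer, better files in the first place.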

The Engineering Pivot: Moving Towards Grounded, Intelligent Retrieval

If dumping context is inefficient, the engineering focus must immediately shift upstream to the grounding layer—the retrieval mechanism. This is where the exciting, cutting-edge work is happening, focusing on context that is smart rather than just large.

For practitioners building production AI applications, the solution lies in more advanced, code-aware RAG indexing techniques. We are seeing a move away from simple embedding searches toward methods that map the semantic and structural relationships within the code:

- Graph RAG: Instead of treating the codebase as a flat list of text chunks, Graph RAG builds a knowledge graph that maps out how files, functions, classes, and variables relate to each other. When a developer asks, "How do I add a payment gateway to the checkout service?", the agent queries the graph to traverse only the necessary nodes (the `checkout.js` file, its dependency on the `payment_processor` module, and the relevant API definitions), providing only the tightest, most relevant context.

- HyDE (Hypothetical Document Embeddings): This advanced technique involves asking the LLM to first generate a *hypothetical* ideal answer to the query. The embedding (digital fingerprint) of this hypothetical answer is then used to search the database, often resulting in much more accurate retrieval than using the raw, short query itself.

- Multi-Hop Reasoning: For complex tasks, agents must learn to ask clarifying questions or perform sequential searches. For instance, the first step might retrieve the main class definition. The second step uses that definition to query *which* helper functions it calls, providing a refined context for the final code generation step.
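
The graph traversal and multi-hop ideas above can be sketched together as a bounded walk over a dependency graph. This is a minimal illustration, not a full Graph RAG system: the `DEPENDENCIES` map is hand-written and hypothetical (a real tool would derive it from imports or a language server), and each hop stands in for one follow-up retrieval step.

```python
from collections import deque

# Hypothetical dependency graph: each file maps to the files it depends on.
DEPENDENCIES: dict[str, list[str]] = {
    "checkout.js": ["payment_processor.js", "cart.js"],
    "payment_processor.js": ["payment_api.d.ts"],
    "cart.js": [],
    "payment_api.d.ts": [],
    "admin_dashboard.js": ["reports.js"],
    "reports.js": [],
}


def related_files(start: str, graph: dict[str, list[str]], max_hops: int = 2) -> list[str]:
    """Breadth-first walk from `start`, following dependency edges up to `max_hops`."""
    seen = {start}
    order = [start]
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue  # do not expand beyond the hop budget
        for dependency in graph.get(node, []):
            if dependency not in seen:
                seen.add(dependency)
                order.append(dependency)
                queue.append((dependency, hops + 1))
    return order


print(related_files("checkout.js", DEPENDENCIES))
# ['checkout.js', 'payment_processor.js', 'cart.js', 'payment_api.d.ts']
```

Files that the starting point never reaches, such as the hypothetical `admin_dashboard.js`, stay out of the context entirely, and `max_hops` gives a direct knob for trading completeness against noise.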

These methods acknowledge that code understanding is hierarchical and relational. They are the necessary evolution for agents to move from being suggestive auto-completers to reliable software partners.
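
Of the techniques listed above, HyDE is the least intuitive, so a small sketch may help. It assumes a toy bag-of-words `embed` function in place of a real embedding model and a canned `fake_llm` in place of a real LLM call; both are hypothetical stand-ins, as are the two indexed documents.

```python
import math
import re
from collections import Counter
from typing import Callable


def embed(text: str) -> Counter:
    """Toy bag-of-words embedding; a real system would call an embedding model."""
    return Counter(re.findall(r"[a-z_]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(probe_text: str, chunks: dict[str, str], k: int = 1) -> list[str]:
    """Rank chunks by similarity to the probe text and return the best k names."""
    probe = embed(probe_text)
    ranked = sorted(
        chunks,
        key=lambda name: cosine(probe, embed(chunks[name])),
        reverse=True,
    )
    return ranked[:k]


def hyde_retrieve(
    query: str,
    chunks: dict[str, str],
    generate: Callable[[str], str],
    k: int = 1,
) -> list[str]:
    """HyDE: search with the embedding of a generated answer, not the raw query."""
    hypothetical_answer = generate(query)
    return top_k(hypothetical_answer, chunks, k)


def fake_llm(query: str) -> str:
    """Stand-in for an LLM call; returns a canned hypothetical answer."""
    return "function charge(amount) { return gateway.submit(amount); }"


# Hypothetical indexed documents.
chunks = {
    "payment_processor.js": "function charge(amount) { return gateway.submit(amount, currency); }",
    "faq.md": "How do I pay? Use the pay button on the cart page.",
}
query = "How do I add a payment gateway to the checkout service?"

print(top_k(query, chunks))                    # raw query search
print(hyde_retrieve(query, chunks, fake_llm))  # HyDE search
```

In this toy setup the raw question looks more like the FAQ than like code, so plain search returns `faq.md`, while the hypothetical answer looks like code and pulls back `payment_processor.js`. Real embedding models behave less crudely, but the mechanism is the same.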

The Human Factor: Reducing Cognitive Load for Developers

The implications of context overload extend beyond the computational efficiency of the LLM; they critically impact the human developer. The goal of AI assistance is to increase productivity, but if the tool forces the user to spend extra time filtering misinformation, the overall workflow suffers.

Seen through the lens of cognitive load, irrelevant information acts as "interface friction." Developers already carry a heavy cognitive load: tracking business logic, memory usage, security implications, and dependency conflicts. An AI that floods the screen with tangential code forces the developer into an unnecessary, frustrating filtering role.

The design principle for the next generation of AI tools must prioritize curation over volume. Actionable insights for product designers include:

- Context Summarization Layers: Agents should summarize each large retrieved file into a few bullet points explaining why it was retrieved before presenting the full code block, so developers can quickly triage it.

- Confidence Scoring: If the retrieval mechanism is unsure, the agent should respond with a low-confidence warning or, better yet, ask the developer for clarification ("I found three potential configuration files; which one governs the database connection?") rather than guessing with a confused context set.

- Agentic Delegation: Complex tasks should be delegated to sub-agents. One agent handles context filtering, another handles external documentation lookup, and a final agent synthesizes the result. This mimics how expert human teams collaborate.
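
The confidence-scoring idea from the list above can be sketched as a simple gate in front of the generation step. The thresholds, the `Candidate` and `Decision` types, and the file paths below are all hypothetical; a real system would calibrate the thresholds against its own retrieval scores.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    path: str
    score: float  # retrieval similarity; higher means more relevant


@dataclass
class Decision:
    path: Optional[str] = None      # set when retrieval is confident
    question: Optional[str] = None  # set when the agent should ask instead


def decide(
    candidates: list[Candidate],
    min_score: float = 0.5,
    min_margin: float = 0.1,
) -> Decision:
    """Pick the top file only if it is both strong and clearly ahead of the rest."""
    if not candidates:
        return Decision(question="I found no matching files; which file should I look at?")
    ranked = sorted(candidates, key=lambda c: c.score, reverse=True)
    best = ranked[0]
    runner_up_score = ranked[1].score if len(ranked) > 1 else 0.0
    confident = best.score >= min_score and (best.score - runner_up_score) >= min_margin
    if confident:
        return Decision(path=best.path)
    names = ", ".join(c.path for c in ranked)
    return Decision(
        question=f"I found {len(ranked)} potential files ({names}); which one governs this task?"
    )


ambiguous = [
    Candidate("config/db.yaml", 0.62),
    Candidate("config/db.local.yaml", 0.60),
    Candidate("config/cache.yaml", 0.58),
]
clear = [
    Candidate("config/db.yaml", 0.91),
    Candidate("config/cache.yaml", 0.40),
]

print(decide(ambiguous).question)
print(decide(clear).path)  # config/db.yaml
```

Requiring a margin as well as a minimum score is the design choice that matters here: three near-identical scores are a sign of ambiguity even when each one looks acceptable on its own.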

This shift means that the most effective AI tools of the future won't be the ones that claim the largest context window, but the ones that provide the smallest set of facts sufficient for the task at hand.

Practical Implications: What Businesses Must Do Now

For technology leaders and CTOs, the recent findings serve as a mandate to critically evaluate current AI investments:

- Audit Your RAG Strategy: If you are using off-the-shelf context loading for proprietary code, assume performance degradation unless you have empirical evidence otherwise. Focus R&D spend on developing proprietary, code-aware indexing and retrieval mechanisms.

- Prioritize Intent Understanding: Business value is derived from task completion. Move beyond simple keyword matching in your prompts. Invest in techniques that allow your agents to truly understand the *purpose* of a request, which drives better context selection.

- Adopt a Phased Approach to Agent Deployment: Start AI agents on small, well-defined tasks where context is inherently limited (e.g., "Refactor this specific 500-line function"). Only once retrieval is proven accurate should you attempt to deploy them across massive, loosely coupled repositories.

Conclusion: The Age of Precision Intelligence

The era of simply scaling context windows into the stratosphere for coding assistants is meeting a sharp reality check. Much like a human reader, an LLM can only handle so much noise before its core performance degrades. This is not a setback for AI; it is a necessary maturation.

We are moving from the Age of Brute-Force Context to the Age of Precision Intelligence. Future AI coding agents will be defined not by how much they can ingest, but by how intelligently they can filter, synthesize, and deliver the exact knowledge required, precisely when it is needed. This renewed focus on deep, smart grounding promises to unlock the next true leap in developer productivity, making AI agents not just helpful tools, but indispensable, expert colleagues.