The Regulatory Frontier: How Anthropic & Infosys Are Forging Safe AI Agents for High-Stakes Industries

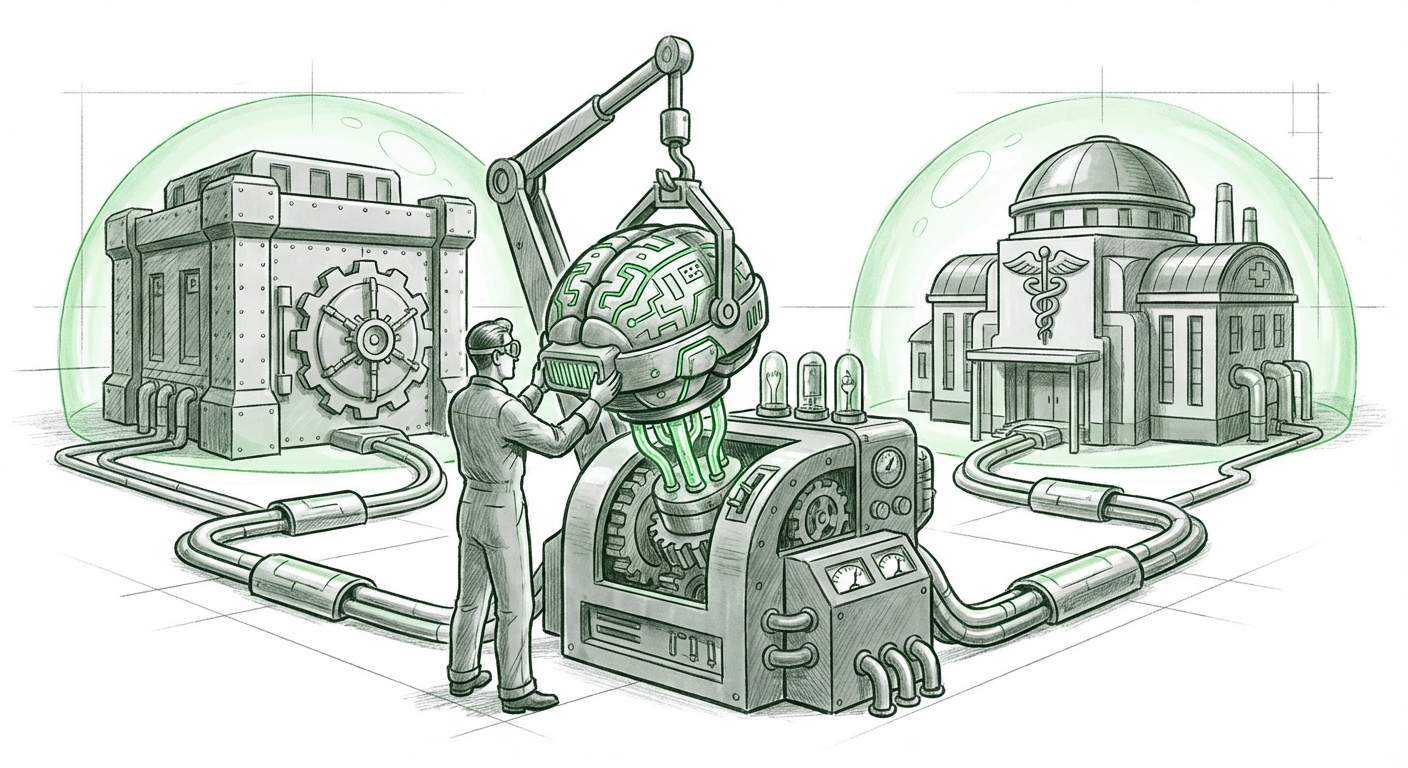

The world of Artificial Intelligence is rapidly moving past flashy demos and simple chatbots. The true test of this technology lies not in its novelty, but in its reliability and trustworthiness within the systems that govern our modern economy—finance, healthcare, legal services, and infrastructure. A recent announcement signaling a partnership between Anthropic and the IT services giant Infosys brings this future into sharp focus. They are teaming up to build AI agents specifically designed for these heavily regulated industries.

This isn't just another enterprise software deal; it represents a critical inflection point. It merges Anthropic’s pioneering work in building safer, more steerable Large Language Models (LLMs) with Infosys’s massive capability in implementing and managing complex, mission-critical systems globally. To understand the gravity of this move, we must look beyond the headline and investigate the underlying market needs and technological capabilities supporting this alliance.

The Urgent Need: Why Regulated Industries Are Prime Targets for AI Agents

Why focus on industries defined by red tape, audits, and massive liability? Simply put, these sectors hold the most valuable, yet most labor-intensive, processes ripe for intelligent automation. Consider the daily grind in a large bank or hospital:

- Regulatory Reporting: Vast amounts of unstructured data must be synthesized, interpreted against ever-changing legal texts, and formatted for auditors. Errors lead to steep fines.

- Risk Management: Analyzing thousands of contracts or monitoring complex market data streams for anomalies requires speed and precision beyond human capacity.

- Compliance Checks (KYC/AML): Identifying suspicious activities or ensuring client onboarding meets strict governmental standards demands persistent vigilance.

External market context supports this focus. Industry analysis consistently points to high demand for generative AI in financial services, yet often names compliance challenges as the primary barrier to widespread adoption (IBM's view on the challenges of adopting AI in financial services is one example of this theme). This tension between high demand and high risk is precisely where Anthropic and Infosys aim to intervene.

For these industries, the old model of AI simply *suggesting* answers is insufficient. They need agents—systems capable of taking an instruction ("Review all contracts signed last quarter for compliance clause X and flag deviations") and autonomously executing the necessary steps, interacting with databases, verifying outputs, and logging every decision for audit trails. This transition from passive insight to active execution is what makes this partnership so significant.
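To make "logging every decision for audit trails" concrete, here is a minimal Python sketch of a tamper-evident log wrapped around the contract-review instruction above. It is an illustration only, not anything Anthropic or Infosys has described: the `AuditLog` class, the `review_contracts` function, and the plain substring check that stands in for the model's judgment are all hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    """Stable SHA-256 over a JSON-serialized log entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    """Append-only log; each entry stores the hash of the previous entry."""
    entries: list = field(default_factory=list)

    def record(self, step: str, detail: dict) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"step": step, "detail": detail, "prev_hash": prev_hash}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: entry[k] for k in ("step", "detail", "prev_hash")}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def review_contracts(contracts: dict, required_clause: str, log: AuditLog) -> list:
    """Flag contracts lacking the clause, logging every decision along the way.

    The substring test is a stand-in (assumption) for a real model call.
    """
    log.record("instruction", {"required_clause": required_clause})
    flagged = []
    for contract_id, text in sorted(contracts.items()):
        compliant = required_clause.lower() in text.lower()
        log.record("check", {"contract": contract_id, "compliant": compliant})
        if not compliant:
            flagged.append(contract_id)
    log.record("result", {"flagged": flagged})
    return flagged
```

Chaining each entry to the hash of the one before it means an auditor can call `verify()` and detect whether any logged decision was altered after the fact, which is the property a regulator is likely to care about most.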

The Anthropic Advantage: Safety as a Selling Point, Not an Afterthought

When deploying AI into environments where a single hallucination could cost millions or endanger patient safety, model provenance and safety are paramount. This is Anthropic's core differentiator. Unlike competitors who might prioritize sheer performance above all else, Anthropic has built its reputation on Constitutional AI—a framework designed to make models inherently steerable toward helpful, honest, and harmless outputs.

When we look at the capabilities of models like Claude 3, especially in enterprise settings, we see features tailored for compliance:

- Stronger Reasoning & Fewer Hallucinations: Superior reasoning capabilities mean the agent is less likely to fabricate regulatory citations or misinterpret complex clauses.

- Steerability and Guardrails: Because the model is trained against a written "constitution" of principles, it is easier for Infosys to layer on the hard guardrails that regulated processes require. This fits Anthropic’s stated commitment to responsible AI development.
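One way to picture a "hard guardrail" against fabricated regulatory citations is a check that runs outside the model and rejects any draft citing a source the compliance team has not verified. The Python sketch below assumes a made-up `[cite: ...]` tagging convention and a hypothetical allow-list; neither comes from the announcement, and a production system would be considerably more involved.

```python
import re

# Hypothetical allow-list of citations a compliance team has verified.
KNOWN_CITATIONS = frozenset({"GDPR Art. 5", "GDPR Art. 17", "HIPAA 164.312"})

# Assumed convention: the agent tags every citation as [cite: <reference>].
CITATION_PATTERN = re.compile(r"\[cite:\s*([^\]]+)\]")


def check_citations(draft: str, known: frozenset = KNOWN_CITATIONS) -> dict:
    """Approve a draft only if it cites something and every citation is known."""
    cited = [c.strip() for c in CITATION_PATTERN.findall(draft)]
    unknown = sorted({c for c in cited if c not in known})
    return {
        "approved": bool(cited) and not unknown,
        "cited": cited,
        "unknown": unknown,
    }
```

For example, `check_citations("Erasure requests apply [cite: GDPR Art. 17].")` is approved, while a draft that cites an unlisted reference, or no reference at all, is held back for human review. The point of the design is that the check is deterministic and sits outside the model, so it holds regardless of how the model behaves.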

For Infosys, partnering with Anthropic means they aren't just selling advanced technology; they are selling *auditable, responsible* advanced technology. In the regulated space, this safety focus is the ticket to entry. It means their deployed agents can function under the intense scrutiny of regulators who demand transparency and accountability.

Infosys’s Role: Bridging the Gap from Lab to Legacy Systems

A brilliant LLM means little if it cannot connect to a bank’s mainframe, integrate with a hospital’s Electronic Health Record (EHR) system, or interface with a global supply chain platform. This is where Infosys, a titan of IT services, becomes indispensable. They possess the critical expertise in:

- System Integration: Connecting the cutting-edge reasoning engine (Anthropic’s LLM) to decades-old, often proprietary, enterprise software architecture.

- Data Governance: Ensuring that the data piped into the agent and the data output by the agent adhere to stringent data residency and privacy laws (like GDPR or HIPAA).

- Workflow Orchestration: Designing the complex, multi-step agentic workflows that mimic high-level human decision-making, ensuring checks and balances are enforced at every step.
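The idea of enforcing checks and balances at every step can be sketched as a small workflow runner in which each step must pass its own validation, and high-risk steps wait for human sign-off. Everything named here (`Step`, `run_workflow`, the KYC steps, the watchlist) is hypothetical and chosen only to illustrate the pattern, not to describe how Infosys builds its workflows.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical watchlist used only for this illustration.
WATCHLIST = {"Blocked Person"}


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]    # takes workflow state, returns updates
    check: Callable[[dict], bool]  # must pass before the workflow continues
    needs_approval: bool = False   # high-risk steps wait for a human


def run_workflow(steps, state: dict, approve: Callable[[str, dict], bool]):
    """Run steps in order; stop at the first rejection or failed check."""
    history = []
    for step in steps:
        if step.needs_approval and not approve(step.name, state):
            history.append((step.name, "rejected"))
            return state, history
        state = {**state, **step.run(state)}
        if not step.check(state):
            history.append((step.name, "check_failed"))
            return state, history
        history.append((step.name, "ok"))
    return state, history


KYC_STEPS = [
    Step(
        name="collect_documents",
        run=lambda s: {"documents": list(s.get("submitted", []))},
        check=lambda s: len(s["documents"]) > 0,
    ),
    Step(
        name="screen_sanctions",
        run=lambda s: {"sanctions_hit": s["name"] in WATCHLIST},
        check=lambda s: not s["sanctions_hit"],
    ),
    Step(
        name="open_account",
        run=lambda s: {"account_open": True},
        check=lambda s: s["account_open"],
        needs_approval=True,  # a human signs off before the account opens
    ),
]
```

Calling `run_workflow(KYC_STEPS, {"name": "Alice", "submitted": ["passport"]}, approve=...)` returns the final state together with a step-by-step history. The `approve` callback is where a human reviewer, or a ticketing system acting for one, would plug in.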

This partnership is a clear signal that IT services giants are no longer just helping companies adopt AI; they are pivoting to *build* the autonomous systems themselves. Industry analysis of the future of IT services describes a rapid shift toward specialized, AI-driven automation. Infosys isn’t just providing consultants; it is positioning itself as the architect of operational transformation via agentic systems.

The Shift to Agentic Workflows: What This Means for the Future

The term "AI Agent" implies a level of autonomy that moves significantly beyond the current state of LLM usage. An AI agent is an entity designed to perceive its environment, make complex decisions, and take actions toward a long-term goal. In regulated industries, this means the AI isn't just summarizing a finding; it is initiating the next compliant action, such as submitting a regulatory filing or blocking a potentially fraudulent transaction.

Implications for Business Operations

The successful deployment of these safe agents will drastically compress processing times in high-friction areas:

- Hyper-Personalized Service at Scale: Insurance claims processing agents could verify policy details against legal precedent and process payment within minutes, not weeks.

- Proactive Compliance: Instead of reacting to regulatory changes, agents could continuously scan for new mandates and automatically suggest or implement the necessary procedural adjustments in real time.

This shift reflects the broader evolution discussed in AI research, where the focus is moving toward complex agentic systems capable of sustained, goal-oriented behavior.

Implications for Technology Strategy

For technology departments, this partnership suggests a future where the integration pipeline is as crucial as the model itself. Success will depend less on which foundational model is "best" and more on the quality of the system integrator who can reliably wrap that model in governance, security, and legacy connectivity.

Navigating the New Landscape: Actionable Insights

For executives and technologists watching this space, the Anthropic-Infosys alliance provides a clear roadmap for future AI investment. Here are actionable insights:

1. Prioritize Governance Over Raw Power

If your business operates under strict regulatory requirements (e.g., healthcare, energy, defense), do not select an AI partner based solely on benchmarks. The partnership we are observing underscores that trustworthy scaffolding—the safety features, the explainability layers, and the auditability baked into the model—is the ultimate competitive advantage. Demand proof of governance frameworks before signing contracts.

2. Invest in Agentic Integration Talent

The value proposition of Infosys is its ability to *integrate*. Organizations must build internal or partner capabilities focused specifically on designing agentic workflows. This requires skill sets that bridge traditional software engineering, workflow automation (RPA), and AI prompt engineering. The hard part is not the model itself but the delicate work of wiring an agent into existing systems so that it acts only where and how it should.

3. Start Small with Verifiable Use Cases

The temptation will be to automate the most complex, high-risk processes immediately. Instead, businesses should follow the path validated here: identify processes where regulatory compliance is high-stakes but the data input is relatively structured (e.g., specific data extraction from legal documents). Successfully deploying a *safe* agent here builds the institutional confidence needed for broader adoption.

Conclusion: Trust as the Next AI Commodity

The collaboration between Anthropic and Infosys is a powerful statement: the next wave of AI maturity will be defined by its ability to operate reliably within guardrails. As we move toward a future populated by increasingly autonomous AI agents, the premium will be placed on systems that can execute complex tasks while remaining firmly tethered to human-defined ethical and legal constraints. The future of enterprise AI isn't just about intelligence; it’s about trust enforced through technology, and this partnership is building the engine for that very trust.