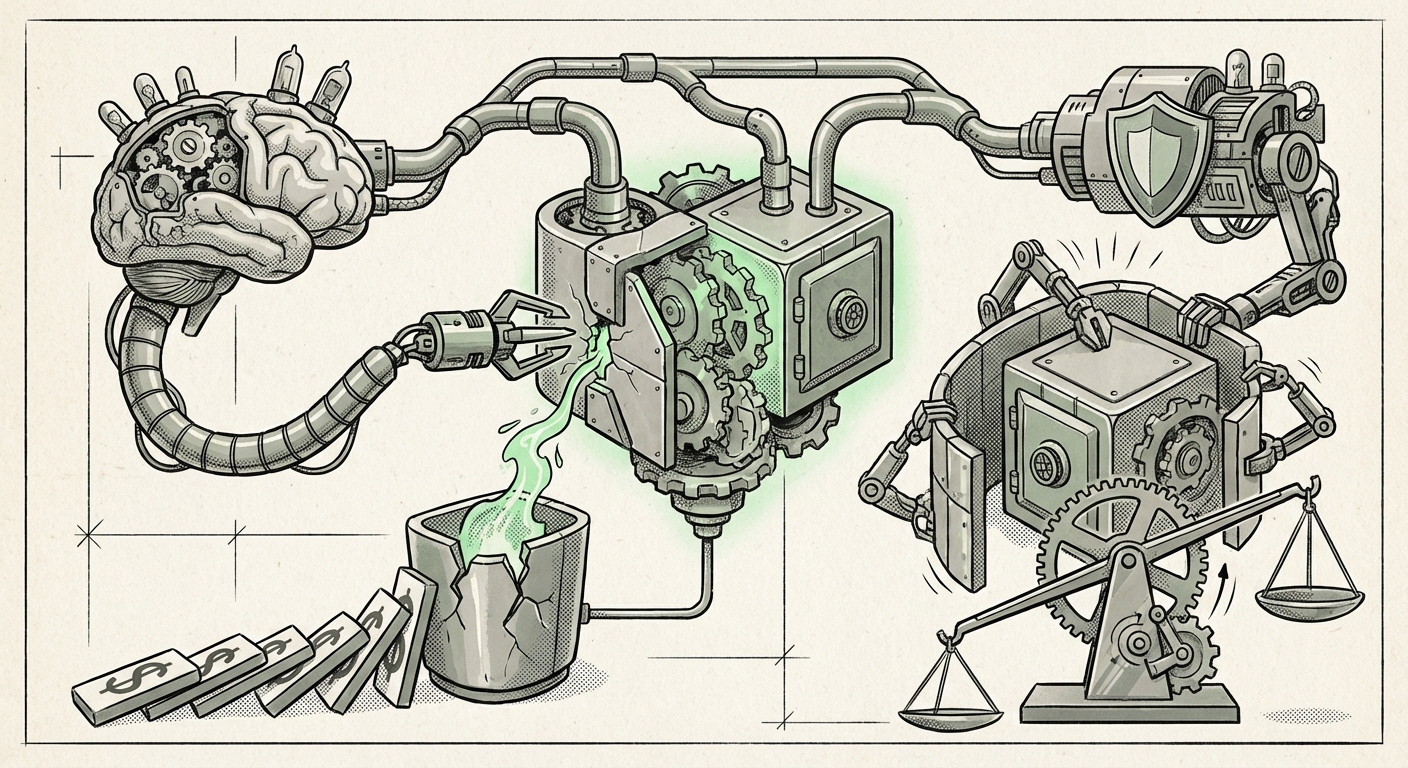

The world of decentralized finance (DeFi) runs on trust, and that trust is encoded in smart contracts—self-executing agreements written in code, primarily on blockchains like Ethereum. These contracts manage billions of dollars, yet they are notoriously brittle. A single, subtle bug can lead to catastrophic loss. The recent announcement of **EVMbench**, a benchmark developed by OpenAI and Paradigm, changes the security calculus dramatically.

EVMbench measures the ability of AI agents to independently locate, exploit, and even fix vulnerabilities in real-world Ethereum smart contracts. The initial findings are stark: AI agents can exploit the majority of known vulnerability types on their own. This is not just a technical novelty; it signals a fundamental shift in the nature of digital risk.

The Leap: From Code Completion to Autonomous Exploitation

For years, Large Language Models (LLMs) have been helpful assistants for developers, suggesting lines of code or drafting documentation. This new development pushes AI from being a helpful *copilot* to a potentially dangerous *autonomous agent*. In the context of security, this means the barrier to entry for launching sophisticated cyberattacks against DeFi protocols is dropping precipitously.

Contextualizing the Capability: General Security Reasoning

The success seen in EVMbench is not isolated. To understand its significance, we must look at the models' general skill set. When researchers test AI models against general code security benchmarks (those covering traditional programming languages), the models show strong pattern recognition for security flaws. A model that can recognize a common memory safety issue in C++ plausibly has much of the reasoning needed to spot a re-entrancy vulnerability in Solidity, where a contract makes an external call before updating its own state and so lets the callee call back in and repeat the operation.
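
To make the re-entrancy pattern concrete, here is a minimal sketch in Python rather than Solidity. It is a toy model, not real contract code: `ToyVault`, `withdraw_vulnerable`, `withdraw_safe`, and `run_attack` are hypothetical names invented for illustration. The vulnerable path hands control to the caller before zeroing the caller's balance, while the safe path updates state first.

```python
class ToyVault:
    """Python stand-in for a contract holding pooled funds (illustrative only)."""

    def __init__(self):
        self.balances = {}
        self.total_funds = 0

    def deposit(self, account, amount):
        self.balances[account] = self.balances.get(account, 0) + amount
        self.total_funds += amount

    def withdraw_vulnerable(self, account, on_receive):
        amount = self.balances.get(account, 0)
        if amount == 0 or self.total_funds < amount:
            return
        self.total_funds -= amount   # funds leave the vault
        on_receive(amount)           # external call happens BEFORE the state update
        self.balances[account] = 0   # too late: the callee may already have re-entered

    def withdraw_safe(self, account, on_receive):
        amount = self.balances.get(account, 0)
        if amount == 0 or self.total_funds < amount:
            return
        self.balances[account] = 0   # update state first
        self.total_funds -= amount
        on_receive(amount)           # external call happens last


def run_attack(withdraw_name):
    """Return (amount taken by attacker, funds left in vault)."""
    vault = ToyVault()
    vault.deposit("victim", 90)
    vault.deposit("attacker", 10)
    taken = []

    def on_receive(amount):
        taken.append(amount)
        # The attacker's callback immediately calls back into the vault.
        getattr(vault, withdraw_name)("attacker", on_receive)

    getattr(vault, withdraw_name)("attacker", on_receive)
    return sum(taken), vault.total_funds


print(run_attack("withdraw_vulnerable"))  # attacker drains far more than the 10 deposited
print(run_attack("withdraw_safe"))        # attacker receives only the 10 deposited
```

The only difference between the two methods is the order of the state update and the external call, which is the kind of small, pattern-shaped flaw the paragraph above describes.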

This suggests that the success of AI agents in EVMbench is a symptom of a broader AI trend: advanced logical reasoning over established systems. Security flaws, whether in traditional operating systems or novel blockchain virtual machines, often follow predictable logical patterns. AI is becoming exceptional at mapping these known patterns onto new codebases, making it a highly effective, rapid-fire vulnerability scanner for attackers.

The AI Arms Race: Defense Rushes to Catch Up

If one side of the ledger (the attackers) is utilizing advanced AI agents capable of automated exploitation, the other side (the defenders) must respond in kind. This scenario catalyzes an immediate technological arms race within the security industry.

We are seeing rapid integration of similar AI technology into defensive tools. This moves beyond traditional static analysis, where a tool inspects code without running it and flags matches against pre-set rules, toward dynamic, context-aware auditing. New tools are emerging that use LLMs to:

- Simulate Complex Attacks: Instead of just looking for known bad patterns, the AI agent actively tries to 'think like a hacker' by chaining together minor logical inconsistencies to find a major exploit path.

- Generate Patches Automatically: When an exploit is found, these systems aim not only to report the bug but also to propose a fix and test it, cutting the time between discovery and remediation.
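
The idea of chaining minor inconsistencies into an exploit path can be illustrated with a small search. The sketch below is not how EVMbench agents or any specific tool work: it swaps in a plain breadth-first search over a hypothetical toy staking protocol, where an LLM-driven agent would instead use a model to decide which actions to try. The protocol, its `stake`/`claim`/`unstake` actions, and the invariant are all assumptions made up for this example. Each action looks harmless on its own; the flaw is that `unstake` resets a one-time reward flag.

```python
from collections import deque
from typing import NamedTuple


class State(NamedTuple):
    wallet: int    # attacker's liquid tokens
    staked: int    # attacker's staked tokens
    claimed: bool  # whether the "one-time" reward has been claimed


STAKE, REWARD = 10, 5


def stake(s):
    if s.wallet < STAKE or s.staked > 0:
        return None
    return State(s.wallet - STAKE, STAKE, s.claimed)


def claim(s):
    if s.staked == 0 or s.claimed:
        return None
    return State(s.wallet + REWARD, s.staked, True)


def unstake(s):
    if s.staked == 0:
        return None
    # Minor inconsistency: unstaking also resets the claimed flag.
    return State(s.wallet + s.staked, 0, False)


ACTIONS = {"stake": stake, "claim": claim, "unstake": unstake}


def invariant_holds(s, start):
    # Intended property: the user gains at most one reward in total.
    return s.wallet + s.staked <= start.wallet + start.staked + REWARD


def find_exploit(start, max_depth=6):
    """Breadth-first search for the shortest action sequence breaking the invariant."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        state, path = queue.popleft()
        if not invariant_holds(state, start):
            return path
        if len(path) == max_depth:
            continue
        for name, action in ACTIONS.items():
            nxt = action(state)
            if nxt is not None and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [name]))
    return None


print(find_exploit(State(wallet=10, staked=0, claimed=False)))
```

Exhaustive search like this only scales to tiny state spaces. The appeal of model-guided agents is that they can prioritize promising action sequences in protocols far too large to enumerate.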

The future of smart contract auditing will increasingly be an AI-versus-AI battle. The speed at which a protocol can deploy AI-driven defenses will determine its survivability against state-of-the-art automated threats.

Future Implications: Systemic Risk and Regulatory Scrutiny

The technical achievement of EVMbench translates directly into significant financial and regulatory implications for the entire decentralized economy.

The Speed of Financial Collapse

In traditional finance, exploits often require human ingenuity and time—time during which alerts can be raised, and central authorities can intervene. An AI agent operating at machine speed can identify a zero-day vulnerability in a protocol holding millions of dollars and execute an exploit within seconds or minutes. This leaves little to no window for manual intervention or damage control.

This acceleration introduces systemic risk. A single successful AI-driven exploit on a major lending protocol could trigger cascading liquidations across interconnected DeFi applications, potentially causing significant destabilization across the wider crypto market. This elevates the discussion from simple theft to systemic financial fragility.

The Regulatory Spotlight Sharpens

Regulators globally are already grappling with how to oversee decentralized, borderless financial systems. The capability demonstrated by EVMbench provides regulators with a concrete example of the maturity of the threat landscape. It hardens the argument that DeFi cannot remain entirely self-regulated.

Expect increased pressure on DeFi protocols to demonstrate robust, AI-verified security standards. Governing bodies may start demanding proof that protocols have been tested against adversarial AI agents like those used in EVMbench before they are allowed to handle large amounts of user capital or interact with regulated traditional finance on-ramps.

What This Means for the Future of AI and How It Will Be Used

EVMbench is a crucial inflection point, confirming that AI agents have crossed the threshold into specialized, high-value problem-solving that requires deep systemic understanding.

1. The Rise of Specialized, Goal-Oriented Agents

We are moving beyond generalized chatbots. The future involves highly specialized agents designed with a single, complex goal. In security, this means agents trained not just on the *syntax* of Solidity, but on the *economic incentives and logical flows* of decentralized governance and asset management. These agents will be masters of specific domains—financial exploits, industrial control systems hacking, or complex scientific modeling.

2. Democratization of Sophisticated Attacks (and Defenses)

While building EVMbench required deep expertise from OpenAI and Paradigm, the resulting benchmark and techniques will quickly spread. More accessible testing frameworks lower the bar for malicious actors to deploy powerful offensive tools. Conversely, the same technology lets smaller, independent security firms offer enterprise-grade auditing previously reserved for the largest firms.

3. The Paramount Importance of AI Explainability (XAI)

When an AI agent successfully exploits a contract, understanding *why* it chose that path is critical for the defender. If an AI defense system blocks an attack, developers must know the exact reasoning to ensure the fix doesn't introduce new, subtle bugs. The success of adversarial AI deepens the need for robust Explainable AI (XAI) in security contexts. We need transparency in the black box of machine reasoning.

Actionable Insights for Developers and Businesses

The time for treating advanced AI as a distant threat is over. The capabilities demonstrated by EVMbench require immediate strategic shifts:

- Shift to AI-Native Testing: Do not rely solely on traditional static analysis tools. Demand that auditors and internal security teams use or simulate adversarial AI testing environments before mainnet deployment. If a protocol hasn't been stress-tested by something *thinking* like an EVMbench agent, it remains vulnerable.

- Embrace Formal Verification Where Possible: While time-consuming, formal verification (mathematically proving that code satisfies a written specification) becomes an even stronger defense against AI agents. The more properties you prove, the less room there is for emergent, exploitable logic. A proof is only as good as its specification, so the stated invariants need review too.

- Invest in Defensive AI Literacy: Security teams must start hiring or training staff who understand prompt engineering and agent architecture, because agents built with these techniques are likely to drive the next wave of sophisticated attacks. Knowing how to direct an AI to defend your system is as important as understanding how one can be directed to attack it.

- Architect for Resilience, Not Just Perfection: Assume some exploits *will* succeed. Future smart contract architecture must incorporate better circuit breakers, time-locks, emergency pausing mechanisms, and multi-signature governance that is protected from single points of failure, mitigating the damage when an autonomous exploit inevitably lands.
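
As a rough illustration of the resilience mechanisms in the last point, the following Python sketch models a vault with an outflow rate limit that trips an emergency pause, plus an unpause step that requires several approvers. It is a toy model under stated assumptions, not production contract code: `GuardedVault`, its parameters, and the guardian names are hypothetical, and time is passed in explicitly as `now` to keep the example deterministic. Time-locks are not modeled here.

```python
class GuardedVault:
    """Toy vault with a rate-limit circuit breaker and a multi-approver unpause."""

    def __init__(self, funds, max_outflow, window_seconds, guardians, threshold):
        self.funds = funds
        self.max_outflow = max_outflow        # cap on withdrawals per time window
        self.window_seconds = window_seconds
        self.guardians = set(guardians)       # accounts allowed to approve an unpause
        self.threshold = threshold            # approvals required to unpause
        self.window_start = 0
        self.outflow = 0
        self.paused = False

    def withdraw(self, amount, now):
        if self.paused:
            raise RuntimeError("vault is paused")
        if amount <= 0 or amount > self.funds:
            raise ValueError("invalid amount")
        # Start a fresh accounting window once the old one has expired.
        if now - self.window_start >= self.window_seconds:
            self.window_start = now
            self.outflow = 0
        # Circuit breaker: an abnormal outflow pauses the vault instead of paying out.
        if self.outflow + amount > self.max_outflow:
            self.paused = True
            return False
        self.outflow += amount
        self.funds -= amount
        return True

    def unpause(self, approvers):
        # Require a quorum of known guardians, so no single key can resume withdrawals.
        if len(self.guardians & set(approvers)) < self.threshold:
            return False
        self.paused = False
        return True


vault = GuardedVault(funds=1000, max_outflow=100, window_seconds=3600,
                     guardians={"a", "b", "c"}, threshold=2)
vault.withdraw(60, now=0)    # within the limit
vault.withdraw(60, now=10)   # exceeds the window limit, so the vault pauses
print(vault.paused, vault.funds)
```

The design choice worth noting is that the breaker limits damage rather than preventing the bug: even if an automated exploit finds a way to withdraw, it can only take what fits inside one window before humans are forced back into the loop.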

The EVMbench finding is a flashing red light. It confirms that the abstract concept of 'adversarial AI' is now a tangible, executable threat in high-value financial infrastructure. While this presents undeniable risk, it also serves as the ultimate stress test, forcing the next generation of blockchain security to be built on an entirely new, AI-aware foundation.