Decoding AI's Real Cost: Beyond the Hype of Model Tiering and Hardware Wars

The initial gold rush phase of Artificial Intelligence, where the primary goal was simply "build it bigger," is rapidly evolving. The current challenge facing every major enterprise is not just about *access* to powerful AI chips, but about *affordability* and *sustainability*. Recent industry analysis focusing on enterprise-ready hardware guides, such as those detailing cost controls on platforms like the AMD MI355X, confirms a crucial shift: AI deployment now intersects with rigorous financial management.

This isn't just about buying GPUs anymore; it’s about operationalizing AI at scale. We are moving from the "Proof of Concept" budget to the "Production Reality" budget. To understand the long-term implications of this new reality, we must synthesize lessons from hardware competition, model efficiency, and enterprise governance.

The New AI Battleground: Hardware Economics and the Rise of Alternatives

The narrative around high-performance computing for AI has long been dominated by a single architecture. However, as demand for specialized silicon outstrips supply, the economics become brutal. A guide focusing on the operational deployment of hardware like the AMD MI355X highlights that enterprises are actively diversifying their infrastructure bets.

Hardware Competition Drives Strategic Choice

When discussing AI acceleration, the immediate comparison point is often NVIDIA. However, for IT decision-makers, reliance on a single vendor, especially one with constrained supply, is a major financial risk. Comparing supply chain costs for the NVIDIA H100 and the AMD MI350 makes the point: the cost of entry isn't just the sticker price; it's the ability to procure and scale without disruptive delays. AMD's presence signals that future procurement strategies will hinge on a dual-sourcing approach.

For the business audience, this means that negotiating power might slowly shift. For infrastructure architects, it means designing flexible pipelines that can handle different instruction sets and memory configurations. The hardware dictates the initial capital expenditure (CapEx), but the operational expenditure (OpEx) is determined by how cleverly we use it.

The Technical Lever: Model Tiering and the Efficiency Imperative

The most insightful concept emerging from recent cost discussions is **Model Tiering**. In simple terms, this means stopping the practice of using a massive, cutting-edge LLM (like a fully loaded GPT-4 equivalent) for every single task. Imagine using a Ferrari to run a short errand to the corner store; it works, but it's wildly inefficient.

Inference vs. Training: The OpEx Crunch

Training an LLM is a massive, upfront, time-bound expense. *Inference*—the act of the model actually answering questions or generating code in real-time—is an ongoing, compounding operational cost. This is where costs spiral out of control.
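To make the contrast concrete, the sketch below estimates when cumulative inference spend overtakes a one-off training bill. It is an illustration only: the function name, the dollar figures, and the flat monthly growth rate are all assumptions, not numbers from any vendor or guide.

```python
def months_until_inference_exceeds_training(
    training_cost: float,
    monthly_inference_cost: float,
    monthly_growth: float = 0.0,
) -> int:
    """Return the first month in which cumulative inference spend
    exceeds the one-off training cost.

    monthly_growth is the assumed month-over-month growth in inference
    spend (0.10 means 10% more each month).
    """
    if monthly_inference_cost <= 0:
        raise ValueError("monthly_inference_cost must be positive")
    if monthly_growth < 0:
        raise ValueError("monthly_growth must be non-negative")

    cumulative = 0.0
    month = 0
    cost = monthly_inference_cost
    while cumulative <= training_cost:
        month += 1
        cumulative += cost
        cost *= 1 + monthly_growth  # usage grows as adoption spreads
    return month


# Hypothetical figures: $1M training bill, $100k/month inference.
print(months_until_inference_exceeds_training(1_000_000, 100_000))        # 11
print(months_until_inference_exceeds_training(1_000_000, 100_000, 0.10))  # 8
```

Even with made-up inputs, the shape of the result is the point: training is paid once, while inference keeps accruing and reaches the break-even month sooner as usage grows.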

Current trends in LLM inference optimization show why tiering is essential. Enterprises are realizing they need:

- Tier 1 (The Supermodel): For highly complex reasoning, legal drafting, or novel research. (High cost, low frequency use).

- Tier 2 (The Specialist): Smaller, fine-tuned models for specific departmental tasks (e.g., summarizing customer service tickets).

- Tier 3 (The Utility Model): Highly quantized or pruned models for simple classification, routing, or data extraction. (Lowest cost, highest frequency use).

Tiering also gives practical weight to the technical guideposts provided by hardware vendors like AMD, which offer performance metrics for various memory and core configurations suited to different inference loads: each tier can be matched to the configuration that serves it most cheaply. The result is a shift from purely performance-driven metrics to *performance-per-dollar* metrics.
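The three tiers can be sketched as a small routing table. Everything named here is hypothetical: the model names, the per-token prices, and the task-to-tier mapping are placeholders chosen for illustration, and a real router would likely classify requests rather than rely on a fixed label.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    SUPERMODEL = 1  # complex reasoning, legal drafting, novel research
    SPECIALIST = 2  # fine-tuned departmental models
    UTILITY = 3     # quantized or pruned models for simple tasks


@dataclass(frozen=True)
class TierSpec:
    model_name: str            # hypothetical model identifier
    cost_per_1k_tokens: float  # hypothetical price in USD


TIERS = {
    Tier.SUPERMODEL: TierSpec("large-general-llm", 0.03),
    Tier.SPECIALIST: TierSpec("fine-tuned-mid-model", 0.004),
    Tier.UTILITY: TierSpec("quantized-small-model", 0.0005),
}

# Hypothetical mapping from task type to the cheapest adequate tier.
TASK_TIER = {
    "legal_drafting": Tier.SUPERMODEL,
    "novel_research": Tier.SUPERMODEL,
    "ticket_summarization": Tier.SPECIALIST,
    "classification": Tier.UTILITY,
    "routing": Tier.UTILITY,
    "data_extraction": Tier.UTILITY,
}


def route(task_type: str) -> Tier:
    """Return the tier for a task; unknown tasks fall back to Tier 1."""
    return TASK_TIER.get(task_type, Tier.SUPERMODEL)


def estimated_cost(task_type: str, tokens: int) -> float:
    """Estimate the cost in USD of serving `tokens` tokens for a task."""
    spec = TIERS[route(task_type)]
    return tokens / 1000 * spec.cost_per_1k_tokens


print(route("classification").name)  # UTILITY
print(route("something_new").name)   # SUPERMODEL
```

Falling back to Tier 1 for unknown tasks is a design choice that favors answer quality over cost; a cost-first deployment might instead default to Tier 2 and escalate only on failure.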

The Governance Layer: Bringing AI Spending into FinOps

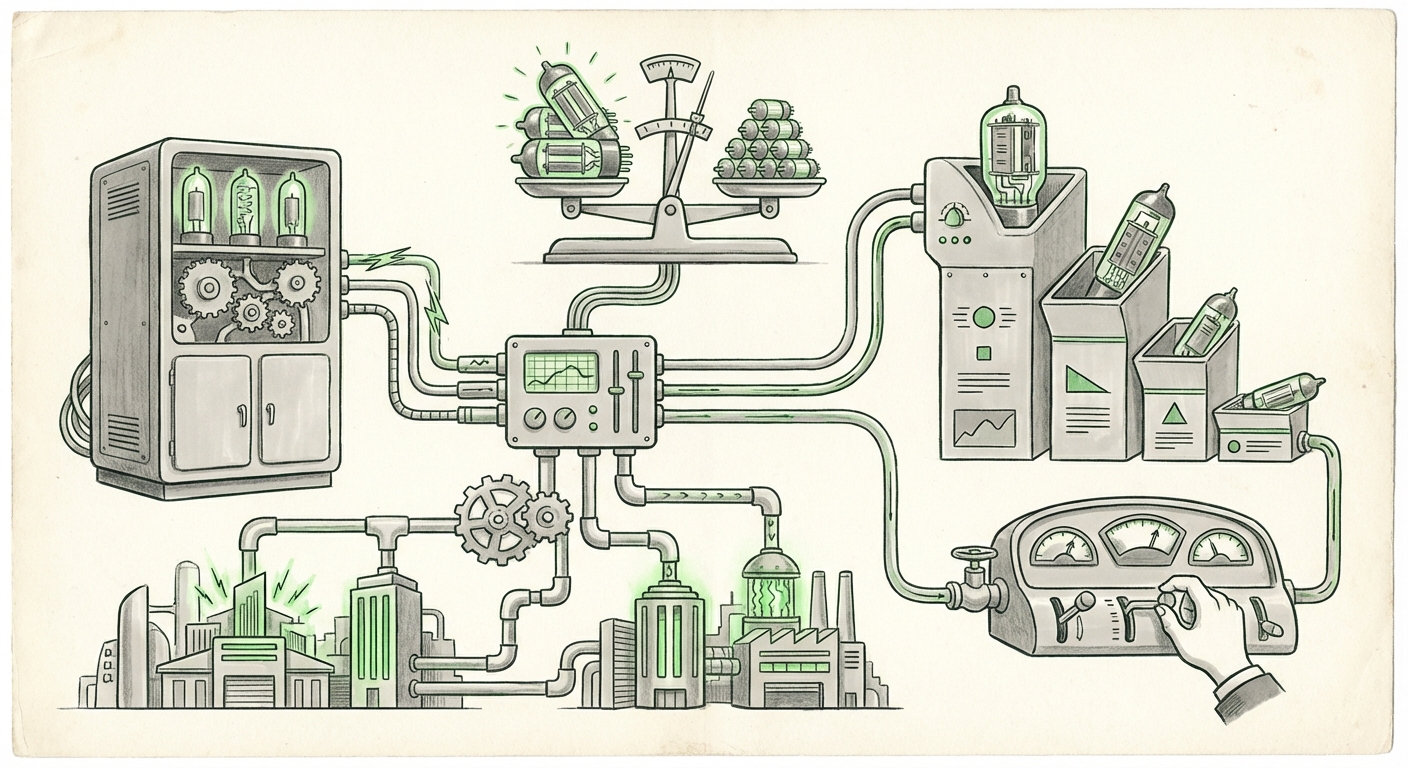

If the hardware is the engine and the model is the fuel, **governance** is the dashboard and the speedometer. Technical controls like "throttling" mentioned in the cost control guides are useless unless they are enforced within a robust organizational structure. This is the domain of AI FinOps.

From Ad Hoc Spending to Accountable Allocation

Cloud FinOps, the discipline of combining financial accountability with technology operations, is now being rapidly extended to AI infrastructure. Efforts to integrate AI governance with cloud FinOps frameworks show that businesses are treating AI compute like any other critical utility.

This means:

- Chargebacks: Departments using the AI cluster must be accurately billed for their compute consumption, linking directly to their P&L (Profit and Loss).

- Budget Allocation: Pre-approved budgets must be set for inference usage. If a team exceeds its allocation, throttling mechanisms automatically slow down or pause non-critical jobs.

- Visibility: Real-time dashboards showing cost per query, cost per user, and ROI for specific models.

For the CFO, this transforms AI from a nebulous tech expense into a measurable investment. If a model is consuming $50,000 a month in compute power, governance ensures that this cost yields at least $50,001 in measurable business value.
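As a rough illustration of how budget allocation, throttling, and chargebacks fit together, here is a minimal in-memory sketch. The `BudgetGovernor` class and its methods are hypothetical names, not part of any FinOps product; a production system would persist spend, reset budgets per period, and slow jobs down rather than simply refusing them.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class BudgetGovernor:
    # Pre-approved monthly inference budget per department, in USD.
    budgets: dict
    # Running spend per department, in USD.
    spend: dict = field(default_factory=lambda: defaultdict(float))

    def admit(self, dept: str, est_cost: float, critical: bool = False) -> bool:
        """Decide whether a job may run.

        Non-critical jobs are paused (False) once they would push the
        department over budget. Critical jobs always run and are still
        charged, so the overrun stays visible.
        """
        if dept not in self.budgets:
            raise KeyError(f"no budget allocated for {dept!r}")
        over_budget = self.spend[dept] + est_cost > self.budgets[dept]
        if over_budget and not critical:
            return False
        self.spend[dept] += est_cost
        return True

    def chargeback(self) -> dict:
        """Return spend per department for billing back to each P&L."""
        return dict(self.spend)


governor = BudgetGovernor({"support": 100.0})
print(governor.admit("support", 60.0))                 # True
print(governor.admit("support", 50.0))                 # False (would exceed 100)
print(governor.admit("support", 50.0, critical=True))  # True
print(governor.chargeback())                           # {'support': 110.0}
```

The same spend ledger that drives throttling also produces the chargeback report, which is what makes the control enforceable rather than advisory.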

The Semiconductor Horizon: Why Memory Scaling Matters

Underneath all these operational decisions lies the physical reality of computing power. The cost control guides mentioned earlier rightly highlight the importance of "memory scaling," often with reference to HBM (High Bandwidth Memory). Why does this matter so much for cost?

Large language models are incredibly hungry for data throughput. They need to pull massive amounts of model weights rapidly into the processing cores. If the memory subsystem, which determines how fast that data moves, is slow, the expensive processing cores sit idle, waiting for work. This translates directly to wasted dollars on hardware that isn't performing.
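A common back-of-the-envelope estimate shows why bandwidth matters. If generating each token requires reading every weight from memory once, then single-stream decode speed is capped at roughly memory bandwidth divided by the size of the weights. The sketch below applies that approximation; the 4 TB/s bandwidth figure is a hypothetical input, and the estimate ignores batching, KV-cache traffic, and compute limits.

```python
def weight_bytes(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the model weights in bytes."""
    return params_billion * 1e9 * bits_per_weight / 8


def max_decode_tokens_per_sec(
    params_billion: float,
    bits_per_weight: int,
    bandwidth_tb_per_sec: float,
) -> float:
    """Upper bound on single-stream decode speed when memory-bound.

    Assumes every weight is read from memory once per generated token.
    """
    bytes_per_sec = bandwidth_tb_per_sec * 1e12
    return bytes_per_sec / weight_bytes(params_billion, bits_per_weight)


# Hypothetical accelerator with 4 TB/s of memory bandwidth.
fp16 = max_decode_tokens_per_sec(70, 16, 4.0)
int4 = max_decode_tokens_per_sec(70, 4, 4.0)
print(f"70B @ 16-bit: {fp16:.1f} tokens/s")  # 28.6 tokens/s
print(f"70B @ 4-bit:  {int4:.1f} tokens/s")  # 114.3 tokens/s
```

Under this simplification, doubling bandwidth or halving the bytes per weight doubles the ceiling, which is why both memory technology and quantization show up in cost discussions.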

The Race Beyond HBM3

When analysts look at next-generation HBM architectures for AI accelerators, they are looking past today's constraints. The next generation of memory technology, whether incremental improvements in HBM3e or entirely new paradigms, is the long-term solution to cost control. Better memory efficiency means the same dollar spent on silicon can run a larger, faster model, or run the same model much cheaper.

For the hardware engineer, this is the key area for innovation. For the business strategist, this means understanding that the current high costs are somewhat temporary; capital investment cycles in memory technology will eventually lead to significant deflation in the cost of complex inference.

Future Implications: From Control to Contextual AI

What do these converging trends—hardware competition, model optimization, and FinOps governance—mean for the next five years of AI deployment?

1. Democratization through Efficiency: As smaller, cheaper-to-run models become highly effective (Tier 2 and 3), organizations previously locked out by massive compute bills will deploy AI across more use cases, not just the few "mission-critical" ones. This democratizes access, pushing AI from central labs into front-line operations.

2. The Rise of the AI Procurement Specialist: Future IT departments will require staff skilled not only in cloud engineering but also in semiconductor economics and model quantization strategies. Procurement will shift from annual refresh cycles to dynamic, real-time optimization based on fluctuating market prices and performance benchmarks.

3. AI as a Fully Costed Utility: The concept of an "AI budget" will dissolve, replaced by AI compute being woven seamlessly into departmental operational budgets, just like software licenses or electricity bills. This forces accountability and weeds out AI projects that lack clear, quantifiable returns.

Actionable Insights for Today's Leaders

For enterprises looking to navigate this cost-controlled AI landscape, immediate action is required:

- Audit Your Inference Needs: Map every current LLM application against its required reasoning complexity. If you are using a 70-billion-parameter model for basic summarization, you are overspending. Immediately start developing Tier 3 alternatives.

- Mandate FinOps Visibility: Implement clear tagging and cost allocation for all AI compute resources today. Use dashboards to show compute consumption by project owner, not just by cluster.

- Maintain Hardware Flexibility: Do not lock entirely into one accelerator vendor. Design your MLOps platforms (including those built around the MI355X) to be adaptable to future competitive offerings, ensuring you can pivot when a better price-to-performance ratio emerges.

- Invest in Optimization Research: Dedicate a small team to explore techniques like sparse activation, knowledge distillation, and quantization. These research efforts directly translate into massive savings on inference OpEx tomorrow.
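The inference audit in the first item above can start as something very simple. The sketch below flags applications whose model is larger than an assumed ceiling for the task's complexity. The complexity labels, the parameter ceilings, and the example applications are all hypothetical; real thresholds would come from your own evaluations.

```python
from dataclasses import dataclass

# Hypothetical ceilings, in billions of parameters, per complexity level.
MAX_PARAMS_B = {
    "simple": 3.0,
    "moderate": 13.0,
    "complex": float("inf"),
}


@dataclass(frozen=True)
class App:
    name: str
    complexity: str        # "simple", "moderate", or "complex"
    model_params_b: float  # size of the model currently in use


def oversized(apps: list) -> list:
    """Return names of apps whose model exceeds the ceiling for their task."""
    return [
        app.name
        for app in apps
        if app.model_params_b > MAX_PARAMS_B[app.complexity]
    ]


inventory = [
    App("summarizer", "simple", 70.0),
    App("contract-review", "complex", 70.0),
    App("ticket-router", "simple", 1.0),
]
print(oversized(inventory))  # ['summarizer']
```

The output is a shortlist of candidates for a smaller model, not a verdict; each flagged application still needs a quality check before it is moved to a lower tier.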

The era of unconstrained AI spending is concluding. The companies that thrive in the coming years will be those that master the operational, architectural, and financial discipline required to run powerful models sustainably. Control is not stagnation; it is the foundation for enduring, scalable innovation.