The End of AI’s Wild West: Why Cost Controls, Model Tiering, and Hardware Choice Are the Future of Enterprise AI

For the past few years, the Artificial Intelligence landscape has felt like a gold rush. Companies chased capability, striving to build or deploy the largest, most complex Large Language Models (LLMs) possible, often operating with near-unlimited budgets fueled by venture capital or sheer strategic imperative. However, that era of unchecked spending is drawing to a close. The conversation has fundamentally shifted from "What *can* AI do?" to the far more pressing question: "What is the *return on investment* for the AI we deploy?"

Recent deep dives into enterprise AI operations, such as guides that cover specific hardware like the AMD MI355X and focus on AI cost controls (budgets, throttling, and model tiering), illustrate this maturation. They signal that AI has moved out of the pure research lab and into the complex, financially scrutinized world of mainstream enterprise deployment. For businesses, this operational reality means TCO (Total Cost of Ownership) and measurable ROI are now the dominant factors shaping AI strategy.

The Economic Reality Check: Why Cost Control is King

The initial excitement surrounding generative AI has collided with the cold, hard physics of compute cost. Training state-of-the-art models requires astronomical amounts of power and specialized hardware, but the real sustained cost often lies in *inference*: running the model repeatedly for millions of user queries.

This economic pressure is validated by broader industry analysis. Reports on the state of AI infrastructure and cloud spending in 2024 consistently highlight massive capital expenditures by major tech players, confirming that the underlying hardware investment is staggering. This spending isn't abstract; it trickles down to every business using cloud services or building private clusters. If the biggest players are spending billions, smaller enterprises must be ruthlessly efficient to survive.

This is where the guides’ focus on budgeting and throttling becomes crucial. It’s not about stopping AI development; it’s about applying financial discipline. We are seeing a mandate from the CFO’s office that turns AI infrastructure management into a core operational competency.
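As a rough illustration of what budgeting and throttling can look like in practice, the sketch below tracks spend against a monthly budget and returns one of three decisions. The class name `BudgetGuard`, the 80% soft limit, and the dollar figures are assumptions made for this example, not values taken from any particular guide or platform.

```python
from dataclasses import dataclass


@dataclass
class BudgetGuard:
    """Hypothetical spend tracker; names and thresholds are illustrative."""

    monthly_budget_usd: float
    soft_limit_ratio: float = 0.8  # assumed point at which throttling starts
    spent_usd: float = 0.0

    def decide(self, estimated_cost_usd: float) -> str:
        """Return 'allow', 'throttle', or 'reject' for a pending request."""
        projected = self.spent_usd + estimated_cost_usd
        if projected > self.monthly_budget_usd:
            return "reject"  # hard cap: the request would exceed the budget
        if projected > self.soft_limit_ratio * self.monthly_budget_usd:
            return "throttle"  # soft cap: slow down or use a cheaper model
        return "allow"

    def record(self, actual_cost_usd: float) -> None:
        """Add the cost of a completed request to the running total."""
        self.spent_usd += actual_cost_usd


guard = BudgetGuard(monthly_budget_usd=100.0)
print(guard.decide(10.0))  # well under the soft limit
guard.record(75.0)
print(guard.decide(10.0))  # projected spend crosses the soft limit
guard.record(20.0)
print(guard.decide(10.0))  # projected spend would exceed the budget
```

A "throttle" decision does not have to mean refusing work; a caller could respond by queueing the request or sending it to a cheaper model.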

The Importance of Hardware Diversification

The focus on the AMD MI355X in operational guides is not accidental; it is a strategic maneuver in the face of market concentration. For years, NVIDIA’s GPUs have been the de facto standard. While their performance is undeniable, dependence on a single vendor creates risk, limits negotiating power, and often locks users into specific software ecosystems.

When enterprises look at diversifying their AI hardware beyond NVIDIA, they are looking for leverage. The emergence of viable alternatives, such as AMD’s data center offerings (Reuters reports that AMD is actively challenging this dominance [https://www.reuters.com/technology/amd-challenges-nvidias-ai-chip-dominance-2024-06-03/]), signals that a healthy, competitive market is forming. For the CTO, adopting alternative silicon like the MI355X is about mitigating supply chain risk and driving down the initial cost of procurement.

Looking ahead, the strategic calculus of owning AI silicon versus renting from hyperscalers becomes central. Enterprises must decide whether to rent compute indefinitely from the big cloud providers or invest upfront in their own, potentially more specialized, infrastructure. The decision hinges on long-term utilization, specifically the TCO crossover point at which cumulative rental fees exceed the cost of buying and operating hardware. If AI workloads become constant and predictable, owning the hardware, even from a challenger vendor, tends to win financially.
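The crossover point can be estimated with simple arithmetic. The sketch below assumes a deliberately simplified model: a one-time purchase price, a flat hourly operating cost that accrues only while the hardware is in use, and a flat hourly rental price. The function names and every figure are hypothetical, and real TCO models would also account for depreciation, staffing, and financing.

```python
from typing import Optional


def breakeven_hours(
    capex_usd: float,
    rent_per_hour_usd: float,
    own_opex_per_hour_usd: float,
) -> Optional[float]:
    """Hours of use after which owning becomes cheaper than renting.

    Solves capex + opex * h = rent * h for h. Returns None when renting
    is never more expensive per hour, so no crossover exists.
    """
    saving_per_hour = rent_per_hour_usd - own_opex_per_hour_usd
    if saving_per_hour <= 0:
        return None
    return capex_usd / saving_per_hour


def breakeven_months(
    capex_usd: float,
    rent_per_hour_usd: float,
    own_opex_per_hour_usd: float,
    utilization: float,
    hours_per_month: float = 730.0,  # approximate hours in a month
) -> Optional[float]:
    """Months to reach the crossover at a given utilization (0 to 1)."""
    hours = breakeven_hours(capex_usd, rent_per_hour_usd, own_opex_per_hour_usd)
    if hours is None or utilization <= 0:
        return None
    return hours / (hours_per_month * utilization)


# Hypothetical figures: $30,000 purchase, $4/h rental, $1/h to operate.
print(breakeven_hours(30_000, 4.0, 1.0))
print(breakeven_months(30_000, 4.0, 1.0, utilization=0.5))
print(breakeven_months(30_000, 4.0, 1.0, utilization=0.9))
```

Under this model, higher utilization shortens the payback period proportionally, which is why constant, predictable workloads favor ownership.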

Architectural Innovation: Software Meets the Silicon

Even with the best hardware, inefficient software can drain budgets. The next major area of cost control lies in optimizing *how* the models run. This is where the concept of Model Tiering moves from a buzzword to an implementable standard.

The Power of Model Cascades

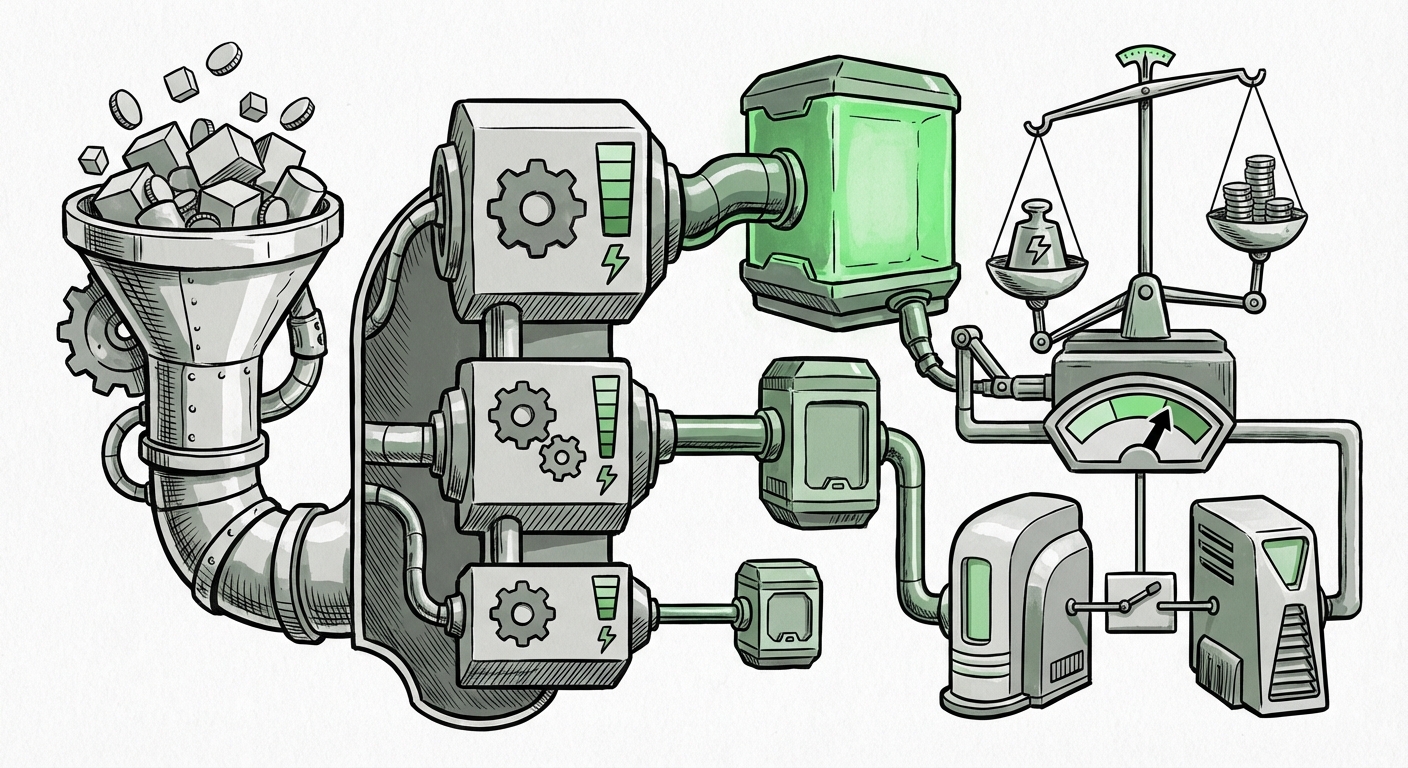

Model tiering means creating a hierarchy of models to match the difficulty of the user request. Think of it like using the right tool for the job:

- Tier 1 (The Giant): A massive, high-parameter model (e.g., GPT-4 class) reserved only for highly complex reasoning, legal analysis, or creative generation. This model is expensive to run.

- Tier 2 (The Specialist): A medium-sized, highly fine-tuned open-source model handling specific domain tasks (e.g., summarizing medical notes).

- Tier 3 (The Workhorse): A small, heavily optimized model running extremely fast for basic tasks like sentiment analysis or simple classification. This runs cheaply, maybe even on a CPU or the specialized inference chips mentioned in recent guides.

Implementing this requires sophisticated routing logic, a recurring topic in discussions of best practices for serving multiple LLM versions in production. Cloud providers offer tools to help build these high-throughput, low-latency services [https://aws.amazon.com/blogs/machine-learning/build-a-high-throughput-and-low-latency-inference-service-for-large-language-models-using-amazon-sagemaker/]. The goal is simple: never use a sledgehammer (Tier 1) when a lightweight hammer (Tier 3) will suffice.
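One way to sketch the routing logic for the three tiers above is a cascade: try the cheapest model first and escalate only when it reports low confidence. Everything in the example is an assumption for illustration. The three model functions are stubs standing in for real inference calls, and their confidence scores come from toy heuristics rather than anything a production router would rely on.

```python
from typing import Callable, List, Tuple

# A model takes a prompt and returns (answer, confidence between 0 and 1).
Model = Callable[[str], Tuple[str, float]]


def tier3_workhorse(prompt: str) -> Tuple[str, float]:
    """Stub small model: confident only on short prompts."""
    confidence = 0.95 if len(prompt.split()) <= 8 else 0.3
    return "tier3 answer", confidence


def tier2_specialist(prompt: str) -> Tuple[str, float]:
    """Stub domain model: confident only on summarization requests."""
    confidence = 0.9 if "summarize" in prompt.lower() else 0.4
    return "tier2 answer", confidence


def tier1_giant(prompt: str) -> Tuple[str, float]:
    """Stub large model: assumed to handle anything, at the highest cost."""
    return "tier1 answer", 1.0


# Ordered from cheapest to most expensive.
TIERS: List[Tuple[str, Model]] = [
    ("tier3", tier3_workhorse),
    ("tier2", tier2_specialist),
    ("tier1", tier1_giant),
]


def cascade(
    prompt: str,
    tiers: List[Tuple[str, Model]],
    min_confidence: float = 0.8,
) -> Tuple[str, str]:
    """Return (tier_name, answer) from the first sufficiently confident tier.

    If no tier reaches min_confidence, the last tier's answer is returned.
    """
    if not tiers:
        raise ValueError("at least one tier is required")
    name, answer = "", ""
    for name, model in tiers:
        answer, confidence = model(prompt)
        if confidence >= min_confidence:
            break
    return name, answer


print(cascade("Is this review positive?", TIERS))
print(cascade("Draft a detailed legal analysis of the indemnification clause in this contract", TIERS))
```

A cascade pays for the cheap attempt even when it escalates, so it suits workloads where most requests are easy. When request types are known up front, a classifier that picks the tier directly may be the better design.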

Making Models Smaller, Faster, Cheaper

Cost control forces innovation in model compression. If companies cannot afford to run the largest possible models, they must learn how to shrink them without losing too much accuracy. This drives deep interest in quantization and pruning as techniques for reducing LLM cost. The work done by the open-source community, often detailed in guides from platforms like Hugging Face [https://huggingface.co/docs/optimum/concept_quantization], shows how reducing the precision of a model’s numbers (quantization) or removing unnecessary connections (pruning) can dramatically lower memory and compute requirements.
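To make the idea of reduced precision concrete, here is a minimal sketch of symmetric 8-bit quantization written in plain Python. It is not the Hugging Face Optimum API; the function names and sample weights are made up for the example, and production schemes typically add per-channel scales and calibration data. Storing each weight as a one-byte integer instead of a four-byte 32-bit float cuts weight memory by roughly a factor of four, at the price of a small rounding error.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.5, -1.27, 0.0, 1.0]  # illustrative values only
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)
print(max(abs(a - b) for a, b in zip(weights, restored)))  # worst-case error
```

The worst-case reconstruction error is bounded by half the scale, which is why layers with a few very large weights tend to lose more accuracy under a single shared scale.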

For the Data Scientist, the future involves balancing model size against task performance. Fine-tuning and deploying smaller, aggressively optimized open-source models can deliver something like 90% of the performance for 10% of the cost compared to relying solely on massive, closed-source APIs, although the actual ratio varies by task and should be measured rather than assumed.

The Hidden Cost Sinkholes: Beyond the Chip Price

When focusing on hardware acquisition, enterprises can overlook the chronic operational expenses that bleed budgets dry. Two areas are becoming focal points for financial scrutiny:

The Cloud Egress Trap

When deploying models in the public cloud, the cost of *sending data out* (egress) can become paralyzing, especially for applications that serve many users globally or that must frequently pull proprietary data out of one environment and into the model’s. Analyzing the impact of cloud egress fees on LLM deployments reveals that while computation may look cheap at first, data movement costs can tip the "build vs. buy" conversation toward on-premise solutions or specialized edge deployments.
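A back-of-the-envelope estimate shows how quickly data movement adds up. The function name, the flat per-gigabyte rate, and the traffic figures below are placeholders for illustration; actual egress pricing is usually tiered and varies by provider, region, and destination.

```python
def monthly_egress_cost_usd(
    requests_per_month: int,
    bytes_out_per_request: int,
    usd_per_gb: float,
) -> float:
    """Estimate monthly egress spend under an assumed flat per-GB rate."""
    gigabytes_out = requests_per_month * bytes_out_per_request / 1e9
    return gigabytes_out * usd_per_gb


# Hypothetical workload: one million responses of 1 MB each at $0.10 per GB.
print(monthly_egress_cost_usd(1_000_000, 1_000_000, 0.10))

# The same traffic with 10 MB payloads (for example, retrieved documents).
print(monthly_egress_cost_usd(1_000_000, 10_000_000, 0.10))
```

Because the cost scales linearly with payload size, trimming responses or keeping data and models in the same environment is often the first lever to pull.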

Maximizing Utilization Metrics

Finally, just as critical as buying the right chip is ensuring it stays busy. A GPU sitting idle is a wasted investment. Discussions of AI utilization metrics and server farm efficiency highlight the need for advanced orchestration tools. If a company buys expensive MI355X accelerators, those systems should run as close to peak capacity as practical. Model tiering helps here too: by routing smaller tasks to less powerful tiers, you keep the massive Tier 1 GPUs busy with the tasks only they can handle, maximizing the ROI on every clock cycle.
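The cost of idle hardware can be expressed as an effective price per busy hour. The sketch below uses hypothetical function names and figures, and it treats the hourly cost as fixed whether or not the accelerator is doing useful work.

```python
def utilization(busy_hours: float, available_hours: float) -> float:
    """Fraction of available time the accelerator spent on useful work."""
    if available_hours <= 0:
        raise ValueError("available_hours must be positive")
    return busy_hours / available_hours


def cost_per_busy_hour(hourly_cost_usd: float, util: float) -> float:
    """Effective cost of each productive hour at a given utilization."""
    if not 0 < util <= 1:
        raise ValueError("util must be in the range (0, 1]")
    return hourly_cost_usd / util


# Hypothetical accelerator costing $2 per hour, busy 180 of 720 hours.
u = utilization(busy_hours=180, available_hours=720)
print(u)
print(cost_per_busy_hour(2.0, u))
```

At 25% utilization each productive hour effectively costs four times the nominal rate, which is the gap that orchestration and tiering aim to close.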

Future Implications: A More Sustainable AI Ecosystem

What does this shift toward rigorous cost control mean for the trajectory of AI innovation?

- Democratization through Efficiency: When efficiency mandates specialization, it opens the door for competitors. The ability to run high-quality inference on hardware from less dominant vendors, with less proprietary software stacks, levels the playing field. This fosters a more robust ecosystem where innovation is judged not just by headline performance but by real-world economic feasibility.

- The Rise of the MLOps Finance Specialist: MLOps teams will increasingly need financial modeling skills alongside their Kubernetes and Python expertise. Understanding the TCO crossover points and managing cloud consumption budgets will be as important as model accuracy.

- Edge and Decentralization: Lowering inference costs, especially by using smaller, quantized models, pushes AI capabilities closer to the end-user (the edge). This reduces latency and mitigates cloud egress costs, enabling new classes of real-time, privacy-preserving applications.

- Focus on Specificity over Generality: The immense cost of general-purpose LLMs will ensure that enterprises double down on highly specialized, smaller models trained specifically for their niche. The future isn't one model to rule them all; it’s a federation of perfectly sized, cost-optimized agents.

The operational reality outlined in guides concerning budgets, throttling, and hardware choices like the AMD MI355X is not a temporary belt-tightening measure. It is the new normal. The next phase of AI success will belong not just to those who can conceive of grand AI visions, but to those who can execute them profitably, efficiently, and sustainably. The era of the AI spending spree is over; the era of the disciplined AI builder has begun.