Mandatory AI Use & Promotion: Is Your Job Safe? Analyzing Corporate Tech Mandates

The modern workplace is changing faster than ever, driven by the rapid maturation of Artificial Intelligence. No longer is AI a futuristic concept discussed in R&D labs; it is being integrated directly into the performance review process. The recent revelation that a global giant like Accenture is tying employee promotions to the documented usage of their internal AI tools sends a powerful signal across the entire knowledge economy: adoption is no longer optional; it is a prerequisite for career advancement.

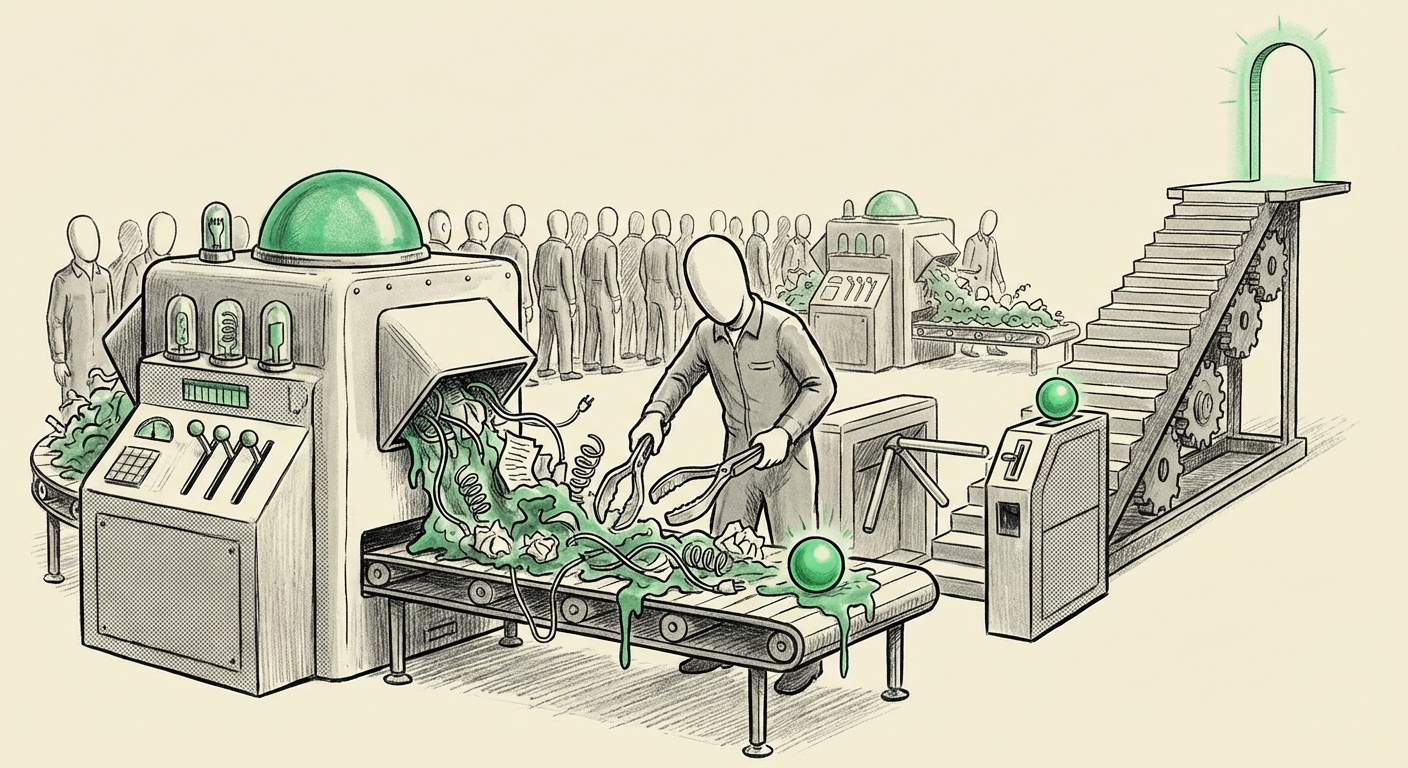

However, this mandate is immediately met with friction. Reports indicate that some employees view these mandated tools as "broken slop generators"—technology that produces low-quality, unusable output, yet they are still required to use it. This dynamic creates a fascinating, and potentially volatile, tension between management’s vision for hyper-efficiency and the current, imperfect reality of enterprise AI.

As an AI technology analyst, I see this scenario as the clearest sign yet that we are past the experimental phase of AI and entering the enforcement phase. To understand what this means for the future of AI usage, we must dissect the forces driving this mandate, the real-world limitations of today's tools, and the cultural shockwaves being sent through professional services.

The Inevitability of AI Adoption: Contextualizing the Mandate

Accenture's move is not simply about using a new piece of software; it is about embedding a new workflow philosophy. Management views these AI tools as essential building blocks for future profitability. In that view, an employee who does not use the tool to automate routine tasks is working slower and costing the firm more money in the long run.

1. The Rise of AI in Performance Monitoring

This promotion linkage is part of a larger trend in Human Resources technology. We are seeing a significant shift away from simple activity tracking (like login times) toward measuring *output leverage*.

The backdrop is the growth of sophisticated productivity analytics, sometimes dubbed "bossware." While monitoring AI usage is less invasive than monitoring keystrokes, it serves the same fundamental purpose: quantifying productivity gains. Business strategists want to tie investment in expensive AI licenses directly to measurable changes in employee behavior. Reports of the kind frequently cited by analyst firms like Gartner discuss how companies are rapidly adopting systems that score employees on their adoption of new productivity software. For the C-suite, the logic is simple: if you pay millions for AI infrastructure, you must see a return, and linking usage to promotions is the most direct way to force uptake.

For HR Technology Leaders: This signals that performance management is becoming intrinsically linked to technological fluency. The focus shifts from *what* you produce to *how efficiently* you produce it using the firm's prescribed tools.

2. The "Slop Generator" Problem: Reliability in the Enterprise

The employee frustration—calling the tools "broken slop generators"—is critical context. It validates what many in the tech community already know: Generative AI, especially when fine-tuned on proprietary or siloed corporate data, is often inconsistent. Large Language Models (LLMs) are prone to hallucinations (making up facts) or generating logically flawed analyses.

If an AI tool generates unusable first drafts that require 80% rework, then using it actually slows down the high-performing employee. Employees who are skilled enough to bypass the tool and produce high-quality work manually are being penalized for their superior judgment.
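The arithmetic behind this claim can be sketched in a few lines. This is a minimal illustration, not a measurement: the function name `net_minutes_saved` and its parameters are hypothetical, and it assumes that fixing a draft costs a share of the manual effort equal to the rework fraction, plus a fixed overhead for prompting and reviewing.

```python
def net_minutes_saved(manual_minutes: float,
                      rework_fraction: float,
                      overhead_minutes: float) -> float:
    """Minutes saved by using the AI tool instead of working manually.

    Assumption (hypothetical model): reworking a draft costs
    rework_fraction * manual_minutes, on top of a fixed overhead
    for prompting and reviewing the output.
    """
    if not 0.0 <= rework_fraction <= 1.0:
        raise ValueError("rework_fraction must be between 0 and 1")
    ai_minutes = overhead_minutes + rework_fraction * manual_minutes
    return manual_minutes - ai_minutes


# A 60-minute task, 80% rework, 15 minutes of prompting and review:
print(net_minutes_saved(60, 0.8, 15))  # -3.0, slower than doing it manually

# The same task when the draft needs only 30% rework:
print(net_minutes_saved(60, 0.3, 15))  # 27.0, faster than doing it manually
```

Under these assumed numbers, the tool only pays off once the rework fraction drops below 75% (the point where overhead plus rework equals the manual time). A usage mandate does not move that threshold; only a better tool does.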

Coverage of enterprise AI adoption frequently highlights the "grounding" problem. Companies must secure their data while ensuring the model draws only on verified internal sources. This technical tightrope walk often results in models that are overly cautious or simply inaccurate for complex, nuanced tasks. The battle here is between the *promise* of AI efficiency and the *current engineering reality*.

The Future of Knowledge Work: Forced Reskilling or Obsolescence?

When a massive firm enforces tool usage under the threat of stagnation, it redefines the fundamental contract between employer and employee. This is where the future implications become stark.

3. Automation Anxiety and Role Reclassification

Why is Accenture so aggressive? Because the consulting industry is facing unprecedented pressure from automation. If an AI can draft 70% of a standard proposal or analysis memo, the value shifts away from the person doing the drafting and toward the person who can effectively prompt, verify, and strategize based on that draft.

Industry forecasts from bodies like the McKinsey Global Institute often map out which routine tasks within consulting will be automated first. Proficiency in AI is thus framed not as an extra skill, but as the *new baseline* skill required to remain in the role. Not adopting the tool means accepting a lower tier of work, and consequently, a lower career ceiling.

For Career Counselors: The message is clear. The title "consultant" will soon mean "expert AI collaborator," not just "expert analyst."

4. Cultural Friction in Forced Upskilling

Mandates breed resistance. The apt comparison is not just to earlier software rollouts (like old ERP systems) but to a fundamental shift in how people are expected to think and work. Forcing employees to use immature technology feels like pointless bureaucracy. If the AI outputs garbage, employees feel they are wasting time cleaning it up just to log the usage metric.

This resistance leads to internal cynicism and burnout. Employees may learn to game the system—using the tool minimally just to register a login—without actually integrating it meaningfully into their workflow. True transformation requires **buy-in**, which is severely undermined when the tool is perceived as a management weapon rather than an enabler.

The Double-Edged Sword: Implications for AI Development

The Accenture situation serves as a massive, involuntary A/B test on AI adoption strategies. What can we learn about the future of AI from this tension?

Implication 1: Prioritizing Human-in-the-Loop Design

The "slop generator" complaint forces developers to shift focus. The goal cannot just be generating *content*; it must be generating *trustworthy, actionable* content. Future enterprise AI must be designed with a robust **Human-in-the-Loop (HITL)** interface that makes verification and correction faster than manual creation. If the tool forces the user to constantly stop and double-check basic facts, it has failed in its primary mission of efficiency.

Implication 2: Metrics Must Evolve Beyond Simple Usage

Tying promotions *only* to logins is a blunt instrument. It rewards activity over genuine impact. The future of successful AI integration will require nuanced metrics:

- Impact Score: Did using the AI tool lead to faster client delivery or improved quality scores on verified projects?

- Prompt Engineering Proficiency: Are employees demonstrating an ability to extract value, or just running the default script?

- Iterative Improvement: Is the employee's prompt sequence leading to a better final product than a non-AI approach?

If metrics remain simple usage counts, firms will end up with highly compliant employees using poor tools poorly, leading to widespread internal disillusionment.
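To make the contrast concrete, here is a minimal sketch of how a usage-count metric and an outcome-based metric can rank the same two employees differently. Everything in it is an assumption for illustration: the `EmployeeRecord` fields, the `impact_score` formula, and the sample numbers are hypothetical and are not drawn from Accenture or any real HR system.

```python
from dataclasses import dataclass


@dataclass
class EmployeeRecord:
    name: str
    ai_sessions: int        # raw usage count (logins or sessions)
    baseline_hours: float   # hours per deliverable without the tool
    actual_hours: float     # hours per deliverable with the tool
    quality_score: float    # reviewer rating of the final work, 0.0 to 1.0


def usage_score(rec: EmployeeRecord) -> int:
    """The blunt metric: more sessions means a higher score."""
    return rec.ai_sessions


def impact_score(rec: EmployeeRecord) -> float:
    """Hypothetical outcome metric: fraction of time saved, scaled by quality.

    The result is negative when the tool made the work slower.
    """
    time_saved = (rec.baseline_hours - rec.actual_hours) / rec.baseline_hours
    return time_saved * rec.quality_score


records = [
    # Heavy user whose deliverables got slower (10h -> 11h).
    EmployeeRecord("A", ai_sessions=120, baseline_hours=10,
                   actual_hours=11, quality_score=0.7),
    # Light user whose deliverables got faster (10h -> 7h).
    EmployeeRecord("B", ai_sessions=25, baseline_hours=10,
                   actual_hours=7, quality_score=0.9),
]

by_usage = max(records, key=usage_score).name
by_impact = max(records, key=impact_score).name
print(by_usage, by_impact)  # A B
```

In this toy data, the usage metric promotes employee A, who logs the most sessions but delivers more slowly, while the outcome metric favors employee B. A real scoring scheme would need far more care around baselines and quality review, but the ranking flip is the core of the argument.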

Implication 3: The Blurring Line Between "User" and "Developer"

In this new environment, the most valuable knowledge workers will be those who can both use the AI and provide high-quality feedback to IT/Engineering teams. They become crucial translators between the business need and the model's limitations. This demands a new form of literacy in which understanding the *limitations* of the technology is as important as knowing its *capabilities*.

Actionable Insights for Businesses and Employees

This moment requires strategic maneuvering from both sides of the corporate desk.

For Business Leaders and HR Strategists:

- Validate First, Mandate Second: Before tying career progression to a tool, rigorously test its utility against real-world tasks. If your tool fails basic accuracy checks, mandating its use under threat damages morale faster than it improves productivity.

- Incentivize Smart Use, Not Just Clicks: Redesign performance reviews to reward measurable AI-driven outcomes (e.g., "Reduced drafting time by 30% using AI verification") rather than mere platform engagement.

- Be Transparent About Tool Maturity: Acknowledge imperfections. Telling employees, "This tool is currently at 70% reliability, and we need your feedback to push it to 95%," fosters partnership. Calling it mandatory without acknowledging flaws breeds resentment.

For Knowledge Workers and Employees:

- Master the Interface: Regardless of current frustration, learning the required AI interface is now a professional imperative. Treat it like learning a new programming language or complex enterprise software; falling behind colleagues who do invest in the skill is a real risk here.

- Document the Flaws: If you must use a "slop generator," document *why* the output is poor. Collect empirical evidence of where the tool failed. This data is invaluable when discussing career progression or providing feedback to the IT department.

- Focus on High-Leverage Tasks: Use the AI for the 80% of tasks it *can* do adequately (summarization, formatting, first drafts), freeing up your time for the 20% only you can do (strategy, complex negotiation, ethical decision-making).

The story coming out of Accenture is a microcosm of the global AI transformation. Technology is no longer just a tool; it is becoming a fundamental requirement for professional participation. The challenge ahead is not just integrating AI, but integrating it *meaningfully*—ensuring that the technology truly elevates human performance rather than simply becoming a metric for forced compliance.