The Cognitive Leap: How DeepMind's Aletheia Signals the Shift to Slow, Deliberative AI Reasoning

For years, the dominant narrative in Artificial Intelligence revolved around speed. Large Language Models (LLMs) and generative AI excelled because they could process vast amounts of data and generate plausible answers almost instantaneously. This is akin to the human brain’s *System 1* thinking: fast, intuitive, and pattern-based.

However, as AI tackles problems of greater complexity—scientific discovery, formal verification, or long-term planning—the limitations of pure speed become starkly apparent. An answer that is generated quickly but contains a logical fallacy is useless, sometimes even dangerous. This realization is driving the next major evolution in AI architecture, powerfully exemplified by DeepMind’s recent work on **Aletheia**.

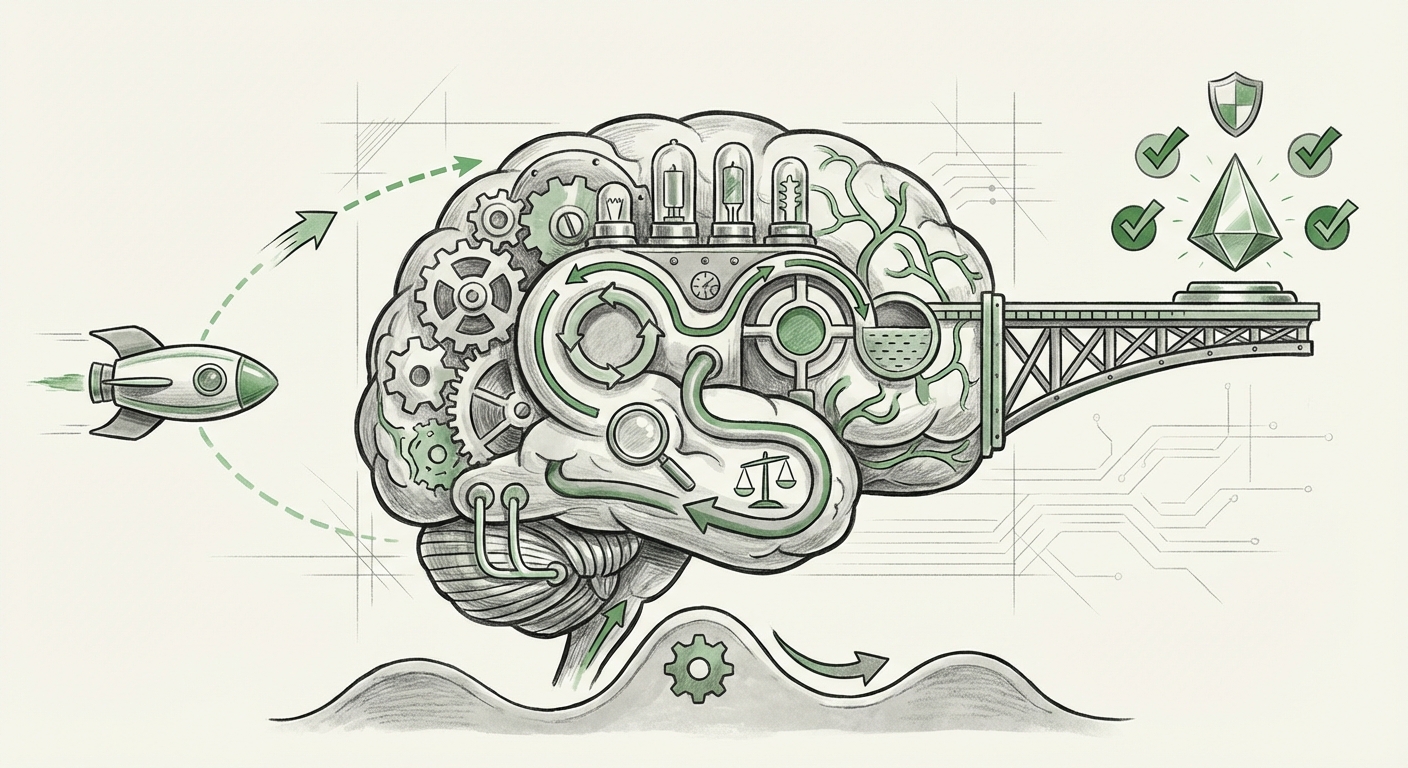

Aletheia, as highlighted in recent analyses, is not just another bigger model; it represents a deliberate move toward integrating *System 2* thinking—the slow, effortful, and verifiable reasoning process we use for complex mathematics or critical decision-making. This shift from "Fast Discovery" to "Slow Thinking, Fast Discovery" marks a crucial inflection point for the entire field. To understand its significance, we must contextualize this development against broader industry trends and cognitive theory.

The Architecture of Deliberation: System 2 AI Emerges

Human thought is often described in terms of two modes, a framing popularized by psychologist Daniel Kahneman in *Thinking, Fast and Slow*. System 1 is fast, automatic, and intuitive; System 2 is slow, effortful, and logical. Current generative AI heavily favors System 1.

DeepMind’s Aletheia architecture appears to bridge this gap. It suggests that optimal discovery requires a hybrid approach: using fast inference to generate initial hypotheses, but then employing a slower, iterative, and self-correcting mechanism to vet and refine those hypotheses before presenting a final answer. This mirrors how a scientist works: hypothesize, test, analyze errors, and refine the theory.
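
The hypothesize, test, and refine cycle described above can be sketched in a few lines of Python. This is a toy illustration only, not Aletheia's actual mechanism: the names `deliberate`, `first_inversion`, and `swap_at` are hypothetical, and the "problem" is simply repairing an almost-sorted list so that the verifier can be exact.

```python
from typing import Callable, List, Optional, Tuple

Candidate = List[int]


def deliberate(
    draft: Candidate,
    verify: Callable[[Candidate], Optional[int]],
    refine: Callable[[Candidate, int], Candidate],
    max_rounds: int = 20,
) -> Tuple[Optional[Candidate], int]:
    """Check a fast draft, repair it on failure, and repeat.

    `verify` returns None when the candidate passes, otherwise a description
    of the problem (here: an index). Returns (answer, rounds_used); answer is
    None if the round budget runs out before a candidate passes.
    """
    candidate = draft
    for round_no in range(1, max_rounds + 1):
        issue = verify(candidate)             # slow path: exact check
        if issue is None:
            return candidate, round_no        # only verified answers are returned
        candidate = refine(candidate, issue)  # use the critique to fix the draft
    return None, max_rounds


def first_inversion(xs: Candidate) -> Optional[int]:
    """Toy verifier: index of the first adjacent pair that is out of order."""
    for i in range(len(xs) - 1):
        if xs[i] > xs[i + 1]:
            return i
    return None


def swap_at(xs: Candidate, i: int) -> Candidate:
    """Toy refiner: fix the reported problem by swapping the offending pair."""
    fixed = list(xs)
    fixed[i], fixed[i + 1] = fixed[i + 1], fixed[i]
    return fixed


# A "System 1" draft that is almost, but not quite, sorted.
answer, rounds = deliberate([1, 3, 2, 5, 4], first_inversion, swap_at)
print(answer, rounds)  # [1, 2, 3, 4, 5] 3
```

The design point is that the loop never returns an unverified candidate: it either hands back something the verifier accepted or reports that the budget ran out. In a real system the draft would come from a model and the verifier might be a proof checker, a test suite, or a second model.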

This quest for System 2 capabilities is not exclusive to DeepMind. Research across the field is converging on similar solutions. Techniques like **Chain-of-Thought (CoT) prompting** are early, simple attempts at forcing models to articulate intermediate reasoning steps—a rudimentary form of slow thinking. However, dedicated architectures like Aletheia suggest we are moving toward built-in mechanisms for this deliberation.

Other research points in the same direction. Work on **self-correction and iterative refinement** in large language models describes methods in which a model samples several independent reasoning chains, checks them against one another for consistency, and revises or discards the answers that disagree. This is computationally expensive, since every extra chain or revision pass costs additional inference time (hence the "slow thinking"), but the reported accuracy gains on hard problems can be substantial.
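
One simple way to check consistency across independent reasoning chains is a majority vote over their final answers. The sketch below assumes the chains have already been sampled; `majority_vote` and the `chain_answers` values are made up for illustration and do not come from any specific paper or from Aletheia.

```python
from collections import Counter
from typing import Hashable, Sequence, Tuple


def majority_vote(final_answers: Sequence[Hashable]) -> Tuple[Hashable, float]:
    """Return the most common final answer and the share of chains that agree.

    Ties go to the answer that appeared first, which is how
    Counter.most_common orders elements with equal counts.
    """
    if not final_answers:
        raise ValueError("need at least one reasoning chain")
    winner, votes = Counter(final_answers).most_common(1)[0]
    return winner, votes / len(final_answers)


# Final answers from five hypothetical, independently sampled reasoning chains.
chain_answers = ["42", "42", "41", "42", "17"]
answer, agreement = majority_vote(chain_answers)
print(answer, agreement)  # 42 0.6
```

The cost is visible in the input: five chains means roughly five times the inference work of a single pass. The agreement share is also a useful signal in its own right, since a low value suggests the question deserves further refinement or human review.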

Why This Matters for Engineers

For AI researchers and ML engineers, this means the future might involve designing models not just for parameter count, but for *cognitive workflow*. Instead of demanding one perfect output in a single pass, engineering focus will shift toward designing loops, verifiers, and critique modules that force the AI to argue with itself until a logically sound conclusion is reached. This elevates model performance not just by scale, but by design integrity.

The Mandate for Trust: Neuro-Symbolic AI and Verifiability

One of the most compelling reasons to invest in "slow thinking" is the increasing demand for trust. As AI moves from generating marketing copy to assisting in drug discovery or autonomous financial modeling, its outputs must be auditable. This is where the concept of **Neuro-Symbolic AI** becomes crucial.

Pure deep learning (the "neuro" part) is fantastic at recognizing messy patterns in raw data, but it struggles with formal logic, rules, and mathematical certainty (the "symbolic" part). Aletheia’s emphasis on structured reasoning suggests it is leaning into this hybrid approach. It aims to wrap the intuitive power of neural networks within a framework that adheres to logical constraints.

Consider a medical scenario. A System 1 AI might quickly suggest a diagnosis based on millions of similar cases. A System 2-enabled, neuro-symbolic approach would generate the same suggestion but then test it against formalized symbolic rules: clinical guidelines, regulatory requirements, and hard constraints drawn from the patient’s history, such as known allergies. If the suggestion violates a hard rule, the system rejects it and reasons toward an alternative that passes every check.
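
The reject-on-violation step can be sketched as a ranked list of neural suggestions filtered through explicit rules. Everything here is hypothetical: the drug names, the `Patient` and `Rule` types, and both rules are invented for illustration and carry no medical meaning.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Optional, Sequence, Tuple


@dataclass(frozen=True)
class Patient:
    age: int
    allergies: FrozenSet[str]


@dataclass(frozen=True)
class Rule:
    name: str
    violated_by: Callable[[str, Patient], bool]


# Hypothetical hard rules; real ones would come from a curated knowledge base.
RULES: List[Rule] = [
    Rule("patient is allergic", lambda drug, p: drug in p.allergies),
    Rule("below minimum age", lambda drug, p: drug == "drug_b" and p.age < 12),
]


def first_admissible(
    ranked: Sequence[str], patient: Patient, rules: Sequence[Rule]
) -> Tuple[Optional[str], Dict[str, List[str]]]:
    """Walk the model's ranked suggestions and return the first that breaks no rule.

    Also returns an audit trail mapping each rejected suggestion to the
    names of the rules it violated.
    """
    rejected: Dict[str, List[str]] = {}
    for drug in ranked:
        broken = [r.name for r in rules if r.violated_by(drug, patient)]
        if not broken:
            return drug, rejected
        rejected[drug] = broken
    return None, rejected


patient = Patient(age=9, allergies=frozenset({"drug_a"}))
# Stand-in for a neural model's ranked output, best guess first.
choice, audit = first_admissible(["drug_a", "drug_b", "drug_c"], patient, RULES)
print(choice)  # drug_c
print(audit)   # {'drug_a': ['patient is allergic'], 'drug_b': ['below minimum age']}
```

The audit trail is the part that matters for trust: each rejection names the rule that caused it, so a reviewer can see why the top-ranked suggestion was not used.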

Implications for High-Stakes Industries

This trend is essential for AI adoption in regulated industries. Regulatory bodies are increasingly concerned with the "black box" nature of current models. Architectures that facilitate **formal verification**—proving that the AI's reasoning adheres to a specific set of constraints—will unlock massive commercial potential in finance, aerospace, and healthcare. Businesses betting on AI compliance must prioritize architectures that inherently support this structural validation.

The Gemini Context: Reasoning Across Modalities

DeepMind’s reasoning work also needs to be read against the ecosystem it belongs to. **Google’s Gemini** model family showcased multimodal reasoning: the ability to work across text, images, audio, and code within a single model. Gemini is a foundation model; reasoning-focused systems like Aletheia are plausibly the kind of specialized component that enables the most demanding capabilities within these larger systems.

If Gemini excels at tasks that require integrating visual evidence with textual instructions (e.g., analyzing a complex engineering diagram described in a long document), it plausibly relies on underlying mechanisms for iterative, deliberate thought, the very mechanisms Aletheia champions. Moving "beyond transformers" is less about abandoning the transformer block and more about adding control layers *on top* of it to manage complex, stateful reasoning.

For the industry, this means the future LLM is not a singular, monolithic entity. It is an integrated agent composed of specialized reasoning modules, where one module handles fast retrieval, another handles visual integration, and a third—perhaps Aletheia’s core concept—handles the slow, sequential logic necessary to synthesize the final, reliable answer.

Actionable Insights: Preparing for Deliberative AI

The transition toward System 2 AI requires a shift in how we develop, deploy, and even measure AI systems. Here are actionable insights based on this cognitive leap:

- Re-evaluate Performance Metrics: Accuracy alone is insufficient. Future benchmarks must heavily weigh *logical consistency*, *verifiability scores*, and the *depth of the reasoning chain* required to reach a solution, not just the final outcome.

- Embrace Hybrid Pipelines: Businesses should begin structuring their AI deployments as pipelines rather than single calls. A fast LLM generates drafts, but a secondary, dedicated reasoning module (potentially inspired by Aletheia) must validate those drafts against business logic or domain constraints.

- Invest in Explainability Tools: As reasoning becomes slower and more complex, the tools needed to visualize and debug that reasoning must advance. We need better visualization layers for iterative refinement processes to ensure human operators can trust the system’s slow path.

- Focus on Formalization: For any critical application, identify the core symbolic rules (the "ground truth" axioms) that the AI must never violate. Architectural readiness for Neuro-Symbolic integration will determine which systems succeed in regulated environments.
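
As a rough illustration of the metrics point in the list above, a benchmark report can carry consistency and reasoning depth alongside accuracy. This is a sketch under stated assumptions: each question is sampled several times, each sample records a final answer and a step count, and the names `evaluate`, `runs`, and `gold` are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

# One sampled run: (final answer, number of reasoning steps it took).
Run = Tuple[str, int]


def evaluate(runs: Dict[str, List[Run]], gold: Dict[str, str]) -> Dict[str, float]:
    """Score repeated runs per question on three axes.

    accuracy    - share of questions whose most common answer matches gold
    consistency - mean share of runs agreeing with the most common answer
    mean_steps  - average reasoning-chain length across all runs
    """
    correct = 0
    agreement = 0.0
    steps: List[int] = []
    for qid, samples in runs.items():
        answers = [answer for answer, _ in samples]
        top, votes = Counter(answers).most_common(1)[0]
        correct += int(top == gold[qid])
        agreement += votes / len(samples)
        steps.extend(n for _, n in samples)
    n_questions = len(runs)
    return {
        "accuracy": correct / n_questions,
        "consistency": agreement / n_questions,
        "mean_steps": sum(steps) / len(steps),
    }


# Made-up results: three runs each for two questions.
runs = {
    "q1": [("4", 3), ("4", 5), ("4", 4)],
    "q2": [("9", 2), ("8", 6), ("9", 4)],
}
gold = {"q1": "4", "q2": "8"}
report = evaluate(runs, gold)
print(report["accuracy"], report["mean_steps"])  # 0.5 4.0
```

In this made-up data the model is confidently wrong on `q2`: two of three runs agree on an incorrect answer. Accuracy alone would hide that pattern, which is why the consistency figure is reported next to it rather than folded into a single score.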

Conclusion: The Dawn of Truly Intelligent Systems

The excitement surrounding DeepMind's Aletheia architecture is not hyperbole; it marks a fundamental maturation in AI research. For years, we have been building incredibly fast calculators. Now, we are beginning to build thoughtful deliberators.

The integration of slow, effortful reasoning alongside fast pattern recognition moves AI closer to what we intuitively understand as "intelligence." It promises a future where AI isn't just better at predicting what comes next, but better at figuring out what *must* come next based on established principles. This cognitive architecture promises reliability, verifiability, and a genuine acceleration in scientific and complex industrial discovery. The race is no longer just about who has the biggest model; it’s about who has the best, most trustworthy thought process.