DeepMind's Aletheia: Why 'Slow Thinking' AI is the Next Frontier in Reasoning

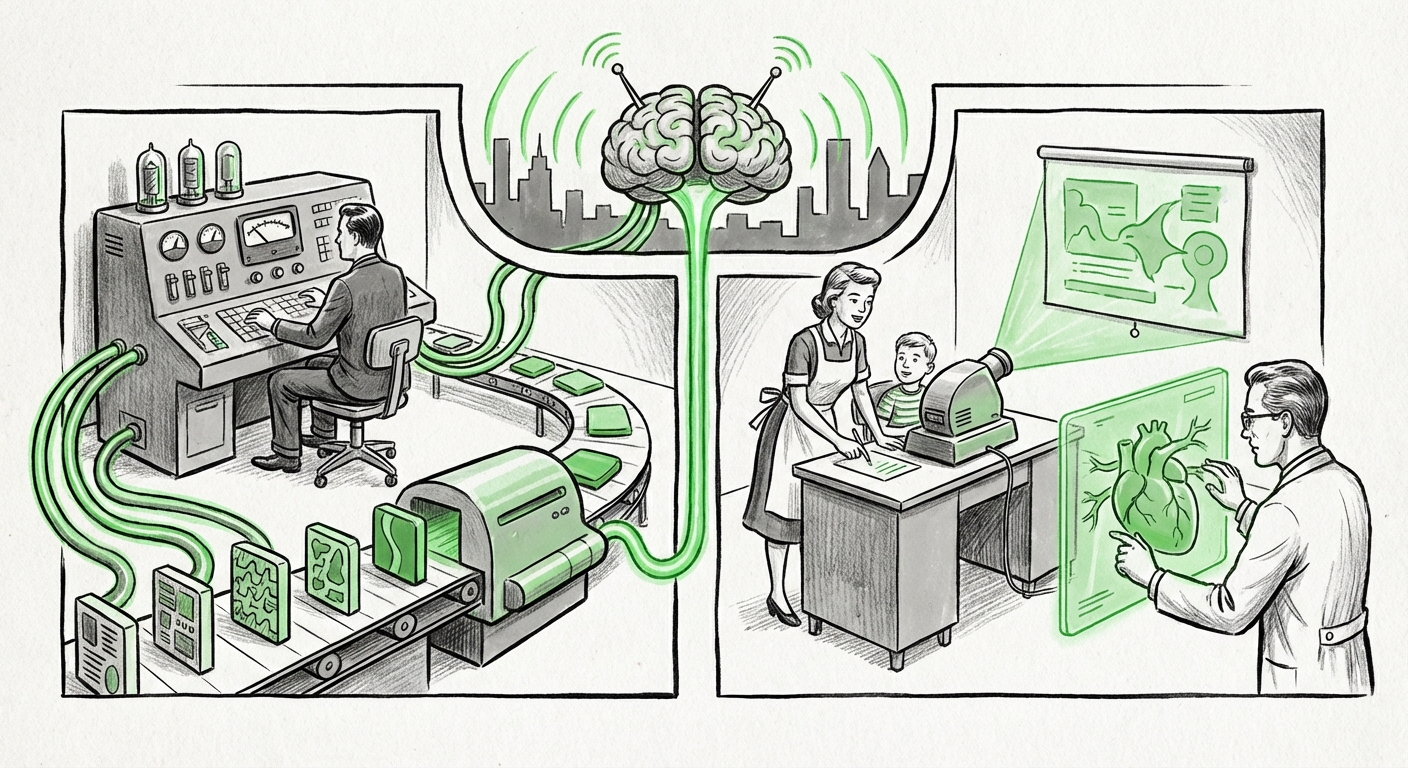

The artificial intelligence landscape has, for years, been defined by speed. Faster processing, quicker response times, and near-instantaneous inference at massive scale have driven both research and commercial adoption. However, a recent development from DeepMind, the Aletheia architecture, signals a crucial pivot. As highlighted in analyses like "The Sequence AI of the Week #809," Aletheia champions "Slow Thinking, Fast Discovery." This suggests that the next major breakthrough in AI won't be about getting answers faster, but about getting better, more verifiable answers by giving the model time to truly reason.

The Speed Trap: Where Current LLMs Fall Short

Today’s Large Language Models (LLMs), built largely on the Transformer architecture, are masters of prediction. Given a sequence of text (a prompt), they compute a probability distribution over the next token, choose or sample a token from it, and repeat. This process is incredibly fast, making chatbots and content generation seamless. However, this speed often masks a critical deficiency: a lack of deep, verifiable reasoning.

Think of it like a math test. A fast LLM might guess the answer based on seeing similar problems before (pattern matching). A human taking a challenging exam, however, uses 'slow thinking': breaking the problem down, checking assumptions, trying different formulas, and only then writing down the final result. This deliberative quality is what Aletheia appears to be modeling.

To understand Aletheia’s breakthrough, we must look at how reasoning frameworks have evolved. Initial advances came through techniques like Chain-of-Thought (CoT) prompting, where we ask the model to "think step-by-step." While effective, CoT commits the model to a single, linear path: if an early step is even slightly flawed, every later step builds on the error and the conclusion is compromised.

The Evolution of Reasoning: Beyond Linear CoT

Aletheia enters a field that has already moved beyond single-path reasoning. Contrasting two established methods, Chain-of-Thought (CoT) and Tree-of-Thoughts (ToT), helps map where Aletheia fits:

- Chain-of-Thought (CoT): Linear path. One thought leads directly to the next. Fast but brittle.

- Tree-of-Thoughts (ToT): Branching exploration. The model generates several candidate next steps, evaluates them, pursues the most promising branches, and backtracks when a path leads to a dead end. This is inherently slower but far more robust.
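
To make the contrast concrete, here is a minimal Tree-of-Thoughts-style search on the classic "Game of 24" puzzle (combine four numbers with + - * / to reach 24). It is a toy sketch, not Aletheia's method: `propose()` and `score()` are hypothetical stand-ins for the LLM calls that, in a real ToT system, generate and rate candidate thoughts. The search expands the most promising branch first and backtracks whenever a branch dead-ends, which a single linear chain cannot do.

```python
from fractions import Fraction
from itertools import combinations

# Toy "Game of 24": combine all the numbers with + - * / to reach the target.
# propose() and score() are hypothetical stand-ins for the LLM calls that
# generate and evaluate candidate thoughts in Tree-of-Thoughts.


def propose(state):
    """Yield child states: pick two numbers and combine them with one operation."""
    numbers, trace = state
    for i, j in combinations(range(len(numbers)), 2):
        a, b = numbers[i], numbers[j]
        rest = [n for k, n in enumerate(numbers) if k not in (i, j)]
        options = [(a + b, f"{a} + {b}"), (a * b, f"{a} * {b}"),
                   (a - b, f"{a} - {b}"), (b - a, f"{b} - {a}")]
        if b != 0:
            options.append((a / b, f"{a} / {b}"))
        if a != 0:
            options.append((b / a, f"{b} / {a}"))
        for value, step in options:
            yield rest + [value], trace + [f"{step} = {value}"]


def score(state, target):
    """Heuristic evaluator: a state holding a number near the target looks promising."""
    numbers, _ = state
    return -min(abs(n - target) for n in numbers)


def solve(nums, target=24):
    """Depth-first tree search with backtracking; returns the list of steps or None."""
    stack = [([Fraction(n) for n in nums], [])]
    while stack:
        numbers, trace = stack.pop()
        if len(numbers) == 1:
            if numbers[0] == target:
                return trace
            continue  # dead end: drop this branch and backtrack
        children = sorted(propose((numbers, trace)), key=lambda s: score(s, target))
        stack.extend(children)  # highest-scoring child is pushed last, so it is explored first
    return None


print(solve([4, 7, 8, 8]))
print(solve([1, 1, 1, 1]))  # no combination of four 1s reaches 24
```

A full ToT system would also prune low-scoring branches and cap the breadth of each level to control cost; this toy explores exhaustively, which is only affordable because the tree is tiny.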

Aletheia seems to formalize this search into a deliberate, potentially multi-round "slow thinking" phase, akin to a deep, selective search within a vast reasoning tree. The aim is for the model to explore the problem space thoroughly before committing to the "Fast Discovery": the final, high-accuracy output.

The Architecture of Deliberation: Convergence with Symbolic Logic

Why does DeepMind believe this "slow thinking" is necessary? Because true intelligence, the kind needed for scientific breakthroughs, requires more than predicting the next word: it requires understanding structure and causality, and adhering to formal rules. This naturally leads to the conversation surrounding Neuro-Symbolic AI.

Pure deep learning is excellent at the "neuro" side—learning complex patterns from raw data. However, it often fails at the "symbolic" side—manipulating abstract concepts logically (e.g., "If A implies B, and B is false, then A must be false").
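
The example rule above (modus tollens) is the kind of inference a symbolic component can check exhaustively rather than guess at. A minimal sketch, assuming just two propositional variables: enumerate the truth table and confirm the conclusion holds in every row where all the premises hold. The helper names are illustrative only, not part of any Aletheia API.

```python
from itertools import product


def implies(p, q):
    """Material implication: p -> q is false only when p is true and q is false."""
    return (not p) or q


def is_valid(premises, conclusion):
    """Valid iff the conclusion is true in every assignment where all premises are true."""
    for a, b in product([True, False], repeat=2):
        if all(premise(a, b) for premise in premises) and not conclusion(a, b):
            return False  # counterexample found
    return True


# Modus tollens: A -> B, not B, therefore not A.
modus_tollens = is_valid([lambda a, b: implies(a, b), lambda a, b: not b],
                         lambda a, b: not a)
# Affirming the consequent: A -> B, B, therefore A (a classic fallacy).
affirming_consequent = is_valid([lambda a, b: implies(a, b), lambda a, b: b],
                                lambda a, b: a)
print(modus_tollens, affirming_consequent)  # True False
```

The fallacy fails on the row A = false, B = true, exactly the kind of subtly wrong but plausible-sounding step that pattern matching alone can let through.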

Aletheia’s deliberative process suggests it is integrating symbolic checks or structured planning into its neural inference loop. The "slow" part isn't just generating more tokens; it’s likely dedicated to verification, constraint satisfaction, and iterative refinement guided by internal logic modules. This mirrors the grand ambition of Neuro-Symbolic AI: creating a hybrid system that learns from data (neuro) while reasoning with rigor (symbolic).
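
The generate-verify-refine pattern can be sketched on a deliberately simple problem: the integer square root of n. A fast but imprecise proposer (floating-point `math.sqrt`, standing in for a neural guess) is paired with an exact symbolic verifier and an integer Newton step as the refinement. This only illustrates the general loop under those assumptions; it does not describe Aletheia's actual internals.

```python
import math


def propose(n):
    """Fast approximate guess, standing in for a neural proposer.
    Floats carry 53 bits of precision, so for large n the guess can be wrong.
    (Only valid for n small enough to convert to a float.)"""
    return int(math.sqrt(n))


def verify(n, r):
    """Exact symbolic check: r is the integer square root of n iff r*r <= n < (r+1)**2."""
    return r * r <= n < (r + 1) * (r + 1)


def refine(n, r):
    """One integer Newton step toward the true root (requires r >= 1)."""
    return (r + n // r) // 2


def solve_isqrt(n, max_rounds=50):
    """Propose once, then verify and refine until the exact check passes."""
    r = propose(n)
    for rounds in range(max_rounds):
        if verify(n, r):
            return r, rounds
        r = refine(n, r)
    raise RuntimeError("no verified answer within the round budget")


print(solve_isqrt((2**53 + 1) ** 2))
```

For inputs near 2**106 the float guess can be off by one, so the verifier rejects it and the refinement step corrects it. The design point is that the cheap proposer never has to be trusted: only answers that pass the exact check are returned.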

A Legacy of Hard Science: DeepMind's Iterative Success

This focus on deep, resource-intensive search is not new for DeepMind. Their history suggests it is a recognized path to solving monumental problems. Consider AlphaFold, which predicts protein structures with remarkable accuracy. Its answers do not come from a single quick pass: the model repeatedly feeds its own predictions back in to refine them, and a final physics-based relaxation step removes physically implausible geometry.

Just as AlphaFold benefits from iterative refinement toward physically plausible structures, Aletheia suggests that advanced problem-solving in mathematics, complex strategy, or drug discovery requires the AI to engage in similarly time-consuming, iterative refinement guided by logical constraints.

The Trade-Off: Latency vs. Reliability in Deployment

While the scientific promise of Aletheia is immense, its architectural shift presents immediate, practical challenges for businesses and product managers. The core trade-off is stark: accuracy versus latency and cost.

For a customer asking a chatbot "What is the capital of France?", speed is paramount; any delay is frustrating. For a task like "Develop a novel catalyst for carbon capture," the kind of problem an Aletheia-style system targets, latency is secondary to correctness, reliability, and the ability to generate verifiable steps.

This distinction carves out a future where AI solutions will specialize:

- Fast LLMs: Used for high-volume, low-stakes interactions (customer service, quick summaries, content drafting).

- Slow Reasoning Engines (like Aletheia): Reserved for high-value, high-stakes applications (scientific modeling, legal analysis, complex engineering design).

Analyses of inference costs for multi-step reasoning models show that these slower architectures demand significantly more computation per query, since every explored branch and verification pass means additional inference. CTOs must now grapple with a new cost metric: the "reasoning cost." Deploying an Aletheia-like model for everyday tasks would be prohibitively expensive and frustratingly slow. The challenge for the next few years will be optimizing the trigger: knowing precisely when to switch from the fast, pattern-matching mode to the slow, deliberative reasoning mode.
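
A back-of-the-envelope model of reasoning cost under routing: if a share s of queries goes to the slow engine, the average cost per query is router cost + (1 - s) x fast cost + s x slow cost. The unit costs below are made-up relative numbers chosen for illustration, not measurements of Aletheia or any real model.

```python
def expected_cost(slow_share, fast_cost, slow_cost, router_cost=0.0):
    """Average cost per query when a router sends slow_share of traffic to the slow engine."""
    if not 0.0 <= slow_share <= 1.0:
        raise ValueError("slow_share must be between 0 and 1")
    return router_cost + (1 - slow_share) * fast_cost + slow_share * slow_cost


# Hypothetical relative units: one fast call costs 1, one slow reasoning call costs 50,
# and classifying a query costs 0.1. These are illustrative, not measured, numbers.
FAST, SLOW, ROUTER = 1.0, 50.0, 0.1

print(f"all fast:   {expected_cost(0.0, FAST, SLOW):.2f}")
print(f"all slow:   {expected_cost(1.0, FAST, SLOW):.2f}")
print(f"5% routed:  {expected_cost(0.05, FAST, SLOW, ROUTER):.2f}")
```

With these hypothetical numbers, routing only the hardest 5% of traffic keeps the average at roughly 3.5 times the fast model's cost, while sending everything to the slow engine multiplies it fifty-fold. The router's accuracy matters as much as its own cost: every misrouted easy query pays the slow price.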

Future Implications: Actionable Insights for Technology Leaders

The rise of "Slow Thinking" models compels leaders across technology and industry to adjust their strategic roadmaps. This is not merely an academic exercise; it redefines what AI is capable of delivering.

1. Embrace Specialized Reasoning Pipelines

Future AI systems will rarely be monolithic. Companies should begin designing hybrid pipelines where incoming queries are first routed by a lightweight classifier. Simple queries go to fast models; complex, open-ended, or deductive queries are shunted to high-reasoning engines. This optimizes both user experience and operational expenditure.
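
A lightweight router can be sketched as a scoring function plus a threshold. The cue-phrase list, length bonus, threshold, and engine names below are hypothetical placeholders; a production system would more likely use a small trained classifier, but the control flow would look similar.

```python
import re
from dataclasses import dataclass

# Hypothetical cue phrases; a real router would more likely be a small trained classifier.
REASONING_CUES = ("prove", "derive", "design", "optimize", "why", "step by step")


@dataclass
class Route:
    engine: str   # "fast_llm" or "slow_reasoner" (placeholder names)
    score: float  # complexity estimate that drove the decision


def complexity_score(query: str) -> float:
    """Count reasoning cue phrases (whole words only) and add a small bonus for long prompts."""
    q = query.lower()
    cue_hits = sum(1 for cue in REASONING_CUES if re.search(rf"\b{re.escape(cue)}\b", q))
    length_bonus = min(len(q.split()) / 50, 1.0)
    return cue_hits + length_bonus


def route(query: str, threshold: float = 1.0) -> Route:
    """Send queries at or above the threshold to the slow engine, everything else to the fast one."""
    s = complexity_score(query)
    return Route("slow_reasoner" if s >= threshold else "fast_llm", s)


print(route("What is the capital of France?"))
print(route("Design a catalyst for carbon capture and derive its reaction pathway."))
```

Word-boundary matching keeps "improve" from triggering the cue "prove". Tuning the threshold trades operating cost against the risk of sending hard queries to the fast model.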

2. Invest in Verifiability Over Plausibility

For domains like finance, engineering, and medicine, an AI answer that sounds plausible but is subtly wrong can be catastrophic. The Aletheia philosophy forces a focus on explainability and auditability. Future procurement should prioritize models that can articulate how they reached a conclusion, providing the reasoning steps (the "slow thinking") that engineers and scientists can stress-test.
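
One way to make reasoning steps stress-testable is to have the model emit them in a structured form that can be re-checked mechanically. The sketch assumes a hypothetical trace format of (arithmetic expression, claimed result) pairs and re-evaluates each step with a small `ast`-based evaluator rather than `eval`, so only numbers, parentheses, unary minus, and + - * / are accepted.

```python
import ast
import operator

# Only these binary operators are allowed in a checked step.
OPS = {ast.Add: operator.add, ast.Sub: operator.sub,
       ast.Mult: operator.mul, ast.Div: operator.truediv}


def evaluate(expr: str) -> float:
    """Safely evaluate a plain arithmetic expression by walking its syntax tree."""
    def walk(node):
        if isinstance(node, ast.Expression):
            return walk(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in OPS:
            return OPS[type(node.op)](walk(node.left), walk(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -walk(node.operand)
        raise ValueError(f"unsupported syntax: {ast.dump(node)}")
    return walk(ast.parse(expr, mode="eval"))


def audit(trace):
    """Re-check every (expression, claimed_result) step; return indices of failing steps."""
    return [i for i, (expr, claimed) in enumerate(trace)
            if abs(evaluate(expr) - claimed) > 1e-9]


trace = [("12 * 7", 84), ("84 + 16", 100), ("100 / 8", 12)]  # last step is wrong: 12.5
print(audit(trace))  # [2]
```

Because each step is checked independently, an auditor can pinpoint exactly where a chain of reasoning goes wrong instead of judging only the final answer.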

3. The New Talent Gap

The talent required to build and manage these systems moves beyond prompt engineering. We need engineers deeply familiar with graph theory, search algorithms, formal verification, and the integration of symbolic knowledge bases. The intersection of classical computer science and modern deep learning is becoming the hottest area for AI innovation.

Conclusion: The Dawn of Deliberate AI

DeepMind’s Aletheia architecture is a powerful signal that the AI community is maturing. We are moving past the phase of simply making models bigger and faster toward making them fundamentally smarter through structure and deliberation. By prioritizing "Slow Thinking," we are building AI systems capable of tackling the truly hard problems—the ones that require patience, exploration, and rigorous self-correction.

The future of AI, particularly in driving scientific and industrial discovery, will not belong solely to the fastest processors, but to the most thoughtful architectures.