The Slow Path to True AI: DeepMind's Aletheia and the Rise of Verifiable Reasoning

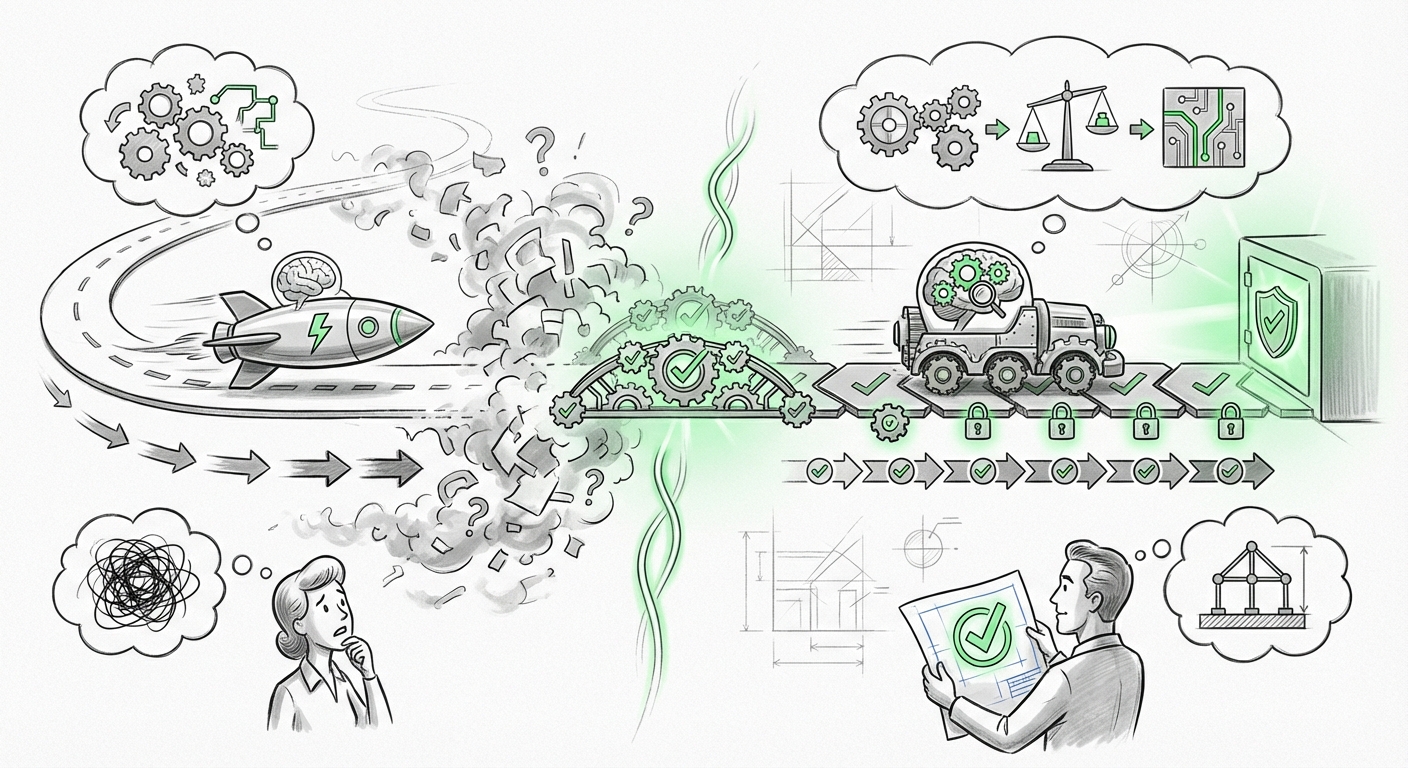

For years, the dominant narrative in Artificial Intelligence has been about speed. Faster training, faster inference, larger models that can ingest vast swaths of the internet and return answers almost instantaneously. This is the realm of System 1 thinking: fast, intuitive, and pattern-matching, exemplified by the remarkable fluency of modern Large Language Models (LLMs).

However, a significant shift is underway, one that suggests true, reliable intelligence requires something counterintuitive: slowing down. DeepMind’s recent Aletheia architecture, highlighted by "The Sequence AI of the Week #809," seems to champion this evolution. It points toward a future where AI doesn't just generate an answer but carefully checks its own work, prioritizing verifiability over raw speed. If that reading is right, this is more than an incremental update: it marks a step along a necessary trajectory toward robust, trustworthy Artificial General Intelligence (AGI).

The Need for "Slow Thinking": Moving Beyond Brute Force

The core issue with current state-of-the-art generative models is that self-correction is not built into the generation process. They excel at statistical pattern completion, which often yields outputs that are plausible but fundamentally incorrect (hallucinations). Aletheia appears to tackle this head-on by introducing a mechanism for "slow thinking": a deliberative verification step woven directly into the model’s architecture.

Imagine you are solving a complex math problem. The fast way (System 1) is to immediately write down what looks like the right formula. The slow way (System 2) is to stop, check your inputs, verify the formula against known rules, and then execute the steps meticulously. Aletheia seems designed to operationalize this System 2 rigor within a deep learning framework.
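A classic illustration of this gap, popularized by Kahneman, is the bat-and-ball puzzle. A bat and a ball cost $1.10 together, and the bat costs $1.00 more than the ball. The intuitive answer for the ball is 10 cents, but the correct answer is 5 cents. The Python below is a toy sketch of the slow path in that setting, not a description of how Aletheia works. It accepts the fast guess only if the guess passes an explicit constraint check, and otherwise derives the answer. All function names are hypothetical.

```python
# Toy System 1 vs System 2 on the bat-and-ball puzzle (hypothetical helpers).
# Amounts are integer cents to avoid floating-point rounding.
TOTAL = 110       # bat + ball = $1.10
DIFFERENCE = 100  # bat - ball = $1.00


def fast_guess() -> int:
    """System 1: subtract the two numbers that 'look' relevant."""
    return TOTAL - DIFFERENCE  # 10 cents: fluent, plausible, wrong


def satisfies_constraints(ball: int) -> bool:
    """System 2 check: does the candidate satisfy both stated rules?"""
    bat = ball + DIFFERENCE
    return bat + ball == TOTAL


def slow_solve() -> int:
    """System 2 derivation: ball + (ball + DIFFERENCE) = TOTAL."""
    remainder = TOTAL - DIFFERENCE
    if remainder % 2 != 0:
        raise ValueError("no whole-cent solution")
    return remainder // 2


def answer() -> int:
    guess = fast_guess()
    if satisfies_constraints(guess):
        return guess
    # The guess failed verification, so pay the cost of deliberate solving.
    solution = slow_solve()
    assert satisfies_constraints(solution)
    return solution


print(fast_guess(), satisfies_constraints(fast_guess()))  # 10 False
print(answer())  # 5
```

The check costs only two additions, while the derivation requires setting up and solving an equation. That asymmetry is why the slow path runs only when the fast answer fails the check.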

Corroboration Point 1: The Resurgence of Neuro-Symbolic AI

This need for rigorous checking leads naturally to the integration of different AI paradigms. Aletheia's design strongly echoes the current push in the field toward Hybrid Neuro-Symbolic AI. Deep learning (the 'neuro' part) is excellent at handling messy, real-world data such as vision, text, and sound. Symbolic AI (the 'symbolic' part) excels at logic, rules, constraints, and verifiable deduction.

If Aletheia employs deliberate validation steps, it may well use symbolic reasoning tools or internal logical modules to check whether its statistically generated outputs are actually valid, not merely plausible. This marriage matters because symbolic systems offer built-in explainability and strict adherence to rules, qualities that pure neural networks cannot guarantee.
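Here is one way to picture the 'neuro' plus 'symbolic' split. Assume the neural side emits its claims as structured facts. A forward-chaining checker over Horn-clause rules then plays the symbolic module: the generator proposes a conclusion, and the checker accepts it only if the conclusion can be derived from known facts. The facts, rules, and function names below are hypothetical. Nothing here is claimed to reflect Aletheia's internals.

```python
from typing import FrozenSet, List, Set, Tuple

# A Horn rule: if every premise is known, the conclusion is known too.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply rules repeatedly until no new fact can be derived (a fixpoint)."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in derived and premises <= derived:
                derived.add(conclusion)
                changed = True
    return derived


def verify_claim(claim: str, facts: Set[str], rules: List[Rule]) -> bool:
    """Accept a generated claim only if the symbolic rules can derive it."""
    return claim in forward_chain(facts, rules)


# Hypothetical knowledge base for an expense-policy check.
facts = {"amount_over_limit", "no_second_approval"}
rules: List[Rule] = [
    (frozenset({"amount_over_limit", "no_second_approval"}), "policy_violation"),
    (frozenset({"policy_violation"}), "flag_for_audit"),
]

# Claims as a (hypothetical) neural generator might emit them.
for claim in ["flag_for_audit", "approve_payment"]:
    print(claim, verify_claim(claim, facts, rules))
# flag_for_audit True
# approve_payment False
```

A `False` here means "not derivable from what the system knows" (a closed-world reading), not "proven false." A production checker would need to be explicit about which of those two it is reporting.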

For AI researchers and ethicists, this corroborates the idea that the next frontier isn't just scaling up transformers, but making them smarter by giving them the formal tools of logic. It’s about building AI that can not only speak fluently but also argue soundly.

Corroboration Point 2: The Imperative of Formal Verification

The most compelling aspect of "slow discovery" is verifiability. When an AI suggests a new drug compound or designs a critical piece of infrastructure code, stakeholders cannot afford guesses; they require proof. This is where the concept of Formal Verification comes into play.

Formal verification of software aims to mathematically prove that a program meets its specification for all possible inputs. Applying this rigor to probabilistic neural networks is extremely challenging. If Aletheia incorporates internal checks, it is presumably attempting to bridge this gap, perhaps by generating a "proof sketch" alongside its primary output that a simpler, trusted logic engine can vet quickly.
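The "proof sketch plus trusted checker" idea relies on an asymmetry that is well established in computer science: for many problems, checking a proposed answer is far cheaper than finding one. Boolean satisfiability (SAT) is the textbook case. The sketch below assumes the generator returns a candidate variable assignment as its certificate. The checker is a few lines long and easy to audit, which is what makes it trustworthy. Clauses use the DIMACS convention of signed integers, and the example formula is hypothetical.

```python
from typing import Dict, List

# A clause is a list of DIMACS-style literals: 3 means x3, -3 means NOT x3.
# A CNF formula is a list of clauses that must all be true.
Clause = List[int]


def check_certificate(cnf: List[Clause], assignment: Dict[int, bool]) -> bool:
    """Return True iff the assignment satisfies every clause.

    Runs in time linear in the size of the formula, no matter how much
    search the generator needed to find the assignment.
    """
    for clause in cnf:
        # An incomplete certificate is rejected outright.
        if any(abs(lit) not in assignment for lit in clause):
            return False
        # A clause holds if at least one literal evaluates to True.
        if not any(assignment[abs(lit)] == (lit > 0) for lit in clause):
            return False
    return True


# (x1 OR NOT x2) AND (x2 OR x3) AND (NOT x1 OR NOT x3)
cnf = [[1, -2], [2, 3], [-1, -3]]

good = {1: True, 2: True, 3: False}  # a correct candidate
bad = {1: True, 2: False, 3: True}   # violates the third clause

print(check_certificate(cnf, good))  # True
print(check_certificate(cnf, bad))   # False
```

The checker vouches only for the answers it accepts. Rejecting a candidate says nothing about whether the formula is satisfiable at all. Certifying unsatisfiability requires a different kind of evidence, such as the DRAT proofs that modern SAT solvers can emit.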

This trend is vital for regulatory compliance and safety. As AI moves into high-stakes domains (medicine, autonomous vehicles), the ability to query the system, "How sure are you, and what is your step-by-step evidence?" becomes non-negotiable. Aletheia seems to be an architectural answer to the pressing demand for Trustworthy AI.

Corroboration Point 3: Engineering System 2 Thinking

The language used, "slow thinking," is a direct nod to the System 1/System 2 dichotomy Daniel Kahneman popularized in his book Thinking, Fast and Slow. Modern LLMs are inherently System 1 engines, trained to predict the most probable next token from vast amounts of data. Although they generate text one token at a time, they do not pause to evaluate what they have already written; they simply keep going.

The ambition of researchers today is to architect a System 2 capability into these powerful engines. This involves creating internal loops where the model is forced to pause, evaluate intermediate hypotheses, assess uncertainty, and perhaps even discard a promising but flawed path. This deliberate contemplation is the hallmark of deep reasoning.
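The loop described above has a classic algorithmic counterpart in backtracking search with pruning: propose a step, evaluate the intermediate state, and abandon any path that cannot succeed. The toy below is an illustrative stand-in, not Aletheia's mechanism. The "hypotheses" are two arithmetic moves toward a target number. The evaluator discards any branch that has overshot the target or run out of steps. The move set and function names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple

# Candidate next steps ("hypotheses"). Both are non-decreasing for
# non-negative values, which is what makes the pruning rule below sound.
MOVES: List[Tuple[str, Callable[[int], int]]] = [
    ("+3", lambda v: v + 3),
    ("*2", lambda v: v * 2),
]


def search(value: int, target: int, budget: int, path: List[str]) -> Optional[List[str]]:
    """Depth-first search that evaluates every intermediate state before expanding it.

    Assumes a non-negative starting value.
    """
    if value == target:
        return path
    # Evaluate the hypothesis: once the value overshoots, no move can bring it
    # back down, and an exhausted budget means no more steps are allowed.
    if value > target or budget == 0:
        return None  # discard this path
    for name, move in MOVES:
        result = search(move(value), target, budget - 1, path + [name])
        if result is not None:
            return result
        # That branch failed; backtrack and try the next hypothesis.
    return None


print(search(1, 11, 4, []))  # ['+3', '*2', '+3'], i.e. 1 -> 4 -> 8 -> 11
print(search(1, 11, 2, []))  # None: no two-step path reaches 11
```

In a model-driven system, a learned or symbolic evaluator would presumably replace the overshoot test, and the model's ranked proposals would replace the fixed move list. The part this sketch illustrates is the control flow: expand, evaluate, prune, backtrack.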

This mirrors ongoing academic work in cognitive modeling, which suggests that the path to more general intelligence requires mimicking the structured, effortful processing humans use for novel or difficult tasks. It reinforces the view that fluency alone is not intelligence.

Corroboration Point 4: Evolving Beyond Chain-of-Thought

For a while, Chain-of-Thought (CoT) prompting, which asks the model to "think step by step," was the main driver of improved LLM reasoning. CoT is useful because it externalizes the model's intermediate steps. Those steps become visible to the user and available to the model itself as context for the rest of its answer. But CoT on its own is passive: it records a line of reasoning without checking it.

Aletheia suggests an advancement beyond standard CoT. Instead of just displaying the thoughts, the architecture appears to be building an active, iterative verification layer between the thought steps. This implies self-correction, where the model critiques its own preceding output and refines the path, rather than simply proceeding blindly.
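Below is a minimal sketch of such a critique-and-revise loop. It assumes the "thoughts" are a chain of arithmetic steps whose claimed values can be recomputed exactly. The draft generator, critic, and reviser are hypothetical stand-ins, and the generator's mistake is injected deliberately. The critic flags the first step that does not follow from its predecessor, and the reviser repairs only that step. The loop repeats until the chain is consistent or a revision budget runs out.

```python
from dataclasses import dataclass, replace
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Step:
    op: str       # "+" or "*"
    operand: int
    claimed: int  # the value the generator claims after this step


def compute(value: int, op: str, operand: int) -> int:
    return value + operand if op == "+" else value * operand


def sloppy_draft(start: int, ops: List[Tuple[str, int]]) -> List[Step]:
    """Hypothetical fast generator: fluent, but adds 1 after every multiplication."""
    steps, value = [], start
    for op, operand in ops:
        value = compute(value, op, operand) + (1 if op == "*" else 0)  # injected slip
        steps.append(Step(op, operand, value))
    return steps


def critique(start: int, steps: List[Step]) -> Optional[int]:
    """Return the index of the first step that does not follow from its predecessor."""
    prev = start
    for i, step in enumerate(steps):
        if compute(prev, step.op, step.operand) != step.claimed:
            return i
        prev = step.claimed
    return None


def revise(start: int, steps: List[Step], i: int) -> List[Step]:
    """Repair only the flagged step; later steps get re-checked next round."""
    prev = start if i == 0 else steps[i - 1].claimed
    fixed = replace(steps[i], claimed=compute(prev, steps[i].op, steps[i].operand))
    return steps[:i] + [fixed] + steps[i + 1:]


def solve(start: int, ops: List[Tuple[str, int]], max_revisions: int = 10) -> Tuple[int, int]:
    """Draft once, then critique and revise until the chain is consistent.

    Returns (final answer, number of revisions). Assumes ops is non-empty.
    """
    steps = sloppy_draft(start, ops)
    revisions = 0
    while (bad := critique(start, steps)) is not None:
        if revisions == max_revisions:
            raise RuntimeError("no self-consistent chain within the revision budget")
        steps = revise(start, steps, bad)
        revisions += 1
    return steps[-1].claimed, revisions


# Correct chain: 2 + 3 = 5, 5 * 4 = 20, 20 + 1 = 21. The draft claims 5, 21, 22.
print(solve(2, [("+", 3), ("*", 4), ("+", 1)]))  # (21, 2)
```

Repairing one step at a time is deliberately conservative. Fixing the second step reveals that the third was built on a wrong value, so the critic catches it on the next round instead of letting the error propagate silently.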

This focus on iterative refinement is what will unlock powerful AI agents capable of complex planning, debugging their own code, or conducting multi-stage scientific experiments that require constant course correction based on intermediary data feedback.

What This Means for the Future of AI and How It Will Be Used

The development trajectory suggested by Aletheia means we are moving from the era of the "Brilliant Intern" to the era of the "Reliable Consultant."

For Technical Audiences: Architectural Fusion

Technically, the future involves **architectural fusion**. We will see fewer monolithic end-to-end models and more modular systems where a fast generative model (the intuition) feeds into a slow, symbolic verification module (the logic). This hybrid approach offers the best of both worlds: the flexibility of neural networks married to the certainty of logical frameworks.

Engineers will need skills in both domains—understanding how to train large foundation models *and* how to design reliable constraint checkers or knowledge graphs that can interact with them seamlessly.
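On the engineering side, a "constraint checker" can be as plain as a function that validates a model's structured output against hard rules before anything downstream uses it. The sketch below assumes a hypothetical generator (not shown) that proposes room bookings as minutes since midnight. Instead of a bare pass/fail, the checker reports the violations it finds, so the report can be fed back to the generator or shown to a reviewer. The business-hours window and room names are invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List

OPEN, CLOSE = 9 * 60, 17 * 60  # hypothetical business hours, minutes since midnight


@dataclass
class Booking:
    room: str
    start: int  # minutes since midnight
    end: int


def check_schedule(bookings: List[Booking]) -> List[str]:
    """Return the constraint violations found (an empty list means the schedule passes)."""
    errors: List[str] = []
    for b in bookings:
        if b.end <= b.start:
            errors.append(f"{b.room}: non-positive duration ({b.start}-{b.end})")
        if b.start < OPEN or b.end > CLOSE:
            errors.append(f"{b.room}: outside business hours ({b.start}-{b.end})")
    # Bookings in the same room must not overlap. After sorting by start time,
    # any overlap implies an overlap between some adjacent pair, so checking
    # neighbours is enough to detect it.
    by_room: Dict[str, List[Booking]] = {}
    for b in bookings:
        by_room.setdefault(b.room, []).append(b)
    for room, items in by_room.items():
        items.sort(key=lambda b: b.start)
        for prev, cur in zip(items, items[1:]):
            if cur.start < prev.end:
                errors.append(
                    f"{room}: overlap between {prev.start}-{prev.end} and {cur.start}-{cur.end}"
                )
    return errors


# A schedule as a (hypothetical) generator might propose it.
proposal = [
    Booking("A", 600, 660),    # 10:00-11:00
    Booking("A", 630, 690),    # 10:30-11:30, clashes with the first booking
    Booking("B", 1000, 1080),  # 16:40-18:00, runs past closing time
]
for problem in check_schedule(proposal):
    print(problem)
# B: outside business hours (1000-1080)
# A: overlap between 600-660 and 630-690
```

Returning a list of concrete violations, rather than a single boolean, gives the generator something specific to revise against.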

For Business Leaders: The Trust Dividend

For business leaders, the implication is a massive increase in the applicability of AI in regulated or high-risk fields. If an AI system can produce an answer and provide a machine-checkable justification (a "proof"), adoption barriers fall dramatically.

Consider financial modeling, legal discovery, or advanced manufacturing diagnostics. Today, human experts must spend significant time auditing AI suggestions. Systems designed with deliberate "slow thinking" layers should substantially reduce this audit burden, accelerating deployment timelines and reducing liability risks. The Trust Dividend, the financial benefit derived from trusted AI, will become a key competitive differentiator.

For Society: The Path to Explainability

Societally, this addresses the "black box" problem. While perfect explainability remains an elusive goal, introducing mandatory "slow steps" forces transparency. If an AI's decision-making process is broken down into verifiable logical segments, it becomes far easier to pinpoint bias, error, or unfairness.

This move toward deliberate reasoning is fundamentally aligned with ethical AI principles, making AI systems more accountable to human oversight and democratic values.

Practical Implications and Actionable Insights

To prepare for this next phase of AI development, organizations should focus on three actionable areas:

- Audit for Reasoning Gaps, Not Just Fluency: When evaluating LLMs or agent systems, move beyond simply testing the quality of the final answer. Design stress tests that require multi-step planning, constraint satisfaction, and logical deduction. Where does the model fail? Does it fail by guessing (System 1 error) or by failing to follow its own stated plan (System 2 deficiency)?

- Invest in Modular Design: Start thinking about AI deployment not as a single model call, but as a pipeline involving several specialized components. Can you swap out a fast inference engine for a slower, but highly verifiable, reasoning module when the task demands higher accuracy (e.g., financial reporting vs. creative brainstorming)?

- Embrace Neuro-Symbolic Tooling: Keep a close watch on open-source and proprietary frameworks that explicitly bridge symbolic reasoning (like Prolog, SAT solvers, or knowledge graphs) with transformer architectures. These tools will form the backbone of the next generation of robust AI applications.
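The second point, choosing between a fast engine and a slower, verifiable one depending on the task, can be sketched as a small router. Everything here is a hypothetical stand-in. `fast_engine` plays the quick model call, `careful_engine` plays the slower path, and `check` is an independent verifier that is much cheaper than solving. Sorting is used only because its answers are trivially checkable. What matters is the shape of the pipeline, not the task.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Result:
    answer: List[int]
    verified: bool
    engine: str


def fast_engine(xs: List[int]) -> List[int]:
    """Hypothetical quick-but-sloppy engine: one bubble-sort pass, often incomplete."""
    ys = list(xs)
    for i in range(len(ys) - 1):
        if ys[i] > ys[i + 1]:
            ys[i], ys[i + 1] = ys[i + 1], ys[i]
    return ys


def careful_engine(xs: List[int]) -> List[int]:
    """Hypothetical slower, more reliable engine."""
    return sorted(xs)


def check(task: List[int], answer: List[int]) -> bool:
    """Independent verifier: same items as the input, in non-decreasing order."""
    same_items = Counter(task) == Counter(answer)
    ordered = all(a <= b for a, b in zip(answer, answer[1:]))
    return same_items and ordered


def route(task: List[int], high_stakes: bool) -> Result:
    if not high_stakes:
        # e.g. creative brainstorming: speed matters more than guarantees.
        return Result(fast_engine(task), verified=False, engine="fast")
    # e.g. financial reporting: try the cheap path, but accept it only if it verifies.
    answer = fast_engine(task)
    if check(task, answer):
        return Result(answer, verified=True, engine="fast")
    answer = careful_engine(task)
    if check(task, answer):
        return Result(answer, verified=True, engine="careful")
    raise RuntimeError("no candidate passed verification; escalate to a human reviewer")


print(route([3, 2, 1], high_stakes=False))  # Result(answer=[2, 1, 3], verified=False, engine='fast')
print(route([3, 2, 1], high_stakes=True))   # Result(answer=[1, 2, 3], verified=True, engine='careful')
```

Trying the fast path first, even for high-stakes tasks, keeps costs down when the cheap answer happens to be right. The verifier, not the engine, decides what is trusted.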

Conclusion: The Wisdom of Pausing

The emergence of architectures like DeepMind’s Aletheia signals a maturation in the field of AI. We are recognizing that processing power without verifiable understanding is merely sophisticated mimicry. The real breakthrough into reliable, useful, and safe Artificial General Intelligence will come not from ever more speed and scale alone, but from teaching our models the wisdom of pausing, reflecting, and proving their work.

The future of AI won't just be fast; it will be deliberately, measurably, and reliably reasoned. The shift to "slow thinking" is the most exciting indicator yet that we are on the right path toward building machines we can truly trust.