The Next Frontier: Why Fei-Fei Li's $1 Billion Bet on Spatial Intelligence Signals AGI's Physical Awakening

The artificial intelligence landscape has been dominated for the last few years by the mesmerizing capabilities of Large Language Models (LLMs). These systems, masters of text, code, and 2D imagery, have captured the public and commercial imagination. However, a quiet but profound consensus has been forming among the world's leading AI minds: *true intelligence requires a body and an understanding of the physical world.*

This consensus just received a billion-dollar validation. The news that Fei-Fei Li’s startup, World Labs, secured a staggering $1 billion in financing to pursue "spatial intelligence" is not just a financial headline; it is a strategic marker indicating the next major axis of the AI revolution. This investment signals a decisive move away from purely digital cognition toward embodied intelligence—AI that can perceive, reason about, and interact robustly within our three-dimensional reality.

The LLM Ceiling: Why Text Isn't Enough

To understand the magnitude of this shift, we must first look at the limitations of the current state-of-the-art. Models like GPT-4 are incredibly fluent, but they lack fundamental intuition about physics, cause-and-effect, and object permanence—the basic knowledge a toddler gains just by playing with blocks.

For an AI, knowing the word "cup" is easy; knowing that the liquid inside will spill if you tip the cup requires an understanding of spatial relationships and physics. Current LLMs learn from flat representations of the world scraped from the internet: text and 2D images. They can write an essay about fixing a leaky faucet, but they cannot safely apply a wrench to the pipe.

Dr. Li, a pioneer in computer vision and a leading voice in AI ethics and research, is championing the solution: Spatial Intelligence. This means training AI not just on what things look like or what words describe them, but how they exist and behave in space and time.

The Core Technology: World Models

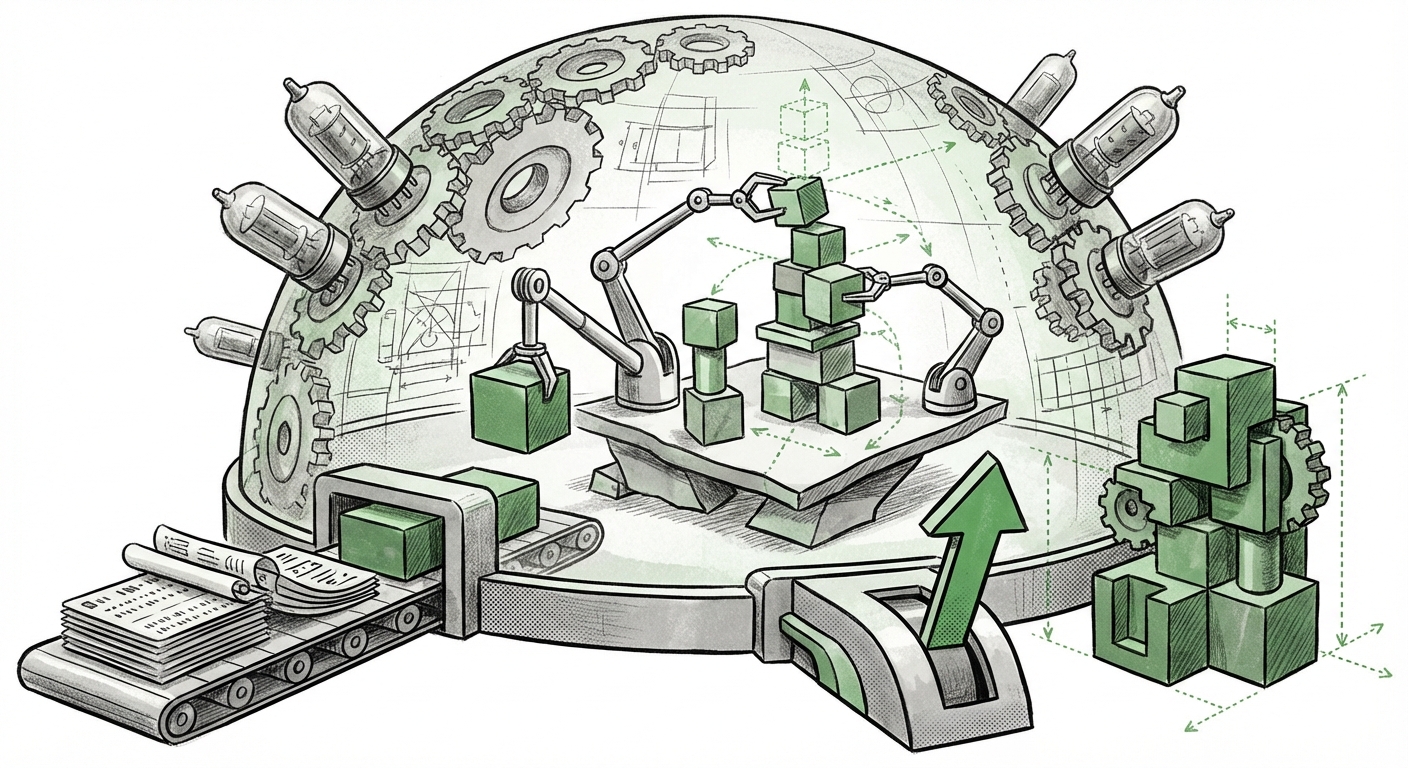

The mechanism World Labs is targeting is the concept of World Models. This is where the technical deep dive begins.

Imagine you are planning a chess move. You don't just look at the current board; you internally simulate several possible next moves, predicting your opponent’s reactions, and visualizing the board state three moves ahead. This internal, predictive simulation is a simple form of a World Model.

For AI, World Models are algorithms designed to build an internal, compressed, and predictive simulation of the environment they are observing. This allows the AI to:

- Predict Outcomes: If I push this object, where will it go?

- Plan Efficiently: What sequence of actions leads to the goal state?

- Handle Novelty: Generalize understanding to new scenarios not seen in training data, by relying on its internal physics engine.
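The three capabilities above can be made concrete with a toy example. The following is a minimal sketch, not World Labs' method: it assumes a one-dimensional world in which pushing an object moves it by an unknown amount, fits a one-parameter model from observed pushes, and then plans by imagining candidate push sequences inside that model. The names `true_environment`, `LinearWorldModel`, and `plan`, along with the 0.8 slide factor, are hypothetical and chosen only for illustration.

```python
import random


def true_environment(position, push):
    """Hidden ground truth (assumed for this sketch): the object slides 0.8 units per unit of push."""
    return position + 0.8 * push


class LinearWorldModel:
    """Learns next_position ~= position + gain * push from observed transitions."""

    def __init__(self):
        self.gain = 0.0

    def fit(self, transitions):
        # One-parameter least squares: gain = sum(push * displacement) / sum(push^2)
        num = sum(push * (nxt - pos) for pos, push, nxt in transitions)
        den = sum(push * push for _, push, _ in transitions)
        self.gain = num / den

    def predict(self, position, push):
        # "Predict outcomes": if I push this object, where will it go?
        return position + self.gain * push


def plan(model, start, goal, horizon=3, candidates=200, rng=None):
    """Random-shooting planner: roll out random push sequences in the model, keep the best."""
    rng = rng or random.Random(0)
    best_seq, best_err = None, float("inf")
    for _ in range(candidates):
        seq = [rng.uniform(-1.0, 1.0) for _ in range(horizon)]
        pos = start
        for push in seq:
            pos = model.predict(pos, push)  # imagined step, no real-world interaction
        err = abs(goal - pos)
        if err < best_err:
            best_seq, best_err = seq, err
    return best_seq


# Collect experience by observing random pushes in the real environment.
rng = random.Random(42)
transitions = []
for _ in range(50):
    pos = rng.uniform(-5.0, 5.0)
    push = rng.uniform(-1.0, 1.0)
    transitions.append((pos, push, true_environment(pos, push)))

model = LinearWorldModel()
model.fit(transitions)

# Plan in imagination, then execute the chosen pushes in the real environment.
planned = plan(model, start=0.0, goal=1.5, rng=rng)
final = 0.0
for push in planned:
    final = true_environment(final, push)

print(f"learned gain: {model.gain:.3f}, final position: {final:.3f}")
```

Real world models replace the single learned number with a neural network over images or 3D scenes, and random shooting with far more efficient planners, but the loop is the same: observe, fit a predictive model, and search over imagined futures before acting.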

Major labs such as Meta and DeepMind are giving this concept significant attention. World Labs' massive funding suggests its backers believe the company has a shortcut or a superior architecture for scaling this predictive reasoning into comprehensive spatial models.

Corroboration: A Market Moving Toward Embodiment

The $1 billion raise for World Labs is an outlier, but it sits squarely within a rapidly accelerating market trend: the commercialization of physical AI. The focus is shifting from "Chatbots" to "Do-Bots."

Recent funding rounds for embodied AI and robotics startups show a clear pattern. Capital is flowing toward companies that can bridge the digital-physical gap. Companies building humanoid robots, advanced warehouse automation, and sophisticated autonomous vehicles are securing valuations based on their ability to translate complex digital reasoning into reliable physical action. World Labs is positioning itself to provide the brain for these future physical agents.

This is a departure from previous investment cycles that heavily favored pure software, driven by the low cost and scalability of cloud computing. Spatial AI, by its very nature, requires massive amounts of complex, real-time sensory data—visual, tactile, and kinetic. The market is signaling that the next multi-trillion-dollar application space is not just organizing data on a server, but organizing matter in the real world.

The Visionary Fuel: Why Dr. Li Matters

In AI, funding often follows credibility. Fei-Fei Li is not merely a successful entrepreneur; she is one of the foundational figures in modern computer vision, having led the creation of ImageNet, the dataset that powered the deep learning revolution. Her strategic pivot is highly informative.

When a titan shifts focus, it indicates that the preceding paradigm has reached its inflection point. In interviews, Dr. Li has often argued that perception (seeing and understanding the world) is the bedrock upon which true intelligence is built. Her move suggests that while LLMs have mastered the *symbolic* layer of intelligence, they are profoundly weak on the *grounded* layer. World Labs aims to solve the grounded layer, providing the physics and geometry that LLMs inherently lack.

Implications: Reshaping Industry and AGI Pathways

The pursuit of spatial intelligence has sweeping implications across technology, business, and the philosophical quest for Artificial General Intelligence (AGI).

1. The Acceleration of True Robotics

This is the most immediate impact. Robotics today is often brittle; systems excel only in highly constrained, pre-programmed environments (like a fixed assembly line). The goal of general-purpose robots—ones that can clean a messy kitchen, assist the elderly in a dynamic home, or work on an unstructured construction site—requires the ability to navigate uncertainty.

Spatial intelligence is meant to provide that robustness. An AI running a World Model could look at a pile of laundry, infer the geometry of the fabric, predict how the cloth will drape under gravity after each fold, and devise a sequence of grasps to fold it correctly, all without needing millions of pre-labeled examples of folded clothes. This would dramatically lower the barrier to deployment for advanced physical automation.

2. A Re-evaluation of AGI Timelines

Many experts believed AGI would arrive by scaling up language models until complexity led to emergent understanding. The World Labs investment proposes a competing, or perhaps necessary and complementary, path: AGI requires a physical anchor.

If World Models succeed in creating robust internal simulations, AI agents could practice complex tasks (surgery, advanced engineering, or geological surveying, for example) millions of times in a virtual environment before ever touching the real world. This ability to internalize and rehearse reality could sharply shorten the timeline for achieving human-level competence in physical domains. The competitive landscape is no longer just about who has the biggest language model, but about who can build the most accurate internal simulator.

3. Redefining Enterprise Data Processing

While robotics is the obvious beneficiary, spatial AI will transform how businesses handle physical assets. Think of massive logistics centers, infrastructure management, or agriculture:

- Digital Twins: Companies will move beyond simple 3D models to active, predictive digital twins powered by World Models, allowing them to stress-test supply chains or simulate urban development changes before committing physical resources.

- Advanced Perception: Security systems, medical imaging, and autonomous driving will gain superior contextual awareness—understanding not just what is in the scene, but what will happen next in that scene.

Actionable Insights for Industry Leaders

For businesses watching the AI race, World Labs' massive raise serves as a clear directive. Focusing solely on leveraging text-based LLMs is now an incomplete strategy.

For Technology Leaders: Embrace Embodiment Now

If your core business involves interacting with the physical world—manufacturing, logistics, energy, or construction—you must begin prioritizing data streams that feed spatial reasoning:

- Invest in 3D Data Pipelines: Move beyond standard 2D photography and explore LiDAR, photogrammetry, and structured 3D scanning to build environments your AI can learn from spatially.

- Pilot World Model Integrations: Begin experimenting with foundational models designed for simulation (even if proprietary or academic for now) to build internal digital twins of your most complex operations.

- Hire Cross-Domain Talent: The future AI engineer needs to understand machine learning and principles of physics, geometry, or traditional robotics kinematics.
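As a concrete taste of what a 3D data pipeline involves, the sketch below back-projects a depth image into 3D points using the standard pinhole camera model. It is a minimal illustration under stated assumptions: the function name `depth_to_points` is hypothetical, and the tiny depth grid and the intrinsics `fx`, `fy`, `cx`, `cy` are made-up values rather than the parameters of any real sensor.

```python
def depth_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth image (rows of depths in metres) into camera-frame 3D points.

    Pinhole model: a pixel (u, v) with depth z maps to
    x = (u - cx) * z / fx,  y = (v - cy) * z / fy.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:  # zero or negative depth marks a missing measurement
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


# Hypothetical 2x3 depth image with one missing pixel (0.0).
depth = [
    [2.0, 2.0, 0.0],
    [2.0, 1.0, 2.0],
]
points = depth_to_points(depth, fx=1.0, fy=1.0, cx=1.0, cy=0.5)
print(points)
```

A production pipeline would read intrinsics from sensor calibration, work on arrays rather than nested lists, and fuse many frames into a single scene, but each frame starts with this same geometric step.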

For Investors: Look Beyond the Cloud

Venture capital sentiment is shifting. While software models remain valuable, the highest long-term value creation may now lie in companies that successfully ground AI in the physical world. The size of World Labs' raise sets a new benchmark for the scale of technical leap investors are prepared to fund.

Conclusion: From Digital Oracle to Physical Agent

The pursuit of spatial intelligence represents the maturation of the AI field. We are graduating from AI systems that can talk like humans to systems that can act like competent agents in the world they inhabit. Fei-Fei Li and World Labs are betting a billion dollars that the path to true AGI runs directly through 3D space, time, and physics.

This development doesn't invalidate the progress made by LLMs; rather, it proposes the necessary architecture to plug that digital brain into a functional body. The era of the purely conversational AI is drawing to a close, making way for the era of the physically aware, problem-solving AI—an era that promises to revolutionize everything from the factory floor to the home.