The GPU Scaling Wars: How Multi-GPU Economics Define the Future of LLMs

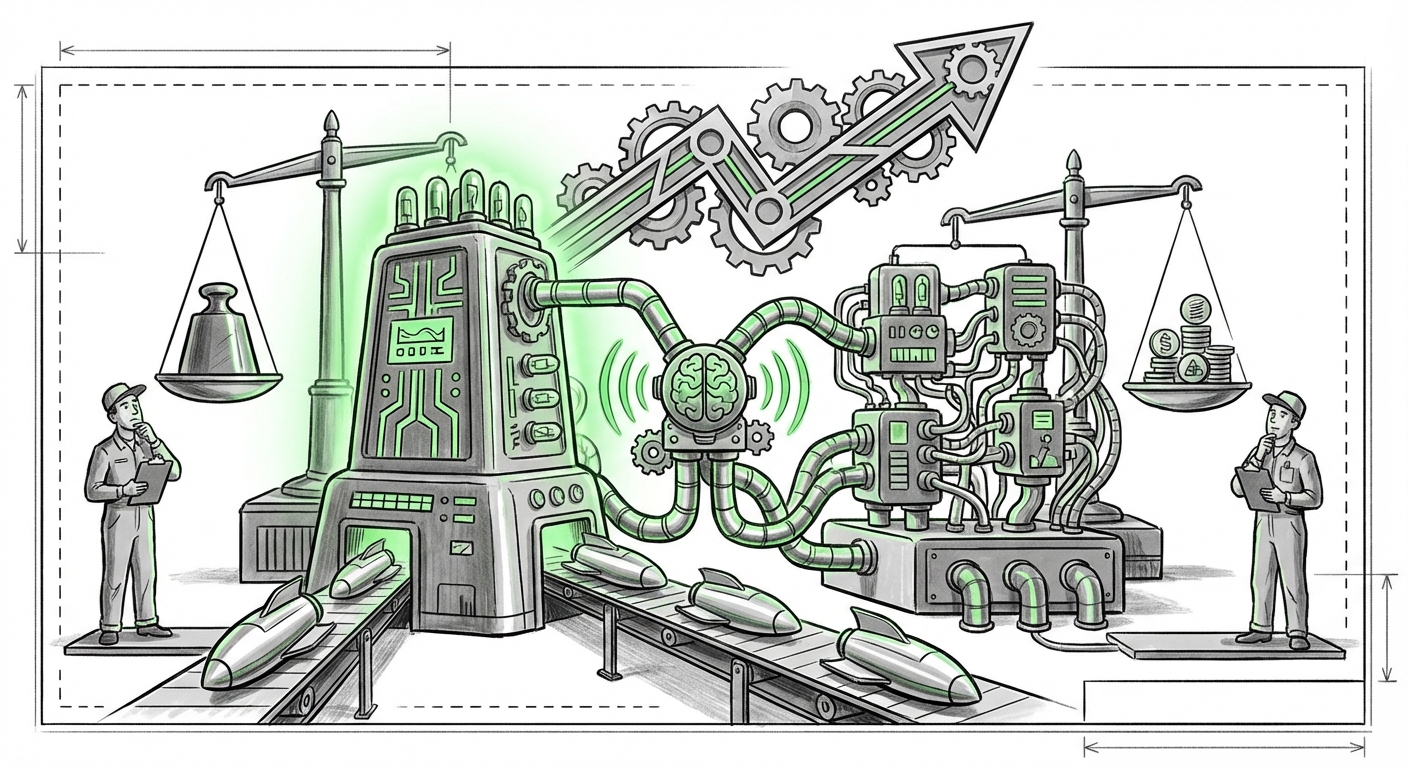

The age of Generative AI is not defined by algorithms alone; it is fundamentally constrained by the availability, cost, and architecture of the hardware that runs them. Training and deploying the massive Large Language Models (LLMs) that power everything from personalized chatbots to advanced scientific discovery requires staggering computational power. At the heart of this immense demand lies a critical, often frustrating, engineering decision: **How do we effectively combine processing units?**

Recent deep dives, such as analyses comparing the economics of single-GPU versus multi-GPU scaling using hardware like the AMD MI355X, illuminate this infrastructure battleground. This isn't just about having the fastest chip; it’s about achieving the best **Total Cost of Ownership (TCO)** for models that may contain trillions of parameters. As AI analysts, we must synthesize these hardware specifics with broader market context to predict where this industry is headed.

The Hardware Divide: AMD’s Challenge and NVIDIA’s Standard

The analysis that prompted this piece centers on the AMD MI355X, a sign of a maturing market in which competition is heating up beyond the traditional leader. For years, the narrative was simple: NVIDIA dominated, and its proprietary interconnect, **NVLink**, was the golden ticket for the high-performance multi-GPU scaling required to train the largest models.

However, proprietary dominance often leads to high costs and vendor lock-in, and the value of an analysis focused on AMD hardware lies in its exploration of alternatives. The benchmark AMD must meet is NVIDIA's H100 NVLink scaling performance for LLM training. NVIDIA's strategy relies on dense, tightly integrated systems (like the DGX SuperPOD) in which GPUs exchange data over links far faster than standard PCIe: NVIDIA quotes 900 GB/s of NVLink bandwidth per H100 SXM, versus 128 GB/s for a PCIe Gen5 x16 connection. That bandwidth minimizes the time spent waiting for data, which is critical when every training step of a large LLM is split across dozens or hundreds of processors.
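To see why link bandwidth matters, a rough, bandwidth-only estimate of gradient synchronization in data-parallel training helps. The sketch below uses the standard ring all-reduce cost, in which each GPU sends about 2(N-1)/N times the gradient buffer. The function name, the 7B-parameter job, and the per-direction bandwidths (about 450 GB/s for H100 NVLink and 64 GB/s for PCIe Gen5 x16, i.e. half the bidirectional figures) are illustrative assumptions; latency, topology, and overlap with compute are ignored.

```python
def ring_allreduce_seconds(num_params: float, bytes_per_grad: int,
                           num_gpus: int, link_gb_per_s: float) -> float:
    """Bandwidth-only estimate of one ring all-reduce over all gradients.

    Each GPU sends (and receives) 2 * (N - 1) / N times the buffer size.
    link_gb_per_s is per-direction bandwidth in GB/s; latency, topology and
    overlap with compute are ignored, so treat the result as a lower bound.
    """
    buffer_bytes = num_params * bytes_per_grad
    traffic_bytes = 2 * (num_gpus - 1) / num_gpus * buffer_bytes
    return traffic_bytes / (link_gb_per_s * 1e9)


if __name__ == "__main__":
    # Hypothetical job: 7B parameters, bf16 gradients (2 bytes), 8 GPUs.
    for name, bandwidth in [("NVLink-class link", 450.0), ("PCIe Gen5 x16", 64.0)]:
        seconds = ring_allreduce_seconds(7e9, 2, 8, bandwidth)
        print(f"{name}: {seconds * 1e3:.0f} ms per gradient all-reduce")
```

Under these assumptions the PCIe path takes roughly seven times longer per synchronization, time the GPUs may spend idle unless the framework overlaps it with computation.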

For data center managers and cloud architects, the choice is stark: Do they pay a premium for the known, highly optimized scaling path provided by NVIDIA's ecosystem, or do they invest in a potentially lower-cost alternative like the MI355X, which might require more engineering effort to achieve comparable scaling efficiency?

Practical Implication for Infrastructure Engineers:

If an organization needs to train a cutting-edge 1-trillion-parameter model from scratch, the premium paid for NVLink-enabled multi-GPU setups often translates into faster time-to-market, justifying the higher initial capital expenditure (CAPEX). For inference—where the model is already trained and simply running for users—the economics shift, and the cost-per-query of a single, powerful card might look more appealing, provided the model fits entirely in its memory.
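Whether a model "fits entirely in memory" can be estimated before buying anything. The sketch below compares weight size, plus an assumed 20% reserve for KV cache and activations, against a card's memory. The 80 GB figure matches the H100 SXM and 288 GB is the HBM capacity AMD quotes for the MI355X; the function name and the reserve fraction are illustrative assumptions.

```python
def fits_on_one_gpu(num_params: float, bytes_per_param: float,
                    gpu_memory_gb: float, reserve_fraction: float = 0.2) -> bool:
    """Rough single-card fit check for inference.

    Weights take num_params * bytes_per_param; reserve_fraction is an assumed
    headroom for KV cache and activations, which in reality grows with batch
    size and context length.
    """
    weights_gb = num_params * bytes_per_param / 1e9
    return weights_gb * (1 + reserve_fraction) <= gpu_memory_gb


if __name__ == "__main__":
    # A 70B-parameter model at 16-bit (2 bytes) and 4-bit (0.5 bytes) precision.
    for bytes_per_param in (2, 0.5):
        for memory_gb in (80, 288):
            ok = fits_on_one_gpu(70e9, bytes_per_param, memory_gb)
            print(f"{bytes_per_param} B/param on {memory_gb} GB: {'fits' if ok else 'does not fit'}")
```

By this estimate a 16-bit 70B model needs multi-GPU serving on an 80 GB card but not on a 288 GB one, while quantizing to 4 bits brings it within reach of either.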

The Software Layer: Where Efficiency Is Really Forged

The best hardware in the world is useless if the software cannot coordinate it, which makes software perhaps the most democratizing factor in the scaling debate. Even the fastest chips can end up running at half speed if they spend as much time exchanging data as they do computing.
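The "half speed" figure follows from simple arithmetic: if communication that cannot be hidden takes as long as the computation, only half of each step does useful work. The sketch below is a simplified model that ignores stragglers and memory stalls; the function and its overlap parameter are illustrative, not taken from any particular framework.

```python
def scaling_efficiency(compute_s: float, comm_s: float,
                       overlap_fraction: float = 0.0) -> float:
    """Fraction of each training step spent on useful computation.

    overlap_fraction is the share of communication the software manages to
    run concurrently with compute (0 = fully serialized, 1 = fully overlapped).
    Hidden communication can never exceed the compute time it hides behind.
    """
    if not 0.0 <= overlap_fraction <= 1.0:
        raise ValueError("overlap_fraction must be between 0 and 1")
    hidden_s = min(comm_s * overlap_fraction, compute_s)
    exposed_s = comm_s - hidden_s
    return compute_s / (compute_s + exposed_s)


if __name__ == "__main__":
    # Hypothetical step: 100 ms of compute and 100 ms of communication.
    for overlap in (0.0, 0.5, 0.8):
        print(f"overlap {overlap:.0%}: efficiency {scaling_efficiency(0.1, 0.1, overlap):.0%}")
```

Better overlap, lower communication volume, or faster links all push efficiency toward 100%, which is why software quality shows up directly in hardware utilization.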

This brings us to software optimizations such as Microsoft's **DeepSpeed** library and its **ZeRO** optimizer, and their impact on multi-GPU memory utilization. For AI/ML developers, these tools are lifesavers. They allow models to be broken down into smaller pieces and distributed across numerous GPUs, including GPUs connected by slower standard links (like PCIe or commodity Ethernet), rather than relying solely on ultra-fast proprietary interconnects.

ZeRO (Zero Redundancy Optimizer), for instance, partitions model states across the available GPUs in stages: stage 1 partitions the optimizer states, stage 2 also partitions the gradients, and stage 3 also partitions the parameters. Instead of every GPU holding a full, redundant copy of these states, which wastes an enormous amount of memory, each GPU holds only a fraction. This software technique directly improves the economic viability of multi-GPU setups built with less premium hardware.
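The savings can be quantified with the accounting from the original ZeRO paper: with mixed-precision Adam, each parameter costs 2 bytes of 16-bit weights, 2 bytes of gradients, and 12 bytes of optimizer state (fp32 master weights, momentum, and variance). The sketch below applies that model to model states only; activations, temporary buffers, and fragmentation are excluded, and the function name is hypothetical.

```python
def zero_model_state_gb(num_params: float, num_gpus: int, stage: int,
                        optimizer_bytes: int = 12) -> float:
    """Per-GPU memory (GB) for model states under ZeRO stages 0-3.

    Assumes mixed-precision Adam: 2 bytes/param for 16-bit weights, 2 bytes
    for gradients, and `optimizer_bytes` for optimizer state.
    """
    params = 2 * num_params
    grads = 2 * num_params
    optim = optimizer_bytes * num_params
    n = num_gpus
    if stage == 0:    # plain data parallelism: full copy on every GPU
        total = params + grads + optim
    elif stage == 1:  # optimizer states partitioned
        total = params + grads + optim / n
    elif stage == 2:  # optimizer states and gradients partitioned
        total = params + (grads + optim) / n
    elif stage == 3:  # optimizer states, gradients and parameters partitioned
        total = (params + grads + optim) / n
    else:
        raise ValueError("stage must be 0, 1, 2 or 3")
    return total / 1e9


if __name__ == "__main__":
    # Hypothetical 7.5B-parameter model trained on 64 GPUs.
    for stage in range(4):
        print(f"ZeRO stage {stage}: {zero_model_state_gb(7.5e9, 64, stage):.1f} GB per GPU")
```

For this example the per-GPU footprint falls from 120 GB with plain data parallelism to under 2 GB at stage 3, which is what lets GPUs with less memory participate. The trade-off is extra communication: stage 3 must gather parameters on demand during the forward and backward passes.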

Practical Implication for Developers:

The barrier to entry for multi-GPU setups is falling. Developers skilled in these optimization techniques can potentially achieve something like 80% of the performance of a fully integrated, proprietary cluster at 50% of the hardware cost, although the real ratio depends heavily on model size, interconnect, and workload. This empowers smaller labs and mid-sized enterprises to tackle larger models than was previously possible.
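For developers who want to try this, enabling ZeRO in DeepSpeed is largely a configuration change. The dictionary below is a minimal sketch using documented DeepSpeed configuration keys; the batch sizes, learning rate, and CPU offload choice are placeholders to tune for a real job, not recommended values.

```python
import json

# Minimal DeepSpeed configuration enabling ZeRO stage 3 (placeholder values).
# DeepSpeed derives train_batch_size from micro-batch x accumulation x world size.
ds_config = {
    "train_micro_batch_size_per_gpu": 4,
    "gradient_accumulation_steps": 8,
    "optimizer": {"type": "AdamW", "params": {"lr": 1e-4}},
    "bf16": {"enabled": True},
    "zero_optimization": {
        "stage": 3,                              # partition params, grads, optimizer states
        "overlap_comm": True,                    # overlap collectives with computation
        "contiguous_gradients": True,            # reduce memory fragmentation
        "offload_optimizer": {"device": "cpu"},  # optional: trade host traffic for GPU memory
    },
}

if __name__ == "__main__":
    # Print as JSON, e.g. to save as ds_config.json for the deepspeed launcher.
    print(json.dumps(ds_config, indent=2))
```

In a training script the same dictionary can be passed as `deepspeed.initialize(model=model, model_parameters=model.parameters(), config=ds_config)`; switching between ZeRO stages usually requires little or no change to the model definition itself.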

The Cold Hard Facts: TCO and the Compute Budget

Technology specifications look great on paper, but business leaders care about the bottom line. The discussion around the MI355X vs. an H100 unit is ultimately a debate over **Total Cost of Ownership (TCO)** for large-scale AI training in 2024 and beyond.

When analysts assess TCO for large-scale AI model training, they must look beyond the initial purchase price. TCO includes power consumption, cooling requirements, data center floor space, maintenance staff, and, most importantly, the utilization rate of the hardware.

Assuming comparable peak throughput, a $100,000 system that runs at 95% utilization around the clock is economically superior to a $60,000 system that reaches only 40% utilization because of software bottlenecks or memory limitations: per hour of useful work, the cheaper system actually costs more. The economic reality is that maximizing throughput, meaning the most training work completed per dollar spent on electricity and hardware, is the ultimate goal.
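The comparison can be made explicit by dividing total cost by the hours of useful work delivered. The sketch assumes straight-line depreciation over three years, both systems delivering the same throughput per productive hour, and optional power billed around the clock; cooling, space, and staff are left out for brevity, and the function name is illustrative.

```python
HOURS_PER_YEAR = 24 * 365


def cost_per_useful_hour(capex_usd: float, utilization: float, years: float = 3.0,
                         power_kw: float = 0.0, usd_per_kwh: float = 0.0) -> float:
    """Amortized cost of one productive system-hour.

    Power is charged for every hour the system is on, busy or idle; only
    utilization * total hours count as productive.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    hours = years * HOURS_PER_YEAR
    total_usd = capex_usd + power_kw * hours * usd_per_kwh
    return total_usd / (hours * utilization)


if __name__ == "__main__":
    busy = cost_per_useful_hour(100_000, 0.95)
    idle = cost_per_useful_hour(60_000, 0.40)
    print(f"$100k @ 95%: ${busy:.2f}/useful hour; $60k @ 40%: ${idle:.2f}/useful hour")
```

Adding identical power draw to both systems only widens the gap, because the underutilized system pays for electricity during hours that produce nothing.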

Practical Implication for Business Leaders (CIOs/CTOs):

The procurement strategy for AI hardware is shifting from a simple "buy the fastest" mentality to a complex optimization problem. For cloud providers, this might mean favoring commodity hardware coupled with excellent software abstraction. For large enterprises building private clusters, the decision rests on long-term depreciation and on how predictable their workloads are. If your workload is predictable (e.g., running the same LLM fine-tuning job daily), investing in dedicated hardware makes sense. If it is variable, flexible cloud infrastructure with diverse GPU options becomes more attractive.
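The predictable-versus-variable rule of thumb reduces to a break-even utilization: owning wins when the hardware would be busy for more hours than the annual cost of ownership buys in on-demand cloud time. Both inputs in this sketch are assumptions the reader supplies; the function name and example prices are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def break_even_utilization(owned_cost_per_year: float, cloud_usd_per_hour: float) -> float:
    """Share of the year the capacity must be busy for ownership to be cheaper.

    owned_cost_per_year should include amortized CAPEX plus power, cooling,
    space and staff; cloud_usd_per_hour is the rental price of comparable
    capacity. A result above 1.0 means renting is always cheaper.
    """
    return owned_cost_per_year / (cloud_usd_per_hour * HOURS_PER_YEAR)


if __name__ == "__main__":
    # Hypothetical: $200k/year to own vs. $40/hour to rent equivalent capacity.
    threshold = break_even_utilization(200_000, 40.0)
    print(f"Owning pays off above {threshold:.0%} utilization")
```

A steady daily fine-tuning pipeline can plausibly clear such a threshold; bursty experimentation often cannot.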

The Horizon: Accelerators Disrupting the GPU Hegemony

The current obsession with maximizing GPU clusters is a necessary evil of today’s technology. But what happens when the specialized hardware landscape evolves? The final piece of the picture is the rise of **next-generation AI accelerators as an alternative to GPU scaling for generative AI inference**.

The industry recognizes that GPUs, originally built for graphics, are powerful generalists. They excel at parallel tasks but may be overkill for the specific math that inference (running a trained model) requires. Companies are aggressively developing Application-Specific Integrated Circuits (ASICs) such as Google’s TPUs (which also serve training workloads) and specialized chips from startups like Groq, designed around fast matrix multiplication and the low-latency, sequential token generation that inference demands.
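One reason inference rewards different hardware is that token-by-token generation is often limited by memory bandwidth rather than arithmetic: at batch size 1, producing each token requires reading roughly every weight once. The back-of-envelope bound below captures that; it ignores KV-cache traffic and the batching that amortizes weight reads across requests. The 3.35 TB/s figure is NVIDIA's listed HBM bandwidth for the H100 SXM, and the function name is illustrative.

```python
def max_decode_tokens_per_s(num_params: float, bytes_per_param: float,
                            mem_bandwidth_tb_s: float) -> float:
    """Upper bound on single-stream decode speed for a memory-bound model.

    Each generated token streams (approximately) all weights from memory,
    so tokens/s <= bandwidth / weight bytes. Batching, KV-cache reads and
    compute limits are not modeled.
    """
    weight_bytes = num_params * bytes_per_param
    return mem_bandwidth_tb_s * 1e12 / weight_bytes


if __name__ == "__main__":
    # Hypothetical 8B model with 16-bit weights on a 3.35 TB/s accelerator.
    print(f"~{max_decode_tokens_per_s(8e9, 2, 3.35):.0f} tokens/s per stream, at best")
```

Hardware that offers more memory bandwidth per dollar, or keeps weights closer to the compute units, raises this ceiling directly, which is one opening specialized inference accelerators aim at.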

If a dedicated inference chip can serve requests at ten times the efficiency (and with lower power draw) of a top-tier GPU, the multi-GPU scaling debate becomes largely irrelevant for deployment. Why build a complex cluster of high-bandwidth GPUs when a bank of cheaper, specialized inference cards can handle the load at a fraction of the operational cost?
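Claims like "ten times the efficiency" are easiest to compare once normalized to cost per million generated tokens. The sketch below does that normalization; the hourly cost (amortized hardware, power, hosting) and sustained throughput are placeholders to replace with measured numbers, and the function name is illustrative.

```python
def usd_per_million_tokens(hourly_cost_usd: float, tokens_per_s: float) -> float:
    """Operating cost per one million generated tokens.

    hourly_cost_usd: all-in cost of running the hardware for an hour.
    tokens_per_s: sustained aggregate throughput across concurrent requests.
    """
    tokens_per_hour = tokens_per_s * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical: a GPU server vs. an accelerator with 10x throughput at the same cost.
    gpu = usd_per_million_tokens(hourly_cost_usd=30.0, tokens_per_s=2_000)
    asic = usd_per_million_tokens(hourly_cost_usd=30.0, tokens_per_s=20_000)
    print(f"GPU: ${gpu:.2f}/M tokens, accelerator: ${asic:.2f}/M tokens")
```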

Future Implication for Strategy:

The future AI architecture will likely be bifurcated:

- Training & Frontier Research: Remain reliant on massive, highly interconnected GPU clusters (NVIDIA/AMD heavy) due to the sheer complexity and need for unified memory access across enormous models.

- Deployment & Inference: Rapidly migrate toward specialized, custom ASICs that prioritize low latency and extreme power efficiency, fundamentally altering the scaling economics away from the traditional GPU model.

Actionable Insights for Navigating the Next AI Wave

For organizations planning their AI strategy over the next three years, understanding the interplay between hardware, software, and economics is paramount. Here are concrete actions derived from this multi-faceted analysis:

- Decouple Software from Silicon: Invest heavily in software engineers proficient in frameworks like PyTorch Distributed and DeepSpeed. A portable optimization strategy ensures that if a better hardware price point emerges (whether AMD, a new NVIDIA generation, or an ASIC), your existing models can be ported with minimal re-engineering effort.

- Segment Workloads by Goal: Clearly differentiate between Training workloads (where high-speed interconnects matter most) and Inference workloads (where TCO and power efficiency dominate). Do not tie up expensive training clusters serving simple chat responses.

- Pressure-Test Vendor Claims: Do not accept vendor benchmarks at face value. If considering a challenger like AMD, build rigorous proof-of-concept tests that mimic your actual model size and communication patterns before committing major capital. How well the hardware runs your existing software stack, not its peak specifications, is the true measure of its viability.

- Monitor the ASIC Landscape: Strategic planning must account for the possibility that general-purpose GPU scaling becomes obsolete for inference. Organizations should run small pilots on emerging accelerator platforms to understand latency gains and potential cost savings before those platforms become mainstream purchasing options.
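For the proof-of-concept tests recommended under "Pressure-Test Vendor Claims", one simple summary is scaling efficiency relative to the smallest configuration that fits the model. The helper below is a sketch; the throughput numbers are hypothetical, and it assumes a fixed per-GPU batch size (weak scaling) so that perfect scaling is linear.

```python
def measured_scaling_efficiency(throughput_by_gpus: dict[int, float]) -> dict[int, float]:
    """Scaling efficiency from measured throughput (tokens/s or samples/s).

    Efficiency at N GPUs = (throughput_N / throughput_base) / (N / base),
    where base is the smallest GPU count measured; 1.0 means linear scaling.
    """
    base_gpus = min(throughput_by_gpus)
    base_tput = throughput_by_gpus[base_gpus]
    return {
        n: (tput / base_tput) / (n / base_gpus)
        for n, tput in sorted(throughput_by_gpus.items())
    }


if __name__ == "__main__":
    # Hypothetical measurements from a vendor proof-of-concept cluster.
    runs = {8: 10_000.0, 16: 19_000.0, 64: 68_000.0}
    for gpus, eff in measured_scaling_efficiency(runs).items():
        print(f"{gpus:>3} GPUs: {eff:.0%} scaling efficiency")
```

Running the same measurement on each candidate platform, with your own model and sequence lengths, gives a like-for-like number that vendor peak figures cannot.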

The scaling dilemma—single versus multi-GPU—is a temporary phase dictated by the current state of large-scale transformer models. It is a technical puzzle being solved simultaneously by hardware manufacturers striving for faster links, and software developers finding clever ways to use existing links more intelligently. The true winner in this escalating arms race will be the entity that can most efficiently bridge the gap between bleeding-edge silicon and accessible, cost-effective software deployment.