The GPU Wars: Decoding AI Scaling Economics from Single Cards to Multi-Node Clusters

The artificial intelligence revolution is not just about smarter algorithms; it is fundamentally about computational brute force. As Large Language Models (LLMs) balloon past hundreds of billions of parameters, the question shifts from "Can we train this model?" to "Can we afford to train and run this model efficiently?" The hardware decision behind that question, specifically how many Graphics Processing Units (GPUs) to dedicate to the task, is now the central economic battleground in enterprise AI.

Recent deep dives, such as guides analyzing multi-GPU versus single-GPU scaling economics on the AMD MI355X, highlight this tension perfectly. They force us to look beyond raw clock speeds and into the nuanced trade-offs between immediate, contained power (single-GPU) and complex, massive parallelism (multi-GPU and multi-node clusters).

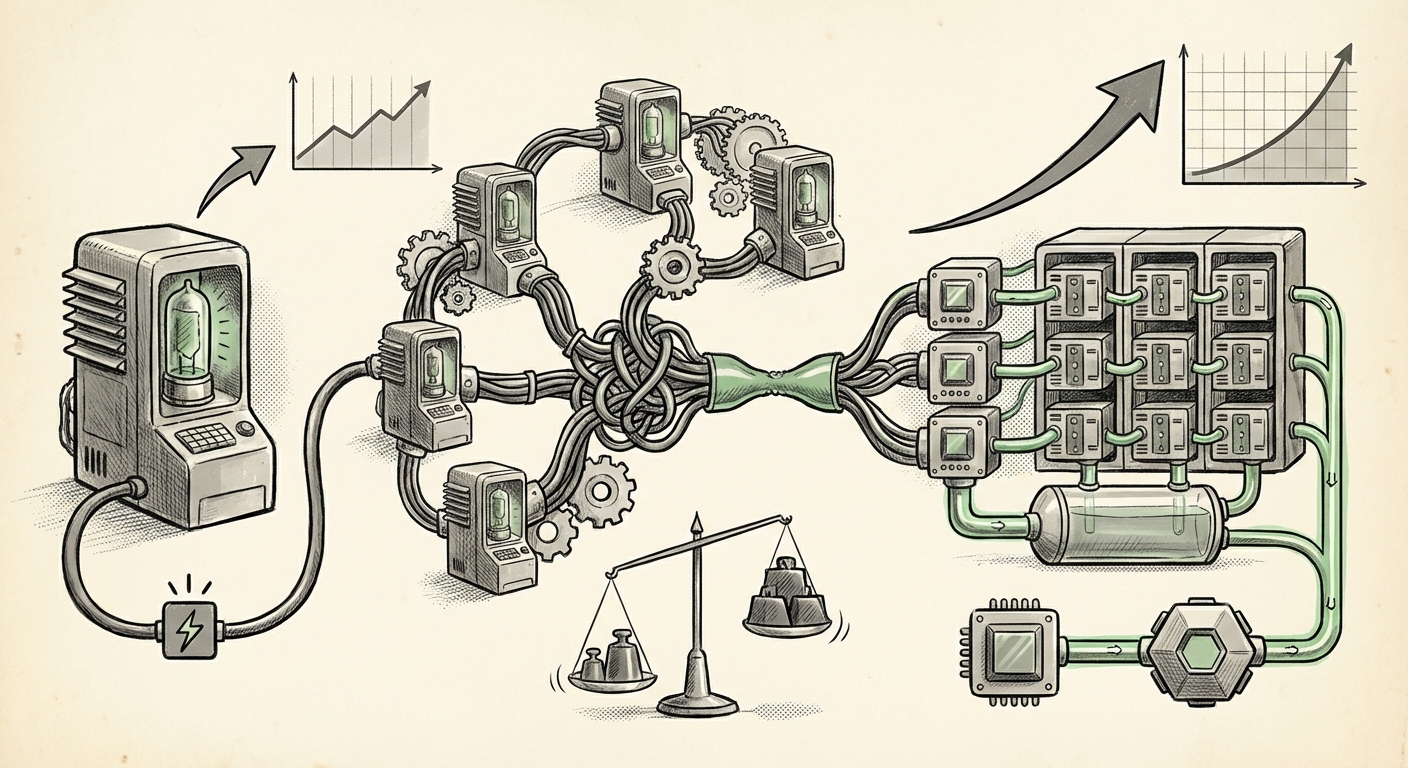

The Core Economic Trade-Off: Single vs. Scale

For many businesses beginning their AI journey, a single, powerful GPU (whether NVIDIA, AMD, or otherwise) is the starting point for smaller models or inference tasks. It offers simplicity, low latency, and minimal software headache.

However, training state-of-the-art LLMs, which requires processing terabytes of data and spreading the model itself across hundreds of gigabytes of memory, pushes single-GPU solutions into an insurmountable wall. This is where the calculus changes entirely. Multi-GPU scaling gets past that wall but introduces significant overhead:

- Memory Scaling: LLMs often need to be split across several cards. The speed at which these cards can talk to each other (the interconnect) becomes the main bottleneck, not the speed of the chip itself.

- Performance Trade-offs: Splitting a job introduces synchronization costs. If the connection between two GPUs is slow, one GPU might sit idle waiting for the other, wasting expensive compute time.

- Deployment Strategy: Moving from a single server to a cluster requires sophisticated networking, specialized software frameworks, and highly trained operational staff.
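To make the memory-scaling point concrete, here is a rough back-of-the-envelope sketch. It assumes mixed-precision training with the Adam optimizer, which is commonly budgeted at about 16 bytes of model state per parameter, and it ignores activation memory entirely. The function name, the 70B-parameter model, the 80 GB card size, and the 90% usable fraction are all illustrative assumptions, not figures from any vendor.

```python
import math

# Assumed byte costs per parameter for mixed-precision Adam training:
# fp16 weights (2) + fp16 gradients (2) + fp32 master weights, momentum,
# and variance (4 + 4 + 4). Activation memory is deliberately ignored.
BYTES_PER_PARAM_TRAINING = 2 + 2 + 12


def min_gpus_for_training(num_params, gpu_memory_gb, usable_fraction=0.9):
    """Lower bound on the GPU count needed just to hold model and optimizer state."""
    total_gb = num_params * BYTES_PER_PARAM_TRAINING / 1e9
    usable_gb = gpu_memory_gb * usable_fraction
    return total_gb, math.ceil(total_gb / usable_gb)


# Hypothetical example: a 70B-parameter model on 80 GB cards.
total_gb, gpus = min_gpus_for_training(70e9, gpu_memory_gb=80)
print(f"{total_gb:.0f} GB of model state -> at least {gpus} GPUs")
```

The result is only a floor: activations, communication buffers, and fragmentation all push the real number higher, which is why the interconnect starts to matter as soon as the model no longer fits on one card.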

Understanding this economic curve is crucial. The decision isn't just about buying hardware; it’s about choosing a scaling philosophy that matches your model size and budget. For CTOs, this means weighing the high upfront cost and complexity of a cluster against the absolute impossibility of achieving frontier AI results on limited hardware.

Contextualizing the Competition: The Shadow of Software Ecosystems

Hardware specifications tell only half the story. A powerful GPU with inaccessible software is just an expensive paperweight. This brings us directly to the most significant non-hardware factor in scaling economics: the software stack.

The analysis of hardware like the MI355X cannot ignore the long-established dominance of NVIDIA’s CUDA platform. CUDA is the bedrock upon which almost all major AI frameworks (PyTorch, TensorFlow) were originally optimized. When an enterprise decides to deploy an AMD solution, it is often making a strategic bet that the performance gains outweigh the engineering cost of adapting to the alternative software environment, ROCm.

Ongoing industry analysis of NVIDIA CUDA versus AMD ROCm adoption in the enterprise compares developer mindshare and deployment friction. Comparisons of how easily PyTorch and TensorFlow models deploy across both ecosystems underscore that software compatibility is a leading economic factor.

For the IT Architect, the economic implication is clear: investing in cutting-edge hardware only pays off if the AI development teams can leverage it immediately. A delay of six months waiting for a critical library to support the new card architecture can erase any initial hardware cost advantage.

The Frontier Challenge: Memory and Parallelism for Trillion-Parameter Models

If training a model with 10 billion parameters requires significant multi-GPU effort, training a trillion-parameter model requires a complete re-architecture of how the job is distributed. This leads us into the world of sophisticated model parallelism.

When a model cannot fit onto the memory of a single GPU, it must be broken apart. This is done via techniques like Tensor Parallelism (splitting the calculation matrices) or Pipeline Parallelism (splitting the layers of the neural network across different GPUs). These methods demand lightning-fast communication between the GPUs.
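As a minimal illustration of tensor parallelism, the sketch below simulates a column-parallel linear layer in plain Python. The weight matrix is split by columns across simulated devices, each device multiplies the same input by its own shard, and the partial outputs are concatenated. The helper names are hypothetical, the "devices" are just list entries, and the split assumes the column count divides evenly; real frameworks perform the gather step over the GPU interconnect.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def split_columns(matrix, parts):
    """Split a matrix into `parts` equal column blocks, one per simulated device."""
    width = len(matrix[0]) // parts
    return [[row[i * width:(i + 1) * width] for row in matrix] for i in range(parts)]


def tensor_parallel_matmul(x, weight, num_devices):
    """Column-parallel linear layer: each device holds one column shard of `weight`."""
    shards = split_columns(weight, num_devices)
    # Each partial product depends only on its own shard, so the devices
    # can compute them independently.
    partials = [matmul(x, shard) for shard in shards]
    # "All-gather": stitch each device's output columns back together.
    # On real hardware this step is the interconnect traffic.
    return [sum((p[r] for p in partials), []) for r in range(len(x))]


x = [[1, 2], [3, 4]]
weight = [[1, 0, 2, 1], [0, 1, 1, 3]]
result = tensor_parallel_matmul(x, weight, num_devices=2)
print(result == matmul(x, weight))
```

The arithmetic is identical to the unsplit layer; what changes is that every layer now ends with a gather across devices, so the cost of that exchange is paid many times per training step.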

Work on LLM model parallelism under memory limits, whether tensor or pipeline, shares one core goal: minimizing the "idle time" GPUs spend waiting for data transfer. Success in frontier AI is defined by mastering these communication overheads.
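For pipeline parallelism, the idle time can be estimated with a commonly used formula for GPipe-style schedules: with p equal-cost stages and m micro-batches, the idle ("bubble") fraction is (p - 1) / (m + p - 1). The sketch below simply evaluates that expression; the function name and the stage and micro-batch counts are illustrative assumptions.

```python
def pipeline_bubble_fraction(num_stages, num_microbatches):
    """Idle fraction of a GPipe-style schedule, assuming equal-cost stages."""
    return (num_stages - 1) / (num_microbatches + num_stages - 1)


# Hypothetical 4-stage pipeline with an increasing number of micro-batches.
for microbatches in (1, 4, 32):
    fraction = pipeline_bubble_fraction(4, microbatches)
    print(f"{microbatches:>2} micro-batches: {fraction:.1%} idle")
```

Splitting each batch into more micro-batches shrinks the bubble, at the price of more, smaller transfers between stages.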

For technical teams, this means that simply adding more GPUs doesn't guarantee linear speedup. If the network linking the GPUs cannot handle the required data shuffle for tensor parallelism, performance plateaus quickly. The economics here punish inefficient scaling. A poorly designed 8-GPU cluster might perform worse than a perfectly optimized 4-GPU system if communication latency is too high.
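A toy model shows how this can happen. It assumes compute divides evenly across GPUs while communication adds a fixed, non-overlapped cost per step. All timings here are made-up numbers chosen to illustrate the shape of the curve, not measurements of any real system.

```python
def step_time(compute_seconds, num_gpus, comm_seconds_per_step):
    """Toy model: compute splits evenly; communication is not overlapped."""
    if num_gpus == 1:
        return compute_seconds  # a single GPU has nothing to synchronize
    return compute_seconds / num_gpus + comm_seconds_per_step


def scaling_efficiency(compute_seconds, num_gpus, comm_seconds_per_step):
    """Speedup over one GPU divided by GPU count (1.0 means perfectly linear)."""
    speedup = compute_seconds / step_time(compute_seconds, num_gpus, comm_seconds_per_step)
    return speedup / num_gpus


# Hypothetical workload: 8 s of compute per step on one GPU.
fast_4 = step_time(8.0, 4, comm_seconds_per_step=0.2)  # well-connected 4-GPU node
slow_8 = step_time(8.0, 8, comm_seconds_per_step=1.5)  # 8 GPUs on a slow fabric
print(f"4 GPUs, fast links: {fast_4:.2f} s/step")
print(f"8 GPUs, slow links: {slow_8:.2f} s/step")
print(f"8-GPU efficiency: {scaling_efficiency(8.0, 8, 1.5):.0%}")
```

Under these assumed numbers the 8-GPU configuration finishes each step more slowly than the 4-GPU one while costing twice as much hardware, which is the economic penalty the paragraph above describes.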

The Future Interconnect: CXL and the End of Memory Silos

Looking ahead 3-5 years, the scaling bottleneck is poised to shift again, moving away from pure inter-GPU bandwidth (like NVLink) toward unified, coherent memory architectures. This is where Compute Express Link (CXL) enters the equation, promising to revolutionize resource pooling.

CXL is a cache-coherent interconnect that runs over the PCIe physical layer. It allows a CPU to access the memory of an accelerator card (and vice versa) as if it were its own main memory, without the explicit copies that non-coherent PCIe transfers typically require. In the context of multi-GPU scaling, CXL promises to blur the lines between what is "GPU memory" and what is "system memory."

Comparisons of CXL and NVLink for scaling AI accelerators suggest that CXL could democratize memory access, potentially allowing smaller, cheaper GPUs to participate effectively in massive training jobs by pooling their local memory resources through a high-speed, coherent fabric.

What this means for the future: If CXL matures as expected, the economic argument for buying gigantic, monolithic GPUs with proprietary high-speed links may weaken. We could see a trend toward a larger number of moderately powerful, easily connected accelerators, dramatically lowering the entry barrier for large-scale AI training.

Practical Implications: Actionable Insights for Decision Makers

For businesses operating in this rapidly evolving space, leveraging these technological trends requires proactive strategy:

1. For the Infrastructure Architect: Diversify the Software Path

Do not let vendor lock-in dictate your future scale. While CUDA remains the path of least resistance today, businesses must invest engineering time in making their workloads agnostic or easily portable to ROCm or other open standards. This hedges against future price shocks or supply chain disruptions, making the economic calculus for adopting alternative hardware (like the MI355X) viable.
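One small, low-cost step toward portability is to avoid hard-coding vendor assumptions in application code. The sketch below relies on the fact that ROCm builds of PyTorch reuse the `torch.cuda` namespace and set `torch.version.hip`, so the same device-selection logic can run on either vendor's hardware. The function name is hypothetical, and the fallback to CPU when PyTorch is not installed is a convenience added for illustration.

```python
def detect_accelerator_backend():
    """Return 'rocm', 'cuda', or 'cpu' depending on the local PyTorch build."""
    try:
        import torch
    except ImportError:
        return "cpu"  # assumption: treat a missing PyTorch install as CPU-only
    if not torch.cuda.is_available():
        return "cpu"
    # ROCm builds expose AMD GPUs through torch.cuda and set torch.version.hip.
    return "rocm" if getattr(torch.version, "hip", None) else "cuda"


backend = detect_accelerator_backend()
# Both vendors use the "cuda" device string in PyTorch, so model code that
# takes `device` as a parameter needs no vendor-specific branches.
device = "cuda" if backend in ("cuda", "rocm") else "cpu"
print(f"backend={backend}, device={device}")
```

Framework-level code like this is usually the easy part; custom kernels and vendor-specific libraries are where porting effort tends to concentrate, so those are worth isolating behind a narrow interface.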

2. For the ML Engineer: Master Parallelism Techniques Early

If your model size requires more than two GPUs, you are already operating in the complex scaling domain. Focus training efforts on mastering tools like DeepSpeed or PyTorch FSDP (Fully Sharded Data Parallel), which shard model state across GPUs instead of replicating it on each one. The speed-up you gain from efficient parallelism will provide a much higher ROI than simply buying one more card without optimizing the communication fabric.
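The payoff from sharding can be estimated with the memory accounting used for ZeRO-style partitioning, which DeepSpeed implements and which FSDP's full sharding resembles. The sketch assumes mixed-precision Adam (2 bytes of weights, 2 of gradients, and 12 of optimizer state per parameter) and excludes activations. The function name and the 7.5B-parameter, 64-GPU example are illustrative.

```python
def per_gpu_state_gb(num_params, num_gpus, stage):
    """Per-GPU model-state memory under ZeRO-style sharding (activations excluded).

    stage 0: everything replicated (plain data parallelism)
    stage 1: optimizer state sharded
    stage 2: optimizer state and gradients sharded
    stage 3: optimizer state, gradients, and parameters sharded
    """
    params = 2 * num_params   # fp16 weights, in bytes
    grads = 2 * num_params    # fp16 gradients, in bytes
    optim = 12 * num_params   # fp32 master weights, momentum, variance
    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        params /= num_gpus
    return (params + grads + optim) / 1e9


# Hypothetical example: a 7.5B-parameter model on 64 GPUs.
for stage in range(4):
    print(f"stage {stage}: {per_gpu_state_gb(7.5e9, 64, stage):.1f} GB per GPU")
```

Each additional stage trades memory for extra communication, so the right setting depends on how fast the interconnect is relative to the compute.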

3. For the CTO/Strategist: Plan for Interconnect Evolution

When budgeting for the next generation of AI infrastructure (18–36 months out), prioritize platforms that support emerging interconnect standards like CXL. This signals a commitment to flexibility and avoiding future "memory prisons" where powerful GPUs are starved because they cannot efficiently share data across the wider system.

Conclusion: Hardware is a Means, Efficiency is the End

The current debate over single versus multi-GPU scaling economics, exemplified by discussions around specific enterprise hardware like the AMD MI355X, proves that the hardware race is fiercely competitive. However, raw performance metrics are increasingly misleading.

The true future of AI deployment—and its profitability—will be defined by three intersecting vectors:

- Software Portability: Reducing the economic friction of switching between hardware vendors.

- Algorithmic Efficiency: Mastering model parallelism to extract maximum value from every interconnected chip.

- Interconnect Maturity: Adopting open standards (like CXL) that promise coherent memory pooling, thereby fundamentally changing the cost structure of massive compute clusters.

The next wave of AI winners won't just be those who buy the most powerful chips; they will be those who design the most elegant, efficient, and adaptable scaling strategies to harness that power, turning complex clusters into streamlined, economically viable inference and training factories.