The Reasoning Revolution: How Gemini 3.1 Pro's Leap Redefines the AI Race

The world of Artificial Intelligence rarely sleeps, but recent announcements from Google have managed to significantly raise the industry’s collective heart rate. The release of Gemini 3.1 Pro, characterized by a reported doubling of performance on demanding reasoning benchmarks, is not just another incremental update; it signals a critical inflection point in the fierce battle for foundational model supremacy.

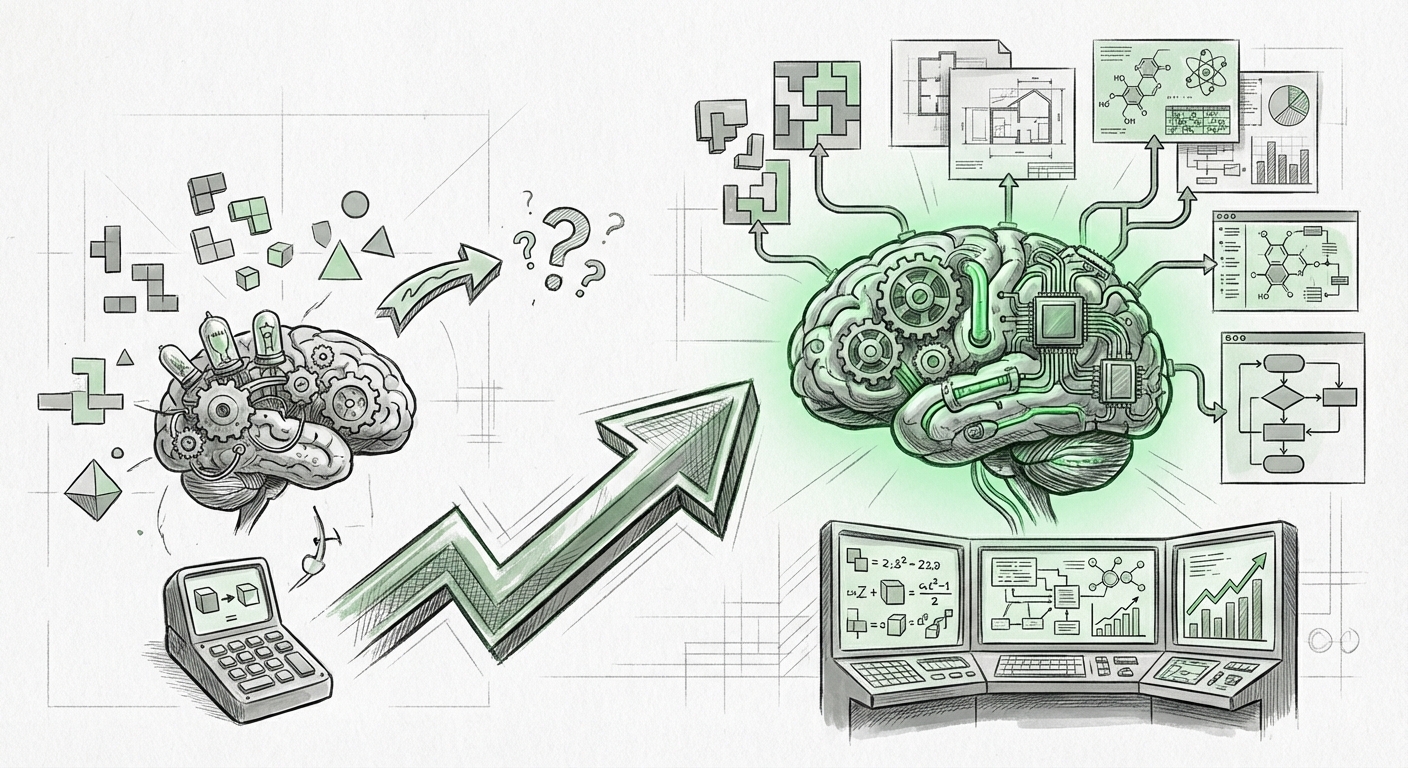

For years, Large Language Models (LLMs) have dazzled us with fluency, creativity, and vast knowledge recall. But one fundamental gap has separated advanced tools from truly intelligent agents: reasoning. Reasoning is the ability to connect disparate pieces of information, follow complex logical chains, solve multi-step problems, and adapt in novel situations—skills essential for meaningful autonomy.

As the initial reports noted, benchmarks are proxies. They tell us *how well* a model performs on a specific, predefined test. The real question driving the industry forward is what this leap in benchmark performance means in the messy, unstructured world of real applications. To understand the implications, we must analyze this development through the lens of competition, practicality, and strategic intent.

The Benchmark Blitz: Contextualizing the Doubling Performance

When a leading model doubles its score on a key reasoning metric, it forces a direct recalibration of expectations. Reasoning benchmarks have become one of the field's main yardsticks for capability, which is why a jump of this size draws so much attention. To put it in perspective, we must look at the current landscape.
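Because the headline claim is a relative "doubling", it helps to see why the starting score matters. The sketch below uses made-up scores (the actual Gemini 3.1 Pro figures are not given in this article) and expresses one change three ways: as a ratio, as percentage points, and as the share of previously failed items that are now solved.

```python
def describe_gain(before: float, after: float) -> dict[str, float]:
    """Express a benchmark change three ways.

    `before` and `after` are fractions of items solved, e.g. 0.30 for 30%.
    """
    if not (0.0 < before < 1.0 and 0.0 <= after <= 1.0):
        raise ValueError("before must be in (0, 1) and after in [0, 1]")
    return {
        # Relative gain: a "doubling" means this ratio equals 2.0.
        "ratio": after / before,
        # Absolute gain in percentage points.
        "points": (after - before) * 100,
        # Share of previously failed items that the new model now solves.
        "error_reduction": (after - before) / (1.0 - before),
    }


# Two hypothetical doublings that look identical as ratios.
low_base = describe_gain(0.20, 0.40)
high_base = describe_gain(0.45, 0.90)
print(low_base)
print(high_base)
```

Both examples are a doubling, but the first closes only a quarter of the remaining failures while the second closes more than four-fifths of them, which is why the baseline belongs next to any "2x" claim.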

The Competitive Crucible: Gemini vs. The Field

The key measure of success in 2024/2025 is how Google’s advancements stack up against OpenAI’s current flagship offerings. Researchers and developers are immediately asking: does Gemini 3.1 Pro close the perceived gap with models like GPT-4o, or even overtake them?

Deep dives into comparative analyses, often using benchmarks like GPQA (Graduate-Level Google-Proof Q&A) or complex mathematical proofs, are essential here. If Gemini 3.1 Pro is achieving state-of-the-art results on these demanding reasoning tasks, it validates Google's architectural investments and suggests a genuine maturation of its model family.

This competitive pressure is immensely healthy for the ecosystem. When one giant pushes the boundary of reasoning, others are forced to accelerate their own core research, ultimately benefiting end-users. This direct comparison provides crucial context for investors and developers deciding which ecosystem to commit to.


Beyond the Numbers: The Illusion of Benchmarks

However, the excitement must be tempered by critical examination. Benchmarks are only tests, and an advanced LLM might excel at a standardized test built on familiar data patterns yet struggle with genuinely novel, common-sense challenges that require intuitive leaps.

A significant area of current AI research focuses on the limitations of LLM reasoning benchmarks. Many existing tests risk "data contamination": benchmark questions, and sometimes their answers, leak into the training data, so a high score may reflect recall rather than reasoning. Genuine reasoning improvement shows up when models can handle abstract concepts, multimodal inputs (reasoning jointly over text, images, and audio), and subtle contextual shifts that the training data has not mapped.
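One common, admittedly crude, way to screen for contamination is to measure how many long word n-grams from each benchmark item also appear in the training corpus. The sketch below assumes you have access to that corpus (or a sample of it), which outsiders usually do not for proprietary models; the n-gram length and the 0.5 threshold are illustrative assumptions, not standard values.

```python
import re


def ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    """Lowercased word n-grams with punctuation stripped."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def build_index(corpus_docs: list[str], n: int) -> set[tuple[str, ...]]:
    """Union of all n-grams in the (sampled) training corpus."""
    index: set[tuple[str, ...]] = set()
    for doc in corpus_docs:
        index |= ngrams(doc, n)
    return index


def overlap_rate(item: str, index: set[tuple[str, ...]], n: int) -> float:
    """Fraction of a benchmark item's n-grams that also appear in the corpus."""
    grams = ngrams(item, n)
    if not grams:
        return 0.0  # item shorter than n words: nothing to compare
    return len(grams & index) / len(grams)


def flag_contaminated(items: list[str], corpus_docs: list[str],
                      n: int = 8, threshold: float = 0.5) -> list[str]:
    """Benchmark items whose overlap rate meets or exceeds `threshold`."""
    index = build_index(corpus_docs, n)
    return [item for item in items if overlap_rate(item, index, n) >= threshold]


# Hypothetical toy data: one item leaked verbatim, one did not.
corpus = ["Trivia dump: what is the boiling point of water at sea level in celsius? 100."]
items = [
    "What is the boiling point of water at sea level in Celsius?",
    "Which prime number follows 89 in the ordinary sequence of primes?",
]
print(flag_contaminated(items, corpus, n=5))
```

A flagged item does not prove the model memorized it, and a clean result does not rule out paraphrased leakage; the check only narrows down which scores deserve suspicion.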

For the AI ethicist and academic researcher, the critical question remains: Has this improved reasoning fostered better problem-solving, or merely better test-taking?

The Practical Pivot: Applications of Enhanced Reasoning

Stronger reasoning is the key that unlocks the next generation of AI applications. For too long, AI has served as an incredible assistant; with enhanced reasoning, it begins to function as a genuine collaborator capable of handling complexity previously reserved for specialized human expertise.

1. Advanced Software Engineering and Debugging

One of the most immediate beneficiaries is code generation and maintenance. Previous models could write boilerplate code or fix simple syntax errors. Enhanced reasoning allows models to understand large codebases, trace complex logic flows across multiple files, and devise solutions for subtle, architectural bugs.

This moves AI from coding copilot to debugging agent. Imagine an AI that can ingest a system failure report, cross-reference documentation, trace the execution path across several services, and propose a patch that depends on understanding distributed system behavior. Such a capability could substantially reduce engineering overhead and shorten deployment cycles.
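The propose-and-verify loop behind such a debugging agent can be sketched as follows. `propose_patch` and `run_tests` are hypothetical hooks (one would wrap a model call, the other your CI or test runner); the design choice that matters is that no patch is accepted until the tests pass, and each failure is fed back as context for the next attempt.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Attempt:
    patch: str
    failures: list[str]


def debug_loop(
    report: str,
    propose_patch: Callable[[str, list[Attempt]], str],
    run_tests: Callable[[str], list[str]],
    max_rounds: int = 3,
) -> tuple[Optional[str], list[Attempt]]:
    """Propose a patch, verify it, feed failures back; stop on green or budget."""
    history: list[Attempt] = []
    for _ in range(max_rounds):
        patch = propose_patch(report, history)  # model sees all prior failures
        failures = run_tests(patch)             # ground truth comes from tests
        history.append(Attempt(patch, failures))
        if not failures:
            return patch, history
    return None, history  # budget exhausted: escalate to a human


# Hypothetical stand-ins so the loop can run without a model or CI.
def fake_propose(report: str, history: list[Attempt]) -> str:
    return "add retry" if history else "add logging"


def fake_tests(patch: str) -> list[str]:
    return [] if "retry" in patch else ["test_timeout failed"]


patch, history = debug_loop("timeout in service B", fake_propose, fake_tests)
print(patch, len(history))
```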

2. Scientific Discovery and Hypothesis Generation

In chemistry, materials science, and biology, discovery often hinges on synthesizing findings from thousands of disconnected papers and applying logical constraints. An LLM with superior reasoning can be tasked with identifying novel therapeutic targets by connecting seemingly unrelated pathways described in separate research domains.

This is the gateway to AI agency in R&D. It means the AI is not just summarizing existing knowledge, but actively participating in the *generation* of new, testable hypotheses based on complex inference.
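The cross-domain inference described above has a classical, pre-LLM ancestor: Don Swanson's literature-based discovery, which linked fish oil to Raynaud's syndrome through findings on blood viscosity and platelet aggregation that sat in separate literatures. The sketch below implements that "A relates to B, B relates to C, so perhaps A relates to C" pattern over hand-written concept pairs. It is an illustration of the inference shape, not how Gemini works; a reasoning model would be expected to extract and weigh such links from unstructured text, and every output is only a hypothesis for experimental testing.

```python
from collections import defaultdict


def abc_hypotheses(
    lit_a: list[tuple[str, str]],
    lit_b: list[tuple[str, str]],
) -> dict[tuple[str, str], set[str]]:
    """Swanson-style linking over concept pairs from two separate literatures.

    Returns candidate (A, C) pairs that are not already linked directly,
    each mapped to the set of bridging B concepts.
    """
    a_to_b: dict[str, set[str]] = defaultdict(set)
    for a, b in lit_a:
        a_to_b[a].add(b)
    b_to_c: dict[str, set[str]] = defaultdict(set)
    for b, c in lit_b:
        b_to_c[b].add(c)
    known = set(lit_a) | set(lit_b)  # direct links are not new hypotheses
    candidates: dict[tuple[str, str], set[str]] = defaultdict(set)
    for a, bs in a_to_b.items():
        for b in bs:
            for c in b_to_c.get(b, set()):
                if c != a and (a, c) not in known:
                    candidates[(a, c)].add(b)
    return dict(candidates)


# Toy pairs echoing Swanson's example (simplified for illustration).
nutrition = [("fish oil", "blood viscosity"), ("fish oil", "platelet aggregation")]
circulation = [("blood viscosity", "raynaud's"), ("platelet aggregation", "raynaud's")]
print(abc_hypotheses(nutrition, circulation))
```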

3. Enterprise Workflow Automation Beyond Simple Tasks

For business leaders, this means moving automation away from repetitive data entry and toward complex decision support. Consider legal compliance: a sufficiently capable model could analyze a 500-page regulatory document, cross-reference it with internal company policies across ten different regions, and flag potential conflicts for nuanced human review. This kind of multi-step, high-stakes reasoning could turn compliance from a manual burden into a streamlined, AI-assisted process.
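Once a model has extracted the relevant clauses from the regulation and from each region's policy, the final conflict check can stay deterministic and auditable. The sketch below assumes that extraction step has already produced structured data; the clause names, limits, and regions are hypothetical.

```python
import operator
from dataclasses import dataclass

# Comparison used to test an internal policy value against a regulatory limit.
OPS = {"<=": operator.le, ">=": operator.ge, "==": operator.eq}


@dataclass(frozen=True)
class Flag:
    region: str
    clause: str
    reason: str


def cross_reference(requirements: dict[str, tuple[str, int]],
                    policies: dict[str, dict[str, int]]) -> list[Flag]:
    """Flag every region/clause pair that is missing or violates the requirement."""
    flags = []
    for region, policy in sorted(policies.items()):
        for clause, (op, limit) in sorted(requirements.items()):
            if clause not in policy:
                flags.append(Flag(region, clause, "no matching internal policy"))
            elif not OPS[op](policy[clause], limit):
                flags.append(Flag(region, clause,
                                  f"policy value {policy[clause]} fails {op} {limit}"))
    return flags


# Hypothetical extracted data: regulatory limits and two regional policies.
requirements = {"breach_notice_hours": ("<=", 72), "retention_days": (">=", 365)}
policies = {
    "EU": {"breach_notice_hours": 48, "retention_days": 365},
    "US": {"breach_notice_hours": 96},
}
for flag in cross_reference(requirements, policies):
    print(flag)  # each flag is routed to a human reviewer
```

Keeping the model responsible for extraction and a plain function responsible for the comparison means a reviewer can see exactly why each conflict was raised.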

Across all three areas, the common thread is the transition from assistant to genuine agent.

Google’s Strategic Play: Context from the Roadmap

A major model release like Gemini 3.1 Pro is never an isolated event; it is a strategic anchor point. To understand its full weight, we must examine it within the context of Google’s broader platform strategy, often revealed during events like Google I/O.

If this reasoning leap arrives shortly after a major platform event, it signals clear intent: Google is building this core intelligence directly into its consumer and enterprise pillars.

- Search Reinvention: Enhanced reasoning allows Search to move past simple link aggregation toward providing synthesized, logically sound answers to complex "how-to" or analytical queries, fundamentally changing user interaction with the world’s information.

- Workspace Integration: Imagine Gmail summarizing long threads not just by topic, but by identifying the core action items, prioritizing them based on implied urgency, and drafting the next logical response step—all requiring strong inferential reasoning.

- Platform Lock-in: By significantly upgrading the intelligence underpinning their ecosystem, Google strengthens the incentive for developers and enterprises building on their cloud infrastructure (GCP) and using their foundational models, creating a higher barrier to entry for competitors.

For investors and market watchers, the immediate integration roadmap is the strongest indicator of how quickly this technological improvement will translate into market share and competitive advantage.

Actionable Insights for a Reasoning-Ready Future

The evolution from fluent text generation to robust reasoning capabilities demands a strategic response from organizations:

1. Reassess Trust Boundaries for AI Agents

If an AI can now solve complex problems reliably, where does human oversight end and AI accountability begin? Businesses must develop clear governance frameworks around tasks involving high-stakes reasoning (e.g., financial modeling, medical diagnostics support). Do not take a doubled benchmark score on trust; rigorously test the model in your specific domain before delegating significant decision-making.
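A minimal in-house evaluation harness makes the "test in your own domain" advice concrete. The sketch assumes `model` is any callable you wrap around your provider's API (the `toy_model` below is a hypothetical stand-in) and that exact-match answers are meaningful for your cases. It reports a Wilson score interval because a few dozen domain cases leave far more uncertainty than a single headline benchmark number suggests.

```python
import math
from typing import Callable


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a pass rate."""
    if n == 0:
        raise ValueError("need at least one test case")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def evaluate(model: Callable[[str], str], cases: list[tuple[str, str]]) -> dict:
    """Exact-match pass rate of `model` on your own domain cases, with uncertainty."""
    passed = sum(1 for prompt, expected in cases if model(prompt).strip() == expected)
    low, high = wilson_interval(passed, len(cases))
    return {"passed": passed, "n": len(cases), "rate": passed / len(cases),
            "ci95": (low, high)}


def toy_model(prompt: str) -> str:  # hypothetical stand-in for a real model call
    return prompt.upper()


cases = [("a", "A"), ("b", "B"), ("c", "X")]
print(evaluate(toy_model, cases))
```

With only three cases, a 67% pass rate is compatible with anything from roughly 21% to 94%, which is the point: collect enough domain cases that the interval is narrow enough to act on.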

2. Shift Training Focus to Complex Prompt Engineering

Training data preparation remains vital, but the emphasis shifts. Instead of only feeding the model clean examples of *what* to do, design prompts that draw out *how* it reasons through difficulty: prompts that explicitly require multi-step thinking, error checking, and self-correction. This is the craft of eliciting the new reasoning potential.
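A prompt that demands visible steps, an explicit self-check, and a clearly marked answer can be paired with a small parser that rejects responses which skip any of them. The section labels `STEPS:`, `CHECK:` and `FINAL:` below are arbitrary conventions chosen for this sketch, not a feature of Gemini or any other model's API, and the sample reply is hand-written rather than real model output.

```python
REASONING_TEMPLATE = """Task: {task}

Under a line reading STEPS:, solve the task in short numbered steps.
Under a line reading CHECK:, re-verify each step and correct any mistake.
End with one line of the form FINAL: <answer>
"""

HEADERS = ("STEPS:", "CHECK:")


def build_prompt(task: str) -> str:
    return REASONING_TEMPLATE.format(task=task)


def parse_response(text: str) -> dict:
    """Split a response into step lines, check lines, and the final answer."""
    sections: dict[str, list[str]] = {"STEPS": [], "CHECK": []}
    final = None
    current = None
    for line in text.splitlines():
        stripped = line.strip()
        header = next((h for h in HEADERS if stripped.startswith(h)), None)
        if stripped.startswith("FINAL:"):
            final = stripped[len("FINAL:"):].strip()
            current = None
        elif header:
            current = header[:-1]  # "STEPS:" -> "STEPS"
            rest = stripped[len(header):].strip()
            if rest:
                sections[current].append(rest)
        elif current and stripped:
            sections[current].append(stripped)
    return {"steps": sections["STEPS"], "check": sections["CHECK"], "final": final}


def is_well_formed(parsed: dict) -> bool:
    """Reject answers that skipped the reasoning, the self-check, or the answer."""
    return bool(parsed["steps"]) and bool(parsed["check"]) and parsed["final"] is not None


prompt = build_prompt("A crate holds 17 boxes of 3 units plus 9 loose units. How many units?")
reply = "STEPS:\n1. 17 * 3 = 51\n2. 51 + 9 = 60\nCHECK:\nRecomputed both steps; no errors.\nFINAL: 60"
parsed = parse_response(reply)
print(is_well_formed(parsed), parsed["final"])
```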

3. Embrace "Co-Creative" Roles

The role of human experts is shifting from executors to verifiers and directors. A scientist might no longer spend weeks running initial simulations, but rather days refining the top five AI-generated hypotheses. Prepare your workforce for roles that emphasize high-level strategic direction and the critical evaluation of AI-derived solutions.

The new generation of models is less likely to hallucinate simple facts and, relatively speaking, more likely to make sophisticated logical errors. These are harder to spot because they are buried deep within complex, seemingly sound arguments. Detecting such high-level reasoning failures will be the new premium skill.
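One practical habit is to verify every intermediate step rather than only the final answer. The sketch below handles only the narrowest case, arithmetic claims of the form `a op b = c` inside a chain of steps; `find_bad_steps` is a hypothetical helper, not an existing tool. Subtler logical errors in prose need far stronger methods, but the principle of checking each link is the same.

```python
import re
from fractions import Fraction

# Matches claims such as "17 * 3 = 51" or "2.5 / 0.5 = 5".
CLAIM = re.compile(
    r"(-?\d+(?:\.\d+)?)\s*([-+*/])\s*(-?\d+(?:\.\d+)?)\s*=\s*(-?\d+(?:\.\d+)?)"
)
OPS = {
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
    "*": lambda a, b: a * b,
    "/": lambda a, b: a / b,
}


def find_bad_steps(steps: list[str]) -> list[tuple[int, str]]:
    """Return (index, step) for each step containing an incorrect arithmetic claim."""
    bad = []
    for i, step in enumerate(steps):
        for a, op, b, c in CLAIM.findall(step):
            x, y, claimed = Fraction(a), Fraction(b), Fraction(c)
            # Exact rational arithmetic avoids float-rounding false alarms,
            # but a rounded claim such as "10 / 3 = 3.33" will be flagged.
            if op == "/" and y == 0:
                wrong = True
            else:
                wrong = OPS[op](x, y) != claimed
            if wrong:
                bad.append((i, step))
                break  # one bad claim is enough to flag the step
    return bad


chain = ["17 * 3 = 51", "51 + 9 = 61", "so the crate holds 61 units"]
print(find_bad_steps(chain))
```

Note that the final line of the chain states a plausible-looking conclusion; only checking step 1 reveals that it rests on a slip.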

The Road Ahead: The Quest for True General Intelligence

The doubling of reasoning scores in Gemini 3.1 Pro marks a significant stride toward Artificial General Intelligence (AGI). Reasoning is arguably the most crucial component still missing from current systems, and it likely has to come before true common sense, let alone self-awareness.

However, the AI community must remain vigilant. As models get better at synthetic reasoning, the need for transparency and interpretability grows. How did the model arrive at that complex solution? We need tools that map the logical chain used by Gemini 3.1 Pro, not just its final answer. This drive for interpretability, often championed by researchers scrutinizing the limitations of benchmarks, will define the safety and trustworthiness of the next wave of AI deployment.

Ultimately, the release of Gemini 3.1 Pro is less about celebrating a number on a chart and more about confirming that the industry is now rapidly equipping its generalist models with specialist-level cognitive tools. The race is no longer just about scale; it’s about the depth and sophistication of thought that scale enables. The era of truly capable AI collaborators is dawning, powered by logic that is finally starting to rival the complexity of our own challenges.