Google Gemini and Lyria 3: The AI Revolution in Music Creation and Multimodality

Generating complex, high-fidelity creative output from a simple text prompt, such as a 30-second track complete with vocals, lyrics, and cover art, is no longer a futuristic dream. It is today’s reality, crystallized by Google DeepMind's integration of its Lyria 3 model directly into the Gemini ecosystem. This move signals a critical inflection point: generative AI is graduating from fascinating toy to indispensable creative tool and moving squarely into the mainstream.

From an AI technology analyst's perspective, this development is far more than a simple feature update. It is a strategic declaration that powerful generative capabilities must be baked into foundational models. To truly understand what this means for the future of AI, we must look beyond the captivating 30-second track and examine the technological backbone, the competitive field, and the inevitable friction points, especially legal ones, that will define this new era of synthetic media.

The Technological Leap: From Noise to Nuance with Lyria 3

For years, AI-generated music often sounded synthetic, lacking the emotional depth or complex arrangement of human composition. Lyria 3, built upon the research powerhouse of DeepMind, appears to have significantly closed that gap. The ability to generate music, lyrics, *and* accompanying visuals simultaneously from a single instruction is the hallmark of true multimodal intelligence.

Understanding Multimodality in Practice

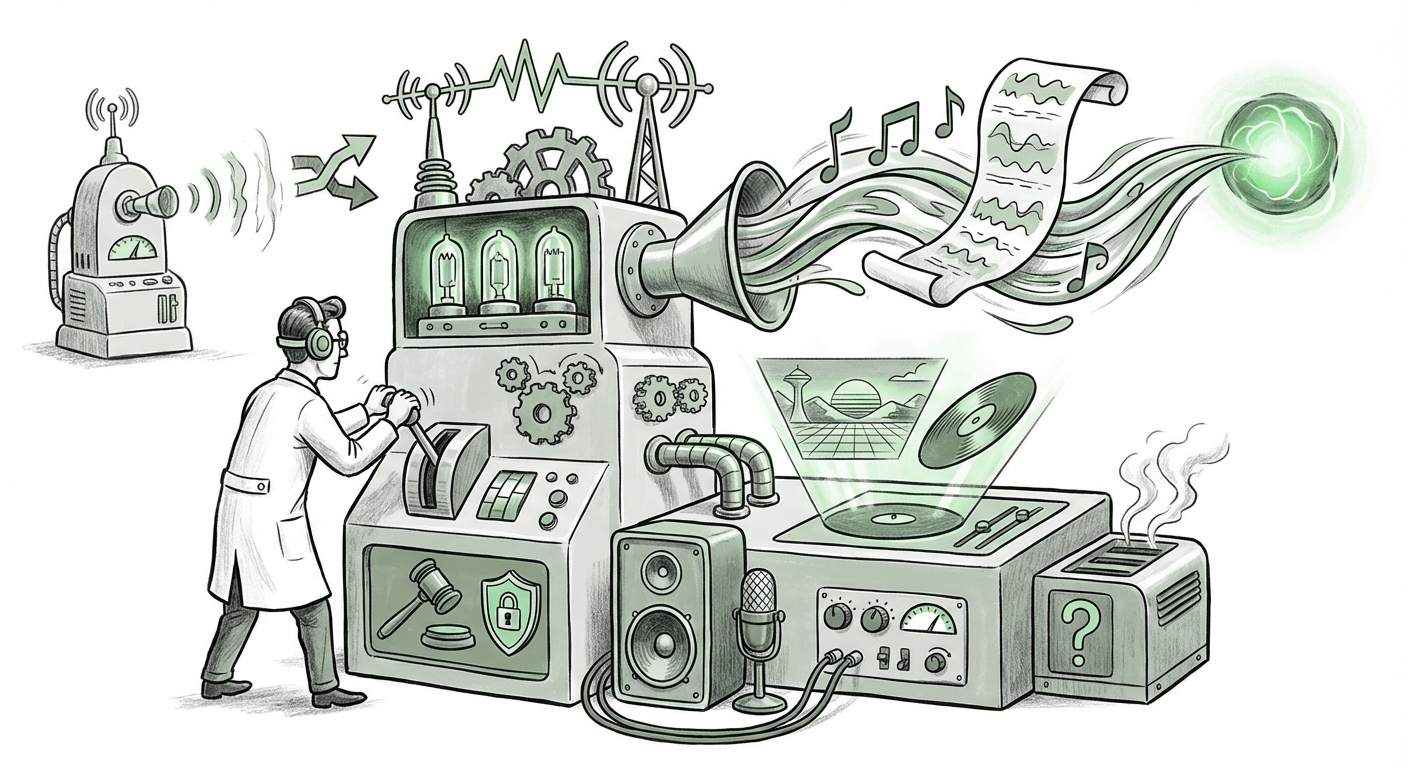

Think of it this way: older AI models were specialized. One model wrote text (like GPT), another made images (like DALL-E). Lyria 3, embedded in Gemini, showcases a unified brain. If you ask for "a hopeful, 80s synth-pop track about space exploration," the system doesn't just stitch together generic audio; it must internally compose the lyrical theme, structure the melody, select instrumentation, and then generate visual branding to match. This convergence makes the creative process startlingly immediate.

This immediacy is changing the definition of creation itself. For technical audiences, it suggests that sophisticated cross-attention mechanisms may be seamlessly linking audio generation models with language models (for lyrics) and diffusion models (for art). For the general user, it means anyone can become a "producer" or "art director" with just a few words. This democratization of high-fidelity output is arguably the most disruptive trend in creative technology today.
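To make the cross-attention idea concrete, here is a minimal sketch of scaled dot-product cross-attention in plain Python. It is not a description of Lyria 3's actual architecture, which is only inferred above. The function names, the toy two-dimensional vectors, and the framing of "audio frames" attending to "text tokens" are all illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross_attention(queries, keys, values):
    """Scaled dot-product cross-attention.

    Queries come from one modality (here, hypothetical audio frames);
    keys and values come from another (here, hypothetical text tokens).
    Returns one context vector per query, plus the attention weights.
    """
    scale = math.sqrt(len(keys[0]))
    value_dim = len(values[0])
    contexts, all_weights = [], []
    for q in queries:
        # How strongly this query matches each key, normalized to sum to 1.
        weights = softmax([dot(q, k) / scale for k in keys])
        # Weighted average of the value vectors.
        context = [
            sum(w * v[i] for w, v in zip(weights, values))
            for i in range(value_dim)
        ]
        contexts.append(context)
        all_weights.append(weights)
    return contexts, all_weights


# Toy data (assumed for illustration): two text tokens, two audio frames.
text_keys = [[1.0, 0.0], [0.0, 1.0]]
text_values = [[10.0, 0.0], [0.0, 10.0]]
audio_queries = [[4.0, 0.0], [0.0, 4.0]]

contexts, weights = cross_attention(audio_queries, text_keys, text_values)
for frame, row in enumerate(weights):
    print(f"audio frame {frame} attends to text tokens with weights {row}")
```

Each audio frame ends up with a context vector dominated by the text token it matches best, which is the basic way one modality can condition on another. Production systems add learned projections, multiple heads, and batching on top of this core step.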

The Competitive Arena: Race for Creative Supremacy

Google is not operating in a vacuum. The integration of Lyria 3 into Gemini places it directly into a fierce battle for supremacy in the generative audio space. We need to look at the existing players to grasp the strategic implications of Google’s entry.

- Suno AI & Stability AI (Stable Audio): These players have already demonstrated remarkable capabilities, especially Suno's ability to handle complex, user-specified vocal styles. The competitive edge for Google will rely heavily on the *quality* and *controllability* of the vocals generated by Lyria 3, alongside its superior integration within the broader Gemini toolset.

- Meta’s Efforts (AudioCraft/MusicGen): While Meta has released open-source research models, Google’s move to productize DeepMind’s best work within a consumer-facing tool like Gemini is a clear signal that it intends to leverage proprietary superiority for market share.

The core battleground is no longer *if* AI can make music, but *how well* it integrates with existing workflows and *who* controls the most powerful underlying architecture. For businesses, this competition lowers the barrier to entry for high-quality content creation, forcing traditional agencies to adapt rapidly.

The Elephant in the Studio: Legal and Ethical Headwinds

The power of Lyria 3, namely its ability to generate human-sounding vocals and detailed compositions, brings us immediately to the most volatile aspect of generative AI: intellectual property. Recent trends in generative AI music licensing and copyright suggest the industry is teetering on the edge of major legal clarification.

The Training Data Dilemma

If Lyria 3 was trained on vast troves of copyrighted music without explicit permission or compensation structures in place, every high-quality output risks becoming a legal landmine. Artists and publishers are demanding transparency and remuneration for the use of their life’s work in training datasets. Google, like all major players, must navigate this minefield.

The question hinges on the fair use doctrine and, in particular, on whether a use is "transformative." Does an AI generating a song in the *style* of an existing artist infringe copyright, or is it a new, transformative work? The outcome of current lawsuits will shape the viability of models like Lyria 3. If courts rule strictly against AI developers, the technology might be forced back toward closed, licensed datasets, slowing its progress.

Authenticity and Deepfakes

The generation of realistic vocals opens the door to misuse. While Google is likely implementing safeguards (e.g., watermarking or preventing the replication of specific, protected voices), the potential for creating "deepfake" musical performances or unauthorized uses of an artist’s vocal timbre remains a societal concern. Clear governance around voice cloning and content provenance will be essential to maintaining consumer trust.

Future Implications: Redefining Content Workflows

The integration of Lyria 3 into Gemini perfectly illustrates an emerging trend in how multimodal AI is reshaping content creation workflows: the consolidation of creative tools.

For Small Businesses and Marketers

The ability to generate a fully branded 30-second audio advertisement, complete with music, voiceover, and cover art, in minutes eliminates major bottlenecks. Small businesses, previously priced out of professional audio production, can now generate custom soundtracks for social media campaigns on demand, although the unresolved training-data questions above mean their copyright status should not be assumed safe. This moves AI from being a productivity enhancer to a genuine **production floor replacement** for low-to-mid-tier content needs.

For Professional Artists and Composers

This technology will force a necessary evolution. Artists who see AI as a threat will likely be marginalized. Those who embrace it—using Lyria 3 for rapid prototyping, generating complex backing tracks to solo over, or iterating through hundreds of sonic ideas in an hour—will gain an unprecedented speed advantage. The focus shifts from generating *every note* to becoming expert *prompt engineers* and *final editors* of AI output.

The Ecosystem Play: Gemini as the Creative Hub

Lyria 3’s placement within Gemini is the strategic masterstroke. It is not a standalone app; it is a capability woven into Google's massive AI infrastructure. This encourages user stickiness. Why use a separate tool for image generation, another for text, and yet another for music, when Gemini promises to handle all three coherently? This integration is a key indicator of where the major tech platforms are heading: toward becoming indispensable operating systems for human creativity.

Actionable Insights for Navigating the New Soundscape

For stakeholders looking to thrive in this rapidly evolving creative technology space, here are concrete takeaways based on the Lyria 3/Gemini convergence:

- Audit Your IP Strategy Now: Businesses using or planning to use AI-generated media must immediately engage legal counsel to understand exposure related to training data origins. Focus on tools that offer robust indemnification or operate on known, licensed datasets.

- Prioritize Multimodal Fluency: Treat prompt engineering as a core skill. The value will shift from those who can execute a creative task manually to those who can precisely direct a sequence of multimodal tasks (e.g., "Generate a cinematic score, with a melancholic cello lead, and prompt an image of a desolate Martian landscape to match the mood.").

- Invest in Curation, Not Just Generation: Recognize that AI output is a draft. The premium service of the future will be human experts who can take raw AI output and polish it, add proprietary human nuances, and ensure emotional resonance that algorithms often miss.

- Demand Provenance Tools: Support and utilize technologies that clearly label AI-generated content. This builds consumer trust and protects genuine human artistry from being diluted by uncredited synthetic work.
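As a rough illustration of the provenance takeaway, the sketch below builds a small label that marks a file as AI-generated and binds that label to the file's bytes with a SHA-256 hash. It is not an implementation of any real provenance standard or of any watermarking scheme Google may use. The field names, the `example-music-model` identifier, and the placeholder audio bytes are hypothetical.

```python
import hashlib
import json


def build_provenance_label(content: bytes, generator: str, prompt: str) -> dict:
    """Create a hypothetical sidecar label describing AI-generated content."""
    return {
        "ai_generated": True,
        "generator": generator,  # hypothetical model identifier
        "prompt": prompt,
        "sha256": hashlib.sha256(content).hexdigest(),
    }


def verify_provenance_label(content: bytes, label: dict) -> bool:
    """Check that the label's hash still matches the content bytes."""
    return hashlib.sha256(content).hexdigest() == label.get("sha256")


# Placeholder bytes standing in for a generated audio file (assumption).
audio_bytes = b"placeholder bytes standing in for a generated audio file"

label = build_provenance_label(
    audio_bytes,
    generator="example-music-model",
    prompt="cinematic score with a melancholic cello lead",
)

print(json.dumps(label, indent=2))
print("label matches content:", verify_provenance_label(audio_bytes, label))
print("label matches edited content:",
      verify_provenance_label(audio_bytes + b"!", label))
```

A sidecar label like this only shows that a file has not changed since it was labeled, and it can simply be discarded. That is one reason provenance efforts also look at signed metadata and at watermarks embedded in the audio signal itself.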

Conclusion: The Composer in the Cloud

Google’s deployment of Lyria 3 within Gemini is not merely an announcement of better music generation; it is a benchmark for the mainstreaming of integrated, high-fidelity generative AI. It confirms that the future of digital creativity is multimodal, immediate, and heavily reliant on foundational models like Gemini that can orchestrate various creative engines simultaneously.

While the technical capabilities soar, the ethical and legal frameworks struggle to keep pace. The next 18 months will be defined less by which model creates the most realistic song, and more by which company successfully navigates the complex waters of IP law while simultaneously building the most intuitive, integrated creative platform. The composer is now partially in the cloud, and the entire industry structure is being rapidly rebuilt around this new reality.