Google Gemini Unleashes Lyria 3: How AI Is Writing Its Own Hit Songs and Redefining Creativity

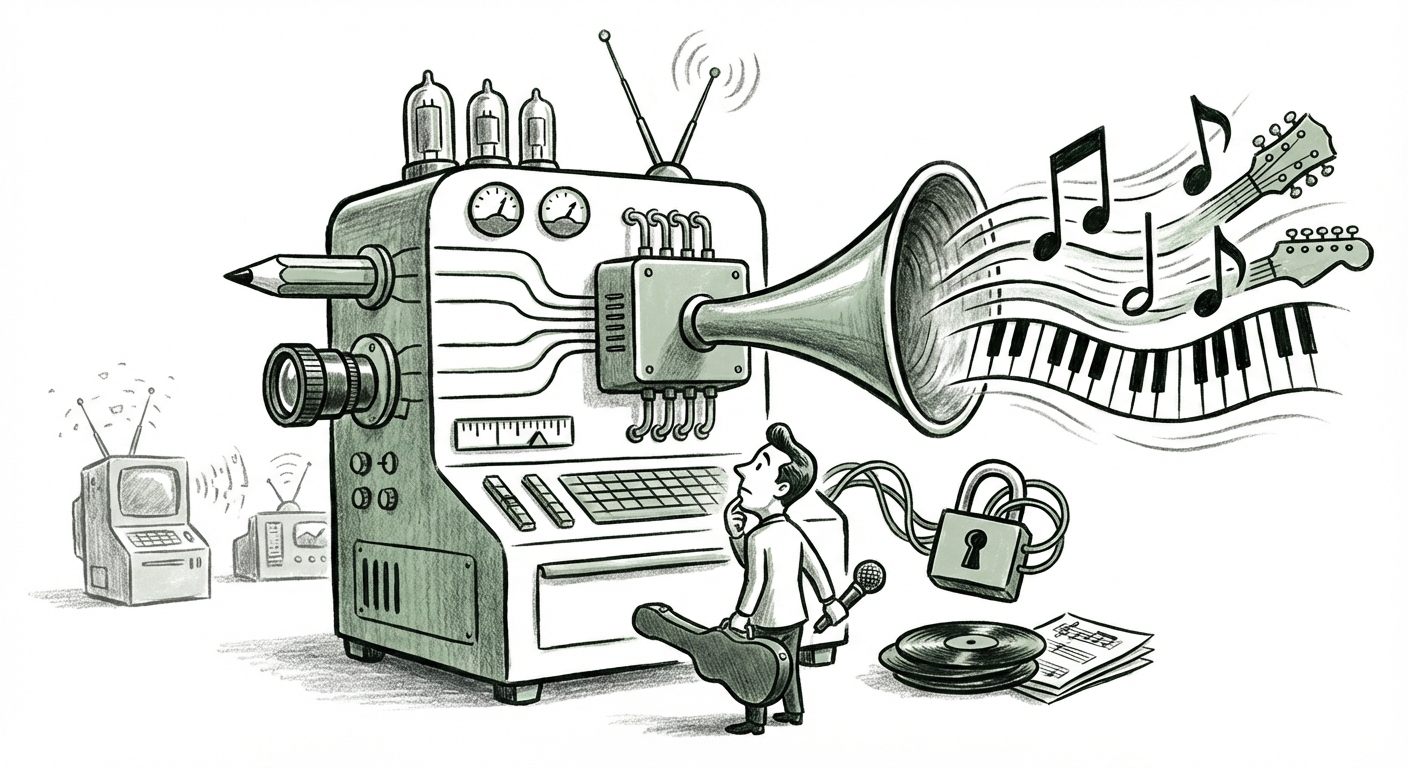

The world of generative AI is often discussed in terms of text (like ChatGPT) or images (like Midjourney). However, the creative frontier is rapidly expanding into the auditory realm. The news that Google is integrating DeepMind’s powerful new Lyria 3 model directly into its Gemini large language model ecosystem is not just an incremental update; it’s a foundational shift in how we interact with digital creation.

Lyria 3 is reported to generate complete 30-second musical tracks, including instrumentation, lyrics, and even cover art, from simple text prompts or uploaded media. This capability pulls music generation out of specialized labs and places it in the hands of everyone using one of the world’s most widely used AI assistants. For businesses, creators, and technologists alike, this development demands a thorough analysis of the technology, the competitive fight, and the inevitable legal storms ahead.

The Leap from Prompt to Performance: Analyzing Lyria 3's Technical Significance

For years, AI music generation struggled with two major hurdles: musical coherence over time (making sure a chorus sounded like it belonged to the verse) and high-fidelity audio quality, especially when generating human-like vocals. Lyria 3, drawing from DeepMind’s advanced research, appears to be tackling both head-on.

When evaluating new models, we look beyond the marketing copy. Early indications suggest that Lyria 3 likely builds on diffusion models, the same core technology behind cutting-edge image generators, adapted for the complex, temporal nature of sound waves. Whatever the exact architecture turns out to be, breakthroughs in this area usually come down to how a model manages long-range dependencies in audio. If Lyria 3 can maintain musical structure and genre consistency across a 30-second snippet, that hints at a real advance in handling complex sequential data, which is a prerequisite for generating full-length, professional-sounding compositions.
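Lyria 3’s architecture is not confirmed here, so the following is only a generic illustration of the forward (noising) half of a diffusion model applied to a waveform, not a description of how Lyria works. It is a minimal sketch, assuming a linear noise schedule of the kind commonly used in introductory diffusion tutorials; every function name, the sine-tone stand-in for audio, and the schedule values are assumptions made for illustration.

```python
import math
import random

def make_tone(num_samples=800, sample_rate=8000, freq_hz=440.0):
    """Return a clean sine tone standing in for a short audio clip."""
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(num_samples)]

def alpha_bar_schedule(num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_t) for a linear beta schedule (assumed values)."""
    alpha_bars = []
    running = 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / (num_steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(clean, alpha_bar, rng):
    """Forward diffusion: x_t = sqrt(alpha_bar) * x_0 + sqrt(1 - alpha_bar) * eps."""
    signal_scale = math.sqrt(alpha_bar)
    noise_scale = math.sqrt(1.0 - alpha_bar)
    return [signal_scale * x + noise_scale * rng.gauss(0.0, 1.0) for x in clean]

def correlation(a, b):
    """Pearson correlation, a rough measure of how much of the original survives."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

rng = random.Random(0)
tone = make_tone()
alpha_bars = alpha_bar_schedule()

# Early steps barely disturb the tone; by the final step it is almost pure noise.
for step in (0, 250, 999):
    noisy = add_noise(tone, alpha_bars[step], rng)
    print(step, round(correlation(tone, noisy), 3))
```

A generative model of this family is trained to reverse that corruption step by step. The hard part for music is that the reversal has to stay consistent across thousands of samples at once, which is where the long-range dependency problem shows up.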

What makes this integration into Gemini so potent is the multimodality. A user won't just type "Create a sad song." They might prompt Gemini with: "Use the aesthetic of this image I uploaded, write lyrics about the feeling of leaving home, and generate a 1980s synth-pop track to go with it." This level of integrated creative direction—text, image, and audio synthesis in one flow—moves AI from a novelty tool to a genuine production assistant.
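One way to think about such a request is as a structured creative brief that gets flattened into a single prompt. The sketch below is purely hypothetical: `CreativeBrief`, its fields, and the file name are illustrative assumptions, and it does not call or represent any real Gemini or Lyria API.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class CreativeBrief:
    """Hypothetical container for one multimodal music request."""
    genre: str
    lyric_theme: str
    reference_image: Optional[str] = None  # local file path, if any (assumed)
    duration_seconds: int = 30
    extra_notes: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Flatten the brief into one plain-text instruction."""
        parts = []
        if self.reference_image:
            parts.append("Use the aesthetic of the attached image.")
        parts.append(f"Write lyrics about {self.lyric_theme}.")
        parts.append(
            f"Generate a {self.duration_seconds}-second {self.genre} track to go with them."
        )
        parts.extend(self.extra_notes)
        return " ".join(parts)

brief = CreativeBrief(
    genre="1980s synth-pop",
    lyric_theme="the feeling of leaving home",
    reference_image="cover_reference.png",  # hypothetical file name
)
print(brief.to_prompt())
```

Keeping the brief structured makes it easy to vary one element, such as the genre, while holding the image and lyric theme fixed.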

What This Means for AI Developers

For AI researchers, Lyria 3 points to the viability of unified creative agents. The ability to handle symbolic data (lyrics/text) and perceptual data (sound/art) within one ecosystem suggests that future AI will increasingly work across sensory boundaries, pushing the need for better, more efficient multimodal architectures across the board.

The Competitive Soundscape: Who Is Winning the Generative Audio Race?

Google is making a decisive play, but it is entering a battle already joined. To understand where Lyria 3 stands, one must benchmark it against its major rivals, notably OpenAI’s Jukebox and Meta’s MusicGen, which together define the current arms race.

Meta has made significant strides with open-source releases such as MusicGen, part of its AudioCraft toolkit, allowing the community to test and iterate rapidly. While Meta’s efforts often prioritize accessibility and instrumental quality, Google’s focus, especially given Lyria’s history, has centered on realistic, human-level audio fidelity, a priority that the inclusion of vocals in Lyria 3 reinforces.

OpenAI, despite showing early promise with Jukebox, has been quieter on mainstream music releases, focusing heavily on GPT for text and DALL-E for images. Google’s integration of Lyria 3 directly into Gemini positions it to leapfrog the competition in user accessibility. By embedding this high-end audio engine into one of the most widely available conversational AI platforms, Google is aiming for market saturation, not just technical superiority.

Future Implications: The Democratization of Sound Production

For tech strategists and venture capitalists, embedding music generation in a mainstream assistant means the barrier to entry for creating high-quality, branded audio content is plummeting. A unique 30-second jingle for a marketing campaign, background music for a social media reel, or even placeholder music for a prototype video game can now be produced quickly, cheaply, and in-house.

The race now shifts from "Can AI make music?" to "Which AI can make the *best* music for a specific use case, and integrate it most easily into my workflow?"

The Unavoidable Conflict: Copyright, Ethics, and the Voice Dilemma

The most explosive area surrounding Lyria 3 is not the technology itself, but the data it was trained on and what it is now capable of creating. The inclusion of lyrics and vocals raises the ethical stakes dramatically. This is where recent developments in AI music copyright law become critical.

If Lyria 3 uses copyrighted music—even instrumental tracks—to learn musical structure, that is one legal debate. If it can perfectly mimic the voice, cadence, and lyrical style of a contemporary artist, that is an existential threat to those artists.

The legal environment is highly reactive. We are seeing major record labels and publishers aggressively pursuing litigation against AI developers for training data infringement. Google, as a massive data aggregator, faces intense scrutiny over the sourcing of its training sets. The outputs from Lyria 3, particularly the 30-second tracks complete with *vocals*, immediately raise questions:

- If the lyrics sound like a known artist’s style, is it plagiarism?

- If the generated voice sounds like a living singer, does that constitute voice cloning/identity theft, even if the generated lyrics are original?

- Who owns the copyright of the resulting 30-second track: the prompter, Google, or (potentially) the original rights holders whose work informed the model?

This uncertainty is precisely why music publishers are voicing concern. They are fighting to ensure that generative tools do not systematically bypass licensing fees and erode the value of their catalogs. Any widespread adoption of Lyria 3 will force courts and legislators to draw much clearer lines on derivative works in the audio domain.

Actionable Insight for Legal and Business Teams

Businesses utilizing Gemini for content creation must establish internal policies immediately regarding AI-generated music. Relying on "it was generated by AI" as a defense against copyright claims is rapidly becoming obsolete. Future commercial use of Lyria 3 output will likely require explicit legal indemnification from Google or a guarantee that the model uses only fully licensed or public domain training data.

The Creator Economy: Collaboration or Collapse?

Beyond the boardroom and the courtroom, the most human impact will be felt by working musicians. The impact of AI music generation on independent musicians’ workflows cuts both ways. On one hand, AI promises unprecedented creative liberation; on the other, it threatens economic viability.

For an independent game developer or filmmaker needing a complex score but lacking a budget for custom composition, Lyria 3 is revolutionary. It offers an on-demand, customized soundtrack that can evolve with the project instantly. This democratization empowers small studios and individual creators enormously.

However, the threat to session musicians, jingle writers, and library music providers is direct and severe. If a client can generate a unique, ostensibly royalty-free track in seconds for next to nothing, the demand for human-created, standardized library music could dry up.

Future of Work in Music

The actionable insight here for creators is adaptation. The future professional musician will likely be an "AI Orchestrator" or "Prompt Engineer for Sound." Success will pivot away from mastering decades of instrumental technique and toward mastering the *intent* communicated to the machine. Those who can expertly guide Lyria 3—blending its synthetic power with human refinement, editing, and arrangement—will command a premium.

The new creative workflow might look like this: Lyria 3 generates the core 30-second loop and vocal melody; the human producer then takes that output into a Digital Audio Workstation (DAW) to humanize the drums, refine the bassline, and, where a generated vocal too closely resembles a real singer, alter or replace it to reduce legal risk, ultimately adding the irreplaceable touch of human nuance.

Conclusion: The Symbiotic Future of Sound

Google’s move to embed Lyria 3 within Gemini is a significant marker on the timeline of generative AI evolution. It solidifies the trend toward integrated, multimodal creation tools where text prompts unlock sensory experiences across different formats. It escalates the competitive pressure among tech titans aiming to own the creative pipeline.

However, as we move forward, the pace of technological advancement must be met with a corresponding maturation of legal and ethical frameworks. The success of this technology will ultimately depend not just on how realistically it can synthesize a song, but on how fairly it can coexist with the human artists whose work made that synthesis possible.

The coming years will see AI music move from generating novelty clips to becoming the background hum of the digital world—the soundtrack to every ad, every social post, and perhaps, eventually, the foundation for new genres we haven't yet imagined. Staying informed on the technical nuances, watching the competitive chessboard, and proactively engaging with the evolving copyright landscape are essential for anyone hoping to thrive in this new auditory era.