The Enterprise AI Nexus: Decoding Multi-Environment Deployment and Hardware Wars Driving 2026 Infrastructure

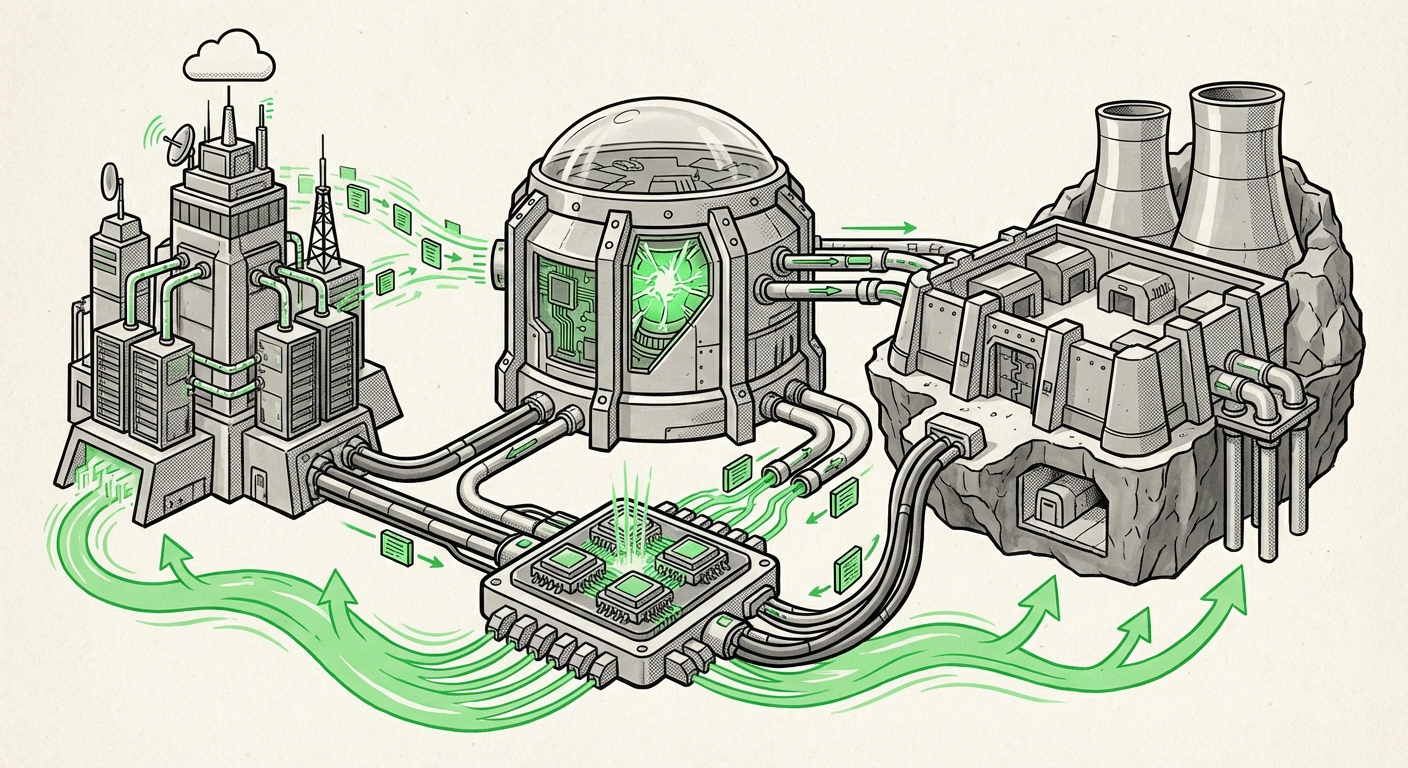

The journey from an interesting AI demo to a mission-critical business engine is paved with infrastructure decisions. Recently published guidance details how enterprises can deploy high-performance computing stacks, such as those built on the AMD MI355X accelerator, across their entire digital footprint: public SaaS offerings, Virtual Private Clouds (VPCs), and legacy on-premises data centers.

This move toward "Anywhere AI" is not merely a technical preference; it is a fundamental strategic response to the soaring costs of training Large Language Models (LLMs) and stringent regulatory demands. To truly understand the future implications of this trend, we must analyze three interlocking forces: the evolving hardware battleground, the operational complexity of hybrid deployment, and the economic pressure cooker of modern model development.

The New Arms Race: Hardware Validation Beyond the Incumbent

For years, the narrative around enterprise AI acceleration has been dominated by a single vendor, NVIDIA. Modern AI infrastructure, however, demands supplier diversity, resilience, and competitive pricing. The attention now paid to hardware like the **AMD MI355X**, covered in recent guides on inference, training, and memory scaling, signals a maturing market where vendors must compete aggressively on performance-per-watt and total cost of ownership (TCO).

Navigating the Competitive Landscape

When examining the MI355X, analysts must immediately benchmark it against established leaders such as NVIDIA's H200 series. The crucial question for any CIO is: does it offer comparable ecosystem maturity, particularly in its software stack (AMD's ROCm versus NVIDIA's CUDA), or does superior memory scaling offer a breakthrough for massive models that outweighs any ecosystem gap?

Why this matters: For the infrastructure architect, adopting a new chip family often means rewriting deployment scripts or retraining teams. If the economic incentive, driven by a memory architecture better suited to multi-billion-parameter models, is strong enough, that short-term friction is worth the long-term flexibility. This tension between the comfort of the incumbent software ecosystem and the price/performance of next-generation hardware will shape infrastructure budgeting for the next three years.
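One way to gauge the script-rewriting cost is to check how much of an existing codebase is actually vendor-specific. The sketch below assumes PyTorch as the framework: ROCm builds of PyTorch expose AMD GPUs through the same `torch.cuda` API used on NVIDIA hardware, and `torch.version.hip` is what distinguishes the two builds. The function name `detect_accelerator_backend` and its return labels are illustrative, not part of any library.

```python
import importlib.util


def detect_accelerator_backend() -> str:
    """Report which GPU stack the installed PyTorch build targets.

    ROCm builds of PyTorch reuse the torch.cuda namespace, so code written
    against device="cuda" runs on AMD GPUs unchanged; torch.version.hip is
    set on ROCm builds and None (or absent) elsewhere.
    """
    if importlib.util.find_spec("torch") is None:
        return "torch-not-installed"
    import torch

    if getattr(torch.version, "hip", None):
        build = "rocm"
    elif torch.version.cuda:
        build = "cuda"
    else:
        build = "cpu-only"
    # A GPU-enabled build can still run on a host with no visible device.
    if build != "cpu-only" and not torch.cuda.is_available():
        return f"{build}-build-no-device"
    return build


if __name__ == "__main__":
    print(detect_accelerator_backend())
```

Because the device API is shared, much of the migration effort tends to sit in custom kernels, vendor-specific libraries, and container images rather than in ordinary model code.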

Actionable Insight for Architects:

Do not judge new hardware solely on raw throughput benchmarks; focus on its capability for memory scaling. Many LLM workloads are limited by memory capacity and bandwidth rather than arithmetic: token-by-token inference is usually bandwidth-bound, and training runs are constrained by how much model state fits on each device. A chip that efficiently pools memory across multiple units (as implied by guides focusing on memory scaling trade-offs) can therefore matter more for large-scale training than its peak FLOPS figures suggest.
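The "memory-bound" argument can be made concrete with a roofline-style check: a workload is bandwidth-limited when its arithmetic intensity (FLOPs per byte moved) falls below the hardware's ridge point (peak FLOPs divided by memory bandwidth). This is a minimal sketch; the 1,000 TFLOP/s and 5 TB/s figures are hypothetical round numbers, not specifications of the MI355X or any other chip.

```python
def gemm_arithmetic_intensity(batch: int, d_in: int, d_out: int,
                              bytes_per_elem: int = 2) -> float:
    """FLOPs per byte for Y = X @ W, with X: (batch, d_in), W: (d_in, d_out)."""
    flops = 2 * batch * d_in * d_out  # one multiply and one add per term
    bytes_moved = bytes_per_elem * (
        d_in * d_out        # read the weight matrix
        + batch * d_in      # read the input activations
        + batch * d_out     # write the outputs
    )
    return flops / bytes_moved


def is_memory_bound(intensity: float, peak_flops: float, mem_bw: float) -> bool:
    """Roofline test: below the ridge point, bandwidth caps throughput."""
    ridge_point = peak_flops / mem_bw  # FLOPs per byte where the two roofs meet
    return intensity < ridge_point


# Hypothetical accelerator: 1,000 TFLOP/s dense 16-bit, 5 TB/s memory bandwidth.
PEAK_FLOPS = 1000e12
MEM_BW = 5e12

for batch in (1, 32, 512):
    ai = gemm_arithmetic_intensity(batch, d_in=8192, d_out=8192)
    print(f"batch={batch:4d}  intensity={ai:7.1f} FLOP/B  "
          f"memory-bound={is_memory_bound(ai, PEAK_FLOPS, MEM_BW)}")
```

At batch 1 (a single decode step) the intensity is about 1 FLOP per byte, far below the hypothetical ridge point of 200, so extra FLOPS would sit idle; only large batches cross into compute-bound territory.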

The Operational Hurdle: Mastering the Hybrid AI Continuum

The enterprise AI mandate today is clear: AI models must live where the data is, and where compliance requires them to be. This necessitates deployment across three distinct zones: **SaaS** (where external vendors manage everything), **VPC/Private Cloud** (for regulatory or low-latency workloads), and **On-Prem** (for highly sensitive, proprietary systems).

The Complexity of Unified Control

Deploying a single AI workflow, such as fine-tuning a model, across these three environments presents immense operational challenges. It's not just about the hardware; it's about the abstraction layer that sits above it. This is why an "enterprise-ready guide" becomes essential. Organizations face issues like:

- Security Parity: Ensuring the same encryption and access controls apply whether the model is being queried from a public API (SaaS) or a local server (On-Prem).

- Data Gravity and Latency: Models need access to fresh data. Moving massive datasets between on-prem storage and the cloud is slow and expensive. The best solution often involves deploying smaller, specialized models closer to the data source.

- Management Sprawl: Compute allocation, monitoring, and scaling must be handled with one set of tools (like Kubernetes or specialized MLOps platforms) across environments whose underlying APIs differ.
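The data-gravity problem is easy to quantify with back-of-the-envelope arithmetic. In this minimal sketch, the 500 TB dataset, the link speeds, the 70% efficiency factor, and the $0.05/GB egress rate are all hypothetical inputs rather than figures from any provider.

```python
def transfer_hours(dataset_tb: float, link_gbps: float, efficiency: float = 0.7) -> float:
    """Hours to move dataset_tb terabytes (10**12 bytes) over a link of
    link_gbps gigabits per second that sustains `efficiency` of nominal rate."""
    bits = dataset_tb * 1e12 * 8
    return bits / (link_gbps * 1e9 * efficiency) / 3600


def egress_cost_usd(dataset_tb: float, usd_per_gb: float) -> float:
    """Data-transfer charge at a flat per-GB rate (decimal gigabytes)."""
    return dataset_tb * 1000 * usd_per_gb


for gbps in (10, 100):
    print(f"500 TB over {gbps} Gbps: {transfer_hours(500, gbps):.1f} h")
print(f"egress at a hypothetical $0.05/GB: ${egress_cost_usd(500, 0.05):,.0f}")
```

At 10 Gbps the move takes roughly 159 hours, more than six days, before any per-GB charges, which is why bringing a smaller model to the data is often the cheaper option.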

For the business strategist, this complexity translates directly into risk and cost. A unified "MCP" (Multi-Cloud Platform or similar) strategy aims to tame this sprawl by creating a single pane of glass for deployment, making the underlying hardware (like the MI355X) interchangeable based on immediate need.
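A "single pane of glass" ultimately needs a placement decision that treats hardware as an attribute rather than a hard requirement. The sketch below is a hypothetical scheduler, not the API of any MLOps product: it filters environments by compliance tier, data-residency region, and free accelerator memory, then picks the cheapest. Environment names, capacities, and prices are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Environment:
    name: str
    tier: str              # "saas", "vpc", or "onprem"
    region: str
    accelerator: str       # recorded for reporting only; not a constraint
    free_memory_gb: int    # unallocated accelerator memory in this pool
    cost_per_hour: float   # hypothetical blended price


@dataclass(frozen=True)
class Workload:
    name: str
    memory_gb: int
    allowed_tiers: frozenset
    allowed_regions: frozenset


def place(workload: Workload, envs: Sequence[Environment]) -> Optional[Environment]:
    """Return the cheapest environment satisfying policy and capacity, or None."""
    eligible = [
        e for e in envs
        if e.tier in workload.allowed_tiers          # compliance: where it may run
        and e.region in workload.allowed_regions     # sovereignty: data residency
        and e.free_memory_gb >= workload.memory_gb   # capacity: does it fit
    ]
    return min(eligible, key=lambda e: e.cost_per_hour, default=None)


catalog = [
    Environment("saas-us", "saas", "us", "H200", 800, 40.0),
    Environment("vpc-eu", "vpc", "eu", "H200", 600, 35.0),
    Environment("onprem-eu", "onprem", "eu", "MI355X", 1000, 20.0),
]
sensitive = Workload("claims-finetune", 700,
                     frozenset({"vpc", "onprem"}), frozenset({"eu"}))
choice = place(sensitive, catalog)
print(choice.name if choice else "no eligible environment")
```

Because `accelerator` never appears in the filter, swapping one vendor's nodes for another's changes only the catalog, not the workload definitions.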

What This Means for Future AI Services:

We expect to see a surge in specialized MLOps tooling designed explicitly for this hybrid reality. Future tools will automate data synchronization and ensure that model artifacts adhere to governance rules specific to their deployment location, abstracting away the messy details of VPC networking and on-prem hardware management for the end-user data scientist.
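Location-specific governance checks of the kind described here can be expressed as a small policy function run before an artifact is promoted. This is a hypothetical sketch: the tier names, data classes, and rules are illustrative, and a real deployment would load them from policy maintained by its compliance team rather than hard-code them.

```python
from dataclasses import dataclass, field
from typing import List, Set

# Hypothetical rules. Encryption is required identically everywhere
# (security parity); what differs is which training-data classes may
# be served from each tier.
GOVERNANCE_RULES = {
    "saas":   {"require_encryption": True, "allowed_data_classes": {"public"}},
    "vpc":    {"require_encryption": True, "allowed_data_classes": {"public", "internal"}},
    "onprem": {"require_encryption": True,
               "allowed_data_classes": {"public", "internal", "restricted"}},
}


@dataclass
class ModelArtifact:
    name: str
    encrypted_at_rest: bool
    training_data_classes: Set[str] = field(default_factory=set)


def governance_violations(artifact: ModelArtifact, location: str) -> List[str]:
    """List every rule the artifact breaks at `location` (empty if compliant)."""
    rules = GOVERNANCE_RULES[location]
    problems = []
    if rules["require_encryption"] and not artifact.encrypted_at_rest:
        problems.append("artifact is not encrypted at rest")
    disallowed = artifact.training_data_classes - rules["allowed_data_classes"]
    if disallowed:
        problems.append(f"trained on data not permitted in {location}: {sorted(disallowed)}")
    return problems


model = ModelArtifact("claims-assistant-v3", True, {"internal", "restricted"})
for loc in GOVERNANCE_RULES:
    print(loc, governance_violations(model, loc) or "OK")
```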

The Economic Imperative: LLM Training as Capital Expenditure

The final, and perhaps most powerful, driver shaping infrastructure choices is the sheer expense of building and refining foundation models. Training LLMs consumes vast amounts of computational power over extended periods, making the initial capital outlay significant.
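A widely used rule of thumb for dense transformers estimates training compute as about 6 FLOPs per parameter per training token (roughly 2 for the forward pass and 4 for the backward pass). The sketch below turns that estimate into device-hours and cost; the 7B-parameter model, 2T tokens, 1 PFLOP/s peak, 40% utilization, and $2 per device-hour are all hypothetical inputs.

```python
def training_flops(n_params: float, n_tokens: float) -> float:
    """Approximate training compute for a dense transformer (6 * N * D),
    ignoring attention-specific FLOPs."""
    return 6.0 * n_params * n_tokens


def accelerator_hours(total_flops: float, peak_flops_per_s: float,
                      utilization: float) -> float:
    """Device-hours needed at a given fraction of peak throughput."""
    return total_flops / (peak_flops_per_s * utilization) / 3600.0


# Hypothetical run: 7B parameters, 2T tokens, 1 PFLOP/s peak per device,
# 40% utilization, $2 per device-hour.
flops = training_flops(7e9, 2e12)
hours = accelerator_hours(flops, 1e15, 0.4)
print(f"{flops:.2e} FLOPs, {hours:,.0f} device-hours, ${hours * 2:,.0f}")
```

The utilization term is where memory architecture shows up in the bill: every point of utilization lost to communication or offloading stalls is paid for in device-hours.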

The Memory Crunch and Fine-Tuning

The guide's focus on "memory scaling" speaks directly to this economic reality. Simply put, larger models need more on-accelerator memory (high-bandwidth memory, or HBM, on data-center parts, often loosely called VRAM) to run efficiently. When a model exceeds the capacity of a single accelerator, engineers must split it across multiple chips (for example with tensor or pipeline parallelism), which adds communication latency and engineering overhead.
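How quickly a model outgrows one device depends on what must be held in memory. This minimal sketch uses the common accounting of 2 bytes per parameter for 16-bit inference weights and about 16 bytes per parameter for full fine-tuning with mixed-precision Adam; it ignores activations and KV cache, reserves 10% headroom, and assumes model state shards evenly across devices (as in ZeRO- or FSDP-style training). The 96 GB and 192 GB capacities are hypothetical, not vendor specifications.

```python
import math

# Bytes of model state per parameter. The 16-byte figure is
# 2 (bf16 weights) + 2 (bf16 grads) + 4 (fp32 master weights)
# + 4 (fp32 Adam momentum) + 4 (fp32 Adam variance).
BYTES_PER_PARAM = {
    "inference_bf16": 2,
    "full_finetune_adam_mixed": 16,
}


def footprint_gb(n_params: float, mode: str) -> float:
    return n_params * BYTES_PER_PARAM[mode] / 1e9


def min_accelerators(n_params: float, mode: str, capacity_gb: float,
                     usable_fraction: float = 0.9) -> int:
    """Smallest device count whose pooled usable memory holds the model state."""
    usable = capacity_gb * usable_fraction
    return math.ceil(footprint_gb(n_params, mode) / usable)


for capacity in (96, 192):  # hypothetical per-device capacities in GB
    for mode in BYTES_PER_PARAM:
        print(capacity, mode, min_accelerators(70e9, mode, capacity))
```

For a 70B-parameter model, doubling per-device capacity from 96 GB to 192 GB cuts the full fine-tuning requirement from 13 devices to 7, and each device removed is one fewer participant in the cross-chip communication described above.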

As corroborating analyses suggest, companies are deeply focused on cost optimization and memory scaling for fine-tuning, and this is where high-memory accelerators can excel. If a platform like the MI355X can hold a larger share of a model's weights (and, during training, its gradients and optimizer state) in fast on-device memory than a competitor, it reduces reliance on offloading to slower host memory, leading to faster iterations and lower operational expenses.
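The cost of offloading can be estimated by comparing how long it takes to read every weight once, roughly what a single decode step requires, when part of the model spills into host memory. This is a simplified sketch: it assumes the two transfers do not overlap and ignores compute, and the 140 GB model, 5,000 GB/s device bandwidth, and 64 GB/s host link are hypothetical figures.

```python
def weight_pass_seconds(weight_gb: float, device_capacity_gb: float,
                        device_bw_gb_s: float, host_bw_gb_s: float) -> float:
    """Lower bound on time to read every weight once.

    Weights that fit stay in device memory; the remainder is streamed
    from host memory over the interconnect. No overlap is assumed.
    """
    resident = min(weight_gb, device_capacity_gb)
    offloaded = weight_gb - resident
    return resident / device_bw_gb_s + offloaded / host_bw_gb_s


# Hypothetical: 140 GB of weights, 5,000 GB/s on device, 64 GB/s host link.
for capacity in (100, 160):
    t = weight_pass_seconds(140, capacity, 5000, 64)
    print(f"capacity={capacity} GB -> {t * 1000:.0f} ms per pass")
```

Under these assumptions, spilling just 40 GB to the host stretches each pass from 28 ms to 645 ms, which is why fitting the model entirely in device memory matters so much.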

This pressure is causing a strategic bifurcation:

- The Hyperscalers: Continue to build massive, dedicated clusters for foundational training (often using proprietary or cutting-edge vendor hardware).

- The Enterprises: Focus heavily on fine-tuning and inference using custom, secured clusters (On-Prem/VPC) built around cost-effective, high-memory-density accelerators that challenge the established order.

Practical Implication: Shifting Budgets

For CTOs, the takeaway is clear: capital expenditure (CapEx) on memory-efficient specialized hardware is displacing exclusive reliance on operational expenditure (OpEx) for rented public cloud GPUs. If you plan to keep your proprietary models in-house, the priority becomes maximizing the return on that hardware through more efficient training runs.
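Whether owning beats renting comes down to a break-even calculation in which utilization is the decisive variable: cloud spend scales with hours used, while on-prem running costs do not. This minimal sketch ignores depreciation, financing, and price changes; the $300,000 purchase, $4,000 monthly running cost, and $40/hour cloud-equivalent rate are hypothetical.

```python
from typing import Optional


def breakeven_months(capex_usd: float, onprem_opex_per_month: float,
                     cloud_usd_per_hour: float, utilization: float,
                     hours_per_month: float = 730.0) -> Optional[float]:
    """Months until buying beats renting, or None if renting stays cheaper.

    utilization: fraction of the month the capacity is actually needed.
    """
    cloud_per_month = cloud_usd_per_hour * hours_per_month * utilization
    savings_per_month = cloud_per_month - onprem_opex_per_month
    if savings_per_month <= 0:
        return None
    return capex_usd / savings_per_month


for util in (0.7, 0.2):
    months = breakeven_months(300_000, 4_000, 40.0, util)
    print(f"utilization {util:.0%}: "
          f"{'never' if months is None else f'{months:.1f} months'}")
```

At 70% utilization the purchase pays for itself in about 18 months; at 20% it takes more than 13 years, longer than the hardware would plausibly stay in service.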

What This Means for the Future of AI Deployment

The convergence of these three factors—competitive hardware, hybrid deployment necessity, and economic pressure—points toward a future where AI infrastructure is highly specialized, deeply integrated, and location-agnostic.

1. Infrastructure Becomes Programmable (and Portable)

The future success of deploying an MI355X stack across SaaS, VPC, and On-Prem hinges on abstraction. We are moving toward a world where the AI workload is portable, not the physical server. Cloud-native principles (containers, Kubernetes, service meshes) will become mandatory for any serious enterprise AI deployment to ensure workloads run identically whether they are being served via a cloud provider's managed service or a rack server in the company basement.
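In Kubernetes, portability across accelerator vendors largely reduces to requesting the right extended resource: NVIDIA's device plugin advertises `nvidia.com/gpu` and AMD's advertises `amd.com/gpu`. The sketch below renders the same pod spec for either vendor; the pod name and container image are hypothetical, and a real cluster may also need node selectors or vendor-specific images (for example, separate ROCm and CUDA builds of the serving container).

```python
import json

# Extended-resource names advertised by the vendors' Kubernetes device plugins.
GPU_RESOURCE = {"nvidia": "nvidia.com/gpu", "amd": "amd.com/gpu"}


def inference_pod(name: str, image: str, vendor: str, gpus: int = 1) -> dict:
    """Build a minimal Pod manifest requesting `gpus` devices from `vendor`."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "containers": [{
                "name": "server",
                "image": image,
                # Extended resources are requested via limits.
                "resources": {"limits": {GPU_RESOURCE[vendor]: gpus}},
            }],
        },
    }


# JSON output can be piped to `kubectl apply -f -`.
print(json.dumps(
    inference_pod("llm-serve", "registry.example.com/llm-server:rocm", "amd"),
    indent=2,
))
```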

2. The Rise of the Sovereign AI Stack

Data sovereignty and regulatory compliance will continue to push high-value model development back behind corporate firewalls or into regulated private clouds. The ability of vendors to provide enterprise-ready guides that cover security and governance for these "sovereign AI stacks" will be a key differentiator. This means focusing less on global scale and more on secure, high-density, low-latency performance within defined geographic or regulatory boundaries.

3. Democratization Through Efficiency

While foundation models keep growing, newer hardware and software techniques (such as the memory-efficient fine-tuning discussed earlier) let smaller organizations achieve competitive results with smaller, specialized models. If an AMD solution provides significantly more memory per dollar, it lowers the barrier for a mid-sized enterprise to build a powerful, custom AI assistant without needing a tech giant's budget.
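LoRA (low-rank adaptation) is one widely used example of efficient fine-tuning; the text above does not name a specific technique, so this choice is an assumption. LoRA freezes a weight matrix W of shape d_out x d_in and trains a low-rank update B @ A, with B of shape d_out x r and A of shape r x d_in, so only r(d_out + d_in) parameters need gradients and optimizer state. The model shape below is hypothetical.

```python
def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one frozen d_out x d_in weight."""
    return rank * (d_out + d_in)


# Hypothetical shape: 32 layers, 4 adapted 4096x4096 attention
# projections per layer (query, key, value, output), rank 8.
layers, per_layer, d = 32, 4, 4096
full = layers * per_layer * d * d
lora = layers * per_layer * lora_params(d, d, rank=8)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.4%}")
```

Training well under 1% of these projection weights shrinks the gradient and optimizer-state memory proportionally, which is what puts custom models within reach of a modest on-prem or VPC cluster.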

Conclusion: Strategy Over Specification

The recent focus on guides detailing the deployment of advanced accelerators like the MI355X across SaaS, VPC, and On-Prem is a clear indicator of the enterprise mindset shift. The question is no longer "Can we run AI?" but rather, "How can we run AI securely, efficiently, and economically across every part of our business?"

Success in the next phase of AI adoption will not belong solely to those with the fastest chips, but to those with the most agile infrastructure strategy. Infrastructure architects must balance the raw performance of competitive accelerators against the operational nightmare of hybrid management, all while ensuring that every dollar spent on training yields maximum model improvement. The future of AI is flexible, financially conscious, and fundamentally hybrid.