The AI Influence Game: Why Big Tech is Pouring Millions into State Elections for 'Friendly' Lawmakers

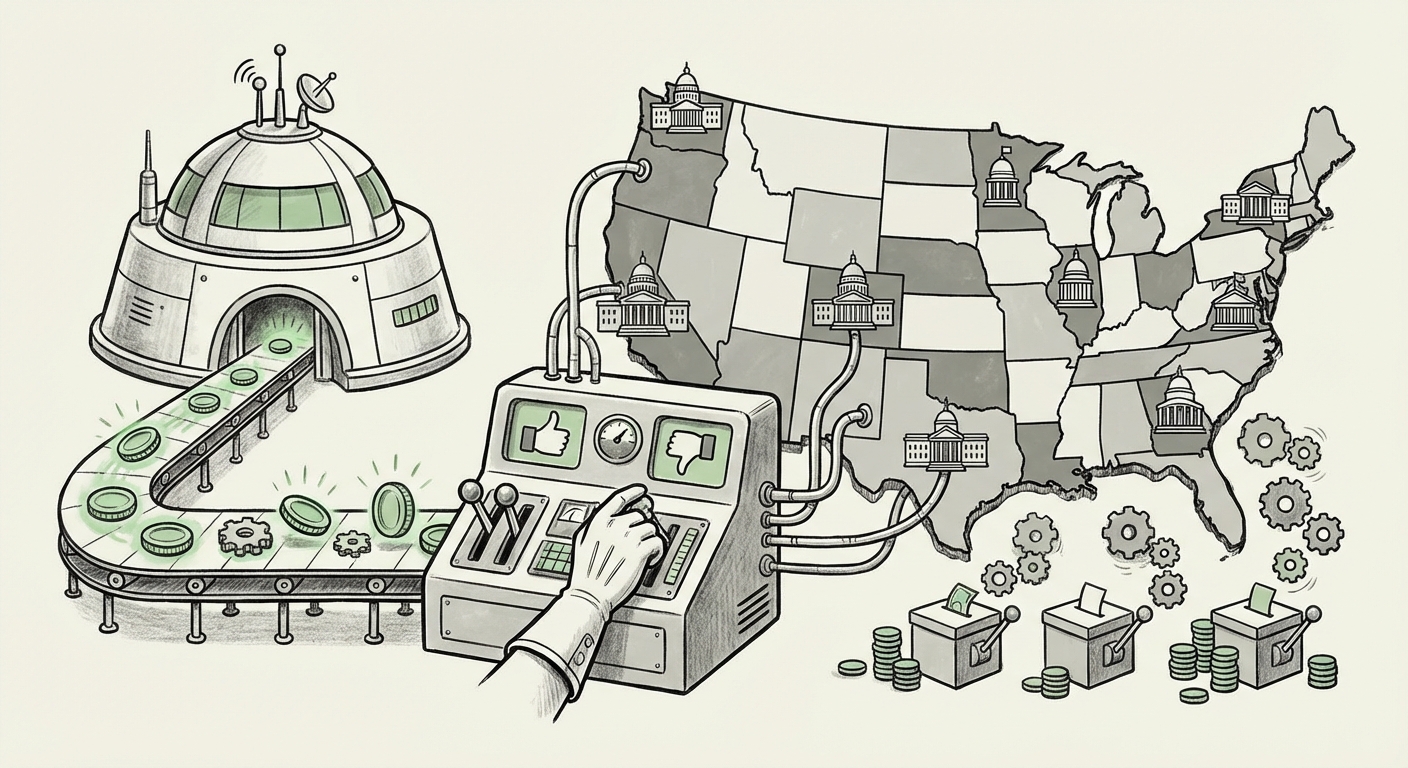

The world of Artificial Intelligence (AI) is often discussed in terms of algorithms, compute power, and breakthrough models. However, the real battleground for the future of AI adoption, deployment, and liability is rapidly shifting away from Washington, D.C. and into state capitals. The revelation that Meta is pouring a reported **\$65 million** into state-level elections to back politicians deemed "AI-friendly" is more than political maneuvering. It is a strong signal about where regulatory power actually resides in the age of generative AI.

For technologists and business leaders, this means that the rules governing how your next product launches, how your data is used for training, and what legal risks you assume might be decided by a state house race, not a Congressional vote. This deep dive analyzes this strategic pivot, explores the nature of the regulations being fought over, and projects the future implications for governance and innovation.

The Regulatory Pivot: Why States Over Federal Seats?

For years, tech lobbying focused primarily on securing favorable legislation or preventing overreach in Washington. While federal efforts, like proposed comprehensive AI bills or antitrust actions, remain crucial, they often get bogged down by partisan gridlock. Meanwhile, state legislatures are moving fast.

When we examine the legislative landscape, for example through the 2024 state-level AI bills tracked by the National Conference of State Legislatures, we see a flurry of activity. States are passing laws covering:

- Algorithmic Bias: Mandating audits for AI used in hiring, lending, or criminal justice.

- Data Privacy and Consent: Setting stricter rules on how personal data can feed proprietary models.

- Transparency and Labeling: Requiring disclosures when content is AI-generated.

These granular rules, while perhaps appearing minor next to a massive federal bill, are often the most immediate stumbling blocks for companies deploying AI at scale. Imagine a national rollout of a new medical diagnostic tool: if 10 states have conflicting rules on data provenance and liability waivers, the path to market becomes prohibitively complex. Meta’s strategy is to ensure that most of the states in that position elect leaders who favor innovation over immediate, sweeping restrictions.

Benchmarking the Investment: A New Era of Political Spending

Is \$65 million a lot for political spending? Absolutely. But its significance lies in its *location*. As investigative reports comparing tech industry spending on state and federal elections in 2023 and 2024 often highlight, tech spending has historically tilted heavily toward federal races. This strategic deployment at the state level suggests a calculated maturation of lobbying tactics. It implies that Big Tech has concluded that influencing the *implementers* and *early adopters* of technology policy (the Governors and State Assembly members) offers a better return on investment (ROI) than fighting endless national battles.

For the average voter, this means their state legislative races are now deeply intertwined with global AI development, often without that connection being overtly clear on the ballot.

What "AI-Friendly" Really Means in Practice

When a tech giant backs an "AI-friendly" politician, the term carries multiple meanings, extending far beyond just voting against regulation. It encompasses creating an entire socio-economic ecosystem designed to support the next generation of compute-intensive technologies.

1. The Regulatory Shield (The Obvious)

The most direct goal is preventing liability. If an AI makes an error, who pays? An AI-friendly politician is more likely to support "safe harbor" provisions or place the onus of liability on end-users or developers, rather than the platform providers themselves. They are also likely to oppose laws requiring expensive, mandatory, third-party bias audits.

2. The Infrastructure Carrot (The Economic Angle)

The "carrot" is often more effective than the "stick." To run cutting-edge AI, companies need massive amounts of power, water, and specialized real estate for data centers. This is where the AI and tech policy initiatives of the National Governors Association (NGA), along with related economic development news, become worth watching. An "AI-friendly" state government is one that readily grants tax abatements for new data centers, fast-tracks zoning permits for high-density computing facilities, and ensures the electrical grid can handle the enormous power demands.

This financial incentive is why we see technology companies actively partnering with states on workforce development. If the state government agrees to fund massive retraining programs focusing on cloud engineering and prompt optimization, skills that directly benefit the tech companies, the relationship becomes mutually beneficial, cementing political goodwill.

3. Shaping the Future Workforce

AI deployment hinges on a skilled local workforce. If state leaders are aligned with tech priorities, university curricula, vocational training programs, and K-12 STEM funding will naturally skew toward the skills needed to service Big Tech’s future pipelines. This creates long-term regulatory moats; as more state jobs and economic growth become dependent on the presence of large AI firms, challenging those firms politically becomes economically dangerous for local leaders.

Implications for the Future of AI Governance and Business

This decentralization of tech political influence has profound consequences that ripple across the entire technological future of the United States.

The Patchwork Quilt of AI Law

The primary outcome will be a highly fragmented regulatory landscape. Instead of a unified national standard (like GDPR in Europe), the U.S. will likely feature dozens of distinct regulatory zones. For a large AI developer, this means:

- High Compliance Overhead: Teams must track and adhere to specific state requirements regarding data ingestion and model transparency.

- Regulatory Arbitrage: Companies may subtly shift their most sensitive data processing or high-risk deployments to states with the most permissive laws, incentivizing *those* states to remain lightly regulated to attract business.

The Definition of 'Ethical AI' Becomes Localized

Ethics are not universal; they are cultural and political. What is considered acceptable bias in a hiring tool in one state might be strictly forbidden in another. When Meta invests to elect officials it deems "AI-friendly," it is essentially investing in localizing the definition of ethical AI to one that prioritizes rapid commercialization over precautionary measures.

For AI ethicists and researchers (a key audience for this analysis), the challenge intensifies. Instead of lobbying a single federal agency, advocacy groups must now engage in hundreds of local campaigns, often facing well-funded incumbent politicians supported by the full might of Big Tech’s newly channeled electoral spending.

For Businesses: A Call to Action for Strategic Engagement

This environment demands that businesses—not just Meta—re-evaluate their political strategies. If you are an AI startup or a mid-sized enterprise relying on AI for core functions, you cannot afford to ignore state races.

Actionable Insights:

- Map Your Regulatory Risk by State: Identify which states are leading the charge on legislation (e.g., high disclosure requirements vs. high data privacy standards). Prioritize engagement efforts in those specific districts where key votes are being held.

- Align with Economic Development Goals: Instead of solely focusing on regulatory critique, frame your AI deployments in terms of state benefits: job creation, infrastructure modernization, and local economic uplift. This aligns your goals with the incentives of the politicians Meta is supporting.

- Monitor Infrastructure Deals: Pay close attention to news regarding data center tax breaks and utility agreements. These deals are often the precursor to favorable regulatory alignment. If the chips and power are flowing to State X, expect legislation in State X to favor the companies that build those centers.

- Focus on Non-Electoral Influence: Participate actively in state-level advisory boards, technical working groups, and professional association roundtables (often organized through bodies like the NGA). Influence is often built incrementally through trusted technical advice provided to staff and officials, long before an election occurs.
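The first item above, mapping regulatory risk by state, can be started with something as simple as a scored checklist. The following is a minimal sketch, not a compliance tool: the state names, the three rule categories (taken from the kinds of laws described earlier in this article), and the equal weighting are all hypothetical placeholders that a real team would replace with its own legal research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StateRules:
    """Hypothetical checklist of rule categories a state may impose."""
    requires_bias_audit: bool
    requires_ai_labeling: bool
    strict_training_data_consent: bool


# Placeholder data only: these are NOT real states or real statutes.
RULES_BY_STATE = {
    "State A": StateRules(True, True, False),
    "State B": StateRules(False, True, False),
    "State C": StateRules(False, False, False),
}


def risk_score(rules: StateRules) -> int:
    """Count how many rule categories apply (equal weights are an assumption)."""
    return sum([
        rules.requires_bias_audit,
        rules.requires_ai_labeling,
        rules.strict_training_data_consent,
    ])


def rank_states(rules_by_state: dict[str, StateRules]) -> list[str]:
    """Return state names ordered from highest to lowest score, ties by name."""
    return sorted(
        rules_by_state,
        key=lambda name: (-risk_score(rules_by_state[name]), name),
    )


if __name__ == "__main__":
    for name in rank_states(RULES_BY_STATE):
        print(name, risk_score(RULES_BY_STATE[name]))
```

States at the top of the ranking are where engagement effort would be prioritized under this scheme. In practice the weights would differ by product, since a hiring tool is more exposed to audit mandates than to labeling rules.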

The Future: A Governance Landscape Shaped by Direct Investment

Meta’s \$65 million expenditure is a clear indicator that the era of passive compliance is over. Big Tech is moving from reacting to laws to proactively engineering the political environment that creates the laws. This is a sophisticated, well-capitalized strategy designed to ensure that the foundation upon which future AI services are built is stable, predictable, and—crucially—favorable to the incumbents driving the technology forward.

The key takeaway for the industry is that the future of AI is no longer just about writing good code; it’s about winning local policy battles. The competition for AI dominance will now be fought not just in the lab, but in the state legislative chambers, financed by tens of millions of dollars aimed at ensuring that the governors and lawmakers deciding on the future of algorithms are already bought into the vision of an AI-first world.