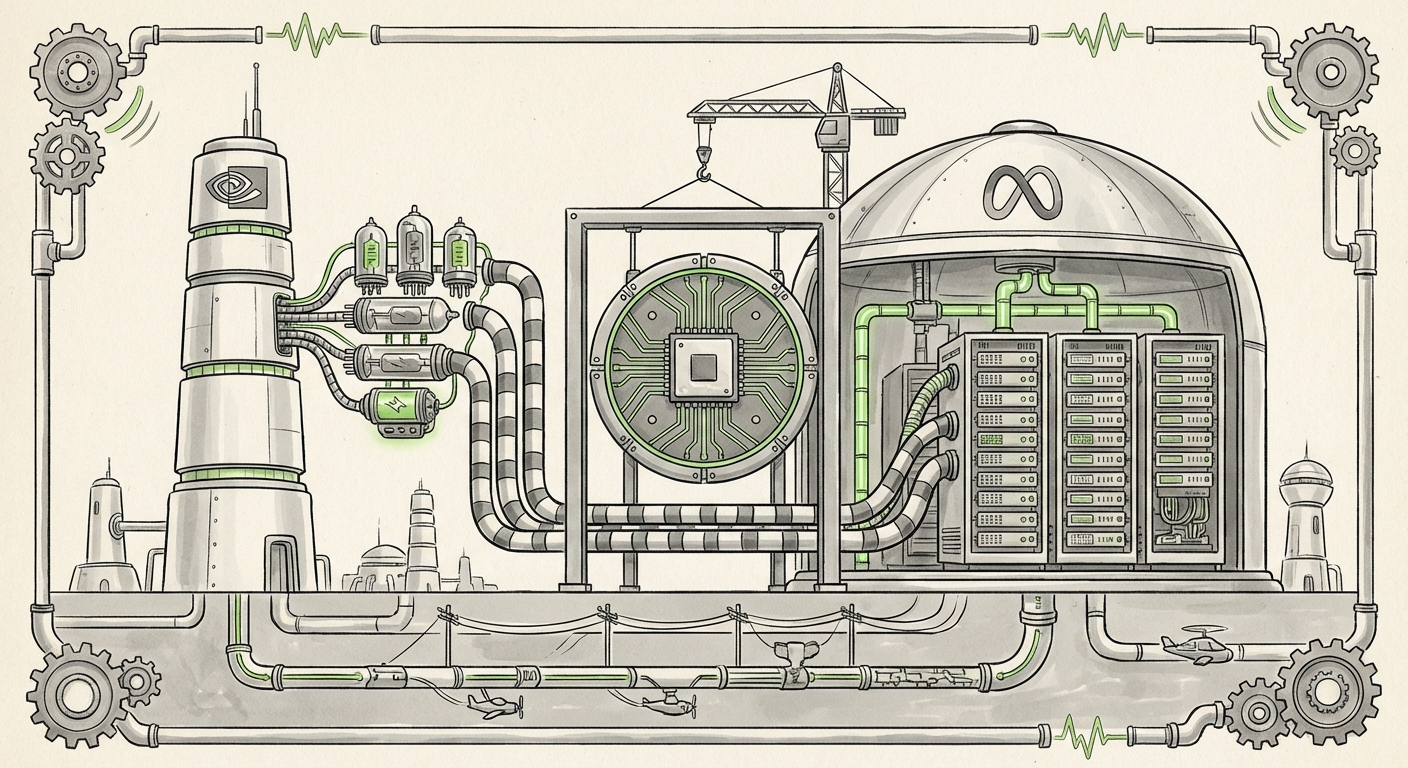

The Unseen War: Why Nvidia Selling CPUs to Meta Signals the Next Great Data Center Revolution

The artificial intelligence landscape is often visualized as a race for faster, more powerful graphics processing units (GPUs)—the essential engines of modern deep learning. While the GPU remains the undisputed champion of training massive models, recent industry movements signal that the contest is escalating far beyond the graphics card itself. The news that Meta, the parent company of Facebook, has signed a comprehensive, multiyear deal with Nvidia that explicitly includes standalone Nvidia processors (CPUs) is not just a footnote; it is a seismic event redefining the core architecture of the future data center.

The Evolution: From GPU Supplier to Full-Stack Partner

For years, the relationship between hyperscalers like Meta and Nvidia was largely transactional: Meta bought tens of thousands of A100s or H100s to feed its AI needs. The central processing unit (CPU), the general workhorse of any server, was often sourced from competitors like Intel or AMD.

Nvidia’s inclusion of its own CPUs in this deal, likely leveraging its high-performance Grace architecture, transforms this relationship. Nvidia is no longer just selling the specialized engine; it is now providing the entire chassis and drivetrain for Meta’s AI supercomputers. This is Nvidia’s strategic counterpunch against growing market pressures.

Understanding the Competitive Pressure (The Arms Race Heats Up)

Why the sudden push into CPUs? Because the competition is no longer being fought on the GPU front alone. Rivals are innovating rapidly, often by integrating CPU and GPU capabilities into a single chip design, which AMD calls an Accelerated Processing Unit (APU).

- The AMD Challenge: Competitors like AMD have aggressively positioned their offerings, such as the Instinct MI300 series, whose MI300A variant combines CPU cores and GPU compute units on the same package. This level of integration promises efficiency gains and potentially simpler programming for certain AI workloads. By securing a deal that includes its own CPUs, Nvidia pushes customers to consider an *entire Nvidia ecosystem* (GPU + CPU + Networking) rather than just swapping out the GPU module for an AMD one.

- The Silicon Independence Mandate: The great irony of the AI era is that while companies like Meta need Nvidia’s cutting-edge GPUs today, they fiercely desire independence tomorrow to control costs and future innovation. This leads us to the second critical trend.

The Hyperscaler Paradox: Buying Today, Building Tomorrow

The Meta-Nvidia deal underscores a fundamental reality of the AI gold rush: demand currently outstrips supply, and performance is king. When training the next frontier model, every day of downtime or every percentage point of inefficiency can translate into millions of dollars lost and crucial market time ceded. For now, Nvidia holds the undisputed lead in the top-tier performance needed for foundational model training.

However, this dependence is likely temporary, and major tech companies are wary of single points of failure. This is where the broader trend of custom silicon development comes into sharp focus.

The In-House Silicon Strategy

Companies like Google (with its TPUs) and Amazon (with Trainium and Inferentia) have long invested billions into designing their own chips tailored precisely to their workloads. Meta is no different, pouring resources into its own custom AI accelerators, the Meta Training and Inference Accelerator (MTIA) line.

If Meta is buying *more* from Nvidia *now*, it signifies two things simultaneously:

- Nvidia’s latest offerings (such as the Blackwell-generation B200 and its successors) are so far ahead that they are a necessary evil for immediate deployment.

- The custom chips Meta is developing are not yet mature enough to handle the heaviest, cutting-edge training demands.

The Nvidia CPU component of the deal acts as a bridge. It allows Meta to standardize more of its data center footprint on Nvidia’s hardware and software stack (built around CUDA), making system complexity easier to manage while its internal custom chips mature for specific, high-volume inference tasks down the line.

Nvidia’s Masterstroke: Controlling the Full Stack Fabric

Nvidia’s ambition is not merely to sell GPUs; it is to own the entire high-speed data center environment. This strategy relies heavily on integrating software and interconnectivity alongside hardware.

Beyond the Chip: The Power of BlueField DPUs

The inclusion of CPUs is closely tied to Nvidia’s lower-level fabric components, such as its BlueField Data Processing Units (DPUs). A DPU is essentially a specialized computer on a card that handles the "overhead" tasks in a server, such as networking, security, and storage management, freeing the main CPU and GPU to concentrate on AI calculations.

When Nvidia sells a full stack (CPU, DPU, and GPU, linked by its NVLink interconnect and its high-speed InfiniBand or Ethernet networking), it creates a seamless, highly optimized computing platform. For a customer like Meta, this integrated approach means faster scaling and less time spent troubleshooting compatibility issues across different vendors.

For Nvidia, this means lock-in. Once infrastructure is optimized for the Grace CPU and the CUDA/NVLink ecosystem, swapping just one component (such as replacing the CPU with an AMD or Intel equivalent) often forces a costly, time-consuming re-optimization of the entire system. Nvidia is effectively making it far more expensive to leave its integrated ecosystem.

Practical Implications for the AI Ecosystem

This deepening entanglement between the world’s leading AI platform builder (Meta) and the world’s leading AI hardware provider (Nvidia) has immediate repercussions across the industry.

For Technology Leaders and Architects: Standardizing on Integrated Solutions

Data center architects must now face the reality that "best-of-breed" component swapping is becoming less viable than adopting a cohesive, vendor-led architecture. If your AI cluster is built around Nvidia, pairing its Grace CPU with its Hopper or Blackwell GPUs offers tightly coupled performance and simplified management that mixed-vendor builds struggle to match.

This makes a deep understanding of the software layers essential, because the hardware advantage is increasingly measured by how well the components communicate over proprietary interconnects.

For Investors: Assessing Future Revenue Streams

Investors tracking the hardware sector should view this trend as a validation of Nvidia’s ecosystem strategy over pure component sales. Future revenue growth will be less dependent on the raw volume of GPUs sold and more on the adoption of their holistic data center solutions, including CPUs, networking gear, and software subscriptions.

Conversely, this signals immense pressure on competitors like Intel and AMD to prove that their more loosely coupled CPU/GPU solutions can still deliver the latency and throughput that next-generation AI training requires and rival Nvidia’s tightly coupled offerings.

For Society: The Acceleration of AI Capabilities

From a societal perspective, the acceleration of infrastructure acquisition by hyperscalers points to one likely outcome: AI capabilities will advance faster than previously expected. Meta’s commitment to massive multiyear spending (as detailed in its financial reports on infrastructure CapEx) locks in resources for the rapid development of multimodal models, advanced robotics control systems, and increasingly sophisticated generative content engines.

The quicker these giants can provision compute, the quicker they can iterate on foundational models, shrinking the timeline for deploying powerful AI tools into consumer and enterprise products.

Actionable Insights: Navigating the New Hardware Reality

The era of discrete component purchasing is yielding to the era of integrated platforms. Businesses looking to build or upgrade their AI infrastructure must adjust their strategies accordingly:

- Audit for Stack Lock-in: Before purchasing new hardware, assess your team's current dependence on the CUDA software ecosystem. If you are heavily invested in CUDA, adopting Nvidia’s CPU offerings (Grace) is likely to be simpler than integrating a competitor’s CPU, despite potentially lower upfront costs elsewhere.

- Prioritize Software Enablement: Invest in engineering talent capable of managing complex, full-stack environments. The future competitive edge won't just be in who has the chips, but who can extract the most performance from the complex interactions between the CPU, DPU, and GPU.

- Factor in the Custom Chip Timeline: Assume that while hyperscalers are buying heavily from Nvidia today, they are preparing for a future of reduced reliance. Long-term enterprise strategy should involve piloting workloads on emerging open standards or, if large enough, beginning preliminary assessment of custom silicon integration feasibility.
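As a rough first pass at the lock-in audit described above, a team could count CUDA-specific markers in its own codebase. The sketch below is hypothetical: the marker list, the file extensions, and the function names (`audit_text`, `audit_tree`) are illustrative assumptions, not an Nvidia tool. A regex scan is only a proxy for real dependence; it will miss CUDA pulled in indirectly through frameworks and prebuilt libraries.

```python
import os
import re
import sys
from collections import Counter

# Hypothetical, non-exhaustive patterns that suggest direct CUDA usage.
CUDA_MARKERS = {
    "kernel_qualifier": re.compile(r"__global__"),
    "cuda_header_include": re.compile(r"#include\s*<cuda(?:_runtime)?\.h>"),
    "torch_cuda_api": re.compile(r"\btorch\.cuda\b"),
    "cupy_import": re.compile(r"^\s*(?:import|from)\s+cupy\b", re.MULTILINE),
    "cuda_device_string": re.compile(r"""["']cuda(?::\d+)?["']"""),
}

# Assumed set of source-file extensions worth scanning.
SOURCE_EXTENSIONS = {".py", ".cu", ".cuh", ".cpp", ".cc", ".h", ".hpp"}


def audit_text(text):
    """Count occurrences of each CUDA marker in a single source string."""
    counts = Counter()
    for name, pattern in CUDA_MARKERS.items():
        counts[name] += len(pattern.findall(text))
    return counts


def audit_tree(root):
    """Walk a directory tree and total the CUDA markers across source files."""
    totals = Counter()
    for dirpath, _dirnames, filenames in os.walk(root):
        for filename in filenames:
            ext = os.path.splitext(filename)[1].lower()
            if ext not in SOURCE_EXTENSIONS:
                continue
            if ext in {".cu", ".cuh"}:
                # Dedicated CUDA source files are a strong lock-in signal.
                totals["cuda_source_files"] += 1
            path = os.path.join(dirpath, filename)
            with open(path, encoding="utf-8", errors="ignore") as handle:
                totals.update(audit_text(handle.read()))
    return totals


if __name__ == "__main__":
    root = sys.argv[1] if len(sys.argv) > 1 else "."
    for marker, count in sorted(audit_tree(root).items()):
        print(f"{marker}: {count}")
```

Saved under a hypothetical name such as `cuda_audit.py`, it could be run as `python cuda_audit.py path/to/repo`. High counts of hand-written kernels and direct `torch.cuda` calls would suggest deeper CUDA dependence than code that only touches the GPU through a framework's device abstraction.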

Conclusion: The Platform Wars Have Begun

The Nvidia-Meta deal is a landmark indicator that the hardware war for AI dominance has matured. It is no longer just about the single best chip; it is about the most compelling, integrated, and scalable *platform*. Nvidia is brilliantly reinforcing its moat by turning its hardware dominance into a comprehensive system offering, so that building a world-class AI data center increasingly means signing up for the entire Nvidia vision.

For Meta, this is a high-stakes necessity—securing today’s performance to win tomorrow’s AI race, even as they quietly continue to forge their path toward silicon independence. The data center architecture is fundamentally changing, moving away from loosely coupled components toward tightly integrated, intelligent fabrics. The next generation of AI progress will be built on this unified foundation, whether you are using the vendor’s bundled solution or trying to assemble your own custom symphony.