The Full Stack AI Race: Why Meta's CPU-GPU Deal with Nvidia Redefines Data Center Strategy

The modern Artificial Intelligence revolution is built on massive data centers packed with specialized hardware. For years, the narrative has been dominated by the Graphics Processing Unit (GPU), the engine powering large language model (LLM) training. However, recent shifts in vendor partnerships signal that the industry is moving beyond simply buying the best accelerator; it is now focused on optimizing the entire computing stack.

Meta (the parent company of Facebook) has signed a sweeping, multiyear agreement with Nvidia that covers not just Nvidia’s industry-leading GPUs but also, significantly, standalone Nvidia CPUs. This is far more than a standard hardware purchase. It is a strategic maneuver that validates Nvidia’s aggressive diversification strategy and forces competitors to rethink their own data center offerings. As an AI technology analyst, I see this development as a critical juncture defining the next five years of enterprise computing architecture.

The End of Siloed Hardware: Optimization Across the Stack

Historically, hyperscalers like Meta built custom infrastructure by pairing best-in-class components: generally, the leading accelerators (GPUs) from one vendor with the leading general-purpose CPUs (from Intel or AMD) for everything else, including data handling, orchestration, and lower-priority tasks. This new deal suggests that the friction points between the CPU and GPU (data bottlenecks, communication latency, and software incompatibility) are now costing too much performance in the age of trillion-parameter models.

When Meta integrates Nvidia’s CPUs (like the Grace line) alongside Nvidia’s GPUs, it is betting on a unified architecture. The goal is "full-stack efficiency."

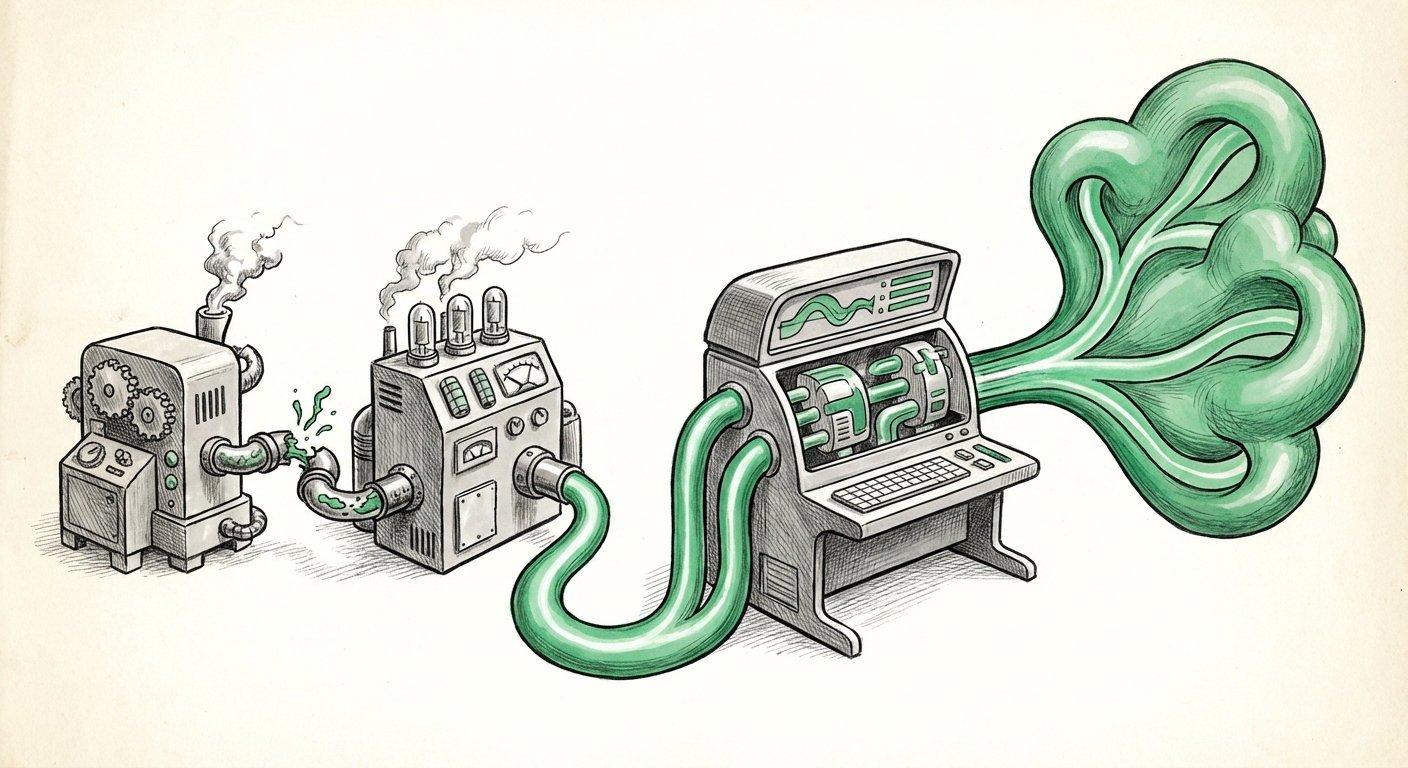

For the non-technical reader: Imagine building a race car. You need the fastest engine (the GPU for AI math), but if the chassis, the fuel lines, and the steering wheel (the CPU and supporting parts) are made by different companies that don't perfectly fit together, the car won't perform at its peak. Meta is moving toward buying the engine, chassis, and steering wheel from the same top-tier manufacturer (Nvidia) to ensure every part works together seamlessly for maximum speed in training AI.

Validating Nvidia’s Diversification: The Rise of Grace

Nvidia’s push into CPUs is no longer a side project; it is a core defense mechanism. As we track corroborating market signals, the viability of this move rests on the performance of products like the Grace CPU Superchip. Analysts closely watch benchmarks comparing Grace against established market leaders like AMD’s EPYC processors to determine if Nvidia’s integrated approach provides a genuine technical lead, not just a sales advantage.

If the performance gains from unified memory and high-speed interconnects like NVLink, which links the CPU and GPU at far higher bandwidth than a standard PCIe connection, outweigh the benefits of using a different vendor’s specialized CPU, then Nvidia has successfully used its hardware integration and its software ecosystem (CUDA) to lock customers into its entire product line.
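To make the interconnect argument concrete, here is a minimal back-of-envelope sketch in Python. It assumes a simplified model in which data transfer and computation do not overlap, and the two bandwidth figures are illustrative placeholders chosen for this example, not measurements from the deal or from any vendor benchmark. The names `Link`, `transfer_seconds`, and `gpu_utilization` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    name: str
    bandwidth_gb_per_s: float  # assumed sustained bandwidth in one direction


def transfer_seconds(payload_gb: float, link: Link) -> float:
    """Time to move payload_gb over the link, ignoring latency and protocol overhead."""
    return payload_gb / link.bandwidth_gb_per_s


def gpu_utilization(compute_s: float, payload_gb: float, link: Link) -> float:
    """Share of each step spent computing when transfer and compute do not overlap."""
    return compute_s / (compute_s + transfer_seconds(payload_gb, link))


# Illustrative assumptions only: a generic PCIe-class link versus a much
# faster coherent CPU-GPU link. Substitute measured numbers for real planning.
PCIE_CLASS = Link("PCIe-class link", 64.0)
COHERENT_LINK = Link("coherent CPU-GPU link", 450.0)

if __name__ == "__main__":
    for link in (PCIE_CLASS, COHERENT_LINK):
        util = gpu_utilization(compute_s=0.5, payload_gb=32.0, link=link)
        print(f"{link.name}: {util:.0%} of step time spent computing")
```

Under these assumed numbers, the slower link leaves the accelerator computing only half the time, while the faster link pushes that share close to 90 percent. Real systems overlap transfers with compute, so the gap in practice depends heavily on the workload.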

The Competitive Firefight: Fending Off AMD and Intel

The primary driver for this aggressive integration by Nvidia is the intense pressure exerted by its rivals. The phrase "fend off growing competition" in the initial report is telling. AMD, in particular, has made massive strides with its Instinct accelerators (like the MI300 series), which are designed specifically to challenge Nvidia’s GPU dominance in AI training.

When competitors launch compelling alternative accelerators, the incumbent must raise the stakes. By bundling CPUs, Nvidia is essentially offering a "better deal" that isn't just about raw processing power but about total system deployment ease and guaranteed compatibility.

The Counter-Move by Competitors

The market is watching closely to see how adoption of AMD’s accelerators is tracking. Even if enterprise adoption of AMD’s MI300X chips accelerates significantly, Meta’s deal gives Nvidia a massive volume commitment for its full stack, insulating it from immediate swings in the accelerator market.

Intel is also fighting back, pushing its Xeon CPUs and specialized AI accelerators (like Gaudi). This competition ensures that while Nvidia gains ground with Meta, the overall market for compute remains highly contested, which keeps the pace of innovation high.

Meta’s Strategic Calculus: Custom Silicon vs. Partnership Power

Meta has long championed a hybrid approach, developing custom in-house silicon, such as its Meta Training and Inference Accelerator (MTIA) chips, intended to reduce reliance on external vendors. This Nvidia deal, therefore, raises key strategic questions:

- Immediate Capacity vs. Long-Term Independence: Is Meta trading away some hardware independence to secure the massive compute resources needed right now to train the next generation of Llama models? The race to frontier AI capability is urgent enough that any delay carries a steep competitive cost.

- Stack Flexibility: Does this partnership signal a temporary consolidation of power, where Meta uses Nvidia’s full stack to achieve state-of-the-art results quickly, while continuing to refine its in-house custom chips for specialized, high-volume inference tasks later on?

This dynamic—between buying integrated best-in-class solutions and designing proprietary solutions—is the defining tension for every hyperscaler today.

The Software Lock-In: The True Value of the Full Stack

The most profound implication of bundling GPUs and CPUs is the reinforcement of Nvidia’s greatest moat: CUDA and its expansive software ecosystem.

For developers, managing a system where the general processor and the specialized accelerator speak the same language (via Nvidia’s libraries and optimized drivers) drastically reduces development time and complexity. If Meta is running its entire operational environment on a stack where the CPUs are optimized to feed data directly and efficiently to the GPUs via proprietary high-speed links, the cost of switching to any alternative configuration (an AMD CPU paired with an Nvidia GPU, for example) becomes astronomical.

In simpler terms: CUDA is the secret sauce. By selling the CPU and GPU together, Nvidia ensures that the customer is fully immersed in the CUDA environment, making it incredibly difficult to swap out one component without rewriting significant amounts of optimized code.

Future Implications: Actionable Insights for the AI Economy

This deal is not just about Meta; it sets a blueprint for how high-performance AI will be purchased and deployed across the next decade. Here are the actionable insights derived from this trend:

1. For Enterprise Adopters: Prioritize the Stack, Not Just the Chip

Businesses looking to deploy custom AI models must shift their procurement focus. The fastest component is useless if the communication framework is slow. When evaluating infrastructure purchases, ask: How seamlessly do the central processing units communicate with the AI accelerators? Solutions offering integrated, vendor-optimized stacks (whether from Nvidia, or potentially from future integrated offerings by AMD or Intel) will likely offer superior performance-per-dollar for complex workloads.
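One way to frame that procurement question is to compare options on effective throughput per dollar rather than on peak accelerator speed. The Python sketch below is a simplified model under stated assumptions: `StackOption`, its fields, and the example figures are all hypothetical, and `stall_fraction` stands in for whatever measured share of time the accelerator spends waiting on the CPU or interconnect.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class StackOption:
    name: str
    peak_throughput: float  # e.g. samples/s if the accelerator never waits
    stall_fraction: float   # share of wall-clock time spent waiting on data (0..1)
    cost_usd: float         # total system cost, not just the accelerator


def effective_throughput(option: StackOption) -> float:
    """Throughput after discounting time lost to CPU-GPU communication stalls."""
    return option.peak_throughput * (1.0 - option.stall_fraction)


def throughput_per_dollar(option: StackOption) -> float:
    return effective_throughput(option) / option.cost_usd


def rank(options: Iterable[StackOption]) -> List[StackOption]:
    """Best value first."""
    return sorted(options, key=throughput_per_dollar, reverse=True)


if __name__ == "__main__":
    # Made-up figures: same accelerator, different surrounding stack.
    mixed = StackOption("mixed-vendor", 1000.0, 0.30, 100_000.0)
    integrated = StackOption("integrated", 1000.0, 0.10, 120_000.0)
    for option in rank([mixed, integrated]):
        print(option.name, f"{throughput_per_dollar(option):.4f}")
```

With these invented inputs, the integrated option wins despite costing 20 percent more, because it wastes less accelerator time. The conclusion flips if the stall gap is smaller or the price premium larger, which is why the stall figure needs to come from benchmarks on the buyer's own workload.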

2. For Startups and Developers: Embrace Portability (Where Possible)

While the lure of the optimized stack is strong, developers must remain wary of deep platform lock-in. Investing heavily in code that relies solely on proprietary interconnects might offer speed now, but limits future migration options. The industry needs better, open-standard interconnectivity to foster true competition beyond the GPU vendors.
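A common way to limit lock-in is to keep vendor-specific calls behind a thin interface so that application code never touches them directly. The following is a minimal sketch of that pattern in plain Python. The `Backend` protocol, the registry, and the backend names are hypothetical illustrations, not any vendor’s API, and the pure-Python fallback exists only to show the shape of the design.

```python
from typing import Callable, Dict, List, Protocol, Sequence

Matrix = List[List[float]]


class Backend(Protocol):
    name: str

    def matmul(self, a: Matrix, b: Matrix) -> Matrix: ...


class ReferenceBackend:
    """Slow pure-Python fallback that runs on any machine."""

    name = "reference"

    def matmul(self, a: Matrix, b: Matrix) -> Matrix:
        # zip(*b) iterates over the columns of b.
        return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


# Vendor-optimized backends would register themselves here at import time.
_REGISTRY: Dict[str, Callable[[], Backend]] = {"reference": ReferenceBackend}


def get_backend(preferred: Sequence[str]) -> Backend:
    """Return the first available backend from the preference list."""
    for name in preferred:
        factory = _REGISTRY.get(name)
        if factory is not None:
            return factory()
    return ReferenceBackend()


if __name__ == "__main__":
    # "vendor_a" is a hypothetical accelerated backend that is not installed here.
    backend = get_backend(["vendor_a", "reference"])
    print(backend.name, backend.matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

Application code written against `Backend` can move to a different accelerator by registering a new implementation, at the cost of some performance that a fully vendor-tuned path might have delivered.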

3. For Investors: Look Beyond GPU Revenue

Investors tracking Nvidia must now view the company as a holistic infrastructure provider, not just an accelerator maker. Revenue streams from Grace CPUs and networking gear (InfiniBand/Spectrum-X) are now crucial indicators of Nvidia’s long-term market resilience against competitors who are trying to chip away at its GPU dominance.

Corroborating Context for Deeper Understanding

To fully appreciate the competitive environment driving this partnership, analysts look at key indicators:

- The technical comparison between Nvidia’s Grace CPU and competing server CPUs indicates whether Nvidia’s hardware is ready (e.g., technical deep-dives on Nvidia Grace CPU performance found in semiconductor publications).

- Understanding Meta's long-term vision helps frame this deal as acceleration versus permanent dependence (e.g., reporting on Meta AI infrastructure strategy published by leading tech news outlets).

- The threat posed by rival accelerators directly explains Nvidia's defensive bundling strategy (e.g., analysis of the competitive impact of the AMD MI300 deployment).

Conclusion: The Age of the Unified AI Engine

Meta’s deal with Nvidia, encompassing both GPUs and CPUs, is a powerful declaration that the era of component shopping for AI infrastructure is giving way to the era of the unified computing platform. Speed, efficiency, and the ability to scale massive foundational models demand a level of hardware harmony that siloed purchasing cannot achieve. The deal secures a massive win for Nvidia, confirming the strategic necessity of its CPU push, while simultaneously raising the bar for every other player in the high-stakes race to build the world’s most powerful AI.