The Trust Economy: Why Perplexity Ditching Ads Signals a Major AI Search Revolution

In the fast-moving world of Artificial Intelligence, business models are often treated as an afterthought—something to be patched on once the technology is proven. But a recent, bold move by AI startup Perplexity is forcing the entire industry to rethink this approach. Perplexity, a rising star in the domain of conversational search powered by large language models (LLMs), has decided to drop advertising from its platform, explicitly branding itself as an "accuracy business."

This decision is far more than a mere pricing adjustment; it is a direct challenge to the decades-old foundation upon which incumbent search engines like Google and Microsoft Bing have built their empires: targeted advertising. For analysts and strategists, this moment highlights a fundamental tension in the future of AI: can an answer engine survive on precision and trust alone, or is advertising revenue the necessary fuel for the massive computational costs of LLMs?

The Age-Old Conflict: Utility vs. Monetization

To understand the gravity of Perplexity's decision, we must first appreciate the economic landscape of AI search. Serving a conventional web search is comparatively cheap. Generating a complex, synthesized answer with a state-of-the-art LLM, which requires inference on large GPU clusters, is far more expensive per query. This operational cost requires a robust revenue stream.

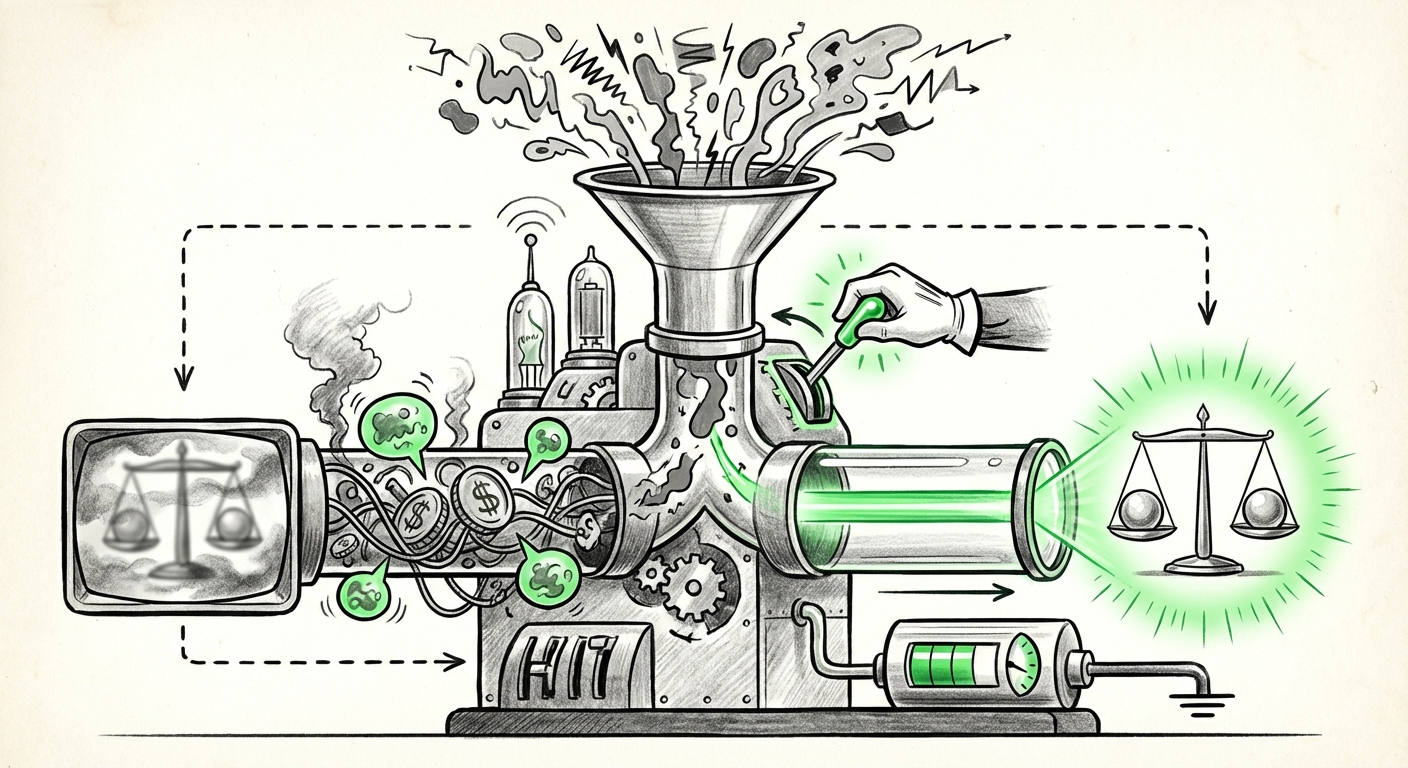

For more than two decades, advertising has solved this problem beautifully for traditional search. The system works because the user’s *query* signals intent, and advertisers pay to appear alongside it. However, Perplexity argues that when an AI synthesizes information, injecting ads directly into the resulting answer inherently compromises the answer's integrity. If a search engine is paid to prioritize one outcome over another, it ceases to be a neutral, objective source of truth.

The Cost of Being Right (And Free)

When Perplexity pulls ads, it is betting that users are willing to pay—either directly through subscriptions or indirectly through high engagement with a superior, ad-free experience—for guaranteed factual rigor. This bet directly confronts the established giants, particularly as they integrate generative AI into their own platforms.

Google’s Search Generative Experience (SGE), for example, is designed to keep users within the Google ecosystem, weaving generative answers around existing ad units. This creates a difficult scenario for the user:

- The SGE Dilemma: The user receives a fast, summarized answer, but the line between sponsored links and organic synthesis is blurred.

- The Perplexity Model: The user pays (or uses the free tier supported by lower-tier models) for an answer that is explicitly decoupled from commercial influence.

The essential question guiding our analysis is whether consumer demand for truth outweighs consumer aversion to paying for digital information. Given the economic hurdles facing smaller players, it is clear that many startups cannot afford the luxury of high-cost, ad-free operation indefinitely. Perplexity is testing the viability of the subscription-first, trust-as-a-feature model.

The Market Bifurcation: Two Paths for AI Search

This development solidifies a growing split in the AI information retrieval market. We are moving away from a single, monolithic search experience toward two distinct tiers based on user intent and tolerance for commercial influence.

Path 1: The Integrated Giant (Ad-Supported Synthesis)

Led by Google, this path seeks to modernize the existing, highly profitable advertising machine. The challenge here is both technical and ethical: how do you ensure that an LLM doesn't subtly prioritize answers from clients who pay for premium placement? If an SGE answer defaults to recommending a specific brand of vacuum cleaner because that brand bought placements in the underlying ad auction, the answer's utility plummets. Analysts studying this dynamic note that incumbents must devise ad placements that feel less intrusive than traditional banner ads, likely through subtle integration into the synthesized summary itself.

Path 2: The Accuracy Business (Subscription-Driven Trust)

Perplexity is firmly on this second path. By removing ads, they are prioritizing data quality and user experience above immediate monetization. This strategy relies heavily on proving that their premium offering (Perplexity Pro) is significantly better than the ad-ridden free alternatives. If the accuracy gap widens, users who rely on AI for critical tasks—research, learning complex subjects, or professional decision-making—will migrate to the paid, clean experience.

This model is entirely dependent on user adoption and retention. If users see only marginal improvement over free models, subscription revenue will not cover the high LLM inference costs, forcing Perplexity back toward the ad dilemma it just escaped.
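The dependency between subscription revenue and inference cost can be made concrete with a small break-even calculation. The sketch below is illustrative only: the function names and every figure in it (the monthly fee, the cost per query, the usage level) are hypothetical assumptions, not Perplexity's actual numbers.

```python
def monthly_margin_per_subscriber(price, queries_per_month, cost_per_query):
    """Subscription fee minus inference cost for one subscriber in one month."""
    return price - queries_per_month * cost_per_query


def break_even_queries(price, cost_per_query):
    """Monthly query count at which one subscriber's inference cost equals the fee."""
    if cost_per_query <= 0:
        raise ValueError("cost_per_query must be positive")
    return price / cost_per_query


if __name__ == "__main__":
    # Hypothetical inputs: a 20.00 monthly fee and 0.02 inference cost per query.
    fee, unit_cost = 20.0, 0.02
    print("Break-even queries per month:", break_even_queries(fee, unit_cost))
    for usage in (300, 1000, 2000):
        margin = monthly_margin_per_subscriber(fee, usage, unit_cost)
        print(f"{usage} queries -> margin per subscriber: {margin:.2f}")
```

Under these assumed inputs, a subscriber who queries heavily enough turns from a source of margin into a net cost, which is why retention alone is not sufficient: the fee also has to exceed what the average subscriber consumes in inference.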

The Future Implication: Redefining Consumer Expectations

For society and the wider business ecosystem, Perplexity’s stance forces a necessary conversation about the ethics of information access. If the best, most accurate AI results are locked behind a paywall, does this create a new form of information inequality?

The Trust Deficit and Hallucination Costs

The core business advantage Perplexity is pursuing is *trust*. Generative AI is prone to "hallucinations"—confidently stated falsehoods. Every time a user encounters a hallucination in a free, ad-supported search tool, their overall trust in AI as a reliable source erodes. Conversely, a tool that rarely errs, even if it costs money, builds capital in the currency of trust.

Research into user behavior suggests that users have little patience for inaccuracy, especially when a tool claims to be authoritative. For simple tasks, users may tolerate errors; for complex, knowledge-based tasks, they demand verification. Perplexity is aiming for the latter demographic.

Practical Implications for Businesses

For businesses relying on AI for market research, trend spotting, or content generation, the choice of search engine directly impacts output quality:

- Risk Mitigation: Companies using ad-supported AI tools for foundational research face higher internal validation costs to correct potential hallucinations or biased sourcing driven by hidden ad incentives.

- Benchmarking Quality: Ad-free tools like Perplexity become the crucial benchmark for true, unvarnished information retrieval, informing procurement decisions for enterprise AI solutions.

- The Future of Sourcing: If LLMs become the primary interface for information discovery, the underlying sourcing methodology—ad-driven vs. trust-driven—will define the integrity of the data used across entire industries.

Actionable Insights for Navigating the New Search Landscape

As analysts, investors, and consumers, we cannot afford to be passive observers of this transition. Here are actionable insights derived from this strategic pivot:

1. Demand Transparency on Monetization: Users should actively question the business model behind any AI tool they use. If a service is "free," understand what data or attention is being sold instead. Look for explicit statements (like Perplexity's) detailing how revenue streams are separated from answer generation.

2. Test the Paywall Value Proposition: Businesses should run parallel experiments. Use the free tiers of both Google SGE and Perplexity for complex tasks, then test the paid versions. Quantify the time saved correcting errors versus the subscription cost. This cost-benefit analysis will define the ROI of "accuracy" for your specific needs.

3. Prepare for Subscription Friction: The shift away from universal "free" internet tools is underway. Whether it's Substack for newsletters or Perplexity for search, users must prepare for a future where high-quality, non-exploitative content and services require direct financial support. This is the necessary price of escaping algorithmic manipulation.
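The parallel experiment in insight 2 reduces to simple arithmetic once the correction time has been logged. The following is a minimal sketch under stated assumptions: the `TrialResult` record, the `monthly_net_benefit` function, and all sample figures are hypothetical, and the only cost counted is time spent correcting errors.

```python
from dataclasses import dataclass


@dataclass
class TrialResult:
    tool: str                  # label for the tool or tier under test
    tasks: int                 # number of tasks completed during the trial
    minutes_correcting: float  # total minutes spent fixing errors in the trial
    monthly_cost: float        # subscription price per month (0 for a free tier)


def monthly_net_benefit(free, paid, tasks_per_month, hourly_rate):
    """Value of correction time saved by the paid tool minus its extra monthly cost."""
    if free.tasks <= 0 or paid.tasks <= 0:
        raise ValueError("each trial needs at least one task")
    saved_minutes_per_task = (
        free.minutes_correcting / free.tasks
        - paid.minutes_correcting / paid.tasks
    )
    hours_saved = saved_minutes_per_task * tasks_per_month / 60
    return hours_saved * hourly_rate - (paid.monthly_cost - free.monthly_cost)


if __name__ == "__main__":
    # Hypothetical trial data: 20 tasks per tier, correction time logged by hand.
    free_tier = TrialResult("free tier", tasks=20, minutes_correcting=120, monthly_cost=0)
    paid_tier = TrialResult("paid tier", tasks=20, minutes_correcting=60, monthly_cost=20)
    benefit = monthly_net_benefit(free_tier, paid_tier, tasks_per_month=100, hourly_rate=50)
    print(f"Estimated monthly net benefit of the paid tier: {benefit:.2f}")
```

A positive result means the paid tier pays for itself in saved correction time at the assumed workload and hourly rate; a negative result means the free tier is the better value for that workload.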

Conclusion: The Battle for the Interface

Perplexity’s rejection of advertising is a declaration of war on the status quo of the information economy. It signals that the future of search may not be about finding links faster, but about guaranteeing synthesized *truth*. This move aligns perfectly with the evolving technological capabilities of LLMs, which are inherently better suited for synthesis than mere indexing.

If this strategy succeeds, it will not only create a viable alternative to Big Tech search but will also set a new ethical standard for information services. It suggests that in the next phase of the AI arms race, the most powerful feature won't be the speed of the response, but the integrity behind it. The question now shifts from "What can AI *do*?" to "What can we *trust* AI to do for us?"