The RL Renaissance: Why David Silver's $1B Bet Signals the Next Major AI Architecture Shift

The artificial intelligence landscape, currently dominated by the dazzling capabilities of Large Language Models (LLMs), has just witnessed a seismic shift. David Silver, the visionary DeepMind veteran responsible for world-beating AI systems like AlphaGo, has secured an astounding $1 billion seed round for his new venture, Ineffable Intelligence. This funding level, marking the largest seed round in European startup history, isn't just a financial statement; it’s a profound philosophical challenge to the reigning LLM orthodoxy.

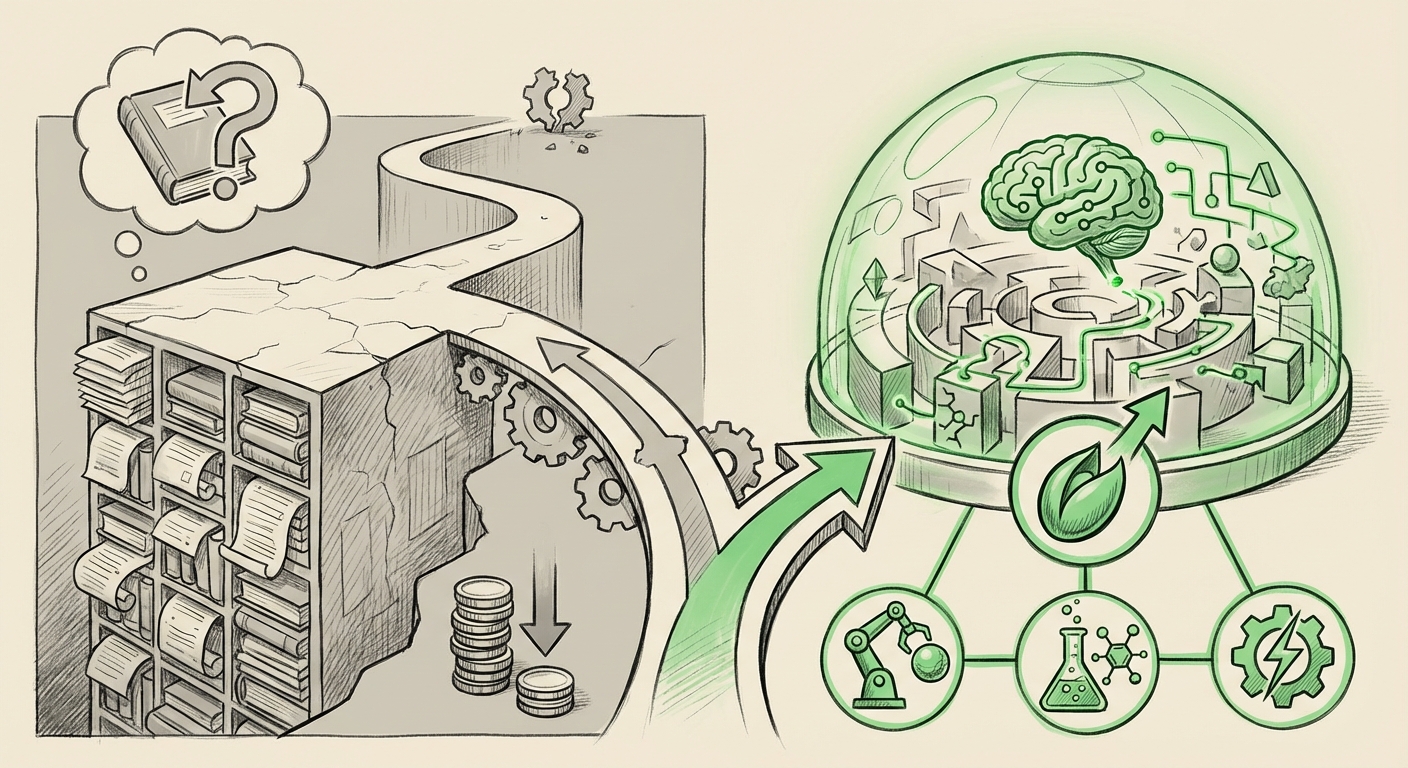

Silver’s critical divergence is simple: He is explicitly building "superintelligence without LLMs." Instead of relying on the statistical pattern matching derived from scraping the entire internet, Ineffable Intelligence is betting on Reinforcement Learning (RL) within vast, high-fidelity simulated environments. This moves AI research away from mimicking human communication and toward engineering true, self-improving, goal-oriented intelligence.

The LLM Plateau: Recognizing the Limits of Text

To understand the significance of Silver’s move, we must first look at the perceived ceiling of the current dominant architecture. LLMs, like GPT-4 or Claude, are phenomenal tools because they predict the next most likely word based on trillions of data points. They excel at fluency, translation, and summarization—tasks requiring massive knowledge recall.
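To make "predicting the next most likely word" concrete, here is a deliberately tiny sketch. It is an assumption-laden toy, not how GPT-4 or Claude work: production LLMs use neural networks over tokens, while this example only counts which word follows which in a made-up corpus. The function names `train_bigram` and `predict_next` are hypothetical and exist only for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    words = text.lower().split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Made-up toy corpus (illustrative only).
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigram(corpus)

print(predict_next(model, "the"))      # most common word after "the" in the corpus
print(predict_next(model, "sat"))      # most common word after "sat"
print(predict_next(model, "unicorn"))  # unseen word: no prediction available
```

The toy can only echo patterns that already exist in its corpus, which is the scaled-down version of the limitation the article goes on to describe.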

However, critics who study the limits of LLM emergent behavior argue that these models often lack three critical elements required for true general intelligence:

- Causality: They describe *what is*, but struggle with *why* and *what if*. They lack a true, internalized model of cause and effect in the real world.

- Planning and Grounding: LLMs are static snapshots of their training data. They don't dynamically test hypotheses or learn from failure in real-time exploration.

- Internal Consistency: LLMs have no built-in mechanism for checking a statement against the facts. This leads to the notorious issue of "hallucination": confidently stating false information because the sequence of words is statistically probable, even if it is factually incorrect.

Silver’s team is essentially arguing that if the goal is *superintelligence*—an entity capable of solving problems beyond current human capacity—relying on the finite, noisy, and inherently biased corpus of human text is a dead end. They seek intelligence built from the ground up, one interaction at a time.

The AlphaGo Legacy: Why RL is the Credible Challenger

The confidence behind Ineffable Intelligence is directly tied to David Silver’s pedigree. His AlphaGo legacy is the strongest evidence that RL can solve problems where brute-force statistical methods fail.

Reinforcement Learning is the process where an AI agent learns by taking actions in an environment and receiving rewards or penalties. The agent learns the optimal *policy* (strategy) to maximize its cumulative reward. AlphaGo mastered the ancient game of Go, a game with more legal board positions than there are atoms in the observable universe. The original AlphaGo was bootstrapped from human expert games and then improved through self-play; its successor, AlphaGo Zero, dropped the human games entirely and learned only by playing itself millions of times.
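The act-observe-reward loop described above can be sketched with tabular Q-learning, one of the simplest RL algorithms. This is a minimal illustration under stated assumptions and not AlphaGo's method (which combined deep neural networks with tree search): the five-cell corridor environment, the reward of 1 at the goal, and the hyperparameters `alpha`, `gamma`, and `epsilon` are all hypothetical choices made for the example.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal (hypothetical toy world)
ACTIONS = (-1, +1)    # move left or move right

def step(state, action):
    """Apply an action; reaching the goal cell yields reward 1 and ends the episode."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def greedy_index(q_row, rng):
    """Index of the highest-valued action, breaking ties at random."""
    best = max(q_row)
    return rng.choice([i for i, v in enumerate(q_row) if v == best])

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]   # q[state][action_index]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit current estimates, sometimes explore.
            if rng.random() < epsilon:
                a = rng.randrange(len(ACTIONS))
            else:
                a = greedy_index(q[state], rng)
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning update: move the estimate toward reward + discounted best future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
    return q

q_table = train()
# Learned policy for the non-terminal cells: the action with the highest Q-value.
policy = [ACTIONS[row.index(max(row))] for row in q_table[:-1]]
print(policy)
```

Nothing in the code tells the agent that "right" is the correct direction. The policy emerges only from reward feedback, which is the property the article contrasts with learning from a text corpus.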

Silver’s vision is to scale this principle exponentially. By creating hyper-realistic, massive-scale simulations (a virtual world), the agent can experience billions of lifetimes' worth of trial and error. In this controlled laboratory, the AI doesn't just memorize facts; it learns the fundamental laws governing the simulated reality. This builds intrinsic world models, allowing for genuine planning, experimentation, and adaptation.
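The idea of learning a world model and then planning inside it can be illustrated with a small model-based sketch. Everything here is a hypothetical toy and makes no claim about Ineffable Intelligence's actual approach: the agent probes a five-cell corridor at random, records what each action does, and then runs value iteration on its recorded model alone. The names `env_step`, `learn_model`, `plan`, and `greedy_policy` are invented for this example.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is a terminal goal (toy assumption)
ACTIONS = (-1, +1)    # move left or move right

def env_step(state, action):
    """The hidden 'laws' of the toy world, which the agent can only observe by acting."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    return next_state, (1.0 if next_state == N_STATES - 1 else 0.0)

def learn_model(n_samples=200, seed=0):
    """Build a world model: a table of observed (state, action) -> (next_state, reward)."""
    rng = random.Random(seed)
    model = {}
    for _ in range(n_samples):
        s = rng.randrange(N_STATES - 1)      # the terminal goal cell is never probed
        a = rng.choice(ACTIONS)
        model[(s, a)] = env_step(s, a)
    return model

def plan(model, gamma=0.9, sweeps=50):
    """Value iteration on the learned model only; the real environment is not called."""
    values = [0.0] * N_STATES
    for _ in range(sweeps):
        for s in range(N_STATES - 1):
            values[s] = max(
                (r + gamma * values[s2] for (s0, _a), (s2, r) in model.items() if s0 == s),
                default=0.0,
            )
    return values

def greedy_policy(model, values, gamma=0.9):
    """For each cell, pick the known action with the best predicted outcome."""
    policy = []
    for s in range(N_STATES - 1):
        known = {a: r + gamma * values[s2]
                 for (s0, a), (s2, r) in model.items() if s0 == s}
        policy.append(max(known, key=known.get) if known else None)
    return policy

model = learn_model()
values = plan(model)
print(greedy_policy(model, values))
```

The design point is the separation of the two phases: experience is used once to build the model, and planning then costs no further interaction with the environment.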

The Shift in VC Appetite: Betting on Architecture Over Application

A $1 billion seed round is unheard of for a company that isn't immediately commercializing a generative product. This massive inflow of capital points to a maturing view within the VC community.

The current phase of AI investment has focused heavily on *application*: building wrappers, fine-tuning, and creating interfaces for existing LLMs. Now, deep-pocketed investors are looking for the *next foundational breakthrough*. They recognize that whoever cracks scalable, general-purpose RL will own the next decade of AI capability. Silver's raise signifies a major rotation toward high-risk, high-reward architectural bets.

Future Implications: What an RL-Driven Superintelligence Means

If Ineffable Intelligence succeeds where others have stalled on scaling RL, the implications for technology and society are profound:

1. True Autonomy and Robotics

LLMs can write code, but they cannot robustly operate a complex physical system that requires minute-to-minute error correction and novel adaptation (like advanced surgery or deep-sea exploration). RL agents trained in simulation are a natural fit for embodiment. If Silver’s system learns physics and interaction through its simulated world, and if those learned rules carry over to the real world, the result would be robotics and autonomous systems that don't just follow pre-programmed instructions but *reason* about dynamic physical constraints.

2. Scientific Discovery Beyond Human Intuition

The most complex problems in science—climate modeling, fusion energy design, or novel drug discovery—often involve dynamic systems with too many variables for human intuition to manage. An RL agent operating within a highly accurate scientific simulation can explore solution spaces in ways human researchers cannot, identifying causal levers and counter-intuitive optimization strategies.

3. A Shift in the Talent Market

While the demand for prompt engineers and LLM fine-tuners will continue, the massive funding flowing into RL creates an acute demand for specialists in dynamic systems, causal inference, computational efficiency in simulation, and advanced state representation learning. Researchers grounded in classical AI, control theory, and optimization will see their skills become considerably more valuable.

Practical Actionable Insights for Businesses

For leaders managing technology strategy, Silver's venture serves as a crucial strategic indicator:

Insight 1: Don't Mistake Fluency for Intelligence

Businesses must stop treating LLM output as inherently trustworthy reasoning. While excellent for content and summarization, complex decision-making processes involving sequential steps or novel scenarios still require human oversight or structured symbolic systems. Prepare for a hybrid AI environment where LLMs handle linguistics and RL agents handle sequential control.

Insight 2: Invest in Simulation Infrastructure

If RL is the future, the data it generates—which is *experience*, not text—is the new currency. Companies with deep, proprietary, high-fidelity simulation environments (whether in manufacturing, logistics, finance, or biotech) possess a massive competitive advantage in the next generation of AI development. This infrastructure is as valuable as the GPU clusters are today.

Insight 3: Watch the European Ecosystem

The scale of this funding, a record for a European seed round, highlights London's rising stature in deep, foundational AI research. This geographical context means access to cutting-edge research might diversify away from the traditional Silicon Valley axis. Businesses seeking foundational partnerships or talent should look closely at the deep-tech ecosystems maturing in Europe, which appear willing to back long-term, fundamental science projects.

The Road Ahead: Convergence or Conflict?

The most exciting question remains: Will Ineffable Intelligence succeed in creating a general intelligence *without* LLMs, or will the future be a convergence? Many researchers believe that the ultimate AGI will require both:

- The RL Agent: Provides the reasoning, planning, and grounded interaction with the world.

- The LLM Component: Provides the massive knowledge base, the ability to communicate complex outputs to humans, and the ability to absorb human instruction efficiently.

David Silver is currently choosing the cleaner, more fundamental path—building the engine of reasoning first. If he masters the learning agent, integrating language capabilities later might prove far easier than trying to teach true reasoning to a statistical parrot.

This $1 billion seed round is not a dismissal of generative AI; it is an enormous vote of confidence in a parallel, arguably deeper, branch of AI research. It signifies that the industry is ready to move past the LLM sprint and enter the marathon toward genuine, adaptive superintelligence, driven by the power of learning through doing.