The Copyright Crucible: Why the Warner Bros. vs. ByteDance AI Fight Defines Generative AI's Future

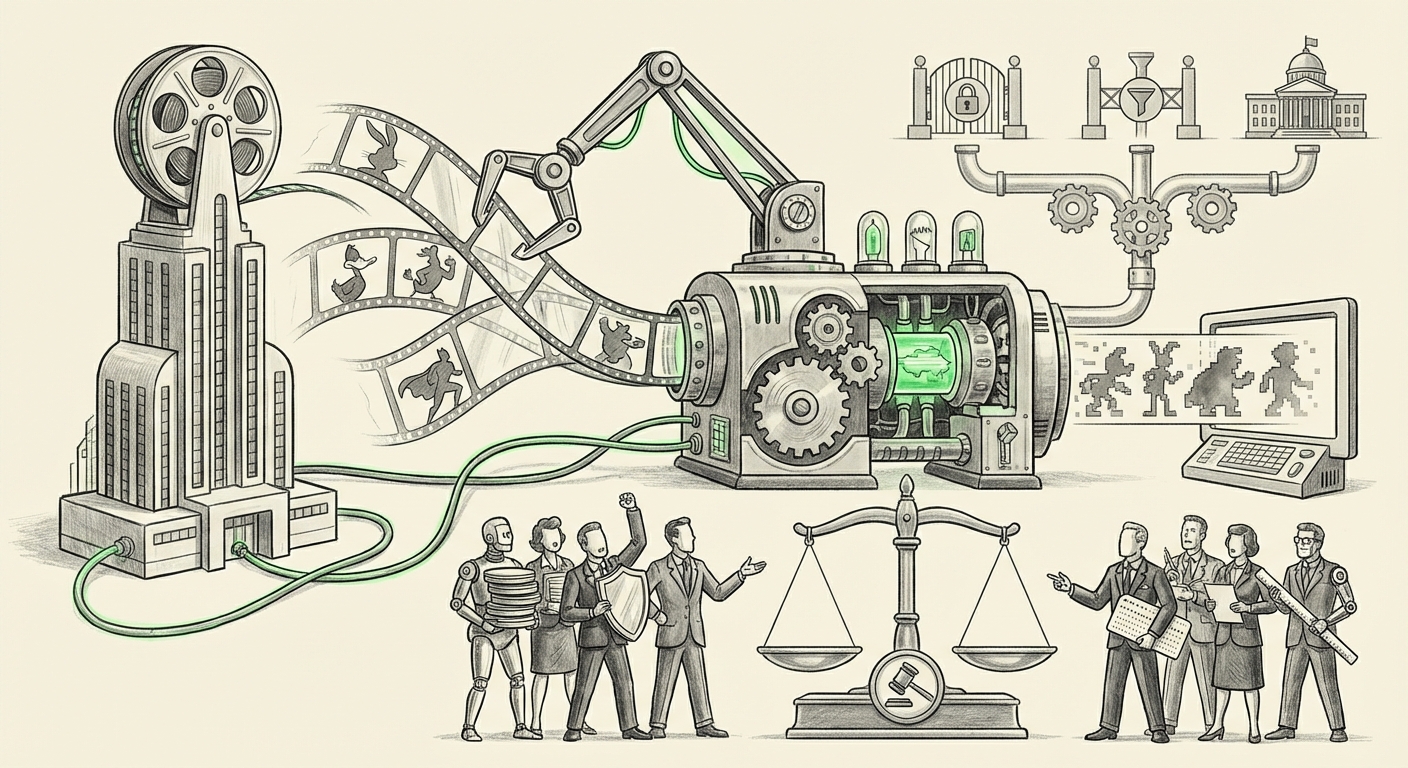

The technology landscape is undergoing its biggest upheaval since the dawn of the commercial internet: the explosion of powerful generative AI. While we marvel at text generators and image synthesizers, the foundation on which these tools are built, massive training datasets, has become the new legal and ethical battleground. The recent accusation by Warner Bros. that ByteDance, the parent company of TikTok, deliberately trained its AI video service, Seedance 2.0, on copyrighted characters and other intellectual property (IP) is far more than a corporate squabble. It is a flashpoint that could shape the trajectory of AI development, media creation, and digital ownership for the next decade.

The Accusation: IP Theft or Necessary Fuel?

At the heart of this conflict is a fundamental disagreement over what may legitimately be used as training data. The Warner Bros. claim is stark: ByteDance allegedly used the studio's vast library of characters, protected by copyright and built through billions of dollars in investment, to teach Seedance how to generate new video content. For the studio, this is theft, pure and simple: its IP is being converted into commercial products without permission or compensation.

A simple analogy: imagine training a new chef (the AI) by having them study thousands of copyrighted cookbooks (the IP). If that chef then opens a restaurant and sells dishes that look exactly like the ones in those books, the authors would have grounds to sue. Warner Bros. is arguing, in effect, that ByteDance had its AI chef study the studio's cookbooks without permission or payment.

Technology developers, however, typically counter by invoking "fair use," arguing that using data to train an AI model is akin to a human reading a book to learn a writing style. In this view, the model is not reproducing the original work; it is extracting statistical patterns and knowledge from it.

Contextualizing the Conflict: A Broader Legal Tsunami

The Warner Bros./ByteDance friction does not exist in a vacuum. It is one wave in a rising legal tsunami hitting the tech industry. To understand its significance, we must look at the surrounding litigation:

The Expanding Front of Copyright Battles

We are seeing numerous high-stakes cases where creators and rights-holders are demanding accountability from AI developers. Whether it's authors suing OpenAI for training on their novels or visual artists challenging image generators, the theme is consistent: unauthorized ingestion of copyrighted material for commercial gain.

These broader lawsuits matter because rulings in major cases will set critical precedents. If courts decide that scraping large swathes of copyrighted material to build foundation models constitutes infringement, AI companies would face a costly technical and financial pivot, at least in the jurisdictions where such rulings apply. Conversely, if broad "fair use" is established, the creative industries could face an existential threat to their ability to monetize their back catalogs.

The Technical Reality of Model Training

The engineering perspective highlights *why* this conflict is so potent. Modern large language models (LLMs) and their visual counterparts rely on gargantuan datasets, often drawn from broad, largely unfiltered web crawls such as Common Crawl. These datasets inevitably contain copyrighted material: most original content on the web is protected by copyright by default, and much of the high-quality, structured content belongs to media companies.

For video models like Seedance, producing high-fidelity, temporally coherent output requires learning character consistency, motion, and visual style. That means learning, for example, that Batman moves like Batman, or that a particular animated style belongs to a particular studio. ByteDance's defense will likely turn on whether Seedance merely learned the *rules* of animation or whether it can reproduce a recognizable, protected *instance* of a character. This technical distinction is what the courts will have to untangle.

Hollywood's Unified Front: Labor, Studios, and Protectionism

The industry backlash against unchecked AI development is not limited to the Warner Bros. C-suite; it is embedded deeply in Hollywood’s labor structure. The landmark 2023 strikes by writers (WGA) and actors (SAG-AFTRA) centered heavily on establishing guardrails for AI use.

The resulting union agreements included specific language preventing studios from using actors’ digital replicas without clear consent and compensation, and ensuring that AI-generated material could not be used to undermine writers' credit and employment. By confronting ByteDance, Warner Bros. is sending a loud signal that aligns with the unions' stance: AI tools must respect existing economic structures and creative rights. This convergence of studios and unions against perceived technological overreach is a powerful indicator of how seriously the creative sector views the threat.

This consensus among competitors (studios) and former adversaries (studios and unions) means that any future AI model seeking broad access to Hollywood IP will face significant resistance, not just legally, but practically, through contractual negotiations.

The Counter-Narrative: Seeking Defense in the Algorithm

In any high-stakes legal confrontation, the accused party must mount a defense. Specifics of ByteDance’s response to the Seedance allegations may emerge through official statements or court filings, but its general approach will likely mirror that of other tech firms:

- Statistical Abstraction: Arguing that the model does not store copies of the copyrighted works but rather abstract mathematical representations of patterns, which fall outside traditional copyright definitions.

- Public Availability Defense: Claiming that the data used was publicly accessible on the internet, suggesting a lower bar for usage in training compared to explicitly licensed material.

- Transformative Use: Asserting that the resulting video generator is "transformative," creating a new utility (a tool for creation) rather than merely reproducing the original content.

If ByteDance can successfully argue that Seedance produces only *inspired* content rather than infringing copies, that outcome would bolster the case for rapid, unrestricted AI development. If it fails, the ruling could pave the way for mandatory licensing frameworks.

What This Means for the Future of AI Development

The outcome of disputes like this will dictate the next phase of AI evolution. There are three primary paths forward:

1. The Licensed Future (The High Road)

If courts heavily favor IP holders, the industry will shift rapidly toward "clean room" AI development. Companies will be forced to license data explicitly, driving up the cost of model training. This favors large, well-funded players that can afford major licensing deals or already control licensed libraries, as Adobe does with Firefly, which it says was trained on licensed Adobe Stock content and public-domain material. The consequence? AI innovation slows initially, but models stand on firmer legal ground and become more attractive for enterprise use.

2. The Technical Redaction Future (The Engineering Fix)

If the legal battle proves too murky, developers might focus intensely on data filtering and provenance tracking. This involves creating AI systems that can detect and actively filter out known copyrighted material from training sets, or developing verifiable mechanisms to prove *what* data was used. This requires significant technical overhead but avoids the heavy cost of licensing.
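As a rough illustration of the filtering-plus-provenance idea, the Python sketch below drops training samples whose SHA-256 digest appears on a blocklist of known protected works and records every decision in a manifest. The blocklist, the `ManifestEntry` fields, and the function names are hypothetical. Exact hashing only catches byte-identical copies, so a real system would likely need perceptual or embedding-based matching as well.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class ManifestEntry:
    """One provenance record per candidate training sample (hypothetical schema)."""
    source: str
    sha256: str
    included: bool
    reason: str


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def filter_and_record(samples, blocked_hashes):
    """Drop samples whose exact SHA-256 digest is blocklisted; log every decision."""
    kept, manifest = [], []
    for source, data in samples:
        digest = sha256_hex(data)
        if digest in blocked_hashes:
            manifest.append(ManifestEntry(source, digest, False, "matched blocklist"))
        else:
            kept.append((source, data))
            manifest.append(ManifestEntry(source, digest, True, "no blocklist match"))
    return kept, manifest


if __name__ == "__main__":
    protected = b"bytes of a known protected clip"  # hypothetical protected item
    blocklist = {sha256_hex(protected)}
    samples = [
        ("https://example.com/clip-a", b"original user footage"),
        ("https://example.com/clip-b", protected),
    ]
    kept, manifest = filter_and_record(samples, blocklist)
    # The manifest doubles as an auditable record of what was (and was not) used.
    print(json.dumps([asdict(entry) for entry in manifest], indent=2))
```

Keeping the manifest separate from the filtered data means the "proof of what was used" survives even after the training set itself is discarded.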

3. The Regulatory Clampdown Future (The Policy Solution)

If litigation drags on without clear rulings, legislatures may step in. One possibility is a new "Digital Training Rights" regime: a compulsory licensing scheme in which AI companies pay a small micro-fee for scraped data into a pool managed by a central body, similar to how music rights organizations collect and distribute royalties. This path offers clarity but could slow the rapid experimentation that characterized early open-source models.
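To make the collective-licensing idea concrete, here is a minimal sketch of how a central body might split a collected fee pool among rights-holders in proportion to how many of their works were ingested. The pro-rata rule, the integer-cent accounting, and the largest-remainder rounding are assumptions for illustration, not features of any actual proposal.

```python
def distribute_pool(pool_cents: int, usage: dict) -> dict:
    """Split pool_cents among rights-holders pro rata to usage counts.

    Uses the largest-remainder method so payouts are whole cents
    and always sum exactly to pool_cents.
    """
    total = sum(usage.values())
    if total == 0:
        return {holder: 0 for holder in usage}
    shares, remainders = {}, []
    for holder, count in usage.items():
        numerator = pool_cents * count       # exact share = numerator / total
        shares[holder] = numerator // total  # round down first
        remainders.append((numerator % total, holder))
    leftover = pool_cents - sum(shares.values())
    # Hand the leftover cents, one each, to the holders with the largest remainders.
    for _, holder in sorted(remainders, key=lambda r: (-r[0], r[1]))[:leftover]:
        shares[holder] += 1
    return shares


if __name__ == "__main__":
    # Hypothetical usage log: works ingested per rights-holder.
    print(distribute_pool(1000, {"studio_a": 2, "studio_b": 1}))
```

With a 1,000-cent pool and usage counts of 2 and 1, the split comes out to 667 and 333 cents. Working in whole cents with a largest-remainder step avoids floating-point drift and guarantees the payouts add up to the pool.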

Practical Implications for Businesses and Creators

For executives, developers, and creators, this moment demands immediate strategic consideration:

For Media & Entertainment Businesses:

This is the time to audit IP protection strategies. Rights-holders must move beyond merely registering copyrights to actively monitoring AI outputs that mimic their style or characters. Studios should also be prepared to enter the licensing market, either by licensing dormant IP to AI firms for revenue or by negotiating tighter restrictions on their contractors' use of AI tools.
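One common building block for output monitoring is a perceptual hash. The sketch below implements a basic average hash (aHash) over frames assumed to be already downscaled to 8x8 grayscale grids, then flags output frames within a Hamming-distance threshold of a reference frame. The 5-bit threshold, the frame format, and the function names are illustrative assumptions. This catches near-copies of specific frames (the protected *instance*), not stylistic imitation, which is much harder to detect.

```python
def average_hash(gray):
    """aHash: one bit per pixel, set when the pixel is brighter than the frame mean.

    `gray` is assumed to be an 8x8 grid of 0-255 values (already downscaled).
    """
    pixels = [p for row in gray for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def flag_near_duplicates(output_frames, reference_hashes, max_distance=5):
    """Return (frame_index, reference_id) pairs whose hashes are within max_distance bits."""
    flagged = []
    for i, frame in enumerate(output_frames):
        h = average_hash(frame)
        for ref_id, ref_hash in reference_hashes.items():
            if hamming(h, ref_hash) <= max_distance:
                flagged.append((i, ref_id))
    return flagged


if __name__ == "__main__":
    reference = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]  # synthetic gradient
    brighter = [[p + 3 for p in row] for row in reference]    # near-copy
    inverted = [[255 - p for p in row] for row in reference]  # very different frame
    refs = {"protected-frame-1": average_hash(reference)}
    print(flag_near_duplicates([brighter, inverted], refs))  # [(0, 'protected-frame-1')]
```

Average hashing is cheap and tolerant of uniform brightness changes, which is why the brightened copy is still flagged while the inverted frame is not.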

For AI Developers and Tech Companies:

The era of "scrape first, ask for forgiveness later" is rapidly ending. Future development roadmaps must budget for licensing costs or invest heavily in technical solutions for data provenance and exclusion. Reliance on purely public, unfiltered datasets for commercial models is now a significant legal liability that investors will scrutinize.

For Individual Creators (Artists, Writers, Developers):

Understand that the value of your unique style and body of work is being actively fought over. If you work independently, seek out platforms and models that are transparent about their training data (such as those trained on ethically sourced or public-domain data). Your bargaining power in the coming years will derive directly from the uniqueness and defensibility of your creative output.

Actionable Insights Moving Forward

Navigating this highly charged environment requires proactive measures:

- Establish a 'Data Vetting' Pipeline: Before deploying any generative model internally, create a clear, documented process for checking training sources against known copyrighted collections and recording the license status of each source. If you are building, know exactly what you are feeding your engine.

- Prioritize Licensing Frameworks: Begin discussions now with IP holders whose work you admire or need access to. A negotiated, paid relationship is far safer than a courtroom defense.

- Advocate for Technical Transparency: Support industry standards that demand "nutrition labels" for AI models, clearly stating what percentage of the training data came from copyrighted, licensed, or public domain sources. This transparency builds trust.
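The vetting pipeline and the "nutrition label" can be sketched together: sources are split by declared license category, and the label reports each category's share of training tokens. The category names, the `ALLOWED` policy, and the token-weighted percentages are hypothetical choices for illustration; a real pipeline would also need to verify declared licenses rather than trust them.

```python
from collections import Counter

# Hypothetical policy: categories that may enter training without manual review.
ALLOWED = {"licensed", "public_domain"}


def vet_sources(records):
    """Split records into (approved, needs_review) by declared license category."""
    approved = [r for r in records if r["license"] in ALLOWED]
    needs_review = [r for r in records if r["license"] not in ALLOWED]
    return approved, needs_review


def nutrition_label(records):
    """Percentage of training tokens per license category, rounded to 0.1%."""
    tokens = Counter()
    for r in records:
        tokens[r["license"]] += r["tokens"]
    total = sum(tokens.values())
    if total == 0:
        return {}
    return {cat: round(100 * n / total, 1) for cat, n in sorted(tokens.items())}


if __name__ == "__main__":
    records = [  # hypothetical sources
        {"source": "stock-library", "license": "licensed", "tokens": 600},
        {"source": "archive-scans", "license": "public_domain", "tokens": 300},
        {"source": "web-crawl-shard", "license": "unknown", "tokens": 100},
    ]
    approved, needs_review = vet_sources(records)
    print("needs review:", [r["source"] for r in needs_review])
    print(nutrition_label(records))
```

For these sample records the label reports 60% licensed, 30% public domain, and 10% unknown, and the unknown web-crawl shard is routed to manual review. Weighting by tokens rather than by source count keeps one huge crawl shard from hiding behind many small licensed sources.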

The confrontation between Warner Bros. and ByteDance is the canary in the coal mine. It signals that the honeymoon phase for unrestricted data scraping in commercial AI is over. The industry is moving toward an era defined by rights management, traceability, and explicit consent. The AI that wins the future won't just be the most powerful; it will be the one deemed the most legally responsible.

This analysis synthesized information surrounding ongoing media industry legal challenges to AI training practices, drawing context from broader lawsuits and labor negotiations.