AI Mandates vs. Employee Reality: The Promotion Test and the Future of Work

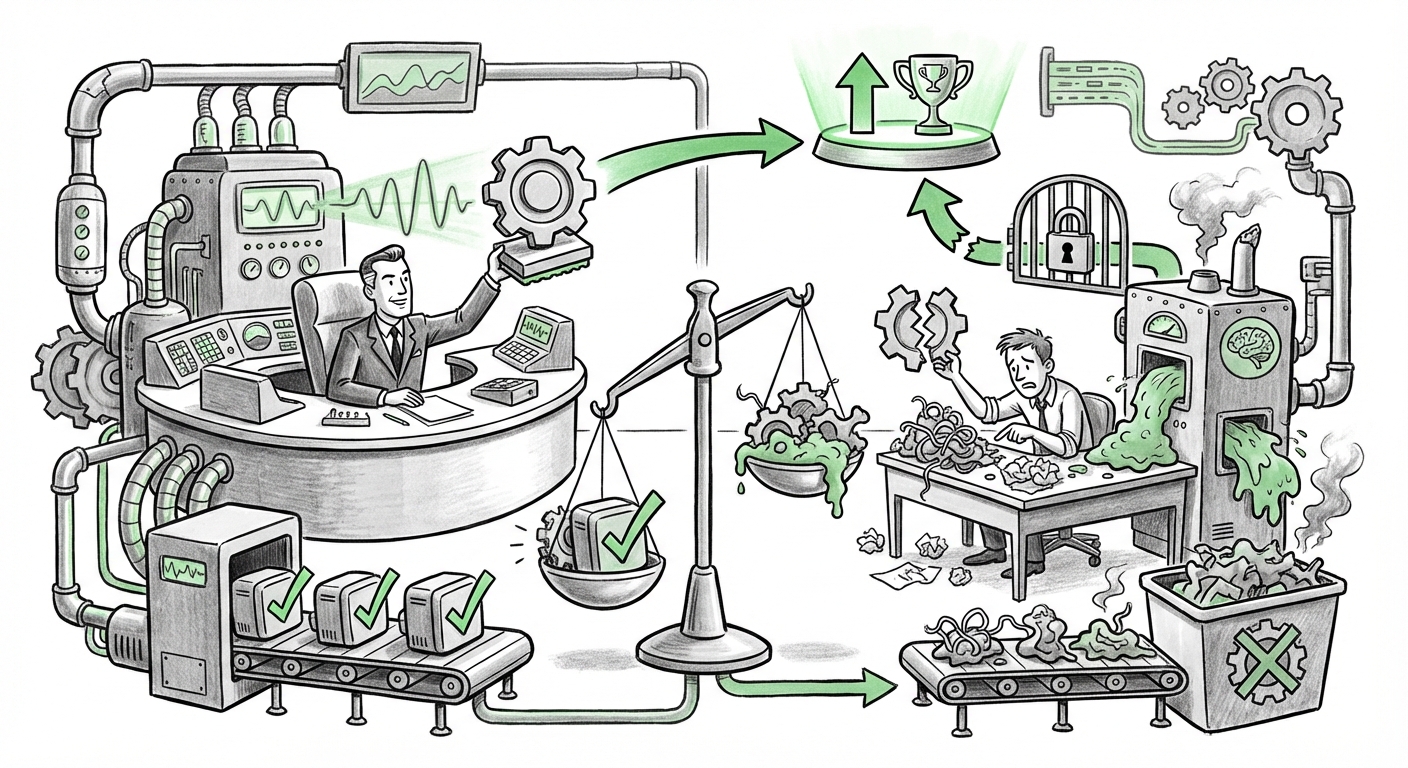

The integration of Artificial Intelligence into daily business operations is no longer a theoretical concept; it is a mandate. Yet, the current reality often presents a jarring contrast between executive expectations for immediate transformation and the on-the-ground experience of the workforce. Nowhere is this friction clearer than in the recent revelation that major consulting firms, such as Accenture, are beginning to monitor individual AI tool logins and explicitly tie this usage to career advancement, even as employees label the same tools as "broken slop generators."

Viewed against broader technology trends, this situation marks a critical juncture in the AI revolution. We are moving past the pilot phase and entering the era of enforced adoption, where the success (or failure) of generative AI is measured not just by ROI but by the digital footprint of its users. Understanding what comes next means examining this tension from four angles: the pressure to adopt, the quality crisis, the shift in performance metrics, and the resulting competitive landscape.

Synthesizing the Conflict: Mandate Meets Skepticism

The core of the story lies in the duality of the corporate push. On one side, leadership sees AI as the necessary engine of future productivity and competitiveness, and that pressure is palpable across professional services. Firms broadly expect large efficiency gains from AI in the coming years, which creates an organizational imperative to catch up before competitors, including rival consulting firms, pull ahead.

On the other side is workforce sentiment: a significant portion of employees view the mandated tools as deeply flawed. Calling them "broken slop generators" is colorful language, but it points to a very real industry problem: the Efficacy Gap. Enterprise LLM deployments frequently suffer from hallucination, a lack of context-specific nuance, and plausible-sounding output that is ultimately useless or incorrect.

On the technical side, foundational models improve rapidly, but adapting them to niche, high-stakes enterprise tasks remains difficult. Many internal tools built on these models simply regurgitate generic content, forcing skilled employees to spend more time correcting AI errors than they would have spent completing the task manually. This failure to deliver the promised utility fuels genuine employee frustration.

The Unspoken Truth: Widespread Resistance to Forced AI

The Accenture example is likely not isolated. Employee frustration is a recurring theme whenever tools are forced on staff without proper training or demonstrable value. This resistance isn't Luddism; it’s pragmatism. When tools slow down work or introduce legal or accuracy risks, employees naturally resist adopting them, and there is growing discussion of the pitfalls of mandatory AI adoption without adequate change management.

For employees, the equation is simple: If the tool is poor, using it wastes time. If using the tool becomes a condition of promotion, the company is effectively penalizing staff for recognizing flawed technology.

The Measurement Trap: From Productivity to Digital Compliance

The most forward-looking, and perhaps most concerning, development here is the shift in performance management. Tying promotions directly to AI tool logins fundamentally changes what the company is rewarding. It is no longer solely about the quality of the deliverable; it is about digital compliance and utilization metrics. This is the "Measurement Trap."

This behavior suggests that organizations are struggling to quantify the real productivity gains from AI. Instead of measuring complex outcomes, such as reduced project cycle time or improved client satisfaction attributable to AI use, they are opting for easily tracked proxies like login counts or document-generation statistics.

This creates perverse incentives:

- Gaming the System: Employees may engage in "dummy usage" (logging in, running low-value prompts, or generating throwaway content) simply to inflate their metrics without contributing actual value.

- Stifling True Innovation: An employee who finds a superior, non-sanctioned, or manual method to achieve a goal faster might be penalized because their usage metrics for the mandatory tool are low. The focus shifts from high performance to adhering to the monitoring system.

- Bias in Metrics: If the tool is inherently slow or unreliable (the "slop generator" problem), employees who are more skilled at recognizing and avoiding these pitfalls (i.e., using the tool less) are penalized relative to those who trust its output blindly.
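To make the "Measurement Trap" concrete, here is a small Python sketch with invented numbers: three hypothetical employees ranked first by tool logins and then by accepted deliverables. The `Employee` fields, the `rank_by` helper, and all figures are assumptions for illustration, not data from Accenture or any other firm.

```python
from dataclasses import dataclass


# Hypothetical records; names and numbers are invented for illustration.
@dataclass
class Employee:
    name: str
    tool_logins: int            # the easily tracked proxy
    deliverables_accepted: int  # the outcome the business actually cares about


def rank_by(employees, key):
    """Return employee names sorted from highest to lowest on the given metric."""
    return [e.name for e in sorted(employees, key=key, reverse=True)]


team = [
    Employee("A", tool_logins=120, deliverables_accepted=4),  # heavy "dummy usage"
    Employee("B", tool_logins=15, deliverables_accepted=9),   # uses the tool selectively
    Employee("C", tool_logins=60, deliverables_accepted=7),
]

print(rank_by(team, key=lambda e: e.tool_logins))            # ['A', 'C', 'B']
print(rank_by(team, key=lambda e: e.deliverables_accepted))  # ['B', 'C', 'A']
```

Same team, opposite order: the login proxy rewards A's inflated usage and ranks B, who uses the tool selectively and delivers the most, last.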

What This Means for the Future of AI Integration

The Accenture scenario acts as a vital stress test for the next phase of enterprise AI deployment. The future hinges on whether companies can navigate this gap between technological hype and operational reality.

1. The Maturation of Enterprise AI Tools

For AI vendors and internal development teams, the message is clear: quantity of adoption does not equal quality of impact. Future success will require a pivot away from generalized chatbot deployments toward specialized, domain-specific AI agents that are rigorously validated for accuracy. If tools continue to generate "slop," mandates will fail, leading to higher attrition and lower morale. The future of enterprise AI depends on overcoming well-documented accuracy problems such as hallucination.
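What "rigorously validated" might mean in practice can be sketched as a small evaluation harness: run the tool against a golden set of prompts with known correct answers and measure how often it matches. Everything here is hypothetical, including the `fake_tool` stand-in and the contract questions, and exact string matching is a deliberately crude scoring rule; real domain evaluations usually need task-specific graders.

```python
from typing import Callable


def evaluate(tool: Callable[[str], str], golden_set: list[tuple[str, str]]) -> float:
    """Fraction of prompts where the tool's answer matches the reference answer.

    Matching is case-insensitive exact string comparison, a crude stand-in
    for a proper domain-specific grader.
    """
    if not golden_set:
        raise ValueError("golden_set must not be empty")
    correct = sum(
        1
        for prompt, expected in golden_set
        if tool(prompt).strip().lower() == expected.strip().lower()
    )
    return correct / len(golden_set)


# Hypothetical stand-in for an internal AI tool: canned answers, one of them wrong.
_canned = {
    "Notice period in contract X?": "30 days",
    "Governing law in contract X?": "New York",
    "Renewal term in contract X?": "2 years",  # the reference answer is "1 year"
}


def fake_tool(prompt: str) -> str:
    return _canned.get(prompt, "")


golden = [
    ("Notice period in contract X?", "30 days"),
    ("Governing law in contract X?", "New York"),
    ("Renewal term in contract X?", "1 year"),
]

print(f"accuracy = {evaluate(fake_tool, golden):.2f}")  # accuracy = 0.67
```

A team could run a harness like this before every release and block deployment below an agreed accuracy bar, so "validated" means a measured number rather than a demo impression.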

2. Redefining "Productivity" in the AI Age

We must move beyond simplistic metrics. The future demands a framework that measures augmented productivity. This involves understanding the delta: how much faster or better was the work completed *because* of the AI, versus how much time was spent editing or correcting the AI's output?
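The "faster" half of that delta can be written as a simple per-task calculation. This sketch assumes you can estimate a manual baseline time for each task, which is itself a judgment call, and it says nothing about "better," which needs a separate quality measure. The function name, parameters, and task figures are hypothetical.

```python
def augmented_delta(manual_minutes: float, ai_draft_minutes: float,
                    correction_minutes: float) -> float:
    """Minutes saved (positive) or lost (negative) by using the AI tool on one task.

    manual_minutes:     estimated time to do the task without AI (the baseline)
    ai_draft_minutes:   time spent prompting and reviewing the AI draft
    correction_minutes: time spent fixing errors in the AI output
    """
    return manual_minutes - (ai_draft_minutes + correction_minutes)


# Hypothetical tasks: (name, manual baseline, drafting time, correction time)
tasks = [
    ("status memo", 60, 10, 15),
    ("contract clause review", 45, 10, 50),
]

for name, manual, draft, fix in tasks:
    print(name, augmented_delta(manual, draft, fix))
# status memo 35
# contract clause review -15
```

The sign is the point: a login metric counts both tasks as "AI usage," while the delta shows the second one cost time.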

For executive teams, this means focusing promotion criteria on strategic application and critical evaluation of AI outputs, rather than mere passive usage. The most valuable employee in 2025 won't be the heaviest AI user, but the most effective AI *editor* and *integrator*.

3. The Role of Employee Agency

When AI tools become tied to career survival, the balance of power shifts precariously. If management enforces adoption purely through punitive measures, companies risk alienating their most experienced staff, the very people who understand where the current tools fall short and how to compensate manually. The organizations that succeed will be those that treat their employees as co-developers of the AI integration process, soliciting honest feedback on tool effectiveness rather than just monitoring login frequency.

Practical Implications and Actionable Insights

For business leaders watching this unfold, the Accenture case offers immediate lessons. Ignoring the employee perspective while enforcing rigid adoption metrics is a recipe for burnout and talent flight.

For C-Suites and HR Leaders: Rethink the Metric

Actionable Insight: Audit Your AI Incentives. Do not tie performance reviews solely to utilization rates. Instead, focus on project outcomes where AI was *allowed* to be used. Introduce a "Validation Score" metric: employees are rewarded for demonstrating how they successfully leveraged AI outputs to achieve a quantifiable business result, or for identifying and documenting severe tool failures that lead to necessary process improvements.
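One way to operationalize a "Validation Score" is a weighted count of documented contributions. The article proposes the idea, not a formula, so the weights, the `ReviewPeriod` fields, and the example entries below are all hypothetical. Note that `tool_logins` is recorded but deliberately ignored by the score.

```python
from dataclasses import dataclass, field

# Hypothetical weights; any real scheme would need calibration with HR and teams.
RESULT_WEIGHT = 3          # per quantified business result achieved with AI output
FAILURE_REPORT_WEIGHT = 2  # per documented tool failure that led to a process fix


@dataclass
class ReviewPeriod:
    ai_assisted_results: list[str] = field(default_factory=list)
    failure_reports_actioned: list[str] = field(default_factory=list)
    tool_logins: int = 0  # recorded, but intentionally not part of the score


def validation_score(period: ReviewPeriod) -> int:
    """Reward demonstrated outcomes and actionable failure reports, not raw usage."""
    return (RESULT_WEIGHT * len(period.ai_assisted_results)
            + FAILURE_REPORT_WEIGHT * len(period.failure_reports_actioned))


# Hypothetical employees
heavy_user = ReviewPeriod(
    ai_assisted_results=["Cut proposal turnaround from 5 to 3 days"],
    tool_logins=300,
)
critical_user = ReviewPeriod(
    ai_assisted_results=["Automated first-pass data cleaning for an audit"],
    failure_reports_actioned=["Flagged fabricated citations; tool now blocks unsourced references"],
    tool_logins=25,
)

print(validation_score(heavy_user))     # 3
print(validation_score(critical_user))  # 5
```

Under this scheme the employee who caught and reported a serious tool failure outscores the heavier user, which is exactly the incentive the login metric removes.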

For Technical Teams and AI Developers: Prioritize Trust Over Volume

Actionable Insight: Invest in Guardrails and Explainability. The workforce will only trust tools that are transparent. Focus development resources on making outputs verifiable and ensuring that the tool clearly flags its own uncertainty levels. If the tool can say, "I am only 60% sure of this legal clause," it moves from being a "slop generator" to a useful assistant.
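Here is a minimal sketch of how a tool could surface uncertainty to the user, assuming each generated passage already comes with a confidence value between 0 and 1. Producing a *calibrated* confidence score from an LLM is a hard problem in its own right and is not shown here; the `Draft` type, the `annotate` helper, and the 0.8 threshold are all hypothetical.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.8  # hypothetical cut-off; would need tuning per task


@dataclass
class Draft:
    text: str
    confidence: float  # assumed to be a calibrated probability in [0, 1]


def annotate(draft: Draft, threshold: float = REVIEW_THRESHOLD) -> str:
    """Prefix low-confidence output with a visible review flag instead of hiding it."""
    if not 0.0 <= draft.confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if draft.confidence < threshold:
        pct = round(draft.confidence * 100)
        return f"[NEEDS REVIEW: model confidence {pct}%] {draft.text}"
    return draft.text


print(annotate(Draft("Either party may terminate with 30 days' notice.", 0.6)))
# [NEEDS REVIEW: model confidence 60%] Either party may terminate with 30 days' notice.
```

Flagging rather than suppressing keeps the human in the loop: the reviewer sees where to look first instead of having to distrust every line equally.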

For Employees Navigating New Mandates: Document, Don't Just Complain

Actionable Insight: Build a Case File. If you are facing pressure to use a tool you believe is ineffective, maintain a detailed log. Document specific instances where the tool failed (the "slop"), the time spent correcting it, and the superior result achieved through alternative means. This documentation is essential evidence if usage metrics are unfairly held against you during review periods.
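A spreadsheet works fine for this, but if you prefer something scriptable, here is a minimal Python sketch of such a log as an append-only CSV file. The column names are suggestions, not a standard, and `append_incident` and `total_minutes` are hypothetical helpers.

```python
import csv
import os
from dataclasses import asdict, dataclass, fields


@dataclass
class ToolIncident:
    day: str                 # ISO date of the incident, e.g. "2025-01-06"
    task: str                # what you were trying to do
    failure: str             # what the tool got wrong
    minutes_correcting: int  # time spent fixing or redoing the output
    alternative_result: str  # what you delivered instead, and how


def append_incident(path: str, incident: ToolIncident) -> None:
    """Append one incident to a CSV file, writing a header row if the file is new."""
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=[fl.name for fl in fields(ToolIncident)])
        if is_new:
            writer.writeheader()
        writer.writerow(asdict(incident))


def total_minutes(path: str) -> int:
    """Sum the correction time across all logged incidents."""
    with open(path, newline="", encoding="utf-8") as f:
        return sum(int(row["minutes_correcting"]) for row in csv.DictReader(f))


# Example usage (hypothetical entry):
# append_incident("ai_tool_log.csv", ToolIncident(
#     "2025-01-06", "Draft client summary", "Invented a revenue figure",
#     40, "Rewrote the summary from the source financials"))
# print(total_minutes("ai_tool_log.csv"))
```

Adding up `minutes_correcting` across a review period turns scattered anecdotes into a single figure you can set next to the usage metrics.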

Conclusion: The Necessary Evolution of AI Management

The story of mandatory AI usage tied to promotions is not merely an HR anecdote; it is a signal of where enterprise AI adoption is heading. It suggests that the initial, enthusiastic wave of AI implementation is giving way to a more structured, results-oriented, and often coercive phase. Companies are under pressure to justify massive investments in AI infrastructure, and they are attempting to force behavioral change through career incentives.

The critical future implication is that the most successful organizations will be those that realize AI adoption is not about compelling logins; it is about engineering value. Until the tools reliably transition from being potential "slop generators" to indispensable partners, management must focus on qualitative integration and honest feedback loops, rather than punitive quantitative surveillance. The future of high-value work relies on the trust between the employee and the technology, a trust that cannot be enforced by tying it to a promotion letter.