The Reasoning Revolution: How Gemini 3.1 Pro Signals AI's Shift Beyond Scale to Deep Intelligence

The pace of development in Artificial Intelligence feels less like an evolutionary process and more like a series of controlled explosions. Every few months, a new foundational model emerges, pushing the boundaries of what we thought was possible. The recent announcement regarding **Google’s Gemini 3.1 Pro**, particularly its significant performance leap in complex reasoning tasks, is not just another iteration; it marks a pivotal inflection point in the industry’s trajectory.

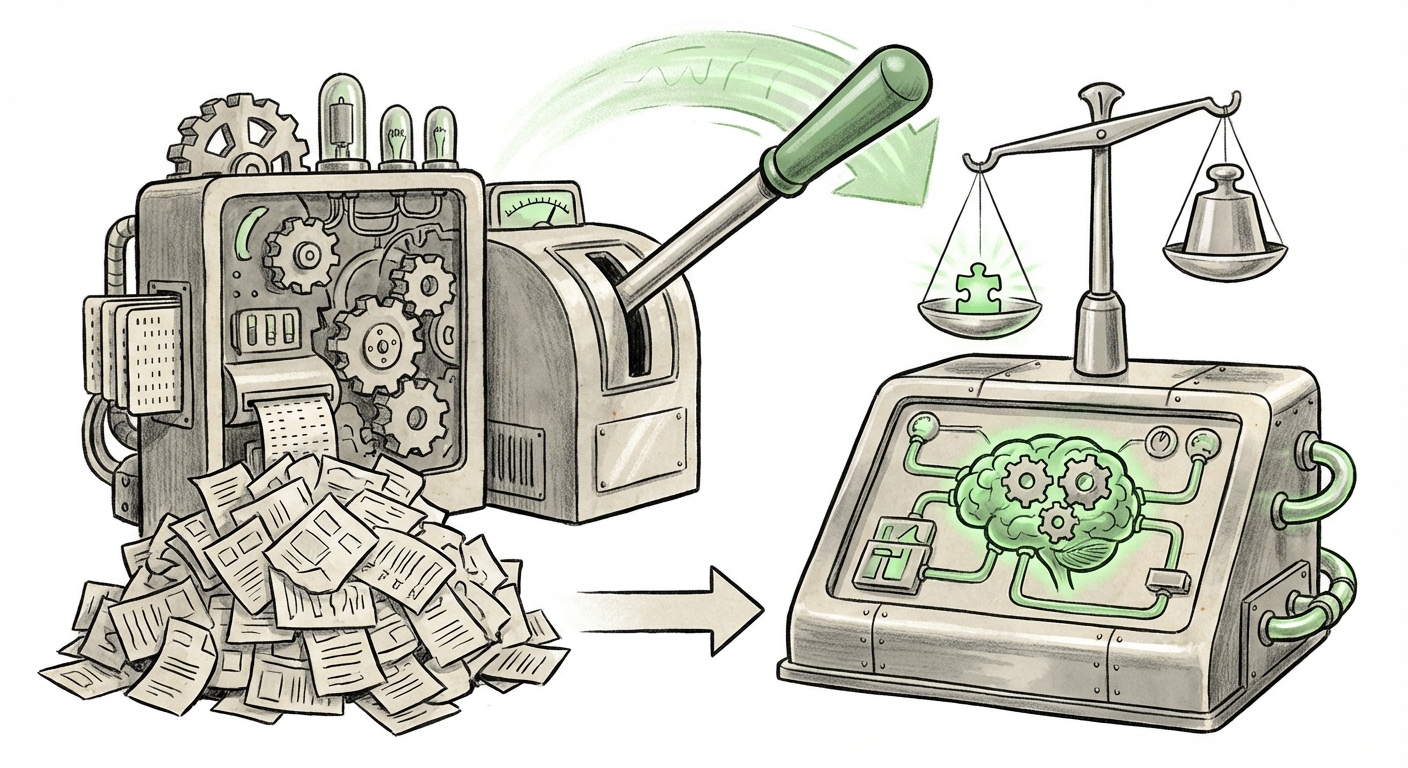

For years, the headline metric in the LLM space was scale: more parameters, larger datasets, bigger models. While size clearly contributed to fluency and general knowledge, true high-level intelligence (the ability to logically connect disparate pieces of information, solve multi-step problems, and apply abstract concepts to new situations) remained the industry's Everest. Gemini 3.1 Pro suggests we are finally gaining significant altitude on that mountain.

The Benchmark Barrier: Moving Beyond Fluff to Function

The initial reports surrounding Gemini 3.1 Pro say its performance has "more than doubled" on demanding reasoning benchmarks compared with its predecessor. This is the technical equivalent of a sports car suddenly gaining rocket boosters. But as the original reporting wisely cautions, benchmarks are precisely that: benchmarks.

For the technical audience—the engineers and data scientists building real-world applications—this number is deeply compelling. Reasoning ability (the capacity for planning, deduction, and counterfactual thinking) is the primary bottleneck preventing LLMs from handling truly mission-critical, end-to-end tasks. A model that excels here can move from being a brilliant writing assistant to a genuine co-pilot in fields like software development, advanced diagnostics, and regulatory compliance.

For the non-technical observer or the business leader, the translation is this: improved reasoning means the AI is less likely to confidently produce nonsensical or logically flawed answers. It implies a higher degree of trustworthiness in outputs that require deep, sequential thought.

Contextualizing the Leap: The Competitive Arena

Google’s move does not happen in a vacuum. It forces a direct confrontation with market leaders like OpenAI and Anthropic. To truly assess the significance, we must look at how this release stacks up in the ongoing AI arms race.

When evaluating new models, analysts naturally look for external validation against competitors, which means seeking articles that make direct comparisons:

- Search Focus: `"Gemini 3.1 Pro" vs GPT-4 reasoning benchmark comparison`

Such comparisons show whether Google has merely caught up or has established a new, if temporary, lead. If Gemini 3.1 Pro sets a new state of the art (SOTA) on reasoning tasks that require deep structural understanding (tasks where previous models often failed by hallucinating a logical bridge), it signals that the competition is now focusing on the same core challenge: cognitive depth over sheer breadth.
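
Because a benchmark score is only a sample of items, a reported gap between two models can partly reflect which questions happened to be included. One standard way to check this is a paired bootstrap: score both models on the same items, resample those items many times, and see whether the accuracy difference stays consistently above zero. The sketch below assumes you already have per-item correct/incorrect results for both models; the function name, inputs, and the 100-item example data are hypothetical and not tied to any vendor's tooling.

```python
import random
from typing import Sequence


def paired_bootstrap_ci(
    a_correct: Sequence[bool],
    b_correct: Sequence[bool],
    n_resamples: int = 10_000,
    alpha: float = 0.05,
    seed: int = 0,
) -> tuple[float, float, float]:
    """Return (observed_diff, ci_low, ci_high) for accuracy(A) - accuracy(B).

    Both score lists must cover the SAME benchmark items in the same order,
    so each resample reuses one set of item indices for both models.
    """
    if len(a_correct) != len(b_correct) or len(a_correct) == 0:
        raise ValueError("need two non-empty score lists of equal length")
    n = len(a_correct)
    # Per-item difference: +1 if only A is right, -1 if only B is right, else 0.
    diffs = [int(a) - int(b) for a, b in zip(a_correct, b_correct)]
    observed = sum(diffs) / n

    rng = random.Random(seed)
    # Mean difference of each resampled benchmark (items drawn with replacement).
    boot = sorted(
        sum(diffs[rng.randrange(n)] for _ in range(n)) / n
        for _ in range(n_resamples)
    )
    # Percentile interval: trim alpha/2 of the resampled means from each tail.
    k = int(alpha / 2 * n_resamples)
    return observed, boot[k], boot[n_resamples - 1 - k]


# Hypothetical per-item results on the same 100 reasoning items.
model_a = [True] * 60 + [False] * 40
model_b = [True] * 50 + [False] * 50
diff, low, high = paired_bootstrap_ci(model_a, model_b)
print(f"accuracy gap {diff:+.2f}, 95% CI [{low:+.2f}, {high:+.2f}]")
```

If the interval excludes zero, the gap is unlikely to be an accident of item selection. It says nothing, however, about whether the benchmark resembles your own workload, which is why the caution about benchmarks still applies.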

The Practical Implications: From Chatbot to Autonomous Agent

The shift to enhanced reasoning is the most significant driver for enterprise adoption since the initial release of powerful generative models. Why? Because complex business processes require robust logic, not just eloquent text generation.

If we are trying to understand the practical future, we must examine:

- Search Focus: `practical implications of LLM reasoning improvements for enterprise adoption`

The implications are transformative:

- Code Generation and Debugging: Current models write code snippets well. Reasoning-enhanced models can comprehend entire codebases, identify subtle logical errors across multiple files, and propose architectural fixes—moving toward autonomous software engineering.

- Financial Modeling and Risk Assessment: A model that can reason about dependencies, constraints, and probabilistic outcomes can build far more accurate financial simulations, going beyond simple descriptive analysis to predictive, causal modeling.

- Scientific Hypothesis Generation: In research, improved reasoning allows the model to connect findings from disparate papers, identify gaps in current knowledge, and propose novel, testable hypotheses that a human might overlook due to information overload.

For business leaders, this means the ROI calculation for AI adoption is fundamentally changing. We are moving past efficiency gains (faster content creation) toward capability gains (performing tasks previously impossible without a highly specialized human expert).

Architectural Underpinnings: The Why Behind the What

To be truly forward-looking, an analyst must ask how Google achieved this leap. Is it purely a matter of throwing more computational power at the problem (scaling laws), or is there an architectural innovation at play? This requires looking beneath the surface of the marketing announcements.

Our third search direction focuses here:

- Search Focus: `next generation AI model architecture focusing on reasoning over scale`

When models improve reasoning dramatically without necessarily doubling in size overnight, it often points toward smarter ways of training and structuring the neural network. This could involve:

- Better Training Regimens: Utilizing reinforcement learning from AI feedback (RLAIF) specifically targeted at logical consistency, rather than just human preference for style.

- Mixture-of-Experts (MoE) Optimization: More efficient routing mechanisms that send each token to the most suitable "expert" sub-networks within the model, so that more total capacity can be brought to bear on hard inputs without a proportional increase in compute per token.

- New Contextual Handling: Innovations in how the model maintains a persistent, complex "scratchpad" or working memory to manage intermediate steps in a logical chain, preventing mid-task drift.
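
The source does not describe Gemini 3.1 Pro's internals, so the following is only a generic illustration of the top-k gating idea behind the MoE bullet, not a description of Google's model. In a typical top-k MoE layer, a small router scores every expert for each token, only the k best-scoring experts run, and their outputs are blended using a softmax over the selected scores. All names, the four toy experts, and the two-dimensional "token" are hypothetical.

```python
import math
from typing import Callable, Sequence

Vector = list[float]
Expert = Callable[[Vector], Vector]


def softmax(xs: Sequence[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(
    token: Vector,
    gate_weights: Sequence[Vector],  # one router weight vector per expert
    experts: Sequence[Expert],
    k: int = 2,
) -> tuple[Vector, list[int]]:
    """Send one token to its k highest-scoring experts and blend their outputs."""
    # Router logit per expert: dot product of the token with that expert's gate vector.
    logits = [sum(w * x for w, x in zip(gw, token)) for gw in gate_weights]
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the selected logits only, so the k mixing weights sum to 1.
    mix = softmax([logits[i] for i in chosen])
    out = [0.0] * len(token)
    for weight, idx in zip(mix, chosen):
        expert_out = experts[idx](token)  # only the chosen experts are evaluated
        out = [o + weight * e for o, e in zip(out, expert_out)]
    return out, chosen


# Toy setup: 4 experts that each scale the token by a different factor.
gates = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
experts = [lambda v, s=s: [s * x for x in v] for s in (1.0, 2.0, 3.0, 4.0)]
output, used = top_k_route([2.0, 1.0], gates, experts, k=2)
print(used)  # → [0, 1]
```

Production MoE layers perform this routing with batched tensor operations and usually add a load-balancing term during training so that a few experts do not receive most of the traffic. The loop here only shows the routing logic.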

This pursuit of architectural refinement over brute-force scaling is the hallmark of a maturing field. It suggests that the next wave of breakthroughs will come from elegance and efficiency, rather than simply requiring more data centers.

The Future of AI: From Tools to Teammates

The "autonomous agent" has been a staple of AI hype for years, but models that merely execute simple, predefined scripts are not true agents. True autonomy requires sound judgment and robust reasoning.

The release of Gemini 3.1 Pro, read alongside analysis of its competitive standing and the technical shifts behind it, solidifies the direction for the next 18 months of AI development: the focus is now on reliability, logic, and verifiability.

Actionable Insights for Technology Leaders

For organizations investing heavily in AI transformation, these developments demand a strategic pivot:

- Re-evaluate Trust Thresholds: If a model doubles its reasoning performance, your organization should immediately re-assess which tasks it is willing to automate fully and which still require mandatory human-in-the-loop verification. Tasks previously deemed too complex might now be viable for limited autonomy.

- Invest in Prompt Engineering for Logic: Simple prompts will not unlock advanced reasoning. Engineers must develop chain-of-thought (CoT) and self-correction prompts that put the model through its logical paces, capitalizing on its new strengths.

- Demand Transparent Benchmarking: When evaluating vendors, move beyond general accuracy scores. Demand performance data on specific, industry-relevant reasoning challenges—ideally problems where previous models failed spectacularly.
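
The first and third recommendations above can be combined into a simple, repeatable policy: grade a candidate model on your own reasoning tasks, group the results by task family, and let per-family accuracy decide how much oversight each family keeps. The sketch below assumes you already have graded results. The thresholds (98% and 85%), the 50-item minimum, the tier names, and the example task families are illustrative choices, not an industry standard.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EvalItem:
    category: str  # task family, e.g. "multi-step tax reasoning" (hypothetical)
    correct: bool  # did the model's answer match the reference answer?


def automation_tiers(
    results: list[EvalItem],
    autonomy_threshold: float = 0.98,  # illustrative cut-off, tune per risk
    assist_threshold: float = 0.85,    # illustrative cut-off, tune per risk
    min_items: int = 50,               # below this, evidence is too thin
) -> dict[str, str]:
    """Map each task family to 'autonomous', 'human-in-the-loop', or 'manual'."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for item in results:
        totals[item.category] += 1
        hits[item.category] += int(item.correct)

    tiers: dict[str, str] = {}
    for category, n in totals.items():
        accuracy = hits[category] / n
        if n < min_items:
            tiers[category] = "manual"  # too few graded items to relax oversight
        elif accuracy >= autonomy_threshold:
            tiers[category] = "autonomous"
        elif accuracy >= assist_threshold:
            tiers[category] = "human-in-the-loop"
        else:
            tiers[category] = "manual"
    return tiers


# Hypothetical graded results for two task families.
results = (
    [EvalItem("contract clause extraction", True)] * 99
    + [EvalItem("contract clause extraction", False)] * 1
    + [EvalItem("multi-step tax reasoning", True)] * 45
    + [EvalItem("multi-step tax reasoning", False)] * 15
)
print(automation_tiers(results))
```

Re-running this after every model upgrade turns "re-evaluate trust thresholds" into a recorded check rather than a one-off judgment. Accuracy alone ignores the cost of an error, so a family where a single mistake is expensive may deserve a stricter threshold than the defaults here.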

The future of AI is not just about generating more content; it is about generating better decisions. While the initial article was right to note that benchmarks are just benchmarks, the magnitude of the reported improvement in Gemini 3.1 Pro suggests this is a fundamental step toward reliable, complex artificial cognition. We are transitioning from models that imitate intelligence to models that are demonstrably using it.

This new era demands that developers and businesses alike stop asking, "What can AI talk about?" and start asking, "What can AI reliably solve?" The answer, increasingly, is complex problems that require genuine thought.

Further context on competitive positioning can be found by searching for analyses similar to: "Google’s Gemini 3.1 Pro is out—and it’s a serious challenger to OpenAI and Anthropic" (Representative competitive analysis).

For strategic planning, explore white papers on operational impact, for example by searching for `practical implications of LLM reasoning improvements for enterprise adoption` (representative Gartner/MIT Technology Review-style analysis).

Deeper technical insight into architectural evolution is often found where researchers discuss it directly; a useful search is `next generation AI model architecture focusing on reasoning over scale` (representative research blog or arXiv summary).