The New Regulatory Wild West: Why Meta’s $65M State Spending Signals AI’s Political Future

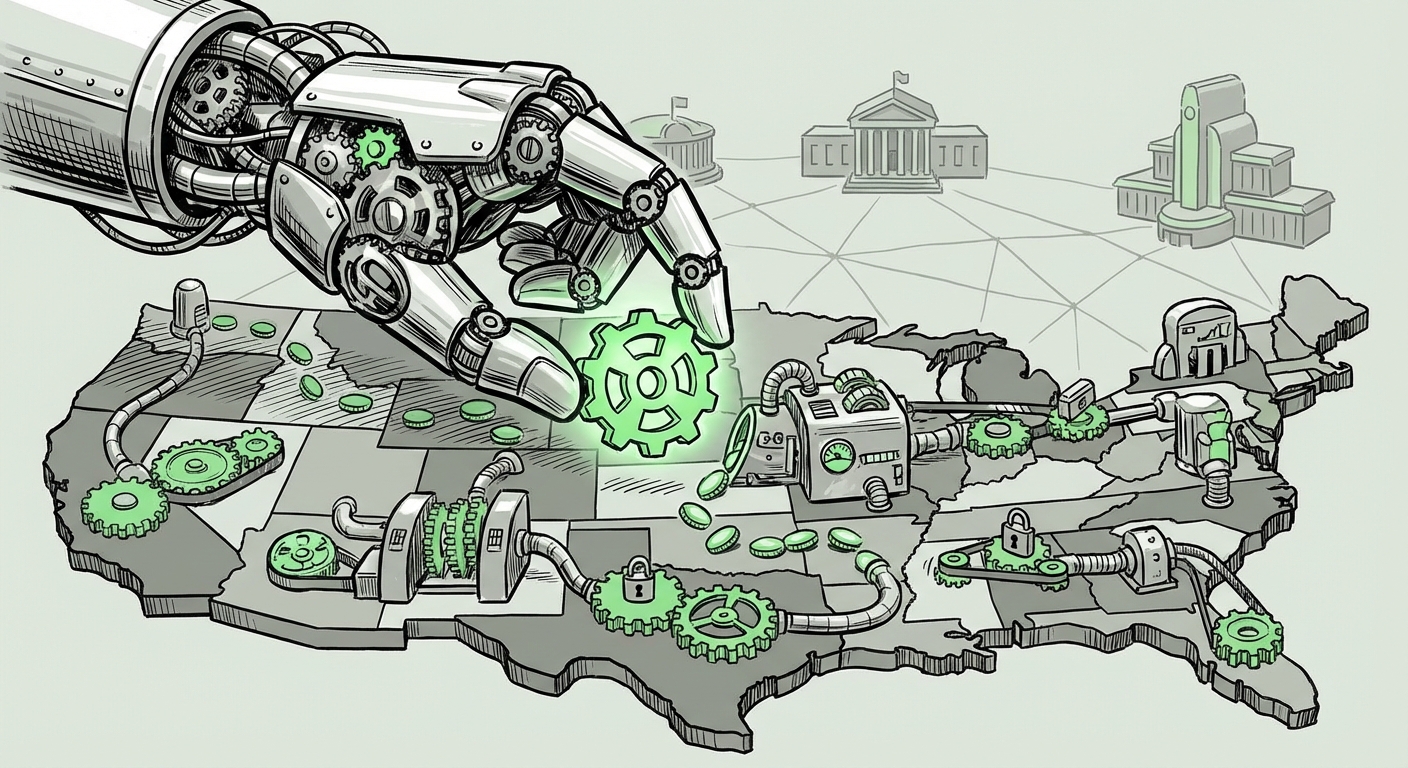

The artificial intelligence revolution is moving faster than federal lawmakers can keep up. While Congress debates a sweeping, long-term framework for AI safety and governance, the immediate action is happening at the state level. This is where the rules governing everything from data usage in model training to the deployment of autonomous systems are being written today.

The recent report detailing Meta’s investment of **\$65 million to influence state-level elections, specifically backing politicians deemed 'AI-friendly,'** is not just a financial footnote; it is a landmark strategic declaration. It signals that Big Tech recognizes the decentralized nature of emerging regulation and is moving decisively to shape its environment from the ground up. For technology analysts, this means the future of AI deployment will be a patchwork quilt of state laws, rather than a single national standard.

The Decoupling: Why States Matter More Than Ever

For years, major tech policy battles—privacy, content moderation, antitrust—were fought in the halls of the U.S. Capitol. Today, the gridlock in Congress regarding AI has created a vacuum, and state governments are rushing to fill it. When the federal government hesitates, states step in to address local anxieties.

This decentralized regulatory environment presents both risks and opportunities for AI developers. A restrictive California law on data usage might clash with a permissive data environment in Texas. This fragmentation requires companies like Meta to move beyond a single, national lobbying effort and engage in a complex, multi-jurisdictional campaign.

Contextualizing the Spend: The State-Level Policy Surge

Our investigation into the current legislative environment confirms this strategic pivot. We are seeing a significant uptick in bills related to AI governance across various statehouses:

- Deepfakes and Misinformation: States are rapidly passing laws governing synthetic media, especially concerning elections. Politicians want demonstrable action against deceptive content before election cycles.

- Algorithmic Accountability: States are beginning to target how AI is used in high-stakes decisions, such as credit scoring, insurance pricing, or even sentencing guidelines. These efforts involve mandates for auditing and transparency, directly affecting Meta's ability to deploy its models in sensitive areas.

- Data and Privacy Overlap: While a comprehensive federal privacy law remains elusive, states such as Virginia, Colorado, and Utah have enacted comprehensive data rights frameworks. Because AI models rely heavily on vast datasets, changes in state-level rules on data access can fundamentally alter the economics of training large models.

Meta’s \$65 million investment is essentially an insurance premium against a wave of potentially contradictory or restrictive state legislation. They are prioritizing candidates who understand the technical necessities of AI innovation over those who might champion sweeping, but potentially stifling, consumer protection measures.

The Bigger Picture: Lobbying Trends and Financial Footprints

To truly appreciate the scale of this move, we must place it within the broader context of Big Tech political spending. While \$65 million earmarked for AI-friendly candidates is remarkable, it reflects an escalating trend:

Escalating Tech Lobbying at the Local Level

Historically, tech lobbying focused heavily on federal agencies and powerful committees. Now, contributions are spreading to state legislative races, judicial races, and local regulatory bodies. This shift is driven by necessity: federal regulation is slow, while state politics are immediate.

As broader tech scrutiny continues (regarding antitrust, content moderation, and platform liability), AI represents the next major regulatory frontier. Companies realize that if they can secure a favorable regulatory climate in the top 10 most populous or economically significant states, they can effectively create a de facto national standard—or at least inoculate themselves against the worst potential outcomes until (or unless) federal action materializes.

This mirrors the broader political finance landscape, where Big Tech lobbying spending in 2024 has often been characterized by a targeted, nuanced approach that focuses on emerging technology niches rather than broad regulatory categories.

The Core Conflict: Intellectual Property and Generative AI

Perhaps the most immediate, high-stakes fight for Meta and other generative AI developers concerns intellectual property (IP). Generative models like those powering image and text creation are trained on massive swathes of the internet, often including copyrighted material.

The IP Tightrope Walk

Artists, writers, and news organizations are demanding compensation, or regulatory frameworks that limit the use of their work for training. If state legislatures begin passing laws that aggressively empower creators to sue or demand licensing fees for training data, testing how far states can go before running into federal copyright preemption, the cost and feasibility of developing leading LLMs could skyrocket.

Therefore, Meta is not just funding general support; it is likely backing candidates who favor an interpretation of "fair use" that is friendly to technology development, or who prefer to keep IP debates squarely within federal courts rather than state-level common law challenges. This focus is critical to the long-term economic model of generative AI.

Societal Anxiety as a Political Driver

It is naive to think that large corporate spending occurs in a political vacuum. Meta’s strategy is directly responding to—and attempting to manage—significant public concern over AI.

The Public’s Fear and the Politicians’ Response

Surveys consistently show a rising tide of public unease about AI, often centering on two key areas: **job displacement** and the proliferation of **synthetic misinformation (deepfakes)**. When the public is worried, politicians feel compelled to *act*. This pressure translates into proposed legislation, which, if hastily written, can severely restrict innovation.

Meta’s investment aims to inject a counter-narrative into these crucial races: the narrative that responsible innovation requires a light touch, or at least an informed regulatory framework that doesn't kill the technology before it matures. By backing "AI-friendly" candidates, they secure allies who will advocate for nuance—stressing economic benefits and safety through industry self-regulation, rather than broad government mandates based on fear.

What This Means for the Future of AI and Technology Adoption

The \$65 million investment is a powerful signal. It confirms that the governance of artificial intelligence will be characterized by **fragmentation, strategic state-level focus, and intense financial influence.**

1. The Regulatory Patchwork

For businesses relying on AI (a rapidly growing share of all businesses), the future is complex. Instead of navigating one set of federal rules, they may have to master as many as 50 different sets. This increases compliance costs, slows deployment in less mature markets, and favors large organizations like Meta that can afford massive legal and lobbying teams to track every state bill.

Practical Implication: Companies must immediately prioritize **regulatory mapping** at the state level. Compliance teams need AI-specific expertise for state privacy laws, bias auditing requirements, and consumer notification mandates. The ability to quickly adapt AI tools to local standards will become a competitive advantage.
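What "regulatory mapping" means in practice can be sketched as a lookup from jurisdiction to obligations, checked against where each AI use case is deployed. The minimal Python sketch below assumes a hand-maintained table; the jurisdiction names (`STATE_A` and so on), the obligation labels, and the `obligations_for` helper are hypothetical placeholders, not summaries of any actual state law.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Obligation:
    """One tracked compliance requirement (hypothetical label, not a real statute)."""
    name: str
    applies_to: frozenset  # AI use cases the requirement covers


# Hypothetical regulatory map: jurisdiction -> obligations. Placeholder data only.
REGULATORY_MAP = {
    "STATE_A": [
        Obligation("bias_audit", frozenset({"lending", "insurance"})),
        Obligation("consumer_notice", frozenset({"lending", "insurance", "chatbot"})),
    ],
    "STATE_B": [
        Obligation("training_data_opt_in", frozenset({"model_training"})),
    ],
    "STATE_C": [],  # mapped, but no AI-specific obligations tracked yet
}


def obligations_for(deployments: dict) -> dict:
    """For each jurisdiction, list the obligations triggered by the use cases deployed there.

    Unmapped jurisdictions simply return an empty list in this sketch; a real
    tool should flag them for legal review instead.
    """
    result = {}
    for state, use_cases in deployments.items():
        result[state] = sorted(
            ob.name
            for ob in REGULATORY_MAP.get(state, [])
            if ob.applies_to & set(use_cases)  # any overlap triggers the obligation
        )
    return result


plan = {
    "STATE_A": {"chatbot"},
    "STATE_B": {"model_training", "chatbot"},
    "STATE_C": {"lending"},
}
print(obligations_for(plan))
# {'STATE_A': ['consumer_notice'], 'STATE_B': ['training_data_opt_in'], 'STATE_C': []}
```

Keeping the map as data rather than scattered `if` statements means a newly passed bill becomes a table update that compliance and engineering teams can review together.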

2. Innovation vs. Constraint

The outcome of these state races will determine the speed of AI innovation. If "AI-friendly" candidates win, we can expect a more permissive environment focused on light-touch regulation, encouraging rapid deployment in fields like healthcare diagnostics or autonomous logistics, provided they respect core data rights.

Conversely, if candidates favoring stringent preemptive regulation win, innovation in those specific states might slow down, pushing cutting-edge development into friendlier jurisdictions. This creates the risk of regulatory arbitrage, where the most advanced—and potentially riskiest—AI is developed entirely outside the oversight of highly regulated states.

3. The Erosion of Federal Primacy

When corporations invest this heavily at the state level, they inadvertently diminish the future authority of federal regulators. If states pass diverse and conflicting laws, any future federal law seeking to "harmonize" the landscape will face immense resistance from entrenched state interests. This spending locks in state influence, making it harder for Washington to impose a unified framework later.

Actionable Insight for Policymakers: Current federal policy discussions must now account for the existing, deep political investment at the state level. Ignoring state-level victories achieved through this spending will lead to protracted legal battles and legislative gridlock.

Actionable Insights for the AI Ecosystem

The AI landscape is no longer purely technical; it is deeply political. Businesses and researchers must adapt their strategies accordingly:

- Invest in Local Policy Advocacy: Beyond national tech trade groups, small and medium-sized AI firms need dedicated resources to monitor and engage with state legislative sessions. Your product might be fantastic, but if the local zoning law for algorithmic deployment bans it, the technology remains hypothetical.

- Design for Interoperability: Build AI systems with modular compliance layers. The system should be able to swap out its data processing pipeline quickly when it moves from a state with strict opt-in requirements to a more permissive one. Flexibility is survival.

- Focus on Transparency as a Political Shield: While lobbying shapes the rules, building public trust remains essential. Companies that proactively embrace transparent audits (even if not strictly mandated yet) can neutralize political attacks driven by public fear, regardless of which candidates win office.
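The modular compliance layer described under "Design for Interoperability" can be sketched with a simple strategy pattern: each jurisdiction is wired to a data policy object, and the pipeline asks that object which records it may use. In the minimal Python sketch below, `UserRecord`, `OptInPolicy`, `OptOutPolicy`, the `POLICIES` table, and the state names are all hypothetical; which regime a real state falls under is a legal question the code cannot answer.

```python
from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class UserRecord:
    user_id: str
    consent: Optional[bool]  # True = opted in, False = opted out, None = never asked


class DataPolicy(Protocol):
    def admit(self, record: UserRecord) -> bool: ...


class OptInPolicy:
    """Strict regime (hypothetical): only explicitly opted-in records may be used."""
    def admit(self, record: UserRecord) -> bool:
        return record.consent is True


class OptOutPolicy:
    """Permissive regime (hypothetical): use records unless the user opted out."""
    def admit(self, record: UserRecord) -> bool:
        return record.consent is not False


# Hypothetical jurisdiction -> policy wiring; the real mapping is a legal judgment.
POLICIES: dict = {
    "STATE_A": OptInPolicy(),
    "STATE_B": OptOutPolicy(),
}


def build_training_set(records, jurisdiction):
    """Return the user IDs whose records the jurisdiction's policy admits."""
    # Fail closed: an unmapped jurisdiction gets the strictest policy.
    policy: DataPolicy = POLICIES.get(jurisdiction, OptInPolicy())
    return [r.user_id for r in records if policy.admit(r)]


records = [UserRecord("u1", True), UserRecord("u2", None), UserRecord("u3", False)]
print(build_training_set(records, "STATE_A"))  # ['u1']
print(build_training_set(records, "STATE_B"))  # ['u1', 'u2']
print(build_training_set(records, "STATE_Z"))  # ['u1']
```

Two design choices carry most of the weight here. Moving to a new jurisdiction only changes which policy object is looked up, so the rest of the pipeline stays untouched, and an unmapped jurisdiction falls back to the strictest policy (failing closed) rather than the most permissive one.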

Meta's \$65 million expenditure is more than a campaign contribution; it is a textbook example of strategic foresight in the age of digital governance. They are not waiting for the storm of regulation; they are actively seeding the ground to ensure that when the rain comes, it nurtures—rather than drowns—their technological aspirations. For the rest of the industry, this spending confirms a critical truth: mastering the algorithm now requires mastering the state legislator.