The Imperative Shift: Mastering Post-Training AI Interpretability in the Age of Black Box Models

For years, the promise of truly understandable Artificial Intelligence existed primarily in the realm of theory or within controlled laboratory settings. When building smaller, specialized models, we could often "look inside" the mechanics—understanding precisely why a decision was made. However, with the explosion of modern foundation models, particularly Large Language Models (LLMs) containing billions, even trillions, of parameters, this traditional approach has broken down. The discussion, as highlighted by recent critiques like "The Sequence Opinion #810," is shifting critically: If we cannot interpret these colossal systems during training, we must become experts at understanding them after they are deployed.

This transition from pre-training interpretability (designing for clarity) to post-training interpretability (auditing for safety and compliance) is arguably the most significant governance challenge facing AI today. It moves interpretability from a niche academic pursuit into a core requirement for industry survival and public trust.

The End of Pre-Training Comfort

Historically, interpretability methods like examining feature importance or visualization tools were baked into the development lifecycle. If a simpler model (like a decision tree) was used, transparency was inherent. If a neural network was used, researchers often employed techniques like LIME or SHAP to get local explanations for specific predictions during the testing phase.

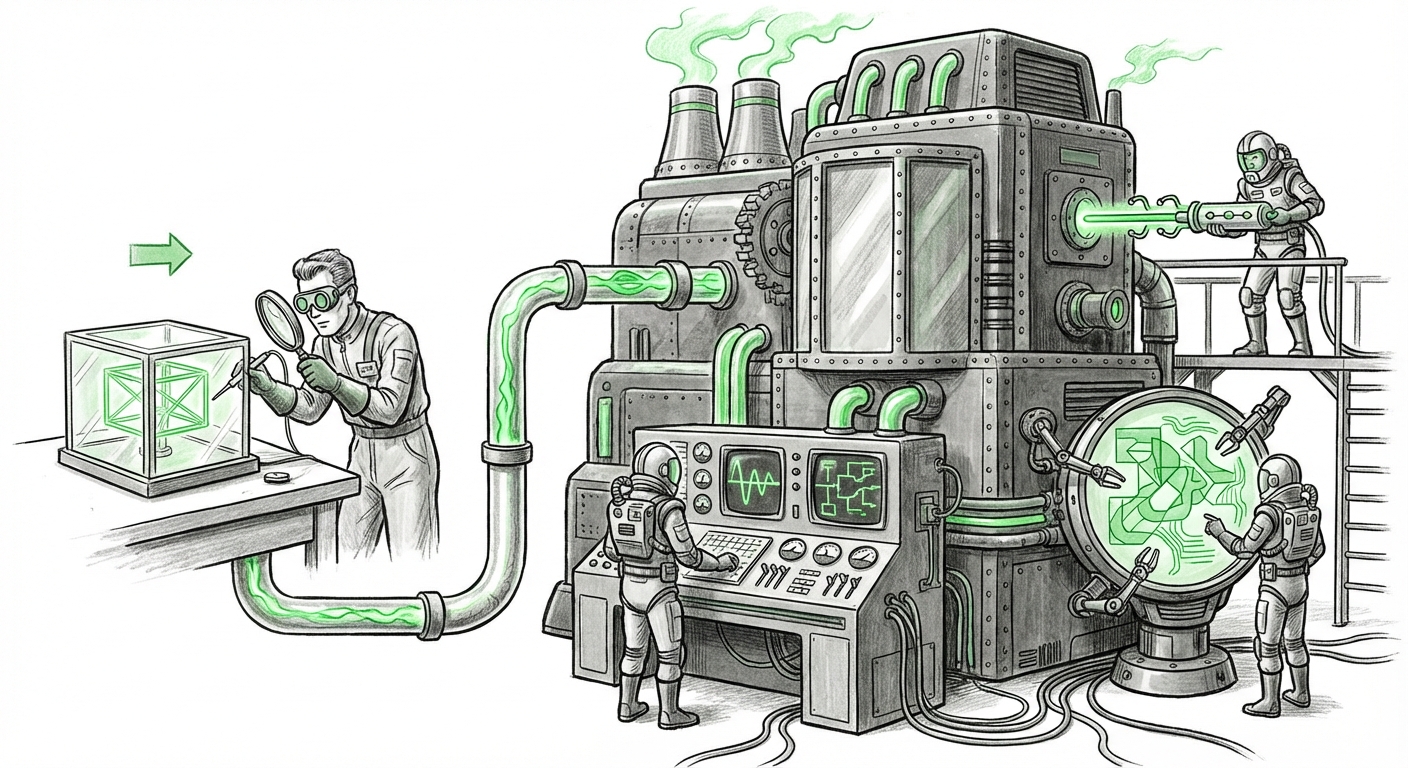

But today's frontier models, the generative engines driving everything from code completion to strategic content creation, are too vast and too complex for these early-stage checks to suffice. In practice, they behave as black boxes. We train them on vast portions of the internet, and they emerge with behaviors we did not explicitly program. The question is no longer, "Can we design it to be transparent?" but rather, "How do we safely govern what is already running?"

This need to dissect an opaque system after it has learned its knowledge base forms the core of the post-training imperative.

The Three Pillars Driving the Post-Training Focus

Our analysis, corroborated by current industry and regulatory trends, suggests this shift is being driven by three interlocking forces: regulatory mandates, technical necessity, and the sheer scale of current deployments.

Pillar 1: The Regulatory Hammer Drops (The "Why Now")

The leisurely pace of purely academic exploration is over. Governments worldwide are formalizing rules that demand accountability from deployed AI systems. For businesses, this means interpretability is rapidly becoming a compliance checkpoint, not just a best practice.

Specifically, initiatives like the EU AI Act target high-risk AI systems, demanding traceability, transparency, and documentation regarding the system's decision-making process even after it’s in the market. If a bank uses an LLM to assess loan applications, regulators need assurance that the model is not relying on prohibited proxy features (like zip codes that correlate with race). This assurance cannot come from pre-training documentation alone; it requires continuous, post-deployment auditing.
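One simple form such a post-deployment check could take is a comparison of outcome rates across groups in the logged decisions. The sketch below is illustrative only: the record layout, the helper names `approval_rates` and `parity_gap`, and the sample log are assumptions, not requirements drawn from the EU AI Act, and a real audit would use far more data and more than one fairness measure.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) records."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}

def parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())

# Hypothetical audit log: (group label, loan approved?)
log = [("A", True), ("A", True), ("A", False), ("A", True),
       ("B", True), ("B", False), ("B", False), ("B", False)]

rates = approval_rates(log)
gap = parity_gap(log)
print(f"approval rates: {rates}")
print(f"parity gap: {gap:.2f}")
```

A large gap does not by itself prove that the model relies on a prohibited proxy, but it flags where deeper investigation is warranted.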

This regulatory push forces organizations to implement post-deployment explainability mechanisms simply to avoid massive fines or market exclusion. For policy makers and compliance teams, the focus must shift to verifying adherence to established rules, regardless of the model's internal architecture.

Pillar 2: Unlocking the Black Box with Mechanistic Interpretability (The "How To")

How do researchers actually go inside a finished LLM? The technical frontier here is exciting, focusing on methods to probe the model’s internal representations—the mathematical 'thoughts' the model has between input and output. This is often referred to as Mechanistic Interpretability.

Instead of just asking, "Why did you say X?", researchers are asking, "What specific neurons or computational pathways fired to generate X?" Techniques involve creating small 'probes'—secondary, simpler models—trained to predict human-understandable concepts (like sentiment, factual recall, or even deceptive intent) based on the frozen internal states (activations) of the main black box model.
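The probing idea can be sketched in a few lines. The example below trains a logistic-regression probe on synthetic vectors that stand in for frozen activations; the data generator, the four-dimensional "concept direction", and all function names are assumptions made for illustration, since real probes are trained on activations captured from an actual model layer.

```python
import math
import random

def sigmoid(z):
    # Numerically safe logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_probe(acts, labels, epochs=200, lr=0.1):
    """Fit a logistic-regression probe on frozen activations with SGD."""
    dim = len(acts[0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        for x, y in zip(acts, labels):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of the log loss with respect to the logit
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
            b -= lr * err
    return w, b

def probe_accuracy(w, b, acts, labels):
    """Fraction of examples where the probe's prediction matches the label."""
    hits = sum(
        (sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) > 0.5) == bool(y)
        for x, y in zip(acts, labels)
    )
    return hits / len(labels)

def synthetic_activations(n, rng):
    """Stand-in for activations captured from a frozen model layer."""
    direction = [1.0, -1.0, 0.5, 0.0]  # hypothetical concept direction
    acts, labels = [], []
    for _ in range(n):
        x = [rng.gauss(0, 1) for _ in direction]
        score = sum(d * xi for d, xi in zip(direction, x)) + rng.gauss(0, 0.1)
        acts.append(x)
        labels.append(1 if score > 0 else 0)
    return acts, labels

rng = random.Random(0)
train_x, train_y = synthetic_activations(200, rng)
test_x, test_y = synthetic_activations(100, rng)
w, b = train_probe(train_x, train_y)
held_out_accuracy = probe_accuracy(w, b, test_x, test_y)
print(f"held-out probe accuracy: {held_out_accuracy:.2f}")
```

High held-out accuracy shows only that the concept is linearly decodable from the activations. It does not show that the main model actually uses that information, which is why probe results are usually paired with control tasks or interventions.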

Leading organizations, such as Anthropic, are deeply invested in this area. Their work in identifying and mapping specific "circuits" within their models that perform specific tasks—for instance, identifying the pathways responsible for simulating deception or exhibiting specific biases—is crucial. As noted in their research exploring how internal features are learned, these techniques allow us to map high-level behaviors back to tangible, internal mechanisms, effectively reverse-engineering the learned knowledge structure post-training (Anthropic's Blog on Mechanistic Interpretability).

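For technical audiences, this is the new arms race: building better probes that yield genuine internal diagnostics rather than simple input/output analysis. Probes of this kind need access to a model's activations, so they apply to open-weight or in-house models; closed or proprietary APIs that expose only inputs and outputs limit auditors to behavioral testing.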

Pillar 3: The Scalability Nightmare (The Reality Check)

Even if we have the best tools, we face the overwhelming constraint of size. A trillion-parameter model requires immense computational resources simply to run once. Analyzing every possible pathway or every internal activation state is computationally prohibitive.

Traditional post-hoc explanation methods become incredibly expensive or impractical at this scale. Perturbation-based methods (like LIME or SHAP) need many forward passes per explanation, and the input space of natural language is effectively limitless. Gradient-based methods (like Integrated Gradients) need access to model internals, which an opaque deployment may not provide. The sheer cost limits what auditing firms, or even the original developers, can afford to do on a continuous basis.
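To make the cost concrete, here is a minimal sketch of the simplest perturbation method, leave-one-token-out occlusion, with a counter on model calls. The `toy_score` function is a hypothetical stand-in for an expensive model, and the `[MASK]` placeholder is an assumption; the point is only how the number of calls grows with input length.

```python
def occlusion_attribution(score_fn, tokens, mask="[MASK]"):
    """Leave-one-out attribution: score drop when each token is masked.

    Returns (attributions, number_of_model_calls).
    """
    calls = 0

    def call(toks):
        nonlocal calls
        calls += 1
        return score_fn(toks)

    baseline = call(tokens)
    attributions = []
    for i in range(len(tokens)):
        occluded = tokens[:i] + [mask] + tokens[i + 1:]
        attributions.append(baseline - call(occluded))
    return attributions, calls

# Toy stand-in for an expensive model: counts flagged words.
def toy_score(tokens):
    flagged = {"deny", "reject"}
    return float(sum(t in flagged for t in tokens))

tokens = "please reject the loan application".split()
attr, calls = occlusion_attribution(toy_score, tokens)
print(attr)   # [0.0, 1.0, 0.0, 0.0, 0.0]
print(calls)  # 6
```

Even this crude method needs one forward pass per token plus a baseline. Exact Shapley values consider every subset of tokens, which is 2^n coalitions for n tokens, so practical SHAP implementations rely on sampling, and each sample is still a full pass through the model.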

This challenge forces a trade-off: We must prioritize *where* we look. Instead of full introspection, we focus on targeted auditing of high-risk functions or adversarial inputs, seeking the most impactful insights for the lowest computational cost. This pragmatic focus on efficiency, rather than exhaustive analysis, is defining the future of large-scale model auditing.
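One way to picture this prioritization is as a budgeted selection problem. The sketch below ranks candidate audits by risk per unit of compute cost and picks greedily until the budget runs out; the audit names, risk scores, costs, and the greedy heuristic itself are all illustrative assumptions rather than an established auditing standard.

```python
def plan_audits(candidates, budget):
    """Greedily pick audits by risk-per-cost until the budget is spent.

    candidates: list of (name, risk_score, cost) tuples.
    Returns (chosen_names, total_cost_spent).
    """
    ranked = sorted(candidates, key=lambda c: c[1] / c[2], reverse=True)
    chosen, spent = [], 0.0
    for name, risk, cost in ranked:
        if spent + cost <= budget:
            chosen.append(name)
            spent += cost
    return chosen, spent

# Hypothetical audit candidates: (name, risk score, compute cost)
candidates = [
    ("safety_filter_bypass", 9.0, 30.0),
    ("loan_decision_prompts", 8.0, 20.0),
    ("general_chat_tone", 2.0, 25.0),
    ("adversarial_prompt_suite", 7.0, 40.0),
]
chosen, spent = plan_audits(candidates, budget=60.0)
print(chosen)  # ['loan_decision_prompts', 'safety_filter_bypass']
print(spent)   # 50.0
```

A greedy ratio rule is not guaranteed to be optimal, but it is easy to explain to a governance board, which matters when the plan itself must be auditable.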

Implications for the Future of AI Development and Deployment

The pivot to post-training interpretability fundamentally changes how AI will be adopted across the enterprise and society.

For Developers and Researchers: The Rise of the AI Auditor

The job description for AI professionals is evolving. It's no longer enough to achieve state-of-the-art performance metrics (like high accuracy or fluency). Engineers must now demonstrate that the system is safe, auditable, and compliant at the point of deployment. This means interpretability tooling must become a standard component of MLOps pipelines, running automated checks against model outputs based on regulatory triggers.
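An automated check of this kind could be as simple as a gate that compares audit metrics against thresholds before release. The following is a sketch under stated assumptions: the `AuditResult` type, the metric names, and every threshold are hypothetical, and real thresholds would come from an organization's own policy and the applicable regulation.

```python
from dataclasses import dataclass

@dataclass
class AuditResult:
    name: str
    value: float
    threshold: float
    higher_is_better: bool = False

    @property
    def passed(self):
        # Metrics like accuracy must meet a floor; metrics like a
        # bias gap must stay under a ceiling.
        if self.higher_is_better:
            return self.value >= self.threshold
        return self.value <= self.threshold

def release_gate(results):
    """Return (ok, failures): ok is True only if every audit passes."""
    failures = [r.name for r in results if not r.passed]
    return (not failures), failures

# Hypothetical audit metrics for one release candidate
results = [
    AuditResult("parity_gap", value=0.04, threshold=0.10),
    AuditResult("toxicity_rate", value=0.02, threshold=0.01),
    AuditResult("refusal_on_unsafe_prompts", value=0.97, threshold=0.95,
                higher_is_better=True),
]
ok, failures = release_gate(results)
print(ok, failures)  # False ['toxicity_rate']
```

In a pipeline, a failed gate would typically block the deployment step and attach the list of failures to the release record.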

Furthermore, the future will likely see a bifurcation: smaller, purpose-built models might remain relatively transparent, while the massive general-purpose models will be treated as necessary, powerful utilities that require significant external or internal oversight teams dedicated solely to interpretation and vulnerability assessment.

For Businesses: Risk Management Becomes Model Management

Businesses deploying high-stakes AI (e.g., in finance, healthcare, or hiring) can no longer delegate responsibility by claiming ignorance of the black box. Post-training interpretability is now the mechanism for quantifying and mitigating liability. If an adverse event occurs, the ability to trace that failure back to a specific set of learned parameters or to the influence of corrupted training data becomes essential for legal defense and remediation.

This necessity drives demand for third-party AI auditing services that specialize in running these deep, post-hoc diagnostic techniques. Model governance moves from merely monitoring uptime and latency to actively probing for hidden toxicities or discriminatory pathways.

For Society: Trust Built on Verifiable Safety

Public trust in advanced AI hinges on its perceived reliability. When an AI system makes a catastrophic error—say, spreading harmful misinformation or causing financial havoc—the immediate societal demand is for an explanation. If developers cannot provide a verifiable reason based on the model’s learned behavior, trust erodes rapidly.

Post-training analysis provides the necessary bridge between opaque algorithmic power and human accountability. It allows us to move past blaming the software to understanding the specific failure modes that need patching, retraining, or decommissioning.

Actionable Insights: Navigating the Post-Training Landscape

How can organizations prepare for this new era where governing the deployed system is as important as building it?

- Mandate Interpretability Benchmarks: Integrate standardized post-hoc auditing scores into your MLOps release criteria. If a model cannot pass basic probing tests for bias or alignment after training, it should not ship.

- Invest in External vs. Internal Probing: If you are using third-party foundation models (via API), invest heavily in data logging and output analysis to build your own external interpretability layer. If you train models in-house, dedicate compute cycles specifically to deep mechanistic probing research.

- Focus on High-Leverage Interventions: Given the scalability limits, focus scarce interpretability resources on known high-risk areas (e.g., safety filters, adversarial prompts, critical decision points) rather than trying to map the entire knowledge graph. Target the highest risk first.

- Engage with Policy Early: Understand how forthcoming regulations, like the EU AI Act, will define "explainability" in your sector. Build audit trails now that satisfy future compliance requirements, even if the current operational demands are lower.
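For teams relying on third-party APIs, the logging and audit-trail points above can start with something as modest as a tamper-evident record of each interaction. The sketch below chains records with SHA-256 hashes so that later edits are detectable; the record fields, the `vendor-model-v1` identifier, and the sample prompts are assumptions, and timestamps are left out only to keep the example deterministic.

```python
import hashlib
import json

FIELDS = ("model_id", "prompt", "output", "prev_hash")

def _digest(body):
    # Hash a canonical JSON encoding so key order cannot change the result
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

def append_record(log, prompt, output, model_id):
    """Append a record whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {"model_id": model_id, "prompt": prompt,
            "output": output, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})
    return log

def verify_chain(log):
    """Recompute every hash; False if any record was altered or reordered."""
    prev_hash = "0" * 64
    for rec in log:
        body = {k: rec[k] for k in FIELDS}
        if rec["prev_hash"] != prev_hash or rec["hash"] != _digest(body):
            return False
        prev_hash = rec["hash"]
    return True

log = []
append_record(log, "Assess application 17", "Refer to human review",
              "vendor-model-v1")
append_record(log, "Assess application 18", "Approve", "vendor-model-v1")
intact = verify_chain(log)

log[0]["output"] = "Approve"  # simulate after-the-fact tampering
tampered_ok = verify_chain(log)
print(intact, tampered_ok)  # True False
```

A log like this supports output analysis today and gives auditors a way to confirm later that the evidence was not rewritten.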

Conclusion: Interpreting the Inevitable

The era of accepting powerful black boxes on blind faith is drawing to a close. The trajectory of AI innovation, coupled with increasing regulatory scrutiny, makes the shift to post-training interpretability not just advisable, but inevitable. It is a complex challenge that demands breakthroughs in mechanistic understanding, must contend with astronomical scale, and calls for external governance.

The future of responsible AI deployment lies not in forcing every massive neural network to become transparent like a glass box, but in developing sophisticated, scalable tools that allow us to confidently audit and govern the opaque machinery we unleash upon the world. Mastering this post-training analysis is the key differentiator between AI that scales responsibly and AI that collapses under the weight of its own incomprehensibility.