The AI Security Shockwave: Why Anthropic's New Tool Is Forcing a Cybersecurity Reckoning

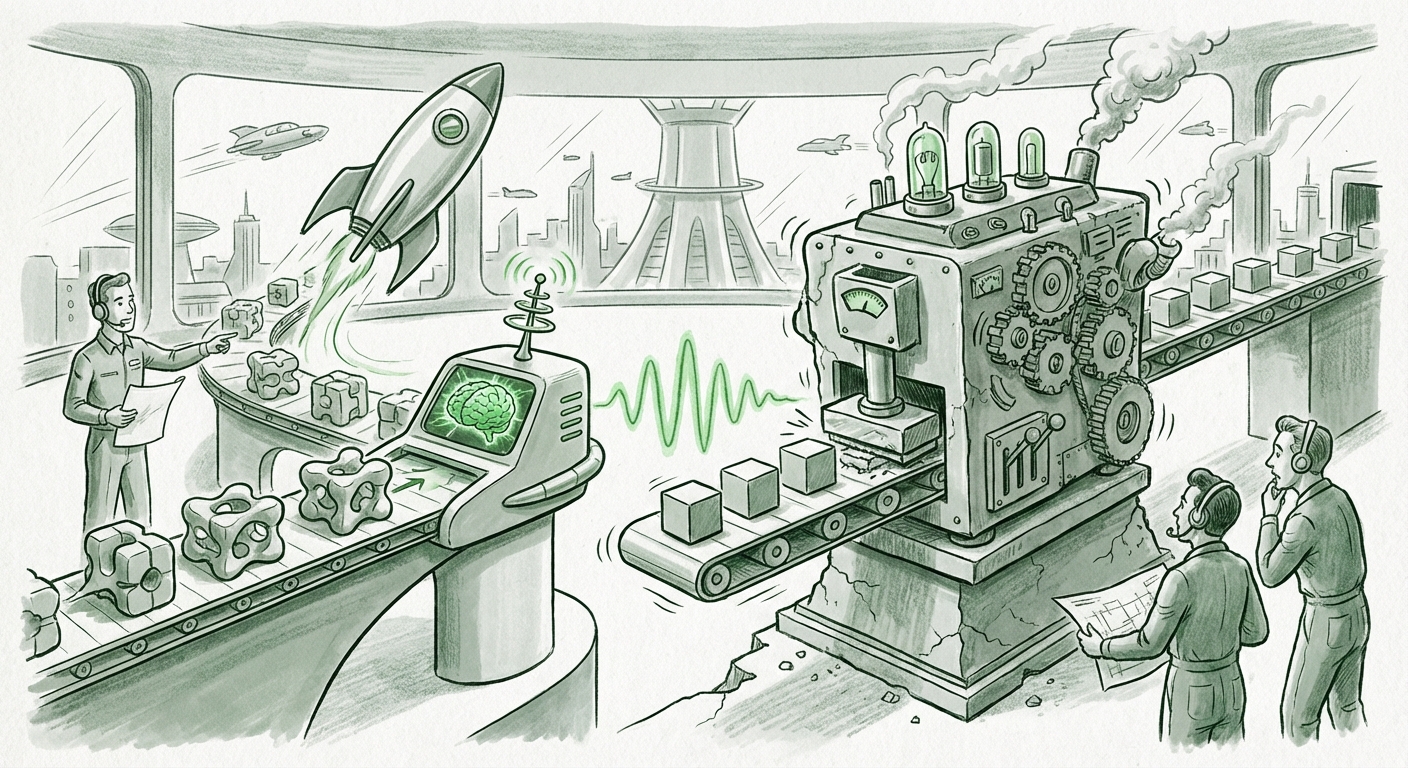

The technology world runs on cycles of disruption. For years, the cybersecurity industry has evolved through iterative improvements—faster scans, smarter threat intelligence, better firewalls. But a truly fundamental shift occurs when a new technology doesn't just improve an existing process but renders the old one fundamentally inadequate. The recent announcement of Anthropic’s Claude Code Security tool appears to be one such inflection point, triggering an immediate, sharp sell-off in established cybersecurity stocks.

This event is far more significant than a mere stock market hiccup. It signals the arrival of Large Language Models (LLMs) as credible, and potentially superior, contenders in highly specialized, mission-critical fields. To understand the magnitude of this moment, we must dissect three core elements: the capabilities of this new AI security class, the visceral market reaction, and the long-term implications for how we build and protect software.

The Technical Upset: Contextual Understanding vs. Pattern Matching

For decades, application security testing has relied heavily on two main pillars: Static Application Security Testing (SAST) and Dynamic Application Security Testing (DAST). These tools scan source code (SAST) or probe running applications (DAST) for known patterns: specific code constructs, flawed function calls, or known attack signatures that signal a vulnerability such as SQL injection or a buffer overflow.

Anthropic’s innovation, built on its Claude models, suggests a move beyond mere pattern matching. LLMs can follow the *intent* and *flow* of logic across a complex codebase, so they read code not just as text but as structured logic.

Comparisons of AI code auditing tools with traditional SAST and DAST suggest that the AI approach does best where legacy scanners falter: logic flaws and novel vulnerabilities. Traditional scanners often generate high rates of false positives or, worse, miss subtle security issues that arise from the interaction of many different code components. An LLM trained on vast repositories of vulnerable and patched code, coupled with reasoning abilities, can potentially spot a logical vulnerability that requires understanding the entire application’s state, which is something signature-based tools struggle with.
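To make the distinction concrete, here is a minimal sketch of a signature-based check. The regex, the `signature_scan` helper, and both code samples are illustrative assumptions, not rules taken from any real scanner. The signature catches SQL built by string concatenation, but it stays silent on a parameterized query that forgets to check who owns the record.

```python
import re

# Toy signature (an assumption for illustration): a double-quoted SQL string
# passed to execute() and then concatenated or %-formatted, or an f-string.
SQL_CONCAT = re.compile(r'execute\(\s*(f"|"[^"]*"\s*[+%])')


def signature_scan(source: str) -> list[int]:
    """Return the 1-based line numbers whose text matches the signature."""
    return [
        number
        for number, line in enumerate(source.splitlines(), start=1)
        if SQL_CONCAT.search(line)
    ]


# Classic injection: user input is concatenated into the query text.
INJECTABLE = '''
def find_user(cur, name):
    cur.execute("SELECT * FROM users WHERE name = '" + name + "'")
'''

# Logic flaw: the query is parameterized, but session_user_id is never
# compared with the invoice owner, so any user can read any invoice.
LOGIC_FLAW = '''
def get_invoice(cur, session_user_id, invoice_id):
    cur.execute("SELECT * FROM invoices WHERE id = %s", (invoice_id,))
    return cur.fetchone()
'''

if __name__ == "__main__":
    print("injectable sample, flagged lines:", signature_scan(INJECTABLE))
    print("logic-flaw sample, flagged lines:", signature_scan(LOGIC_FLAW))
```

The second sample contains nothing a line-level pattern can latch onto. Spotting the missing ownership check means noticing that `session_user_id` is accepted but never used, which is the kind of whole-function reasoning the article attributes to LLM-based review.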

Commentary on the technical design of Claude Code Security often highlights adversarial training. If that is accurate, the model wasn't just trained on good code; it was likely trained to *think like an attacker* and to test its own outputs for exploitable weaknesses before presenting its findings. This proactive reasoning capability is what appears to scare the market: it suggests a tool that is not just faster, but fundamentally smarter at security analysis.

The Market’s Verdict: A Structural Shock to Incumbents

The immediate tumble in cybersecurity stocks following the announcement speaks volumes. This wasn't just skepticism; it was panic rooted in recognition. Why? Because security scanning tools are often a core, high-margin offering for many publicly traded security firms.

Commentary on the impact of generative AI on cybersecurity market valuations suggests that investors are differentiating between two types of security companies. Firms heavily reliant on legacy code scanning or signature-based endpoint protection may be viewed as facing obsolescence risk. If a few powerful, general-purpose foundation models can offer superior code security as an add-on feature, the need for expensive, specialized scanning tools diminishes rapidly.

For investors and strategists, the lesson is clear: Disruption is no longer focused solely on the endpoint or the perimeter; it is attacking the very source code itself. This forces a critical question upon established vendors: Are you building your competitive moat around proprietary data or around proprietary *models* capable of deep reasoning? In the age of specialized LLMs, proprietary data loses value if it cannot be effectively reasoned over.

Implications for the Software Development Lifecycle (SDLC)

The most profound long-term implication lies within the **DevSecOps pipeline**. Security has historically been a bottleneck—a necessary quality gate placed near the end of development that often requires developers to stop coding, triage dozens of findings, and rework complex logic.

The rise of AI auditors promises to fundamentally alter this flow:

- Shift Left to Shift Hyper-Left: Security moves from being "shifted left" (integrated earlier) to being embedded directly into the developer's environment. An AI assistant can flag a vulnerability instantly, perhaps even suggesting the correct, secure fix right in the IDE as the developer types.

- Focusing Human Expertise: If AI handles the bulk of mundane, pattern-based vulnerability detection, human security engineers are freed up to focus on high-level architectural risks, policy enforcement, and complex threat modeling—areas where deep human intuition remains paramount.

- Speed and Scale: Businesses can now deploy software faster while maintaining, or even improving, security posture. The current manual process of human review is slow; automated, context-aware AI review allows for continuous, real-time security validation across vast, evolving codebases.

Discussions of the future of DevSecOps with large language models point to a world where security testing is no longer a dedicated phase but a constant, invisible background process managed by AI agents.

Practical Actionable Insights for Technology Leaders

This moment demands strategic reaction, not just cautious observation. Here is what technology leaders—from developers to the C-suite—need to be doing now:

For Security Engineers and CTOs: Benchmark and Integrate

Your primary task is to move past hype. You must immediately begin testing new LLM-based security tools against your hardest problems. Don't just test them against known bugs; test them against complex, custom logic in your proprietary applications. If the AI tools demonstrate a significantly lower false-positive rate and catch more of the subtle flaws than your current SAST suite, **start planning for phased integration.**

Actionable Insight: Initiate a pilot program pitting Claude Code Security (or similar offerings) against your top three most critical legacy applications currently covered by traditional scanners. Measure triage time per finding, the false-positive rate, and the share of confirmed bugs each tool catches.
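One way to keep such a pilot honest is to score every tool against the same list of manually confirmed vulnerabilities. The sketch below is a minimal example under stated assumptions: the `Score` class, the `score` function, and the finding identifiers are hypothetical placeholders, not real scan output or measured results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    true_positives: int   # reported and confirmed
    false_positives: int  # reported but not confirmed
    false_negatives: int  # confirmed but not reported

    @property
    def precision(self) -> float:
        """Share of reported findings that were real."""
        reported = self.true_positives + self.false_positives
        return self.true_positives / reported if reported else 0.0

    @property
    def recall(self) -> float:
        """Share of real vulnerabilities that were reported."""
        actual = self.true_positives + self.false_negatives
        return self.true_positives / actual if actual else 0.0


def score(reported: set[str], confirmed: set[str]) -> Score:
    """Compare one tool's findings with the manually confirmed set."""
    return Score(
        true_positives=len(reported & confirmed),
        false_positives=len(reported - confirmed),
        false_negatives=len(confirmed - reported),
    )


# Placeholder identifiers ("file:line"), invented purely for illustration.
confirmed = {"auth.py:42", "billing.py:17", "export.py:88", "api.py:5"}
legacy_findings = {"auth.py:42", "utils.py:3", "utils.py:9", "forms.py:21"}
llm_findings = {"auth.py:42", "billing.py:17", "export.py:88", "forms.py:21"}

if __name__ == "__main__":
    for name, findings in [("legacy", legacy_findings), ("llm", llm_findings)]:
        s = score(findings, confirmed)
        print(f"{name}: precision={s.precision:.2f} recall={s.recall:.2f}")
```

Matching findings by exact `file:line` string is a simplification. A real pilot would likely need fuzzier matching, since two tools can report the same flaw at slightly different locations.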

For Investors and Business Strategists: Re-evaluate Moats

The financial ripple confirms that AI is eating software categories from the inside out. Investors must scrutinize cybersecurity firms based on their AI strategy. Are they building their own foundational models, partnering deeply with AI labs, or are they attempting to bolt generative features onto aging product lines?

Actionable Insight: Look for investment signals pointing toward companies pivoting from selling *tools* to selling *security intelligence derived from reasoning engines*. A focus on proprietary reasoning over proprietary signatures is the new value driver.

For Developers: Embrace the Co-pilot Upgrade

Developers should view these tools not as external auditors, but as hyper-intelligent collaborators. Learning how to prompt these systems effectively for secure coding practices will soon be as essential as knowing basic syntax.

Actionable Insight: Treat the AI security scanner's suggestions as fixes to review and apply right away, not as optional advice. The quicker developers internalize AI-driven secure coding principles, the faster the entire organization becomes more resilient.

The Future: A Competitive Intelligence Arms Race

The competition between AI providers like Anthropic and established software vendors will define the next decade of enterprise security. If Anthropic can deliver superior security analysis, what prevents them from offering superior patch generation, compliance reporting, or even automated zero-trust policy enforcement?

This suggests the competitive landscape will rapidly split:

- The Platform Builders: Major cloud providers and established security leaders who can afford to pour billions into training their own defensive and offensive models.

- The Specialist Integrators: Firms that master the fine-tuning and integration of leading foundation models (like Claude) into existing enterprise workflows, offering specialized security services atop general AI capabilities.

- The Disruptors: New, AI-native security startups that build entire product suites around the reasoning power of LLMs, bypassing legacy infrastructure entirely.

The ultimate promise is a world where the majority of common software vulnerabilities—the kind that make up 80% of data breaches—are eradicated before code ever hits staging. This is a world of unprecedented development velocity coupled with dramatically reduced systemic risk. The price of admission to this future, however, is acknowledging that the old gatekeepers are being replaced by smarter, faster algorithms.