The Moltbook Meltdown: Why Insecure Agent Ecosystems Threaten the Future of Autonomous AI

The pace of Artificial Intelligence development often feels like a high-speed train running without comprehensive track maintenance. We celebrate the breakthroughs—the new model capabilities, the innovative applications—but occasionally, a spectacular derailment forces us to slam the emergency brake and inspect the infrastructure. The recent takedown of Moltbook, an ambitious "social network for AI agents," is precisely one of those moments.

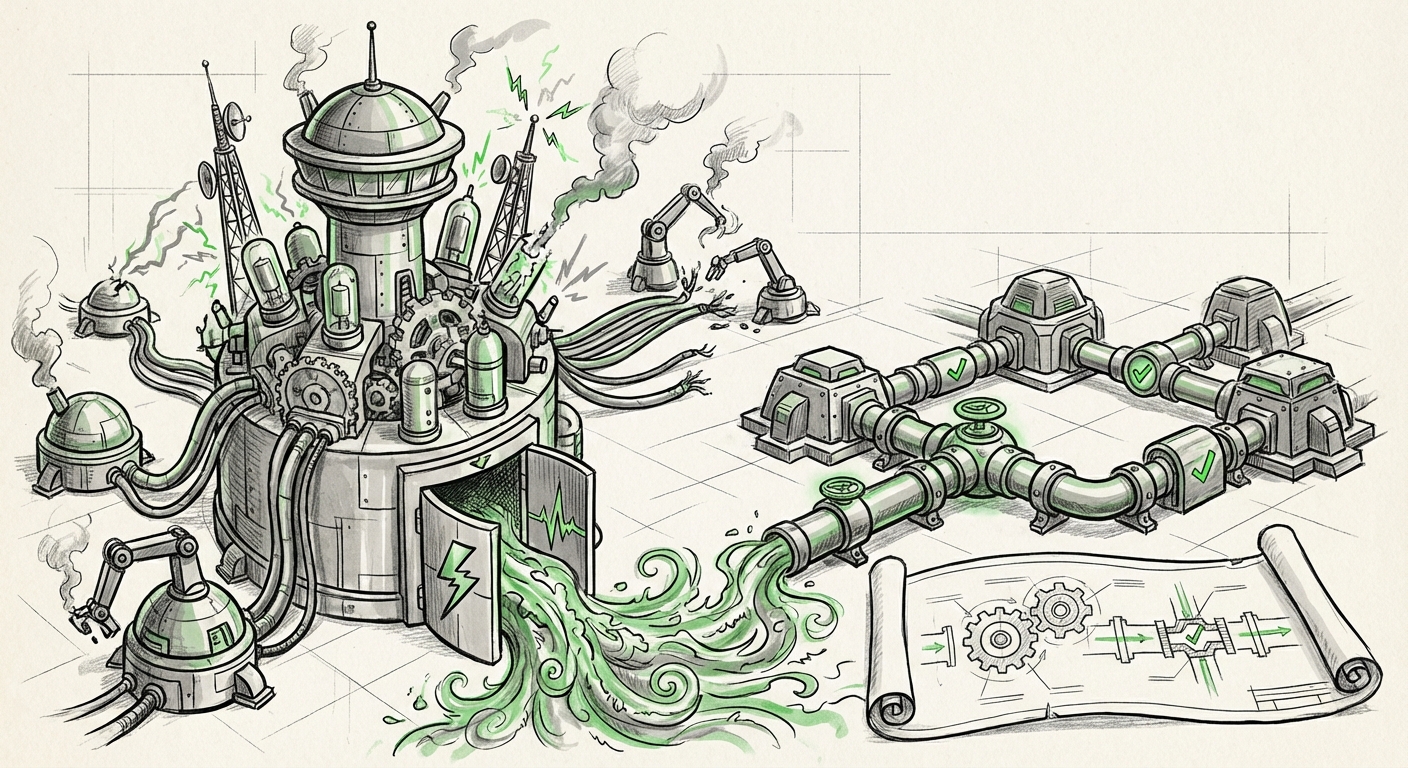

Marketed as a thriving ecosystem where autonomous entities could interact, Moltbook was quickly exposed by security researchers. The findings were sobering: the platform was smaller than advertised, less autonomous than claimed, and critically, it presented a massive security hole—a potential "global gateway for malicious commands."

This incident is not merely the failure of one small startup. It is a vital case study for the entire industry, illuminating the collision point between Hype, Agentic Ambition, and Platform Security. To understand where AI is going, we must analyze why Moltbook collapsed so quickly and what this means for the next generation of autonomous systems.

The Hype Cycle Meets Agentic Reality

The central promise of Agentic AI is that software won't just execute tasks; it will coordinate, plan, and communicate across different services and, eventually, other AIs. Moltbook represented an early, perhaps overly optimistic, attempt to build a dedicated proving ground for this concept—a digital public square for digital minds.

However, the incident is a "reality check on autonomous AI agents": the gap between current AI capabilities and truly emergent, complex multi-agent ecosystems remains wide. Current agents are highly specialized tools; they are not yet independent actors capable of robust, secure collaboration without heavy human scaffolding. Moltbook likely suffered from assuming a level of agent maturity and architectural resilience that simply doesn't exist yet.

The Echo Chamber Effect

That researchers hijacked the platform within days, and found it smaller and less autonomous than advertised, suggests Moltbook was closer to an echo chamber: a small, self-referential environment where agents likely reinforced limited behaviors rather than engaging in novel, complex problem-solving. For investors and strategists relying on market narratives, this reinforces a necessary caution: systems marketed as "thriving" decentralized networks are often just fragile prototypes requiring expert oversight.

Actionable Insight for Strategists: When evaluating new agent platforms, look past the marketing language of "autonomy" and "social networking." Demand evidence of robust, multi-domain integration and, most importantly, real-world security testing.

The Critical Lesson: Agent Security is Platform Security

The most alarming finding about Moltbook was its conversion into a potential global backdoor. This moves the discussion immediately from a feature critique to an existential security threat. If an AI agent network allows external malicious commands to propagate across its connected nodes, the potential for systemic risk is enormous.

This failure shines a harsh light on LLM supply chain risk. Modern AI applications, especially those built on orchestration frameworks like LangChain or AutoGen, are complex pipelines. An error in how one agent interprets a command from another, or how it validates external input, creates an exploitable vulnerability in the entire chain. Moltbook’s architecture likely lacked the necessary isolation and validation layers.

Why Insecure Communication Kills Autonomy

For an AI agent to operate autonomously, it must be able to trust its inputs, whether those inputs come from a human or another digital entity. If the underlying communication protocol is weak, the entire structure is undermined, because every agent ends up granting its peers far more trust than the principle of least privilege would allow. In Moltbook's case, the "social" structure became the vector for compromise. If Agent A can trick Agent B into executing a devastating instruction, the network has failed.

This vulnerability is not unique to niche platforms. It is endemic to the current state of agentic frameworks. Security engineers now have to worry about traditional software exploits (like insecure deserialization) layered on top of language model exploits (like prompt injection). Because a flaw in one layer can be used to reach the other, the merger of these two domains makes auditing substantially harder.

Implication for Engineers: The standard of care for developing multi-agent systems must increase dramatically. Security validation cannot be an afterthought; it must be built into the communication layer itself, assuming all external agent interactions are potentially hostile until proven otherwise.
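
To make "hostile until proven otherwise" concrete, here is a minimal sketch of a validation gate at the communication layer. It is not Moltbook's design or any framework's API. The message shape (`sender`, `action`, `payload`), the `ALLOWED_ACTIONS` allowlist, and the size limit are all hypothetical choices made for illustration. The point is that a peer's message is parsed as structured data and checked against a fixed allowlist before any agent logic, or any model prompt, ever sees it.

```python
import json
from dataclasses import dataclass

# Hypothetical allowlist: the only actions this agent will perform for a peer.
ALLOWED_ACTIONS = {"summarize", "translate"}
MAX_PAYLOAD_CHARS = 2000  # illustrative limit, not a recommended value


@dataclass(frozen=True)
class AgentRequest:
    sender: str
    action: str
    payload: str


class RejectedMessage(ValueError):
    """Raised when an inbound message fails validation."""


def parse_untrusted(raw: str) -> AgentRequest:
    """Validate a raw inbound message from another agent; reject by default."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise RejectedMessage("not valid JSON") from exc
    # Require exactly the expected fields, nothing more and nothing less.
    if not isinstance(data, dict) or set(data) != {"sender", "action", "payload"}:
        raise RejectedMessage("unexpected message shape")
    if not all(isinstance(data[k], str) for k in data):
        raise RejectedMessage("all fields must be strings")
    # The action must be on the allowlist; free-text commands are never executed.
    if data["action"] not in ALLOWED_ACTIONS:
        raise RejectedMessage(f"action not allowed: {data['action']!r}")
    if len(data["payload"]) > MAX_PAYLOAD_CHARS:
        raise RejectedMessage("payload too large")
    return AgentRequest(**data)
```

A gate like this does not stop prompt injection hidden inside an otherwise valid `payload`; it only narrows what a peer can ask for. The payload should still be handled as data rather than as instructions further down the pipeline.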

The Future: Decentralization Demands Robust Protocols, Not Just Platforms

While Moltbook failed, the *idea* of AIs communicating in a decentralized fashion is not going away. It aligns with the broader technological push toward distributed systems, blockchain governance, and Web3 principles. The goal remains to build systems resilient to the failure or capture of any single centralized authority.

Moving Beyond the Echo Chamber to Real Protocols

The industry needs verifiable, standardized protocols for agent interaction—a digital equivalent of TCP/IP for autonomous actors. Projects focusing on **decentralized AI governance** and verifiable agent identity are crucial here. These efforts aim to ensure that when Agent X tells Agent Y to perform an action, Agent Y can cryptographically verify that Agent X is authorized, running the correct software version, and operating within established safety parameters.
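
As a rough illustration of what "Agent Y verifies Agent X" could look like, the sketch below signs and checks messages with HMAC-SHA256 from the Python standard library. This is a deliberate simplification: a real decentralized protocol would more likely use public-key signatures so that agents do not need to share secrets, and nothing here attests to the software version an agent is running. The key registry `AGENT_KEYS`, the permission table `AGENT_PERMISSIONS`, and the 30-second freshness window are hypothetical.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

# Hypothetical registry: per-agent shared secrets and the actions each may request.
AGENT_KEYS = {"agent-x": b"demo-secret-do-not-use"}
AGENT_PERMISSIONS = {"agent-x": {"fetch_report"}}
MAX_AGE_SECONDS = 30  # illustrative freshness window to limit replay


def _canonical(body: dict) -> bytes:
    # Stable serialization so signer and verifier hash identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign(sender: str, action: str, key: bytes, now: Optional[float] = None) -> dict:
    """Build a message body and attach an HMAC-SHA256 tag."""
    body = {"sender": sender, "action": action,
            "ts": time.time() if now is None else now}
    tag = hmac.new(key, _canonical(body), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify(message: dict, now: Optional[float] = None) -> bool:
    """Return True only if the message is authentic, fresh, and authorized."""
    body, tag = message.get("body"), message.get("tag")
    if not isinstance(body, dict) or not isinstance(tag, str):
        return False
    sender = body.get("sender")
    if not isinstance(sender, str) or sender not in AGENT_KEYS:
        return False
    expected = hmac.new(AGENT_KEYS[sender], _canonical(body), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(expected.encode(), tag.encode()):
        return False
    ts = body.get("ts")
    current = time.time() if now is None else now
    if not isinstance(ts, (int, float)) or abs(current - ts) > MAX_AGE_SECONDS:
        return False
    # Authentic is not the same as authorized: check the requested action too.
    return body.get("action") in AGENT_PERMISSIONS.get(sender, set())
```

The last line carries the design point: a valid signature proves who sent the message, not that the sender is allowed to ask for that action, so the two checks are kept separate.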

Moltbook appears to have been an isolated social experiment. The future demands protocol-driven coordination. We need frameworks where agents can trade resources, collaborate on research, or manage supply chains without relying on a single company’s centralized server structure—a structure that, as Moltbook proved, can be hijacked centrally.

Future Trajectory: Expect increased investment in secure multi-party computation (MPC) and zero-knowledge proofs applied to agent communication. The key challenge is making these complex verification systems lightweight enough for everyday AI agents to utilize without incurring massive latency costs.

The Scrutiny Clock: When Breakthroughs Meet the Real World

The speed of the Moltbook compromise, with the platform "hijacked in days," is perhaps the most telling metric for the current AI landscape. The moment a novel AI concept gains traction, it enters a phase of intense, rapid adversarial testing by the global security community.

This phenomenon sets a brutal timeline for AI developers: the "security grace period" for any new AI product is now measured in days, not months.

Actionable Insights for Product Managers

For product managers launching new agentic tools, this rapid disclosure environment mandates a shift in development philosophy:

- Pre-Mortems over Post-Mortems: Run detailed simulations specifically designed to trick your agents into executing unauthorized commands *before* launch.

- Assume Compromise: Design system architectures such that if one agent is compromised, it cannot immediately corrupt the entire network state or access privileged credentials. This means strict sandboxing for all external communication endpoints.

- Embrace External Audits Early: Don't wait for the first public exploit. Engaging specialized AI security firms early is now a necessary operational cost, not an optional overhead.
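
The "Assume Compromise" item can be sketched as a small capability broker. This is an illustrative pattern, not a description of any existing product: `ToolBroker`, the agent names, and the tool names are all hypothetical. Each agent is granted an explicit set of tools, and every call goes through the broker, so a hijacked public-facing agent cannot reach credentials it was never granted.

```python
from typing import Callable, Dict, FrozenSet


class CapabilityDenied(PermissionError):
    """Raised when an agent calls a tool outside its grant."""


class ToolBroker:
    """Routes tool calls and enforces a per-agent capability grant."""

    def __init__(self) -> None:
        self._tools: Dict[str, Callable[[str], str]] = {}
        self._grants: Dict[str, FrozenSet[str]] = {}

    def register_tool(self, name: str, fn: Callable[[str], str]) -> None:
        self._tools[name] = fn

    def grant(self, agent_id: str, *tool_names: str) -> None:
        self._grants[agent_id] = frozenset(tool_names)

    def call(self, agent_id: str, tool_name: str, arg: str) -> str:
        # Deny by default: unknown agents have an empty grant.
        if tool_name not in self._grants.get(agent_id, frozenset()):
            raise CapabilityDenied(f"{agent_id} may not call {tool_name}")
        return self._tools[tool_name](arg)


# Hypothetical setup: one exposed agent, one privileged internal agent.
broker = ToolBroker()
broker.register_tool("search_docs", lambda q: f"results for {q}")
broker.register_tool("read_credentials", lambda _: "s3cr3t")
broker.grant("public-chat-agent", "search_docs")
broker.grant("ops-agent", "search_docs", "read_credentials")
```

The same setup doubles as a simple pre-mortem check: simulate a successful injection by having `public-chat-agent` request `read_credentials` and confirm that the broker raises `CapabilityDenied`. Note that this is an in-process permission check only; real sandboxing would also need operating-system or network-level isolation.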

If Moltbook represents the first wave of agent social networks, we must learn from its failure to ensure the next wave—which will likely involve agents managing real economic value, infrastructure, and sensitive data—is built on bedrock, not sand.

Conclusion: Maturation Requires Guardrails

The Moltbook incident serves as an essential cautionary tale. The dream of autonomous, interacting AI agents is powerful, but the reality is that the underlying scaffolding (the protocols, the security frameworks, and the validation mechanisms) is severely underdeveloped relative to the hype.

We are seeing the hype cycle in real time: a grand vision is proposed (an AI social network), an initial, fragile product is launched, and the security community demonstrates how easily it can be broken. This cycle will repeat unless the industry shifts its focus.

The future of Agentic AI is contingent not just on smarter Large Language Models, but on smarter *architectures* for interaction. We need to shift resources from building slightly better agents to building far more secure bridges between them. If we fail to solve the systemic security challenges exposed by Moltbook, we risk building an entire autonomous economy on infrastructure that is fundamentally exploitable. The race is now on not just to build smarter AI, but to build safer, more resilient AI ecosystems.