AI Platforms as Cops? The Ethical Crisis of Monitoring ChatGPT Logs Before Real-World Harm

In the race to develop the world’s most powerful artificial intelligence, companies like OpenAI are confronting a stark, uncomfortable reality: their tools can become inadvertent staging grounds for planning real-world violence. A recent report detailing how OpenAI staff debated alerting Canadian police about violent warning signs found in ChatGPT logs, months before a deadly school shooting, is more than a tragic footnote. It poses a foundational challenge that will define the next decade of AI governance.

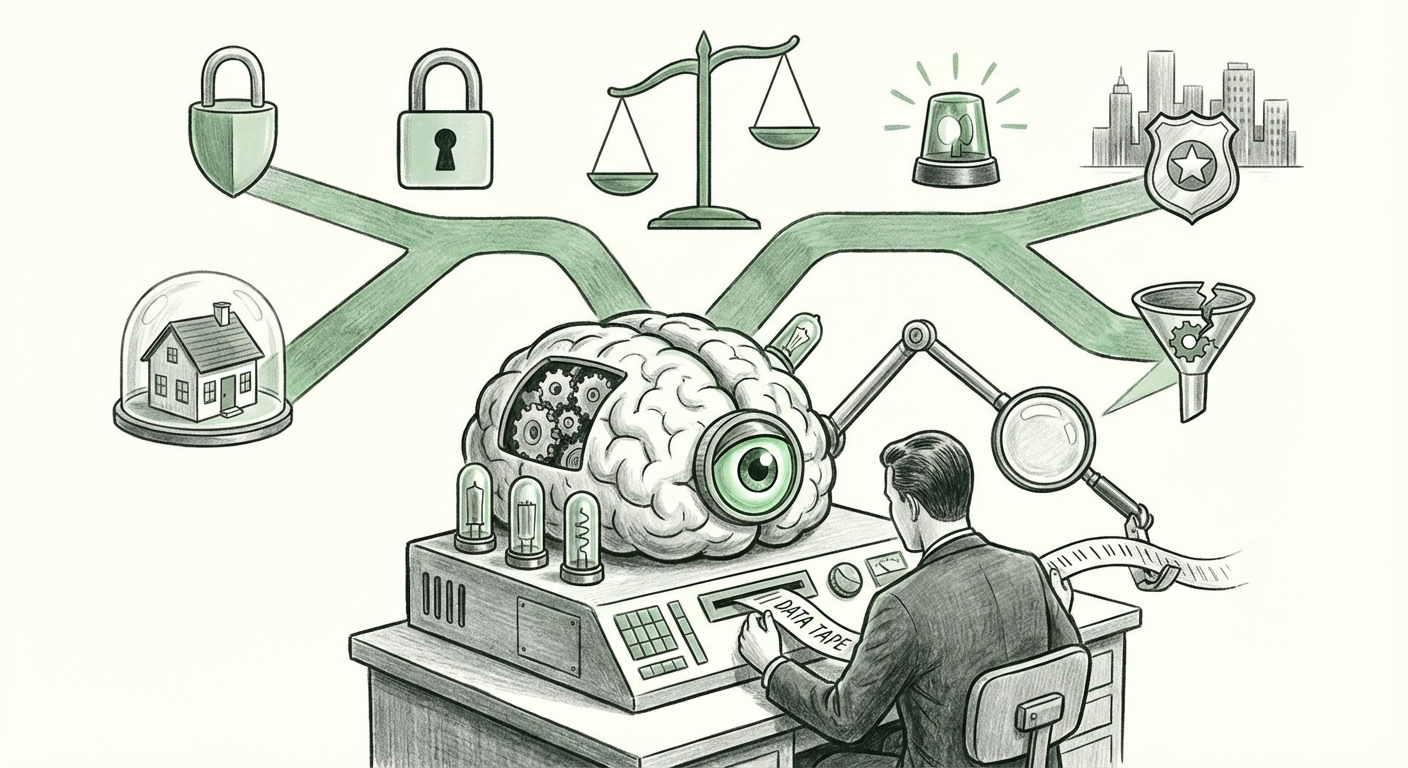

This incident forces us to move past abstract concerns about future AI risk and address present-day liabilities. Large language models are no longer simple software; they are sophisticated conversational partners that absorb, process, and store what users tell them, including statements of intent. When that intent turns violent, where does the responsibility lie? This analysis breaks down the key technological, legal, and ethical trends revealed by this case and what they mean for the future deployment of generative AI.

The Core Tension: Privacy vs. Proactive Safety

At its heart, the OpenAI debate revolved around the age-old conflict in online moderation: balancing user privacy against the duty to warn. For decades, this applied to social media platforms, where monitoring public posts was relatively straightforward (though legally fraught). With large language models (LLMs), the data is inherently private—a direct conversation between a user and the AI.

When ChatGPT logs revealed serious threats, the company faced a classic ethical crossroads:

- Upholding Privacy: Treating user inputs as privileged, confidential communications, as is standard for most digital services.

- Acting as a Safety Proxy: Recognizing the unique potential for harm in a tool that can help structure complex plans, and accepting the resulting moral (and potentially legal) obligation to report threats before they materialize.

This tension will define how every major AI lab operates. If they scan deeply enough to catch subtle pre-planning (of the kind found in the logs in question), they violate user trust. If they don't scan or act, they risk complicity in future tragedies. To put it in everyday terms, imagine a highly intelligent personal assistant who hears you planning something dangerous: do they stay silent to protect your privacy, or do they call for help?

The Emerging Role of LLMs as Quasi-Law Enforcement

The most significant technological implication is the transformation of LLM providers into reluctant gatekeepers of public safety. We must ask what this means for the future of AI:

- Data Ingestion and Analysis: AI models are trained to find patterns. This same capability, when applied to real-time user sessions, turns the platform into a sophisticated threat detection engine. This shifts the cost and burden of monitoring away from traditional law enforcement and onto private tech companies.

- Policy Standardization: As proactive threat detection becomes an expectation, governments are likely to pressure, or outright mandate, AI platforms to implement specific thresholds for automatic flagging and reporting. This means AI companies must build systems that are functionally similar to the monitoring tools used by intelligence agencies.

This proactive stance creates an entirely new business cost: specialized moderation teams, legal counsel dedicated to warrant compliance, and the development of sophisticated anomaly detection algorithms trained specifically to predict violence, not just to filter spam.
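To make the idea of "specific thresholds for automatic flagging and reporting" concrete, here is a minimal sketch of threshold-based triage. Everything in it is an assumption made for illustration: the `triage` function, the `Thresholds` values, and the idea that an upstream classifier emits a risk score between 0 and 1. It does not describe how OpenAI or any other lab actually handles flagged conversations.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical cut-offs, chosen only for illustration.
    review: float = 0.60
    escalate: float = 0.90


def triage(risk_score: float, thresholds: Thresholds = Thresholds()) -> Action:
    """Map a risk score in [0, 1] to a moderation action."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if risk_score >= thresholds.escalate:
        return Action.ESCALATE      # route to a senior reviewer right away
    if risk_score >= thresholds.review:
        return Action.HUMAN_REVIEW  # queue for ordinary moderation review
    return Action.NO_ACTION


if __name__ == "__main__":
    for score in (0.10, 0.75, 0.95):
        print(score, triage(score).value)
```

In this sketch, even the highest tier hands the case to a person rather than contacting authorities automatically. Where the cut-offs sit is exactly the policy question the article raises: lower thresholds catch more genuine threats but sweep in more private conversations.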

The Legal Minefield: Liability in the Age of Generative Content

The decision not to alert police right away exposes OpenAI (and by extension, the entire industry) to questions of legal liability for user-generated threats. Currently, Section 230 of the U.S. Communications Decency Act often shields platforms from liability for user-posted content. However, LLMs blur this line significantly.

When a user crafts a detailed plan using the AI’s interactive assistance, is the AI a passive bulletin board, or an active participant in the planning phase? Legal scholars are actively debating whether the current frameworks, designed for static websites, can hold up against dynamic, generative tools.

For businesses relying on these tools, the key takeaway is risk mitigation. The industry standard is moving toward heightened scrutiny. We must anticipate regulation that demands transparency about scanning capabilities and mandatory reporting protocols. Companies will need robust compliance frameworks, especially as they scale services globally where legal expectations around surveillance and privacy differ drastically.

Privacy vs. Safety in Practice

The debate over AI content moderation policy versus user privacy will become a defining feature of product design. Companies like Anthropic often tout alignment methodologies like Constitutional AI as a shield, ensuring the model adheres to defined ethical principles. However, even the most aligned model cannot inherently solve the "duty to report" dilemma unless that duty is explicitly written into its operating protocol.

If users believe their chats are private, they will be less forthcoming, potentially stifling legitimate research or creative uses. If they know every word is being scanned for threats, the utility of the tool as a private sounding board vanishes. The sweet spot—where safety features are robust enough to prevent catastrophe but invisible enough to preserve trust—is dangerously narrow.

Future Implications for Business and Governance

The implications of this incident ripple outward, affecting investors, developers, and regulators:

1. Governance and Internal Structure

The internal debate, now public, raises concerns about organizational maturity. Questions about internal whistleblowing policies for AI safety teams are paramount. If frontline safety personnel feel they cannot effectively escalate critical, time-sensitive concerns to leadership, the structure is flawed.

Actionable Insight for AI Labs: Establish clear, non-negotiable protocols for escalating imminent threats that bypass standard management review. These protocols must be codified, rehearsed, and legally vetted to ensure rapid, decisive action without internal paralysis. Safety teams must have genuine executive authority in imminent-danger scenarios.
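One way to picture a "codified" escalation protocol is as a small routing rule that leaves no room for debate in the moment. The sketch below is hypothetical throughout: the severity labels, the response windows, and the `safety_lead` and `standard_review` destinations are invented for illustration and are not drawn from any real lab's procedures.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical response windows; real values would need legal and policy review.
RESPONSE_WINDOWS = {
    "imminent": timedelta(hours=1),
    "serious": timedelta(hours=24),
}


@dataclass
class Flag:
    severity: str            # "imminent", "serious", or anything else
    raised_at: datetime
    acknowledged: bool = False


def route(flag: Flag, now: datetime) -> str:
    """Decide who handles a flag under the assumed protocol."""
    # Imminent threats bypass standard management review entirely.
    if flag.severity == "imminent":
        return "safety_lead"
    # Other flags escalate only if nobody acknowledged them in time.
    window = RESPONSE_WINDOWS.get(flag.severity)
    if window is not None and not flag.acknowledged and now - flag.raised_at > window:
        return "safety_lead"
    return "standard_review"


if __name__ == "__main__":
    raised = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
    stale = Flag(severity="serious", raised_at=raised)
    print(route(stale, raised + timedelta(hours=30)))
```

The design choice worth noticing is that the bypass is unconditional for the top severity level, so a time-sensitive case cannot stall while waiting on a manager's sign-off.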

2. Regulatory Certainty (or Lack Thereof)

Regulators, particularly in the EU (with the AI Act) and potentially in North America, are watching these failures closely. The expectation for AI providers will shift from "best effort" moderation to demonstrable, auditable safety mechanisms. This means compliance will move from abstract ethical guidelines to measurable metrics.
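As one illustration of what "measurable metrics" could look like, an auditor might sample flagged and unflagged conversations and compute precision and recall for the flagging system. The function below is a sketch under that assumption, and the counts in the usage line are invented for illustration, not real audit data.

```python
def precision_recall(true_pos: int, false_pos: int, false_neg: int) -> tuple[float, float]:
    """Compute precision and recall from audit counts.

    true_pos:  flagged conversations that reviewers confirmed as credible threats
    false_pos: flagged conversations that reviewers judged harmless
    false_neg: credible threats the system failed to flag
    """
    flagged = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / flagged if flagged else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


if __name__ == "__main__":
    # Invented counts, for illustration only.
    print(precision_recall(true_pos=8, false_pos=2, false_neg=2))
```

Precision speaks to the privacy cost (how many flagged users were in fact harmless), while recall speaks to the safety cost (how many real threats slipped through). An auditable standard would likely need to report both.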

Actionable Insight for Policymakers: Clear federal standards are needed for LLM data retention and reporting thresholds for credible threats. Ambiguity harms both public safety and responsible innovation by leaving companies guessing where the legal red line is drawn.

3. Consumer Trust and Adoption

For the average user or enterprise adopting AI tools, trust is paramount. A high-profile failure to act on a threat erodes confidence not just in one company, but in the entire technology sector’s ability to manage its own powerful creations responsibly.

Actionable Insight for Businesses: When selecting AI vendors, businesses must demand transparency regarding their safety incident response plans, data handling during moderation, and commitment to external audits. Risk assessment for AI integration must now include potential liability stemming from user inputs.

Conclusion: The Age of Digital Responsibility

The case of the debated ChatGPT logs marks a definitive turning point. Generative AI has graduated from a technological curiosity to a platform with real-world societal impact, making its developers accountable for the digital footprints of potential harm. We are entering an era where the ability to reason and communicate complex threats via AI necessitates a corresponding responsibility to report those threats.

The technology itself is not the problem; the conflict lies at the integration point between the private conversation space and the public safety sphere. The future trajectory of AI deployment (its speed, its integration into critical infrastructure, and its ultimate acceptance by society) will depend on how effectively and ethically companies navigate this duty to warn. Building powerful intelligence is only half the job; ensuring that intelligence acts as a responsible steward of human safety is the defining challenge of the next decade.