OpenAI's Hardware Gambit: Why the Proactive AI Speaker Signals the End of the App Era

The generative AI revolution has, until now, largely been confined to the screen: a window opened on a laptop or smartphone. That era appears to be drawing to a close. Recent reports indicate that OpenAI is developing a consumer hardware lineup, spearheaded by a proactive smart speaker featuring a camera and facial recognition. If accurate, this is far more than a new product launch. It signals a calculated, aggressive pivot toward controlling the *interface* through which we interact with artificial intelligence: a shift from AI as a tool to AI as an ambient companion.

As an AI technology analyst, I see this not as a simple competition with Amazon Alexa or Google Nest, but as a foundational move that could redefine personal computing. To understand the magnitude of this development, we must examine the ambition behind the hardware, the technical leap required for true ambient intelligence, and the inevitable societal friction points that follow.

The Leap from Chatbot to Ambient Companion

Current smart speakers are fundamentally reactive. You must wake them up ("Hey Google," "Alexa") and then issue a command. They wait passively. OpenAI’s rumored device, capable of proactively suggesting actions—like telling a user when to go to bed based on observation—moves firmly into the territory of ambient computing. This is AI that is always present, aware of context, and ready to intervene helpfully, minimizing the need to explicitly ask for anything.

This vision aligns perfectly with the direction the most advanced Large Language Models (LLMs) are taking. They are becoming increasingly multimodal—meaning they can process text, images, and eventually video and sound simultaneously. A device with a camera and proactive suggestions demands this multimodal capability. It needs to see the room, hear the environment, and understand the user’s state.

Corroborating the Vision: A Full Hardware Lineup

The rumor mill suggests this speaker is just the vanguard. Reports indicating an entire "lineup in the pipeline," potentially including smart glasses and an AirPods competitor, reveal a comprehensive strategy. OpenAI is not aiming to sell one gadget; it aims to embed its AI core into the physical fabric of daily life. This echoes the strategic thinking seen in explorations around a dedicated AI-native phone, an endeavor that aims to strip away legacy operating systems (like iOS or Android) to create a seamless, AI-first experience. Across the rumored hardware strategy, the theme is clear: own the edge devices where interaction occurs.

For businesses and investors, this signals that the control layer is moving away from platform owners (like Apple or Google) and toward the foundational model creators. If the best intelligence resides on the device, the hardware supporting it becomes secondary—or, at least, a strategic accessory to the intelligence.

Defining the New Battlefield: Ambient Computing vs. The Smart Home

Why build new hardware when billions of smart devices already exist? Because existing smart home technology is fragmented, application-driven, and lacks genuine intelligence. If you want your light to turn on, you must first open an app or issue a precise voice command. This friction breaks the sense of seamlessness.

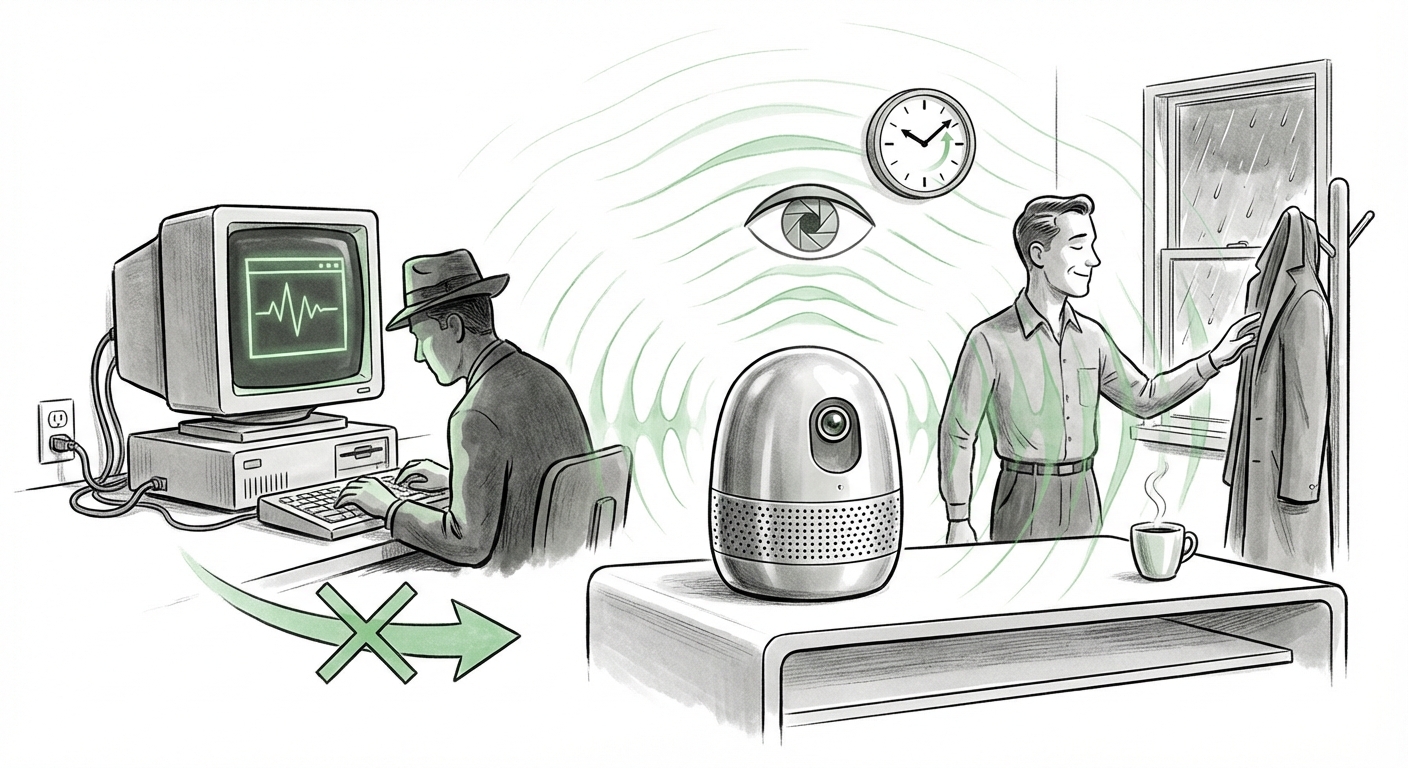

True ambient computing, as envisioned here, smooths out that friction. Instead of asking an assistant for the weather, the ambient AI notices you grabbing your coat and quietly notifies you that rain is expected in an hour. This is a radical departure from the current market: ambient computing prioritizes context and proactivity, whereas today's smart home prioritizes discrete commands.
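The coat-and-rain scenario can be read as a rule that fires on observed context rather than on a spoken command. The sketch below is a minimal illustration under stated assumptions: the event name `grabbed_coat`, the probability threshold, and the one-hour lookahead are all hypothetical and do not describe any real device's behavior.

```python
from typing import Optional

# Hypothetical tuning values, chosen only for illustration.
RAIN_PROBABILITY_THRESHOLD = 0.6
LOOKAHEAD_MINUTES = 60


def proactive_notice(
    observed_event: str, rain_probability: float, minutes_until_rain: int
) -> Optional[str]:
    """Return a notification only when context suggests it is useful.

    No wake word or command is involved: the trigger is an observed
    event (the user appears to be leaving) combined with a forecast.
    """
    leaving = observed_event == "grabbed_coat"
    rain_soon = (
        rain_probability >= RAIN_PROBABILITY_THRESHOLD
        and minutes_until_rain <= LOOKAHEAD_MINUTES
    )
    if leaving and rain_soon:
        return f"Rain is expected in about {minutes_until_rain} minutes."
    return None  # stay silent; ambient systems should not nag
```

The important design choice is the default of silence: the function returns `None` unless both the context and the forecast justify interrupting the user.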

For the user, ambient AI means the technology starts anticipating needs rather than merely responding to demands. If the device knows your sleep patterns via camera and activity sensors, suggesting bedtime is a natural extension of its continuous contextual awareness. Sustaining that awareness, however, demands far more powerful, low-latency local processing.

The Technical Hurdle: Powering Proactivity Affordably

For a device reportedly priced between $200 and $300 to offer truly proactive, multimodal AI, it must solve significant engineering challenges. It cannot rely solely on sending every frame of video and every audio snippet to the cloud, as that incurs massive latency and bandwidth costs, making the experience slow and expensive to operate.
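A rough back-of-the-envelope calculation shows why constant cloud streaming is costly. The 1 Mbps bitrate below is an illustrative assumption for a compressed video stream, not a specification of any actual or rumored device.

```python
def daily_upload_gb(bitrate_mbps: float, hours_per_day: float) -> float:
    """Gigabytes (10^9 bytes) uploaded per day by a continuous stream."""
    bytes_per_second = bitrate_mbps * 1_000_000 / 8  # megabits -> bytes
    seconds = hours_per_day * 3600
    return bytes_per_second * seconds / 1_000_000_000


# Illustrative assumption: an always-on 1 Mbps compressed video stream.
always_on = daily_upload_gb(bitrate_mbps=1.0, hours_per_day=24)
print(f"{always_on:.1f} GB per day")  # prints "10.8 GB per day"
```

Even at this modest assumed bitrate, a single device would upload roughly 10.8 GB a day, before counting the cloud compute needed to process it.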

This brings us to the crucial role of silicon. On-device multimodal processing hinges on highly efficient Neural Processing Units (NPUs). The device must be capable of running smaller, specialized models locally to analyze immediate context (e.g., "Is the user looking at the TV? Are they standing by the door?"). Only when a deeper, more complex query arises would it offload to the cloud.
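The local-first, cloud-on-demand split described above can be sketched as a simple routing decision. This is a minimal illustration, not OpenAI's design: the `LocalResult` type, the labels, and the confidence threshold are hypothetical names and values introduced for the example.

```python
from dataclasses import dataclass

# Hypothetical threshold: below this, the small local model is not trusted.
LOCAL_CONFIDENCE_THRESHOLD = 0.8


@dataclass
class LocalResult:
    label: str         # e.g. "user_at_door" or "user_watching_tv"
    confidence: float  # 0.0 to 1.0, as reported by the on-device model


def route(result: LocalResult, needs_deep_reasoning: bool) -> str:
    """Decide where an observation is handled: 'local' or 'cloud'."""
    if needs_deep_reasoning:
        return "cloud"  # complex queries go to the larger remote model
    if result.confidence < LOCAL_CONFIDENCE_THRESHOLD:
        return "cloud"  # local model is unsure, so escalate
    return "local"      # cheap, low-latency path for routine context
```

For example, `route(LocalResult("user_at_door", 0.95), needs_deep_reasoning=False)` returns `"local"`, so routine context never leaves the device in this sketch.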

The price point suggests a tight integration of custom or near-custom silicon optimized specifically for OpenAI’s inference requirements. If they succeed in balancing high performance with low cost in a consumer product, they will have effectively democratized high-level contextual awareness, setting a new baseline for what a "smart" device should be.

The Inevitable Backlash: Privacy in the Age of Always-On Sensing

The core tension surrounding this hardware is unavoidable: the most helpful, proactive AI requires the most invasive sensing. A device that tells you when to go to bed by observing your activity must watch you, and a device that understands your commands must listen constantly. The inclusion of a camera and facial recognition transforms the smart speaker from a voice assistant into a persistent home surveillance node, albeit one designed for personal convenience.

A camera with facial recognition in the home raises immediate red flags for privacy advocates and regulators. For OpenAI to gain mass adoption, it must resolve this trust deficit far more convincingly than existing players have.

Transparency is the New Currency

To win consumer trust, OpenAI cannot rely on opaque "We promise we anonymize the data" statements. The hardware must offer radical transparency. This could involve:

- Physical Indicators: Clear, undeniable visual and auditory cues when processing is happening locally versus when data is transmitted.

- On-Device Guarantees: Strong evidence (perhaps through open-sourcing the local inference stack) that sensitive visual data is never sent off-device without explicit, per-instance user authorization.

- Purpose Limitation: Strict, enforceable boundaries on how the collected context can be used—it must only serve the user's immediate, stated needs, not feed generalized advertising models.
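The on-device guarantee and purpose-limitation ideas in the list above can be expressed as a gate that every upload must pass. The sketch below is an assumption-laden illustration: the `Purpose` values, the `SENSITIVE_KINDS` set, and the `UploadRequest` type are hypothetical and not drawn from any real product.

```python
from dataclasses import dataclass
from enum import Enum


class Purpose(Enum):
    USER_REQUEST = "user_request"
    ADVERTISING = "advertising"
    MODEL_TRAINING = "model_training"


# Hypothetical policy: only the user's immediate request is a valid purpose.
ALLOWED_PURPOSES = {Purpose.USER_REQUEST}
# Hypothetical set of data kinds needing explicit, per-instance approval.
SENSITIVE_KINDS = {"video_frame", "face_embedding"}


@dataclass(frozen=True)
class UploadRequest:
    data_kind: str
    purpose: Purpose
    user_approved: bool  # explicit approval for this specific upload


def may_upload(req: UploadRequest) -> bool:
    """Return True only if the upload satisfies both policy checks."""
    if req.purpose not in ALLOWED_PURPOSES:
        return False  # purpose limitation
    if req.data_kind in SENSITIVE_KINDS and not req.user_approved:
        return False  # sensitive data needs per-instance authorization
    return True
```

A gate like this is only credible if it is auditable, which is why the list above pairs it with visible indicators and an inspectable local stack.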

The Competitive Landscape: Facing the Tech Titans

OpenAI’s move is a direct declaration of intent against entrenched giants, particularly Apple. While Google and Amazon dominate the existing smart speaker market, Apple controls the most important device in the world: the smartphone, along with a fiercely loyal user base that values design and privacy integration above almost all else. That makes the contrast between Apple and OpenAI on consumer AI hardware the one to watch.

Apple’s strategy appears to be about weaving advanced AI features deeply into its existing operating systems (iOS, watchOS), enhancing the user experience without necessarily requiring a new, dedicated appliance that duplicates phone functionality. OpenAI, conversely, appears to be creating a dedicated, AI-native *agent* that operates independently of the smartphone interface.

If OpenAI successfully launches a superior agent experience on its own hardware, it forces consumers to make a choice: remain within the walled garden of a trusted platform (Apple) where AI is an addition, or jump to a new paradigm where AI is the foundation (OpenAI), accepting the associated risks and learning curve.

Implications for Business and Society

The trajectory toward ambient, proactive AI has profound implications across sectors:

For Businesses: The Rise of the Agent Economy

The smart speaker is the pilot project. If it succeeds, the next wave of hardware will be deeply personalized agents that manage workflows, communication, and scheduling with near-perfect autonomy. Businesses must start developing 'agentic workflows' now—tasks that an AI can complete end-to-end rather than just answer questions about. The ability to command an AI to "Manage all vendor invoices this month, flag any payment over 10% higher than last quarter, and schedule a review meeting" will replace tedious manual processes.
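The flagging step of that invoice instruction can be sketched as a deterministic check an agent might run before scheduling the review. This is a minimal sketch under one stated assumption: "10% higher than last quarter" is interpreted as a per-vendor comparison against that vendor's payment last quarter. The function and vendor names are hypothetical.

```python
def flag_invoices(
    current: dict[str, float],
    last_quarter: dict[str, float],
    threshold: float = 0.10,
) -> list[str]:
    """Return vendors whose current payment exceeds last quarter's by more than threshold."""
    flagged = []
    for vendor, amount in current.items():
        baseline = last_quarter.get(vendor)
        if baseline is None or baseline <= 0:
            continue  # no usable baseline; a real agent might route this to a human
        if amount > baseline * (1 + threshold):
            flagged.append(vendor)
    return sorted(flagged)


current = {"acme": 1200.0, "globex": 1050.0, "initech": 500.0}
last_quarter = {"acme": 1000.0, "globex": 1000.0}
print(flag_invoices(current, last_quarter))  # prints ['acme']
```

What makes the workflow agentic is everything around this check: gathering the invoices, interpreting the instruction, and booking the meeting without step-by-step human direction.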

For Society: Redefining Autonomy and Attention

When AI proactively manages daily routines (like telling you when to sleep), the line blurs between helpful suggestion and subtle control. This raises critical questions about human autonomy. If the AI optimizes your life for peak efficiency or health (as defined by its programming), are you truly making your own decisions? Furthermore, for companies focused on ad revenue, an ambient device that knows exactly where you are, what you are looking at, and when you are most susceptible to suggestion represents the ultimate advertising frontier—one that must be navigated ethically.

Actionable Insights for Navigating the Shift

This is a pivot point. Technology adoption will be governed not just by capability, but by trust and accessibility. Here are actionable takeaways:

- Invest in Multimodal Expertise: Businesses relying on text-only customer service or internal documentation must urgently pivot toward understanding how vision and voice context can enhance their AI interactions.

- Prepare for Platform Agnosticism: Do not tie your long-term strategy exclusively to current mobile operating systems. Assume the next major interaction layer will be ambient, contextual, and potentially delivered via proprietary, specialized hardware.

- Establish Proactive Data Governance: Before mass deployment, teams must establish firm internal policies on proactive data collection. Determine what data (visual, auditory, temporal) is absolutely necessary for the AI to function, and clearly separate that from data used for model training or external analysis.

OpenAI’s foray into the physical world, signaled by its push into smart speakers, confirms that the battle for AI dominance is moving off the cloud servers and into our living rooms. The winners will be those who can deliver true, frictionless ambient intelligence while simultaneously earning the necessary consumer trust to justify the cameras and microphones required to see and hear our world.