The $100 Billion Question: Why AI Costs Are Spiraling and What It Means for Tech Leadership

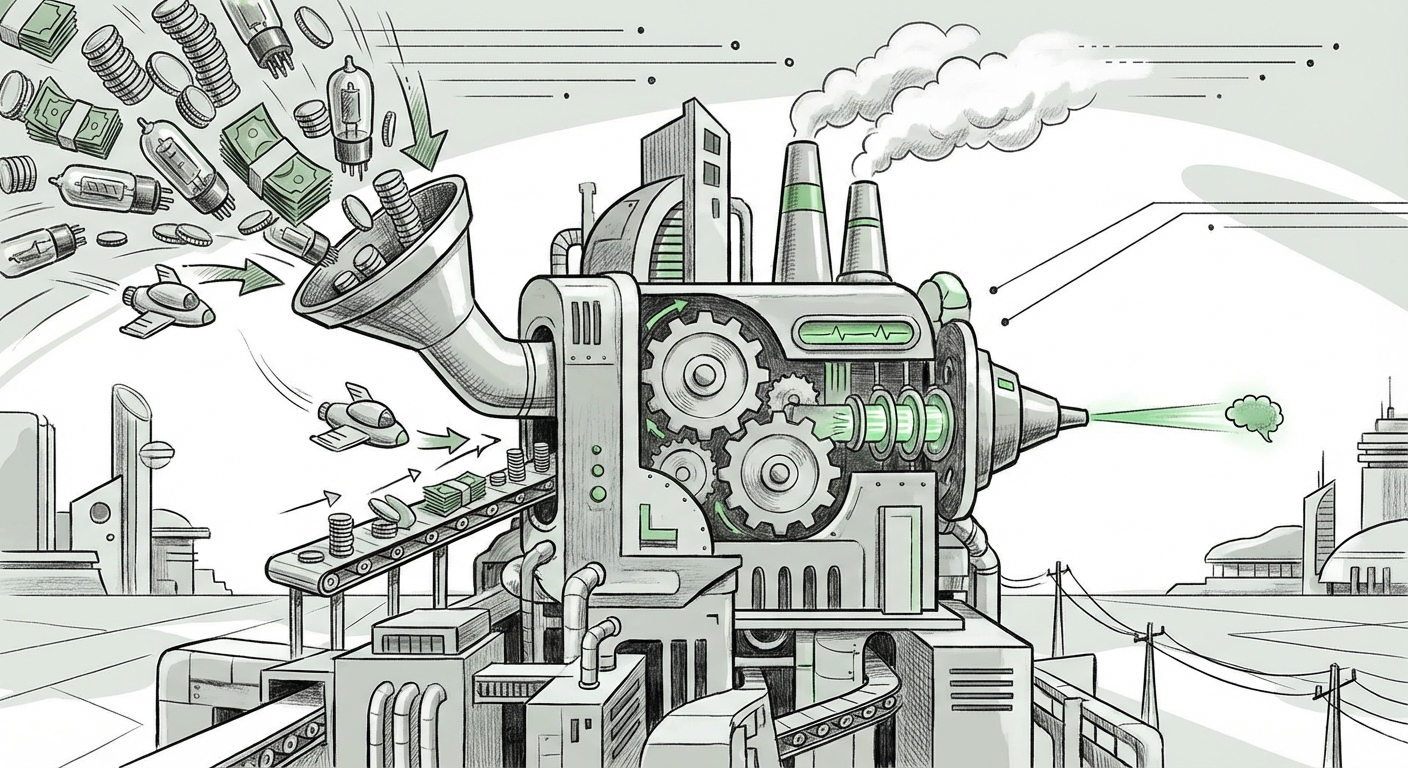

The narrative surrounding Artificial Intelligence has often been one of infinite potential and exponential growth. Yet beneath the surface of groundbreaking demos and soaring stock prices lies a stark financial reality: the race to build the most powerful AI models is *incredibly* expensive. The recent news that OpenAI is sharply raising its cash burn forecast, warning investors that the cost to train and run its advanced models is outpacing revenue growth, marks a critical inflection point. This isn't just a company update; it’s a declaration that the current frontier of AI is defined by a fundamental tension: Cost vs. Scalability.

To truly understand what this means for the future of technology, we must look beyond the headline number. We need to examine the infrastructure bottlenecks, the strategic responses from competitors, and the sustainability of a business model that relies on multibillion-dollar bets every few months.

The Core Problem: Compute is the New Oil

At its heart, the increased cash burn forecast confirms what many industry insiders have whispered: training a state-of-the-art Large Language Model (LLM) like the rumored GPT-5 or its successors requires capital expenditure (CapEx) on a scale previously reserved for building nuclear power plants or launching global satellite networks. This massive outlay is driven almost entirely by hardware.
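To put rough numbers on why hardware dominates, the sketch below uses a common rule of thumb from the scaling-law literature: training a dense transformer takes about 6 FLOPs per parameter per training token. Every input (parameter count, token count, per-GPU throughput, utilization, hourly price) is a hypothetical placeholder, not a figure disclosed by OpenAI or Nvidia, and the estimate covers only the compute for a single final training run, not failed experiments, data, staff, or the upfront cost of buying a cluster.

```python
from dataclasses import dataclass


@dataclass
class TrainingRun:
    """Hypothetical inputs for a back-of-the-envelope training cost estimate."""
    params: float              # model parameters (hypothetical)
    tokens: float              # training tokens (hypothetical)
    peak_flops_per_gpu: float  # peak accelerator throughput in FLOP/s (assumed)
    utilization: float         # fraction of peak actually sustained (assumed)
    usd_per_gpu_hour: float    # rental or amortized cost per GPU-hour (assumed)


def training_flops(run: TrainingRun) -> float:
    # Rule of thumb: ~6 FLOPs per parameter per token (forward + backward pass).
    return 6.0 * run.params * run.tokens


def gpu_hours(run: TrainingRun) -> float:
    # Convert total FLOPs into accelerator-hours at the sustained throughput.
    effective_flops_per_sec = run.peak_flops_per_gpu * run.utilization
    return training_flops(run) / effective_flops_per_sec / 3600.0


def training_cost_usd(run: TrainingRun) -> float:
    return gpu_hours(run) * run.usd_per_gpu_hour


if __name__ == "__main__":
    # Illustrative only: a 1-trillion-parameter model trained on 10 trillion tokens.
    run = TrainingRun(
        params=1e12,
        tokens=1e13,
        peak_flops_per_gpu=1e15,  # assumed ~1 PFLOP/s, the order of magnitude of a current data-center GPU
        utilization=0.4,          # assumed sustained utilization
        usd_per_gpu_hour=2.0,     # assumed price
    )
    print(f"Training compute: {training_flops(run):.2e} FLOPs")
    print(f"GPU-hours:        {gpu_hours(run):,.0f}")
    print(f"Compute cost:     ${training_cost_usd(run):,.0f}")
```

Because compute scales with the product of parameters and tokens, doubling both roughly quadruples the bill under this approximation, which helps explain how frontier training budgets can grow so quickly.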

The GPU Bottleneck: A Supply Chain Crisis

The engine of modern AI is the specialized accelerator chip, overwhelmingly supplied by Nvidia, and the scarcity of these components is the most visible evidence of the cost pressure. Reporting on the Nvidia H100 shortage shows that demand is so intense that even companies with deep pockets have faced long waits to acquire the hardware needed to *train* new models.

These chips are not just expensive to buy; they also require massive supporting infrastructure. A single data center cluster capable of cutting-edge training can cost billions of dollars once specialized cooling, networking, and power delivery are included. This hardware constraint acts as a hard ceiling on how quickly any company, even OpenAI, can innovate. The fact that costs are spiraling *beyond* initial projections suggests one of two things, or both: the models require far more data and compute cycles than anticipated, or the unit price of the necessary infrastructure, chiefly GPUs, continues to climb under overwhelming demand.
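The two explanations in the paragraph above can be separated with a standard price-volume variance split: compute spend is GPU-hours multiplied by price per GPU-hour, so an overrun breaks down into a volume effect (more compute than planned) and a price effect (each unit cost more). The function below is a minimal sketch of that accounting identity; the planned and actual figures in the example are invented for illustration.

```python
def decompose_overrun(planned_gpu_hours: float, planned_price: float,
                      actual_gpu_hours: float, actual_price: float) -> dict:
    """Split a compute cost overrun into a volume effect and a price effect.

    volume effect = (actual_hours - planned_hours) * planned_price
    price effect  = (actual_price - planned_price) * actual_hours
    The two effects sum exactly to actual_cost - planned_cost.
    """
    planned_cost = planned_gpu_hours * planned_price
    actual_cost = actual_gpu_hours * actual_price
    return {
        "planned_cost": planned_cost,
        "actual_cost": actual_cost,
        "overrun": actual_cost - planned_cost,
        "volume_effect": (actual_gpu_hours - planned_gpu_hours) * planned_price,
        "price_effect": (actual_price - planned_price) * actual_gpu_hours,
    }


if __name__ == "__main__":
    # Hypothetical plan: 10M GPU-hours at $2.00; hypothetical outcome: 14M at $2.50.
    result = decompose_overrun(10_000_000, 2.00, 14_000_000, 2.50)
    for key, value in result.items():
        print(f"{key:>14}: ${value:,.0f}")
```

In this made-up case, slightly more than half of the overrun comes from needing more compute and the rest from paying more per unit, which is the kind of attribution the "either more compute or pricier GPUs" question calls for.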

The Ecosystem Responds: Who is Paying the Bill?

OpenAI does not operate in a vacuum. Its financial stability is intrinsically linked to its primary strategic partner, Microsoft. Understanding this partnership illuminates the industrial scale of AI investment.

Microsoft’s Role as Financial Backstop and Infrastructure Giant

Reports on Microsoft's AI capital expenditure offer systemic corroboration of OpenAI's situation. Microsoft isn't just writing checks; it is physically building out the Azure supercomputers needed to run these models. When Microsoft signals massive increases in its own capital expenditure, often exceeding $20 billion per quarter, it corroborates the enormous operational costs OpenAI is describing. This isn't just OpenAI burning cash; the entire foundational layer of modern AI infrastructure requires continuous, massive capital injections.

For businesses, this means that relying on a foundational model provider also means relying on the financial health and strategic priorities of its hyperscaler partner. The relationship shields OpenAI from immediate bankruptcy but locks it into a technological path shaped by the needs of its cloud provider.

The Competitive Arena: Can Efficiency Win Over Brute Force?

If the current path is defined by an endless capital arms race, where is the innovation that might break this cycle? The answer lies in the competitive response from rivals like Meta and Google, who are exploring efficiency as a counter-strategy.

The Open-Source Challenge and Efficiency Gains

Meta's open-source Llama models highlight a crucial divergence in strategy. While OpenAI pursues AGI via the largest possible models (requiring the most capital), competitors are aggressively targeting smaller, faster, more specialized models that can run affordably on existing hardware. This pursuit of efficiency is vital for future scalability.

If Meta’s open-source models, or Google’s Gemini running on its custom Tensor Processing Units (TPUs), can achieve 85% of GPT-4's performance at 20% of the inference cost, the economic landscape shifts dramatically. In that case, the "cost spiral" OpenAI is experiencing might eventually be contained by optimized architectures or by specialized hardware designed for inference (running the model) rather than just training (building the model).
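The 85%-at-20% scenario is hypothetical, but the arithmetic behind "the economic landscape shifts dramatically" is simple: divide relative performance by relative cost. The sketch below treats "performance" as a single scalar, which is a simplification (real evaluations span many benchmarks), and the baseline price per query is an assumed placeholder.

```python
def value_per_dollar(relative_performance: float, relative_cost: float) -> float:
    """Performance per unit of inference spend, relative to a baseline model.

    Both inputs are fractions of the baseline (1.0 means "same as baseline"),
    so a result of 1.0 means parity and larger is better.
    """
    if relative_cost <= 0:
        raise ValueError("relative_cost must be positive")
    return relative_performance / relative_cost


def workload_cost(queries: int, baseline_usd_per_query: float, relative_cost: float) -> float:
    """Total inference bill for a workload, given a cost ratio versus the baseline."""
    return queries * baseline_usd_per_query * relative_cost


if __name__ == "__main__":
    # The hypothetical scenario from the text: 85% of the performance at 20% of the cost.
    ratio = value_per_dollar(0.85, 0.20)
    print(f"Performance per dollar vs. baseline: {ratio:.2f}x")

    # Hypothetical workload: 1M queries at an assumed $0.01 per query on the baseline model.
    baseline = workload_cost(1_000_000, 0.01, 1.0)
    efficient = workload_cost(1_000_000, 0.01, 0.20)
    print(f"Baseline bill: ${baseline:,.0f}  Efficient-model bill: ${efficient:,.0f}")
```

Under these assumptions the cheaper model delivers about 4.25 times the performance per dollar, which is why inference efficiency can matter more to buyers than leaderboard position.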

The Profitability Paradox: Can Revenue Keep Pace?

The most immediate implication for OpenAI is the sustainability of its business model. The company is raising its revenue forecasts, which is positive, but its cash burn is growing faster than that revenue. This forces a hard look at pricing and adoption rates.

Analyzing the SaaS vs. Compute Ratio

The final piece of the puzzle is comparing AI subscription retention rates against compute costs. For the API business, every query costs real money, and that cost is rising as users demand larger, smarter models. If the per-token price charged to customers stays flat or falls (due to competitive pressure) while the underlying inference cost rises, the profit margin shrinks or vanishes entirely.
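The margin squeeze can be made concrete with a few lines of unit economics. The sketch below assumes a per-million-token price that falls by a fixed fraction each period and an inference cost that rises by a fixed fraction; the starting price ($10), cost ($6), and 10% rates are hypothetical, chosen only to show how quickly the margin can flip negative.

```python
def gross_margin(price_per_m_tokens: float, cost_per_m_tokens: float) -> float:
    """Gross margin as a fraction of revenue for one million tokens served."""
    if price_per_m_tokens <= 0:
        raise ValueError("price must be positive")
    return (price_per_m_tokens - cost_per_m_tokens) / price_per_m_tokens


def project_margins(price: float, cost: float, price_change: float,
                    cost_change: float, periods: int) -> list[float]:
    """Margin in each period when price and cost change by fixed fractions per period.

    price_change and cost_change are per-period fractional changes,
    e.g. -0.10 for a 10% price cut or +0.10 for a 10% cost increase.
    """
    margins = []
    for _ in range(periods):
        margins.append(gross_margin(price, cost))
        price *= 1 + price_change
        cost *= 1 + cost_change
    return margins


if __name__ == "__main__":
    # Hypothetical: $10 price and $6 inference cost per million tokens,
    # price cut 10% per period under competition, cost up 10% per period.
    for period, m in enumerate(project_margins(10.0, 6.0, -0.10, 0.10, 4), start=1):
        print(f"Period {period}: gross margin {m:.1%}")
```

With these inputs the margin falls from 40% to roughly 27%, then about 10%, and turns negative in the fourth period.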

For businesses integrating AI, this suggests a necessary shift in mindset. You cannot treat advanced AI models as a cheap commodity service. They are high-value, capital-intensive resources. Businesses must move from simply experimenting with AI to deploying it only in areas where the generated value demonstrably outweighs the premium cost of accessing the frontier model.

Future Implications: What This Means for Leadership and Innovation

The soaring cash burn forecast signals three major shifts in the AI landscape:

- The Era of the Oligopoly: Only a handful of entities—those backed by near-limitless capital (Microsoft/OpenAI, Google, Amazon, perhaps Meta)—can afford to play in the absolute frontier. This concentrates the power to define the next generation of foundational AI in very few hands.

- The Rise of Inference Optimization: The economic pressure will force a massive innovation push into making models run cheaper (inference). Future market winners might not be those with the *best* foundational training, but those who can deliver the *most accessible* and *cheapest usable* intelligence.

- The Slowdown Risk: If revenue growth cannot sustainably outpace hardware CapEx, developers may be forced to slow down the pace of major model releases, prioritizing cost reduction over achieving incremental performance gains.

Actionable Insights for Businesses and Developers

How should businesses react to this capital-intensive reality?

1. Architect for Cost Flexibility (The "Model Agnostic" Approach)

Do not anchor your entire product line to the most expensive, bleeding-edge model. Design your applications so you can easily swap between models based on task complexity. Use the largest model only when absolutely necessary. For summarizing emails, a smaller, cheaper model suffices; for complex legal drafting, spring for the premium tier.
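One way to implement the model-agnostic approach is a small routing layer that maps each task type to a required capability level and picks the cheapest tier that meets it. The tier names, prices, and task mapping below are hypothetical placeholders rather than real model offerings; in practice the mapping would come from your own evaluations.

```python
from dataclasses import dataclass
from enum import IntEnum


class Complexity(IntEnum):
    LOW = 1     # e.g. summarizing an email
    MEDIUM = 2
    HIGH = 3    # e.g. complex legal drafting


@dataclass(frozen=True)
class ModelTier:
    name: str                   # hypothetical model identifier
    usd_per_m_tokens: float     # hypothetical blended price per million tokens
    max_complexity: Complexity  # hardest task level this tier is trusted with


# Placeholder tiers; names and prices are illustrative, not real offerings.
TIERS = (
    ModelTier("small-model", 0.20, Complexity.LOW),
    ModelTier("mid-model", 2.00, Complexity.MEDIUM),
    ModelTier("frontier-model", 15.00, Complexity.HIGH),
)

# Hypothetical task-to-complexity mapping, ideally derived from your own evals.
TASK_COMPLEXITY = {
    "summarize_email": Complexity.LOW,
    "classify_ticket": Complexity.LOW,
    "draft_marketing_copy": Complexity.MEDIUM,
    "draft_legal_contract": Complexity.HIGH,
}


def choose_model(task_type: str, tiers=TIERS) -> ModelTier:
    """Return the cheapest tier whose capability covers the task.

    Unknown task types default to HIGH so they are never under-served.
    """
    required = TASK_COMPLEXITY.get(task_type, Complexity.HIGH)
    for tier in sorted(tiers, key=lambda t: t.usd_per_m_tokens):
        if tier.max_complexity >= required:
            return tier
    raise LookupError(f"no tier can handle complexity {required.name}")


def estimated_cost(task_type: str, tokens: int) -> float:
    """Estimated spend for a request of `tokens` tokens routed by choose_model."""
    return tokens / 1_000_000 * choose_model(task_type).usd_per_m_tokens


if __name__ == "__main__":
    for task in ("summarize_email", "draft_marketing_copy", "draft_legal_contract"):
        tier = choose_model(task)
        print(f"{task:>22} -> {tier.name} (${estimated_cost(task, 2_000):.4f} per 2K tokens)")
```

Unknown task types default to the highest tier, trading cost for safety; a team optimizing aggressively for cost might instead default to the cheapest tier and escalate on failure.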

2. Prioritize Inference Efficiency in Deployment

When selecting deployment partners or building internal tooling, prioritize providers who demonstrate strong efficiency metrics (low latency, low energy usage per query). This is where cost savings will be found in the short term, even if training costs remain high for the giants.
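Efficiency claims are easiest to compare when reduced to the same units. The sketch below computes cost per query from per-token prices and a 95th-percentile latency from your own benchmark samples, then picks the cheapest provider that meets a latency target, breaking ties on energy per query when it is disclosed. Provider names, prices, and the latency target are assumptions you would replace with measured values.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class ProviderStats:
    name: str                             # hypothetical provider label
    usd_per_m_input: float                # assumed price per million input tokens
    usd_per_m_output: float               # assumed price per million output tokens
    latencies_ms: Sequence[float]         # latencies from your own benchmark runs
    wh_per_query: Optional[float] = None  # energy per query, if disclosed


def p95(samples: Sequence[float]) -> float:
    """95th-percentile latency using the nearest-rank method."""
    if not samples:
        raise ValueError("need at least one latency sample")
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n), 1-based, in integer math
    return ordered[rank - 1]


def cost_per_query(p: ProviderStats, input_tokens: int, output_tokens: int) -> float:
    return (input_tokens * p.usd_per_m_input + output_tokens * p.usd_per_m_output) / 1_000_000


def cheapest_within_slo(providers: Sequence[ProviderStats], input_tokens: int,
                        output_tokens: int, p95_slo_ms: float) -> Optional[ProviderStats]:
    """Lowest cost-per-query provider whose p95 latency meets the target.

    Ties on cost are broken by lower disclosed energy per query; providers
    that disclose nothing sort last among equals.
    """
    eligible = [p for p in providers if p95(p.latencies_ms) <= p95_slo_ms]
    if not eligible:
        return None
    return min(
        eligible,
        key=lambda p: (
            cost_per_query(p, input_tokens, output_tokens),
            p.wh_per_query if p.wh_per_query is not None else float("inf"),
        ),
    )


if __name__ == "__main__":
    fast = ProviderStats("provider-a", 1.00, 3.00, [120, 140, 150, 180, 210])
    cheap = ProviderStats("provider-b", 0.50, 1.50, [400, 450, 520, 610, 700])
    pick = cheapest_within_slo([fast, cheap], input_tokens=1_000, output_tokens=500, p95_slo_ms=300)
    print(pick.name if pick else "no provider meets the latency target")
```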

3. Evaluate the True ROI of "Frontier" Performance

Before paying top dollar for API access to the newest model, run a rigorous A/B test. Does the marginal improvement offered by the frontier model translate into a measurable ROI (e.g., a 10% better conversion rate or 20% time savings) that justifies the higher per-query cost? Often, older, cheaper models deliver most of the business value.
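A minimal version of that A/B decision has two parts: check that the conversion lift from the newer model is statistically distinguishable from noise (here with a standard two-proportion z-test), then check that the lift's dollar value exceeds the extra per-query cost. The conversion counts, value per conversion, and per-query costs in the example are hypothetical, and the test assumes a sample size fixed in advance (no peeking at interim results).

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference in conversion rates (pooled standard error).

    Returns (lift, z, p_value), where lift = rate_b - rate_a.
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; need some conversions and some non-conversions")
    z = (rate_b - rate_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_b - rate_a, z, p_value


def net_value_per_query(lift: float, value_per_conversion: float,
                        cost_a: float, cost_b: float) -> float:
    """Incremental value minus incremental cost, per query, of switching from A to B."""
    return lift * value_per_conversion - (cost_b - cost_a)


def worth_upgrading(conv_a, n_a, conv_b, n_b, value_per_conversion,
                    cost_a, cost_b, alpha=0.05) -> bool:
    """Upgrade only if the lift is significant AND it pays for the extra cost."""
    lift, _z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    return p_value < alpha and net_value_per_query(lift, value_per_conversion, cost_a, cost_b) > 0


if __name__ == "__main__":
    # Hypothetical test: cheaper model A converts 5.0%, frontier model B converts 6.0%.
    args = dict(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000, cost_a=0.002, cost_b=0.03)
    print("Worth it at $20/conversion:", worth_upgrading(value_per_conversion=20.0, **args))
    print("Worth it at $2/conversion: ", worth_upgrading(value_per_conversion=2.0, **args))
```

With a $20 value per conversion, a one-point lift easily pays for the pricier model; at $2 per conversion, the same statistically significant lift loses money on every query.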

4. Understand the Hardware Dependencies

Supply chain shocks (like the H100 shortage) can translate directly into price increases or service instability for you, the end user. Reducing reliance on any single vendor where possible is prudent risk management in this capital-intensive environment.
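Vendor diversification is easier when calls go through a thin failover wrapper. The sketch below tries providers in priority order and returns the first successful response; each provider is represented as a plain Python callable, a stand-in for whatever client code you actually use, since no specific vendor SDK is assumed.

```python
from typing import Callable, Sequence, Tuple

# Each provider is a (name, call) pair; `call` takes a prompt and returns text.
# These are placeholders for your own client code; no real SDK is assumed.
Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(Exception):
    """Raised when every configured provider has failed for a request."""


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # in production, catch only your clients' transient errors
            errors.append(f"{name}: {exc!r}")
    raise AllProvidersFailed("; ".join(errors))


if __name__ == "__main__":
    def flaky_primary(prompt: str) -> str:
        raise TimeoutError("primary provider timed out")  # simulated outage

    def backup(prompt: str) -> str:
        return f"backup answer to: {prompt}"

    name, text = complete_with_failover(
        "Summarize this email", [("primary", flaky_primary), ("backup", backup)]
    )
    print(name, "->", text)
```

Keeping the provider list as data also makes it straightforward to reorder providers when prices or availability change.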

The era of "move fast and break things" is giving way to an era of "build big and spend massively." OpenAI’s increased cash burn forecast is the clearest signal yet that the next phase of AI leadership will be less about clever algorithms alone and more about mastering the unforgiving economics of silicon and scale. The cost pressure is not a bug; it is a feature of building the most advanced intelligence the world has ever seen.