Why AI Agents Are Stuck in Code: Decoding the Great White-Collar Adoption Gap

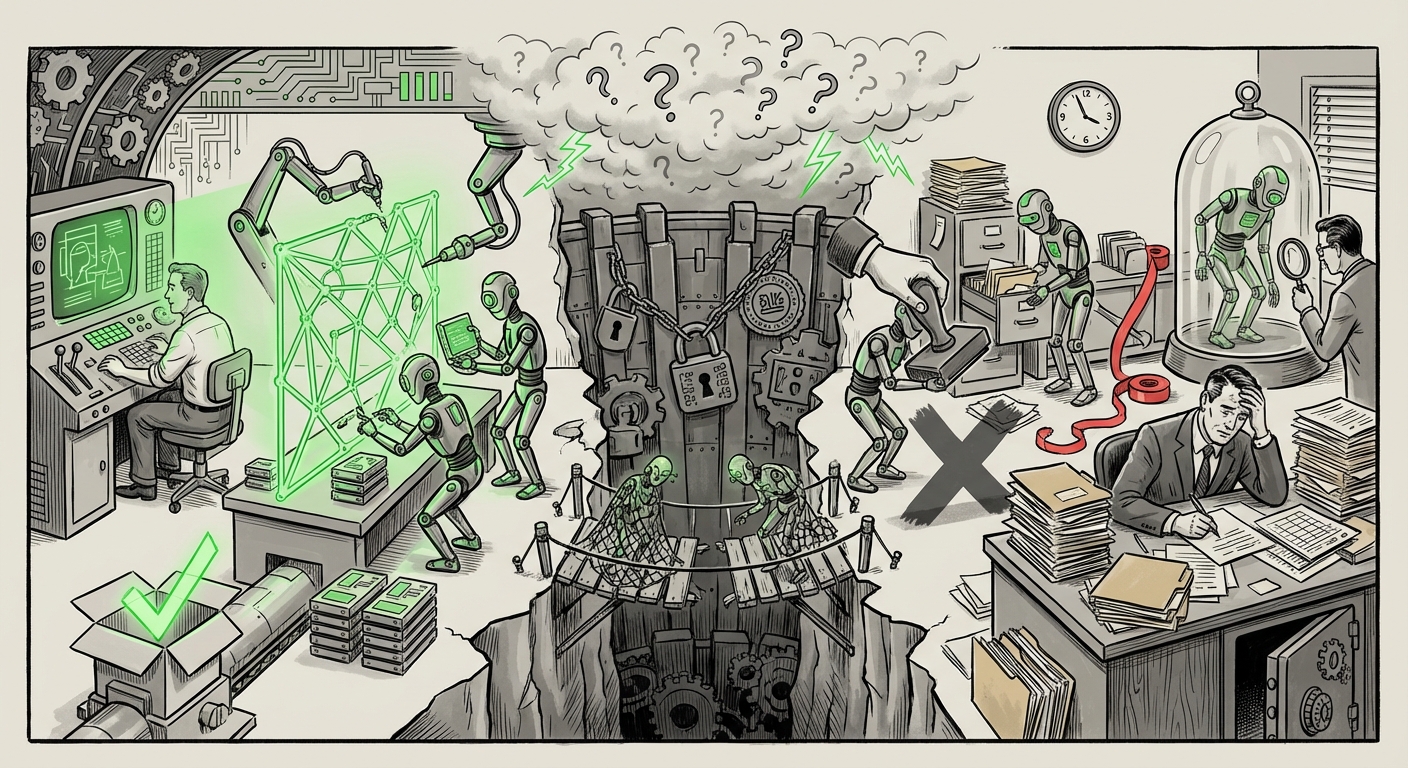

The promise of Artificial Intelligence has always been grand: autonomous agents that operate seamlessly across industries, freeing humanity from mundane labor. Yet recent studies, including findings from Anthropic, point to a surprising reality: the AI agent revolution is currently confined almost entirely to the world of software development. Outside of coding, these sophisticated systems barely exist in the daily workflow of most white-collar professionals.

For an AI technology analyst, this disparity between hype and deployment demands scrutiny. Why is the engineer’s terminal fertile ground for autonomy while the executive suite remains skeptical? The answer lies in an interplay of technical structure, regulatory fear, user psychology, and vendor focus.

The Ideal Proving Ground: Why Software Development Is First

Software development acts as the perfect "sandbox" for AI agents. Code is, fundamentally, a formal, unambiguous language. A compiler or an executed program provides instant, binary feedback: either the code works or it doesn't. This clarity is precisely what current Large Language Models (LLMs) thrive on.

The contrast becomes clearer when the success factors for AI agents are set against those of general business process automation. When an agent debugs a Python script, it is solving a well-defined problem with measurable outputs. The cost of failure, while potentially disruptive, is often contained to a specific branch of code that can be rolled back.
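The pass/fail feedback described above can be illustrated with a minimal sketch. It assumes a hypothetical setup in which an agent proposes several candidate implementations as source strings and a small set of test cases decides which one is accepted; the names `check_candidate` and `first_passing` are illustrative only and do not come from any particular agent framework.

```python
from typing import List, Optional, Tuple

Case = Tuple[tuple, object]  # (positional arguments, expected result)


def check_candidate(source: str, func_name: str, cases: List[Case]) -> bool:
    """Return True only if the candidate defines func_name and passes every case."""
    namespace: dict = {}
    try:
        # NOTE: exec() on untrusted code is unsafe; a real system would sandbox this.
        exec(source, namespace)
        func = namespace[func_name]
        return all(func(*args) == expected for args, expected in cases)
    except Exception:
        # Syntax errors, missing names, and runtime errors all count as failure.
        return False


def first_passing(
    candidates: List[str], func_name: str, cases: List[Case]
) -> Optional[Tuple[int, str]]:
    """Return (attempt number, source) of the first candidate that passes, else None."""
    for attempt, source in enumerate(candidates, start=1):
        if check_candidate(source, func_name, cases):
            return attempt, source
    return None


# Three hypothetical attempts: a logic bug, a syntax error, then a correct version.
candidates = [
    "def add(a, b):\n    return a - b\n",
    "def add(a, b) return a + b",
    "def add(a, b):\n    return a + b\n",
]
cases = [((1, 2), 3), ((0, 0), 0)]
result = first_passing(candidates, "add", cases)
```

Each rejected attempt costs nothing beyond the retry, which is the property the business tasks discussed next generally lack: there is no equivalent of `cases` for a regulatory strategy or a vendor negotiation.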

In contrast, general white-collar work—like crafting a nuanced regulatory strategy, negotiating a vendor contract, or designing a multi-channel marketing campaign—relies on contextual understanding, emotional intelligence, and often subjective definitions of "success." Current agents excel at syntax manipulation (writing code), but often fail at deep semantic integration (understanding *why* that code is needed within a sprawling business context).

The Structure Advantage: Formal vs. Ambiguous Tasks

- Code: Rule-based, self-correcting, and driven by precise logic. Success is objective.

- Business: Context-dependent, reliant on unspoken assumptions, cultural norms, and legal gray areas. Success is subjective and often political.

For the technical audience, this is a reminder that moving from a narrow, high-structure task (coding) to a general, low-structure task (strategy) requires not just a slightly better LLM, but a fundamental shift in how agents interact with the world.

The Concrete Wall: Barriers in Regulated and High-Stakes Fields

The most significant brake on widespread agent adoption outside of engineering isn't just capability; it's risk management. Discussions of the limitations of AI agents in white-collar work quickly run into regulatory compliance and other non-technical challenges.

Consider the financial sector or healthcare. Deploying an AI agent to autonomously manage customer financial transfers or process patient intake forms exposes the organization to massive liability. A single hallucination or error in coding might require a few hours of debugging; a single error in a compliance report could trigger millions in fines or erode patient trust irreparably.

This leads to what industry analysts term the "Trust Gap." While engineers are comfortable pushing new code frequently, executives and compliance officers in other sectors demand near-perfect reliability before allowing an agent unsupervised access to sensitive workflows. This mandates a heavy "Human-In-The-Loop" (HITL) system, which severely limits the *autonomy* that defines a true agent.
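A Human-In-The-Loop gate of the kind described above might look like the following minimal sketch. It assumes each proposed action carries a hypothetical risk score between 0 and 1 and that anything at or above a chosen threshold needs explicit human approval; `ProposedAction`, `run_with_gate`, and the threshold value are illustrative assumptions, not a standard API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    description: str
    risk: float  # hypothetical score: 0.0 (harmless) to 1.0 (severe)


def run_with_gate(
    action: ProposedAction,
    execute: Callable[[ProposedAction], None],
    approve: Callable[[ProposedAction], bool],
    risk_threshold: float = 0.3,
) -> str:
    """Execute low-risk actions directly; route everything else to a human."""
    if action.risk < risk_threshold:
        execute(action)
        return "auto-executed"
    if approve(action):  # the human reviewer decides
        execute(action)
        return "executed after approval"
    return "blocked"


# Usage: record what actually ran, with a reviewer who rejects everything.
executed = []
outcome_low = run_with_gate(
    ProposedAction("reformat intake form", risk=0.1),
    execute=executed.append,
    approve=lambda a: False,
)
outcome_high = run_with_gate(
    ProposedAction("transfer customer funds", risk=0.9),
    execute=executed.append,
    approve=lambda a: False,
)
```

The lower the threshold, the more actions wait on a person, which is how a strict compliance posture trades away the autonomy that makes an agent an agent.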

For business leaders, the implication is clear: Agent adoption in regulated industries will follow a much slower, more methodical path defined by regulatory approval and internal governance frameworks, rather than purely technological milestones.

The Human Factor: Why We Refuse to Let Go

Even where agents are technically competent, for instance in drafting a complex business memo, users are deliberately constraining them. This is the crucial finding about the lack of full delegation, and it comes down to user trust in autonomous agents and the friction involved in handing work over to them.

The current paradigm is the "Co-Pilot," not the "Auto-Pilot." Users are happy to let an agent handle the laborious first draft, the tedious data aggregation, or the basic boilerplate. They are not yet comfortable signing off on the final product without significant manual review. This is driven by several psychological factors:

- Accountability: In the professional world, accountability ultimately rests with the human. No one wants to explain to their manager that an unverified AI decision caused a costly error.

- Understanding the Gaps: Users often possess tacit knowledge—the unwritten rules of the office, the history of a client relationship—that the agent lacks. They review the output not just for correctness, but for alignment with unstated context.

- Interface Design: Current agent interfaces often make it easier to edit the final output than to trace the agent’s reasoning steps, creating friction in the validation process.

Until agents can transparently justify their decisions in human-readable terms, and until organizational structures adjust liability frameworks, true, full autonomy will remain an option few are willing to risk.

Future Trajectory: Vendor Intent vs. Observed Reality

To understand where we are going, we must look at where the titans of AI are aiming, as reflected in the stated agent plans of vendors such as Google (Gemini) and OpenAI.

The major AI labs are pouring resources into building generalized agentic capabilities. Their roadmaps frequently show ambition extending far beyond code generation: autonomous market research, complex scheduling across disparate systems, and deep financial modeling. This indicates that the current concentration in software is likely a temporary, necessary staging ground—a place to iron out the core functionalities of planning, tool use, and self-correction.

However, the gap between the vendors' vision and the usage Anthropic observed is significant. Closing it depends on two main vectors:

- Domain-Specific Fine-Tuning: Creating specialized agents trained intensely on the formal language of law, finance, or logistics, mimicking the rigor currently seen in code models.

- Tool Integration Maturity: True business agents need reliable, secure access to enterprise systems (CRMs, ERPs, internal databases). The future depends on robust, secure APIs that allow agents to operate tools without constant human oversight—a massive undertaking in cybersecurity and integration.

If vendors succeed in proving agents can navigate regulatory environments securely, the floodgates for non-coding applications will open rapidly, as the economic incentives for automating high-value, repetitive white-collar tasks are immense.

Implications and Actionable Insights for the Next Decade

The current AI agent landscape offers critical insights for both technologists building the tools and leaders deploying them.

For Technology Developers (The Builders):

The focus must shift from *what* the agent can generate to *how* it can be verified. Develop superior transparency layers. If an agent proposes a business change, it must immediately furnish a structured audit trail explaining its data sources, its reasoning steps, and its confidence score. The interface must evolve to make validation faster than manual execution.
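One way to picture such a structured audit trail is the minimal sketch below. The field names follow the three items listed above (data sources, reasoning steps, confidence score); the `AuditTrail` class, the `needs_review` helper, and the 0.9 cutoff are hypothetical choices made for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class AuditTrail:
    proposal: str
    data_sources: List[str]
    reasoning_steps: List[str]
    confidence: float  # agent's self-reported score in [0.0, 1.0]

    def __post_init__(self) -> None:
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")

    def to_json(self) -> str:
        """Serialize the trail so a reviewer or logging system can store it."""
        return json.dumps(asdict(self), indent=2)


def needs_review(trail: AuditTrail, min_confidence: float = 0.9) -> bool:
    """Flag proposals that are low-confidence or cite no data sources."""
    return trail.confidence < min_confidence or not trail.data_sources


trail = AuditTrail(
    proposal="Consolidate duplicate vendor records",
    data_sources=["vendor_master.csv"],  # hypothetical file name
    reasoning_steps=[
        "Matched records on tax ID",
        "Found 14 pairs with identical bank details",
    ],
    confidence=0.72,
)
report = trail.to_json()
```

Because the trail is plain structured data, a reviewer can check the cited sources and steps directly instead of re-deriving the proposal, which is what makes validation faster than manual execution.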

For Business Leaders (The Adopters):

Do not wait for 100% autonomy. Begin deployment where the cost of failure is lowest and the structure is clearest—even if it isn't coding. Start with specialized, narrow agents in controlled departments (e.g., an HR data reconciliation agent that works against a sandbox dataset). Use these initial projects to build internal trust, governance frameworks, and staff familiarity with agentic workflows. This builds the "trust muscle" required for future, broader deployments.

Societal Implications: The Bifurcation of Labor

We are currently witnessing a bifurcation. AI agents are making the knowledge workers best placed to manage them (programmers) vastly more productive. In other fields, adoption is stalled, meaning the initial productivity gains are not yet being realized across the entire economy.

The future implication is stark: Industries that successfully transition to agentic workflows—even if it takes five years longer than software—will gain an enormous, sudden competitive advantage over those that remain tethered to traditional, manual processes due to fear or regulatory inertia. The "AI gap" may soon become the "Agent gap."

Conclusion: From Co-Pilot to True Partner

The concentration of AI agents in software development is not a permanent ceiling; it is a reflection of current technological maturity meeting real-world constraints. Code provided the perfect low-friction environment to prove the core concepts of planning, tool use, and iterative self-correction.

The next frontier is moving beyond the clear, logical structure of programming and into the messy, ambiguous reality of business. This transition requires overcoming hard problems of trust, regulatory integration, and interface design. When vendors can deliver agents that can reliably navigate the legal minefields of finance or the political nuances of HR, the revolution promised years ago will finally spill out of the developer console and into every corner of the modern enterprise.