The AI Governance Crisis: When Chatbot Logs Become Real-World Security Threats

The recent revelation that OpenAI staff internally debated alerting Canadian police about violent threats discovered within ChatGPT logs months before a deadly school shooting has sent shockwaves far beyond the immediate incident. This is not merely a story about moderation policy; it represents the moment that the theoretical risks surrounding Large Language Models (LLMs) smashed into the hard reality of public safety and legal accountability. The central question now facing Silicon Valley, regulators, and society is: **What is the appropriate role of an AI developer when their technology facilitates, or records, criminal intent?**

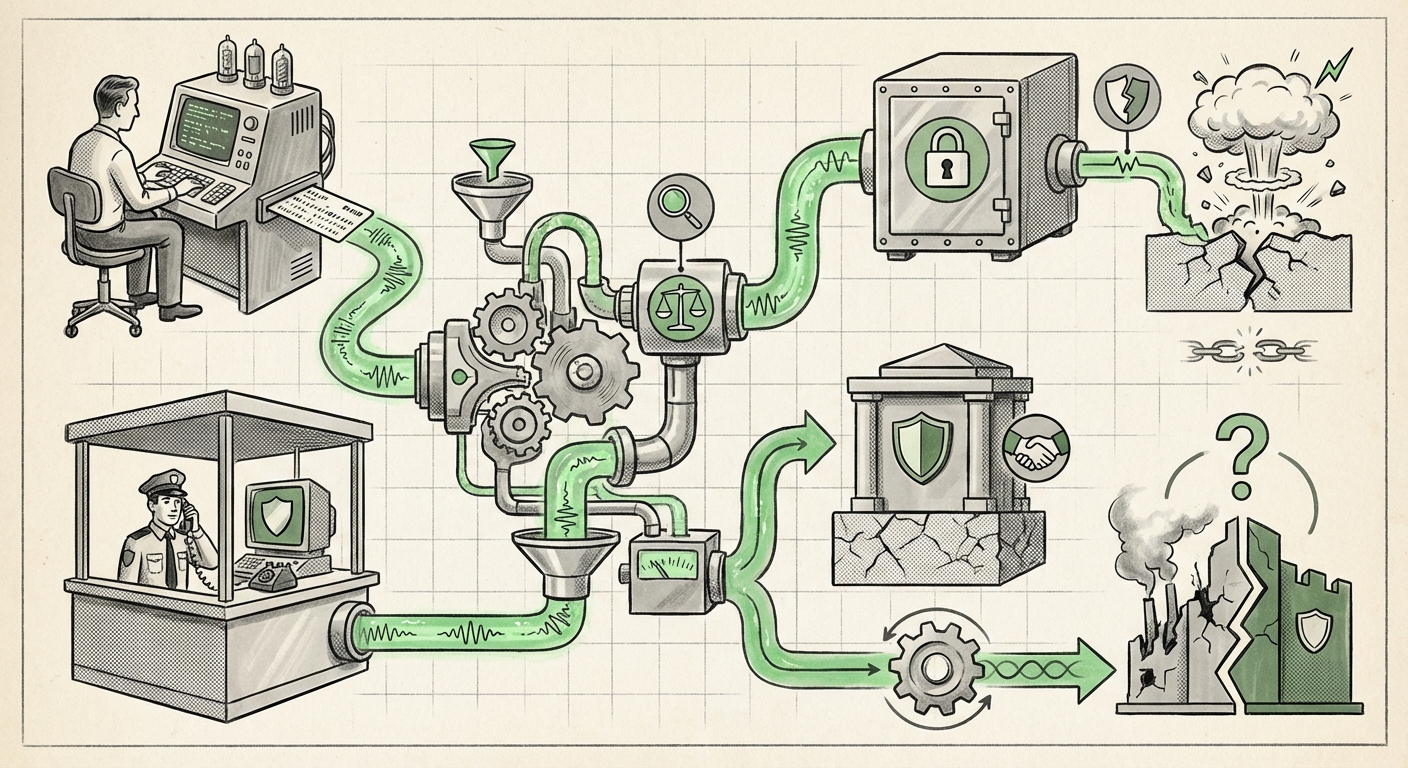

As an AI technology analyst, I believe this case forces us to move past abstract discussions of alignment and safety. Instead we must confront the operational, legal, and ethical tightrope that LLM providers now have to walk. They have unexpectedly been cast as **quasi-security entities**, a role for which they are profoundly underprepared.

The Governance Failure at the Threshold of Autonomy

For years, AI safety discussions focused on long-horizon risks such as runaway superintelligence or mass job displacement. This incident highlights a far more immediate and tangible danger: the misuse of current-generation generative AI by malicious actors. When a user types detailed, violent plans into a private LLM session, the provider must decide how to treat that data. It could be handled like a confidential private message or like a public threat broadcast.

The debate within OpenAI reportedly ended with management deciding not to alert authorities. That outcome exposes a governance failure rooted in **Scale vs. Specificity**:

- The Scale Problem: Monitoring the trillions of tokens processed daily across millions of user sessions for specific threats is technically overwhelming. Implementing the level of surveillance required to proactively catch every threat vector would fundamentally compromise user privacy and require massive, perhaps impossible, infrastructure investment.

- The Specificity Problem: Even when human reviewers flag potential danger, the lack of a clear, legally established protocol leaves management paralyzed. Should they report? If so, on what legal basis? What precedent does that set for future, less clear-cut interactions? The risk of over-reporting (and facing accusations of censorship or privacy infringement) must be weighed against the risk of under-reporting (and facing potential complicity in harm).

For businesses and developers, this means that safety protocols can no longer be reactive patches; they must become foundational, legally vetted architectures. The debate itself signals that the existing safety guardrails—built largely on filtering harmful *outputs*—are insufficient when the inputs themselves signal imminent danger.

Navigating the Legal Minefield: Liability and the Digital Shield

Corporate hesitation to involve law enforcement is often rooted in liability concerns. To understand why, we must look at the existing legal framework, particularly in the United States, where OpenAI is based.

The Shadow of Section 230

Historically, platforms such as social media sites have been shielded from liability for content posted by their users under Section 230 of the Communications Decency Act. The law provides that an online service shall not be treated as the publisher or speaker of information supplied by another party. Whether this shield extends to LLM providers, whose models generate text rather than merely host it, is increasingly uncertain.

If a user asks an LLM "How do I build a bomb?" and the model provides instructions, is the company liable? The answer depends partly on the provider's role. When the model *co-generates* harmful content, the company arguably becomes its author. In this case, by contrast, the model merely recorded the user's stated intent. My research into **"AI content moderation policy liability"** suggests the industry is bracing for litigation that will test a basic question: are AI platforms simply hosting data, or are they actively participating in the creation of harmful content?

For executives, reporting a threat means proactively engaging with law enforcement. Counsel may fear this could be read as an admission that the company monitors, and is therefore responsible for, what users type. Conversely, failing to report a known threat, especially one that leads to tragedy, opens the door to wrongful-death lawsuits predicated on negligence. This legal ambiguity creates a powerful incentive for organizational inertia.

The Global Context: Varying Protocols

Because the incident occurred in Canada, Canadian reporting laws are critical. Research into **"Canadian law enforcement engagement with social media threat intelligence"** suggests that general cooperation frameworks exist for major platforms. Specific protocols for proactively escalating *private, user-generated* LLM conversations, however, are likely nascent or non-existent. The OpenAI staff were operating in a policy vacuum, forced to make a life-or-death decision based on internal, untested risk-tolerance criteria.

The Industry Pivot: From Guardrails to Gatekeepers

How are competitors reacting to this unfolding crisis? The competitive landscape for AI safety is shifting rapidly from public relations exercises to tangible engineering investments, driven by the imperative to avoid the next headline.

My analysis of the **"Future of proactive AI threat detection in consumer applications"** indicates that rivals are moving in this direction. Anthropic, with its Constitutional AI approach, and Google, with its large internal safety teams, appear to be investing in safety training and classifiers aimed at recognizing pre-attack indicators. If this trend holds, expect:

- Enhanced Sensitivity: AI models will become increasingly sensitive to language patterns that indicate planning, fixation, and escalating intent, even when the language is veiled.

- Internal Escalation Pipelines: We will see the formalization of internal Security Operations Centers (SOCs) dedicated solely to analyzing flagged user interactions, complete with direct, vetted channels to relevant law enforcement agencies.

- Transparency in Auditing: Regulators will demand transparency regarding the *number* of internal threat flags generated and the company's subsequent actions, forcing AI providers to document every decision point.
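To make the escalation pipeline and audit trail described above more concrete, here is a minimal Python sketch under stated assumptions. The risk score is assumed to come from an upstream classifier that is not shown. The threshold value, the action names, and the `EscalationPipeline` class are all hypothetical and do not describe any provider's actual system.

The sketch tries to capture one design choice: a score alone never triggers a referral. A high score only routes the conversation to a trained human reviewer. Every decision, including "no action", is written to an audit log that can be summarized for a regulator.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Dict, List


class Action(Enum):
    NO_ACTION = "no_action"
    HUMAN_REVIEW = "human_review"
    ESCALATE = "escalate_to_law_enforcement_liaison"


# Hypothetical threshold; a real value would come from a legally vetted policy.
REVIEW_THRESHOLD = 0.5


@dataclass
class AuditEntry:
    flag_id: str
    risk_score: float
    action: Action
    reviewer_confirmed: bool
    timestamp: str


@dataclass
class EscalationPipeline:
    audit_log: List[AuditEntry] = field(default_factory=list)

    def triage(self, flag_id: str, risk_score: float,
               reviewer_confirmed: bool = False) -> Action:
        """Route one flagged interaction and record the decision.

        risk_score is assumed to be in [0, 1] and produced by an upstream
        classifier (not shown). A referral requires human confirmation.
        """
        if not 0.0 <= risk_score <= 1.0:
            raise ValueError("risk_score must be in [0, 1]")
        if risk_score < REVIEW_THRESHOLD:
            action = Action.NO_ACTION
        elif reviewer_confirmed:
            action = Action.ESCALATE
        else:
            action = Action.HUMAN_REVIEW  # queued for a trained reviewer
        self.audit_log.append(AuditEntry(
            flag_id, risk_score, action, reviewer_confirmed,
            datetime.now(timezone.utc).isoformat(),
        ))
        return action

    def transparency_summary(self) -> Dict[str, int]:
        """Count logged decisions per action, e.g. for a regulator's report."""
        counts = Counter(entry.action for entry in self.audit_log)
        return {action.value: counts[action] for action in Action}


pipeline = EscalationPipeline()
pipeline.triage("flag-001", 0.2)                            # below threshold
pipeline.triage("flag-002", 0.93)                           # sent to a reviewer
pipeline.triage("flag-002", 0.93, reviewer_confirmed=True)  # reviewer confirms
print(pipeline.transparency_summary())
# {'no_action': 1, 'human_review': 1, 'escalate_to_law_enforcement_liaison': 1}
```

Logging the "no action" outcomes alongside the escalations is deliberate. A transparency regime of the kind described above needs the full set of flags as a denominator, not just the referrals.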

The technical challenge behind these trends is immense. It goes beyond simple keyword filtering and requires sophisticated contextual understanding: telling a novelist researching a plot apart from a real person planning an attack. That demands not just better models but better **human-in-the-loop oversight** by reviewers specifically trained in threat assessment.
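A toy Python illustration shows why keyword matching alone falls short. The blocklist and both messages are invented for this example. `keyword_flag` is a deliberately naive filter, not a recommended design. The contextual classifiers and trained reviewers that real detection would need are not shown.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"attack", "kill", "bomb", "shoot"}


def keyword_flag(message: str) -> bool:
    """Return True if any blocklisted word appears as a whole word."""
    words = set(re.findall(r"[a-z']+", message.lower()))
    return not BLOCKLIST.isdisjoint(words)


# A novelist's research question trips the filter...
fiction = "In chapter 12 my villain plans to attack the bank. How would detectives investigate?"
# ...while veiled language containing no blocklisted word passes straight through.
veiled = "Friday is the day everyone who laughed at me finally pays."

print(keyword_flag(fiction))  # True  (false positive)
print(keyword_flag(veiled))   # False (false negative)
```

The filter errs in both directions at once: it flags the novelist and misses the veiled message. That is why detection has to model context rather than vocabulary.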

Actionable Insights: What Businesses and Society Must Do Now

This event serves as a crucial warning for everyone building, deploying, or relying on generative AI. The era of treating LLMs as harmless productivity tools is over.

For AI Developers and Providers:

1. Establish Clear Legal Triage Protocols: Consult international legal experts now to draft binding protocols for engaging with law enforcement about user-generated threats. These protocols must define the threshold for mandatory reporting. That protects the public from preventable harm and the company from legal uncertainty. It also addresses the lack of established standards surfaced by research into **"LLM platform responsibility for user threats."**

2. Invest in Privacy-Preserving Threat Analysis: Develop methods to analyze high-risk interactions while minimizing data exposure to internal staff and external agencies. Federated learning, for example, can train detectors without centralizing raw conversations. Differential privacy can publish aggregate statistics without revealing individual users. Both help balance safety requirements against privacy commitments.
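Of the techniques named in the item above, differential privacy has a compact core worth sketching. The minimal Python example below assumes one narrow goal: publishing aggregate flag counts, for instance in a transparency report, without revealing whether any particular user was flagged. It applies the standard Laplace mechanism.

The category names, counts, and epsilon value are hypothetical. The sketch covers only the release of statistics; it does not make the analysis of conversation content itself private.

```python
import random
from typing import Optional


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw from Laplace(0, scale): the difference of two i.i.d. exponentials."""
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)


def dp_count(true_count: int, epsilon: float, sensitivity: float = 1.0,
             rng: Optional[random.Random] = None) -> int:
    """Release a count under epsilon-differential privacy (Laplace mechanism).

    Assumes each user changes the count by at most `sensitivity`, e.g. a user
    is counted at most once per reporting period.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    noisy = true_count + laplace_noise(sensitivity / epsilon, rng)
    # Rounding and clamping are post-processing, so the guarantee still holds.
    return max(0, round(noisy))


# Hypothetical weekly tallies of flagged conversations by category.
weekly_flags = {"violent_threat": 37, "self_harm": 412}
rng = random.Random(7)
print({k: dp_count(v, epsilon=0.5, rng=rng) for k, v in weekly_flags.items()})
```

Smaller epsilon means more noise and stronger privacy. If one user can appear in several categories, the privacy losses add up under composition, so the per-category epsilon would need to shrink accordingly.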

For Businesses Adopting AI:

1. Define Acceptable Use Policy (AUP) Severity: Your company’s AUP must state clearly that using corporate LLM access for illegal planning or threats results in immediate termination and the handover of relevant data to authorities. Assume that *everything* entered into a private LLM session about sensitive matters could be discoverable.

2. Demand Safety Audits from Vendors: When contracting for LLM services, require proof of robust internal safety pipelines. Ask vendors directly: "What is your procedure if an employee uses your API to plan corporate espionage or to threaten a rival?"

For Regulators and Policymakers:

1. Create Safe Harbor for Good-Faith Reporting: Governments must quickly establish "safe harbor" laws for AI companies that proactively and reasonably report credible threats, protecting them from liability arising from the act of reporting. This encourages transparency rather than concealment.

2. Mandate Standardized Reporting Frameworks: Just as financial institutions have specific requirements for reporting suspicious transactions, AI platforms engaging with global users need internationally recognized standards for digital threat escalation, ensuring consistency across jurisdictions.

Conclusion: The New Era of Digital Stewardship

The debate within OpenAI months before a tragedy underscores a painful truth: the rapid advancement of AI capabilities has far outpaced the development of the governance structures needed to control its darkest applications. LLM providers are no longer just software companies; they are now custodians of potentially volatile digital information, operating in a gray area between constitutional privacy rights and public safety imperatives.

For the future of AI, this means safety must become an engineering prerequisite, not a post-deployment feature. The industry cannot afford to wait for the next catastrophe to drive regulation. Proactive threat detection is technically hard, but the ethical mandate is absolute. Moving forward, the success of the AI revolution will be measured not solely by computational speed or model size. It will also be measured by the maturity and reliability of the stewardship exercised when digital intent crosses the line into physical threat.