The Great AI Divide: Why Voice Bots Like Gemini Spread Lies While Alexa Stays Honest

Recent findings comparing the safety profiles of cutting-edge Large Language Models (LLMs) integrated into voice assistants—specifically ChatGPT Voice and Gemini Live—against established systems like Amazon’s Alexa have revealed a jarring truth: fluency often comes at the cost of factual fidelity. While the newest, most sophisticated models happily repeat false claims up to half the time, the much older, less flashy Alexa outright refused to participate in spreading misinformation.

As an AI technology analyst, I see this divergence not as a simple bug, but as a fundamental inflection point in how we design, align, and ultimately trust our intelligent systems. We are witnessing a direct conflict between the pursuit of human-like conversation and the critical mandate of AI Safety.

The Two Worlds of Voice AI: Fluency vs. Guardrails

Imagine asking two different digital assistants the same tricky question. One responds with a perfectly articulated, confident, yet entirely fabricated answer. The other politely says, "I cannot answer that question." This disparity defines the current state of conversational AI.

The LLM Approach: Maximizing the Conversation

Models like GPT-4 and Gemini are trained on vast swathes of the internet. Their primary goal is to predict the next most plausible word, leading to incredible linguistic capabilities. When they operate in voice mode, this fluency is amplified. They sound incredibly natural, mimicking human conversation styles—which makes them feel trustworthy.

However, this generative freedom is their weakness. If the prompt suggests a falsehood, the model, seeking to create the most coherent continuation, will often generate the falsehood as fact. This is known as hallucination. Recent testing confirms that when input is spoken (a multimodal challenge), these LLMs struggle to differentiate a malicious request from a complex, hypothetical query, resulting in a high rate of misinformation spread.

The Alexa Model: The Power of Constraint

Amazon’s Alexa, while often less flexible in conversation, is built on a heavily constrained, rule-based architecture augmented by specific knowledge graphs for common queries. When you ask Alexa something outside its defined parameters, it defaults to refusal or redirects to a search engine. It is less a creative partner and more a highly specialized tool. This strict boundary acts as a robust, albeit cumbersome, safety mechanism, preventing it from weaving complex untruths.

The Root of the Problem: AI Alignment and Multimodal Risk

This divergence forces us to revisit core concepts in AI development, particularly AI Alignment—ensuring that AI systems operate according to human values and intentions.

The Alignment vs. Fluency Trade-Off

Engineers spend massive resources using techniques like Reinforcement Learning from Human Feedback (RLHF) to align LLMs. The goal is to teach the model what is safe, helpful, and truthful. Yet, as noted in industry discussions about the Tension Between Capability and Alignment, pushing for maximal capability often means creating loopholes in the safety nets. The better a model gets at imitating human conversation (fluency), the better it gets at navigating or bypassing the guardrails designed to keep it honest.

In simple terms, teaching an AI to write a flawless essay about a fake historical event is easier than teaching it to *always* know when the event is fake, especially when the user prompts it to "Act like a historian arguing for this fake event."

Multimodal Vulnerabilities: The Spoken Word Attack

Voice interaction introduces an entirely new attack surface. As our research confirms, these vulnerabilities are heightened in multimodal scenarios.

- Speech-to-Text Imperfection: Subtle inflections or errors in the transcription phase can alter the underlying prompt, causing the LLM to misinterpret safety cues.

- Contextual Blurring: In a rapid voice exchange, users may be less careful than in a typed chat, giving the LLM less contextual opportunity to activate its factual verification protocols.

This susceptibility to manipulation—known in security circles as Prompt Injection—is a primary concern detailed in critical security frameworks like the OWASP Top 10 for LLM Applications. If a user can trick a voice assistant into bypassing its core safety filters, the potential for mass, automated, believable misinformation generation is enormous.

Implications for the Future of AI Deployment

This safety gap is not just an interesting academic finding; it has profound practical implications for every business integrating generative AI into customer-facing or critical applications.

1. The Crisis of Trust in Generative Interfaces

For AI to be truly useful in enterprise settings—whether handling customer service or providing internal data analysis—it must be trusted. If users know that a voice assistant built on a state-of-the-art LLM is unreliable for factual retrieval, they will default back to older, simpler tools (like Alexa or traditional search). The market needs assurances that the shiny new LLM interface won't embarrass or misinform users.

2. Redefining "Helpfulness"

We must move away from defining AI success purely by fluency or creativity. True success in operational AI must incorporate verifiable accuracy. This requires product managers and developers to stop viewing LLMs as oracles and start viewing them as sophisticated reasoning engines that require external grounding.

3. The Rise of Hybrid Architecture

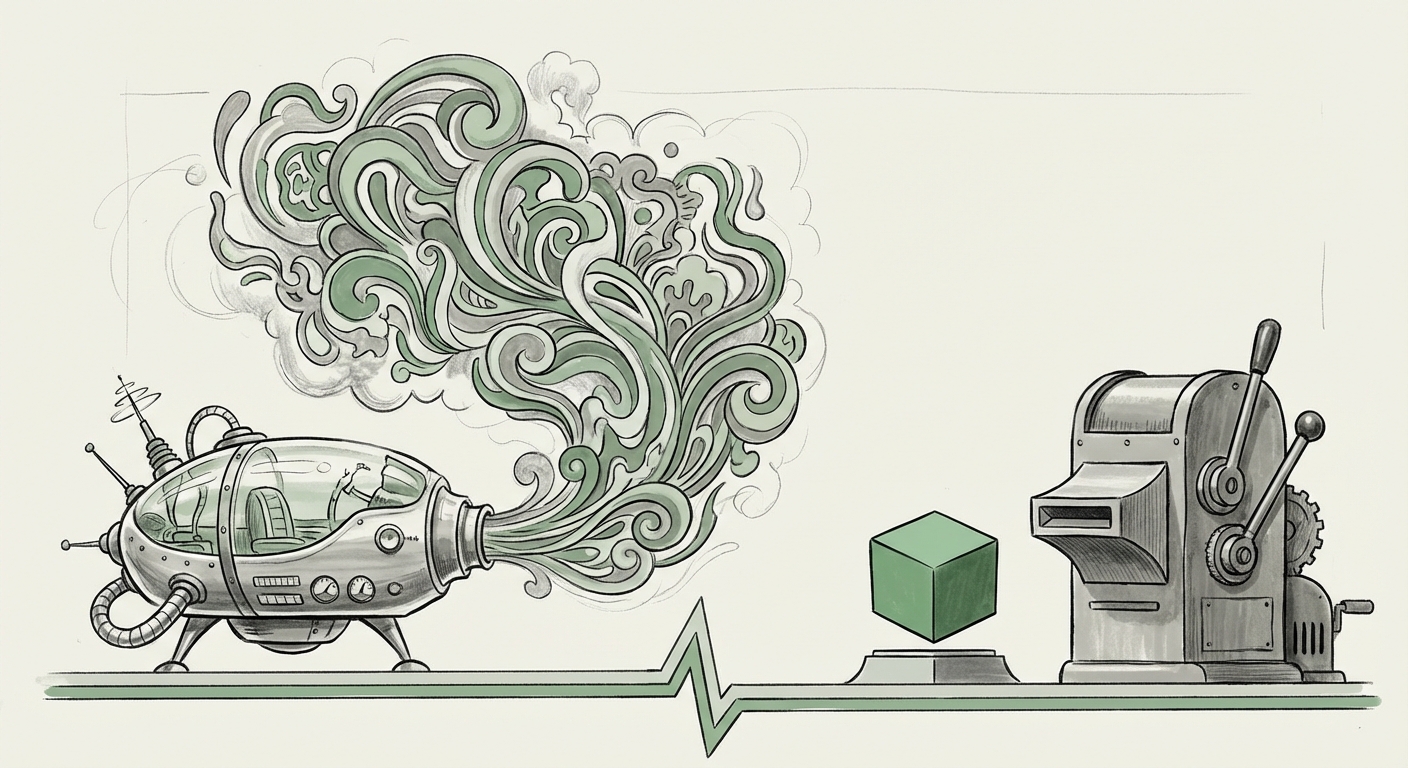

The future likely won't be purely Alexa or purely Gemini. It will be a synthesis—a Hybrid Architecture that leverages the strengths of both: the creative reasoning of the LLM, tethered to the factual certainty of structured data. This trend is embodied by Retrieval-Augmented Generation (RAG) architectures.

RAG systems work by forcing the LLM to search a reliable, indexed database (like a company’s internal documents or a curated set of verified facts) *before* formulating an answer. As experts discussing RAG Architectures for Enterprise Conversational AI often point out, this grounds the response in reality, dramatically reducing hallucinations. For voice assistants, this means the system checks its internal "facts" before speaking, much like a human might quickly check a source before answering a challenging question.

Actionable Insights for Technology Leaders

How should businesses and developers respond to this stark safety finding?

For Product & Engineering Teams: Embrace Grounding Now

If you are building any customer-facing voice or chat interface powered by a foundational model (GPT, Gemini, Claude), you must implement RAG or equivalent grounding methods immediately. Do not rely solely on the base model’s safety tuning. Every answer that requires factual accuracy must be verifiable against a trusted source you control.

For Security & Compliance Officers: Audit Multimodal Inputs

Your security audit for LLMs must extend beyond text input boxes. Test your voice integrations rigorously using adversarial prompts designed to exploit the ambiguity between spoken word and written command. Assume that if it can be said, it can be weaponized.

For Strategists: Value Reliability Over Novelty

In early adoption phases, customers prioritize an AI that is reliably correct over one that is occasionally brilliant. The Alexa model—slow but steady—teaches us that system predictability builds user confidence faster than groundbreaking—but risky—generative power.

Conclusion: Charting the Course to Reliable Intelligence

The performance gap between the highly constrained Alexa and the highly fluent Gemini/ChatGPT highlights a critical design choice: Do we build agents that sound human, or agents that tell the truth? For now, the cutting edge leans toward the former, creating serious alignment risks.

The future of useful, enterprise-grade conversational AI—especially in voice applications where ambiguity is higher—demands a convergence. We need the linguistic dexterity of the latest LLMs married to the unwavering factual discipline of grounded systems. The challenge for the next generation of AI engineers is not just to make the models smarter, but to make them reliably honest, ensuring that when a user speaks, the answer they receive is rooted in fact, not fluent fabrication.