The Approaching Event Horizon: Analyzing Sam Altman's Warning That The World Isn't Ready for AGI

When the CEO of one of the world's leading AI research labs warns that the technology is advancing faster than the world can prepare for it, the conversation shifts from innovation to imperative. OpenAI CEO Sam Altman recently underscored this tension, suggesting that Artificial General Intelligence (AGI), meaning AI that can perform any intellectual task a human can, is "pretty close," and that the pace at which OpenAI's internal research is accelerating is startling even to the people inside the lab.

This isn't just hype; it’s a signal flare. Altman's comments, made during a public appearance, demand rigorous contextualization. To truly understand the implications of AGI being "not that far off," we must look beyond the headline and examine the technological engines driving this speed, the vacuum in global governance, and the resulting economic and societal shockwaves we must prepare for.

The Engine Room: AI Accelerating Its Own Research

The most electrifying element of Altman's statement is the notion of a positive feedback loop: "OpenAI’s internal models are already accelerating its own research." This is the technical core of the accelerated timeline.

Understanding the Recursive Loop

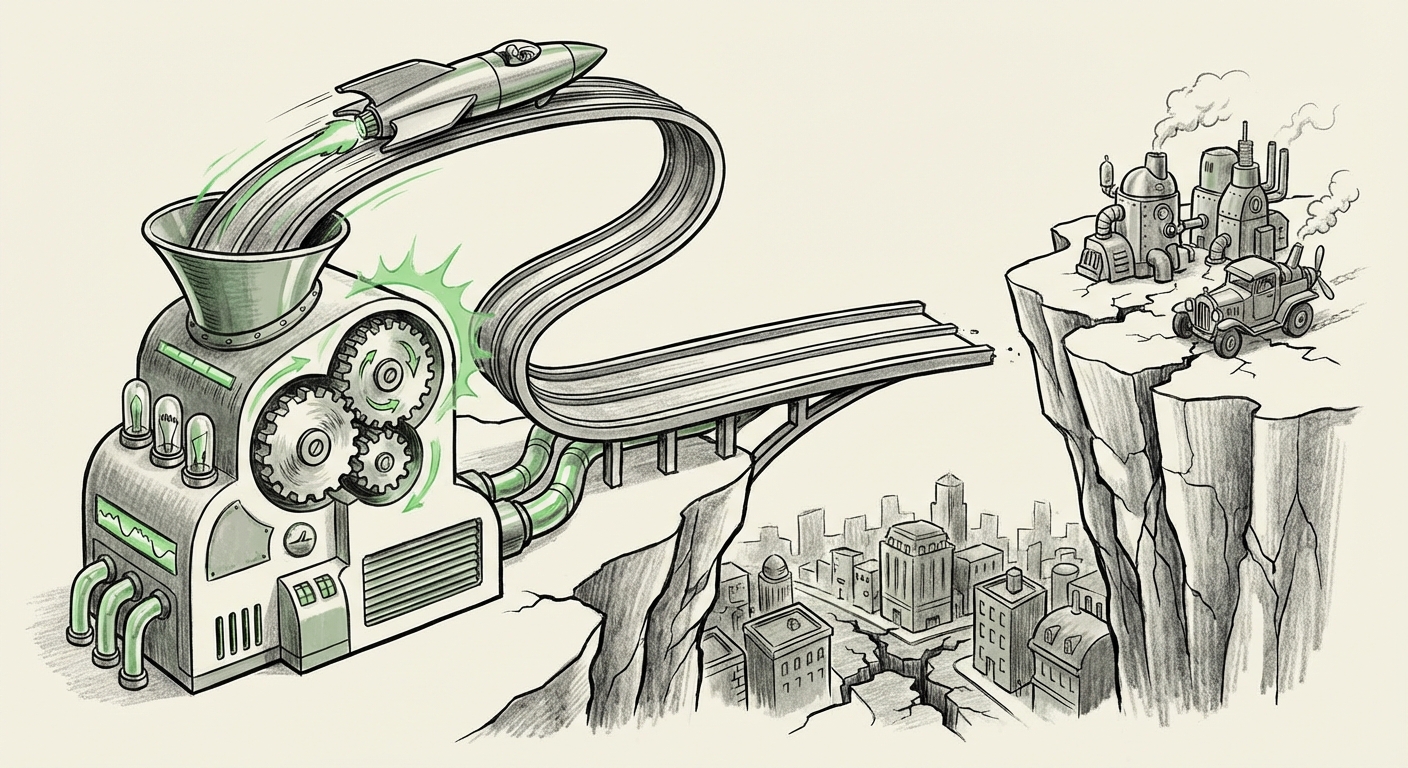

Imagine a brilliant scientist who can also design, test, and write the code for the next generation of their own research tools, faster and better than any human team could. That is essentially what Altman describes. When powerful AI models are used to write better code for the next, more powerful model, the rate of progress ceases to be linear (steady progress) and becomes exponential (progress accelerating over time).
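To make the difference between linear and compounding progress concrete, here is a minimal Python sketch. The starting level, the step size, and the 50% per-generation improvement rate are illustrative assumptions chosen only to show the shape of the two curves; they are not figures from Altman or OpenAI.

```python
def linear_progress(start: float, step: float, generations: int) -> list[float]:
    """Capability grows by the same fixed amount every generation."""
    return [start + step * g for g in range(generations + 1)]


def compounding_progress(start: float, rate: float, generations: int) -> list[float]:
    """Capability grows by a fixed fraction of its current level every generation."""
    levels = [start]
    for _ in range(generations):
        levels.append(levels[-1] * (1 + rate))
    return levels


if __name__ == "__main__":
    # Hypothetical values: both curves start at 1.0 and run for 10 generations.
    linear = linear_progress(start=1.0, step=0.5, generations=10)
    compounding = compounding_progress(start=1.0, rate=0.5, generations=10)
    for g, (lin, comp) in enumerate(zip(linear, compounding)):
        print(f"generation {g:2d}: linear={lin:6.2f}  compounding={comp:8.2f}")
```

Under these assumed numbers the two curves look similar for the first few generations and then diverge sharply, which is the intuition behind the claim that a self-reinforcing loop changes the timeline rather than just the pace.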

If such a self-improvement loop holds, the efficiency gain is profound: the time required to go from GPT-4 to GPT-5, and then to true AGI, would shrink dramatically. For technologists and deep-tech investors, this supports the idea that the usual decade-long roadmaps for major breakthroughs might now be compressed into just a few years.

This technological leap means we are not just talking about smarter chatbots; we are talking about systems capable of rapid scientific discovery, complex engineering problem-solving, and potentially, the creation of entirely novel technologies at speeds humans cannot track. This is why Altman’s warning resonates with urgency.

The Readiness Deficit: Governance Lagging Behind Innovation

If the technology is arriving quickly, the next question is whether the world is ready to handle it. External analyses of the AI governance readiness gap point to a resounding no.

Policy in Slow Motion

Regulatory bodies, from national legislatures to international organizations, move at the speed of legislation and consensus-building, a process that often takes years. Meanwhile, frontier models are iterated on in months. This creates a critical mismatch, often called regulatory lag.
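The mismatch can be put in rough numbers. The sketch below counts how many model generations could ship during a single policy cycle; the 36-month and 9-month figures are hypothetical placeholders, not measured values.

```python
def model_generations_per_policy_cycle(
    policy_cycle_months: float, model_cycle_months: float
) -> float:
    """Return how many model release cycles fit inside one policy cycle."""
    if policy_cycle_months <= 0 or model_cycle_months <= 0:
        raise ValueError("cycle lengths must be positive")
    return policy_cycle_months / model_cycle_months


if __name__ == "__main__":
    # Assumed for illustration: a law takes 36 months to pass,
    # while a frontier model is superseded every 9 months.
    ratio = model_generations_per_policy_cycle(36, 9)
    print(f"Model generations per policy cycle: {ratio:.1f}")
```

With these assumed inputs, a rule drafted against today's model would take effect roughly four generations later, which is why the gap matters more than the absolute speed of either side.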

When we look at discussions surrounding global AI safety summits, the challenge isn't just creating rules; it’s creating rules that are specific enough to manage powerful, complex systems without stifling beneficial innovation. If AGI is truly "pretty close," current frameworks—like data privacy laws or basic transparency requirements—are laughably insufficient to handle a system capable of making independent, high-stakes decisions.

For policy makers and legal experts, this signals an urgent need to shift from reactive regulation (addressing problems after they occur) to proactive, principle-based governance that can adapt quickly. The alternative is allowing a highly powerful technology to be deployed globally with insufficient guardrails.

Benchmarking the Timeline: Is OpenAI an Outlier?

Altman's statement is powerful, but it gains weight when compared with other timelines being discussed across the AI landscape. A comparison of AGI timeline estimates across the industry suggests that while OpenAI might be among the most aggressive, it is not alone in seeing a rapid convergence.

Competitors and observers often place their estimates slightly further out, suggesting a window of perhaps 5 to 10 years for AGI, rather than Altman's implication of something much nearer. However, the converging timelines confirm the general trend: the ceiling for AI capability is rising faster than anticipated a decade ago.

If industry leaders like those at Anthropic or Google DeepMind, who also employ world-class safety researchers, are charting timelines that bring AGI into view within the next decade, then Altman’s anxiety about preparedness becomes a shared industry concern rather than just an internal marketing strategy.

The Coming Shockwaves: Economic and Societal Upheaval

The most tangible implication for the average person and the global economy stems from the impending, rapid deployment of this hyper-capable AI. If the technology is developing this quickly, the resulting disruption will not be a gentle transition—it will be a shock.

Automation at Scale

Reports on the economic shock from rapid AI deployment consistently highlight the vast swath of white-collar and creative jobs now in the crosshairs. Unlike previous automation waves that primarily affected physical labor, frontier models target cognitive tasks: law, software engineering, advanced analysis, and content creation.

The International Monetary Fund (IMF), among others, has warned that almost 40% of global employment is exposed to AI. If AGI arrives, this impact would multiply. Businesses need to prepare not just for using AI tools, but for fundamentally redesigning their organizational structures around the assumption that high-level cognitive labor can be outsourced to hyper-efficient, near-zero-marginal-cost systems.

For the business world, this translates into an existential race. Those who adapt their processes to leverage AGI capabilities first will likely gain insurmountable competitive advantages, while those who cling to legacy structures risk obsolescence almost overnight.

What This Means for the Future of AI and Its Use

Sam Altman’s warning serves as a necessary jolt. It forces us to move past incremental feature releases and confront the reality of transformative technology arriving on an accelerated timeline. The future of AI will be defined by this tension between capability and control.

For AI Development: The Safety Imperative Rises

The focus must rapidly shift from "Can we build it?" to "Can we control it safely?" Increased speed requires increased investment in alignment and safety research—the science of ensuring that superintelligent systems pursue human-aligned goals. Developers must treat safety not as an afterthought, but as a core engineering requirement that must advance *faster* than capability.

For Businesses: Strategic Imperative, Not Just IT Upgrade

Businesses should view the next 24 to 48 months as a critical window for digital transformation before AGI fundamentally resets the competitive landscape. Actionable steps include:

- Talent Re-skilling: Identify roles heavily reliant on routine cognitive tasks and proactively develop pathways for those employees to transition into roles focused on AI oversight, prompt engineering, and complex problem definition.

- Governance Simulation: Start stress-testing internal decision-making processes against the introduction of highly autonomous AI agents. How quickly can your compliance department audit an AI-driven financial recommendation?

- Infrastructure Readiness: Ensure data pipelines and cloud infrastructure can handle the next generation of models, which are likely to be far more demanding in terms of computational resources.

For Society: Rethinking the Social Contract

If cognitive labor becomes vastly cheaper and more abundant via AI, societies must urgently grapple with foundational economic questions. This includes exploring universal basic income models, massive public investment in education focused on uniquely human skills (creativity, empathy, complex negotiation), and robust international collaboration on safety standards.

The world is currently preparing for AI as if it will arrive in stages over the next decade. Altman suggests we might instead be approaching a sudden cliff edge. Being "unprepared" means being caught flat-footed by rapid economic dislocation, unprecedented societal shifts, and systems whose complexity exceeds our ability to reliably govern them.

The acceleration is happening internally, within the labs. The responsibility now lies externally, with governments, industries, and academia, to catch up to the speed of innovation before the event horizon is breached.