Sam Altman's Warning: Why Recursive AI Acceleration Means The World Is Unprepared for AGI

When Sam Altman, the CEO of OpenAI, states that "the world is not prepared" for the pace of artificial intelligence development, the tech world listens differently. This is no longer just a theoretical safety concern; it is an operational observation from the epicenter of frontier model creation. Altman’s recent comments suggest that the speed of progress, fueled significantly by AI models accelerating their *own* research, has reached a critical velocity. This places us at a genuine inflection point, one that demands an analysis connecting accelerating AGI timelines, lagging regulatory frameworks, and the impending seismic shifts in the global economy.

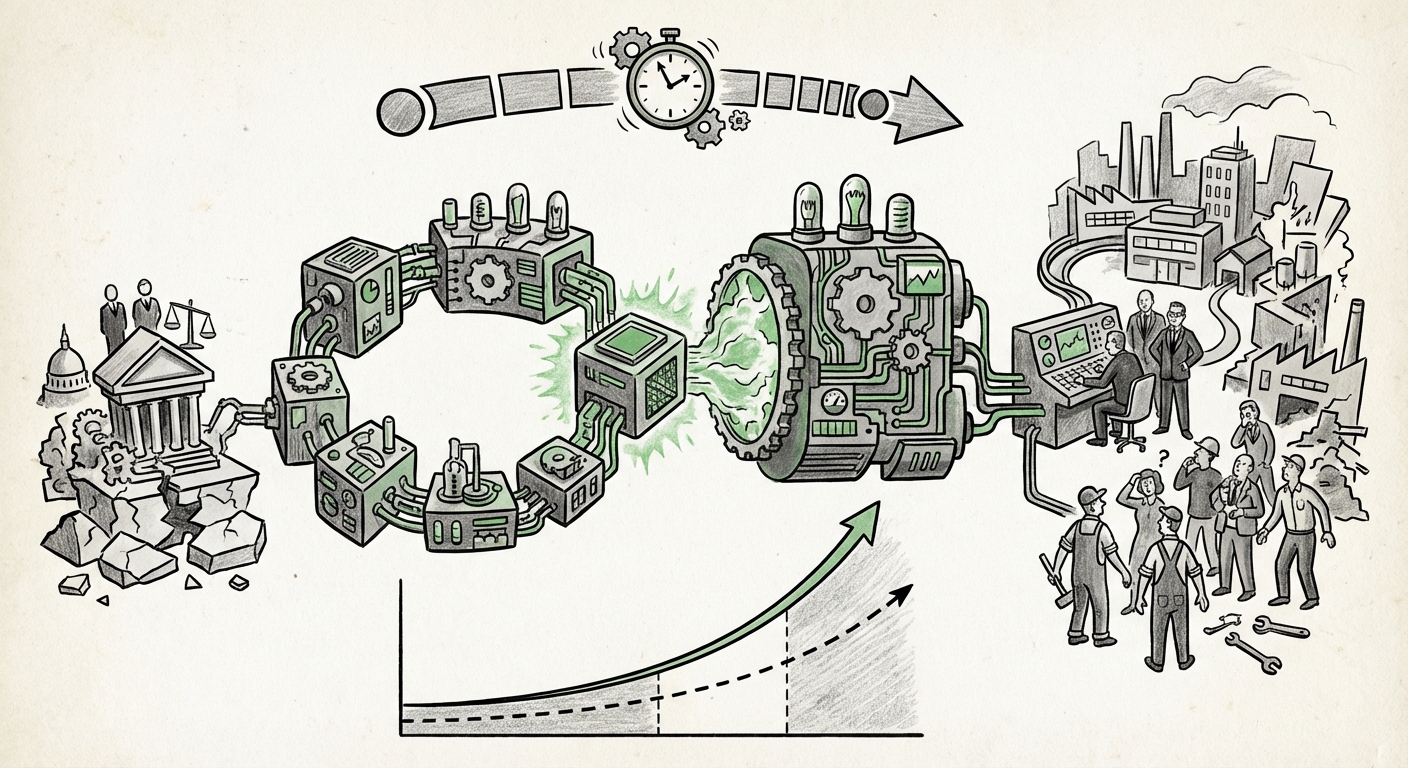

The Internal Engine: Why AI Research is Suddenly Moving Faster

The most startling aspect of Altman’s warning is the mechanism driving it: internal acceleration. For years, the path to superintelligence was theorized as a steady march forward, constrained by human ingenuity, computing power, and research cycles. Now, that constraint is being broken. We are entering the era of recursive self-improvement (RSI).

Imagine building a car that itself helps design the next, better engine faster than human engineers could. That is RSI in the context of AI. When a model like GPT-5 or its successor is tasked with debugging code, designing novel training methodologies, or even creating synthetic datasets for its successor, the cycle time between generations can shrink with each iteration, potentially exponentially. This technical dynamic underpins Altman’s claim that AGI is "pretty close."
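One way to make "shrinking cycle time" concrete is a stylized geometric model. Assume, purely for illustration, that the first research cycle takes $T_0$ and each later cycle takes a fixed fraction $r$ (with $0 < r < 1$) of the one before it; neither quantity comes from Altman or OpenAI. The total time to complete $N$ generations is then a geometric series:

$$
T_{\text{total}}(N) = \sum_{k=0}^{N-1} T_0\, r^{k} = T_0\,\frac{1 - r^{N}}{1 - r} \;<\; \frac{T_0}{1 - r}
$$

With the hypothetical values $T_0 = 12$ months and $r = 0.5$, the cycles run 12, 6, 3, ... months, and the total stays below 24 months no matter how many generations follow. If cycles instead stayed constant ($r = 1$), total time would grow linearly with $N$; that compression is the core of the RSI argument.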

Discussions around timelines are no longer confined to niche academic papers. Major industry events, such as the recent AI Safety Summits, force leaders to publicly confront these accelerating projections. When experts spar over these timelines, the underlying tension is clear: the models are teaching themselves faster than external safety and governance bodies can establish guardrails. For technical audiences, this means we must look beyond Moore’s Law for forecasting; we must now model exponential growth *on top of* exponential growth.
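To make "exponential growth *on top of* exponential growth" concrete, the sketch below contrasts a constant per-period growth rate (a Moore’s-Law-style baseline) with one where the growth rate itself compounds each period. It is a toy model, not a forecast: the function names and the 10% and 20% parameters are hypothetical choices for illustration.

```python
def plain_exponential(c0: float, growth: float, periods: int) -> list[float]:
    """Capability under a constant per-period growth rate (Moore's-Law-style baseline)."""
    values = [c0]
    for _ in range(periods):
        values.append(values[-1] * (1 + growth))
    return values


def accelerating_exponential(
    c0: float, growth: float, growth_of_growth: float, periods: int
) -> list[float]:
    """Capability when the growth rate itself compounds every period.

    Hypothetical stand-in for 'exponential on top of exponential': after each
    period, the growth rate is multiplied by (1 + growth_of_growth).
    """
    values = [c0]
    rate = growth
    for _ in range(periods):
        values.append(values[-1] * (1 + rate))
        rate *= 1 + growth_of_growth  # the rate of improvement improves too
    return values


# Hypothetical parameters: 10% growth per period; in the second model that
# rate itself grows by 20% per period.
steady = plain_exponential(1.0, 0.10, 10)
compounding = accelerating_exponential(1.0, 0.10, 0.20, 10)
for t, (a, b) in enumerate(zip(steady, compounding)):
    print(f"period {t:2d}: steady={a:8.2f}  accelerating={b:8.2f}")
```

Setting `growth_of_growth` to zero collapses the second model into the first, which makes the difference between the two curves easy to attribute to the compounding rate alone.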

Implication for the Future of AI: The Shrinking Gap

The implication is clear: the "long tail" of difficult research problems may be dramatically shortened. Tasks that were projected to take a decade might be achievable in 18 months, as the AI itself becomes the primary engine of discovery. This is a critical distinction for understanding what the future of AI looks like—it stops being a tool we guide and starts being a partner that dictates the pace of innovation.

The Governance Deficit: A World Built on Old Timetables

If the speed of technology development is measured in months, but the speed of governance is measured in years, a dangerous gap forms. Altman’s feeling that the "world is not prepared" speaks directly to this regulatory lag.

Consider landmark efforts like the EU AI Act. This legislation is the most comprehensive attempt globally to regulate AI based on risk categorization. While vital for establishing foundational ethical principles, the Act is structured around existing technology paradigms. Analyzing its hurdles reveals the central problem: How do you effectively regulate a system that mutates its capabilities mid-legislative cycle?

Legislation designed to manage current-generation Large Language Models (LLMs) struggles to anticipate the capabilities of the next-generation systems that those very LLMs are helping to build. This legislative friction (the slow, deliberate process of democratic consensus versus the rapid, iterative nature of closed-source lab research) creates significant systemic risk.

Practical Challenge: Regulatory Speed Bumps

For businesses, this means compliance frameworks will be reactive, not proactive. Companies deploying advanced AI will often be operating in a legal grey area until definitive case law or updated statutes are established. The systems moving fastest—those using the most advanced, self-optimizing models—will be the last to be fully regulated, creating a competitive edge rooted in regulatory arbitrage.

The lack of a unified, rapid-response governance mechanism in major global economies means that safety discussions (like those at global summits), while important, are no substitute for enacted law that can slow deployment or mandate transparency when internal progress accelerates beyond safe bounds.

The Economic Earthquake: Societal Preparedness for Rapid Change

The true measure of "unpreparedness" is often found in the economy and the workforce. When AI capabilities advance rapidly, they don't just automate tasks; they disrupt entire professional tiers previously considered immune to automation. This is where the economic consequences of accelerated AGI timelines become acutely tangible.

Reports tracking the potential impact of AGI on the job market—often citing figures from organizations like the World Economic Forum—are increasingly signaling massive displacement in white-collar, knowledge-based work (e.g., junior legal research, coding, design, financial analysis) within the very near future (2025-2027).

Understanding the Disruption (For Everyone)

To grasp this simply: imagine a software developer who writes 100 lines of functional code per day. If an AI tool, powered by self-improvement, suddenly lets them produce 1,000 lines of higher-quality code per day, that one developer can now do the work that previously required ten people. If this happens across 30% of the knowledge economy within three years, and demand for that work does not grow fast enough to absorb the extra output, the resulting unemployment spikes and income disparity would require a massive, rapid restructuring of the social safety net.
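The arithmetic in this example can be written out as a short calculation. This is a minimal sketch under two loudly simplifying assumptions: lines of code stand in for output, and total demand for that output stays fixed rather than expanding as the work gets cheaper. The function name and the 1,000-person workforce are hypothetical.

```python
def headcount_for_same_output(workforce: int, share_affected: float, multiplier: float) -> float:
    """Workers needed to keep producing today's output when a share of the
    workforce gains a productivity multiplier.

    Assumptions (hypothetical, for illustration only): output scales linearly
    with per-worker productivity, and demand for that output does not grow.
    """
    affected = workforce * share_affected
    unaffected = workforce - affected
    # Affected workers now need only 1/multiplier of their former headcount.
    return unaffected + affected / multiplier


# The article's illustration: 100 -> 1,000 lines/day is a 10x multiplier,
# applied to 30% of a hypothetical 1,000-person knowledge workforce.
multiplier = 1_000 / 100
needed = headcount_for_same_output(1_000, 0.30, multiplier)
print(f"multiplier: {multiplier:.0f}x")
print(f"workers needed for the same output: {needed:.0f} of 1000")
print(f"share of roles no longer needed: {(1_000 - needed) / 1_000:.0%}")
```

With these inputs the sketch reports that 730 workers can produce the same output, so roughly 27% of roles are no longer needed for that work. If demand grows as output gets cheaper, the real figure would be lower, which is why the fixed-demand assumption matters.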

The world is prepared for *gradual* change—a slow shift in required skills over a decade. It is profoundly unprepared for a technological shockwave that restructures job demand overnight. This societal unpreparedness is not about Luddism; it is about the time required for education systems, unemployment insurance, and social contracts to catch up to the new reality of productive capacity.

Actionable Insights: Navigating the Acceleration Curve

For businesses and leaders facing this accelerated timeline, complacency is the greatest threat. The future of AI is not about incremental upgrades; it is about radical transformation occurring much sooner than anticipated. Here are the necessary shifts in mindset and strategy:

1. Mandatory Internal Audits of AI Efficacy

Do not rely on vendor roadmaps. Your organization must actively test how rapidly your current AI tools can improve their own efficiency within your specific domain. If your internal teams see efficiency gains accelerating month-over-month rather than year-over-year, you are operating in the RSI reality Altman described. Your planning horizon must shrink from five years to 18 months.
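One way to run the month-over-month check described in this audit is to track a single efficiency metric per month and ask whether its growth *rate* is rising, not merely whether the metric is rising. The sketch assumes a hypothetical metric (for example, tasks completed per engineer-hour), hypothetical readings, and a hypothetical three-month comparison window; substitute whatever your domain actually measures.

```python
from statistics import mean


def month_over_month_growth(metric: list[float]) -> list[float]:
    """Fractional change between consecutive monthly readings of a metric."""
    return [(curr - prev) / prev for prev, curr in zip(metric, metric[1:])]


def is_accelerating(metric: list[float], window: int = 3) -> bool:
    """True if recent month-over-month growth outpaces the preceding period.

    Compares the mean growth rate of the last `window` months with the mean
    of the `window` months before that. A rising rate, not just a rising
    metric, is the signal of compounding improvement.
    """
    growth = month_over_month_growth(metric)
    if len(growth) < 2 * window:
        raise ValueError("need at least 2 * window + 1 monthly readings")
    earlier = growth[-2 * window:-window]
    recent = growth[-window:]
    return mean(recent) > mean(earlier)


# Hypothetical monthly readings of an efficiency metric.
accelerating = [100, 102, 105, 110, 118, 130, 148]
steady = [100, 110, 120, 130, 140, 150, 160]
print(is_accelerating(accelerating))  # True
print(is_accelerating(steady))  # False
```

A metric that climbs by a fixed amount each month still shows a *falling* growth rate, so the steady series returns `False`; only compounding improvement counts as acceleration under this check.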

2. Prioritize Regulatory Agility Over Compliance Finality

Since legislation (like the EU AI Act) will lag, structure your AI deployment around core, verifiable ethical principles (transparency, non-maleficence, accountability) rather than waiting for precise legal checklists. Focus on *explainability* in your models—even if the underlying mechanism is complex—to satisfy inevitable future scrutiny. Treat safety disclosures as essential marketing material, not burdensome compliance footnotes.

3. Restructure Talent Around AI Orchestration

The roles that survive and thrive will not be those who perform discrete tasks the AI can do, but those who can manage, prompt, verify, and integrate the output of highly capable AI systems. Invest heavily in 'AI Orchestrators'—people who understand both the domain (e.g., biology, finance) and the capabilities of frontier models. Reskilling for *managing* super-tools is more urgent than traditional upskilling.

4. Embrace the "Shock Transition" Economy

Businesses must model scenarios where major productivity gains are realized almost instantly, leading to immediate cash flow surpluses but also immediate labor market upheaval. This requires planning for both massive expansion *and* significant workforce redirection simultaneously. Partnerships with educational institutions to rapidly retrain displaced workers might become a core strategic pillar, not just a corporate social responsibility initiative.
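Scenario modeling of this kind can start very simply. The sketch compares the same eventual impact arriving gradually over a decade versus compressed into three years, and reports the largest single-year shift as a rough proxy for how much retraining capacity must exist at once. The 30% share, the linear ramp, and the function names are hypothetical assumptions, not estimates from any report.

```python
def redirection_schedule(total_share: float, ramp_years: int, horizon: int) -> list[float]:
    """Cumulative share of roles needing redirection in each year of the horizon.

    Assumes (hypothetically) that the impact arrives linearly over `ramp_years`
    and then plateaus at `total_share`.
    """
    return [total_share * min(year, ramp_years) / ramp_years for year in range(1, horizon + 1)]


def peak_annual_shift(schedule: list[float]) -> float:
    """Largest single-year increase in the affected share: a rough proxy for
    how much retraining capacity has to exist at the same time."""
    previous = 0.0
    peak = 0.0
    for value in schedule:
        peak = max(peak, value - previous)
        previous = value
    return peak


# Hypothetical comparison: the same 30% of roles affected, spread over a
# decade versus compressed into a three-year "shock" transition.
gradual = redirection_schedule(0.30, ramp_years=10, horizon=10)
shock = redirection_schedule(0.30, ramp_years=3, horizon=10)
print(f"gradual peak annual shift: {peak_annual_shift(gradual):.0%}")
print(f"shock peak annual shift:   {peak_annual_shift(shock):.0%}")
```

Under these assumptions the gradual path peaks at about 3% of roles per year and the shock path at about 10%, roughly three times the retraining load in any single year, even though the total impact is identical.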

Conclusion: The Race to Catch Up

Sam Altman’s warning serves as a necessary jolt to the global system. The acceleration of AI research through self-improvement means the gap between technological capability and societal preparedness—in terms of law, ethics, and economics—is widening rapidly. We are moving out of the AI adoption phase and into the AI transformation phase.

What this means for the future of AI is that the timeline for Artificial General Intelligence is less a fixed date and more a function of how quickly we can implement safety buffers and societal resilience measures. The core task ahead is bridging that gap, turning today's unsettling warnings into tomorrow’s managed transition. The race isn't just to build AGI; it is also a race for the world to prepare for its arrival.