The AI Security Reckoning: How Anthropic’s New Tool Just Rewrote the Cybersecurity Playbook

The pace of disruption in the technology sector has rarely been as visible or immediate as it was when Anthropic announced its new security tool. News that its AI could reportedly find sophisticated software bugs that traditional scanners miss triggered an immediate sell-off in major cybersecurity stocks. That was more than a financial headline; it was a strong signal about the future trajectory of artificial intelligence.

This isn't just another software update. It represents the moment AI began seriously turning its capabilities inward, using advanced reasoning to secure the digital foundations upon which everything else is built. To understand the gravity of this shift, we must move beyond the stock charts and examine the technical capabilities, the inevitable economic ripple effects, and what this means for the human element of digital defense.

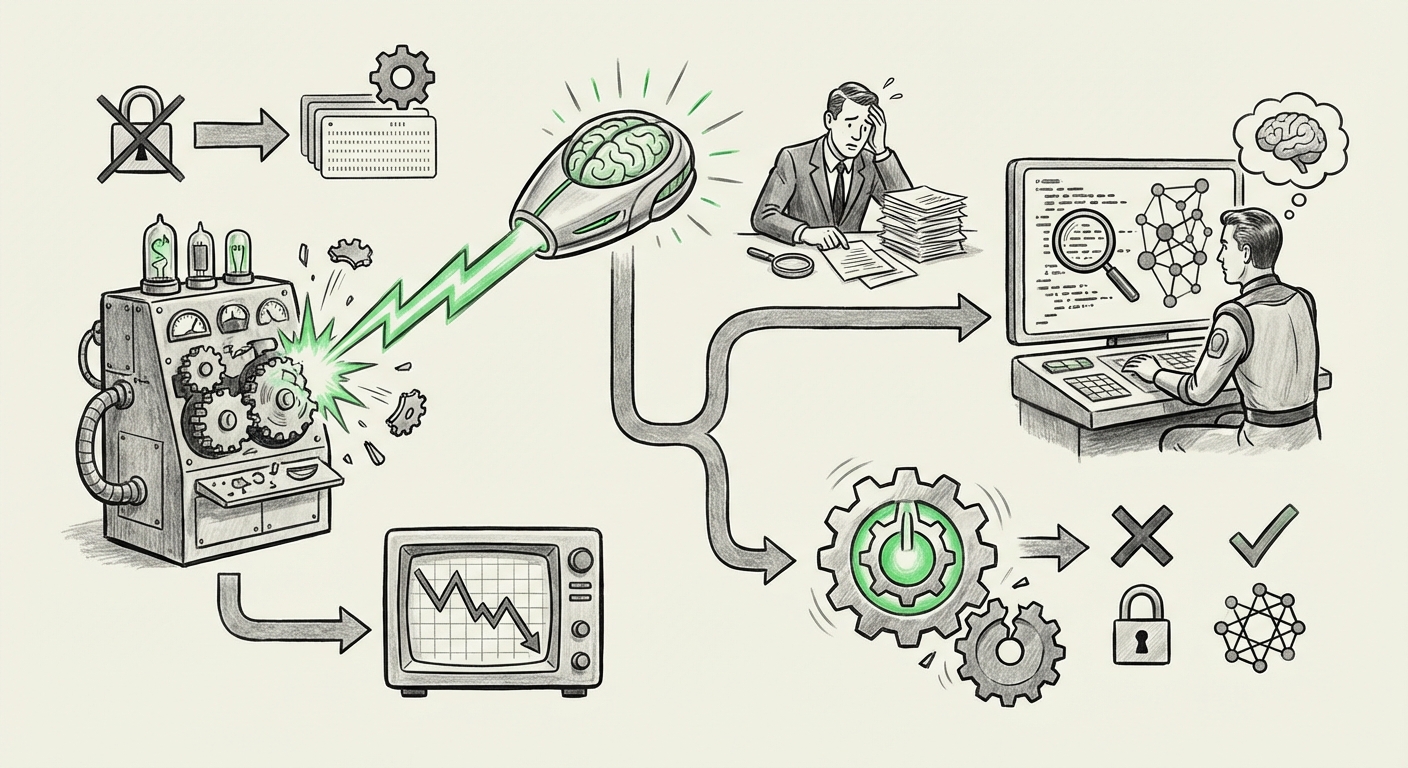

The Core Disruption: AI vs. Legacy Scanning

For decades, software security checking has relied heavily on Static Application Security Testing (SAST), which inspects source code, and Dynamic Application Security Testing (DAST), which probes a running application. Think of these as very smart but rigid grammar checkers for code: they look for patterns they have been explicitly programmed to recognize as dangerous.
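Here is a minimal sketch of signature-based scanning that makes the "rigid grammar checker" comparison concrete. It assumes a tiny hypothetical rule set of regular expressions. `RULES` and `scan` are illustrative names, not any vendor's API. Real SAST engines parse code into syntax trees and apply far richer rules, but the sketch shows the basic idea: a call that matches a known signature is flagged, while the same behavior reached indirectly is not.

```python
import re

# Hypothetical rule set: each rule is a name plus a regex "signature".
# Real SAST products ship far richer rules; these are illustrative only.
RULES = {
    "python-eval": re.compile(r"\beval\s*\("),
    "shell-injection": re.compile(r"\bos\.system\s*\("),
    "weak-hash-md5": re.compile(r"\bhashlib\.md5\s*\("),
}


def scan(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every line matching a known signature."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in RULES.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


# The sample is only scanned as text, never executed.
sample = """\
import os
user = input()
os.system("ls " + user)   # matches a known dangerous call
cmd = "ls " + user
runner = getattr(os, "sys" + "tem")
runner(cmd)               # same behavior, but no signature matches
"""

print(scan(sample))
```

The second shell call goes unflagged because nothing on that line matches a rule. A richer rule set could catch this particular trick, but only after someone has written that rule.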

Anthropic’s entry, signaled by the launch of Claude Code Security, suggests a leap from pattern matching to contextual reasoning. This is the key difference behind the market tremor.

What is Contextual Reasoning in Code?

If a traditional scanner sees a known vulnerable function being called, it flags it (a "known bug"). An advanced LLM, by contrast, can in principle follow the application's logic flow as a whole. It can trace how data moves through five different modules, even ones written in different languages. It can then recognize that a sequence of individually valid, seemingly safe operations adds up to a serious security hole (a "novel bug").
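The cross-module reasoning described above resembles what program analysts call taint analysis. Taint analysis tracks untrusted input from a *source* to a dangerous *sink* and checks whether it passes through a *sanitizer* on the way. Some existing SAST tools already perform taint tracking mechanically.

The sketch below is a toy version over a hand-written data-flow graph. The module names are invented for illustration, as is the assumption that only `auth.load_profile` sanitizes input. This is not a description of how Claude Code Security works internally. It only shows the kind of whole-program property that a line-by-line signature check cannot see.

```python
from collections import deque

# Hypothetical data-flow graph: an edge A -> B means data produced in A
# is passed to B. Module names are invented for illustration.
FLOWS = {
    "api.parse_request": ["auth.load_profile", "search.build_filter"],
    "auth.load_profile": ["cache.store"],
    "search.build_filter": ["cache.store"],
    "cache.store": ["report.render_query"],
    "report.render_query": ["db.execute_raw"],
}
SOURCES = {"api.parse_request"}      # untrusted input enters here
SINKS = {"db.execute_raw"}           # dangerous if reached with tainted data
SANITIZERS = {"auth.load_profile"}   # assumed to validate/escape its input


def tainted_paths(flows, sources, sinks, sanitizers):
    """Breadth-first search for source-to-sink paths that avoid every sanitizer."""
    paths = []
    queue = deque([[s] for s in sources])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node in sinks:
            paths.append(path)
            continue
        for nxt in flows.get(node, []):
            # Data passing through a sanitizer is treated as clean; also skip cycles.
            if nxt in sanitizers or nxt in path:
                continue
            queue.append(path + [nxt])
    return paths


for p in tainted_paths(FLOWS, SOURCES, SINKS, SANITIZERS):
    print(" -> ".join(p))
```

Each hop in the printed path is unremarkable on its own. The vulnerability exists only in the composition, via the branch that bypasses the sanitizer.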

A necessary validation point remains: the technical community is actively looking for benchmarks that compare LLM code-analysis accuracy against established SAST methods. If these new AI tools can reliably find classes of vulnerabilities that have historically required weeks of expensive human auditing, the technical justification for the market’s panic becomes clear.
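Whatever benchmarks emerge, the core comparison is simple to state. Here is a minimal sketch, assuming two things:

- Each tool's output can be reduced to a set of finding IDs.
- A labeled ground-truth set exists, for example a test repository with known, seeded vulnerabilities.

Precision measures how many reported findings are real, and recall measures how many real vulnerabilities were found. All IDs and reports below are invented placeholders, not measured results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Score:
    precision: float
    recall: float
    f1: float


def score(reported: set[str], ground_truth: set[str]) -> Score:
    """Compare a tool's reported finding IDs against a labeled ground-truth set."""
    tp = len(reported & ground_truth)  # true positives
    precision = tp / len(reported) if reported else 0.0
    recall = tp / len(ground_truth) if ground_truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return Score(precision, recall, f1)


# Hypothetical benchmark: IDs of real vulnerabilities seeded in a test repo.
truth = {"V1", "V2", "V3", "V4"}
sast_report = {"V1", "V2", "FP1"}               # invented legacy-scanner output
llm_report = {"V1", "V2", "V3", "FP2", "FP3"}   # invented LLM-tool output

for name, report in [("SAST", sast_report), ("LLM", llm_report)]:
    s = score(report, truth)
    print(f"{name}: precision={s.precision:.2f} recall={s.recall:.2f} f1={s.f1:.2f}")
```

Recall is where a claim like "finds bugs traditional scanners miss" would show up. Precision matters just as much in practice, because every false positive costs analyst time to triage.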

In simple terms: Legacy scanners check if you followed the basic safety rules. Advanced LLMs read the entire architectural blueprint and point out flaws in the building design itself.

The Economic Earthquake: Winners and Losers in the Security Sector

The immediate stock market reaction underscores the financial risk of technological obsolescence. Established companies built around comprehensive but slower manual auditing processes face immense pressure.

Short-Term Integration vs. Long-Term Replacement

In the short term, we are likely to see Augmentation over Replacement. Established security vendors will rush to integrate LLM capabilities into their existing platforms to avoid being seen as laggards. They will market this as a "faster, smarter version" of what they already offer.

However, the long-term trajectory points toward replacement for specific, repetitive tasks. Suppose an AI can do the heavy lifting of scanning millions of lines of code faster and more accurately than a large team of human auditors using older tools. In that case, the cost structure of legacy application security testing (AST) methods collapses.

This pressure favors two groups:

- The AI Innovators: Companies like Anthropic and the startups they inspire, focusing purely on model performance and security reasoning.

- The Shifters Left: Development teams that build AI security tools directly into their Continuous Integration/Continuous Deployment (CI/CD) pipelines. They move security checks so far "left" (early in development) that the need for extensive post-release auditing shrinks dramatically.

Implications for the Human Workforce: The Evolution of the Analyst

Perhaps the most sensitive implication involves the cybersecurity profession itself. Will these tools replace security analysts? The answer, as with most major technological shifts, is nuanced: they will replace *tasks*, not necessarily *jobs*, but the required skillset must evolve rapidly.

If the AI handles the rote identification of common or even complex coding flaws, what is left for the human expert? This is the essential question driving workforce planning: what does the security analyst's role become once AI handles vulnerability detection?

From Finder to Strategist

The security professional's role moves up the value chain:

- Threat Hunting and Context: AI is excellent at finding bugs within existing codebases. Humans will become essential for understanding *how an attacker might chain these bugs together* across systems, or for identifying novel social engineering or supply chain risks that don't reside in the source code itself (like configuration errors or hardware vulnerabilities).

- AI Prompt Engineering for Security: Security professionals will need to become expert "prompt engineers" for their security AIs, learning how to query the models to stress-test assumptions and validate the AI’s own findings.

- Policy and Governance: As AI creates more code and audits more aggressively, humans must set the ethical and compliance boundaries. Who is responsible when an AI-written patch introduces a new bug? Human oversight becomes the final guarantor of trust.
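The chaining idea in the first bullet above can be sketched as a simple attack-graph exercise. Assume each finding is annotated with two things: the capability an attacker needs before exploiting it, and the capability it grants afterwards. Forward chaining from the attacker's starting position then shows which individually modest bugs combine into a serious compromise.

The findings, capability names, and the `Finding` type are all hypothetical. In practice, deciding what each bug really requires and grants is exactly the contextual judgment the bullet assigns to the human analyst.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    id: str
    requires: str  # capability an attacker needs before exploiting this bug
    grants: str    # capability the attacker gains afterwards


# Hypothetical findings; individually, each might be triaged as "medium".
FINDINGS = [
    Finding("SSRF-12", requires="internet", grants="internal_http"),
    Finding("INFO-7", requires="internal_http", grants="cloud_credentials"),
    Finding("IAM-3", requires="cloud_credentials", grants="database_admin"),
    Finding("XSS-40", requires="victim_click", grants="session_token"),
]


def reachable(start: set[str], findings: list[Finding]) -> tuple[set[str], list[str]]:
    """Forward-chain: repeatedly exploit any finding whose precondition is already held."""
    held, chain = set(start), []
    changed = True
    while changed:  # iterate until no new capability is gained (a fixed point)
        changed = False
        for f in findings:
            if f.requires in held and f.grants not in held:
                held.add(f.grants)
                chain.append(f.id)
                changed = True
    return held, chain


caps, chain = reachable({"internet"}, FINDINGS)
print("database_admin" in caps, chain)
```

Here three findings chain from internet access to database administration. The XSS finding stays out of reach because nothing in this set grants its precondition.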

This shift means that junior roles focused solely on running standard vulnerability scanners may diminish. Instead, the demand for analysts who understand software architecture, threat modeling, and complex systemic risk will skyrocket. The goal is to use AI to handle the *known knowns* so humans can focus on the *unknown unknowns*.

The Future Trajectory: AI Securing AI

Anthropic’s development is a prime example of AI being turned back on the software it helps produce: advanced generative models used to secure the very systems that power the modern digital economy.

The next frontier is clearly AI Securing AI. As more critical infrastructure comes to rely on Large Language Models (LLMs) for decision-making, ensuring that those models are themselves safe, trustworthy, and non-exploitable becomes paramount. Claude Code Security may prove a precursor to tools designed specifically to audit model weights and measure guardrail effectiveness. Such tools could also defend against model poisoning and prompt injection attacks.

This cycle, in which AI is used to build systems and more advanced AI is then used to secure them, is likely to keep accelerating. It creates a continuous, high-speed security arms race in which only those using the most advanced AI tools will maintain a competitive advantage.

Practical Implications and Actionable Insights for Businesses

For businesses across all sectors, the message is clear: Security strategy must be re-evaluated through an AI lens. Waiting for established vendors to slowly integrate these features is a strategy for obsolescence.

For Technology Leaders (CTOs, CISOs):

- Audit Your AST Stack: Immediately review your current Static and Dynamic Application Security Testing tools. How reliant are they on simple signature matching? Begin testing emerging LLM-based solutions, even in pilot phases, to gauge real-world performance improvement against your current baseline.

- Invest in Contextual Training: Reallocate training budgets away from basic tool usage and toward threat modeling, complex attack path analysis, and prompt engineering for security validation. Prepare your teams for strategic roles.

- Shift Left Aggressively: Mandate that security findings from new AI tools are integrated directly into the developer workflow, not treated as an after-the-fact compliance hurdle. Slow, after-the-fact review processes cannot keep pace with AI-accelerated development.
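For the "Shift Left Aggressively" item, one common pattern is a pipeline step that fails the build when a scan reports anything at or above a severity threshold. This minimal sketch assumes the AI tool can export findings as a JSON list of objects with `severity`, `file`, `line`, and `title` fields. That schema, the severity labels, and the `gate` function are assumptions for illustration, not any specific product's output format.

```python
import json
import sys

# Assumed severity ladder; real tools may use different labels.
SEVERITY_RANK = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def gate(findings_json: str, fail_at: str = "high") -> int:
    """Return a CI exit code: 1 if any finding meets the threshold, else 0."""
    findings = json.loads(findings_json)
    threshold = SEVERITY_RANK[fail_at]
    blocking = [
        f for f in findings
        if SEVERITY_RANK.get(f.get("severity", "").lower(), 0) >= threshold
    ]
    # Report blocking findings where developers will see them: the build log.
    for f in blocking:
        print(f"BLOCKING {f['severity']}: {f['file']}:{f['line']} {f['title']}",
              file=sys.stderr)
    return 1 if blocking else 0


# Hypothetical report in the assumed schema.
sample = json.dumps([
    {"severity": "medium", "file": "app/views.py", "line": 88,
     "title": "Verbose error message"},
    {"severity": "critical", "file": "app/db.py", "line": 42,
     "title": "Tainted input reaches raw SQL"},
])
exit_code = gate(sample)
```

In a real pipeline, the step would read the tool's report and end with `sys.exit(gate(report_text))`. A non-zero exit code then blocks the merge, and the offending findings appear in the developer's build log rather than in a later audit report.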

For Investors and Business Strategists:

- Re-evaluate Market Dominance: Recognize that vulnerability management is moving from a high-cost, high-labor solution to a high-intelligence, software-driven solution. Assess which cybersecurity companies have access to the necessary proprietary data or foundational models to compete.

- Look for "Deep Tech" Startups: The next wave of major cybersecurity unicorns will not be building better compliance dashboards; they will be building novel reasoning engines capable of surpassing human cognitive limits in code analysis.

Conclusion: Embracing the Inevitable Velocity

The sudden market reaction to Anthropic’s launch suggests that the industry recognizes a paradigm shift when it sees one. Advanced generative AI is not just improving existing security tooling; it is redefining the achievable standard of code quality and safety. This accelerates the security lifecycle, making speed and accuracy paramount.

The future of AI is not just about creating new capabilities; it is about using those new capabilities to secure the resulting complexity. Security is becoming less about reacting to known exploits and more about preemptively reasoning away unknown flaws. For businesses, the choice is simple: adopt the AI that hardens your core, or risk being disrupted by those who do.