The AI Dev Revolution: Why Desktop LLMs Are Transitioning Code Assistants into Autonomous Workflow Agents

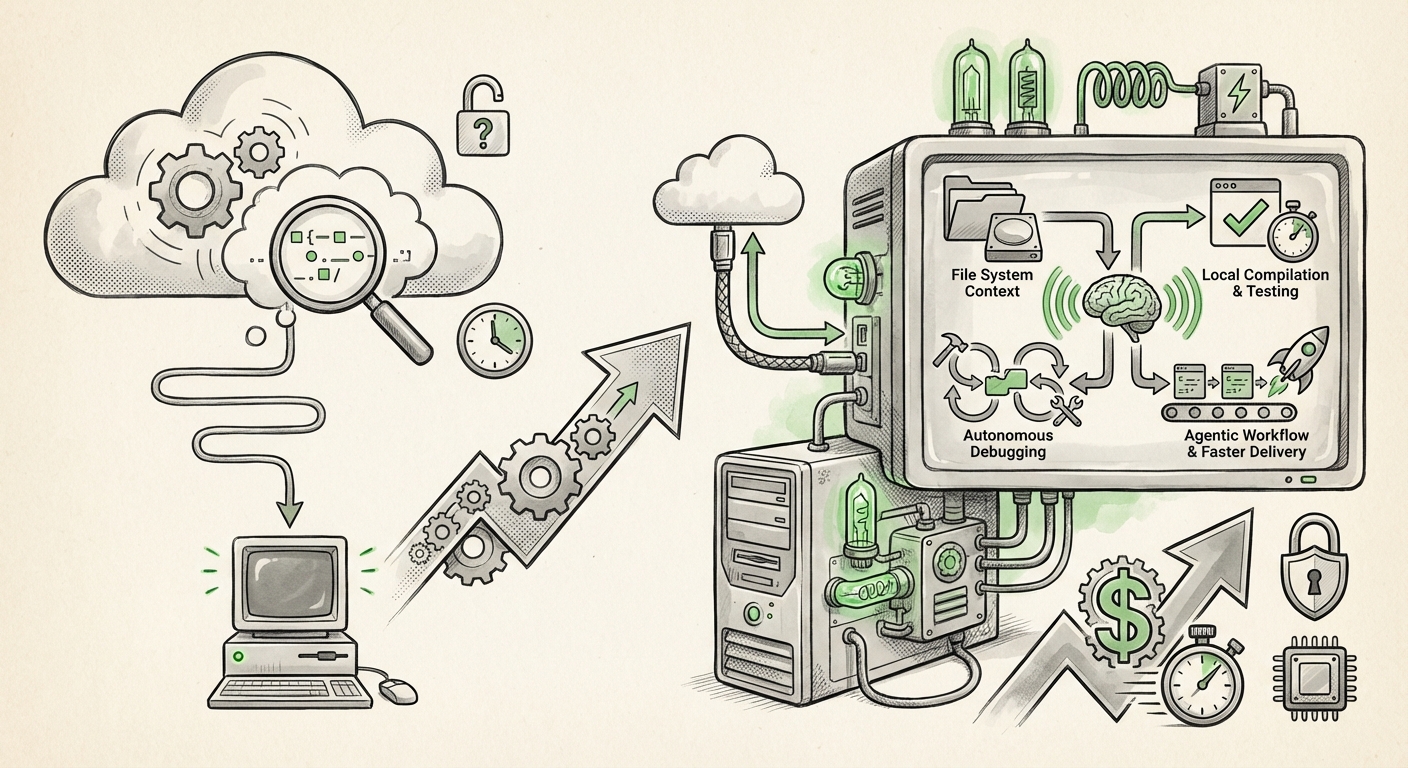

The evolution of Artificial Intelligence assistance in software development has been rapid, moving from simple auto-completion suggestions to complex scaffolding tools. However, the latest announcements, particularly Anthropic’s rollout of desktop features for Claude Code, suggest we are on the cusp of a far greater transformation. This isn't just about smarter autocomplete; it’s about embedding AI so deeply into the development environment that it shifts from a passive helper to an active, integrated, and semi-autonomous workflow manager.

When an AI tool like Claude Code moves from a web interface to the desktop, it gains access to the crucial context that lives locally: the file system, the running processes, the local error logs, and the security parameters of the development machine. This move signals the definitive end of the "cloud-only" coding assistant paradigm. To fully understand the weight of this trend, we must analyze the competitive pressures, the technological shift to edge computing, and the market validation proving that this deeper integration is where productivity gains truly live.

The Escalating Arms Race: Competition Dictates Deeper Integration

Innovation in AI tooling is no longer incremental; it is fiercely competitive. Anthropic’s move is impossible to analyze in a vacuum. The development landscape is dominated by the synergy between Microsoft and OpenAI, centered on GitHub Copilot. The immediate market expectation is that if one major player moves toward deeper workflow integration, the others must follow or risk obsolescence.

The competitive race is toward embedding functionality directly where developers spend their time: inside the Integrated Development Environment (IDE). While Copilot began as cloud-backed inline completion, Microsoft has continuously pushed for deeper context awareness within VS Code and has emphasized projects like GitHub Copilot Workspace. These next-generation tools aim not just to suggest the next line of code, but to understand a high-level, multi-step task (e.g., "Add feature X and ensure all related tests pass") and execute the necessary steps across multiple files.

For Anthropic, embedding desktop features is essential to prove parity, and ideally superiority. If Claude Code can leverage local environment data more effectively than a cloud-bound competitor, it offers a compelling value proposition. If developers are forced to constantly copy and paste error messages or switch browser tabs to query the AI, the productivity gains are immediately eroded. The need for seamless integration, now the benchmark set by competitors, is forcing all AI labs to evolve their assistants into native parts of the development environment.

Actionable Insight for Businesses: Standardize on Platform Integration

For CTOs and Engineering Managers, this means that evaluating AI tooling should no longer focus solely on general model benchmarks (like MMLU scores), which say little about day-to-day coding workflows. The critical metric now is integration depth. Teams should prioritize tools that minimize context switching and offer native hooks into their existing IDEs, source control, and local debugging cycles.

The Critical Shift: From Cloud API to Edge/Desktop LLM

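The most significant technological implication of the desktop trend is the move toward edge computing: in this context, running capable Large Language Models (LLMs) directly on the user's hardware. One caveat is worth stating up front: a desktop client such as Claude Code gains local context (files, processes, logs), but its model inference still runs in the cloud, so fully on-device models are a complementary trend rather than something Anthropic’s update itself delivers.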

For years, the consensus was that the most capable models (like GPT-4 or Claude 3 Opus) required massive cloud infrastructure. However, advancements in model quantization (making models smaller without losing too much intelligence) and the growing power of consumer hardware (especially specialized chips like Apple’s M-series) are challenging this. When an assistant runs locally, two major benefits emerge:

- Latency Reduction: Sending a code snippet to a distant server, waiting for processing, and receiving the response takes time. For rapid-fire coding, even a 300-millisecond delay is noticeable and disruptive. Local execution dramatically cuts this latency, making the AI feel instantaneous and truly interactive, like native IDE features.

- Security and Privacy: This is paramount for enterprise adoption. When proprietary source code is sent to a third-party cloud API, it creates a data transmission risk. Desktop integration—especially if it allows for purely on-device processing for sensitive tasks—offers a crucial layer of data sovereignty and security compliance that cloud-only models cannot match. We see this trend validated by the increasing interest in open-source local LLMs (like those deployed via tools such as Ollama) for enterprise use cases.
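To make the quantization point concrete, here is a rough back-of-the-envelope sketch of how bit width affects the memory needed for model weights. The 7-billion-parameter size is a hypothetical example, and the estimate covers weights only; it ignores activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    bytes_total = num_params * bits_per_weight / 8
    return bytes_total / 1e9


# Hypothetical 7-billion-parameter model at three common precisions.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(7e9, bits):.1f} GB")
```

Under these assumptions, going from 16-bit to 4-bit weights cuts the footprint from roughly 14 GB to roughly 3.5 GB, which is the difference between exceeding and fitting within the memory of many developer laptops.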

The trade-off between on-device and cloud execution is power versus speed. Desktop deployment often means using a smaller, specialized model for immediate tasks, while reserving the massive cloud models for complex architectural planning. This hybrid approach optimizes performance where it matters most.
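The hybrid approach described above could be sketched as a small routing function. This is an illustrative sketch only: the `Task` fields, the task kinds, and the token threshold are all hypothetical, and a real router would be tuned to the specific models and hardware in use.

```python
from dataclasses import dataclass


@dataclass
class Task:
    kind: str                 # e.g. "completion", "refactor", "architecture"
    prompt_tokens: int        # rough size of the request
    sensitive: bool = False   # touches code that must not leave the machine


# Hypothetical policy values, chosen only for illustration.
LOCAL_KINDS = {"completion", "lint_fix", "docstring"}
LOCAL_TOKEN_LIMIT = 4_000


def choose_backend(task: Task) -> str:
    """Return "local" for small or sensitive tasks, "cloud" otherwise."""
    if task.sensitive:
        return "local"   # privacy outranks model capability
    if task.kind in LOCAL_KINDS and task.prompt_tokens <= LOCAL_TOKEN_LIMIT:
        return "local"   # latency-critical and small enough for a local model
    return "cloud"       # large or complex work goes to the bigger model


print(choose_backend(Task("completion", 200)))
print(choose_backend(Task("architecture", 12_000)))
print(choose_backend(Task("architecture", 12_000, sensitive=True)))
```

Putting the sensitivity check first is a deliberate design choice in this sketch: it ensures a privacy constraint is never overridden by a capability preference.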

Implication for AI Infrastructure: The Rise of the Hybrid Model

We are moving toward a world where AI infrastructure is decentralized. Businesses will need strategies not just for API access, but for managing local model deployments, ensuring consistency across developer machines, and balancing the use of local, private models against external, state-of-the-art foundation models. This requires new IT governance frameworks.

Validating the Promise: Productivity Gains as the Driving Force

Why are companies like Anthropic making these complex engineering investments? Because the data supports the transition. The productivity gains promised by AI coding assistants are no longer theoretical; they are quantifiable business realities.

Vendor studies and developer surveys generally report meaningful gains, though the exact figures vary with methodology. Reported reductions in the time spent on routine tasks (writing boilerplate code, fixing simple bugs, or searching documentation) often fall in the range of 30% to 50%. This is not marginal improvement; it frees up senior engineers to focus on novel problem-solving, architecture, and innovation.

When Anthropic updates Claude Code with desktop features aimed at "automating more of the dev workflow," it is betting that the productivity lift from a truly integrated assistant will far exceed what a simple conversational bot can deliver. This shifts the goal from assistance to automation.

The Business Case: Faster Time-to-Market

For product-driven organizations, faster coding equals faster iteration and market responsiveness. If an AI agent embedded on the desktop can handle the tedious, context-switching portions of a developer's day, the entire project timeline compresses. This validates the investment in deep integration over superficial feature additions.

The Final Leap: From Co-pilot to Agentic Workflow

The ultimate destination for these integrated tools is true agentic workflow. This is the key difference between the current generation of tools and what Anthropic is hinting at.

A co-pilot suggests a line or a function based on the immediate prompt. An agent takes a high-level directive, breaks it down into sub-tasks, executes those tasks sequentially using appropriate tools (like running tests, editing multiple files, searching logs), evaluates the results, and iterates until the goal is met.

When Claude Code gains desktop access, it gains the context necessary to become an agent. It can see the entire codebase structure, monitor the running application state, and even initiate command-line operations (presumably under strict user control). Three capabilities define this strategic direction:

- Task Decomposition: The AI can accept a ticket summary and generate a multi-step plan.

- Tool Use: The AI can decide when to use a compiler, when to run a linter, or when to query a dependency manager.

- Self-Correction: If the local unit tests fail after a code change, the agent analyzes the failure logs locally and attempts a fix immediately, without requiring a human intermediary to re-prompt the initial cloud query.
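The three capabilities above can be sketched as a single control loop. This is a minimal illustration, not how Claude Code or any specific product is implemented: the planner, the step executor, the test runner, and the fixer are passed in as plain functions, and the stubs at the bottom are hypothetical stand-ins for a model and a real test suite.

```python
from typing import Callable, List, Tuple

TestResult = Tuple[bool, str]  # (passed, failure log)


def run_agent(
    goal: str,
    plan: Callable[[str], List[str]],
    apply_step: Callable[[str], None],
    run_tests: Callable[[], TestResult],
    propose_fix: Callable[[str], str],
    max_fixes: int = 3,
) -> bool:
    """Decompose a goal, execute each step, then test and self-correct."""
    for step in plan(goal):                # task decomposition
        apply_step(step)                   # tool use: edit files, run a linter, ...
    for attempt in range(max_fixes + 1):
        passed, log = run_tests()
        if passed:
            return True
        if attempt < max_fixes:
            apply_step(propose_fix(log))   # self-correction from the local log
    return False


# Hypothetical stubs standing in for a model and a real test suite.
state = {"bug": True}
applied: List[str] = []


def fake_plan(goal: str) -> List[str]:
    return ["edit handler.py", "update tests"]


def fake_apply(step: str) -> None:
    applied.append(step)
    if step.startswith("fix"):
        state["bug"] = False


def fake_tests() -> TestResult:
    return (not state["bug"], "AssertionError in test_handler" if state["bug"] else "")


def fake_fix(log: str) -> str:
    return f"fix: {log}"


ok = run_agent("Add feature X", fake_plan, fake_apply, fake_tests, fake_fix)
print(ok, applied)
```

In a real setup, `run_tests` might wrap a call such as `subprocess.run(["pytest", "-q"], capture_output=True, text=True)`. The cap on fix attempts matters: without it, an agent that cannot solve the problem would loop indefinitely.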

This level of automation is revolutionary. It promises to lift the entire development floor, allowing less experienced engineers to tackle more complex problems under the supervision of a highly capable, locally integrated AI mentor, and allowing seniors to focus exclusively on high-level architecture.

Practical Implications and Future Roadmaps

The integration of specialized LLMs directly into the developer’s environment has cascading effects:

For Developers: Skill Transformation

Developers will need to become excellent prompt engineers and rigorous reviewers of AI output. The core skill shifts from memorizing syntax and searching documentation efficiently to verifying the architectural correctness and security integrity of machine-generated code. "Trust, but verify" becomes the central tenet.

For Security Teams: New Attack Vectors

If agents can execute commands, the risk profile changes. Security teams must rapidly develop protocols for vetting and sandboxing these AI agents. Are the commands generated by the agent malicious or destructive, and is the code it writes prone to introducing vulnerabilities like buffer overflows? Robust auditing of agentic actions will become a mandatory compliance layer.
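One simple form such vetting and auditing could take is an allowlist check that records every decision. The sketch below is an assumption-laden illustration: the allowed programs and blocked `git` subcommands are hypothetical policy choices, and an allowlist is not a sandbox. Real isolation would also need operating-system-level controls such as containers or restricted users.

```python
import shlex
from datetime import datetime, timezone
from typing import Dict, List, Optional

# Hypothetical policy; a real one would be defined by the security team.
ALLOWED_PROGRAMS = {"pytest", "ruff", "git"}
BLOCKED_GIT_SUBCOMMANDS = {"push", "reset", "clean"}

audit_log: List[Dict[str, object]] = []


def vet_command(command: str) -> Optional[List[str]]:
    """Return the parsed argv if the command is allowed, else None.

    Every decision is appended to audit_log, whether allowed or not.
    """
    try:
        argv = shlex.split(command)
    except ValueError:       # e.g. unbalanced quotes
        argv = []
    allowed = bool(argv) and argv[0] in ALLOWED_PROGRAMS
    if allowed and argv[0] == "git":
        allowed = len(argv) > 1 and argv[1] not in BLOCKED_GIT_SUBCOMMANDS
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "command": command,
        "allowed": allowed,
    })
    return argv if allowed else None


print(vet_command("pytest -q"))
print(vet_command("rm -rf /"))
print(vet_command("git push origin main"))
```

The function returns an argument list rather than a string so the caller can execute it without a shell (for example, `subprocess.run(argv)` with the default `shell=False`). That way, shell operators such as `&&` or `;` in an agent-generated command are passed as literal arguments instead of being interpreted.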

For Enterprise Software Vendors: Consolidation or Extinction

Software vendors focused on development tools (IDEs, version control systems, debugging utilities) must either build their own LLM capabilities directly into their core offerings or face being commoditized by foundational model providers like Anthropic, who are now embedding their intelligence straight into the user’s machine. The battleground is moving away from external SaaS platforms and into the local toolchain itself.

Conclusion: The Ambient Developer Experience

Anthropic’s pivot with Claude Code is emblematic of a maturing AI landscape. We are leaving the era of novelty chatbots and entering the age of ambient intelligence—AI that is always present, context-aware, and functionally integrated into the core tasks of a profession. The desktop features are not merely cosmetic upgrades; they are the necessary infrastructure to support the next generation of autonomous, agentic software creation.

The future developer experience will be defined by how seamlessly the AI agent can operate within the local environment, balancing the raw power of cloud models with the speed and security of edge processing. Those organizations that successfully embrace this shift—by retraining their teams, redefining security protocols, and demanding deeper platform integration from their vendors—will capture the exponential productivity gains that this new AI revolution promises.