The Desktop Revolution: How Anthropic's Claude Code Push Redefines AI Software Development Workflows

Anthropic’s recent announcement regarding new desktop features for Claude Code is more than a minor update; it signals a profound shift in how developers will interact with artificial intelligence. For years, AI coding assistance meant hopping between a local Integrated Development Environment (IDE) like VS Code and a browser window holding an AI chat session. This context switching was a constant source of friction and inefficiency.

By pushing advanced capabilities directly into the desktop environment, Anthropic is executing a strategy focused on embedding AI deeply into the *entire* software development lifecycle. This moves the needle from AI as a helpful chatbot to AI as an integrated, workflow-native coworker. To truly understand the future implications of this move, we must synthesize this announcement with broader technological trends across specialized models, agentic automation, and enterprise security concerns.

The Shift from Cloud Chat to Desktop Utility

Think of the difference between asking someone to quickly write a recipe on a notepad (the old cloud chat model) versus having that person sit at your kitchen counter, watching you cook, and handing you the next ingredient right when you need it (the new desktop model). The latter is far more efficient because it eliminates travel time and hesitation.

Anthropic’s desktop features aim to solve the "context latency problem." When a developer is deep within a complex codebase, switching to a web interface breaks concentration. Desktop integration implies better local context awareness—understanding the project structure, actively monitoring files being edited, and injecting fixes or suggestions precisely where they are needed, often before the developer consciously asks. This drastically accelerates tasks ranging from boilerplate generation to complex debugging scenarios.

Contextualizing the Move: Benchmarks and Model Superiority

Why invest heavily in desktop integration now? Because the underlying models have reached a point where they are powerful enough to justify this level of integration. If an AI assistant is only marginally helpful, developers will tolerate the friction of switching windows. If it is consistently brilliant, they demand immediacy.

This brings us to the competitive landscape. To justify this strategic investment, Anthropic needs its underlying Claude model family (for example, Claude 3 Opus) to demonstrate cutting-edge performance in coding tasks. Across code LLM benchmarks and developer productivity comparisons such as GPT-4o versus Claude 3, the race is heating up. Superior performance in areas like multi-file coherence (the ability to correctly fix a bug that spans several different files) is what separates a useful tool from a transformative one.

For Software Architects and AI Researchers: The desktop integration suggests that specialized LLMs are winning specialized tasks. If so, the most valuable models in the near term will be those fine-tuned explicitly for coding, debugging, and version control systems, rather than general-purpose giants.

The Inevitable March Toward Agentic Development

The most exciting, and perhaps daunting, implication of deep desktop integration is the pathway it opens for true AI Agentic Workflows.

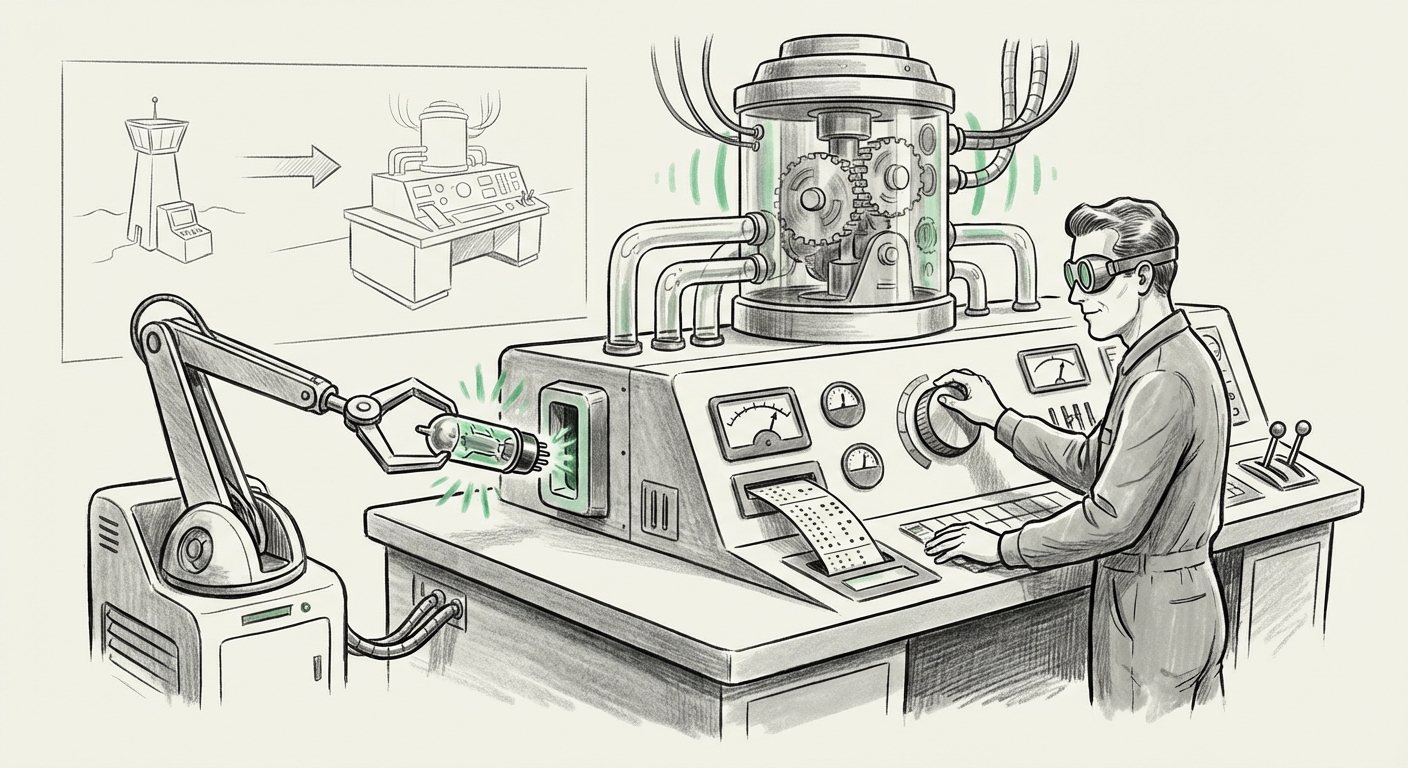

Currently, GitHub Copilot acts as a superb pair programmer, reacting to your keystrokes. An AI agent, however, acts as a true colleague. It can receive a task—"Implement OAuth integration using library X and update the authentication service"—and then autonomously manage the sequence of actions: opening files, writing code, running unit tests, documenting changes, and creating the final commit message.

Anthropic’s desktop push provides the necessary scaffolding for this. To execute multi-step tasks without human intervention, the AI needs persistent access to the development environment—the local file system, the terminal, and the debugger. A purely web-based system cannot robustly handle this level of interaction.
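The task sequence described above (edit files, run tests, write a commit message) can be sketched as a small loop. This is a minimal illustration, not how Claude Code or any other product is implemented: `Workspace`, `run_agent_task`, `fake_model`, and `fake_tests` are hypothetical names, the "model" is a stub that gets the answer wrong on its first attempt, and the file system is an in-memory dictionary.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Files = dict[str, str]  # path -> file contents


@dataclass
class Workspace:
    """In-memory stand-in for the local files an agent would edit (hypothetical)."""
    files: Files = field(default_factory=dict)
    log: list[str] = field(default_factory=list)


def run_agent_task(
    task: str,
    workspace: Workspace,
    propose_edits: Callable[[str, Files, int], Files],
    run_tests: Callable[[Files], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Apply proposed edits until the tests pass, then return a commit message.

    Returns None if the tests still fail after max_attempts, so that a human
    can take over instead of the agent committing broken code.
    """
    for attempt in range(1, max_attempts + 1):
        edits = propose_edits(task, workspace.files, attempt)
        workspace.files.update(edits)
        workspace.log.append(f"attempt {attempt}: edited {sorted(edits)}")
        if run_tests(workspace.files):
            workspace.log.append("tests passed")
            return f"{task} (automated, {attempt} attempt(s))"
        workspace.log.append("tests failed")
    return None


def fake_model(task: str, files: Files, attempt: int) -> Files:
    """Stub for an LLM call: wrong on the first attempt, right afterwards."""
    body = "return a - b" if attempt == 1 else "return a + b"
    return {"calc.py": f"def add(a, b):\n    {body}\n"}


def fake_tests(files: Files) -> bool:
    """Stub test runner: load calc.py and check a single case."""
    namespace: dict = {}
    exec(files["calc.py"], namespace)
    return namespace["add"](2, 3) == 5


workspace = Workspace()
message = run_agent_task("Implement add()", workspace, fake_model, fake_tests)
print(message)
print("\n".join(workspace.log))
```

The point of the sketch is the gate: nothing is committed until the tests pass, and the loop gives up after a bounded number of attempts. A real agent would replace the stubs with model calls, actual file writes, and a real test command, which is exactly where persistent local access becomes necessary.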

Coverage of tools like Devin AI, and of agentic workflows and software development automation more broadly, shows that the industry goal is to automate entire engineering sprints, not just individual lines of code. Claude Code’s new features are fundamentally about creating an operational environment where these agents can run locally and interact seamlessly with the surrounding toolchain.

For CTOs and Product Managers: This points to a potentially large increase in developer output. The question shifts from "How many developers do we need?" to "How effective can we make our existing developers using these autonomous agents?" Productivity gains of 50% to 100% become plausible if agents can reliably handle 70% of repetitive, low-complexity engineering tasks, provided those tasks account for roughly half or more of engineering time.
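A back-of-the-envelope check helps show when the 50% to 100% range is reachable. The sketch below uses an Amdahl-style estimate under a strong simplifying assumption that is not from the announcement: work handed to an agent costs the developer no time at all (no review, no rework). The function name and the `routine_share` values are illustrative.

```python
def output_multiplier(routine_share: float, automated_fraction: float) -> float:
    """Estimate output per developer-hour relative to today.

    routine_share: fraction of developer time spent on repetitive,
        low-complexity tasks (an assumed input, not a measured one).
    automated_fraction: fraction of those tasks an agent fully handles.
    Assumes automated work costs zero human time, which overstates the gain.
    """
    for value in (routine_share, automated_fraction):
        if not 0.0 <= value <= 1.0:
            raise ValueError("fractions must be between 0 and 1")
    remaining_time = 1.0 - routine_share * automated_fraction
    if remaining_time <= 0.0:
        raise ValueError("no human time remains; the estimate is undefined")
    return 1.0 / remaining_time


for share in (0.3, 0.5, 0.7):
    gain = output_multiplier(share, 0.7) - 1.0
    print(f"routine share {share:.0%}: estimated gain {gain:.0%}")
```

With agents handling 70% of routine tasks, the estimated gain is roughly 27% when routine work is 30% of a developer's time, 54% at a 50% share, and 96% at a 70% share. In other words, the headline range depends heavily on how much of the workload is routine in the first place, and any time spent verifying agent output reduces it further.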

The Critical Hurdle: Adoption and Seamless Integration

Power is meaningless without adoption. Developers are notoriously protective of their established toolchains. For decades, engineers have honed their craft within specific IDEs (VS Code, IntelliJ). Introducing a powerful new AI layer that forces a switch to a proprietary web platform is a non-starter for most professionals.

This is why Anthropic’s focus on desktop functionality is a smart strategy. It suggests deep compatibility with existing ecosystems. Discussions of LLM IDE integration challenges suggest that developer acceptance hinges on minimal friction. Does the AI work perfectly within the editor's shortcuts? Does it respect existing project configurations? Can it pull context from local Git branches instantly?

If Claude Code successfully marries the computational power of a leading LLM with the native feel of a deeply embedded IDE plugin, adoption rates will soar. Conversely, if it feels bolted on or slow, developers will quickly revert to simpler, albeit less powerful, browser-based solutions.

The Psychological Shift for Developers

This trend implies a necessary mental adjustment for engineers. The job is shifting from primary code creation to AI orchestration and verification. Instead of writing 100 lines of code, the developer might spend 10 minutes verifying the 100 lines the AI agent wrote, ensuring security, efficiency, and compliance. This elevates the role of the engineer toward system design and high-level problem-solving, areas where human intuition remains paramount.

The Elephant in the Room: Security and Trust in the Local Environment

The deeper the AI integration, the more sensitive the data it handles. When an AI assistant lives in the browser, the data shared is typically limited to the prompt you send. When it lives on your desktop and is automating complex workflows, it requires access to the entire repository, build logs, and potentially even local secrets management systems.

This necessitates a crucial conversation about security, centered on two questions: local versus cloud LLM processing, and code security compliance for AI tools.

For companies dealing with sensitive intellectual property (IP), proprietary algorithms, or regulated data, trusting a third-party vendor with deep access to local code is a major governance hurdle. This pressure drives two potential future trends:

- Increased Demand for On-Premises/Private Cloud LLMs: Enterprises will accelerate the deployment of smaller, highly optimized coding models that can run entirely within their own secure perimeters.

- Verification and Transparency of Desktop Access: Anthropic (and its competitors) must offer iron-clad assurances about what data leaves the local machine, how it is processed, and how user-set permission controls are enforced. Desktop applications must be designed with security governors that are more granular than cloud APIs.

For Security Officers: The risk profile of the developer workstation is rising sharply. Desktop AI tools are not just productivity enhancers; they are new vectors for data leakage. Robust access controls and automated auditing of AI actions within the development environment will become standard compliance requirements.
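To make "access controls and automated auditing of AI actions" concrete, here is a minimal sketch of a gate that sits between an agent and the terminal. It is an illustration under stated assumptions, not a description of any vendor's design: `AuditedShell` and the allowlist contents are hypothetical, and the gate only decides and records; it never executes anything.

```python
import json
import shlex
from datetime import datetime, timezone

# Hypothetical allowlist; a real policy would be set by the security team.
ALLOWED_COMMANDS = {"git", "pytest", "ls"}


class AuditedShell:
    """Decides whether an agent's command may run and records every request."""

    def __init__(self, allowed: set[str]) -> None:
        self.allowed = set(allowed)
        self.records: list[dict] = []

    def request(self, command_line: str) -> bool:
        """Return True if the command's program is allowlisted; always log."""
        argv = shlex.split(command_line)
        permitted = bool(argv) and argv[0] in self.allowed
        self.records.append(
            {
                "time": datetime.now(timezone.utc).isoformat(),
                "command": command_line,
                "permitted": permitted,
            }
        )
        return permitted

    def export(self) -> str:
        """Serialize the audit trail as JSON Lines for later review."""
        return "\n".join(json.dumps(record) for record in self.records)


shell = AuditedShell(ALLOWED_COMMANDS)
shell.request("pytest -q")
shell.request("curl https://example.com/upload -d @secrets.env")
print(shell.export())
```

Denied requests are logged as well as permitted ones, since attempted actions are often the more interesting signal for an auditor. Checking only the program name is deliberately naive; an allowlisted tool can still be misused through its arguments, so a production control would need to inspect those too.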

Synthesizing the Future Implications

Anthropic’s move with Claude Code is a microcosm of the broader technological push: intelligence is moving from the periphery to the core of operational systems.

1. Hyper-Specialization of AI: We will see a further splintering of LLMs. Instead of one model doing everything, we will have models optimized for Java backend services, Python data science pipelines, frontend component generation, and infrastructure-as-code scripting. Desktop interfaces will become the environment where these specialized models coordinate.

2. The Productivity Chasm: Companies that quickly and safely adopt deep workflow integration will see their development velocity skyrocket. Those slow to adapt, either due to security concerns or cultural resistance, risk falling critically behind in terms of product release cycles and maintenance speed.

3. The Rise of the Orchestrator AI: The future desktop environment won't run just one AI helper. It will run an orchestrator AI—perhaps Claude, perhaps another system—that manages a team of specialized, smaller models running locally or in a secure enclave to complete a given development task. The user delegates the entire process, not just individual lines of code.
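The orchestrator idea in the list above can be illustrated with a simple routing step: given the files a task touches, decide which specialized model would handle each one. This is only a sketch of the concept. The model names and the extension-based mapping are invented for illustration, and a real orchestrator would presumably route on far richer signals than file extensions.

```python
from pathlib import PurePosixPath

# Hypothetical specialist models keyed by file extension.
SPECIALISTS = {
    ".java": "java-backend-model",
    ".py": "python-data-model",
    ".tsx": "frontend-model",
    ".tf": "infrastructure-model",
}
FALLBACK = "general-model"


def plan(changed_files: list[str]) -> dict[str, list[str]]:
    """Group files by the specialist that would be asked to handle them."""
    assignments: dict[str, list[str]] = {}
    for path in changed_files:
        suffix = PurePosixPath(path).suffix
        model = SPECIALISTS.get(suffix, FALLBACK)
        assignments.setdefault(model, []).append(path)
    return assignments


task_files = ["api/Auth.java", "etl/load.py", "infra/main.tf", "README.md"]
for model, files in plan(task_files).items():
    print(f"{model}: {files}")
```

The fallback entry matters: anything the specialists do not cover still goes somewhere, rather than being silently dropped from the task.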

Actionable Insights for Moving Forward

For organizations looking to capitalize on this trend while mitigating risk, several actions are immediately necessary:

- Pilot Deep Integration Strategically: Start testing desktop AI tools in non-critical projects first. Focus pilots on reducing time spent on refactoring, documentation generation, and writing unit tests—tasks where the immediate productivity payoff is highest.

- Establish Clear AI Governance Policies: Before widespread deployment, define strict rules on what kind of code (e.g., PII-handling, core algorithms) is permitted to be processed by external, cloud-connected desktop AI tools. Mandate local execution where possible.

- Invest in Developer Education on Verification: Training must pivot. Engineers need to become expert reviewers and verifiers of AI-generated code, focusing on edge cases, performance optimization, and security vulnerability detection, rather than simply syntax construction.

- Evaluate Vendor Trust and Transparency: When selecting a desktop AI provider, prioritize those who offer transparent security models, clear data residency options, and robust auditing features, acknowledging the elevated trust required for local environment access.
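A governance policy of the kind described in the list above is easier to enforce when it is written down as data rather than prose. The sketch below shows one possible shape, assuming a repository layout and rule set invented purely for illustration: each file path is classified as blocked, local-only, or allowed for cloud-connected tools, and the first matching rule wins.

```python
from fnmatch import fnmatchcase

# Hypothetical rules, checked top to bottom; patterns and paths are examples.
POLICY = [
    ("*.env", "block"),               # credentials never go to an AI tool
    ("secrets/*", "block"),
    ("src/core/*", "local_only"),     # core algorithms stay on local models
    ("src/billing/*", "local_only"),  # PII-handling code
    ("*", "cloud_allowed"),           # everything else
]


def classify(path: str) -> str:
    """Return the decision of the first rule whose pattern matches the path."""
    for pattern, decision in POLICY:
        if fnmatchcase(path, pattern):
            return decision
    return "block"  # fail closed if no rule matches


for path in ("config/.env", "src/core/pricing.py", "docs/guide.md"):
    print(f"{path}: {classify(path)}")
```

Ordering the rules from most to least restrictive, and failing closed when nothing matches, keeps a mistake in the policy file from quietly exposing sensitive code.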

The integration of powerful LLMs like Claude directly into the desktop developer environment marks the end of the "chatbot programming era" and heralds the arrival of the truly automated, agentic software factory. The speed at which engineering teams adapt to this new, deeply embedded reality will define competitive advantage in the coming decade.