The Gemini Price Shock: How Efficiency is Redefining the Future of High-Performance AI

The artificial intelligence landscape is defined by a relentless, often dizzying race for raw capability. For years the narrative has been simple: bigger models, more data, higher performance, and commensurately higher costs. A recent preview of Google’s **Gemini 3.1 Pro** suggests that this equation is changing. Leading the Artificial Analysis Intelligence Index while costing less than half as much as its rivals is more than a marketing win; it points to a fundamental shift in AI economics and accessibility.

This development forces us to look past the headline score and investigate the three critical pillars supporting this shift: the integrity of the benchmarks, the reality of AI pricing wars, and the underlying engineering innovation that makes such efficiency possible. This is not just about one model winning; it's about what affordable, top-tier performance means for the next wave of technological adoption.

The Shifting Sands of Benchmarking: What Does "Top Score" Really Mean?

When a new model claims the top spot, the first question savvy technologists ask is: "Which test did it ace?" The established giants of AI evaluation, such as MMLU (Massive Multitask Language Understanding) and HumanEval (for coding proficiency), serve as widely accepted yardsticks. However, the rise of specialized indices, like the one Gemini 3.1 Pro dominated, requires closer inspection.

Understanding that lead requires examining how the **Artificial Analysis Intelligence Index** is constructed and how it differs from MMLU and HumanEval. If the index emphasizes complex reasoning, multimodal synthesis, or specific enterprise analysis tasks, Gemini's lead suggests optimization targeted at commercial workloads rather than generalized academic excellence. This distinction matters. For an enterprise user, a model that excels at deep document analysis at half the cost can be far more valuable than one that slightly outperforms it on a general-knowledge test.
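To see why the composition of an index matters, the sketch below scores two hypothetical models under two hypothetical weightings of the same sub-benchmarks. The model names, categories, scores, and weights are all invented for illustration; this is not the Artificial Analysis methodology, only a generic weighted-average composite.

```python
"""Hypothetical illustration: how weighting changes a composite benchmark ranking.

Model names, task categories, scores, and weights are invented; they do NOT
describe the Artificial Analysis methodology or any real evaluation results.
"""


def composite_score(scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-category scores (weights are normalized here)."""
    total_weight = sum(weights.values())
    return sum(scores[cat] * w for cat, w in weights.items()) / total_weight


# Hypothetical per-category scores (0-100) for two unnamed models.
model_scores = {
    "model_a": {"general_knowledge": 88.0, "coding": 85.0, "long_doc_reasoning": 70.0},
    "model_b": {"general_knowledge": 84.0, "coding": 83.0, "long_doc_reasoning": 86.0},
}

# Two hypothetical weightings: one academic-leaning, one enterprise-leaning.
academic_weights = {"general_knowledge": 0.6, "coding": 0.3, "long_doc_reasoning": 0.1}
enterprise_weights = {"general_knowledge": 0.2, "coding": 0.2, "long_doc_reasoning": 0.6}


def ranking(weights: dict[str, float]) -> list[str]:
    """Return model names sorted from highest to lowest composite score."""
    return sorted(model_scores,
                  key=lambda m: composite_score(model_scores[m], weights),
                  reverse=True)


print("academic-leaning:  ", ranking(academic_weights))
print("enterprise-leaning:", ranking(enterprise_weights))
```

With identical underlying scores, the academic-leaning weighting puts `model_a` first and the enterprise-leaning weighting puts `model_b` first, which is why a headline "top score" is only meaningful alongside the index's weighting.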

For the AI researcher, this highlights a necessary evolution. As models become highly capable across the board, differentiation shifts toward specialization. If Google is optimizing for benchmarks that reflect *real-world application performance*, it suggests the company is anticipating where market value will be generated next.

The Economics of Inference: The Sub-$0.005 Revolution

The most disruptive aspect of the Gemini 3.1 Pro preview is the price tag. A cost reduction of over 50% compared to established leaders like GPT-4o or Claude 3 Opus flips the business case for AI integration on its head. Validating this claim means comparing the current published per-token inference prices for Gemini, GPT-4o, and Claude 3 Opus side by side, for both input and output tokens.

Consider the scenario that recent market analysis points toward: if top-tier performance is available for less than \$0.005 per thousand input tokens (under \$5 per million), the economic friction of deploying AI across massive datasets drops sharply. For years, the primary constraint for many startups and mid-sized businesses was not talent or ideas; it was the operational expenditure (OpEx) of running complex LLMs at scale.

This price erosion forces a fundamental reassessment of the Total Cost of Ownership (TCO) for AI projects. For CTOs and Enterprise AI Buyers, the immediate implication is clear: bulk-processing workloads that were previously too expensive to run through top-tier models, such as customer support summarization, continuous quality assurance analysis, or exhaustive legal review, become economically viable. This isn't just about saving money; it's about unlocking use cases previously gated by high inference fees.
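A TCO review usually starts with simple token arithmetic. The sketch below estimates a monthly inference bill for a bulk summarization workload under two price sheets. Both price sheets (`incumbent`, `challenger`) and the workload volumes are hypothetical placeholders, not published rates for any model; substitute your provider's current pricing.

```python
"""Back-of-the-envelope monthly inference cost for a bulk-processing workload.

All prices and volumes are hypothetical placeholders, not published rates for
any specific model.
"""

from dataclasses import dataclass


@dataclass
class Pricing:
    input_per_million: float   # USD per 1,000,000 input tokens (hypothetical)
    output_per_million: float  # USD per 1,000,000 output tokens (hypothetical)


def monthly_cost(pricing: Pricing, docs_per_month: int,
                 input_tokens_per_doc: int, output_tokens_per_doc: int) -> float:
    """Return the estimated monthly inference bill in USD."""
    input_tokens = docs_per_month * input_tokens_per_doc
    output_tokens = docs_per_month * output_tokens_per_doc
    return (input_tokens / 1_000_000 * pricing.input_per_million
            + output_tokens / 1_000_000 * pricing.output_per_million)


# Hypothetical workload: summarizing 500,000 support tickets per month,
# ~2,000 input tokens and ~300 output tokens per ticket.
incumbent = Pricing(input_per_million=5.00, output_per_million=15.00)
challenger = Pricing(input_per_million=2.00, output_per_million=6.00)

old_cost = monthly_cost(incumbent, 500_000, 2_000, 300)
new_cost = monthly_cost(challenger, 500_000, 2_000, 300)
print(f"incumbent: ${old_cost:,.2f}  challenger: ${new_cost:,.2f}  "
      f"savings: {1 - new_cost / old_cost:.0%}")
```

Under these made-up numbers the bill falls from \$7,250 to \$2,900 a month (a 60% reduction); the point is the method, since real savings depend on your actual input/output token mix.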

The Competitive Response

This aggressive pricing strategy is a clear shot across the bow of OpenAI and Anthropic. In the commercial AI sector, price often becomes a primary differentiator once performance approaches parity. If Gemini 3.1 Pro offers 95% of a rival's performance at 40% of its price, gaining market share becomes very likely unless competitors quickly adjust their own pricing tiers, which would set off a healthy but volatile price war.
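The "95% of the performance at 40% of the price" scenario can be expressed as performance per dollar. The sketch below treats the incumbent as 1.0 on both axes; the function names are illustrative, and the inputs are the paragraph's hypothetical figures rather than measurements.

```python
"""Relative performance per dollar for the hypothetical 95% / 40% scenario.

Performance and price are expressed relative to an incumbent model
(incumbent = 1.0 on each axis); the figures are illustrative, not measured.
"""


def value_ratio(relative_performance: float, relative_price: float) -> float:
    """Performance delivered per unit of spend, relative to the incumbent."""
    if relative_price <= 0:
        raise ValueError("relative_price must be positive")
    return relative_performance / relative_price


def matching_price(challenger_performance: float, challenger_price: float,
                   incumbent_performance: float = 1.0) -> float:
    """Relative price at which the incumbent matches the challenger's value per dollar."""
    return incumbent_performance * challenger_price / challenger_performance


advantage = value_ratio(0.95, 0.40)             # 2.375 in exact arithmetic
price_to_match = matching_price(0.95, 0.40)     # roughly 0.42 of today's price

print(f"challenger value per dollar: {advantage:.3f}x the incumbent")
print(f"incumbent must cut its price to about {price_to_match:.0%} of current level")
```

In other words, the challenger would deliver about 2.4 times the performance per dollar, and an incumbent with full performance would need to cut its price by roughly 58% just to draw even on that metric.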

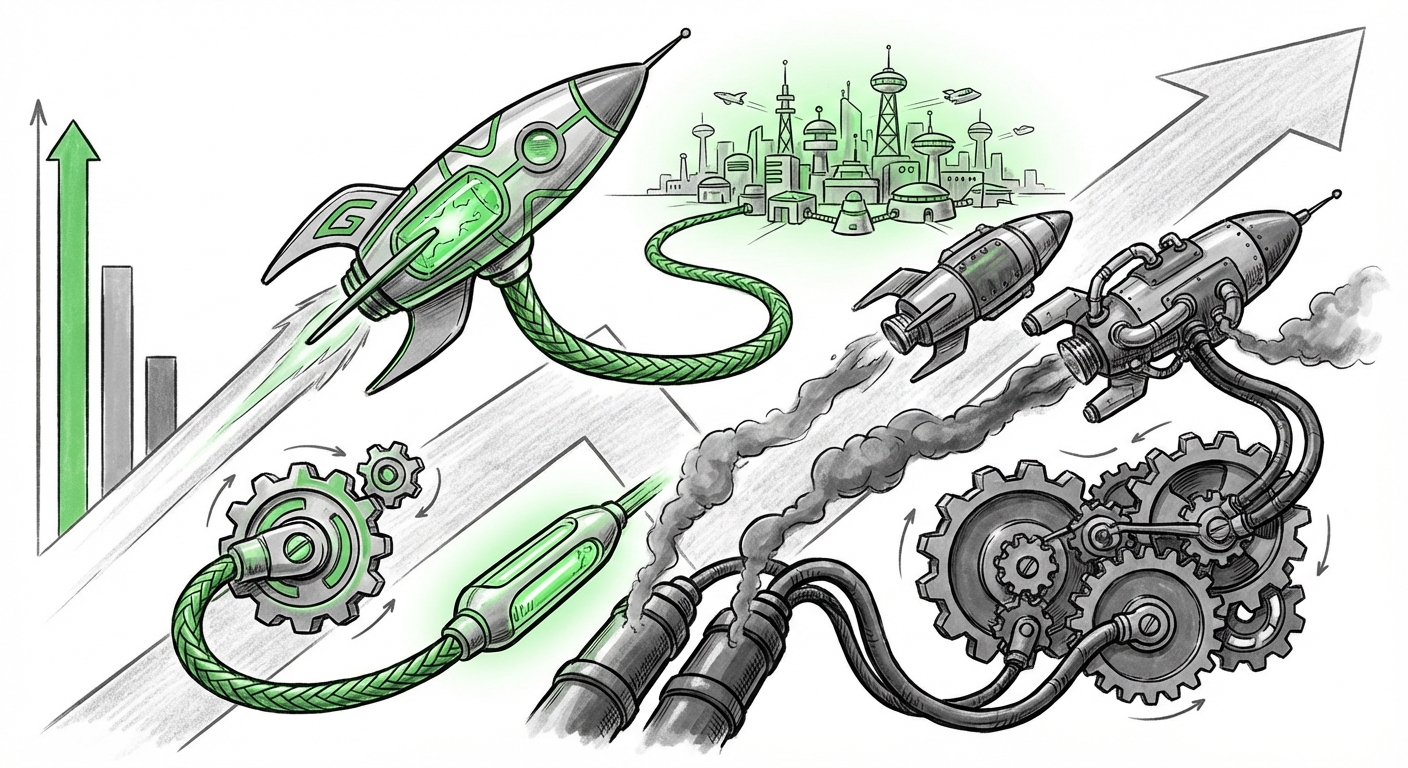

The Engineering Backbone: Efficiency Over Brute Force

How does a company deliver top-tier results at half the cost? The answer lies deep within the architecture and infrastructure, and in Google's strategy of scaling Gemini through efficiency rather than raw parameter count. Historically, better performance meant adding billions more parameters, and for a dense model the compute (and therefore the energy and cost) needed to serve each token grows roughly in proportion to that parameter count.
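A widely used rule of thumb puts a transformer's forward pass at about 2 FLOPs per *active* parameter per token. The sketch below applies it to a hypothetical dense model and a hypothetical mixture-of-experts (MoE) model of the same total size, where a router sends each token to only a few experts. The parameter counts are invented and do not describe any Gemini model; the approximation also ignores the attention term that grows with context length.

```python
"""Rough per-token inference compute: dense vs. mixture-of-experts (MoE).

Uses the common ~2 FLOPs per active parameter per token approximation and
ignores attention's context-length term. All parameter counts are hypothetical.
"""


def flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs per generated token."""
    return 2.0 * active_params


def moe_active_params(shared_params: float, params_per_expert: float,
                      experts_per_token: int) -> float:
    """Parameters actually used for one token when only the top-k experts run."""
    return shared_params + experts_per_token * params_per_expert


# Hypothetical dense model: 400B parameters, all active for every token.
dense = flops_per_token(400e9)

# Hypothetical MoE model with the same 400B total (16B shared + 64 experts x 6B),
# where the router sends each token to 2 experts, so only 28B are active.
moe = flops_per_token(moe_active_params(16e9, 6e9, experts_per_token=2))

print(f"dense: {dense:.2e} FLOPs/token, MoE: {moe:.2e} FLOPs/token, "
      f"ratio: {dense / moe:.1f}x")
```

Doubling a dense model's parameters roughly doubles its per-token compute, whereas an MoE design can grow total capacity while keeping the active, billed-for compute per token much smaller.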

Google’s advantage here is rooted largely in its vertical integration, particularly its Tensor Processing Units (TPUs). Whereas competitors largely rely on GPUs, Google designs TPUs specifically for the matrix multiplications central to neural networks. This synergy between specialized silicon and optimized model architecture (perhaps through advanced quantization techniques or innovative mixture-of-experts routing) can deliver superior *performance per watt* and *performance per dollar*.
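Quantization is one of the techniques speculated about above. As a generic illustration only (not a description of how Google serves Gemini), the sketch below applies textbook symmetric per-tensor int8 quantization to a handful of made-up weights: each float is mapped to an integer in [-127, 127] via one shared scale factor. Production systems typically use per-channel or per-group scales and dedicated kernels.

```python
"""Minimal symmetric int8 weight quantization (per-tensor), for illustration only.

A generic textbook technique, not Gemini's serving stack. Weights are made up.
"""


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Clamp as a safety net against floating-point edge cases.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float values from int8 codes."""
    return [c * scale for c in codes]


weights = [0.82, -1.27, 0.05, 0.40, -0.33]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Each int8 code needs 1 byte versus 4 bytes for float32 (4x less memory),
# at the cost of a rounding error of at most scale / 2 per weight.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, f"scale={scale:.4f}", f"max_error={max_error:.2e}")
```

Fewer bytes per weight means less memory traffic per token, which is one plausible route to the performance-per-dollar gains described above.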

For hardware analysts and deep learning engineers, this signals a mature phase of AI development. The focus is shifting from sheer scale to *architectural intelligence*. Future dominance may belong not to the company with the largest model, but to the one that can serve the most useful model most cheaply.

Future Implications: The Democratization Wave

The most profound consequence of this cost-to-performance ratio is the acceleration of **AI democratization**, and the AI startup ecosystem is likely to feel it first.

When the cost of intelligence drops dramatically, the barriers to entry for innovation fall away:

- Startup Viability: Small teams can now build complex, data-intensive applications that previously required massive seed funding just to cover API calls. This empowers innovative startups to focus resources on product differentiation rather than cloud compute bills.

- Hyper-Specialization: Businesses can afford to fine-tune these powerful base models on extremely narrow, proprietary datasets without breaking the bank. We move from general-purpose chatbots to hyper-specialized AI agents capable of mastering niche domains like specific legal compliance frameworks or proprietary industrial diagnostic procedures.

- Edge Deployment & Local Capabilities: While Gemini 3.1 Pro is cloud-based, the efficiency demonstrated hints at faster pathways toward highly capable, smaller models that can run locally on devices (Edge AI), enhancing privacy and reducing latency for billions of users.
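Whether a model fits on a device is largely a matter of weight storage. The sketch below computes the memory needed just to hold the weights of hypothetical 3B- and 7B-parameter models at common precisions; it ignores activations, the KV cache, and runtime overhead, and the model sizes are examples rather than any specific Gemini variant.

```python
"""Approximate memory needed just to hold model weights at various precisions.

Ignores activations, KV cache, and runtime overhead. Parameter counts are
hypothetical examples, not any specific Gemini model.
"""

BITS_PER_WEIGHT = {"fp32": 32, "bf16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BITS_PER_WEIGHT[precision] / 8 / 1e9


for params in (3e9, 7e9):
    row = ", ".join(f"{p}: {weight_memory_gb(params, p):.1f} GB" for p in BITS_PER_WEIGHT)
    print(f"{params / 1e9:.0f}B params -> {row}")
```

A 7B-parameter model drops from 28 GB at fp32 to 3.5 GB at int4, which is the kind of reduction that makes on-device deployment plausible.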

This democratization has a societal impact as well. High-quality analytical tools, historically the domain of large corporations due to prohibitive costs, become accessible to educators, non-profits, and individuals globally. This can foster a significant leveling of the information playing field, though it simultaneously necessitates stronger governance around misinformation, as powerful generative tools become ubiquitous.

Practical Insights: Actionable Steps for Today's Leaders

Regardless of whether you are a business leader steering technology spending or an engineer designing the next application, the Gemini 3.1 Pro announcement demands strategic adjustments:

For Enterprise AI Buyers and CTOs: Re-evaluate Your Stack

Actionable Insight: Do not rely on legacy pricing agreements. Task your procurement and engineering teams with stress-testing the Gemini 3.1 Pro Preview against your highest-volume workflows now. If testing confirms a cost reduction of 50% or more at comparable quality, begin migration planning. The lag between performance parity and price parity is shrinking, so long-term commitments at today's prices carry real risk.
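A stress test ends in a go/no-go decision, and it helps to write the criteria down before the results come in. The sketch below encodes the "50%+ cost reduction at comparable quality" rule as a function. The `EvalResult` structure, the 2-point quality tolerance, and the example numbers are hypothetical; "quality" stands for whatever score your own evaluation set produces.

```python
"""Hypothetical go/no-go check for migrating a workload to a cheaper model.

The thresholds and the quality scale are assumptions for illustration; plug in
results from your own evaluation set.
"""

from dataclasses import dataclass


@dataclass
class EvalResult:
    model: str
    quality: float            # e.g. mean rubric score on your test set (0-100)
    cost_per_1k_tasks: float  # measured USD cost for 1,000 representative tasks


def should_migrate(current: EvalResult, candidate: EvalResult,
                   min_cost_reduction: float = 0.50,
                   max_quality_drop: float = 2.0) -> bool:
    """True if the candidate is cheap enough and close enough in quality."""
    cost_reduction = 1 - candidate.cost_per_1k_tasks / current.cost_per_1k_tasks
    quality_drop = current.quality - candidate.quality
    return cost_reduction >= min_cost_reduction and quality_drop <= max_quality_drop


current = EvalResult("incumbent-model", quality=91.0, cost_per_1k_tasks=12.00)
candidate = EvalResult("candidate-model", quality=89.5, cost_per_1k_tasks=5.40)
print(should_migrate(current, candidate))
```

Here the hypothetical candidate is 55% cheaper and 1.5 points lower in quality, so it passes; fixing the thresholds in code up front keeps the decision from drifting toward whichever result looks best.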

If you are using models for complex tasks, start exploring the **Artificial Analysis Intelligence Index** benchmarks referenced in the initial report. If your needs align with its focus (e.g., deep analysis over simple Q&A), the potential return on investment is substantial.

For Developers and Product Managers: Design for Scale, Not Scarcity

Actionable Insight: Shift your product design philosophy. Previously, developers had to build "AI light" features to conserve tokens. With per-request costs falling to fractions of a cent, you can design for *maximum intelligence* in every user interaction. Integrate deep reasoning, complex multi-step tool use, and exhaustive summarization with far less concern about runaway billing. Design for **intelligence density**.
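Multi-step tool use is where per-interaction costs are easiest to underestimate, so a quick estimate is still worth doing. The sketch below assumes (hypothetically) that each model call is stateless and re-sends the entire conversation so far as input; under that assumption, billed input tokens grow roughly quadratically with the number of steps. Prices and token counts are placeholders, not any provider's published rates.

```python
"""Token cost of one multi-step, tool-using interaction (illustrative sketch).

Assumption: every model call is stateless and re-sends the whole conversation
so far as input tokens. Prices and token counts are hypothetical placeholders.
"""


def interaction_cost(steps: int, system_tokens: int, tool_tokens_per_step: int,
                     output_tokens_per_step: int, input_price_per_million: float,
                     output_price_per_million: float) -> float:
    """Return the estimated USD cost of one interaction made of `steps` model calls."""
    context = system_tokens  # prompt and instructions sent on the first call
    total_input = 0
    for _ in range(steps):
        total_input += context  # the full context is billed again on every call
        # The model's reply and the tool result it triggers join the context.
        context += output_tokens_per_step + tool_tokens_per_step
    total_output = steps * output_tokens_per_step
    return (total_input * input_price_per_million
            + total_output * output_price_per_million) / 1_000_000


for steps in (1, 5, 20):
    cost = interaction_cost(steps, system_tokens=2_000, tool_tokens_per_step=1_000,
                            output_tokens_per_step=200,
                            input_price_per_million=2.00, output_price_per_million=6.00)
    print(f"{steps:>2} steps: ${cost:.4f} per interaction")
```

With these placeholder numbers a single call costs about half a cent, but a 20-step agent run costs about 56 cents, so "intelligence density" is best paired with a per-interaction budget.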

For Investors and VCs: Look Beyond the Giants

Actionable Insight: The barrier to entry has dropped. Investigate startups that are deeply specialized and were previously bottlenecked by compute costs. These companies, now armed with cheaper, smarter tools, are poised to capture vertical market segments rapidly. Focus on application layer innovation that leverages this new economic reality.

Conclusion: The Era of Computational Thrift

Google's Gemini 3.1 Pro is more than just a competitor; it represents a maturation point for the Generative AI industry. The era defined solely by exponential parameter growth is giving way to the **Era of Computational Thrift**. Performance is now being decoupled from exorbitant cost through engineering excellence.

The future of AI will not be defined by who has the *biggest* models, but by who can deploy the *smartest* models across the broadest possible user base most affordably. This dynamic competition—where performance gains are immediately weaponized as cost advantages—will ultimately benefit end-users, driving a wave of accessible, powerful, and deeply integrated AI tools across every facet of business and daily life.